Abstract

The accuracy of uncertainty quantification for system performance strongly influences the results of reliability-based design optimization (RBDO). A new uncertainty identification and quantification methodology is proposed that considers strong statistical variables, sparse variables, and interval variables simultaneously. The maximum likelihood function and the Akaike information criterion (AIC) are used to identify the best-fitted distribution types and distribution parameters of sparse variables. The interval variables are represented with evidence theory. A unified uncertainty quantification framework covering the three types of uncertain design variables is then put forward, and the failure probability of system performance is quantified with belief and plausibility measures. A Kriging metamodel and a random sampling method are used to reduce the computational cost. Three examples illustrate the effectiveness of the proposed methodology.

1 Introduction

Reliability analysis is concerned with the assessment of system performance in the presence of uncertainty. Reliability-based design optimization (RBDO) seeks the optimum design for which the failure probability of system performance meets a prescribed requirement (Zhu and Du 2016), and it has been researched and applied widely by industry, government, and academia (Cho et al. 2016; Matsumura and Haftka 2013; Paulson and Starkey 2013; Suryawanshi and Ghosh 2015).

Uncertainties in RBDO include input variable uncertainty and model uncertainty. The uncertain input variables can be divided into strong statistical variables, sparse variables, and interval variables according to the amount of available experimental input data (Oberkampf et al. 2004). Strong statistical variables are aleatory uncertain variables, which represent inherent and irreducible variation associated with the physical system (Mullins et al. 2016). Sparse variables and interval variables are epistemic uncertain variables, which derive from some level of ignorance or incomplete information about the physical system or environment (Du 2006). Model uncertainty is also epistemic; it is due to uncertainty in model parameters, numerical solution errors, and model form errors (Nannapaneni et al. 2016). Model uncertainty has an important influence on the reliability calculation and has been researched widely (Arendt et al. 2012; Jiang et al. 2016; Hu et al. 2017). This paper focuses on the quantification of input variable uncertainty, so model uncertainty is not considered, and the design model is assumed to be deterministic.

Representation and management of input variable uncertainty are important in RBDO. Strong statistical variables are commonly treated as random variables with known probability distributions, such as the Normal, Gamma, and Beta distributions. Interval variables are represented using non-probabilistic approaches, such as evidence theory, fuzzy theory, convex model theory, and the interval method (Bae et al. 2004; Beer et al. 2013; Simoen et al. 2015). The uncertainties of sparse variables are more complicated because both their distribution types and their distribution parameters are uncertain. Although the maximum likelihood estimation method (Sankararaman and Mahadevan 2011), the Bayesian method (Xi et al. 2014), the stochastic inverse method (Choi and Yoo 2016), and the nonparametric minimum power method (Chee 2017) have been presented, the accuracy of their results depends on the assumed distribution types, and an improperly selected distribution type leads to poor accuracy. Kang et al. (2016) proposed a sequential statistical modeling method to select appropriate candidate distribution types, but the method can identify distribution types accurately only if sufficient data are available. To solve this problem, a selection criterion for distribution types is proposed in this paper for the uncertainty representation of sparse variables.

The three types of input variable uncertainty propagate to the uncertainty of system performance, and many methods have been proposed to integrate these uncertainties and quantify their influence on system performance. The uncertainty of the performance function under both strong statistical variables and interval variables has been quantified using belief and plausibility measures (Du 2008), a probabilistic approach (Zaman et al. 2011), a sampling-based worst case method (Yoo and Lee 2013), a unified uncertainty analysis method (Li et al. 2016), and a probability box model (Liu et al. 2017). The performance uncertainty under sparse variables has been calculated using the Bayesian model averaging method and the Bayesian hypothesis testing method (Sankararaman and Mahadevan 2013), possibility theory (Ren et al. 2015), and a single-loop sampling approach (Nannapaneni et al. 2016). Although many methods have been proposed for uncertainty quantification, there is no unified uncertainty quantification framework that considers these three types of uncertain input variables simultaneously.

Bayesian theory is an attractive framework for uncertainty representation and management and has been widely applied, for example to the identification of material parameters in high strength steel (Wang et al. 2015), the construction of a surrogate dictionary in elasticity problems (Contreras et al. 2016), the representation of model uncertainty (Gal and Ghahramani 2016), and the construction of an analysis model in credit scoring (Xia et al. 2017). Therefore, using Bayesian theory, we propose a unified uncertainty identification and quantification methodology that considers these three types of uncertain variables arising from insufficient input data. The paper is organized as follows. In Section 2, the three types of uncertainties are analyzed and represented using different representation forms. The distribution types, distribution parameters, and weight ratios of sparse variables are identified and represented in Section 3. In Section 4, the interval variables are represented using evidence theory. The unified uncertainty quantification framework and its calculation procedure are presented in Section 5, followed by three examples in Section 6.

2 Analysis of different types of uncertain input variables

There are three types of uncertain input variables, including strong statistical variables X, sparse variables Y, and interval variables Z (Oberkampf et al. 2004). The three types of uncertain input variables are represented using different presentation forms.

(1) Strong statistical variables X

When the distribution type is known or a large amount of experimental data is available, the data on X are sufficient to derive an accurate statistical model. X is therefore specified by a probability distribution type θ with precise distribution parameters ξ.

The distribution type θ may be Normal distribution, Gamma distribution, Exponential distribution, etc. If θ is Normal distribution type, ξ are mean ξ 1 and standard deviation ξ 2.

(2) Sparse variables Y

Only a small number of experimental point or interval data can be acquired, and acquiring more is time-consuming and computationally expensive, so the data on Y are insufficient. A single distribution type may not fit the available input data, so a weighted sum of multiple distribution types θ i is used to fit Y.

where the subscript i represents the i-th distribution type. The distribution type θ i , weight ratio w i and distribution parameters ξ i will be identified and represented in Section 3.

(3) Interval variables Z

Interval variables Z are also epistemic uncertain variables. Experimental input data are missing, so the interval variable z i ∈ Z is specified using evidence theory. The details will be explained in Section 4.1.

3 Parameter identification and representation of sparse variables

3.1 Distribution parameter estimation under candidate distribution type

Because the probability density function of the sparse variables Y cannot be acquired directly, it is difficult to fit Y with a single fixed distribution type. Eight candidate distribution types, listed in Table 1, are therefore used.

Assume that the insufficient data of Y contain m point data a i (i = 1, ⋯ , m) and n interval data \( \left[{\underline{b}}_j,{\overline{b}}_j\right]\kern0.2em \left( j=1,\cdots, n\right) \). Let f y (y|ξ, θ k ) denote the probability density function (PDF) under candidate distribution type θ k (k = 1, ⋯ , 8) and distribution parameters ξ. First, for every candidate distribution type θ k , the likelihood function L(ξ, θ k ) (Sankararaman and Mahadevan 2011) is constructed from the prescribed point data and interval data.
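As an illustration of how such a likelihood can be evaluated, the following minimal sketch assumes the commonly used form in which each point datum contributes its PDF value and each interval datum contributes the CDF difference across the interval. A Normal candidate and a coarse grid search are assumptions made here for brevity; the function names are hypothetical and the data are those of y in Example 1.

```python
import math

def normal_logpdf(y, mu, sigma):
    """Log of the Normal PDF, written analytically to avoid underflow."""
    z = (y - mu) / sigma
    return -0.5 * z * z - math.log(sigma * math.sqrt(2.0 * math.pi))

def normal_cdf(y, mu, sigma):
    return 0.5 * (1.0 + math.erf((y - mu) / (sigma * math.sqrt(2.0))))

def log_likelihood(mu, sigma, points, intervals):
    """Assumed form: sum of log PDF at points plus log CDF mass of intervals."""
    total = sum(normal_logpdf(a, mu, sigma) for a in points)
    for lo, hi in intervals:
        mass = normal_cdf(hi, mu, sigma) - normal_cdf(lo, mu, sigma)
        if mass <= 0.0:          # interval has no probability mass numerically
            return float("-inf")
        total += math.log(mass)
    return total

def grid_mle(points, intervals, mus, sigmas):
    """Coarse grid search standing in for a proper optimizer."""
    best = max((log_likelihood(m, s, points, intervals), m, s)
               for m in mus for s in sigmas)
    return best[1], best[2], best[0]

# Data of the sparse variable y from Example 1
points = [3.8, 4.1, 5.6]
intervals = [(3.5, 4.0), (3.9, 4.1), (5.0, 6.0)]
mus = [3.0 + 0.02 * i for i in range(151)]      # 3.0 ... 6.0
sigmas = [0.2 + 0.02 * i for i in range(141)]   # 0.2 ... 3.0
mu_hat, sigma_hat, ll_max = grid_mle(points, intervals, mus, sigmas)
print(mu_hat, sigma_hat, ll_max)
```

The same structure would apply to the other candidate types by swapping in their PDF and CDF; the maximized log-likelihood `ll_max` is the quantity that enters the AIC comparison.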

The maximum likelihood estimates of ξ under distribution type θ k are obtained by maximizing L(ξ, θ k ). The uncertainty of the distribution parameters ξ is then calculated using Bayes’ theorem, and the probability density function f ξ (ξ|θ k ) of the distribution parameters ξ is expressed in (4).

3.2 Distribution type identification based on Akaike information criterion

For the same insufficient input data, the estimated distribution parameters differ from one candidate distribution type to another. Therefore, the Akaike information criterion (AIC) is employed to select the best-fitted distribution types and the corresponding weight ratios.

AIC is a relative quality measure of statistical models for a given set of data, and it can be used to compare how well the candidate distributions fit (Gelman et al. 2013). The AIC value of the candidate distribution type θ k is defined in (5).

where num k is the number of estimated distribution parameters in the candidate distribution type θ k , L max(ξ, θ k ) is the maximum value of the likelihood function L(ξ, θ k ) under distribution type θ k , and the subscript k (k = 1, ⋯ , 8) indexes the eight candidate distribution types defined in Section 3.1.

The AIC values of the eight candidate distribution types are calculated and denoted by AIC 1 , AIC 2 , ⋯ , AIC 8, respectively. Let AIC min be the minimum of these values. Then, the relative probability P θ_k that the k-th model minimizes the estimated information loss is given in (6).

The distribution types with P θ_k ≥ 0.1 (Taguri et al. 2014) are selected to represent the sparse variables Y. The weight ratios w k of these selected distribution types are proportional to the probability P θ_k , and the summation of these weight ratios is 1.
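The displayed equations for this subsection are not reproduced in this text. For reference, the standard AIC definition, the usual relative-likelihood form, and the normalization described above can be written as follows. This is a sketch that presumably corresponds to (5)-(7); it agrees with the numerical example in this section, and the index set S is notation introduced here.

```latex
\begin{align}
\mathrm{AIC}_k &= 2\, num_k - 2 \ln L_{\max}(\xi, \theta_k), \\
P_{\theta_k} &= \exp\!\left( \frac{\mathrm{AIC}_{\min} - \mathrm{AIC}_k}{2} \right), \\
w_k &= \frac{P_{\theta_k}}{\sum_{l \in S} P_{\theta_l}}, \qquad
S = \{\, l : P_{\theta_l} \ge 0.1 \,\}.
\end{align}
```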

As an example, suppose that the AIC values are 100, 102, 104, 106, 108, 110, 112 and 114, respectively. Then the probabilities P θ of these distribution types are approximately 1, 0.368, 0.135, 0.050, 0.018, 0.0067, 0.0025 and 0.0009, respectively. Only the first three satisfy P θ ≥ 0.1. Therefore, the distribution type 1 (Normal distribution), distribution type 2 (F distribution) and distribution type 3 (Gamma distribution) are used, and after normalization by 1 + 0.368 + 0.135 = 1.503 the weight ratios are 0.665, 0.245, and 0.090, respectively.
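The arithmetic of this example can be checked with a short script. It is a minimal sketch assuming the usual relative-likelihood form exp((AIC min − AIC k)/2); the function names are hypothetical.

```python
import math

def relative_probabilities(aic_values):
    """Relative probability of each candidate, assuming exp((AIC_min - AIC_k) / 2)."""
    aic_min = min(aic_values)
    return [math.exp((aic_min - a) / 2.0) for a in aic_values]

def select_and_weight(aic_values, threshold=0.1):
    """Keep candidates with probability >= threshold and normalize their weights."""
    probs = relative_probabilities(aic_values)
    selected = [k for k, p in enumerate(probs) if p >= threshold]
    total = sum(probs[k] for k in selected)
    # Keys are 1-based distribution type indices, as in the text.
    return {k + 1: probs[k] / total for k in selected}

aic = [100, 102, 104, 106, 108, 110, 112, 114]
probs = relative_probabilities(aic)
weights = select_and_weight(aic)
print([round(p, 4) for p in probs])
print({k: round(w, 3) for k, w in weights.items()})
```

The selected types are 1, 2 and 3, with weights that round to 0.665, 0.245 and 0.090, matching the values quoted above.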

3.3 Distribution parameters estimation using mixed distribution types

After determining the distribution types and corresponding weight ratios, the distribution parameters are determined using the Bayesian model averaging method (Nannapaneni et al. 2016).

The probability density function under multiple distribution types is calculated using (8).

where θ k is the selected distribution type, w k is the corresponding weight ratio, and f y (y|ξ, θ k ) is the probability density function under distribution type θ k .

The combined likelihood function for insufficient input point data and interval data is constructed as follows.

The optimum distribution parameters ξ ∗ of the selected distribution types are calculated through maximizing L(ξ). Further, the uncertainty of the distribution parameters ξ is calculated using Bayes’ theorem. The probability density function f ξ (ξ) is expressed in (10).
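Since the displayed equations are not reproduced in this text, the following is one plausible reconstruction of (8)-(10) from the surrounding definitions, not necessarily the authors' exact notation. It assumes that the mixture PDF is a weighted sum over the selected types, that each interval datum contributes the probability mass of the mixture over that interval, and that the posterior of ξ is the likelihood normalized under a uniform prior. Here F_Y denotes the cumulative distribution function of the mixture, a symbol introduced for this sketch.

```latex
\begin{align}
f_Y(y \mid \xi) &= \sum_{k} w_k \, f_y(y \mid \xi, \theta_k), \\
L(\xi) &\propto \prod_{i=1}^{m} f_Y(a_i \mid \xi)
          \prod_{j=1}^{n} \left[ F_Y(\overline{b}_j \mid \xi) - F_Y(\underline{b}_j \mid \xi) \right], \\
f_\xi(\xi) &= \frac{L(\xi)}{\int L(\xi)\, \mathrm{d}\xi}.
\end{align}
```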

3.4 Discrete representation of uncertain distribution parameters

To reduce the computational difficulty in the representation of sparse variables, the continuous uncertain distribution parameters ξ are discretized in the neighborhood of the optimum distribution parameters ξ ∗. For example, suppose there are mm parameters ξ 1 , ξ 2 , ⋯ , ξ mm in ξ, and the optimum parameters are \( {\xi}_1^{\ast },{\xi}_2^{\ast },\cdots, {\xi}_{mm}^{\ast } \), respectively. When ξ i is discretized, ξ 1 , ⋯ , ξ i − 1 , ξ i + 1 , ⋯ , ξ mm are fixed at \( {\xi}_1^{\ast },\cdots, {\xi}_{i-1}^{\ast },{\xi}_{i+1}^{\ast },\cdots, {\xi}_{mm}^{\ast } \), respectively. The step-by-step procedure to discretize ξ i is as follows.

Step 1: The lower and upper bounds of the distribution parameter ξ i are determined by analyzing the probability density function f ξ (ξ) in (10). In theory, the uncertain design space of ξ i is (−∞, +∞), so finite bounds cannot be obtained directly. The 99% confidence interval is therefore used: the lower bound is set to \( {\xi}_i^{\min }={\Gamma}_{\xi_i}^{-1}(0.005) \) and the upper bound is set to \( {\xi}_i^{\max }={\Gamma}_{\xi_i}^{-1}(0.995) \), where \( {\Gamma}_{\xi_i}^{-1}(p) \) is the inverse cumulative distribution function of ξ i .

Step 2: The interval \( \left[{\xi}_i^{\min },{\xi}_i^{\max}\right] \) is decomposed according to the inverse cumulative distribution function. The points \( {\xi}_i^j={\Gamma}_{\xi_i}^{-1}\left(0.1 j\right), j=1,\cdots, 9 \) are calculated, and the design space of ξ i is then decomposed into ten Bayesian evidence intervals, \( \left[{\xi}_i^{\min },{\xi}_i^1\right] \), \( \left[{\xi}_i^j,{\xi}_i^{j+1}\right]\left( j=1,\cdots, 8\right) \) and \( \left[{\xi}_i^9,{\xi}_i^{\max}\right] \), which have the same BPA m = 0.1.

Step 3: Long intervals are decomposed further. If the interval length \( {\xi}_i^{j+1}-{\xi}_i^j>0.2\left({\xi}_i^{\max }-{\xi}_i^{\min}\right) \), the interval is split into \( \left[{\xi}_i^j,\left({\xi}_i^j+{\xi}_i^{j+1}\right)/2\right] \) and \( \left[\left({\xi}_i^j+{\xi}_i^{j+1}\right)/2,{\xi}_i^{j+1}\right] \), and the corresponding BPAs are calculated using the cumulative distribution function of ξ i .

Step 4: If the length of a resulting sub-interval still exceeds \( 0.2\left({\xi}_i^{\max }-{\xi}_i^{\min}\right) \), Step 3 is applied again.

Step 5: After Step 4, the range of the uncertain distribution parameter ξ i is decomposed into nm sub-intervals \( \left[{\xi}_i^{j-1},{\xi}_i^j\right]\left( j=1,\cdots, nm\right) \), where \( {\xi}_i^0={\xi}_i^{\min } \) and \( {\xi}_i^{nm}={\xi}_i^{\max } \). Then ξ i is represented using the discrete points \( \left({\xi}_i^{j-1}+{\xi}_i^j\right)/2\left( j=1,\cdots, nm\right) \), each carrying the BPA of its interval \( \left[{\xi}_i^{j-1},{\xi}_i^j\right] \).
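The five steps can be sketched in a few lines. The sketch below assumes, purely for illustration, that the posterior of a single parameter ξ i is Normal, so that its CDF and inverse CDF are available from the standard library. When a long interval is split, the parent BPA is divided in proportion to the CDF mass of each half, which is one possible reading of Step 3. The function name and the posterior values are hypothetical.

```python
from statistics import NormalDist

def discretize(dist, n_equal=10, tail=0.005, max_frac=0.2):
    """Return (midpoint, BPA) pairs for one distribution parameter."""
    # Step 1: bounds from the 99% confidence interval
    lo, hi = dist.inv_cdf(tail), dist.inv_cdf(1.0 - tail)
    # Step 2: ten intervals delimited by the deciles, each with BPA 0.1
    edges = [lo] + [dist.inv_cdf(j / n_equal) for j in range(1, n_equal)] + [hi]
    cells = [(edges[j], edges[j + 1], 1.0 / n_equal) for j in range(n_equal)]
    limit = max_frac * (hi - lo)
    # Steps 3-4: halve any interval longer than the limit, repeatedly
    done = []
    while cells:
        a, b, bpa = cells.pop()
        if b - a > limit:
            mid = 0.5 * (a + b)
            # Assumption: split the parent BPA by the CDF mass of each half
            share = (dist.cdf(mid) - dist.cdf(a)) / (dist.cdf(b) - dist.cdf(a))
            cells.append((a, mid, bpa * share))
            cells.append((mid, b, bpa * (1.0 - share)))
        else:
            done.append((a, b, bpa))
    done.sort()
    # Step 5: represent each sub-interval by its midpoint
    return [(0.5 * (a + b), bpa) for a, b, bpa in done]

# Hypothetical posterior of one parameter: Normal with mean 4.5, std 0.1
points = discretize(NormalDist(mu=4.5, sigma=0.1))
for xi, bpa in points:
    print(round(xi, 4), round(bpa, 4))
```

For a Normal posterior only the two tail intervals exceed 20% of the total range, so each is halved once and the parameter ends up represented by twelve discrete points whose BPAs sum to one.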

4 Representation of interval variables based on evidence theory

4.1 Evidence theory

Evidence theory, also referred to as Dempster-Shafer (DS) theory, was introduced by Shafer (1976). It combines evidence from different incomplete knowledge situations and arrives at a degree of belief that considers all available evidence. In evidence theory, the basic probability assignment (BPA) represents the uncertain distribution. There are three BPA structures: general, Bayesian, and consonant (Tao et al. 2016).

Because no experimental input data are available and the BPAs are acquired from expert knowledge, the Bayesian BPA structure is used to represent the interval variables Z. Let Ψ denote the set of all possible values of the interval variable Z i , and let γ be a subset of Ψ. The BPA \( {s}_{Z_i}\left(\gamma \right) \) satisfies the following axioms (Du 2008).

When there are multiple interval variables, their BPA structures can be aggregated using the rules of combination, in a way similar to a joint probability in probability theory. For example, for two interval variables Z 1 and Z 2, the joint BPA s Z (γ Z ) can be calculated using (12).

4.2 Uncertain measure of interval variables

In a reliability analysis problem that considers only the interval variables Z = {Z 1, Z 2, ⋯ , Z q }, the system performance function is expressed by G = g(Z), and the failure region F is defined as F = {Z|g(Z) ≤ c}, where c is the limit state. With m Z (γ) denoting the joint BPA over the interval space Z = {Z 1, Z 2, ⋯ , Z q }, the failure likelihood of the system performance function is quantified by the belief measure Bel(F) and the plausibility measure Pl(F).

The belief measure Bel(F) is the lower bound of the failure probability and reflects the degree of belief that the failure event F would occur. It is calculated by adding the BPAs of the subsets γ that lie entirely within the failure region F = {Z|g(Z) ≤ c}, as shown in (13).

The plausibility measure Pl(F) is the upper bound of the failure probability. It is calculated by adding the BPAs of all subsets γ that lie entirely within the failure region or intersect it, as defined in (14).

The true failure probability p F is therefore bounded by the belief and plausibility measures, namely Bel(F) ≤ p F ≤ Pl(F).
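A small sketch shows how the two measures are accumulated for interval variables only. It assumes independent variables, so that the joint BPA is the product of the marginal BPAs, and a performance function that is monotone in each variable, so that its extreme values over a box occur at the corners. The function g, the focal intervals and the BPAs are hypothetical illustration values.

```python
from itertools import product

def belief_plausibility(g, focal_sets, c):
    """focal_sets: one list per interval variable of ((lo, hi), bpa) pairs."""
    bel = pl = 0.0
    for combo in product(*focal_sets):
        joint_bpa = 1.0
        for _, bpa in combo:
            joint_bpa *= bpa                      # product rule for the joint BPA
        boxes = [interval for interval, _ in combo]
        # Corner evaluation is exact only if g is monotone in each variable
        values = [g(*corner) for corner in product(*boxes)]
        if max(values) <= c:                      # box entirely inside F
            bel += joint_bpa
        if min(values) <= c:                      # box intersects F
            pl += joint_bpa
    return bel, pl

def g(z1, z2):
    return z1 + z2                                # hypothetical performance function

z1_sets = [((1.0, 2.0), 0.3), ((2.0, 3.0), 0.7)]
z2_sets = [((0.0, 0.4), 0.5), ((1.0, 2.0), 0.5)]
bel, pl = belief_plausibility(g, [z1_sets, z2_sets], c=2.5)
print(round(bel, 4), round(pl, 4))
```

With these values only the box [1, 2] × [0, 0.4] lies wholly in the failure region, giving Bel(F) = 0.15, while three of the four boxes intersect it, giving Pl(F) = 0.65.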

5 Uncertainty quantification considering multiple types of uncertain input variables

5.1 Reliability calculation considering mixture uncertainties

The system performance function considering three types of uncertainties can be expressed as G = g(X, Y(ξ), Z). The strong statistical variables X are expressed using probability distribution function with determinate distribution parameters. The sparse variables Y are determined with multiple distribution types, and the distribution parameters ξ are represented using discrete sampling points with their BPAs. The interval variables Z are quantified using intervals with their BPAs.

The uncertain distribution parameters ξ are represented using discrete sampling points (ξ i − 1 + ξ i)/2(i = 1, ⋯ , nm) with the BPA of interval [ξ i − 1, ξ i], and the uncertain interval variables Z are represented using intervals [η j , η j + 1](j = 1, ⋯ , nn) with their BPAs.

According to the theorem of total probability, the total failure probability p F can be computed by (16), where the failure probability p F_ij of each pair of sub-intervals is given in (17).

The minimum and maximum of p F_ij are denoted by \( {p}_{F\_ ij}^L \) and \( {p}_{F\_ ij}^U \), respectively, and are calculated using the Kriging model and the random sampling method. The Optimal Latin Hypercube technique is used to obtain the sample points, and the Kriging metamodel \( \widehat{G} \) of the system performance function G is then constructed using the MATLAB Kriging toolbox DACE (Søren et al. 2017). In the sub-interval ξ ∈ [ξ i − 1, ξ i] and Z ∈ [η j , η j + 1], ξm random samples of ξ and zn random samples of Z are drawn, and the system performance at these sample points is evaluated using the Kriging metamodel \( \widehat{G} \). The minimum and maximum of \( \widehat{G} \) for every sample of Z are denoted by Min ij − q and Max ij − q , respectively, where q = 1, ⋯ , zn. Then \( {p}_{F\_ ij}^L \) and \( {p}_{F\_ ij}^U \) are the failure probabilities associated with Min ij − q and Max ij − q , and are calculated in (18) and (19).

After the calculation of \( {p}_{F\_ ij}^L \) and \( {p}_{F\_ ij}^U \), the belief and plausibility of p F are calculated in (20) and (21), respectively.
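The overall calculation can be sketched for the performance function G = (x + y)z of Example 1. Several assumptions are made for illustration: G is evaluated directly instead of through a Kriging metamodel; y is taken as Normal with a fixed standard deviation and an uncertain mean represented by hypothetical discrete points with BPAs; the z intervals and their BPAs are hypothetical; and the lower and upper cell probabilities are read as the fractions of random samples for which failure occurs for all sampled z and for at least one sampled z, respectively. The belief and plausibility are then taken as BPA-weighted sums of these cell bounds.

```python
import random

def g(x, y, z):
    return (x + y) * z                             # performance function of Example 1

def cell_failure_bounds(mu_y, z_interval, c, rng, n_xy=2000, n_z=20):
    """Lower/upper failure probability in one (parameter point, z interval) cell."""
    z_lo, z_hi = z_interval
    z_samples = [z_lo, z_hi] + [rng.uniform(z_lo, z_hi) for _ in range(n_z)]
    fails_everywhere = fails_somewhere = 0
    for _ in range(n_xy):
        x = rng.gauss(6.0, 0.5)                    # strong statistical variable x
        y = rng.gauss(mu_y, 0.8)                   # sparse variable y (assumed Normal)
        values = [g(x, y, z) for z in z_samples]
        fails_everywhere += max(values) <= c       # fails for every sampled z
        fails_somewhere += min(values) <= c        # fails for at least one sampled z
    return fails_everywhere / n_xy, fails_somewhere / n_xy

def belief_plausibility(param_points, z_cells, c, seed=0):
    """BPA-weighted sums of the cell bounds over all cells."""
    rng = random.Random(seed)
    bel = pl = 0.0
    for mu_y, bpa_xi in param_points:
        for z_interval, bpa_z in z_cells:
            p_low, p_up = cell_failure_bounds(mu_y, z_interval, c, rng)
            bel += bpa_xi * bpa_z * p_low
            pl += bpa_xi * bpa_z * p_up
    return bel, pl

# Hypothetical discrete points for the mean of y and hypothetical z intervals
param_points = [(4.2, 0.25), (4.5, 0.5), (4.8, 0.25)]
z_cells = [((1.0, 2.0), 0.4), ((2.0, 3.0), 0.6)]
bel, pl = belief_plausibility(param_points, z_cells, c=25.0)
print(bel, pl)
```

In the proposed method the calls to g would be replaced by evaluations of the Kriging metamodel, which is where the reduction in performance function evaluations comes from.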

5.2 Calculation procedure of the proposed algorithm

The uncertainty identification and quantification of system failure probability are implemented using mixture of three types of uncertainties based on insufficient input data. The procedure of the proposed algorithm is summarized as follows.

(1) The uncertain variables are divided into strong statistical variables X, sparse variables Y, and interval variables Z according to the amount of available experimental input data.

(2) The strong statistical variables X are represented using probability distribution functions with determinate distribution parameters.

(3) The interval variables Z are represented by sub-intervals with basic probability assignments.

(4) For the sparse variables Y, the distribution parameters under multiple distribution types are calculated by maximizing L(ξ, θ k ) based on the sparse input point and interval data. Then, the best-fitting distribution types θ and the corresponding weight ratios w are calculated using the AIC method, and the distribution parameters ξ and their probability density function f ξ (ξ) are determined using the Bayesian model averaging method.

(5) The continuous uncertain distribution parameters ξ are discretized to reduce the computational complexity. ξ are represented using discrete points (ξ i − 1 + ξ i)/2(i = 1, ⋯ , nm) with the BPA of interval [ξ i − 1, ξ i].

(6) The Kriging model \( \widehat{G} \) of the system performance function G = g(X, Y(ξ), Z) is constructed from samples generated by the Optimal Latin Hypercube technique. In the ij-th sub-interval, where ξ ∈ [ξ i − 1, ξ i] and Z ∈ [η j , η j + 1], the minimum value Min ij − q and maximum value Max ij − q of \( \widehat{G} \) are calculated using the random sampling method, and then \( {p}_{F\_ ij}^L \) and \( {p}_{F\_ ij}^U \) are calculated.

(7) The belief Bel(G) and plausibility Pl(G) of p F are calculated using (20) and (21).

6 Numerical examples

6.1 Example 1-mathematical problem

A mathematical example is considered to demonstrate the effectiveness of the proposed method. The system performance function is G = (x + y)z, where x is a strong statistical variable, y is a sparse variable, and z is an interval variable.

The strong statistical variable x is assumed to follow a Normal distribution with mean 6 and standard deviation 0.5, i.e., x ~ N(6, 0.5²). The available data of y contain the sparse points {3.8, 4.1, 5.6} and the intervals [3.5, 4], [3.9, 4.1], [5, 6]. The intervals and BPAs of z are shown in Table 2. The input data of these three variables are shown in Fig. 1.

The eight candidate distribution types θ in Table 1 are used to identify the distribution parameters ξ of y. The optimum distribution parameters under these distribution types are calculated by maximizing L(ξ, θ k ) in (3), and the results are listed in Table 3. The probability density functions of y with the optimum distribution parameters under these candidate distribution types are shown in Fig. 2.

The best-fitted distribution types are selected using the AIC method. The AIC values of the eight distribution types are also listed in Table 3. The distribution type with the minimum AIC value is the Uniform distribution, and the relative probabilities p θ_k of the eight distribution types are 0.1182, 6.5155 × 10⁻⁷, 0.1464, 0.0890, 0.0591, 1, 1.1240 × 10⁻⁷, and 0.024, respectively. According to the algorithm in Section 3.2, the best-fitted distribution types are the Uniform, Normal, and Gamma distributions, and their weight ratios w are 0.7908, 0.0935, and 0.1157, respectively. The uncertainties of the distribution parameters are then calculated using (10), and the results are shown in Fig. 3.

To reduce the computational complexity, the uncertain distribution parameters are discretized into discrete points with their BPAs; the discretized results of the six distribution parameters are shown in Fig. 4. The Kriging model of the performance function G is constructed based on 10³ samples generated using the Optimal Latin Hypercube method, and the root mean square error (RMSE) of the constructed Kriging model is less than 0.0258. The uncertainty of the system performance function G is estimated using both the proposed unified quantification framework, which considers the three types of input uncertainties, and the Monte Carlo method. The belief and plausibility measures under different reliability limit values c are calculated and shown in Fig. 5a. The comparisons of the belief and plausibility results at the moderate reliability (0.90 < Reliability < 0.99) and high reliability (Reliability > 0.99) levels are shown in Fig. 5b and Fig. 5c, respectively. The uncertainty results of the performance function G under the limit state c = 1000 are listed in Table 4. The proposed framework obtains accurate uncertainty quantification results for the performance function, and the number of system performance function evaluations decreases from 6 × 10⁷ to 10³, so the computational cost is reduced substantially.

6.2 Example 2-crank slider mechanism

The crank-slider mechanism in Du (2008) is used to demonstrate the effectiveness and application of the proposed method. The mechanism is shown in Fig. 6. The length of the crank x 1, the length of the coupler x 2 and the external force x 3 are strong statistical variables, and their distributions are given in Table 5. Unlike in Du (2008), the material Young’s modulus y 1 and the yield strength y 2 of the coupler are assumed to be sparse variables. The available data of y 1 are the points {195, 200, 204} (GPa) and the intervals [180, 197], [199, 209], [210, 212] (GPa). The available data of y 2 are the points {280, 290, 298} (MPa) and the intervals [270, 282], [288, 292], [299, 313] (MPa). The friction coefficient z 1 and the offset z 2 are interval variables, and their sub-intervals and BPAs are shown in Table 6. The internal diameter d 1 and external diameter d 2 of the coupler are 25 mm and 60 mm, respectively.

The system performance function is defined by the difference between the critical load and the axial load, which is written in (22).

By comparing the AIC values of the eight candidate distribution types, the uncertainty of the yield strength y 1 is represented using a weighted sum of the Normal, Gamma, Weibull, and Extreme distributions with weight ratios of 0.352, 0.091, 0.284, and 0.273, respectively. The uncertainties of the distribution parameters are shown in Fig. 7.

The failure event is defined by F = {X, Y, Z|G(X, Y, Z) < c}, where c is the limit state. The Kriging model is constructed based on 2 × 10³ samples, and the RMSE of the Kriging model is less than 0.0321. The belief and plausibility measures under different reliability limit values c are calculated using the proposed method and the Monte Carlo simulation (MCS) method, with 9 × 10⁴ samples for every limit value c in the MCS method. The results are shown in Fig. 8. In the range of limit state 180 ≤ c ≤ 250, the belief and plausibility values of the proposed method closely match those of the MCS method, which shows that the unified uncertainty quantification method presented in the paper is effective and practical.

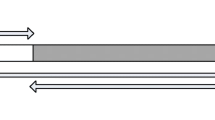

6.3 Example 3-heat exchanger

A practical heat exchanger structure, shown in Fig. 9, is considered. It consists of an inlet header, fin channels and an outlet header. The hot and cold streams flow from the inlet header into the parallel fin channels and then flow out through the outlet header. The fin channels, which have dimensions of 1000 × 480 × 380 mm (length × width × height), are the main heat transfer region of the hot and cold streams, and the heat transfer rate between the hot streams (air) and the cold streams (water) through the separating fins (Fig. 10) is an important index for evaluating performance. The fin heights of the hot stream x 1 and the cold stream x 2 are strong statistical variables because of manufacturing error, and their distributions are listed in Table 7. The fin space z 1 of the hot stream and cold stream is fabricated within predetermined length intervals, so z 1 is an interval variable; its sub-intervals and BPAs are listed in Table 8. Only sparse sampling point and interval data can be acquired by the data acquisition machine for the input temperature and velocity of the hot and cold streams y 1 ~ y 4, so y 1 ~ y 4 are sparse variables, and their sampling values are listed in Table 9.

The heat transfer rate is the most important index for heat exchanger, so the limit state function can be defined in (23).

where Q is the heat transfer rate, which can be calculated after simulating the temperature distribution in the fin channel using the finite element analysis (FEA) method, and Q m = 500 kW is the allowable minimum heat transfer rate. In the FEA, the simulation model is constructed and meshed using hexahedral elements in Gambit, the inlets of the streams are set to velocity inlets, and the outlets are set to outflow. The interfaces between the flow regions and the fin regions are set to be coupled in order to calculate the heat transfer rate. The meshed structures are analyzed in Fluent, and the temperature and pressure distributions in the heat exchanger are then calculated. The simulated temperature distributions in the hot streams are plotted in Fig. 11.

The distribution types and distribution parameters of the sparse variables y 1 ~ y 4 are identified using the rules in Section 3, and the results are shown in Table 10. The Kriging model is constructed based on the FEA results of 2 × 10³ samples and is shown in Fig. 12. The presented unified uncertainty quantification method and the conventional MCS method are then applied to solve the problem. The total number of function calls made by the proposed algorithm is 5 × 10³, and the calculation finishes in 2 h, whereas the MCS method requires 7.2 × 10⁸ performance function evaluations and about 3 days. The belief and plausibility values of the proposed method and the MCS method are listed in Table 11. The differences between the two sets of results are less than 1.7%, which shows that the method presented in the paper is effective and practical.

7 Conclusions

A unified uncertainty quantification method that considers strong statistical variables, sparse variables and interval variables simultaneously is proposed. To increase accuracy and decrease computational difficulty in the representation of sparse variables, mixed distribution types are identified, and the continuous uncertain distribution parameters are discretized based on their probability density functions. A unified uncertainty quantification framework is then constructed, in which the strong statistical variables and sparse variables are represented through random sampling of their distribution functions and the interval variables are represented using evidence theory. The belief and plausibility of the system performance function are calculated, and a Kriging model of the performance function is used to increase computational efficiency. The proposed method and the MCS method are used to calculate the failure probability in three examples. The results of the presented method are almost the same as the reference MCS solutions, and the proposed method requires far fewer samples than the conventional method.

References

Arendt PD, Apley DW, Chen W (2012) Quantification of model uncertainty: calibration, model discrepancy, and identifiability. J Mech Des 134:100908. doi:10.1115/1.4007390

Bae HR, Grandhi RV, Canfield RA (2004) Epistemic uncertainty quantification techniques including evidence theory for large-scale structures. Comput Struct 82:1101–1112. doi:10.1016/j.compstruc.2004.03.014

Beer M, Ferson S, Kreinovich V (2013) Imprecise probabilities in engineering analyses. Mech Syst Signal Process 37:4–29. doi:10.1016/j.ymssp.2013.01.024

Chee CS (2017) A mixture model-based nonparametric approach to estimating a count distribution. Comput Stat Data Anal 109:34–44. doi:10.1016/j.csda.2016.11.012

Cho H, Choi KK, Gaul NJ, Lee I, Lamb D, Gorsich D (2016) Conservative reliability-based design optimization method with insufficient input data. Struct Multidiscip Optim 54:1609–1630. doi:10.1007/s00158-016-1492-4

Choi CK, Yoo HH (2016) Stochastic inverse method to identify parameter random fields in a structure. Struct Multidiscip Optim 54:1557–1571. doi:10.1007/s00158-016-1534-y

Contreras AA, Olivier PLM, Wilkins A, Omar MK (2016) Multi-model polynomial chaos surrogate dictionary for Bayesian inference in elasticity problems. Probabilist Eng Mech 46:107–119. doi:10.1016/j.probengmech.2016.08.004

Du X (2006) Uncertainty analysis with probability and evidence theories. Paper presented at the ASME 2006 international design engineering technical conference, USA

Du X (2008) Unified uncertainty analysis by the first order reliability method. J Mech Des 130:091401. doi:10.1115/1.2943295

Gal Y, Ghahramani Z (2016) Dropout as a Bayesian approximation: representing model uncertainty in deep learning. In: International conference on machine learning, pp 1050–1059

Gelman A, Hwang J, Vehtari A (2013) Understanding predictive information criteria for Bayesian models. Stat Comput 24:997–1016. doi:10.1007/s11222-013-9416-2

Hu Z, Ao D, Mahadevan S (2017) Calibration experimental design considering field response and model uncertainty. Comput Methods Appl Mech Eng 318:92–119. doi:10.1016/j.cma.2017.01.007

Jiang Z, Chen S, Apley DW, Chen W (2016) Reduction of epistemic model uncertainty in simulation-based multidisciplinary design. J Mech Des 138:081403. doi:10.1115/1.4033918

Kang YJ, Lim OK, Noh Y (2016) Sequential statistical modeling method for distribution type identification. Struct Multidiscip Optim. doi:10.1007/s00158-016-1567-2

Li G, Lu Z, Li L, Ren B (2016) Aleatory and epistemic uncertainties analysis based on non-probabilistic reliability and its kriging solution. Appl Math Model 40:5703–5716. doi:10.1016/j.apm.2016.01.017

Liu X, Yin L, Hu L, Zhang Z (2017) An efficient reliability analysis approach for structure based on probability and probability box models. Struct Multidiscip Optim. doi:10.1007/s00158-017-1659-7

Matsumura T, Haftka RT (2013) Reliability based design optimization modeling future redesign with different epistemic uncertainty treatments. J Mech Des 135:091006. doi:10.1115/1.4024726

Mullins J, Ling Y, Mahadevan S, Sun L, Strachan A (2016) Separation of aleatory and epistemic uncertainty in probabilistic model validation. Reliab Eng Syst Saf 147:49–59. doi:10.1016/j.ress.2015.10.003

Nannapaneni S, Hu Z, Mahadevan S (2016) Uncertainty quantification in reliability estimation with limit state surrogates. Struct Multidiscip Optim. doi:10.1007/s00158-016-1487-1

Oberkampf WL, Helton JC, Joslyn CA, Wojtkiewicz SF, Ferson S (2004) Challenge problems: uncertainty in system response given uncertain parameters. Reliab Eng Syst Saf 85:11–19. doi:10.1016/j.ress.2004.03.002

Paulson EJ, Starkey RP (2013) Development of a multistage reliability-based design optimization method. J Mech Des 136:011007. doi:10.1115/1.4025492

Ren Z, Cho H, Yeon J, Koh CS (2015) A new reliability analysis algorithm with insufficient uncertainty data for optimal robust design of electromagnetic devices. IEEE Trans Magn 51:1–4. doi:10.1109/tmag.2014.2360753

Sankararaman S, Mahadevan S (2011) Likelihood-based representation of epistemic uncertainty due to sparse point data and/or interval data. Reliab Eng Syst Saf 96:814–824. doi:10.1016/j.ress.2011.02.003

Sankararaman S, Mahadevan S (2013) Distribution type uncertainty due to sparse and imprecise data. Mech Syst Signal Process 37:182–198. doi:10.1016/j.ymssp.2012.07.008

Shafer G (1976) A mathematical theory of evidence. Princeton university press, Princeton

Simoen E, De Roeck G, Lombaert G (2015) Dealing with uncertainty in model updating for damage assessment: a review. Mech Syst Signal Process 56–57:123–149. doi:10.1016/j.ymssp.2014.11.001

Søren NL, Hans BN, Jacob S (2017) DACE: a Matlab kriging toolbox (Version 2.0). http://www2.imm.dtu.dk/projects/dace/. Accessed 7 February 2017

Suryawanshi A, Ghosh D (2015) Reliability based optimization in aeroelastic stability problems using polynomial chaos based metamodels. Struct Multidiscip Optim. doi:10.1007/s00158-015-1322-0

Taguri M, Matsuyama Y, Ohashi Y (2014) Model selection criterion for causal parameters in structural mean models based on a quasi-likelihood. Biometrics 70:721–730. doi:10.1111/biom.12165

Tao YR, Cao L, Huang ZHH (2016) A novel evidence-based fuzzy reliability analysis method for structures. Struct Multidiscip Optim 55:1237–1249. doi:10.1007/s00158-016-1570-7

Wang H, Zeng Y, Yu X, Li G, Li E (2015) Surrogate-assisted Bayesian inference inverse material identification method and application to advanced high strength steel. Inverse Probl Sci Eng 24(7):1133–1161. doi:10.1080/17415977.2015.1113960

Xi Z, Youn BD, Jung BC, Yoon JT (2014) Random field modeling with insufficient field data for probability analysis and design. Struct Multidiscip Optim 51:599–611. doi:10.1007/s00158-014-1165-0

Xia Y, Liu C, Li Y, Liu N (2017) A boosted decision tree approach using Bayesian hyper-parameter optimization for credit scoring. Expert Syst Appl 78:225–241. doi:10.1016/j.eswa.2017.02.017

Yoo D, Lee I (2013) Sampling-based approach for design optimization in the presence of interval variables. Struct Multidiscip Optim 49:253–266. doi:10.1007/s00158-013-0969-7

Zaman K, McDonald M, Mahadevan S (2011) Probabilistic framework for uncertainty propagation with both probabilistic and interval variables. J Mech Des 133:021010. doi:10.1115/1.4002720

Zhu Z, Du X (2016) Reliability analysis with Monte Carlo simulation and dependent kriging predictions. J Mech Des 138:121403. doi:10.1115/1.4034219

Ethics declarations

Funding

This work was supported by the National Natural Science Foundation of China under grant [number: 51505421, 51375451, U1608256], and Zhejiang Provincial Natural Science Foundation of China under grant [number: LY15E050015].

About this article

Cite this article

Peng, X., Li, J. & Jiang, S. Unified uncertainty representation and quantification based on insufficient input data. Struct Multidisc Optim 56, 1305–1317 (2017). https://doi.org/10.1007/s00158-017-1722-4

DOI: https://doi.org/10.1007/s00158-017-1722-4