Abstract

We present a new multiparty protocol for the distributed generation of biprime RSA moduli, with security against any subset of maliciously colluding parties assuming oblivious transfer and the hardness of factoring. Our protocol is highly modular, and its uppermost layer can be viewed as a template that generalizes the structure of prior works and leads to a simpler security proof. We introduce a combined sampling-and-sieving technique that eliminates both the inherent leakage in the approach of Frederiksen et al. (Crypto’18) and the dependence upon additively homomorphic encryption in the approach of Hazay et al. (JCrypt’19). We combine this technique with an efficient, privacy-free check that retroactively detects malicious behavior when a sampled candidate is not a biprime. This allows us to overcome covert rejection-sampling attacks and to achieve both asymptotic and concrete efficiency improvements over the previous state of the art.

1 Introduction

A biprime is a number \(N\) of the form \(N=p\cdot q\) where \(p\) and \(q\) are primes. Such numbers are used as a component of the public key (i.e., the modulus) in the RSA cryptosystem [46], with the factorization being a component of the secret key. A long line of research has studied methods for sampling biprimes efficiently; in the early days, the task required specialized hardware and was not considered generally practical [44, 45]. In subsequent years, advances in computational power brought RSA into the realm of practicality and then ubiquity. Given a security parameter \({\kappa } \), the de facto standard method for sampling RSA biprimes involves choosing random \({\kappa } \)-bit numbers and subjecting them to the Miller–Rabin primality test [38, 43] until two primes are found; these primes are then multiplied to form a \(2{\kappa } \)-bit modulus. This method suffices when a single party wishes to generate a modulus and is permitted to know the associated factorization.
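The single-party method just described can be sketched as follows. This is a minimal illustration under our own assumptions (Python's `secrets` module as the randomness source, 40 Miller–Rabin rounds with random bases, and the common practice of setting the top two bits so that the modulus has exactly \(2{\kappa } \) bits); it is not hardened key-generation code.

```python
import secrets

def is_probable_prime(n: int, rounds: int = 40) -> bool:
    """Miller-Rabin test with random bases; a composite passes with probability <= 4**-rounds."""
    if n < 4:
        return n in (2, 3)
    if n % 2 == 0:
        return False
    # Write n - 1 = 2**r * d with d odd.
    d, r = n - 1, 0
    while d % 2 == 0:
        d, r = d // 2, r + 1
    for _ in range(rounds):
        a = 2 + secrets.randbelow(n - 3)  # random base in [2, n - 2]
        x = pow(a, d, n)
        if x in (1, n - 1):
            continue
        for _ in range(r - 1):
            x = pow(x, 2, n)
            if x == n - 1:
                break
        else:
            return False  # a witnesses that n is composite
    return True

def random_prime(kappa: int) -> int:
    """Draw random odd kappa-bit integers until one passes Miller-Rabin."""
    while True:
        # Setting the top two bits ensures the product of two such primes has exactly 2*kappa bits.
        c = secrets.randbits(kappa) | (3 << (kappa - 2)) | 1
        if is_probable_prime(c):
            return c

def sample_biprime(kappa: int):
    """Return (N, p, q) with N = p * q; the single sampler learns the factorization."""
    p = random_prime(kappa)
    q = random_prime(kappa)
    while q == p:
        q = random_prime(kappa)
    return p * q, p, q
```

Calling `sample_biprime(1024)` returns a 2048-bit modulus together with its factors, and it is precisely this knowledge of \(p\) and \(q\) by one party that distributed generation must avoid.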

Boneh and Franklin [4, 5] initiated the study of distributed RSA modulus generation.Footnote 1 This problem involves a set of parties who wish to jointly sample a biprime in such a way that no corrupt and colluding subset (below some defined threshold size) can learn the biprime’s factorization.

It is clear that applying generic multiparty computation (MPC) techniques to the standard sampling algorithm yields an impractical solution: implementing the Miller–Rabin primality test requires repeatedly computing \(a^{p-1}\bmod {p}\), where \(p\) is (in this case) secret, and so such an approach would require the generic protocol to evaluate a circuit containing many modular exponentiations over \({\kappa } \) bits each. Instead, Boneh and Franklin [4, 5] constructed a new biprimality test that generalizes Miller–Rabin and avoids computing modular exponentiations with secret moduli. Their test carries out all exponentiations modulo the public biprime \(N\), and this allows the exponentiations to be performed locally by the parties. Furthermore, they introduced a three-phase structure for the overall sampling protocol, which subsequent works have embraced:

1. Prime Candidate Sieving: candidate values for \(p\) and \(q\) are sampled jointly in secret-shared form, and a weak-but-cheap form of trial division sieves them, culling candidates with small factors.

2. Modulus Reconstruction: \(N:=p\cdot q\) is securely computed and revealed.

3. Biprimality Testing: using a distributed protocol, \(N\) is tested for biprimality. If \(N\) is not a biprime, then the process is repeated.
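To see why the Boneh–Franklin approach avoids secret moduli, the sketch below shows the core check of their test [5] with additively shared \(p\) and \(q\): for a random \(g\) with Jacobi symbol \(+1\), each party exponentiates modulo the public \(N\) using only its own shares, and the product of the results equals \(g^{(N-p-q+1)/4}\), which is \(\pm 1\pmod {N}\) whenever \(N\) is a biprime with \(p\equiv q\equiv 3\pmod {4}\). The share convention (party 0 holds shares \(\equiv 3\pmod {4}\), all others hold shares \(\equiv 0\pmod {4}\)), the local sampling of \(g\), and all function names are our assumptions; the sketch covers only this one check, runs all parties in one process, and offers no security against malicious parties.

```python
import secrets

def jacobi(a: int, n: int) -> int:
    """Jacobi symbol (a/n) for odd n > 0, via quadratic reciprocity."""
    assert n > 0 and n % 2 == 1
    a %= n
    result = 1
    while a != 0:
        while a % 2 == 0:
            a //= 2
            if n % 8 in (3, 5):
                result = -result
        a, n = n, a
        if a % 4 == 3 and n % 4 == 3:
            result = -result
        a %= n
    return result if n == 1 else 0

def local_value(i: int, N: int, g: int, p_i: int, q_i: int) -> int:
    """Party i's contribution; uses only the public N and g plus its own shares p_i, q_i."""
    if i == 0:
        # Party 0's shares are 3 (mod 4), so N - p_0 - q_0 + 1 is divisible by 4.
        return pow(g, (N - p_i - q_i + 1) // 4, N)
    # Other parties' shares are 0 (mod 4); a negative exponent computes an inverse mod N (Python 3.8+).
    return pow(g, -((p_i + q_i) // 4), N)

def bf_biprimality_check(N: int, p_shares, q_shares, trials: int = 40) -> bool:
    for _ in range(trials):
        # Hypothetical simplification: g is drawn locally rather than sampled jointly.
        g = 0
        while jacobi(g, N) != 1:
            g = 1 + secrets.randbelow(N - 1)
        v = 1
        for i, (p_i, q_i) in enumerate(zip(p_shares, q_shares)):
            v = v * local_value(i, N, g, p_i, q_i) % N
        # v == g^((N - p - q + 1) / 4), i.e. g^(phi(N) / 4) when N = p * q.
        if v not in (1, N - 1):
            return False  # not a biprime of the assumed form (or inconsistent shares)
    return True
```

For example, with \(N=253=23\cdot 11\) and shares \(23=7+8+8\), \(11=3+4+4\), every trial passes, whereas for \(N=105=15\cdot 7\) with shares \(15=7+4+4\), \(7=3+4+0\), each trial passes with probability only \(1/4\).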

The seminal work of Boneh and Franklin considered the semi-honest n-party setting with an honest majority of participants. Many extensions and improvements followed (as detailed in Sect. 1.3), the most notable of which (for our purposes) are two recent works that achieve malicious security against a dishonest majority. In the first, Hazay et al. [28, 29] proposed an n-party protocol in which both sieving and modulus reconstruction are achieved via additively homomorphic encryption. Specifically, they rely upon both ElGamal and Paillier encryption, and in order to achieve malicious security, they use zero-knowledge proofs for a variety of relations over the ciphertexts. Thus, their protocol represents a substantial advancement in terms of its security guarantee, but this comes at the cost of additional assumptions and an intricate proof, and also at substantial concrete cost, due to the use of many custom zero-knowledge proofs.

The subsequent protocol of Frederiksen et al. [23] (the second recent work of note) relies mainly on oblivious transfer (OT), which they use to perform both sieving and, via Gilboa’s classic multiplication protocol [24], modulus reconstruction. They achieved malicious security using the folklore technique in which a “Proof of Honesty” is evaluated as the last step, and they demonstrated practicality by implementing their protocol; however, it is not clear how to extend their approach to more than two parties in a straightforward way. Moreover, their approach to sieving admits selective-failure attacks, for which they account by including some leakage in the functionality. It also permits a malicious adversary to selectively and covertly induce false negatives (i.e., force the rejection of true biprimes after the sieving stage), a property that is again modeled in their functionality. In conjunction, these attributes degrade both security, because the adversary can rejection-sample biprimes based on the additional leaked information, and efficiency, because ruling out malicious false negatives requires running enough instances that the probability of statistical failure in all of them is negligible.

Thus, given the current state of the art, it remains unclear whether one can sample an RSA modulus among two parties (one being malicious) without leaking additional information or permitting covert rejection sampling, or whether one can sample an RSA modulus among many parties (all but one being malicious) without involving heavy cryptographic primitives such as additively homomorphic encryption, and their associated performance penalties. In this work, we present a protocol which efficiently achieves both tasks.

1.1 Results and Contributions

A Clean Functionality. We define  , a simple, natural functionality for sampling biprimes from the same well-known distribution used by prior works [5, 23, 29], with no leakage or conflation of sampling failures with adversarial behavior.

A Modular Protocol, with Natural Assumptions. We present a protocol  in the  -hybrid model, where  is an augmented multiplier functionality and  is a biprimality-testing functionality, and prove that it UC-realizes  in the malicious setting, assuming the hardness of factoring. More specifically, we prove:

Theorem 1.1

(Main security theorem, informal) In the presence of a PPT malicious adversary corrupting any subset of parties,  can be securely computed with abort in the  -hybrid model, assuming the hardness of factoring.

Additionally, because our security proof relies upon the hardness of factoring only when the adversary cheats, we find to our surprise that our protocol achieves perfect security against semi-honest adversaries.

Theorem 1.2

(Semi-honest security theorem, informal) In the presence of a computationally unbounded semi-honest adversary corrupting any subset of parties,  can be computed with perfect security in the  -hybrid model.

Supporting Functionalities and Protocols. We define  , a simple, natural functionality for biprimality testing and show that it is UC-realized in the semi-honest setting by a well-known protocol of Boneh and Franklin [5], and in the malicious setting by a derivative of the protocol of Frederiksen et al. [23]. We believe this dramatically simplifies the composition of these two protocols and, as a consequence, leads to a simpler analysis. Either protocol can be based exclusively upon oblivious transfer.

We also define  , a functionality for sampling and multiplying secret-shared values in a special form derived from the Chinese remainder theorem. In the context of  , this functionality allows us to efficiently sample numbers in a specific range, with no small factors, and then compute their product. We prove that it can be UC-realized exclusively from oblivious transfer, using derivatives of well-known multiplication protocols [19, 20].

Asymptotic Efficiency. We perform an asymptotic analysis of our composed protocols and find that our semi-honest protocol is a factor of \({\kappa }/\log {\kappa } \) more bandwidth-efficient than that of Frederiksen et al. [23], where \({\kappa } \) is the bit-length of the primes p and q. Our malicious protocol is a factor of \({\kappa }/{s} \) more efficient than theirs in the optimistic case (when parties follow the protocol), where \({s} \) is a statistical security parameter, and it is a factor of \({\kappa } \) more efficient when parties deviate from the protocol. Frederiksen et al. claim in turn that their protocol is strictly superior to the protocol of Hazay et al. [29] with respect to asymptotic bandwidth performance.

Concrete Efficiency. We perform a closed-form concrete communication cost analysis of our protocol (with some optimizations, including the use of random oracles), and compare our results to the protocol of Frederiksen et al. (the most efficient prior work). For \({\kappa } =1024\) (i.e., when sampling a 2048-bit biprime), our protocol outperforms theirs by a factor of roughly five in the presence of worst-case malicious adversaries, and by a factor of roughly eighty in the semi-honest setting. As the bitlength of the sampled prime grows, so too does the concrete performance advantage of our protocol.

1.2 Overview of Techniques

Constructive Sampling and Efficient Modulus Reconstruction. Most prior works use rejection sampling to generate a pair of candidate primes and then multiply those primes together in a separate step. Specifically, they sample a shared value \(p\leftarrow [0,2^{\kappa })\) uniformly, and then run a trial-division protocol repeatedly, discarding both the value and the work that has gone into testing it if trial division fails. This represents a substantial amount of wasted work in expectation. Furthermore, Frederiksen et al. [23] report that multiplication of candidates after sieving accounts for two thirds of their concrete cost.

We propose a different approach that leverages the Chinese remainder theorem (CRT) to constructively sample a pair of candidate primes and multiply them together efficiently. A similar sieving approach (in spirit) was initially formulated as an optimization in a different setting by Malkin et al. [37]. The CRT implies an isomorphism between a set of values, each in a field modulo a distinct prime, and a single value in a ring modulo the product of those primes (i.e., \({\mathbb {Z}}_{m_1}\times \cdots \times {\mathbb {Z}}_{m_\ell }\simeq {\mathbb {Z}}_{m_1\cdot \ldots \cdot m_\ell }\)). We refer to the set of values as the CRT form or CRT representation of the single value to which they are isomorphic. We formulate a sampling mechanism based on this isomorphism as follows: for each of the first \(O({\kappa }/\log {\kappa })\) odd primes, the parties jointly (and efficiently) sample shares of a value that is nonzero modulo that prime. These values are the shared CRT form of a single \({\kappa } \)-bit value that is guaranteed to be indivisible by any prime in the set sampled against. For technical reasons, we sample two such candidates simultaneously.
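A plaintext sketch of the sampling idea, assuming a single party and ignoring secret sharing entirely: choose a uniformly random nonzero residue modulo each of the first few odd primes, then map the resulting CRT form back to an integer. The helper names are hypothetical, and the sketch does not address how the parties sample jointly, how the candidate's size relative to \(2^{\kappa }\) is controlled, or divisibility by 2.

```python
import secrets
from math import prod

def first_odd_primes(count: int) -> list:
    """The first `count` odd primes, by trial division against the primes found so far."""
    primes, c = [], 3
    while len(primes) < count:
        if all(c % p for p in primes if p * p <= c):
            primes.append(c)
        c += 2
    return primes

def crt_reconstruct(residues, moduli) -> int:
    """Unique y in [0, M) with y = residues[j] (mod moduli[j]) for pairwise-coprime moduli."""
    M = prod(moduli)
    y = 0
    for x_j, m_j in zip(residues, moduli):
        M_j = M // m_j
        y += x_j * M_j * pow(M_j, -1, m_j)  # modular inverse via pow needs Python 3.8+
    return y % M

def sample_sieved_candidate(moduli) -> int:
    """Pick a nonzero residue modulo each small prime, then convert the CRT form to an integer."""
    residues = [1 + secrets.randbelow(m - 1) for m in moduli]  # each residue in [1, m_j)
    return crt_reconstruct(residues, moduli)
```

Because every residue is nonzero, no prime in `moduli` divides the candidate, so no trial-division work is ever discarded.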

Rather than converting pairs of candidate primes from CRT form to standard form, and then multiplying them, we instead multiply them component-wise in CRT form and then convert the product to standard form to complete the protocol. This effectively replaces a single “full-width” multiplication of size \({\kappa } \) with \(O({\kappa }/\log {\kappa })\) individual multiplications, each of size \(O(\log {\kappa })\). We intend to perform multiplication via an OT-based protocol, and the computation and communication complexity of such protocols grows at least with the square of their input length, even in the semi-honest case [24]. Thus, in the semi-honest case, our approach yields an overall complexity of \(O({\kappa } \log {\kappa })\), as compared to \(O({\kappa } ^2)\) for a single full-width multiplication. In the malicious case, combining the best known multiplier construction [19, 20] with the most efficient known OT extension scheme [6] yields a complexity that also grows with the product of the input length and a statistical parameter \({s} \), and so our approach achieves an overall complexity of \(O({\kappa } \log {\kappa } + {\kappa } \cdot {s})\), as compared to \(O({\kappa } ^2+{\kappa } \cdot {s})\) for a single full-width malicious multiplication. Via closed-form analysis, we show that this asymptotic improvement is also reflected concretely.
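The following sketch checks the identity behind component-wise multiplication: multiplying the CRT forms of \(p\) and \(q\) modulo each small prime and then reconstructing yields \(p\cdot q\), provided the product of the moduli exceeds \(p\cdot q\). It also compares a crude cost proxy, the sum of squared operand lengths, which is our own stand-in for the at-least-quadratic cost of OT-based multipliers and ignores constants and the \({s} \)-dependent term. It is plaintext only, and the names are hypothetical.

```python
import secrets
from math import prod

def odd_primes_with_product_above(bound: int) -> list:
    """Collect consecutive odd primes until their product exceeds `bound`."""
    primes, c = [], 3
    while prod(primes) <= bound:
        if all(c % p for p in primes if p * p <= c):
            primes.append(c)
        c += 2
    return primes

def crt_reconstruct(residues, moduli) -> int:
    M = prod(moduli)
    return sum(x * (M // m) * pow(M // m, -1, m) for x, m in zip(residues, moduli)) % M

def crt_multiply(p: int, q: int, moduli) -> int:
    """Multiply component-wise in CRT form, then convert the product to standard form."""
    n_crt = [(p % m) * (q % m) % m for m in moduli]  # each product has O(log m_j)-bit operands
    return crt_reconstruct(n_crt, moduli)  # equals p * q whenever p * q < prod(moduli)

def quadratic_cost(bit_lengths) -> int:
    """Crude proxy for a multiplier whose cost grows with the square of its input length."""
    return sum(b * b for b in bit_lengths)
```

For \({\kappa } =64\), covering a 128-bit product takes the 26 odd primes from 3 to 103, whose proxy cost is 872, compared with \(64^2=4096\) for a single full-width multiplication; the gap widens as \({\kappa } \) grows.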

Achieving Security with Abort Efficiently. The fact that we sample primes in CRT form also plays a crucial role in our security analysis. Unlike the work of Frederiksen et al. [23], our protocol achieves the standard, intuitive notion of security with abort: the adversary can instruct the functionality to abort regardless of whether a biprime is successfully sampled, and the honest parties are always made aware of such adversarial aborts. There is, in other words, absolutely no conflation of sampling failures with adversarial behavior. For the sake of efficiency, our protocol permits the adversary to cheat prior to biprimality testing and then rules out such cheats retroactively using one of two strategies. In the case that a biprime is successfully sampled, adversarial behavior is ruled out, retroactively, in a privacy-preserving fashion using well-known but moderately expensive techniques, which is tolerable only because it need not be done more than once. In the case that a sampled value is not a biprime, however, the inputs to the sampling protocol are revealed to all parties, and the retroactive check is carried out in the clear. Proving the latter approach secure turns out to be surprisingly subtle.

The challenge arises from the fact that the simulator must simulate the protocol transcript for the OT-multipliers on behalf of the honest parties without knowing their inputs. Later, if the sampling-protocol inputs are revealed, the simulator must “explain” how the simulated transcript is consistent with the true inputs of the honest parties. Specifically, in maliciously secure OT-multipliers of the sort we use [19, 20], the OT receiver (Bob) uses a high-entropy encoding of his input, and the sender (Alice) can, by cheating, learn a one-bit predicate of this encoding. Before Bob’s true input is known to the simulator, it must pick an encoding at random. When Bob’s input is revealed, the simulator must find an encoding of his input which is consistent with the predicate on the random encoding that Alice has learned. This task closely resembles solving a random instance of subset sum.

We are able to overcome this difficulty because our multiplications are performed component-wise over CRT-form representations of their operands. Because each component is of size \(O(\log {\kappa })\) bits, the simulator can simply guess random encodings until it finds one that matches the required constraints. We show that this strategy succeeds in strict polynomial time and that it induces a distribution statistically close to that of the real execution.
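To illustrate why guessing is feasible only for small components, consider a hypothetical toy encoding that is not the encoding of [19, 20]: an input \(\beta \in {\mathbb {Z}}_m\) is encoded as a bit vector \(\gamma \) with \(\sum _i g_i\gamma _i\equiv \beta \pmod {m}\) for a public weight vector \(g\), and the cheating sender is assumed to have learned a single coordinate of \(\gamma \). The simulator step then amounts to guessing random \(\gamma \) until both constraints hold, which takes on the order of \(m\) attempts.

```python
import secrets

def find_consistent_encoding(beta, g, m, k, bit, max_tries=100_000):
    """Hypothetical toy simulator step: find gamma in {0,1}^len(g) with
    sum(g_i * gamma_i) = beta (mod m) and gamma[k] == bit, by random guessing."""
    for _ in range(max_tries):
        gamma = [secrets.randbits(1) for _ in g]
        gamma[k] = bit  # respect the one bit the cheating sender is assumed to have learned
        if sum(gi * ci for gi, ci in zip(g, gamma)) % m == beta % m:
            return gamma
    return None  # a fixed budget keeps the running time strictly bounded
```

When each component has \(O(\log {\kappa })\) bits, \(m\) is polynomial in \({\kappa } \) and a modest budget suffices; for a full \({\kappa } \)-bit operand the same strategy would need exponentially many guesses.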

This form of “privacy-free” malicious security (wherein honest behavior is verified at the cost of sacrificing privacy) leads to considerable efficiency gains in our case: it is up to a multiplicative factor of \({s} \) (the statistical parameter) cheaper than the privacy-preserving check used in the case that a candidate passes the biprimality test (and the one used in prior OT-multipliers [19, 20]). Since most candidates fail the biprimality test, using the privacy-free check to verify that they were generated honestly results in substantial savings.

Biprimality Testing as a Black Box. We specify a functionality for biprimality testing and prove that it can be realized by a maliciously secure version of the Boneh–Franklin biprimality test. Our functionality has a clean interface and does not, for example, require its inputs to be authenticated to ensure that they were actually generated by the sampling phase of the protocol. The key insight that allows us to achieve this level of modularity is a reduction to factoring: if an adversary is able to cheat by supplying incorrect inputs to the biprimality test, relative to a candidate biprime \(N\), and the biprimality test succeeds, then we show that the adversary can be used to factor biprimes. We are careful to rely on this reduction only in the case that \(N\) is actually a biprime, and to prevent the adversary from influencing the distribution of candidates.

The Benefits of Modularity. We claim as a contribution the fact that modularity has yielded both a simpler protocol description and a reasonably simple proof of security. We believe that this approach will lead to derivatives of our work with stronger security properties or with security against stronger adversaries. As a first example, we prove that a semi-honest version of our protocol (differing only in that it omits the retroactive consistency check in the protocol’s final step) achieves perfect security. We furthermore observe that in the malicious setting, instantiating  and  with security against adaptive adversaries yields an RSA modulus sampling protocol that is adaptively secure.

Similarly, only minor adjustments to the main protocol are required to achieve security with identifiable abort [16, 32]. If we assume that the underlying functionalities  and  are instantiated with identifiable abort, then it remains only to ensure the use of consistent inputs across these functionalities, and to detect which party has provided inconsistent inputs if an abort occurs. This can be accomplished by augmenting  with an additional interface for revealing the input values provided by all the parties upon global request (e.g., when the candidate \(N\) is not a biprime). Given identifiable abort, it is possible to guarantee output delivery in the presence of up to \(n-1\) corruptions via standard techniques, although the functionality must be weakened to allow the adversary to reject one biprime per corrupt party.Footnote 2 A proof of this extension is beyond the scope of this work; we focus instead on the advancements our framework yields in the setting of security with abort.

1.3 Additional Related Work

Frankel, MacKenzie, and Yung [22] adjusted the protocol of Boneh and Franklin [4] to achieve security against malicious adversaries in the honest-majority setting. Their main contribution was the introduction of a method for robust distributed multiplication over the integers. Cocks [11] proposed a method for multiparty RSA key generation under heuristic assumptions, and later attacks by Coppersmith (see [12]) and Joye and Pinch [33] suggested this method may be insecure. Poupard and Stern [42] presented a maliciously secure two-party protocol based on oblivious transfer. Gilboa [24] improved efficiency in the semi-honest two-party model, and introduced a novel method for multiplication from oblivious transfer, from which our own multipliers derive.

Malkin, Wu, and Boneh [37] implemented the protocol of Boneh and Franklin and introduced an optimized sieving method similar in spirit to ours. In particular, their protocol generates sharings of random values in \({\mathbb {Z}}_M^*\) (where M is a primorial modulus) during the sieving phase, instead of naïve random candidates for primes p and q. However, their method produces multiplicative sharings of p and q, which are converted into additive sharings for biprimality testing via an honest-majority, semi-honest protocol. This conversion requires rounds linear in the party count, and it is unclear how to adapt it to tolerate a malicious majority of parties without a significant performance penalty.

Algesheimer, Camenisch, and Shoup [1] described a method to compute a distributed version of the Miller–Rabin test: they used secret-sharing conversion techniques reliant on approximations of \(1/p\) to compute exponentiations modulo a shared \(p\). However, each invocation of their Miller–Rabin test still has complexity in \(O({\kappa } ^3)\) per party, and their overall protocol has communication complexity in \(O({\kappa } ^5/\log ^2{{\kappa }})\), with \(\Theta ({\kappa })\) rounds of interaction. Concretely, Damgård and Mikkelsen [18] estimate that 10,000 rounds are required to sample a 2000-bit biprime using this method. Damgård and Mikkelsen also extended this work to improve both its communication and round complexity by several orders of magnitude, and to achieve malicious security in the honest-majority setting. Their protocol is at least a factor of \(O({\kappa })\) better than that of Algesheimer, Camenisch, and Shoup, but it still requires hundreds of rounds. We were not able to compute an explicit complexity analysis of their approach. We give a summary of prior works in Table 1, for ease of comparison.

In a follow-up work, Chen et al. [10] make use of our CRT-based biprime sampling technique, but abandon our modular protocol and proof in favor of a monolithic construction that leverages recent advancements in additively-homomorphic encryption and zero-knowledge arguments. They focus on the setting wherein there is a powerful, semi-honest aggregator, and many weak, malicious clients. This mixed security model yields opportunities for optimization, and they show that their approach is sufficiently efficient for real-world use, even with thousands of participants spread around the world.

Finally, we note that the manipulation of values in what we have referred to as CRT form has long been studied under the guise of residue number systems [48]. Though we take few pains to formalize the connection or to generalize beyond what is required for this work, some of our techniques could be viewed as multiparty adaptations of techniques from the RNS literature.

1.4 Organization

Basic notation and background information are given in Sect. 2. Our ideal biprime-sampling functionality is defined in Sect. 3, and we give a protocol that realizes it in Sect. 4. In Sect. 5, we present our biprimality-testing protocol. In Sect. 6, we give an efficiency analysis. We defer full proofs of security and the details of our multiplication protocol to the appendices.

2 Preliminaries

Notation. We use \(=\) for equality, \(:=\) for assignment, \(\leftarrow \) for sampling from a distribution, \(\equiv \) for congruence, \(\smash {\approx _{\mathrm{c}}}\) for computational indistinguishability, and \(\smash {\approx _{\mathrm{s}}}\) for statistical indistinguishability. In general, single-letter variables are set in italic font, multiletter variables and function names are set in sans-serif font, and string literals are set in \(\texttt {slab-serif}\) font. We use \(\bmod {}\) to indicate the modulus operator, while \(\pmod {m}\) at the end of a line indicates that all equivalence relations on that line are to be taken over the integers modulo m. By convention, we parameterize computational security by the bit-length of each prime in an RSA biprime; we denote this length by \({\kappa } \) throughout. We use \({s} \) to represent the statistical parameter. Where concrete efficiency is concerned, we introduce a second computational security parameter, \({\lambda } \), which represents the length of a symmetric key of equivalent strength to a biprime of length \(2{\kappa } \).Footnote 3 The parameters \({\kappa } \) and \({\lambda } \) must vary together; NIST has published a recommendation for the relationship between them [2].

Vectors and arrays are given in bold and indexed by subscripts; thus, \({\varvec{\mathrm {x}}}_i\) is the \(i\)th element of the vector \({\varvec{\mathrm {x}}}\), which is distinct from the scalar variable x. When we wish to select a row or column from a two-dimensional array, we place a \(*\) in the dimension along which we are not selecting. Thus, \(\smash {{\varvec{\mathrm {y}}}_{*,j}}\) is the \(j\)th column of matrix \({\varvec{\mathrm {y}}}\), and \(\smash {{\varvec{\mathrm {y}}}_{j,*}}\) is the \(j\)th row. We use \(\mathcal{P} _i\) to denote the party with index i, and when only two parties are present, we refer to them as Alice and Bob. Variables may often be subscripted with an index to indicate that they belong to a particular party. When arrays are owned by a party, the party index always comes first. We use |x| to denote the bit-length of x and \(|{\varvec{\mathrm {y}}}|\) to denote the number of elements in the vector \({\varvec{\mathrm {y}}}\).

Universal Composability. We prove our protocols secure in the universal composability (UC) framework and use standard UC notation. In Appendix A, we give a high-level overview; we refer the reader to Canetti [7] for further details. In functionality descriptions, we leave some standard bookkeeping elements implicit. For example, we assume that the functionality aborts if a party tries to reuse a session identifier inappropriately, sends messages out of order, etc. For convenience, we provide a function \(\mathsf {GenSID} \), which takes any number of arguments and deterministically derives a unique Session ID from those arguments. For example, \(\mathsf {GenSID} (\mathsf {sid},x,\texttt {x})\) derives a new Session ID from the variables \(\mathsf {sid}\) and x and the string literal “x”.
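The text treats \(\mathsf {GenSID} \) abstractly. One plausible instantiation, sketched below under our own assumptions, hashes an unambiguous encoding of the arguments with SHA-256; the function name `gen_sid` and the encoding are hypothetical, and uniqueness then holds only computationally (up to hash collisions).

```python
import hashlib

def gen_sid(*args) -> bytes:
    """Hypothetical GenSID: SHA-256 over a type-tagged, length-prefixed encoding of the arguments."""
    h = hashlib.sha256()
    for a in args:
        if isinstance(a, bytes):
            tag, data = b"b", a
        elif isinstance(a, str):
            tag, data = b"s", a.encode("utf-8")
        elif isinstance(a, int):
            tag, data = b"i", str(a).encode("ascii")
        else:
            raise TypeError(f"unsupported argument type: {type(a).__name__}")
        # The type tag and 8-byte length prefix make the encoding injective,
        # so ("ab", "c") and ("a", "bc") produce different inputs to the hash.
        h.update(tag + len(data).to_bytes(8, "big") + data)
    return h.digest()
```

For example, `gen_sid(sid, x, "x")` mirrors \(\mathsf {GenSID} (\mathsf {sid},x,\texttt {x})\) when `sid` is a byte string and `x` an integer.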

Chinese Remainder Theorem. The Chinese remainder theorem (CRT) defines an isomorphism between a set of residues modulo a set of respective pairwise-coprime values and a single value modulo the product of the same set of pairwise-coprime values. This forms the basis of our sampling procedure.

Theorem 2.1

(CRT) Let \({\varvec{\mathrm {m}}} \) be a vector of pairwise-coprime positive integers and let \({\varvec{\mathrm {x}}}\) be a vector of numbers such that \(|{\varvec{\mathrm {m}}} |=|{\varvec{\mathrm {x}}}|=\ell \) and \(0\le {\varvec{\mathrm {x}}}_j<{\varvec{\mathrm {m}}} _j\) for all \(j\in [\ell ]\), and finally let \(M:=\prod _{j\in [\ell ]} {\varvec{\mathrm {m}}} _j\). Under these conditions, there exists a unique value y such that \(0\le y<M\) and \(y\equiv {\varvec{\mathrm {x}}}_j\pmod {{\varvec{\mathrm {m}}} _j}\) for every \(j\in [\ell ]\).

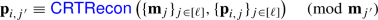

We refer to \({\varvec{\mathrm {x}}}\) as the CRT form of y with respect to \({\varvec{\mathrm {m}}} \). For completeness, we give the  algorithm, which finds the unique y given \({\varvec{\mathrm {m}}} \) and \({\varvec{\mathrm {x}}}\).
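For reference, one standard closed form of this reconstruction (which may differ in presentation from the algorithm given) uses \(M_j:=M/{\varvec{\mathrm {m}}} _j\); a small worked instance follows it.

```latex
y \;=\; \Bigg(\sum_{j\in[\ell]} \mathbf{x}_j\cdot M_j\cdot \big(M_j^{-1} \bmod \mathbf{m}_j\big)\Bigg) \bmod M,
\qquad M_j := M/\mathbf{m}_j .

% Worked instance: m = (3, 5, 7), x = (2, 3, 2), so M = 105 and (M_1, M_2, M_3) = (35, 21, 15).
% Inverses: 35^{-1} mod 3 = 2,  21^{-1} mod 5 = 1,  15^{-1} mod 7 = 1.
y \;=\; (2\cdot 35\cdot 2 + 3\cdot 21\cdot 1 + 2\cdot 15\cdot 1) \bmod 105 \;=\; 233 \bmod 105 \;=\; 23 .
```

Each term \(M_j\cdot (M_j^{-1}\bmod {\varvec{\mathrm {m}}} _j)\) is congruent to 1 modulo \({\varvec{\mathrm {m}}} _j\) and to 0 modulo every other \({\varvec{\mathrm {m}}} _i\), which is why the sum has the required residues; indeed \(23\equiv 2\pmod {3}\), \(23\equiv 3\pmod {5}\), and \(23\equiv 2\pmod {7}\).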

3 Assumptions and Ideal Functionality

We begin this section by discussing the distribution of biprimes from which we sample and thus the precise factoring assumption that we make, and then we give an efficient sampling algorithm and an ideal functionality that computes it.

3.1 Factoring Assumptions

The standard factoring experiment (Experiment 3.1) as formalized by Katz and Lindell [34] is parametrized by an adversary \(\mathcal{A} \) and a biprime-sampling algorithm \(\mathsf {GenModulus} \). On input \(1^{\kappa } \), this algorithm returns \((N,p,q)\), where \(N=p\cdot q\), and \(p\) and \(q\) are \({\kappa } \)-bit primes.Footnote 4

In many cryptographic applications, \(\mathsf {GenModulus} (1^{\kappa })\) is defined to sample \(p\) and \(q\) uniformly from the set of primes in the range \([2^{{\kappa }-1},2^{\kappa })\) [25], and the factoring assumption with respect to this common \(\mathsf {GenModulus}\) function states that for every PPT adversary \(\mathcal{A} \) there exists a negligible function \(\mathsf {negl}\) such that

\[\Pr \left[ (N,p,q)\leftarrow \mathsf {GenModulus} (1^{\kappa });\ (p',q')\leftarrow \mathcal{A} (1^{\kappa },N)\ :\ p'\cdot q'=N\ \wedge \ p',q'>1 \right] \le \mathsf {negl}({\kappa }).\]

Because efficiently sampling according to this uniform biprime distribution is difficult in a multiparty context, most prior works sample according to a different distribution, and thus using the moduli they produce requires a slightly different factoring assumption than the traditional one. In particular, several recent works use a distribution originally proposed by Boneh and Franklin [5], which is well adapted to multiparty sampling. Our work follows this pattern.

Boneh and Franklin’s distribution is defined by the sampling algorithm  , which takes as an additional parameter the number of parties n. The algorithm samples n integer shares, each in the range \([0,2^{{\kappa }-\log {n}})\),Footnote 5 and sums these shares to arrive at a candidate prime. This does not induce a uniform distribution on the set of \({\kappa } \)-bit primes. Furthermore,  only samples primes \(p\) and \(q\) such that \(p\equiv q\equiv 3\pmod {4}\), in order to facilitate efficient distributed primality testing, and it filters out the subset of otherwise-valid moduli \(N=p\cdot q\) that have \(p\equiv 1\pmod {q}\) or \(q\equiv 1\pmod {p}\).Footnote 6
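To make the shape of this distribution concrete, here is a minimal Python sketch of Boneh–Franklin-style sampling from integer shares. It is a toy under stated assumptions, not the algorithm of [5]: n is assumed to be a power of two (so that log n is an integer), trial division stands in for a real primality test, and \(p\equiv 3\pmod {4}\) is enforced by a convention we assume (the first share is 3 mod 4 and all other shares are 0 mod 4). It does not force candidates to have exactly \({\kappa } \) bits, and all function names are hypothetical.

```python
import random

# Hypothetical helper names; toy parameter sizes only.


def is_prime(x: int) -> bool:
    """Trial-division primality test; adequate only for toy sizes."""
    if x < 2:
        return False
    d = 2
    while d * d <= x:
        if x % d == 0:
            return False
        d += 1
    return True


def sample_prime_from_shares(kappa: int, n: int, rng: random.Random):
    """Rejection-sample a prime p = 3 (mod 4) as the sum of n integer shares.

    Assumes n is a power of two, so each share lies in [0, 2**(kappa - log2 n)).
    Share 0 is forced to 3 mod 4 and the others to 0 mod 4 (assumed convention).
    """
    bound = 2 ** (kappa - (n.bit_length() - 1))
    while True:  # expected-polynomial, not strict-polynomial, number of iterations
        shares = [4 * rng.randrange(bound // 4) for _ in range(n)]
        shares[0] += 3
        candidate = sum(shares)
        if is_prime(candidate):
            return candidate, shares


def sample_toy_bf_modulus(kappa: int, n: int, rng: random.Random):
    """Return (N, p, q, p_shares, q_shares), discarding p = 1 mod q or q = 1 mod p."""
    while True:
        p, p_shares = sample_prime_from_shares(kappa, n, rng)
        q, q_shares = sample_prime_from_shares(kappa, n, rng)
        # We also discard p == q (our assumption), so that N is a genuine biprime.
        if p != q and p % q != 1 and q % p != 1:
            return p * q, p, q, p_shares, q_shares
```

The unbounded `while` loops reflect the expected (rather than strict) polynomial running time of this kind of rejection sampling.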

Any protocol whose security depends upon the hardness of factoring moduli output by our protocol (including our protocol itself) must rely upon the assumption that for every PPT adversary \(\mathcal{A} \),

3.2 The Distributed Biprime-Sampling Functionality

Unfortunately, our ideal modulus-sampling functionality cannot merely call  ; we wish our functionality to run in strict polynomial time, whereas the running time of  is only expected polynomial. Thus, we define a new sampling algorithm,  , which might fail, but conditioned on success outputs a distribution statistically indistinguishable from that of  . Specifically, a coprimality constraint implies that there exists some concrete lower bound in \(O({\kappa })\) on the factors of the biprimes produced by  , whereas  outputs biprimes with factors below this bound only with probability negligible in \(\kappa \). Otherwise, for the appropriate (possibly non-integer) value of \({\kappa } \), the two algorithms output identical distributions, conditioned on success. However, we give  a specific distribution of failures that is tied to the design of our protocol. As a second concession to our protocol design (and following Hazay et al. [29]),  takes as input up to \(n-1\) integer shares of \(p\) and \(q\), arbitrarily determined by the adversary, while the remaining shares are sampled randomly. We begin with a few useful notions.

Definition 3.3

(Primorial Number) The \(i\)th primorial number is defined to be the product of the first i prime numbers.

Definition 3.4

(\(({\kappa },n)\)-Near-Primorial Vector) Let \(\ell \) be the largest number such that the \(\ell \)th primorial number is less than \(2^{{\kappa }-\log {n}-1}\), and let \({\varvec{\mathrm {m}}} \) be a vector of length \(\ell \) such that \({\varvec{\mathrm {m}}} _1=4\) and \({\varvec{\mathrm {m}}} _2,\ldots ,{\varvec{\mathrm {m}}} _\ell \) are the odd prime factors of the \(\ell \)th primorial number, in ascending order. Then \({\varvec{\mathrm {m}}} \) is the unique \(({\kappa },n)\)-near-primorial vector.

Definition 3.5

(\({\varvec{\mathrm {m}}} \)-Coprimality) Let \({\varvec{\mathrm {m}}} \) be a vector of integers. An integer x is \({\varvec{\mathrm {m}}} \)-coprime if and only if it is not divisible by any \({\varvec{\mathrm {m}}} _i\) for \(i\in [|{\varvec{\mathrm {m}}} |]\).
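The two definitions above can be made concrete with a short Python sketch for toy parameters. It computes the \(({\kappa },n)\)-near-primorial vector by testing the bound of Definition 3.4 in the exact integer form \(2n\cdot \text {primorial}<2^{\kappa }\), which is equivalent to \(\text {primorial}<2^{{\kappa }-\log {n}-1}\) even when \(\log {n}\) is not an integer, and it checks \({\varvec{\mathrm {m}}} \)-coprimality per Definition 3.5. The function names are our own.

```python
# Hypothetical helper names; toy kappa only.


def near_primorial_vector(kappa: int, n: int) -> list:
    """(kappa, n)-near-primorial vector per Definition 3.4."""
    primes, primorial, c = [], 1, 2
    while True:
        if all(c % p for p in primes):  # c is prime iff no smaller prime divides it
            # Stop once the next primorial would reach 2**(kappa - log2(n) - 1).
            if 2 * n * primorial * c >= 2 ** kappa:
                break
            primes.append(c)
            primorial *= c
        c += 1
    if not primes:
        raise ValueError("kappa is too small for this n")
    return [4] + primes[1:]  # m_1 = 4 replaces 2; then the odd primes, ascending


def is_m_coprime(x: int, m: list) -> bool:
    """Definition 3.5: x is divisible by no entry of m."""
    return all(x % mi != 0 for mi in m)
```

For \({\kappa } =16\) and \(n=2\) this yields \({\varvec{\mathrm {m}}} =(4,3,5,7,11)\), whose product 4620 is below \(2^{{\kappa }-\log {n}}=2^{15}\). Because \({\varvec{\mathrm {m}}} _1=4\), \({\varvec{\mathrm {m}}} \)-coprimality excludes multiples of 4 but not every even number; for example, 2 is \({\varvec{\mathrm {m}}} \)-coprime.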

Boneh and Franklin [5, Lemma 2.1] showed that knowledge of \(n-1\) integer shares of the factors \(p\) and \(q\) does not give the adversary any meaningful advantage in factoring biprimes from the distribution produced by  . Hazay et al. [29, Lemma 4.1] extended this argument to the malicious setting, wherein the adversary is allowed to choose its own shares. A similar lemma must hold for  , as a corollary of the fact that its output distribution differs only negligibly from that of  .

Lemma 3.7

([5, 29]) Let \(n<{\kappa } \) and let \((\mathcal{A} _1,\mathcal{A} _2)\) be a pair of PPT algorithms. For \((\mathsf {state},\{(p_i,q_i)\}_{i\in [n-1]})\leftarrow \mathcal{A} _1(1^{\kappa },1^n)\), let \(N\) be a biprime sampled by running  . If \(\mathcal{A} _2(\mathsf {state},N)\) outputs the factors of \(N\) with probability at least \(\smash {1/{\kappa } ^d}\), then there exists an expected-polynomial-time algorithm \(\mathcal{B} \) that succeeds with probability at least \(1/(2^4n^3{\kappa } ^d)-\mathsf {negl}({\kappa })\) in the experiment  .

Multiparty functionality. Our ideal functionality  is a natural embedding of  in a multiparty functionality: it receives inputs \(\{(p_i,q_i)\}_{i\in {{\varvec{\mathrm {P}}}^*}}\) from the adversary and runs a single iteration of  with these inputs when invoked. It either outputs the corresponding modulus \(N:=p\cdot q\) if it is valid, or indicates that a sampling failure has occurred. Running a single iteration of  per invocation of  enables significant freedom in the use of  , because it can be composed in different ways to tune the trade-off between resource usage and execution time. It also simplifies the analysis of the protocol  that realizes  , because the analysis is made independent of the success rate of the sampling procedure.

The functionality may not deliver \(N\) to the honest parties for one of two reasons: either  failed to sample a biprime, or the adversary caused the computation to abort. In either case, the honest parties are informed of the cause of the failure, and consequently the adversary is unable to conflate the two cases. This is essentially the standard notion of security with abort, applied to the multiparty computation of the  algorithm. In both cases, the \(p\) and \(q\) output by  are given to the adversary. This leakage simplifies our proof considerably, and we consider it benign, since the honest parties never receive (and therefore cannot possibly use) \(N\).

4 The Distributed Biprime-Sampling Protocol

In this section, we present the distributed biprime-sampling protocol  , with which we realize  . We begin with a high-level overview, and then in Sect. 4.2, we formally define the two ideal functionalities on which our protocol relies, after which in Sect. 4.3 we give the protocol itself. In Sect. 4.4, we present proof sketches of semi-honest and malicious security.

4.1 High-Level Overview

As described in the Introduction, our protocol derives from that of Boneh and Franklin [5]; the main technical differences relative to other recent Boneh–Franklin derivatives [23, 29] are the modularity with which it is described and proven and the use of CRT-based sampling. Our protocol has three main phases, which we now describe in sequence.

Candidate Sieving. In the first phase of our protocol, the parties jointly sample two \({\kappa } \)-bit candidate primes \(p\) and \(q\) without any small factors, and multiply them to learn their product \(N\). Our protocol achieves these two tasks in an integrated way, thanks to the  .

Consider a prime \(m\) and a set of shares \(x_i\) for \(i\in [n]\) over the field \({\mathbb {Z}}_m\). As in the description of  , let a and b be defined such that \(a\cdot b\equiv 1\pmod {m}\), and let M be an integer. Observe that if m divides M, then
$$\begin{aligned} \left( \sum \limits _{i\in [n]}a\cdot b\cdot x_i\right) \bmod {M}\equiv \sum \limits _{i\in [n]}x_i\pmod {m} \end{aligned}\qquad (1)$$

Now consider a vector of coprime integers \({\varvec{\mathrm {m}}} \) of length \(\ell \), and let \(M\) be their product. Let \({\varvec{\mathrm {x}}}\) be a vector, each element secret shared over the fields defined by the corresponding element of \({\varvec{\mathrm {m}}} \), and let \({\varvec{\mathrm {a}}}\) and \({\varvec{\mathrm {b}}}\) be defined as in  (i.e., \({\varvec{\mathrm {a}}}_j :=M/{\varvec{\mathrm {m}}} _j\) and \({\varvec{\mathrm {a}}}_j\cdot {\varvec{\mathrm {b}}}_j \equiv 1 \pmod {{\varvec{\mathrm {m}}} _j}\)). Because \({\varvec{\mathrm {m}}} _k\) divides \({\varvec{\mathrm {a}}}_j\) whenever \(k\ne j\), we can see that for any \(k,j\in [\ell ]\) such that \(k\ne j\),
$$\begin{aligned} \sum \limits _{i\in [n]}{\varvec{\mathrm {a}}}_j\cdot {\varvec{\mathrm {b}}}_j\cdot {\varvec{\mathrm {x}}}_{i,j}\equiv 0\pmod {{\varvec{\mathrm {m}}} _k} \end{aligned}\qquad (2)$$

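and the conjunction of Eqs. 1 and 2 gives us
$$\begin{aligned} \sum \limits _{i\in [n]}\sum \limits _{j\in [\ell ]}{\varvec{\mathrm {a}}}_j\cdot {\varvec{\mathrm {b}}}_j\cdot {\varvec{\mathrm {x}}}_{i,j}\equiv \sum \limits _{i\in [n]}{\varvec{\mathrm {x}}}_{i,k}\pmod {{\varvec{\mathrm {m}}} _k} \end{aligned}$$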

for all \(k\in [\ell ]\). Observe that this holds regardless of which order we perform the sums in, and regardless of whether the \(\bmod {\,M}\) operation is done at the end, or between the two sums, or not at all.
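The identity above is what lets each party turn its per-modulus shares into a single integer share. The following Python sketch, with a hypothetical helper `crt_recon`, computes \({\varvec{\mathrm {a}}}_j=M/{\varvec{\mathrm {m}}} _j\) and \({\varvec{\mathrm {b}}}_j={\varvec{\mathrm {a}}}_j^{-1}\bmod {{\varvec{\mathrm {m}}} _j}\) (via the built-in three-argument `pow`, Python 3.8+), reconstructs each party's integer share as \(\sum _j{\varvec{\mathrm {a}}}_j\cdot {\varvec{\mathrm {b}}}_j\cdot {\varvec{\mathrm {x}}}_{i,j}\bmod {M}\), and checks that the sum of the integer shares agrees with \(\sum _i{\varvec{\mathrm {x}}}_{i,k}\) modulo every \({\varvec{\mathrm {m}}} _k\), using the toy vector \({\varvec{\mathrm {m}}} =(4,3,5,7,11)\).

```python
import random
from math import prod

# Hypothetical helper name; toy parameters only.


def crt_recon(m, residues):
    """Integer in [0, M) congruent to residues[j] mod m[j], for pairwise-coprime m.

    Uses a_j = M / m_j and b_j = a_j^{-1} mod m_j, as in the text.
    """
    M = prod(m)
    total = 0
    for mj, rj in zip(m, residues):
        aj = M // mj
        bj = pow(aj, -1, mj)  # modular inverse; requires gcd(a_j, m_j) = 1
        total += aj * bj * rj
    return total % M


m = [4, 3, 5, 7, 11]  # toy near-primorial vector, M = 4620
n = 3
rng = random.Random(7)
# Party i picks x[i][j] uniformly in [0, m_j) for every j, then reconstructs locally.
x = [[rng.randrange(mj) for mj in m] for _ in range(n)]
int_shares = [crt_recon(m, row) for row in x]
total = sum(int_shares)  # the shared integer, in [0, n * M)
for k, mk in enumerate(m):
    assert total % mk == sum(row[k] for row in x) % mk
```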

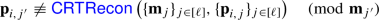

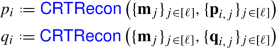

It follows that we can sample n shares for an additive secret sharing over the integers of a \({\kappa } \)-bit value x (distributed between 0 and \(n\cdot M\)) by choosing \({\varvec{\mathrm {m}}} \) to be the \(({\kappa },n)\)-near-primorial vector (per Definition 3.4), instructing each party \(\mathcal{P} _i\) for \(i\in [n]\) to pick \({\varvec{\mathrm {x}}}_{i,j}\) locally for \(j\in [\ell ]\) such that \(0\le {\varvec{\mathrm {x}}}_{i,j}<{\varvec{\mathrm {m}}} _j\), and then instructing each party to locally reconstruct  , its share of x. It furthermore follows that if the parties can contrive to ensure that
$$\begin{aligned} \sum \limits _{i\in [n]}{\varvec{\mathrm {x}}}_{i,j}\not \equiv 0\pmod {{\varvec{\mathrm {m}}} _j} \end{aligned}\qquad (3)$$
for \(j\in [\ell ]\), then x will not be divisible by any prime in \({\varvec{\mathrm {m}}} \).

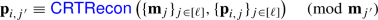

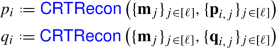

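Observe next that if the parties sample two shared vectors \({\varvec{\mathrm {p}}}\) and \({\varvec{\mathrm {q}}}\) as above (corresponding to the candidate primes \(p\) and \(q\)) and compute a shared vector \({\varvec{\mathrm {N}}}\) of identical dimension such that
$$\begin{aligned} \sum \limits _{i\in [n]}{\varvec{\mathrm {N}}}_{i,j}\equiv \sum \limits _{i\in [n]}{\varvec{\mathrm {p}}}_{i,j}\cdot \sum \limits _{i\in [n]}{\varvec{\mathrm {q}}}_{i,j}\pmod {{\varvec{\mathrm {m}}} _j} \end{aligned}\qquad (4)$$
for all \(j\in [\ell ]\), then it follows that
$$\begin{aligned} \sum \limits _{i\in [n]}\sum \limits _{j\in [\ell ]}{\varvec{\mathrm {a}}}_j\cdot {\varvec{\mathrm {b}}}_j\cdot {\varvec{\mathrm {N}}}_{i,j}\equiv p\cdot q\pmod {M} \end{aligned}$$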

and from this it follows that the parties can calculate integer shares of \(N=p\cdot q\) by multiplying \({\varvec{\mathrm {p}}}\) and \({\varvec{\mathrm {q}}}\) together element-wise using a modular-multiplication protocol for linear secret shares, and then locally running  on the output to reconstruct \(N\). In fact, our sampling protocol makes use of a special functionality  , which samples \({\varvec{\mathrm {p}}}\), \({\varvec{\mathrm {q}}}\), and \({\varvec{\mathrm {N}}}\) simultaneously such that the conditions in Eqs. 3 and 4 hold.
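The joint sampling of \(p\), \(q\), and \(N\) can be illustrated with a trusted-dealer stand-in for the special functionality mentioned above; the stand-in is our assumption, not the functionality's actual realization. For each modulus, the Python sketch below draws nonzero targets for \(p\) and \(q\) (fixing both to 3 mod 4 when \({\varvec{\mathrm {m}}} _j=4\)), splits them and their product into additive shares, and checks the conditions of Eqs. 3 and 4 after CRT reconstruction. The reconstructed integer shares of \(N\) agree with \(p\cdot q\) only modulo \(M\), which is the wrapping issue addressed in the next paragraph. Helper names are hypothetical.

```python
import random
from math import prod

# Hypothetical helper names; dealer stand-in, toy parameters only.


def crt_recon(m, residues):
    """x = sum_j a_j * b_j * r_j mod M, with a_j = M // m_j and b_j = a_j^{-1} mod m_j."""
    M = prod(m)
    return sum((M // mj) * pow(M // mj, -1, mj) * rj for mj, rj in zip(m, residues)) % M


def share_mod(value, modulus, n, rng):
    """Uniformly random additive shares of value mod modulus for n parties."""
    parts = [rng.randrange(modulus) for _ in range(n - 1)]
    parts.append((value - sum(parts)) % modulus)
    return parts


def toy_sample_pqN(m, n, rng):
    """Dealer stand-in returning per-modulus shares that satisfy Eq. 3 and Eq. 4."""
    p_res, q_res, N_res = ([[0] * len(m) for _ in range(n)] for _ in range(3))
    for j, mj in enumerate(m):
        # For m_j = 4 we fix p = q = 3 (mod 4); otherwise draw nonzero residues.
        tp, tq = (3, 3) if mj == 4 else (rng.randrange(1, mj), rng.randrange(1, mj))
        for target, res in ((tp, p_res), (tq, q_res), (tp * tq % mj, N_res)):
            for i, s in enumerate(share_mod(target, mj, n, rng)):
                res[i][j] = s
    return p_res, q_res, N_res


m, n = [4, 3, 5, 7, 11], 3
M = prod(m)
p_res, q_res, N_res = toy_sample_pqN(m, n, random.Random(5))
p = sum(crt_recon(m, row) for row in p_res)
q = sum(crt_recon(m, row) for row in q_res)
N_shares = [crt_recon(m, row) for row in N_res]
# Integer shares of N agree with p * q only modulo M.
assert sum(N_shares) % M == (p * q) % M
```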

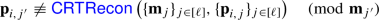

There remains one problem: our vector \({\varvec{\mathrm {m}}} \) was chosen for sampling integer-shared values between 0 and \(n\cdot M\) (with each share no larger than \(M\)), but \(N\) might be as large as \(n^2\cdot M^2\). In order to avoid wrapping during reconstruction of \(N\), we must reconstruct with respect to a larger vector of primes (while continuing to sample with respect to a smaller one). Let \({\varvec{\mathrm {m}}} \) now be of length \({\ell '}\), and let \(\ell \) continue to denote the length of the prefix of \({\varvec{\mathrm {m}}} \) with respect to which sampling is performed. After sampling the initial vectors \({\varvec{\mathrm {p}}}\), \({\varvec{\mathrm {q}}}\), and \({\varvec{\mathrm {N}}}\), each party \(\mathcal{P} _i\) for \(i\in [n]\) must extend \({\varvec{\mathrm {p}}}_{i,*}\) locally to \({\ell '}\) elements, by computing
$$\begin{aligned} {\varvec{\mathrm {p}}}_{i,j}:=p_i\bmod {{\varvec{\mathrm {m}}} _j},\quad \text {where}\quad p_i:=\left( \sum \limits _{k\in [\ell ]}{\varvec{\mathrm {a}}}_k\cdot {\varvec{\mathrm {b}}}_k\cdot {\varvec{\mathrm {p}}}_{i,k}\right) \bmod {M}, \end{aligned}$$

for \(j\in [\ell +1,{\ell '}]\), and then likewise for \({\varvec{\mathrm {q}}}_{i,*}\).Footnote 7 Finally, the parties must use a modular-multiplication protocol to compute the appropriate extension of \({\varvec{\mathrm {N}}}\); from this extended \({\varvec{\mathrm {N}}}\), they can reconstruct shares of \(N=p\cdot q\). They swap these shares, and thus, each party ends the sieving phase of our protocol with a candidate biprime \(N\) and an integer share of each of its factors, \(p_i\) and \(q_i\).
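Continuing the same toy setting, the sketch below extends each party's residues beyond the sampling prefix as \({\varvec{\mathrm {p}}}_{i,j}:=p_i\bmod {{\varvec{\mathrm {m}}} _j}\) (our reading of the extension step), uses a dealer stand-in (an assumption) in place of the modular-multiplication protocol for the extended entries of \({\varvec{\mathrm {N}}}\), and reconstructs \(N\) over the full vector. We assume the toy vector \({\varvec{\mathrm {m}}} =(4,3,5,7,11,13,17,19,23,29)\) with prefix length \(\ell =5\), so \(M=4620\) and the full product exceeds \(n^2\cdot M^2\) for \(n\le 3\); reducing the sum of the swapped integer shares modulo the full product (our choice for the sketch) then recovers exactly \(p\cdot q\). Helper names are hypothetical.

```python
import random
from math import prod

# Hypothetical helper names; dealer stand-ins, toy parameters only.


def crt_recon(m, residues):
    """x = sum_j a_j * b_j * r_j mod M, with a_j = M // m_j and b_j = a_j^{-1} mod m_j."""
    M = prod(m)
    return sum((M // mj) * pow(M // mj, -1, mj) * rj for mj, rj in zip(m, residues)) % M


def share_mod(value, modulus, n, rng):
    """Uniformly random additive shares of value mod modulus for n parties."""
    parts = [rng.randrange(modulus) for _ in range(n - 1)]
    parts.append((value - sum(parts)) % modulus)
    return parts


def toy_sieve_and_extend(m_full, ell, n, rng):
    """Sample on m_full[:ell], extend to all of m_full, and reconstruct N (toy)."""
    L = len(m_full)
    p_res, q_res, N_res = ([[0] * L for _ in range(n)] for _ in range(3))
    for j in range(ell):  # sampling prefix: Eqs. 3 and 4 via a dealer stand-in
        mj = m_full[j]
        tp, tq = (3, 3) if mj == 4 else (rng.randrange(1, mj), rng.randrange(1, mj))
        for target, res in ((tp, p_res), (tq, q_res), (tp * tq % mj, N_res)):
            for i, s in enumerate(share_mod(target, mj, n, rng)):
                res[i][j] = s
    # Integer shares p_i, q_i in [0, M), reconstructed over the prefix only.
    p_int = [crt_recon(m_full[:ell], row[:ell]) for row in p_res]
    q_int = [crt_recon(m_full[:ell], row[:ell]) for row in q_res]
    for j in range(ell, L):
        mj = m_full[j]
        for i in range(n):  # local length extension: p_{i,j} := p_i mod m_j
            p_res[i][j] = p_int[i] % mj
            q_res[i][j] = q_int[i] % mj
        # Dealer stand-in for the modular-multiplication protocol on modulus m_j.
        prod_j = sum(r[j] for r in p_res) * sum(r[j] for r in q_res) % mj
        for i, s in enumerate(share_mod(prod_j, mj, n, rng)):
            N_res[i][j] = s
    N_int = [crt_recon(m_full, row) for row in N_res]
    # After swapping shares, reduce mod the full product to undo the n-fold wrap.
    N = sum(N_int) % prod(m_full)
    return N, sum(p_int), sum(q_int)


m_full = [4, 3, 5, 7, 11, 13, 17, 19, 23, 29]  # prefix of length 5 has product 4620
N, p, q = toy_sieve_and_extend(m_full, 5, 3, random.Random(3))
assert N == p * q
```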

Each party completes the first phase by performing a local trial division to check if \(N\) is divisible by any prime smaller than some bound B (which is a parameter of the protocol). The purpose of this step is to reduce the number of calls to  and thus improve efficiency.
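Because \(N\) is public at this point, the trial division requires no interaction. A minimal Python sketch follows; the bound B is a free parameter here, the sieve of Eratosthenes is simply one convenient way to enumerate the primes below B, and the function names are illustrative rather than taken from the protocol specification.

```python
from math import isqrt

# Hypothetical helper names.


def primes_below(B: int) -> list:
    """All primes p < B via the sieve of Eratosthenes."""
    if B <= 2:
        return []
    is_p = bytearray([1]) * B
    is_p[0] = is_p[1] = 0
    for i in range(2, isqrt(B - 1) + 1):
        if is_p[i]:
            is_p[i * i::i] = bytearray(len(range(i * i, B, i)))
    return [i for i in range(B) if is_p[i]]


def survives_trial_division(N: int, B: int) -> bool:
    """True iff no prime smaller than B divides N (a public, local check)."""
    return all(N % p != 0 for p in primes_below(B))
```

For example, \(101\cdot 103\) survives trial division with \(B=100\), whereas \(97\cdot 101\) does not.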

Biprimality Test. The parties jointly execute a biprimality test, where every party inputs the candidate \(N\) and its shares \(p_i\) and \(q_i\), and receives back a biprimality indicator. This phase essentially comprises a single call to a functionality  , which allows an adversary to force spurious negative results but never returns false positives. Though this phase is simple, much of the subtlety of our proof is concentrated here: we show via a reduction to factoring that cheating parties have only a negligible chance of passing the biprimality test if they provide wrong inputs. This eliminates the need to authenticate the inputs in any way.

Consistency Check. To achieve malicious security, the parties must ensure that none among them cheated during the previous phases in a way that might influence the result of the computation. This is what we have previously termed the retroactive consistency check. If the biprimality test indicates that \(N\) is not a biprime, then the parties use a special interface of  to reveal the shares they used during the protocol, and then they verify locally and independently that \(p\) and \(q\) are not both primes. If the biprimality test indicates that \(N\) is a biprime, then the parties run a secure test (again via a special interface of  ) to ensure that the length extensions of \({\varvec{\mathrm {p}}}\) and \({\varvec{\mathrm {q}}}\) were performed honestly. For semi-honest security, this phase is unnecessary, and the protocol can end with the biprimality test.
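The not-biprime branch of this check reduces to a local computation once the shares have been revealed. The Python sketch below assumes that the revealed integer shares are authentic (which the special interface is meant to guarantee) and uses trial division for primality, so it is suitable for toy sizes only; the biprime branch, a secure test inside the functionality, is not modelled. The function names are hypothetical.

```python
# Hypothetical helper names; toy sizes only.


def is_prime(x: int) -> bool:
    """Trial-division primality test; adequate only for toy sizes."""
    if x < 2:
        return False
    d = 2
    while d * d <= x:
        if x % d == 0:
            return False
        d += 1
    return True


def rejection_is_explained(p_shares, q_shares) -> bool:
    """Local check after a not-biprime verdict and an authenticated opening.

    Returns True when the opened shares define p and q that are not both prime
    (an ordinary sampling failure), and False when both are prime, meaning that
    a genuine biprime was rejected and some party must have cheated.
    """
    p, q = sum(p_shares), sum(q_shares)
    return not (is_prime(p) and is_prime(q))
```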

4.2 Ideal Functionalities Used in the Protocol

Augmented Multiparty Multiplier. The augmented multiplier functionality  (Functionality 4.1) is a reactive functionality that operates in multiple phases and stores internal state across calls. It is meant to help in manipulating CRT-form secret shares, and to enforce correct reuse of a set of shares across multiple operations. It contains five basic interfaces.

- The \(\texttt {sample}\) interface allows the parties to sample shares of nonzero multiplication triplets over small primes. That is, given a prime \(m\), the functionality receives a triplet \((x_i,y_i,z_i)\) from every corrupted party \(\mathcal{P} _i\), and then samples a triplet \((x_j,y_j,z_j)\leftarrow {\mathbb {Z}}^3_m\) for every honest \(\mathcal{P} _j\) conditioned on
  $$\begin{aligned} \sum \limits _{i\in [n]}z_i\equiv \sum \limits _{i\in [n]}x_i\cdot \sum \limits _{i\in [n]}y_i \not \equiv 0\pmod {m} \end{aligned}$$
  In the context of  , this is used to sample CRT-shares of \(p\) and \(q\).

- The \(\texttt {input}\) and \(\texttt {multiply}\) interfaces, taken together, allow the parties to load shares (with respect to some small prime modulus \(m\)) into the functionality’s memory, and later perform modular multiplication on two sets of shares that are associated with the same modulus. That is, given a prime \(m\), each party \(\mathcal{P} _i\) inputs \(x_i\) and, independently, \(y_i\), and when the parties request a product, the functionality samples a share of z from \({\mathbb {Z}}_m\) for each party subject to
  $$\begin{aligned} \sum \limits _{i\in [n]}z_i\equiv \sum \limits _{i\in [n]}x_i\cdot \sum \limits _{i\in [n]}y_i\pmod {m} \end{aligned}$$
  In the context of  , this interface is used to perform length-extension on CRT-shares of \(p\) and \(q\).

- The \(\texttt {check}\) interface allows the parties to securely compute a predicate over the set of stored values. In the context of  , this is used to check that the CRT-share extension of \(p\) and \(q\) has been performed correctly, when \(N\) is a biprime.

- The \(\texttt {open}\) interface allows the parties to retroactively reveal their inputs to one another. In the context of  , this is used to verify the sampling procedure and biprimality test when \(N\) is not a biprime.

These five interfaces suffice for the malicious version of the protocol, and the first three alone suffice for the semi-honest version. We make a final adjustment, which leads to a substantial efficiency improvement in the protocol with which we realize  (which we describe in Appendix B). Specifically, we give the adversary an interface by which it can request that any stored value be leaked to itself, and by which it can (arbitrarily) determine the output of any call to the \(\texttt {sample}\) or \(\texttt {multiply}\) interfaces. However, if the adversary uses this interface, the functionality records this fact and informs the honest parties by aborting when the \(\texttt {check}\) or \(\texttt {open}\) interface is used.
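As an informal model of these interfaces, the Python sketch below implements a trusted-party object with `sample`, `input`, `multiply`, `check`, and `open` methods over additive shares. It is a simplification, not Functionality 4.1: all names are hypothetical, each call handles a single modulus, and of the adversarial interface it models only the leak (not the ability to override outputs), together with the flag that makes `check` and `open` abort afterwards.

```python
import random

# Hypothetical class and method names; a simplified trusted-party model.


class ToyAugMul:
    """Stored values are lists of n additive shares keyed by (label, modulus)."""

    def __init__(self, n, corrupt, rng):
        self.n, self.corrupt, self.rng = n, set(corrupt), rng
        self.store = {}
        self.cheated = False  # set by any use of the adversarial leak interface

    def _complete(self, m, fixed, total):
        """Shares mod m summing to total; entries in `fixed` are kept as given."""
        free = [i for i in range(self.n) if i not in fixed]
        shares = [fixed.get(i, 0) % m for i in range(self.n)]
        for i in free[:-1]:
            shares[i] = self.rng.randrange(m)
        shares[free[-1]] = (total - sum(shares)) % m  # this entry is still 0 here
        return shares

    def sample(self, label, m, corrupt_triples):
        """Triple over prime m with sum z = sum x * sum y != 0 (mod m)."""
        X, Y = self.rng.randrange(1, m), self.rng.randrange(1, m)
        for k, (suffix, total) in enumerate((("x", X), ("y", Y), ("z", X * Y))):
            fixed = {i: t[k] for i, t in corrupt_triples.items()}
            self.store[(label + suffix, m)] = self._complete(m, fixed, total)
        return [tuple(self.store[(label + s, m)][i] for s in "xyz") for i in range(self.n)]

    def input(self, label, m, shares):
        self.store[(label, m)] = [s % m for s in shares]

    def multiply(self, out, a, b, m):
        """Fresh uniform shares of (sum of a) * (sum of b) mod m."""
        total = sum(self.store[(a, m)]) * sum(self.store[(b, m)])
        self.store[(out, m)] = self._complete(m, {}, total)
        return self.store[(out, m)]

    def _guard(self):
        if self.cheated:
            raise RuntimeError("abort: the adversarial interface was used")

    def check(self, predicate):
        self._guard()
        return predicate(self.store)

    def open(self, label, m):
        self._guard()
        return list(self.store[(label, m)])

    def adv_leak(self, label, m):
        self.cheated = True
        return list(self.store[(label, m)])
```

In this model, once `adv_leak` has been called, any later `check` or `open` raises, which mirrors the abort described above.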

Biprimality Test. The biprimality-test functionality  (Functionality 4.2) abstracts the behavior of the biprimality test of Boneh and Franklin [5]. The functionality receives from each party a candidate biprime \(N\), along with shares of its factors \(p\) and \(q\). It checks whether \(p\) and \(q\) are primes and whether \(N=p\cdot q\). The adversary is given an additional interface by which it can ask the functionality to leak the honest parties’ inputs, but if this interface is used, then the functionality reports to the honest parties that \(N\) is not a biprime, even if it is one.
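The ideal check performed by this functionality can be sketched in a few lines of Python. The sketch assumes a single common \(N\) (the functionality itself receives \(N\) from each party), models the leak interface only as a flag, and uses trial division for toy sizes; the function name is hypothetical. It makes explicit that the adversary can force false negatives but never a false positive.

```python
# Hypothetical helper names; toy sizes only.


def is_prime(x: int) -> bool:
    """Trial-division primality test; adequate only for toy sizes."""
    if x < 2:
        return False
    d = 2
    while d * d <= x:
        if x % d == 0:
            return False
        d += 1
    return True


def toy_f_biprime(N, p_shares, q_shares, leak_used=False):
    """'biprime' iff p = sum(p_shares) and q = sum(q_shares) are prime and N = p * q.

    If the adversary used its leak interface, the answer is forced to
    'not-biprime': spurious negatives are possible, false positives are not.
    """
    if leak_used:
        return "not-biprime"
    p, q = sum(p_shares), sum(q_shares)
    return "biprime" if is_prime(p) and is_prime(q) and p * q == N else "not-biprime"
```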

Realizations. In Appendix B, we discuss a protocol to realize  , and in Sect. 5, we propose a protocol to realize  . Both make use of generic MPC, but in such a way that no generic MPC is required unless \(N\) is a biprime.

4.3 The Protocol Itself

We refer the reader back to Sect. 4.1 for an overview of our protocol. We have mentioned that it requires a vector of coprime values whose prefix is the \(({\kappa },n)\)-near-primorial vector. We now give this vector a precise definition. Note that the efficiency of our protocol relies upon this vector, because we use its contents to sieve candidate primes. Since smaller numbers are more likely to be factors of the candidate primes, we choose the largest allowable set of the smallest consecutive primes.

Definition 4.3

(\(({\kappa },n)\)-Compatible Parameter Set) Let \({\ell '}\) be the smallest number such that the \({\ell '}^\text {th}\) primorial number is greater than \(2^{2{\kappa }-1}\), and let \({\varvec{\mathrm {m}}} \) be a vector of length \({\ell '}\) such that \({\varvec{\mathrm {m}}} _1=4\) and \({\varvec{\mathrm {m}}} _2,\ldots ,{\varvec{\mathrm {m}}} _{\ell '}\) are the odd prime factors of the \({\ell '}^\text {th}\) primorial number, in ascending order. \(({\varvec{\mathrm {m}}},{\ell '},\ell ,M)\) is the \(({\kappa },n)\)-compatible parameter set if \(\ell < {\ell '}\) and the prefix of \({\varvec{\mathrm {m}}} \) of length \(\ell \) is the \(({\kappa }, n)\)-near-primorial vector per Definition 3.4, and if \(M\) is the product of this prefix.
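Definition 4.3 can likewise be evaluated for toy parameters. The Python sketch below returns \(({\varvec{\mathrm {m}}},{\ell '},\ell ,M)\), using the exact integer form \(2n\cdot \text {primorial}<2^{\kappa }\) of the prefix bound from Definition 3.4 and raising an error if no compatible set exists; the function name is hypothetical. For \({\kappa } =16\) and \(n=2\) it gives \({\ell '}=10\), \(\ell =5\), and \(M=4620\). In general, the product of the full vector is twice the \({\ell '}^\text {th}\) primorial and hence exceeds \(2^{2{\kappa }}\), while \(n\cdot M<2^{\kappa }\), so the full product exceeds the bound \(n^2\cdot M^2\) on \(N\).

```python
from math import prod

# Hypothetical helper name; toy kappa only.


def compatible_parameter_set(kappa: int, n: int):
    """Return (m, l_prime, l, M) per Definition 4.3."""
    primes, primorial, c = [], 1, 2
    # l' is the smallest count whose primorial exceeds 2**(2*kappa - 1).
    while primorial <= 2 ** (2 * kappa - 1):
        if all(c % p for p in primes):  # c is prime iff no smaller prime divides it
            primes.append(c)
            primorial *= c
        c += 1
    l_prime = len(primes)
    # l is the largest count whose primorial is below 2**(kappa - log2(n) - 1),
    # written exactly as 2 * n * primorial < 2**kappa.
    l, run = 0, 1
    for p in primes:
        if 2 * n * run * p >= 2 ** kappa:
            break
        run *= p
        l += 1
    if not 1 <= l < l_prime:
        raise ValueError("no compatible parameter set for these (kappa, n)")
    m = [4] + primes[1:]  # m_1 = 4 replaces 2; then the odd primes, ascending
    return m, l_prime, l, prod(m[:l])
```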

4.4 Security Sketches

We now informally argue that  realizes  in the semi-honest and malicious settings. We give a full proof for the malicious setting in Appendix C.

Theorem 4.5

The protocol  perfectly UC-realizes  in the  ,  -hybrid model against a semi-honest adversary that statically corrupts up to \(n-1\) parties.

Proof Sketch

In lieu of arguing for the correctness of our protocol, we refer the reader to the explanation in Sect. 4.1 and focus here on the strategy of a simulator \(\mathcal{S} \) against a semi-honest adversary \(\mathcal{A} \) who corrupts the parties indexed by \({{\varvec{\mathrm {P}}}^*} \). \(\mathcal S\) forwards all messages between \(\mathcal{A} \) and the environment faithfully.

In Step 1 of  , for each \(j\in [2,\ell ]\), \(\mathcal S\) receives the \(\texttt {sample}\) instruction with modulus \({\varvec{\mathrm {m}}} _j\) on behalf of  from all parties indexed by \({{\varvec{\mathrm {P}}}^*} \). For each j, it then samples \(({\varvec{\mathrm {p}}}_{i,j},{\varvec{\mathrm {q}}}_{i,j},{\varvec{\mathrm {N}}}_{i,j})\leftarrow {\mathbb {Z}}^3_{{\varvec{\mathrm {m}}} _j}\) uniformly for \(i\in {{\varvec{\mathrm {P}}}^*} \), and returns each triple to the appropriate party.

Step 2 involves no interaction on the part of the parties, but it is at this point that \(\mathcal S\) computes \(p_i\) and \(q_i\) for \(i\in {{\varvec{\mathrm {P}}}^*} \), in the same way that the parties themselves do. Note that since \({\varvec{\mathrm {p}}}_{*,1}\) and \({\varvec{\mathrm {q}}}_{*,1}\) are deterministically chosen, they are known to \(\mathcal S\). The simulator then sends these shares to  via the functionality’s \(\texttt {adv-input}\) interface and receives in return either a biprime \(N\), or two factors \(p\) and \(q\) such that \(N:=p\cdot q\) is not a biprime. Regardless, it instructs  to \(\texttt {proceed}\).

In Step 3 of  , \(\mathcal S\) receives two \(\texttt {input}\) instructions from each corrupted party for each \(j\in [\ell +1,{\ell '}]\) on behalf of  , and confirms receipt as  would. Subsequently, for each \(j\in [\ell +1,{\ell '}]\), the corrupt parties all send a \(\texttt {multiply}\) instruction, and then \(\mathcal S\) samples \({\varvec{\mathrm {N}}}_{i,j}\leftarrow {\mathbb {Z}}_{{\varvec{\mathrm {m}}} _j}\) for \(i\in [n]\) subject to
$$\begin{aligned} \sum \limits _{i\in [n]}{\varvec{\mathrm {N}}}_{i,j}\equiv \sum \limits _{i\in [n]}{\varvec{\mathrm {p}}}_{i,j}\cdot \sum \limits _{i\in [n]}{\varvec{\mathrm {q}}}_{i,j}\pmod {{\varvec{\mathrm {m}}} _j} \end{aligned}$$
and returns each share to the matching corrupt party.

In Step 4 of  , for every \(j\in [{\ell '}]\), every corrupt party \(\mathcal{P} _{i'}\) for \(i'\in {{\varvec{\mathrm {P}}}^*} \), and every honest party \(\mathcal{P} _i\) for \(i\in [n]{\setminus }{{\varvec{\mathrm {P}}}^*} \), \(\mathcal S\) sends \({\varvec{\mathrm {N}}}_{i,j}\) to \(\mathcal{P} _{i'}\) on behalf of \(\mathcal{P} _i\), and receives \({\varvec{\mathrm {N}}}_{i',j}\) (which it already knows) in reply.

To simulate the final steps of  , \(\mathcal S\) tries to divide \(N\) by all primes smaller than B. If it finds a divisor, then the protocol is complete. Otherwise, it receives \(\texttt {check-}\texttt {biprimality}\) from all of the corrupt parties on behalf of  , and replies with \(\texttt {biprime}\) or \(\texttt {not-biprime}\) as appropriate. It can be verified by inspection that the view of the environment is identically distributed in the ideal-world experiment containing \(\mathcal S\) and honest parties that interact with  , and in the real-world experiment containing \(\mathcal A\) and parties running  .

Theorem 4.6

If factoring biprimes sampled by  is hard, then  UC-realizes  in the  -hybrid model against a malicious PPT adversary that statically corrupts up to \(n-1\) parties.

Proof Sketch

We observe that if the adversary simply follows the specification of the protocol and does not cheat in its inputs to  or  , then the simulator can follow the same strategy as in the semi-honest case. At any point, if the adversary deviates from the protocol, the simulator requests  to reveal all honest parties’ shares, and thereafter the simulator uses them by effectively running the code of the honest parties. This matches the adversary’s view in the real protocol as far as the distribution of the honest parties’ shares is concerned.

It remains to argue that any deviation from the protocol specification will also result in an abort in the real world with honest parties and will additionally be recognized by the honest parties as an adversarially induced cheat (as opposed to a statistical sampling failure). Note that the honest parties need only detect cheating when \(N\) is truly a biprime and the adversary has sabotaged a successful candidate; if \(N\) is not a biprime and would have been rejected anyway, then cheat detection is unimportant. We analyze below all possible cases in which the adversary deviates from the protocol. Let \(N\) be defined as the value implied by the parties’ sampled shares in Step 1 of  .

Case 1: \(N\) is a non-biprime and reconstructed correctly. In this case,  will always reject \(N\), as there exist no satisfying inputs (i.e., there are no two prime factors \(p,q\) such that \(p\cdot q=N\)).

Case 2: \(N\) is a non-biprime and reconstructed incorrectly as \(N'\). If \(N'\) happens by chance to be a biprime, then the incorrect reconstruction will be caught by the explicit secure predicate check during the consistency-check phase. If \(N'\) is a non-biprime, then the argument from the previous case applies.

Case 3: \(N\) is a biprime and reconstructed correctly. If consistent inputs are used for the biprimality test and nobody cheats, the candidate \(N\) is successfully accepted (this case essentially corresponds to the semi-honest case). Otherwise, if inconsistent inputs are used for the biprimality test, one of the following events will occur:

-  rejects this candidate. In this case, all parties reveal their shares of \(p\) and \(q\) to one another (with guaranteed correctness via  ) and locally test their primality. This will reveal that \(N\) was a biprime and that  must have been supplied with inconsistent inputs, implying that some party has cheated.

-  accepts this candidate. This case occurs with negligible probability (assuming factoring is hard). Because \(N\) has only two factors, there is exactly one pair of inputs that the adversary can supply to  to induce this scenario, apart from the pair specified by the protocol. In our full proof (see Appendix C) we show that finding this alternative pair of satisfying inputs implies factoring \(N\). We are careful to rely on the hardness of factoring only in this case, where by premise \(N\) is a biprime with \({\kappa } \)-bit factors (i.e., an instance of the factoring problem).

Case 4: \(N\) is a biprime and reconstructed incorrectly as \(N'\). If \(N'\) is a biprime, then the incorrect reconstruction will be caught during the consistency-check phase, just as in Case 2. If \(N'\) is a non-biprime, then it will be rejected by  , inducing all parties to reveal their shares and find that their shares do not in fact reconstruct to \(N'\), with the implication that some party has cheated.

Thus, the adversary is always caught when trying to sabotage a true biprime, and it can never sneak a non-biprime past the consistency check. Because the real-world protocol always aborts in the case of cheating, it is indistinguishable from the simulation described above, assuming that factoring is hard. \(\square \)

5 Distributed Biprimality Testing

In this section, we present protocols realizing  . In Sect. 5.1, we discuss the semi-honest setting, and in Sect. 5.2, the malicious setting.

5.1 The Semi-Honest Setting

In the semi-honest setting,  can be realized by the biprimality-testing protocol of Boneh and Franklin [5]. Loosely speaking, the Boneh–Franklin protocol is a variant of the Miller–Rabin test: for a randomly chosen \(\gamma \in {\mathbb {Z}}^*_N\) with Jacobi symbol 1, it checks whether \(\gamma ^{(N- p- q+1)/4} \equiv \pm 1 \pmod {N}\) (recall that \(\varphi (N)=N- p- q+1\)). A biprime will always pass this test, but a non-biprime outside the special class described next may yield a false positive with probability at most 1/2. The test is repeated \({s} \) times (either sequentially or concurrently) in order to bound the probability of proceeding with a false positive to \(2^{-{s}}\) (where \({s} \) is the statistical security parameter).
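The check above can be evaluated from additive shares without reconstructing \(p\) or \(q\). The Python sketch below assumes, as a labeled assumption, a share layout under which the exponent splits cleanly: \(p\equiv q\equiv 3 \pmod 4\), party 1's shares are \(\equiv 3 \pmod 4\), and all other shares are \(\equiv 0 \pmod 4\). Party 1 then computes \(v_1=\gamma^{(N-p_1-q_1+1)/4}\), every other party computes \(v_i=\gamma^{(p_i+q_i)/4}\), and the test becomes \(v_1\equiv\pm\prod_{i>1}v_i \pmod N\). The function names, the toy sharing, and the in-process "parties" are hypothetical; a real deployment would exchange the \(v_i\) over the network and sample \(\gamma\) jointly.

```python
import random
from math import gcd


def jacobi(a: int, n: int) -> int:
    """Jacobi symbol (a/n) for odd n > 0, via quadratic reciprocity."""
    a %= n
    result = 1
    while a != 0:
        while a % 2 == 0:  # (2/n) = -1 iff n = 3 or 5 (mod 8)
            a //= 2
            if n % 8 in (3, 5):
                result = -result
        a, n = n, a  # reciprocity: flip sign iff both are 3 (mod 4)
        if a % 4 == 3 and n % 4 == 3:
            result = -result
        a %= n
    return result if n == 1 else 0


def share(x: int, n: int, rng: random.Random) -> list:
    """Hypothetical additive sharing of x = 3 (mod 4) among n parties:
    the first share is = 3 (mod 4), all others are = 0 (mod 4)."""
    rest = [4 * rng.randrange(x // (4 * n) + 1) for _ in range(n - 1)]
    return [x - sum(rest)] + rest


def bf_test(N: int, p_sh: list, q_sh: list, s: int, rng: random.Random) -> bool:
    """s rounds of the check gamma^((N-p-q+1)/4) = +-1 (mod N), computed
    from per-party values so that no single value reveals p or q."""
    for _ in range(s):
        # public random gamma in Z_N^* with Jacobi symbol 1
        while True:
            g = rng.randrange(2, N)
            if gcd(g, N) == 1 and jacobi(g, N) == 1:
                break
        # party 1 raises gamma to (N - p_1 - q_1 + 1)/4 ...
        v1 = pow(g, (N - p_sh[0] - q_sh[0] + 1) // 4, N)
        # ... and parties i > 1 raise gamma to (p_i + q_i)/4
        prod = 1
        for pi, qi in zip(p_sh[1:], q_sh[1:]):
            prod = prod * pow(g, (pi + qi) // 4, N) % N
        # gamma^(phi/4) = v1 / prod, so the test is v1 = +-prod (mod N)
        if v1 != prod and v1 != (-prod) % N:
            return False
    return True


rng = random.Random(1)
p, q = 1019, 1031  # both prime and both = 3 (mod 4)
print(bf_test(p * q, share(p, 3, rng), share(q, 3, rng), 40, rng))  # True
print(bf_test(15 * 7, share(15, 3, rng), share(7, 3, rng), 40, rng))  # False, except with negligible probability
```

A biprime always passes because the comparison is exact: \(v_1/\prod_{i>1}v_i=\gamma^{\varphi(N)/4}\). The non-biprime \(105=15\cdot 7\) is not of the special form discussed next, and each round passes for only a quarter of the admissible \(\gamma\).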

The above test filters out all non-biprimes except those with factors of the form \(p=a_1^{b_1}\) and \(q=a_2^{b_2}\), with \(q\equiv 1 \pmod {a_1^{b_1-1}}\). This final class of non-biprimes is filtered by securely sampling \(r\leftarrow {\mathbb {Z}}_N\), computing \(z:=r\cdot (p+q-1)\), and then testing whether \(\gcd (z,N)=1\).Footnote 8 Boneh and Franklin suggest that the secure sampling of \(r\) and the computation of \(z\) can be done via generic MPC; we provide a functionality  (see Appendix A.2) that is adequate for the task, but note that other strategies exist: for example, Frederiksen et al. use a bespoke protocol, which could be further optimized by performing its multiparty multiplication operations in CRT form, as we have outlined in this work. As we report in Sect. 6, this final GCD test does not represent a substantial fraction of the overall cost in the semi-honest setting, even if it is instantiated generically, and so the impact of such optimizations would be minimal. Regardless, we note that the GCD test does induce some false negatives (modeled by  ) and refer the reader to Boneh and Franklin [5, Section 4.1] for a more comprehensive discussion. The following lemma is immediately implied by their work.

Lemma 5.1

The biprimality-testing protocol described by Boneh and Franklin [5] UC-realizes  with statistical security in the  -hybrid model against a static, semi-honest adversary who corrupts up to \(n-1\) parties.
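The need for the final GCD step can be seen on a toy member of the exceptional class. In the hypothetical sketch below, \(p=27=3^3\) and \(q=19\) satisfy \(p\equiv q\equiv 3 \pmod 4\) and \(q\equiv 1 \pmod {3^2}\), so \(N=513\) passes the Jacobi-symbol check for every admissible \(\gamma\) (verified here by brute force), yet it always fails the GCD test because 3 divides both \(p+q-1=45\) and \(N\). All values are computed in the clear by one process and the helper names are illustrative; in the protocols, \(r\) and \(z\) are produced securely.

```python
import random
from math import gcd


def jacobi(a: int, n: int) -> int:
    """Jacobi symbol (a/n) for odd n > 0, via quadratic reciprocity."""
    a %= n
    result = 1
    while a != 0:
        while a % 2 == 0:
            a //= 2
            if n % 8 in (3, 5):
                result = -result
        a, n = n, a
        if a % 4 == 3 and n % 4 == 3:
            result = -result
        a %= n
    return result if n == 1 else 0


def passes_bf_condition(N: int, p: int, q: int) -> bool:
    """Brute force (small N only): does gamma^((N-p-q+1)/4) = +-1 (mod N)
    hold for EVERY gamma in Z_N^* with Jacobi symbol 1?"""
    e = (N - p - q + 1) // 4
    return all(pow(g, e, N) in (1, N - 1)
               for g in range(1, N)
               if gcd(g, N) == 1 and jacobi(g, N) == 1)


def gcd_test(N: int, p: int, q: int, rng: random.Random) -> bool:
    """Sample r <- Z_N, set z = r*(p+q-1) mod N, accept iff gcd(z, N) = 1."""
    r = rng.randrange(N)
    z = r * (p + q - 1) % N
    return gcd(z, N) == 1


rng = random.Random(0)
print(passes_bf_condition(513, 27, 19))  # True: the Jacobi test cannot reject it
print(gcd_test(513, 27, 19, rng))        # False: 3 divides both z and N
```

For a true biprime, the GCD test fails only when \(r\) happens to share a factor with \(N\) (or \(p\) divides \(q-1\), or vice versa), which is one source of the false negatives mentioned above.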

5.2 The Malicious Setting

Unlike in the semi-honest setting, we permit a malicious adversary to force a true biprime to fail our biprimality test, and we detect such behavior using independent mechanisms in the  protocol. However, we must ensure that a non-biprime can never pass the test with more than negligible probability. To achieve this, we use a derivative of the biprimality-testing protocol of Frederiksen et al. [23]. Our protocol takes essentially the same high-level approach as theirs, but its complexity is reduced because we consider multiple soundness errors jointly instead of independently. Furthermore, by careful reordering and the right choice of functionalities, we eliminate the non-black-box use of commitments, as well as an expensive, redundant multiparty multiplication over \(2{\kappa } \)-bit inputs.

Our protocol essentially comprises a randomized version of the semi-honest Boneh–Franklin test described previously, followed by a Schnorr-like protocol to verify that the test was performed correctly. The soundness error of the underlying biprimality test is compounded by the Schnorr-like protocol’s soundness error to yield a combined error of 3/4, which implies that the test must be repeated \({s} \cdot \log _{4/3}(2)<2.5{s} \) times in order to achieve a soundness error no greater than \(2^{-{s}}\) overall. While this is sufficient to ensure that the test itself is carried out honestly, it does not ensure that the correct inputs are used. Consequently, generic MPC is used to verify the relationship between the messages involved in the Schnorr-like protocol and the true candidate given by \(N\) and shares of its factors. As a side effect, this generic computation samples \(r\leftarrow {\mathbb {Z}}_N\) and outputs \(z=r\cdot (p+q-1)\bmod {N}\) so that the GCD test can afterward be run locally by each party.
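The repetition count can be checked directly. Assuming the repetitions are independent, the overall soundness error after \(t\) repetitions is \((3/4)^t\), so the smallest sufficient \(t\) is \(\lceil {s}\cdot\log_{4/3}(2)\rceil\), where \(\log_{4/3}(2)\approx 2.409\). The short sketch below (function name hypothetical) computes \(t\) for a few common values of \({s}\) and compares it with the \(2.5{s}\) repetitions reflected in the length of \({\varvec{\mathrm {c}}}\).

```python
import math


def repetitions(s: int) -> int:
    """Smallest t with (3/4)**t <= 2**-s, i.e. t = ceil(s * log_{4/3}(2))."""
    return math.ceil(s * math.log(2) / math.log(4 / 3))


for s in (40, 80, 128):
    # compare the minimal count with the 2.5*s budget
    print(s, repetitions(s), 2.5 * s)
# prints: 40 97 100.0 / 80 193 200.0 / 128 309 320.0
```

Using the rounder figure \(2.5{s}\) costs only a few percent more repetitions than the minimum for these parameters.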

Our protocol makes use of a number of subfunctionalities, all of which are standard and described in Appendix A.2. Namely, we use a coin-tossing functionality  to uniformly sample an element from some set, the one-to-many commitment functionality  , the generic MPC functionality over committed inputs  , and the integer-sharing-of-zero functionality  . In addition, the protocol uses the algorithm  (Algorithm 5.3).

Below we present the algorithm  that is used for the GCD test. The inputs are the candidate biprime \(N\), an integer \(M\) (the bound on the shares’ size), a bit-vector \({\varvec{\mathrm {c}}}\) of length \(2.5{s} \), and for each \(i\in [n]\) a tuple consisting of the shares \(p_i\) and \(q_i\) together with the Schnorr-like messages \({\varvec{\mathrm {\tau }}}_{i,*}\) and \({\varvec{\mathrm {\zeta }}}_{i,*}\) generated by \(\mathcal{P} _i\). The algorithm verifies that all input values are compatible and returns \(z=r\cdot (p+q-1) \bmod N\) for a random \(r\).

Theorem 5.4

The protocol  statistically UC-realizes  in the  -hybrid model against a malicious adversary that statically corrupts up to \(n-1\) parties.

Proof Sketch

Our simulator \(\mathcal{S} \) for  receives \(N\) as common input. Let \({{\varvec{\mathrm {P}}}^*} \) and  be vectors indexing the corrupt and honest parties, respectively. To simulate Steps 1 through 3 of  , \(\mathcal S\) simply plays the roles of  ,  , and  in their interactions with the corrupt parties, behaving exactly as they would and remembering the values received and transmitted. Before continuing, \(\mathcal S\) submits the corrupted parties’ shares of \(p\) and \(q\) to  on their behalf. In response,  either informs \(\mathcal S\) that \(N\) is a biprime or leaks the honest parties’ shares. In Step 4, \(\mathcal S\) again behaves exactly as  would. During the remainder of the protocol, the simulator must follow one of two different strategies, depending on whether or not \(N\) is a biprime. We will show that both strategies lead to a simulation that is statistically indistinguishable from the real-world experiment.

- If  reported that \(N\) is a biprime, then we know by the specification of  that the corrupt parties committed to correct shares of \(p\) and \(q\) in Step 1 of