Abstract

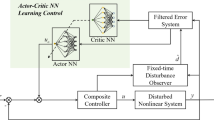

An optimal control method is developed for unknown continuous time systems with unknown disturbances in this chapter. The integral reinforcement learning (IRL) algorithm is presented to obtain the iterative control. Off-policy learning is used to allow the dynamics to be completely unknown. Neural networks (NN) are used to construct critic and action networks. It is shown that if there are unknown disturbances, off-policy IRL may not converge or may be biased. For reducing the influence of unknown disturbances, a disturbances compensation controller is added. It is proven that the weight errors are uniformly ultimately bounded (UUB) based on Lyapunov techniques. Convergence of the Hamiltonian function is also proven. The simulation study demonstrates the effectiveness of the proposed optimal control method for unknown systems with disturbances.

Access this chapter

Tax calculation will be finalised at checkout

Purchases are for personal use only

Similar content being viewed by others

References

Jiang, Y., Jiang, Z.: Robust adaptive dynamic programming for large-scale systems with an application to multimachine power systems. IEEE Trans. Circuits Syst. II: Express Br. 59(10), 693–697 (2012)

Chen, B., Liu, K., Liu, X., Shi, P., Lin, C., Zhang, H.: Approximation-based adaptive neural control design for a class of nonlinear systems. IEEE Trans. Cybern. 44(5), 610–619 (2014)

Lewis, F., Vamvoudakis, K.: Reinforcement learning for partially observable dynamic processes: adaptive dynamic programming using measured output data. IEEE Trans. Syst. Man Cybern. Part B: Cybern. 41(1), 14–25 (2011)

Song, R., Xiao, W., Zhang, H., Sun, C.: Adaptive dynamic programming for a class of complex-valued nonlinear systems. IEEE Trans. Neural Netw. Learn. Syst. 25(9), 1733–1739 (2014)

Lee, J., Park, J., Choi, Y.: Integral Q-learning and explorized policy iteration for adaptive optimal control of continuous-time linear systems. Automatica 48(11), 2850–2859 (2012)

Kiumarsi, B., Lewis, F., Modares, H., Karimpour, A., Naghibi-Sistani, M.: Reinforcement Q-learning for optimal tracking control of linear discrete-time systems with unknown dynamics. Automatica 50(4), 1167–1175 (2014)

Vrabie, D., Pastravanu, O., Lewis, F., Abu-Khalaf, M.: Adaptive optimal control for continuous-time linear systems based on policy iteration. Automatica 45(2), 477–484 (2009)

Lewis, F., Vrabie, D., Vamvoudakis, K.: Reinforcement learning and feedback control: using natural decision methods to design optimal adaptive controllers. IEEE Control Syst. Mag. 32(6), 76–105 (2012)

Bhasin, S., Kamalapurkar, R., Johnson, M., Vamvoudakis, K., Lewis, F., Dixon, W.: A novel actor-critic-identifier architecture for approximate optimal control of uncertain nonlinear systems. Automatica 49(1), 82–92 (2013)

Vrabie, D., Lewis, F.: Adaptive dynamic programming for online solution of a zero-sum differential game. J. Control Theory Appl. 9(3), 353–360 (2011)

Vrabie, D., Lewis, F.: Integral reinforcement learning for online computation of feedback nash strategies of nonzero-sum differential games. In: Proceedings of Decision and Control, Atlanta, GA, USA, pp. 3066–3071 (2010)

Sutton, R., Barto, A.: Reinforcement Learning: An Introduction. A Bradford Book. The MIT Press, Cambridge (2005)

Wang, J., Xu, X., Liu, D., Sun, Z., Chen, Q.: Self-learning cruise control using Kernel-based least squares policy iteration. IEEE Trans. Control Syst. Technol. 22(3), 1078–1087 (2014)

Vamvoudakis, K., Vrabie, D., Lewis, F.: Online adaptive algorithm for optimal control with integral reinforcement learning. Int. J. Robust Nonlinear Control 24(17), 2686–2710 (2015)

Li, H., Liu, D., Wang, D.: Integral reinforcement learning for linear continuous-time zero-sum games with completely unknown dynamics. IEEE Trans. Autom. Sci. Eng. 11(3), 706–714 (2014)

Luo, B., Wu, H., Huang, T.: Off-policy reinforcement learning for \(H_{\infty }\) control design. IEEE Trans. Cybern. 45(1), 65–76 (2015)

Jiang, Y., Jiang, Z.: Computational adaptive optimal control for continuous-time linear systems with completely unknown dynamics. Automatica 48(10), 2699–2704 (2012)

Jiang, Y., Jiang, Z.: Robust adaptive dynamic programming for nonlinear control design. In: Proceedings of IEEE Conference on Decision and Control, Maui, Hawaii, USA, pp. 1896–1901 (2012)

Jiang, Y., Jiang, Z.: Robust adaptive dynamic programming and feedback stabilization of nonlinear systems. IEEE Trans. Neural Netw. Learn. Syst. 25(5), 882–893 (2014)

Beard, R., Saridis, G., Wen, J.: Galerkin approximations of the generalized Hamilton-Jacobi-Bellman equation. Automatica 33(12), 2159–2177 (1997)

Abu-Khalaf, M., Lewis, F.: Nearly optimal control laws for nonlinear systems with saturating actuators using a neural network HJB approach. Automatica 41(5), 779–791 (2005)

Saridis, G., Lee, C.: An approximation theory of optimal control for trainable manipulators. IEEE Trans. Syst. Man Cybern. Part B: Cybern. 9(3), 152–159 (1979)

Hornik, K., Stinchcombe, M., White, H., Auer, P.: Degree of approximation results for feedforward networks approximating unknown mappings and their derivatives. Neural Comput. 6(6), 1262–1275 (1994)

Lewis, F., Jagannathan, S., Yesildirek, A.: Neural Network Control of Robot Manipulators and Nonlinear Systems. Taylor and Francis, London (1999)

Liu, D., Wei, Q.: Policy iteration adaptive dynamic programming algorithm for discrete-time nonlinear systems. IEEE Trans. Neural Netw. Learn. Syst. 25(3), 621–634 (2014)

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

Copyright information

© 2019 Science Press, Beijing and Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Song, R., Wei, Q., Li, Q. (2019). Off-Policy Actor-Critic Structure for Optimal Control of Unknown Systems with Disturbances. In: Adaptive Dynamic Programming: Single and Multiple Controllers. Studies in Systems, Decision and Control, vol 166. Springer, Singapore. https://doi.org/10.1007/978-981-13-1712-5_9

Download citation

DOI: https://doi.org/10.1007/978-981-13-1712-5_9

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-13-1711-8

Online ISBN: 978-981-13-1712-5

eBook Packages: Intelligent Technologies and RoboticsIntelligent Technologies and Robotics (R0)