Abstract

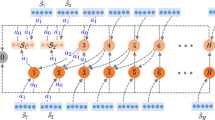

We consider the learning problem under an online Markov decision process (MDP), which is aimed at learning the time-dependent decision-making policy of an agent that minimizes the regret — the difference from the best fixed policy. The difficulty of online MDP learning is that the reward function changes over time. In this paper, we show that a simple online policy gradient algorithm achieves regret \(O(\sqrt{T})\) for T steps under a certain concavity assumption and O(logT) under a strong concavity assumption. To the best of our knowledge, this is the first work to give an online MDP algorithm that can handle continuous state, action, and parameter spaces with guarantee. We also illustrate the behavior of the online policy gradient method through experiments.

Chapter PDF

Similar content being viewed by others

References

Even-Dar, E., Kakade, S.M., Mansour, Y.: Experts in a Markov Decision Process. In: Advances in Neural Information Processing System 17, pp. 401–408. MIT Press, Cambridge (2005)

Even-Dar, E., Karade, S.M., Mansour, Y.: Online Markov Decision Processes. Mathematics of Operations Research 34(3), 726–736 (2009)

Neu, G., György, A., Szepesvári, C., Antos, A.: Online Markov Decision Processes under Bandit Feedback. In: Advances in Neural Information Processing Systems 23, pp. 1804–1812 (2010)

Neu, G., György, A., Szepesvári, C.: The Online Loop-free Stochastic Shortest-path Problem. In: Conference on Learning Theory, pp. 231–243 (2010)

Peter, J., Schaal, S.: Policy Gradient Methods for Robotics. In: Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (2006)

Sutton, R.S., Barto, A.G.: Reinforcement Learning: An Introduction. MIT Press (1998)

Williams, R.J.: Simple Statistical Gradient-following Algorithms for Connetionist Reinforcement Learning. Machine Learning 8(3-4), 229–256 (1992)

Yu, J.Y., Mannor, S., Shimkin, N.: Markov Decision Processes with Arbitrary Reward Processes. Mathematics of Operations Research 34(3), 737–757 (2009)

Zimin, A., Neu, G.: Online Learning in Episodic Markovian Decision Processes by Relative Entropy Policy Search. In: Advances in Neural Information Processing Systems 26, pp. 1583–1591 (2013)

Zinkevich, M.: Online Convex Programming and Generalized Infinitesimal Gradient Ascent. In: International Conference on Machine Learning, pp. 928–936. AAAI Press (2003)

Abbasi-Yadkori, Y., Bartlett, P., Kanade, V., Seldin, Y., Szepesvári, C.: Online Learning in Markov Decision Processes with Adversarially Chosen Transition Probability Distributions. In: Advances in Neural Information Processing Systems 26 (2013)

Hazan, E., Agarwal, A., Kale, S.: Logarithmic Regret Algorithms for Online Convex Optimization. Machine Learning 69, 169–192 (2007)

Author information

Authors and Affiliations

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2014 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Ma, Y., Zhao, T., Hatano, K., Sugiyama, M. (2014). An Online Policy Gradient Algorithm for Markov Decision Processes with Continuous States and Actions. In: Calders, T., Esposito, F., Hüllermeier, E., Meo, R. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2014. Lecture Notes in Computer Science(), vol 8725. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-662-44851-9_23

Download citation

DOI: https://doi.org/10.1007/978-3-662-44851-9_23

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-662-44850-2

Online ISBN: 978-3-662-44851-9

eBook Packages: Computer ScienceComputer Science (R0)