Abstract

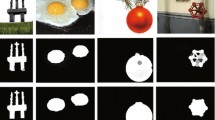

In this paper, we exploit color contrast and color distribution to obtain high-quality saliency maps. Our unified framework consists of superpixel pre-segmentation, color contrast and color distribution computation, combination, final refinement, and object segmentation. For the color contrast saliency computation, we combine two color systems and introduce a distribution prior, applied before saliency smoothing, that selects the correct color components. In addition, we propose a novel saliency smoothing procedure that operates on superpixel regions and is carried out in color space. This step highlights the whole object evenly, contributing to high-quality color contrast saliency maps. Finally, a new refinement approach eliminates artifacts and recovers unconnected parts in the combined saliency maps. In visual comparison, our method produces higher-quality saliency maps that emphasize the whole object while suppressing background clutter. Both qualitative and quantitative experiments show that our approach outperforms 8 state-of-the-art methods, achieving the highest precision rate of 96% (a 3% improvement over the previous best) when evaluated on one of the most popular data sets [1]. Our saliency maps also enable excellent content-aware image resizing.
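To make the two cues concrete, the following is a minimal sketch of how a color contrast term and a color distribution term might be computed and combined over superpixel regions. It is not the formulation of this paper: the contrast term follows the general idea of global region contrast [Cheng et al.], the distribution term follows the spatial-variance idea of saliency filters [Perazzi et al.], and the function name `region_saliency`, the parameters `sigma_c` and `k`, and the exponential combination rule are all illustrative assumptions. Regions are assumed to be given as mean colors, centroids, and pixel counts; the two-color-system selection, smoothing, and refinement steps are omitted.

```python
import numpy as np

def normalize(x):
    """Rescale to [0, 1]; a constant input maps to all zeros."""
    span = x.max() - x.min()
    return (x - x.min()) / span if span > 0 else np.zeros_like(x)

def region_saliency(colors, positions, sizes, sigma_c=0.2, k=3.0):
    """Hypothetical per-region saliency from contrast and distribution.

    colors:    (n, 3) mean color of each superpixel, values in [0, 1]
    positions: (n, 2) centroid of each superpixel, values in [0, 1]
    sizes:     (n,)   pixel count of each superpixel
    sigma_c, k: assumed tuning parameters, not taken from the paper
    """
    colors = np.asarray(colors, dtype=float)
    positions = np.asarray(positions, dtype=float)
    weights = np.asarray(sizes, dtype=float)
    weights = weights / weights.sum()

    # Pairwise color distances between all regions, shape (n, n).
    color_dist = np.linalg.norm(colors[:, None, :] - colors[None, :, :], axis=2)

    # Color contrast: size-weighted distance to every other region.
    contrast = color_dist @ weights

    # Color distribution: spatial spread of similarly colored regions.
    similarity = np.exp(-color_dist**2 / (2 * sigma_c**2)) * weights[None, :]
    similarity /= similarity.sum(axis=1, keepdims=True)
    mean_pos = similarity @ positions
    sq_dist = ((positions[None, :, :] - mean_pos[:, None, :]) ** 2).sum(axis=2)
    spread = (similarity * sq_dist).sum(axis=1)

    # Assumed combination: high contrast and compact distribution are salient.
    return normalize(contrast) * np.exp(-k * normalize(spread))

# Toy scene: four blue background regions in the corners, one red region
# in the center.
colors = [[0.1, 0.1, 0.8]] * 4 + [[0.9, 0.1, 0.1]]
positions = [[0.1, 0.1], [0.9, 0.1], [0.1, 0.9], [0.9, 0.9], [0.5, 0.5]]
sizes = [100] * 5
saliency = region_saliency(colors, positions, sizes)
print(saliency)
```

In this toy scene the central red region has both the largest contrast and the smallest spatial spread, so it receives the highest score, while the widely scattered background regions are suppressed by both terms.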


References

Achanta, R., Hemami, S., Estrada, F., Süsstrunk, S.: Frequency-tuned salient region detection. In: CVPR (2009)

Rutishauser, U., Walther, D., Koch, C., Perona, P.: Is bottom-up attention useful for object recognition? In: CVPR (2004)

Han, J., Ngan, K., Li, M., Zhang, H.: Unsupervised extraction of visual attention objects in color images. IEEE TCSVT 16, 141–145 (2006)

Chen, T., Cheng, M., Tan, P., Shamir, A., Hu, S.: Sketch2photo: Internet image montage. ACM Trans. Graph. 28, 1–10 (2009)

Ding, Y., Jing, X., Yu, J.: Importance filtering for image retargeting. In: CVPR (2011)

Pritch, Y., Kav-Venaki, E., Peleg, S.: Shift-map image editing. In: ICCV (2009)

Grundmann, M., Kwatra, V., Han, M., Essa, I.: Discontinuous seam carving for video retargeting. In: CVPR (2010)

Wolf, L., Guttmann, M., Cohen-Or, D.: Non-homogeneous content driven video-retargeting. In: ICCV (2007)

Itti, L., Koch, C., Niebur, E.: A model of saliency-based visual attention for rapid scene analysis. IEEE TPAMI 20, 1254–1259 (1998)

Cheng, M., Zhang, G., Mitra, N., Huang, X., Hu, S.: Global contrast based salient region detection. In: CVPR (2011)

Perazzi, F., Krähenbühl, P., Pritch, Y., Hornung, A.: Saliency filters: Contrast based filtering for salient region detection. In: CVPR (2012)

Hou, X., Zhang, L.: Saliency detection: A spectral residual approach. In: CVPR (2007)

Zhai, Y., Shah, M.: Visual attention detection in video sequences using spatiotemporal cues. ACM Multimedia, 815–824 (2006)

Goferman, S., Zelnik-Manor, L., Tal, A.: Context-aware saliency detection. In: CVPR (2010)

Parkhurst, D., Law, K., Niebur, E.: Modeling the role of salience in the allocation of overt visual attention. Vision Res. 42, 107–123 (2002)

Einhäuser, W., König, P.: Does luminance-contrast contribute to a saliency map for overt visual attention? Eur. J. Neurosci. 17, 1089–1097 (2003)

Fergus, R., Perona, P., Zisserman, A.: Object class recognition by unsupervised scale-invariant learning. In: CVPR (2003)

Parikh, D., Zitnick, C.L., Chen, T.: Determining Patch Saliency Using Low-Level Context. In: Forsyth, D., Torr, P., Zisserman, A. (eds.) ECCV 2008, Part II. LNCS, vol. 5303, pp. 446–459. Springer, Heidelberg (2008)

Judd, T., Ehinger, K., Durand, F., Torralba, A.: Learning to predict where humans look. In: ICCV (2009)

Rother, C., Kolmogorov, V., Blake, A.: GrabCut: Interactive foreground extraction using iterated graph cuts. ACM Trans. Graph. 23, 309–314 (2004)

Liu, T., Yuan, Z., Sun, J., Wang, J., Zheng, N.: Learning to detect a salient object. IEEE TPAMI 33, 353–367 (2011)

Alexe, B., Deselaers, T., Ferrari, V.: What is an object? In: CVPR (2010)

Feng, J., Wei, Y., Tao, L., Zhang, C., Sun, J.: Salient object detection by composition. In: ICCV (2011)

Achanta, R., Shaji, A., Smith, K., Lucchi, A., Fua, P., Süsstrunk, S.: SLIC superpixels. Technical report (2010)

Borji, A., Itti, L.: Exploiting local and global patch rarities for saliency detection. In: CVPR (2012)

Christoudias, C., Georgescu, B., Meer, P.: Synergism in low level vision. In: ICPR (2002)

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Fu, K., Gong, C., Yang, J., Zhou, Y. (2013). Salient Object Detection via Color Contrast and Color Distribution. In: Lee, K.M., Matsushita, Y., Rehg, J.M., Hu, Z. (eds) Computer Vision – ACCV 2012. ACCV 2012. Lecture Notes in Computer Science, vol 7724. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-37331-2_9

DOI: https://doi.org/10.1007/978-3-642-37331-2_9

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-37330-5

Online ISBN: 978-3-642-37331-2

eBook Packages: Computer Science, Computer Science (R0)