Abstract

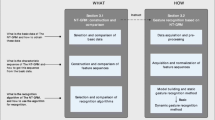

This paper proposes a novel approach to multi-modal gesture recognition using skeletal joints and a motion trail model. The approach comprises two modules: spotting and recognition. In the spotting module, a continuous gesture sequence is segmented into individual gesture intervals based on hand joint positions within a sliding window. In the recognition module, three models are combined to classify each gesture interval into one gesture category. For the skeletal model, Hidden Markov Models (HMM) and Support Vector Machines (SVM) are adopted to classify skeleton features. For depth maps and user masks, we employ the 2D Motion Trail Model (2DMTM) for gesture representation to capture motion region information; an SVM then classifies Pyramid Histograms of Oriented Gradient (PHOG) features extracted from the 2DMTM. These three models are complementary to each other. Finally, a fusion scheme incorporates the probability weights of each classifier for gesture recognition. The proposed approach is evaluated on the 2014 ChaLearn Multi-modal Gesture Recognition Challenge dataset. Experimental results demonstrate that the combined models outperform single-modal approaches, and that the recognition module performs effectively on user-independent gesture recognition.
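The fusion step can be illustrated with a minimal sketch. The abstract does not give the exact fusion rule, so the code below assumes a simple weighted sum of per-classifier class probabilities followed by renormalization; the function names `fuse_probabilities` and `predict`, the weights, and the probability values are all hypothetical and chosen only for illustration.

```python
from typing import List, Sequence


def fuse_probabilities(probs: Sequence[Sequence[float]],
                       weights: Sequence[float]) -> List[float]:
    """Weighted-sum late fusion (an assumed rule, not the paper's exact scheme).

    probs   -- one probability vector per classifier, all of equal length
    weights -- one non-negative weight per classifier
    """
    if not probs or len(probs) != len(weights):
        raise ValueError("need one weight per classifier")
    n_classes = len(probs[0])
    if any(len(p) != n_classes for p in probs):
        raise ValueError("all probability vectors must have the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")

    # Accumulate the weighted contribution of each classifier per class.
    fused = [0.0] * n_classes
    for p, w in zip(probs, weights):
        for k, p_k in enumerate(p):
            fused[k] += (w / total_weight) * p_k

    # Renormalize so the fused scores form a probability distribution.
    norm = sum(fused)
    if norm <= 0:
        raise ValueError("fused scores sum to zero")
    return [f / norm for f in fused]


def predict(probs: Sequence[Sequence[float]],
            weights: Sequence[float]) -> int:
    """Return the index of the class with the highest fused probability."""
    fused = fuse_probabilities(probs, weights)
    return max(range(len(fused)), key=fused.__getitem__)


# Hypothetical outputs for one gesture interval over three gesture classes.
hmm_skeleton = [0.6, 0.3, 0.1]   # HMM on skeleton features
svm_skeleton = [0.2, 0.5, 0.3]   # SVM on skeleton features
svm_phog = [0.1, 0.6, 0.3]       # SVM on PHOG features from 2DMTM
weights = [0.3, 0.3, 0.4]        # illustrative classifier weights

fused = fuse_probabilities([hmm_skeleton, svm_skeleton, svm_phog], weights)
label = predict([hmm_skeleton, svm_skeleton, svm_phog], weights)
```

In this toy case the HMM alone would favor class 0, while the two SVMs favor class 1; the fused decision follows the weighted majority, which is one way the complementary models can correct each other.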

Copyright information

© 2015 Springer International Publishing Switzerland

About this paper

Cite this paper

Liang, B., Zheng, L. (2015). Multi-modal Gesture Recognition Using Skeletal Joints and Motion Trail Model. In: Agapito, L., Bronstein, M., Rother, C. (eds) Computer Vision - ECCV 2014 Workshops. ECCV 2014. Lecture Notes in Computer Science, vol 8925. Springer, Cham. https://doi.org/10.1007/978-3-319-16178-5_44

DOI: https://doi.org/10.1007/978-3-319-16178-5_44

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-16177-8

Online ISBN: 978-3-319-16178-5

eBook Packages: Computer Science, Computer Science (R0)