Abstract

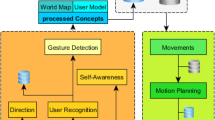

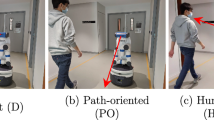

Various studies have shown that human visual attention is generally attracted by motion in the field of view. To embody this kind of social behavior in a robot, its gaze should focus on key points in its environment, such as moving objects or humans. In this paper, we develop a natural social attention system and explore how people perceive a robot while interacting with it in three different situations: one where the robot's gaze behavior is entirely random, one where its gaze is fixed on the person in the interaction, and one where its gaze behavior adapts to the motion-based environmental context. We conducted an online survey and an on-site experiment with the Meka robot to evaluate people's perception of these three types of gaze. Our results show that motion-oriented gaze can help make the robot more engaging and more natural to people.

Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Sorostinean, M., Ferland, F., Dang, THH., Tapus, A. (2014). Motion-Oriented Attention for a Social Gaze Robot Behavior. In: Beetz, M., Johnston, B., Williams, MA. (eds) Social Robotics. ICSR 2014. Lecture Notes in Computer Science, vol 8755. Springer, Cham. https://doi.org/10.1007/978-3-319-11973-1_32

DOI: https://doi.org/10.1007/978-3-319-11973-1_32

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-11972-4

Online ISBN: 978-3-319-11973-1

eBook Packages: Computer Science, Computer Science (R0)