Abstract

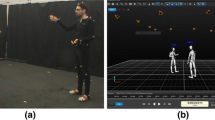

In this paper we present a new multi-modal dataset of spontaneous three-way human interactions. Participants were recorded in an unconstrained environment at various locations during a sequence of debates held in a Skype-style video conference arrangement. An additional depth modality was introduced, which permitted the capture of 3D information in addition to the video and audio signals. The dataset consists of 16 participants and is subdivided into 6 unique sections. The dataset was manually annotated on a continuous scale across 5 affective dimensions: arousal, valence, agreement, content and interest. The annotation was performed by three human annotators, with the ensemble average calculated for use in the dataset. The corpus enables the analysis of human affect during conversations in a real-life scenario. We first briefly review existing affect datasets and the methodologies related to affect dataset construction, then detail how our unique dataset was constructed.
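As an illustration of the ensemble averaging mentioned above, the following is a minimal sketch that assumes each annotator's trace has already been resampled to a common set of time steps. The function name `ensemble_average`, the variable names, and the sample values are hypothetical; none of them come from the dataset or its tooling, and the authors' actual procedure may differ.

```python
from statistics import fmean


def ensemble_average(traces):
    """Average several annotators' traces sample by sample.

    traces: list of equal-length lists of floats, one list per annotator.
    Assumes the traces are already time-aligned (an assumption of this sketch).
    """
    if not traces:
        raise ValueError("need at least one trace")
    length = len(traces[0])
    if any(len(trace) != length for trace in traces):
        raise ValueError("all traces must have the same length")
    # zip(*traces) groups the ratings given at the same time step.
    return [fmean(samples) for samples in zip(*traces)]


# Hypothetical arousal ratings from three annotators at four time steps.
annotator_a = [0.0, 0.5, 1.0, 0.5]
annotator_b = [0.0, 0.25, 0.5, 0.5]
annotator_c = [0.0, 0.75, 0.0, 0.5]

arousal = ensemble_average([annotator_a, annotator_b, annotator_c])
```

The same function could be applied separately to each of the five affective dimensions, giving one averaged trace per dimension.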

Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Wei, H., Monaghan, D.S., O’Connor, N.E., Scanlon, P. (2014). A New Multi-modal Dataset for Human Affect Analysis. In: Park, H.S., Salah, A.A., Lee, Y.J., Morency, LP., Sheikh, Y., Cucchiara, R. (eds) Human Behavior Understanding. HBU 2014. Lecture Notes in Computer Science, vol 8749. Springer, Cham. https://doi.org/10.1007/978-3-319-11839-0_4

DOI: https://doi.org/10.1007/978-3-319-11839-0_4

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-11838-3

Online ISBN: 978-3-319-11839-0

eBook Packages: Computer Science, Computer Science (R0)