Abstract

Previously, we proposed a research program to analyze spatial aspects of the science system which we called “spatial scientometrics” (Frenken, Hardeman, & Hoekman, 2009). The aim of this chapter is to systematically review recent (post-2008) contributions to spatial scientometrics on the basis of a standardized literature search. We focus our review on contributions addressing spatial aspects of scholarly impact, particularly, the spatial distribution of publication and citation impact, and the effect of spatial biases in collaboration and mobility on citation impact. We also discuss recent dedicated tools and methods for analysis and visualization of spatial scientometric data. We end with reflections about future research avenues.

1 Introduction

One of the main trends in scientometrics has been the increased attention to spatial aspects. Parallel to a broader interest in the “geography of science” in fields such as history of science, science and technology studies, human geography and economic geography (Barnes, 2001; Finnegan, 2008; Frenken, 2010; Livingstone, 2010; Meusburger, Livingstone, & Jöns, 2010; Shapin, 1998), the field of scientometrics has witnessed a rapid increase in studies using spatial data. In an earlier review, Frenken et al. (2009, p. 222) labelled these studies as “spatial scientometrics” and defined this subfield as “quantitative science studies that explicitly address spatial aspects of scientific research activities.”

The chapter provides an update of the previous review on spatial scientometrics by Frenken et al. (2009), specifically focusing on contributions from the post-2008 period that address spatial aspects of scholarly impact. We will do so by reviewing contributions that describe the spatial distribution of publication and citation impact, and the effect of spatial biases in collaboration and mobility on citation impact, as two spatial aspects of scholarly impact. We then discuss recent efforts to develop tools and methods that visualize scholarly impact using spatial scientometric data. At the end of the chapter, we look ahead at promising future research avenues.

2 Selection of Reviewed Papers

2.1 Scope of Review

We conducted a systematic review of contributions to spatial scientometrics that focused on scholarly impact by considering original articles published since 2008. Following the definition of spatial scientometrics introduced by Frenken et al. (2009), we only included empirical papers that made use of spatial information as it can be retrieved from publication data. Moreover, we paid special attention to three bodies of research within the spatial scientometrics literature. First, studies were eligible when they either describe or explain the distribution of publication or citation output across spatial units (e.g., cities, countries, world regions). Second, studies were considered when they explain scholarly impact of articles based on the spatial organization of research activities (e.g., international collaboration). We refer to this body of research as “geography of citation impact.” Third, the review considered studies that report on tools and methods to visualize the publication and citation output of spatial units on geographic maps.

Given the focus of this book on scholarly impact, we chose not to provide a comprehensive overview of all spatial scientometrics studies published since 2008. Hence we did not consider contributions focused on the spatial organization of research collaboration or the localized emergence of research fields. For notable advancements in these subfields of spatial scientometrics we refer amongst others to Hoekman, Frenken, and Tijssen (2010); Waltman, Tijssen, and Eck (2011); Leydesdorff and Rafols (2011); Boschma, Heimeriks, and Balland (2014).

2.2 Search Procedure

The procedure to select papers for review followed three steps. First, we retrieved all papers that were citing the 2009 spatial scientometrics review paper (Frenken et al., 2009) either in Thomson Reuters Web of Science (from now on: WoS) or Elsevier Scopus (from now on: Scopus). Second, we queried WoS to get a comprehensive overview of all spatial scientometric articles published since 2008. The search was limited to WoS subject categories “information science & library science,” “geography,” “planning & development,” and “multidisciplinary sciences.” The following search query consisting of a combination of spatial and scientometric search terms was performed on March 1, 2014:

TS = (spatial* OR “space” OR spatio* OR geograph* OR region* OR “cities” OR “city” OR international* OR countr* OR “proximity” OR “distance” OR “mobility”) AND TS = (“publications” OR “co-publications” OR “articles” OR “papers” OR “web of science” OR “web of knowledge” OR “science citation index” OR “scopus” OR scientometr* OR bibliometr* OR citation*)

Third, a number of additional articles were included after full-text reading of key contributions and evaluation of the cited and citing articles therein.

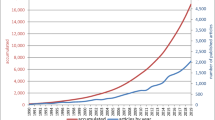

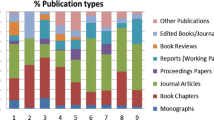

A total of 1,841 articles met the search criteria of the first two steps. Titles and abstracts of all articles were evaluated manually to exclude articles that (1) did not make use of publication data (n = 1,082) or (2) did not report on the spatial organization of research (n = 405). The remaining 354 publications were manually evaluated and selected for review when they focused on one of the three research topics on scholarly impact mentioned above. Subsequently, articles not identified in the WoS search, but cited in or citing key contributions, were added.
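The screening counts reported above can be checked with a few lines of arithmetic. The snippet below is only a consistency check; the variable names are illustrative labels and do not come from the original study.

```python
# Counts as reported in the text; variable names are illustrative labels.
retrieved = 1841              # articles meeting the criteria of steps 1 and 2
excluded_no_pub_data = 1082   # exclusion criterion (1): no publication data
excluded_not_spatial = 405    # exclusion criterion (2): no spatial organization

# Articles left for manual evaluation after both exclusions.
remaining = retrieved - excluded_no_pub_data - excluded_not_spatial
print(remaining)
```

The result matches the 354 publications that were evaluated in full.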

3 Review

We organize our review in three topics. First, we focus on contributions that analyze the spatial distribution of publication and citation impact across world regions, countries and subnational regions. We subsequently pay attention to the geography of citation impact and provide an exhaustive review of all contributions that have analyzed the effect of the spatial organization of research activities on scholarly impact. In a third section we focus on the development of tools and methods to support the analysis and visualization of spatial aspects of science.

3.1 Spatial Distribution of Publication and Citation Impact

The spatial distribution of research activities continues to be a topic of major interest for academic scholars and policy makers alike. In Nature News, Van Noorden (2010) discussed recent developments in the field by focusing on the strategies of urban regions to be successful in the production of high-quality scientific research. Another recent initiative that received attention is the Living Science initiative (http://livingscience.inn.ac/) which provides real-time geographic maps of the publication activity of more than 100,000 scientists.

The interest in the spatial distribution of research activities is also noted in our systematic search for spatial scientometric contributions in this sub-field. We found more than 200 papers that analyzed distributions of bibliometric indicators such as publication or citation counts across countries and regions. Space does not allow us to review all these papers. What follows are a number of outstanding papers on the topic organized according to the spatial level of analysis.

3.1.1 World Regions and Countries

Two debates have dominated recent analyses of the distribution of research activities over world regions and countries. First, it is well known that for many decades scientific research activities were disproportionally concentrated in a small number of countries, with the USA and the UK consistently ranking on top in terms of absolute publication output and citation impact. Yet, in recent years this “hegemony” has been challenged by a number of emerging economies such as China, India, and Brazil.

In particular the report of the Royal Society in 2011, “Knowledge, Networks and Nations” emphasizes the rapid emergence of new scientific powerhouses. Using data from Scopus covering the period 1996–2008, the report concludes that “Meanwhile, China has increased its publications to the extent that it is now the second highest producer of research output in the world. India has replaced the Russian Federation in the top ten, climbing from 13th in 1996 to tenth between 2004 and 2008” (Royal Society, 2011, p. 18). Based on a linear extrapolation of these observations, the report also predicts that China is expected to surpass the USA in terms of publication output before 2020. The prediction was widely covered in the media, yet also criticized on both substantive (Jacsó, 2011) and empirical grounds (Leydesdorff, 2012). For instance, Leydesdorff (2012) replicated the analysis using the WoS database for the period 2000–2010 and finds considerable uncertainty around the prediction estimates, suggesting that the USA will be the global leader in publication output for at least another decade. Moreover, Moiwo and Tao (2013) show that China's publication counts, when normalized for overall population, population with tertiary education and GDP, are relatively low, while smaller countries such as Switzerland, The Netherlands and Scandinavian countries are world leaders on these indicators.
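To make concrete what a linear extrapolation of this kind involves, the following minimal sketch fits a straight line to each of two publication series and solves for the year in which the lines cross. It is not the procedure of the Royal Society report or of Leydesdorff (2012); the function names and all numbers are hypothetical and chosen only so that the result is easy to verify by hand.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b * x; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


def crossing_year(line_1, line_2):
    """Year at which two fitted lines intersect, or None if they are parallel."""
    a1, b1 = line_1
    a2, b2 = line_2
    if b1 == b2:
        return None
    return (a2 - a1) / (b1 - b2)


# Hypothetical yearly publication counts (in thousands), not real data.
years = [2000, 2001, 2002, 2003]
leader = [100, 102, 104, 106]      # slow linear growth
challenger = [40, 50, 60, 70]      # fast linear growth

year = crossing_year(fit_line(years, leader), fit_line(years, challenger))
print(year)
```

A point estimate like this says nothing about its own uncertainty, which is the issue raised in the replication discussed above: small changes in the fitted slopes can shift the crossing year considerably.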

Huang, Chang, and Chen (2012) also analyze changes in the spatial concentration of national publication and citation output for the period 1981–2008 using several measures such as the Gini coefficient and Herfindahl index. Using National Science Indicators data derived from WoS, they show that publication activity continues to be concentrated in a small number of countries including the USA, UK, Germany, and France. Yet, their analysis also reveals that the degree of concentration is gradually decreasing over time due to the rapid growth of publication output in China and other Asian countries such as Taiwan and Korea. What is more, when the USA is removed from the analysis, concentration indicators drop significantly and a pluralist map of publications and citations becomes visible.
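For readers unfamiliar with the two concentration measures, the sketch below implements their textbook definitions: the Herfindahl index as the sum of squared output shares, and the Gini coefficient from the ranked outputs. Huang et al. (2012) may use different variants or normalizations; the function names and the country outputs are hypothetical.

```python
def herfindahl(counts):
    """Sum of squared shares; 1/n for equal shares, 1.0 for full concentration."""
    total = sum(counts)
    return sum((c / total) ** 2 for c in counts)


def gini(counts):
    """Gini coefficient from sorted values; 0.0 means perfectly equal shares."""
    xs = sorted(counts)
    n = len(xs)
    total = sum(xs)
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Hypothetical publication outputs of five countries; the first is dominant.
outputs = [300, 80, 70, 60, 50]
rest = outputs[1:]  # same set with the dominant country removed

print(herfindahl(outputs), herfindahl(rest))
print(gini(outputs), gini(rest))
```

With these made-up numbers both measures drop once the dominant country is left out, which mirrors the kind of comparison described above for the USA.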

A second issue concerning the spatial distribution of research activities that has received considerable interest in recent years is the debate about the European Paradox. For a long time it was assumed that European countries were global leaders in terms of impact as measured by citation counts, but lagged behind in converting this strength into innovation, economic growth and employment (Dosi, Llerena, & Labini, 2006). The idea originated from the European Commission’s White Paper on Growth, Competitiveness and Employment which stated that “the greatest weakness of Europe’s research base however, is the comparatively limited capacity to convert scientific breakthroughs and technological achievements into industrial and commercial successes” (Commission of the European Communities, 1993, p. 87). This assumption about European excellence became a major pillar of the Lisbon Agenda and creation of a European Research Area.

To scrutinize the conjecture of Europe’s leading role in citation output, Albarrán, Ortuño, and Ruiz-Castillo (2011a) compared the citation distributions of 3.6 million articles published in 22 scientific fields between 1998 and 2002. The contributions of the EU, USA and Rest of the World are partitioned to obtain two novel indicators of the distribution of the most and least cited papers as further explained in a twin paper (Albarrán, Ortuño, & Ruiz-Castillo, 2011b). They observe that the USA “performs dramatically better than the EU and the RW on both indicators in all scientific fields” (Albarrán, Ortuño, & Ruiz-Castillo, 2011a, p. 122), especially when considering the upper part of the distribution. The results are confirmed using mean citation rates instead of citation distributions, although the gap between the USA and Europe is smaller in this case (Albarrán, Ortuño, & Ruiz-Castillo, 2011c). Herranz and Ruiz-Castillo (2013) further refine the analysis by comparing the citation performance of the USA and EU in 219 subfields instead of 22 general scientific fields. They find that on this fine-grained level the USA outperforms the EU in 189 out of 219 subfields. They do not find a particular cluster of subfields in which the EU outperforms the USA. On the basis of this finding they conclude that the idea of the European Paradox can definitely be put to rest.

In addition to studies describing the spatial distribution of research activities across countries and world regions, a number of studies have focused on explaining these distributions using multivariate models with national publication output as the dependent variable. For instance, Pasgaard and Strange (2013) and Huffman et al. (2013) explain national distributions of publication output in climate change research and cardiovascular research, while Meo, Al Masri, Usmani, Memon, and Zaidi (2013) build a similar model to explain the overall publication count of a set of Asian countries. All three studies observe significant positive effects of Research and Development (R&D) investments, Gross Domestic Product (GDP) and population on publication output. Pasgaard and Strange (2013) and Huffman et al. (2013) also find that field-related variables such as burden of disease in the case of cardiovascular research and CO2 emissions in the case of climate change research explain national publication output.

Focusing specifically on European countries, Almeida, Pais, and Formosinho (2009) explain publication output of countries on the basis of specialization patterns. Using principal component analysis on national publication and citation distributions of countries by research fields, they find that countries located in close physical proximity to each other also show similar specialization patterns. This suggests that these countries profit from each other through knowledge spillovers. We return to this issue in the next section where we discuss a number of papers that explain publication output at the sub-national instead of national level.

3.1.2 Regions and Cities

The interest in describing the spatial distribution of scientific output at the level of sub-national regions and cities has been less than in analysis of the output of countries or aggregates of countries. This is likely due to the fact that larger data efforts are required to accurately classify addresses from scientific papers into urban or regional categories, as well as to the fact that science policy is mainly organized at national and transnational levels. Nevertheless, the number of studies addressing the urban and regional scales has been rapidly expanding after 2008 and this trend is likely to continue given the increased availability of tools and methods to conduct analysis at fine-grained spatial levels (see Sect. 3.3).

Matthiessen and Schwarz (2010) study the 100 largest cities in the world in terms of publication and citation output for two periods: 1996–1998 and 2004–2006. Even during this short period they observe a rapid rise of cities in Southeast Asia as major nodes in the global science system when considering either publication or citation impact. They also note the rise of Australian, South American, and Eastern European cities. These patterns all indicate that the traditional dominance of cities in North America and Western Europe is weakening, although some of these cities remain major world-city hubs such as the San Francisco Bay Area, New York, London-Cambridge, and Amsterdam.

Cho, Hu, and Liu (2010) analyze the regional development of the Chinese science system in great detail for the period 2000–2006. They observe that the regional distribution of output and citations is highly skewed with coastal regions dominating. However, mainland regions have succeeded in quickly raising their scientific production, but still have low citation impact, exceptions aside. Tang and Shapira (2010) find very similar patterns for the specific field of nanotechnology.

An interesting body of research has analyzed whether publication and citation output within countries is concentrating or de-concentrating over time. A comprehensive study by Grossetti et al. (2013) covering WoS data for the period 1987–2007 finds that in most countries in the world, the urban science system is de-concentrating, indicating that the largest cities are undergoing a relative decline in a country’s scientific output (see also an earlier study on five countries by Grossetti, Milard, and Losego (2009)). The same trend is observed by looking at citation instead of publication output. Two other studies on France, with a specific focus on small and medium-sized cities (Levy & Jégou, 2013; Levy, Sibertin-Blanc, & Jégou, 2013), and a study on Spain (Morillo & De Filippo, 2009), support this conclusion. The research results are significant in debunking the popular policy notion that the spatial concentration of resources and people supports scientific excellence.

Remarkably, the explanation of publication output at the regional level has received a great deal of attention in recent years. That is, a number of contributions address the question why certain regions generate more publication output than others. This body of research relies on a so-called knowledge production function framework where number of publications are considered as the output variables and research investment, amongst other variables, as input in the knowledge production system. Acosta, Coronado, Ferrándiz, and León (2014) look at the effect of public spending on regional publication output using Eurostat data on R&D spending in the Higher Education sector. They find a strong effect of public investment on regional publication output. Interestingly, this effect is strongest in less developed regions (“Objective 1 regions” in the European Union) when compared to more developed regions, meaning that an increase in budget has a higher payoff in less developed regions than in more developed regions. This result is in line with Hoekman, Scherngell, Frenken, and Tijssen (2013) who find that the effect of European Framework Program subsidies is larger in regions that publish less compared to regions that publish more.
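The knowledge production function idea can be illustrated with a single input. The sketch below assumes a log-linear (Cobb-Douglas type) relation between research spending and publication output, which is an assumption made here for illustration; the studies cited above use richer models with several inputs and controls. The function name and the regional data are hypothetical.

```python
import math


def estimate_elasticity(inputs, outputs):
    """Fit log(output) = intercept + slope * log(input) by least squares.

    The slope is the elasticity of output with respect to the input.
    """
    xs = [math.log(v) for v in inputs]
    ys = [math.log(v) for v in outputs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical regions: publications generated exactly as 2 * spending ** 0.8.
spending = [10, 20, 40, 80, 160]
publications = [2 * s ** 0.8 for s in spending]

intercept, elasticity = estimate_elasticity(spending, publications)
print(math.exp(intercept), elasticity)
```

Because the made-up data follow the assumed relation exactly, the fit recovers the scale factor and the elasticity used to generate them. Comparing elasticities estimated separately for groups of regions is one way to express findings such as a higher payoff of spending in less developed regions.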

Sebestyén and Varga (2013) also apply a knowledge production framework with a specific focus on the role of inter-regional collaboration networks and knowledge spillover effects between neighboring regions. They find that scientific output is dependent on embeddedness in national and international networks, while it is not supported by regional agglomeration of industry or publication activity in neighboring regions. They conclude that regional science policy should focus on networking with other regions domestically and internationally, rather than on local factors or regions in close physical proximity. Their results also confirm the results of an earlier study on Chinese regions which highlights the importance of spillovers stemming from international collaborations (Cho et al., 2010).

Finally, some papers analyze the impact of exogenous events on the publication output of regions or countries. Magnone (2012) studies the impact of the triple disaster in Japan (earthquake, tsunami and nuclear accident) on the Materials Science publication output in the cities of Sendai, Tsukuba and Kyoto (the latter being a “non-disaster situation” control). As expected, the author observes clear and consistent negative effects of the disaster on publication output in Sendai and Tsukuba, compared to Kyoto. Studies with similar research questions include Braun (2012) who studies the effect of war on mathematics research activity in Croatia; Miguel, Moya-Anegón, and Herrero-Solana (2010) scrutinizing the effect of the socio-economic crisis in Argentina on scientific output and impact, and Orduña-Malea, Ontalba-Ruipérez, and Serrano-Cobos (2010) focusing on the impact of 9/11 on international scientific collaboration in Middle Eastern countries.

3.2 Geography of Citation Impact

3.2.1 Collaboration

A topic which has received considerable attention is the effect of geography, particularly international collaboration, on the citation impact of articles. These geographical effects can be assessed at both the author level and the article level. Typically, studies use a multivariate regression method with the number of citations of a paper or article as the dependent variable, following an early study by Frenken, Hölzl, and Vor (2005). This research set-up makes it possible to control for many other factors that may affect citation impact, such as the number of authors and country effects (e.g., English speaking countries) when explaining citations to articles, and age and gender when explaining citations to individual scientists.

He (2009) finds for 1,860 papers written by 65 biomedical scientists in New Zealand that internationally co-authored papers indeed receive more citations than national collaborations, while controlling for many other factors. More interestingly, he also finds even higher citation impact of local collaborations within the same university when compared to international collaborations. This suggests that local collaboration, which is often not taken into account in the geography of citation impact, may have many more benefits than previously assumed.

The importance of local collaboration is confirmed by Lee, Brownstein, Mills, and Kohane (2010) who consider the effect of physical distance on citation impact by analyzing collaboration patterns between first and last authors that are both located at the Longwood campus of Harvard Medical School. They find that at this microscale, physical proximity in meters and within-building collaboration is positively related to citation impact. The authors do not, however, control for alternative factors that may explain these patterns such as specialization.

Frenken, Ponds, and Van Oort (2010) test the effects of international, national and regional collaboration for Dutch publications in life sciences and physical sciences derived from WoS. They show that research collaboration in the life sciences has a higher citation impact if organized at the regional level than at the national level, while the opposite is found for the physical sciences. In both fields the citation impact of international collaboration exceeds the citation impact of both national and regional research collaboration, in particular for collaborations with the USA. Sin (2011) compares the impact of international versus national collaboration in the field of Library and Information Sciences. In line with other studies, a positive effect for international collaboration is found, while no significant effect of national collaboration as compared to single authorships is observed.

One problem in interpreting the positive effect of international collaboration on citation impact is that this finding may signal that international collaboration results in higher research quality, but may equally reflect that the results of internationally coauthored papers diffuse from centers in multiple countries, as noted by Frenken et al. (2010). These two effects are not necessarily mutually exclusive. Lancho Barrantes, Bote, Vicente, Rodríguez, and de Moya Anegón (2012) try to disentangle the “quality” and “audience” effects by studying whether national biases on citation impact are larger in countries that produce many papers. They find that the “audience” effect is especially large in relatively small countries, while the quality effect of internationally co-authored papers seems to be a general property irrespective of country size.

Nomaler, Frenken, and Heimeriks (2013) do not look at the effect of international versus national collaboration, but at the effect of kilometric distance between collaborating authors. On the basis of all scientific papers published in 2000 and coauthored by authors from two or more European countries, they show that citation impact increases with the geographical distance between the collaborating countries. Interestingly, they also find a negative effect for EU countries, suggesting that collaborations with a partner outside the EU are more selective, and, hence, have higher quality.
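How a distance in kilometers between two collaborating locations can be obtained from coordinates is sketched below using the haversine (great-circle) formula. The text does not state how Nomaler et al. (2013) computed distance, so this is one common choice rather than their method; the function name, the mean Earth radius of 6,371 km and the approximate city coordinates are assumptions made here.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; an assumption of this sketch


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    # Clamp to 1.0 to guard against rounding slightly above the valid range.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, a)))


# Approximate coordinates, for illustration only.
amsterdam = (52.37, 4.90)
paris = (48.86, 2.35)
print(round(haversine_km(*amsterdam, *paris)))
```

In a regression set-up like the ones reviewed in this section, such a distance (often log-transformed) would enter as an explanatory variable alongside the usual controls.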

An interesting study by Didegah and Thelwall (2013a) looks at the effects of the geographical properties of references of nanotechnology papers. In particular, they test the hypothesis that papers with more references to “international” journals—defined as journals with more geographic dispersion of authors—have more citation impact. They indeed find this effect. Moreover, after controlling for the effect they no longer observe an effect of international collaboration on citation impact. However, in a related paper that studies the effect of 11 factors on citation impact, of which international collaboration is one, Didegah and Thelwall (2013b) do observe a positive effect of international collaboration on citation impact.

Finally, a study by Eisend and Schmidt (2014) is worth mentioning in this context. They study how the internationalization strategies of business research scholars affect their research performance in terms of citation impact. Their study is original in that they specifically look at how this effect is influenced by the knowledge resources of individual researchers. They find that international collaboration supports performance more if researchers lack language skills and knowledge of foreign markets. This indicates that international collaboration provides researchers with access to complementary skills. Collaboration also improves the performance of less experienced researchers with the advantage diminishing with increasing research experience.

A methodological challenge of studies that assess the effect of international collaboration on citation impact is self-selection bias. Indeed, one can expect that better scientists are more likely to engage in international collaboration. For example, Abramo, D’Angelo, and Solazzi (2011) find that Italian natural scientists who produce higher quality research tend to collaborate more internationally. The same result was found by Kato and Ando (2013) for chemists worldwide. To control for self-selection, they investigate whether the citation rate of international papers is higher than the citation rate of domestic papers, controlling for performance, that is, by looking at papers with at least one author in common. Importantly, they still find that international collaboration positively and significantly affects citation impact. Obviously, the issue of self-selection should be high on the agenda for future research.

3.2.2 Mobility

An emerging research topic in spatial scientometrics of scholarly impact concerns the question of whether internationally mobile researchers outperform other researchers in terms of productivity and citation impact. Although descriptive studies have noted a positive effect of international mobility on the citation impact of researchers (Sandström, 2009; Zubieta, 2009), it remains unclear whether higher performance is caused by international mobility (e.g., through the acquisition of new skills), or by self-selection (better scientists being more mobile).

In a recent study, Jonkers and Cruz-Castro (2013) explored this effect for a sample of Argentinian researchers with foreign work experience. When returning home, these researchers show a higher propensity to co-publish with their former host country than with other countries. These researchers also have a higher propensity to publish in high-impact journals as compared to their non-mobile peers, even when the mobile scientists do not publish with foreign researchers. Importantly, the study accounted for self-selection (better scientists being more mobile) by taking into account the early publication record of researchers as an explanatory variable for high-impact publications after their return to Argentina.

Another study by Trippl (2013) investigates the impact of internationally mobile star scientists on regional knowledge transfer. Here, the question is whether a region benefits from attracting renowned scientists from abroad. It was found that mobile star scientists do not differ in their regional knowledge transfer activities from non-mobile star scientists. However, mobile scientists have more interregional linkages with firms, which points to the importance of mobile scientists for complementing intraregional ties with interregional ones.

3.3 Tools and Methods

Besides empirical contributions to the field of spatial scientometrics, a growing group of scholars have focused on the development of tools and methods to support the analysis and visualization of spatial aspects of science. Following a more general interest in science mapping (see for instance: http://scimaps.org/maps/browse/) and a trend within the academic community to create open source analytical tools, most of the tools reviewed below are freely available for analysis and published alongside the publication material.

Leydesdorff and Persson (2010) provide a comprehensive review and user’s guide of several methods and software packages that were freely available to visualize research activities on geographic maps up to 2010. They focus specifically on the visualization of collaboration and citation networks that can be created on the basis of author-affiliate addresses on publications. In their review they cover, amongst others, the strengths and weaknesses of CiteSpace, Google Maps, Google Earth, GPS Visualizer and Pajek for visualization purposes. One particular strength of the paper is that it provides software to process author-affiliate addresses of publication data retrieved from Web of Science or Scopus for visualization on the city level. The software has been refined over the last years and can be found on: http://www.leydesdorff.net/software.htm.

Further to the visualization of research networks, Bornmann et al. (2011) focus on the geographic mapping of publication and citation counts of cities and regions. They extract all highly cited papers in a particular research field from Scopus, and develop a method to map “excellent cities and regions” using Google Maps. The percentile rank of a city as determined on the basis of its contribution to the total set of highly cited papers is visualized by plotting circles with different radii (frequency of highly cited papers) and colors (city rank) on a geographic map. The exact procedure including a user guide for this visualization tool is provided at: http://www.leydesdorff.net/mapping_excellence/index.htm.

A disadvantage of the approach in Bornmann et al. (2011) is that it visualizes the absolute number of highly cited papers of a particular city without normalizing for the total number of publications in that city. Bornmann and Leydesdorff (2011) provide such a methodological approach using a statistical z test that compares observed proportions of highly cited papers of a particular city with expected proportions. “Excellence” can then be defined as cities in which “authors are located who publish a statistically significant higher number of highly cited papers than can be expected for these cities” (Bornmann & Leydesdorff, 2011, p. 1954). The authors use methods similar to those in Bornmann et al. (2011) to create geographic maps of excellence for three research fields: physics, chemistry and psychology. The maps confirm the added value of normalization as cities with high publication output do not necessarily have a disproportionate number of highly cited papers. Further methodological improvement to this method is provided by Bornmann and Leydesdorff (2012) who use the Integrated Impact Indicator (I3) as an alternative to normalized citation rates. Another improvement is that they correct observed citation rates for publication years.
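The logic of comparing an observed with an expected proportion of highly cited papers can be sketched with a one-sample z test for a proportion. The text only states that a z test is used, so the exact formulation in Bornmann and Leydesdorff (2011) may differ; the one-sample form, the function name, the expected share of 10% and the city counts below are assumptions made for illustration.

```python
import math


def proportion_z_test(highly_cited, total, expected_share):
    """One-sample z test: is the observed share above the expected share?

    Returns (z, one_sided_p), where the p-value is the upper-tail probability
    of the standard normal distribution.
    """
    observed_share = highly_cited / total
    std_error = math.sqrt(expected_share * (1 - expected_share) / total)
    z = (observed_share - expected_share) / std_error
    one_sided_p = 0.5 * math.erfc(z / math.sqrt(2))
    return z, one_sided_p


# Hypothetical city: 400 papers, 60 of them highly cited, 10% expected.
z, p = proportion_z_test(highly_cited=60, total=400, expected_share=0.10)
print(round(z, 2), p < 0.001)
```

In this made-up case the city's share of 15% lies more than three standard errors above the expected 10%, so it would count as significantly above expectation. A city with many more papers but a share close to 10% would not, which is the point of normalizing.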

Researchers using the above visualization approaches should be aware of a number of caveats that are extensively discussed in Bornmann et al. (2011). Visualization errors may occur due to, among other things, imprecise allocation of geo-coordinates or incomplete author-affiliate addresses. The resulting geographic maps should therefore always be carefully scrutinized manually.

Building on the abovementioned contributions, Bornmann, Stefaner, de Moya Anegón, and Mutz (2014a) introduce a novel web application (www.excellencemapping.net) that can visualize the performance of academic institutions on geographic maps. The web application visualizes field-specific excellence of academic institutions that frequently publish highly-cited papers. The underlying methodology is based on multilevel modeling that takes into account the data at the publication level (i.e., whether a particular paper belongs to the top 10 % most cited papers in a particular institution) as well as the academic institution level (how many papers of an institution belong to the overall top 10 % of most cited papers). Using this methodology, top performers per scientific field, that is, institutions that publish significantly more top-10 % papers than an “average” institution in that field, are visualized. Results are visualized by colored circles on the location of the respective institutions on a geographic map. The web application provides the possibility to select the circles for further information about the institutions. In Bornmann, Stefaner, de Moya Anegon, and Mutz (2014b) the web application is further enhanced by adding the possibility to control for the effect of covariates (such as the number of residents of a country in which an institution is located) on the performance of institutions. Using this method one can visualize the performance of institutions under the hypothetical situation that all institutions have the same value on the covariate in question. For instance, institutions can be visualized that perform very well once their relatively low national GDP is controlled for. In the coming years, further development of the scientific excellence tool is anticipated.

Bornmann and Waltman (2011) use a somewhat different approach to map regions of excellence based on heat maps. The visualization they propose uses density maps that can be created using the VOSviewer software for bibliometric mapping (Van Eck & Waltman, 2010). Step-by-step instructions for making these maps are provided at: http://www.ludowaltman.nl/density_map/. In short, the heat maps rely on kernel density estimations of the publication activity at geographic coordinates and a specification of a kernel width (in kilometers) for smoothing. Research excellence is then visualized for regions instead of individual cities, especially when clusters of cities with high impact publication activity are located in close proximity to each other. The created density maps reveal clusters of excellence running from Southern England, through the Netherlands/Belgium and Western Germany, to Northern Switzerland.
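
The role of the kernel width can be shown with a minimal sketch of a kernel density estimate over geographic coordinates. The Gaussian kernel, the great-circle (haversine) distance and the function names below are assumptions chosen for illustration; the kernel actually implemented in VOSviewer may differ.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2.0) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2.0) ** 2)
    # Clamp to 1.0 to guard against rounding slightly above the asin domain.
    return 2.0 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def density_at(lat, lon, cities, kernel_width_km):
    """Unnormalized Gaussian kernel density at one map location.

    cities: iterable of (lat, lon, weight) tuples, where the weight could be
    a city's number of highly cited papers.
    """
    if kernel_width_km <= 0:
        raise ValueError("kernel_width_km must be positive")
    total = 0.0
    for city_lat, city_lon, weight in cities:
        distance = haversine_km(lat, lon, city_lat, city_lon)
        total += weight * math.exp(-0.5 * (distance / kernel_width_km) ** 2)
    return total


# Hypothetical cities about 111 km apart, each with an illustrative weight.
cities = [(0.0, 0.0, 5.0), (0.0, 1.0, 5.0)]
midpoint_density = density_at(0.0, 0.5, cities, kernel_width_km=100.0)
```

With a wider kernel, neighboring cities blend into a single hot region; with a narrow kernel, they remain separate peaks. This is why the choice of kernel width determines whether excellence appears at the level of regions or of individual cities.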

An entirely different approach to visualizing bibliometric data is explored by Persson and Ellegård (2012). Inspired by the theory of time-geography, which was initially proposed by Torsten Hägerstrand in 1955, they reconstruct time-space paths of the scientific publications citing the work of Torsten Hägerstrand. Publications are plotted on a two-dimensional graph with time (years) on the vertical axis and space (longitude) on the horizontal axis; paths between time-space locations indicate citations between articles.

Conclusions and Recommendations

Clearly, the interest in analyzing spatial aspects of scientific activity using spatial scientometric data is on the rise. In this review we specifically looked at contributions that focused on scholarly impact and found a large number of such papers. While previously most studies focused solely on national levels, many scientometrics contributions now take into account regional and urban levels. What is more, in analyzing relational data, kilometric distance is increasingly taken into account as one of the determinants of scholarly impact. The research design of spatial scientometric papers is also more elaborate than for earlier papers, with theory-driven hypotheses and increasingly a multivariate regression set-up. Progress has also been made in the automatic generation of data as well as in visualization of this data on geographic maps. Having said this, we identify below some research avenues that fill some existing research gaps in theory, topics, methodology and data sources.

Little theorizing: As noted, many studies start from hypotheses rather than from data. Yet, most often, hypotheses are derived from general theoretical notions rather than from specific theories of scientific practices. Indeed, spatial scientometrics makes little reference to theories in economics, geography or science and technology studies, arguably the fields closest to the spatial scientometric enterprise. And, conversely, more theory-minded researchers have also shown little interest in developing more specific theories about the geography of science, so far (Frenken, 2010). Clearly, more interaction between theory and empirics is welcome at this stage of research in spatial scientometrics. One can think of theories from network science, including the “proximity framework” and social network analysis, which aim to explain both the formation of scientific collaboration networks and their effect on scholarly impact (Frenken et al., 2009). A second possibility is to revive the links with Science and Technology Studies, which have exemplified more strategic and discursive aspects of science and scientific publishing (Frenken, 2010). Thirdly, modern economic geography offers advanced theories of localization, specifically, regarding the source of knowledge spillovers that may underlie the benefits of clustering in knowledge production (Breschi & Lissoni, 2009; Scherngell, 2014). Lastly, evolutionary theorizing may be useful to analyze the long-term dynamics in the geography of science, including questions of where new fields emerge and under what conditions existing centers lose their dominance (Boschma et al., 2014; Heimeriks & Boschma, 2014). Discussion of such possibilities in further detail is, however, beyond the purpose and scope of this chapter.

Self-selection as methodological challenge: As repeatedly stressed, a major problem in assessing the effect of geography (such as mobility or long-distance collaboration) on researchers’ scholarly impact arises from self-selection effects. One can expect that more talented researchers are more internationally oriented, if only because they search for more specialized and state-of-the-art knowledge. Hence, the positive effects of internationalization on performance may not reflect, or may only partially reflect, the alleged benefits of international collaboration as such. We have highlighted some recent attempts to deal with self-selection in the case of international mobility (Jonkers & Cruz-Castro, 2013) and international collaboration (Kato & Ando, 2013). Obviously, more research in this direction is welcome.

Mobility as an underdeveloped topic: A research topic which remains underinvestigated in the literature, despite its importance for shaping spatial aspects of the science system, is scientific mobility. Although we identified a number of papers (e.g. Jonkers & Cruz-Castro, 2013; Trippl, 2013) dealing with the topic, the total number of papers is relatively low and most papers focus on theoretical rather than empirical questions. One of the reasons for this state of affairs is the known difficulty in disambiguating author names purely based on information derived from scientific publications. The challenge in these cases is to determine whether the same or similar author names on different publications refer to the same researcher (for an overview, see Smalheiser & Torvik, 2009). Arguably, the increase in authors with a Chinese last name has made such disambiguation even more difficult, due to the large number of scholars sharing only a few family names such as Zhang, Chen or Li (Tang & Walsh, 2010). To deal with this issue, scholars have started to develop tools and methods to solve the disambiguation problem. Recent examples include, but are not limited to, Tang and Walsh (2010); D’Angelo, Giuffrida, and Abramo (2011); Onodera et al. (2011); Wang et al. (2012); and Wu and Ding (2013). Most of these studies now agree on the necessity to rely on external information (e.g. name lists) for better disambiguation or to complement bibliometric data with information from other sources (e.g. surveys, curricula vitae). For an overview of author name disambiguation issues and methods, see Chap. 7.

Data source dependency: All spatial scientometric analyses are dependent on the data sources that are being used. It is important to note in this context that there are differences between the sets of journals that are covered in Web of Science and Scopus, with Scopus claiming to include more ‘regional’ journals. Moreover, the coverage of bibliometric databases changes over time, which may have an effect on longitudinal analyses of research activities. A telling example concerns the earlier mentioned predictions of the rise of China in terms of publication output. Leydesdorff (2012) showed in this respect that predictions of China’s growth in publication output differ considerably depending on whether the Scopus or the Web of Science database is analyzed. Basu (2010) also observes a strong association between the number of indexed journals from a particular country and the total number of publications from that country. Other notable papers focusing on this issue include Rodrigues and Abadal (2014); Shelton, Foland, and Gorelskyy (2009); and Collazo-Reyes (2014). A challenge for further research is therefore to distinguish whether changes in the publication output of a particular spatial unit are due to changes in academic production or to changes in the coverage of scientific journals with a spatial bias. Following Martin and Irvine (1983) and Leydesdorff (2012), we suggest relying on “partial indicators”, where results become more reliable when they indicate the same trends across a number of databases.

Alternative data sources: Finally, a limitation of our review is that we only focused on spatial scientometric papers of scholarly impact and papers that made use of spatial information as it can be retrieved from individual publications. As noted in Frenken et al. (2009) there are a number of other topics that analyze spatial aspects of research activities, including the spatial analysis of research collaboration and the localized emergence of new research fields. Moreover, in addition to publication data there are other large datasets to analyze spatial aspects of science including but not limited to Framework Programme data (Autant‐Bernard, Billand, Frachisse, & Massard, 2007; Scherngell & Barber, 2009) and student mobility flows (Maggioni & Uberti, 2009). Due to space limitations we were not able to review all these contributions. Yet, while performing the systematic search of the scientometrics literature, we came across a number of innovative research topics such as those focusing on spatial aspects of editorial boards (Bański & Ferenc, 2013; García-Carpintero, Granadino, & Plaza, 2010; Schubert & Sooryamoorthy, 2010); research results (Fanelli, 2012); authorships (Hoekman, Frenken, de Zeeuw, & Heerspink, 2012); journal language (Bajerski, 2011; Kirchik, Gingras, & Larivière, 2012); and the internationality of journals (Calver, Wardell-Johnson, Bradley, & Taplin, 2010; He & Liu, 2009; Kao, 2009). They provide useful additions to the growing body of spatial scientometrics articles.

Notes

1. Document type = Article; Indexes = SCI-EXPANDED, SSCI, A&HCI, CPCI-S, CPCI-SSH; Timespan = 2009–2014.

References

Abramo, G., D’Angelo, C. A., & Solazzi, M. (2011). The relationship between scientists’ research performance and the degree of internationalization of their research. Scientometrics, 86(3), 629–643.

Acosta, M., Coronado, D., Ferrándiz, E., & León, M. D. (2014). Regional scientific production and specialization in Europe: the role of HERD. European Planning Studies, 22(5), 1–26. doi:10.1080/09654313.2012.752439.

Albarrán, P., Ortuño, I., & Ruiz-Castillo, J. (2011a). High-and low-impact citation measures: empirical applications. Journal of Informetrics, 5(1), 122–145. doi:10.1016/j.joi.2010.10.001.

Albarrán, P., Ortuño, I., & Ruiz-Castillo, J. (2011b). The measurement of low-and high-impact in citation distributions: Technical results. Journal of Informetrics, 5(1), 48–63. doi:10.1016/j.joi.2010.08.002.

Albarrán, P., Ortuño, I., & Ruiz-Castillo, J. (2011c). Average-based versus high-and low-impact indicators for the evaluation of scientific distributions. Research Evaluation, 20(4), 325–339. doi:10.3152/095820211X13164389670310.

Almeida, J. A. S., Pais, A. A. C. C., & Formosinho, S. J. (2009). Science indicators and science patterns in Europe. Journal of Informetrics, 3(2), 134–142. doi:10.1016/j.joi.2009.01.001.

Autant‐Bernard, C., Billand, P., Frachisse, D., & Massard, N. (2007). Social distance versus spatial distance in R&D cooperation: Empirical evidence from European collaboration choices in micro and nanotechnologies. Papers in Regional Science, 86(3), 495–519. doi:10.1111/j.1435-5957.2007.00132.x.

Bajerski, A. (2011). The role of French, German and Spanish journals in scientific communication in international geography. Area, 43(3), 305–313. doi:10.1111/j.1475-4762.2010.00989.x.

Bański, J., & Ferenc, M. (2013). “International” or “Anglo-American” journals of geography? Geoforum, 45, 285–295. doi:10.1016/j.geoforum.2012.11.016.

Barnes, T. J. (2001). ‘In the beginning was economic geography’–a science studies approach to disciplinary history. Progress in Human Geography, 25(4), 521–544.

Basu, A. (2010). Does a country’s scientific ‘productivity’ depend critically on the number of country journals indexed? Scientometrics, 82(3), 507–516.

Bornmann, L., & Leydesdorff, L. (2011). Which cities produce more excellent papers than can be expected? A new mapping approach, using Google Maps, based on statistical significance testing. Journal of the American Society for Information Science and Technology, 62(10), 1954–1962.

Bornmann, L., & Leydesdorff, L. (2012). Which are the best performing regions in information science in terms of highly cited papers? Some improvements of our previous mapping approaches. Journal of Informetrics, 6(2), 336–345.

Bornmann, L., Leydesdorff, L., Walch-Solimena, C., & Ettl, C. (2011). Mapping excellence in the geography of science: An approach based on Scopus data. Journal of Informetrics, 5(4), 537–546.

Bornmann, L., Stefaner, M., de Moya Anegón, F., & Mutz, R. (2014a). Ranking and mapping of universities and research-focused institutions worldwide based on highly-cited papers: A visualisation of results from multi-level models. Online Information Review, 38(1), 43–58.

Bornmann, L., Stefaner, M., de Moya Anegon, F., & Mutz, R. (2014b). What is the effect of country-specific characteristics on the research performance of scientific institutions? Using multi-level statistical models to rank and map universities and research-focused institutions worldwide. arXiv preprint arXiv:1401.2866.

Bornmann, L., & Waltman, L. (2011). The detection of “hot regions” in the geography of science—a visualization approach by using density maps. Journal of Informetrics, 5(4), 547–553.

Boschma, R., Heimeriks, G., & Balland, P. A. (2014). Scientific knowledge dynamics and relatedness in biotech cities. Research Policy, 43(1), 107–114.

Braun, J. D. (2012). Effects of war on scientific production: mathematics in Croatia from 1968 to 2008. Scientometrics, 93(3), 931–936.

Breschi, S., & Lissoni, F. (2009). Mobility of skilled workers and co-invention networks: An anatomy of localized knowledge flows. Journal of Economic Geography, 9, 439–468. doi:10.1093/jeg/lbp008.

Calver, M., Wardell-Johnson, G., Bradley, S., & Taplin, R. (2010). What makes a journal international? A case study using conservation biology journals. Scientometrics, 85(2), 387–400.

Cho, C. C., Hu, M. W., & Liu, M. C. (2010). Improvements in productivity based on co-authorship: a case study of published articles in China. Scientometrics, 85(2), 463–470.

Collazo-Reyes, F. (2014). Growth of the number of indexed journals of Latin America and the Caribbean: the effect on the impact of each country. Scientometrics, 98(1), 197–209.

Commission of the European Communities. (1993). White paper on growth, competitiveness and employment. Brussels: COM(93) 700 final.

D’Angelo, C. A., Giuffrida, C., & Abramo, G. (2011). A heuristic approach to author name disambiguation in bibliometrics databases for large‐scale research assessments. Journal of the American Society for Information Science and Technology, 62(2), 257–269.

Didegah, F., & Thelwall, M. (2013a). Determinants of research citation impact in nanoscience and nanotechnology. Journal of the American Society for Information Science and Technology, 64(5), 1055–1064.

Didegah, F., & Thelwall, M. (2013b). Which factors help authors produce the highest impact research? Collaboration, journal and document properties. Journal of Informetrics, 7(4), 861–873.

Dosi, G., Llerena, P., & Labini, M. S. (2006). The relationships between science, technologies and their industrial exploitation: An illustration through the myths and realities of the so-called ‘European Paradox’. Research Policy, 35(10), 1450–1464.

Eisend, M., & Schmidt, S. (2014). The influence of knowledge-based resources and business scholars’ internationalization strategies on research performance. Research Policy, 43(1), 48–59.

Fanelli, D. (2012). Negative results are disappearing from most disciplines and countries. Scientometrics, 90(3), 891–904.

Finnegan, D. A. (2008). The spatial turn: Geographical approaches in the history of science. Journal of the History of Biology, 41(2), 369–388.

Frenken, K. (2010). Geography of scientific knowledge: A proximity approach. Eindhoven Center for Innovation Studies (ECIS) working paper series 10-01. Eindhoven Center for Innovation Studies (ECIS). Retrieved from http://econpapers.repec.org/paper/dgrtuecis/wpaper_3a1001.htm

Frenken, K., Hardeman, S., & Hoekman, J. (2009). Spatial scientometrics: Towards a cumulative research program. Journal of Informetrics, 3(3), 222–232.

Frenken, K., Hölzl, W., & Vor, F. D. (2005). The citation impact of research collaborations: the case of European biotechnology and applied microbiology (1988–2002). Journal of Engineering and Technology Management, 22(1), 9–30.

Frenken, K., Ponds, R., & Van Oort, F. (2010). The citation impact of research collaboration in science‐based industries: A spatial‐institutional analysis. Papers in Regional Science, 89(2), 351–371.

García-Carpintero, E., Granadino, B., & Plaza, L. M. (2010). The representation of nationalities on the editorial boards of international journals and the promotion of the scientific output of the same countries. Scientometrics, 84(3), 799–811.

Grossetti, M., Eckert, D., Gingras, Y., Jégou, L., Larivière, V., & Milard, B. (2013, November). Cities and the geographical deconcentration of scientific activity: A multilevel analysis of publications (1987–2007). Urban Studies, 0042098013506047.

Grossetti, M., Milard, B., & Losego, P. (2009). La territorialisation comme contrepoint à l’internationalisation des activités scientifiques. L’internationalisation des systèmes de recherche en action. Les cas français et suisse. Retrieved from http://halshs.archives-ouvertes.fr/halshs-00471192

He, Z. L. (2009). International collaboration does not have greater epistemic authority. Journal of the American Society for Information Science and Technology, 60(10), 2151–2164.

He, T., & Liu, W. (2009). The internationalization of Chinese scientific journals: A quantitative comparison of three chemical journals from China, England and Japan. Scientometrics, 80(3), 583–593.

Heimeriks, G., & Boschma, R. (2014). The path- and place-dependent nature of scientific knowledge production in biotech 1986–2008. Journal of Economic Geography, 14(2), 339–364. doi:10.1093/jeg/lbs052.

Herranz, N., & Ruiz-Castillo, J. (2013). The end of the ‘European Paradox’. Scientometrics, 95(1), 453–464. doi:10.1007/s11192-012-0865-8.

Hoekman, J., Frenken, K., de Zeeuw, D., & Heerspink, H. L. (2012). The geographical distribution of leadership in globalized clinical trials. PLoS One, 7(10), e45984.

Hoekman, J., Frenken, K., & Tijssen, R. J. (2010). Research collaboration at a distance: Changing spatial patterns of scientific collaboration within Europe. Research Policy, 39(5), 662–673.

Hoekman, J., Scherngell, T., Frenken, K., & Tijssen, R. (2013). Acquisition of European research funds and its effect on international scientific collaboration. Journal of Economic Geography, 13(1), 23–52.

Huang, M. H., Chang, H. W., & Chen, D. Z. (2012). The trend of concentration in scientific research and technological innovation: A reduction of the predominant role of the US in world research & technology. Journal of Informetrics, 6(4), 457–468.

Huffman, M. D., Baldridge, A., Bloomfield, G. S., Colantonio, L. D., Prabhakaran, P., Ajay, V. S., … & Prabhakaran, D. (2013). Global cardiovascular research output, citations, and collaborations: A time-trend, bibliometric analysis (1999–2008). PLoS One, 8(12), e83440.

Jacsó, P. (2011). Interpretations and misinterpretations of scientometric data in the report of the Royal Society about the scientific landscape in 2011. Online Information Review, 35(4), 669–682.

Jonkers, K., & Cruz-Castro, L. (2013). Research upon return: The effect of international mobility on scientific ties, production and impact. Research Policy, 42(8), 1366–1377.

Kao, C. (2009). The authorship and internationality of industrial engineering journals. Scientometrics, 81(1), 123–136.

Kato, M., & Ando, A. (2013). The relationship between research performance and international collaboration in chemistry. Scientometrics, 97(3), 535–553.

Kirchik, O., Gingras, Y., & Larivière, V. (2012). Changes in publication languages and citation practices and their effect on the scientific impact of Russian science (1993–2010). Journal of the American Society for Information Science and Technology, 63(7), 1411–1419.

Lancho Barrantes, B. S., Bote, G., Vicente, P., Rodríguez, Z. C., & de Moya Anegón, F. (2012). Citation flows in the zones of influence of scientific collaborations. Journal of the American Society for Information Science and Technology, 63(3), 481–489.

Lee, K., Brownstein, J. S., Mills, R. G., & Kohane, I. S. (2010). Does collocation inform the impact of collaboration? PLoS One, 5(12), e14279.

Levy, R., & Jégou, L. (2013). Diversity and location of knowledge production in small cities in France. City, Culture and Society, 4(4), 203–216.

Levy, R., Sibertin-Blanc, M., & Jégou, L. (2013). La production scientifique universitaire dans les villes françaises petites et moyennes (1980–2009). M@ppemonde, 110 (2013/2).

Leydesdorff, L. (2012). World shares of publications of the USA, EU-27, and China compared and predicted using the new Web of Science interface versus Scopus. El profesional de la información, 21(1), 43–49.

Leydesdorff, L., & Persson, O. (2010). Mapping the geography of science: Distribution patterns and networks of relations among cities and institutes. Journal of the American Society for Information Science and Technology, 61(8), 1622–1634.

Leydesdorff, L., & Rafols, I. (2011). Local emergence and global diffusion of research technologies: An exploration of patterns of network formation. Journal of the American Society for Information Science and Technology, 62(5), 846–860.

Livingstone, D. N. (2010). Putting science in its place: Geographies of scientific knowledge. Chicago: University of Chicago Press.

Maggioni, M. A., & Uberti, T. E. (2009). Knowledge networks across Europe: Which distance matters? The Annals of Regional Science, 43(3), 691–720.

Magnone, E. (2012). An analysis for estimating the short-term effects of Japan’s triple disaster on progress in materials science. Journal of Informetrics, 6(2), 289–297.

Martin, B. R., & Irvine, J. (1983). Assessing basic research: Some partial indicators of scientific progress in radio astronomy. Research Policy, 12(2), 61–90.

Matthiessen, C. W., & Schwarz, A. W. (2010). World cities of scientific knowledge: Systems, networks and potential dynamics. An analysis based on bibliometric indicators. Urban Studies, 47(9), 1879–1897.

Meo, S. A., Al Masri, A. A., Usmani, A. M., Memon, A. N., & Zaidi, S. Z. (2013). Impact of GDP, spending on R&D, number of universities and scientific journals on research publications among Asian countries. PLoS One, 8(6), e66449.

Meusburger, P., Livingstone, D. N., & Jöns, H. (2010). Geographies of science. Dordrecht: Springer.

Miguel, S., Moya-Anegón, F., & Herrero-Solana, V. (2010). The impact of the socio-economic crisis of 2001 on the scientific system of Argentina from the scientometric perspective. Scientometrics, 85(2), 495–507.

Moiwo, J. P., & Tao, F. (2013). The changing dynamics in citation index publication position China in a race with the USA for global leadership. Scientometrics, 95(3), 1031–1050.

Morillo, F., & De Filippo, D. (2009). Descentralización de la actividad científica. El papel determinante de las regiones centrales: el caso de Madrid. Revista española de documentación científica, 32(3), 29–50.

Nomaler, Ö., Frenken, K., & Heimeriks, G. (2013). Do more distant collaborations have more citation impact? Journal of Informetrics, 7(4), 966–971.

Onodera, N., Iwasawa, M., Midorikawa, N., Yoshikane, F., Amano, K., Ootani, Y., … & Yamazaki, S. (2011). A method for eliminating articles by homonymous authors from the large number of articles retrieved by author search. Journal of the American Society for Information Science and Technology, 62(4), 677–690.

Orduña-Malea, E., Ontalba-Ruipérez, J. A., & Serrano-Cobos, J. (2010). Análisis bibliométrico de la producción y colaboración científica en Oriente Próximo (1998–2007). Investigación bibliotecológica, 24(51), 69–94.

Pasgaard, M., & Strange, N. (2013). A quantitative analysis of the causes of the global climate change research distribution. Global Environmental Change, 23(6), 1684–1693.

Persson, O., & Ellegård, K. (2012). Torsten Hägerstrand in the citation time web. The Professional Geographer, 64(2), 250–261.

Rodrigues, R. S., & Abadal, E. (2014). Ibero-American journals in Scopus and Web of Science. Learned Publishing, 27(1), 56–62.

Royal Society. (2011). Knowledge, networks and nations: Global scientific collaboration in the 21st century. London: The Royal Society.

Sandström, U. (2009). Combining curriculum vitae and bibliometric analysis: Mobility, gender and research performance. Research Evaluation, 18(2), 135–142.

Scherngell, T. (Ed.). (2014). The geography of networks and R&D collaborations. Berlin: Springer.

Scherngell, T., & Barber, M. J. (2009). Spatial interaction modelling of cross‐region R&D collaborations: Empirical evidence from the 5th EU framework programme. Papers in Regional Science, 88(3), 531–546.

Schubert, T., & Sooryamoorthy, R. (2010). Can the centre–periphery model explain patterns of international scientific collaboration among threshold and industrialised countries? The case of South Africa and Germany. Scientometrics, 83(1), 181–203.

Sebestyén, T., & Varga, A. (2013). Research productivity and the quality of interregional knowledge networks. The Annals of Regional Science, 51(1), 155–189.

Shapin, S. (1998). Placing the view from nowhere: historical and sociological problems in the location of science. Transactions of the Institute of British Geographers, 23(1), 5–12.

Shelton, R. D., Foland, P., & Gorelskyy, R. (2009). Do new SCI journals have a different national bias? Scientometrics, 79(2), 351–363.

Sin, S. C. J. (2011). International coauthorship and citation impact: A bibliometric study of six LIS journals, 1980–2008. Journal of the American Society for Information Science and Technology, 62(9), 1770–1783.

Smalheiser, N. R., & Torvik, V. I. (2009). Author name disambiguation. Annual Review of Information Science and Technology, 43(1), 1–43.

Tang, L., & Shapira, P. (2010). Regional development and interregional collaboration in the growth of nanotechnology research in China. Scientometrics, 86(2), 299–315.

Tang, L., & Walsh, J. P. (2010). Bibliometric fingerprints: Name disambiguation based on approximate structure equivalence of cognitive maps. Scientometrics, 84(3), 763–784.

Trippl, M. (2013). Scientific mobility and knowledge transfer at the interregional and intraregional level. Regional Studies, 47(10), 1653–1667.

Van Eck, N. J., & Waltman, L. (2010). Software survey: VOSviewer, a computer program for bibliometric mapping. Scientometrics, 84(2), 523–538.

Van Noorden, R. (2010). Cities: Building the best cities for science. Nature, 467(7318), 906–908.

Waltman, L., Tijssen, R. J., & Eck, N. J. V. (2011). Globalisation of science in kilometres. Journal of Informetrics, 5(4), 574–582.

Wang, J., Berzins, K., Hicks, D., Melkers, J., Xiao, F., & Pinheiro, D. (2012). A boosted-trees method for name disambiguation. Scientometrics, 93(2), 391–411.

Wu, J., & Ding, X. H. (2013). Author name disambiguation in scientific collaboration and mobility cases. Scientometrics, 96(3), 683–697.

Zubieta, A. F. (2009). Recognition and weak ties: Is there a positive effect of postdoctoral position on academic performance and career development? Research Evaluation, 18(2), 105–115.

© 2014 Springer International Publishing Switzerland

About this chapter

Cite this chapter

Frenken, K., Hoekman, J. (2014). Spatial Scientometrics and Scholarly Impact: A Review of Recent Studies, Tools, and Methods. In: Ding, Y., Rousseau, R., Wolfram, D. (eds) Measuring Scholarly Impact. Springer, Cham. https://doi.org/10.1007/978-3-319-10377-8_6

DOI: https://doi.org/10.1007/978-3-319-10377-8_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-10376-1

Online ISBN: 978-3-319-10377-8

eBook Packages: Computer Science (R0)