Abstract

Let \(\eta_t\) be a Poisson point process with intensity measure \(t\mu\), t > 0, over a Borel space \(\mathbb{X}\), where μ is a fixed measure. Another point process \(\xi_t\) on the real line is constructed by applying a symmetric function f to every k-tuple of distinct points of \(\eta_t\). It is shown that \(\xi_t\) behaves after appropriate rescaling like a Poisson point process, as t → ∞, under suitable conditions on \(\eta_t\) and f. This also implies Weibull limit theorems for related extreme values. The result is then applied to investigate problems arising in stochastic geometry, including small cells in Voronoi tessellations, random simplices generated by non-stationary hyperplane processes, triangular counts with angular constraints, and non-intersecting k-flats. Similar results are derived if the underlying Poisson point process is replaced by a binomial point process.


1 Introduction

This chapter deals with the application of the Malliavin–Chen–Stein method for Poisson approximation to problems arising in stochastic geometry. More precisely, we will develop a general framework which yields Poisson point process convergence and Weibull limit theorems for the order statistics of a class of functionals driven by an underlying Poisson or binomial point process on an abstract state space.

To motivate our general theory, let us describe a particular situation to which our results can be applied (see Remark 4 and also Example 4 in [29] for more details). Let K be a convex body in \(\mathbb{R}^{d}\), d ≥ 2 (that is, a compact convex set with interior points), whose volume is denoted by \(\ell_d(K)\). For t > 0 let \(\eta_t\) be the restriction to K of a translation-invariant Poisson point process in \(\mathbb{R}^{d}\) with intensity t and let \((\theta_t)_{t>0}\) be a family of real numbers satisfying \(t^{2/d}\theta_t \rightarrow \infty\), as t → ∞. Taking \(\eta_t\) as the vertex set of a random graph, we connect two different points of \(\eta_t\) by an edge if and only if their Euclidean distance does not exceed \(\theta_t\). The so-constructed random geometric graph, or Gilbert graph, is among the most prominent random graph models (see [25] for some recent developments and [22] for an exhaustive reference). We now consider the order statistics \(\xi _{t} =\{ M_{t}^{(m)}: m \in \mathbb{N}\}\) defined by the edge lengths of the random geometric graph, that is, \(M_t^{(1)}\) is the length of the shortest edge, \(M_t^{(2)}\) is the length of the second-shortest edge, etc. Now, our general theory implies that the rescaled point process \(t^{2/d}\xi_t\) converges towards a Poisson point process on \(\mathbb{R}_{+}\) with intensity measure given by \(B\mapsto \beta d\int_{B}u^{d-1}\,du\) for Borel sets \(B \subset \mathbb{R}_{+}\), where \(\beta =\kappa_d\ell_d(K)/2\) and \(\kappa_d\) stands for the volume of the d-dimensional unit ball. Moreover, it implies that there is a constant C > 0 only depending on K such that

for any \(m \in \mathbb{N}\), \(y \in (0,t^{2/d}\theta_t)\) and t ≥ 1. In particular, the distribution of the rescaled length \(t^{2/d}M_t^{(1)}\) of the shortest edge of the random graph converges, as t → ∞, to a Weibull distribution with survival function \(y\mapsto e^{-\beta y^{d} }\), y ≥ 0, at rate \(t^{-2/d}\).
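The Weibull limit for the shortest edge can be checked numerically. The following is a minimal simulation sketch, not part of the chapter's argument: it assumes K is the unit cube \([0,1]^d\) (so \(\ell_d(K) = 1\)), ignores the threshold \(\theta_t\) by taking it large enough that the shortest edge equals the minimal interpoint distance, and uses hypothetical helper names throughout.

```python
import math
import random


def unit_ball_volume(d):
    """Volume kappa_d of the d-dimensional unit ball."""
    return math.pi ** (d / 2) / math.gamma(d / 2 + 1)


def sample_poisson_count(mean, rng):
    """Poisson(mean) variate: number of unit-rate arrivals before time `mean`."""
    count, total = 0, rng.expovariate(1.0)
    while total < mean:
        count += 1
        total += rng.expovariate(1.0)
    return count


def shortest_edge(points):
    """Minimal Euclidean distance over unordered pairs (inf for fewer than 2 points)."""
    best = math.inf
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            best = min(best, math.dist(points[i], points[j]))
    return best


def empirical_survival(t, y, d=2, runs=300, seed=0):
    """Fraction of runs with t^(2/d) * M_t^(1) > y, assuming K = [0, 1]^d."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(runs):
        n = sample_poisson_count(t, rng)
        pts = [tuple(rng.random() for _ in range(d)) for _ in range(n)]
        if t ** (2 / d) * shortest_edge(pts) > y:
            hits += 1
    return hits / runs


def weibull_survival(y, d=2, volume=1.0):
    """Limiting survival function exp(-beta * y^d) with beta = kappa_d * volume / 2."""
    beta = unit_ball_volume(d) * volume / 2
    return math.exp(-beta * y ** d)
```

For moderate t the two functions should return values that are close to each other; the agreement is only approximate because of boundary effects and Monte Carlo error.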

Our purpose here is to establish a general framework that can be applied to a broad class of examples. We also allow the underlying point process to be either a Poisson or a binomial point process. Our main result for the Poisson case refines those in [29] or [30] and improves the rate of convergence. Its proof follows the ideas of Peccati [21] and Schulte and Thäle [29], but uses the special structure of the functional under consideration as well as recent techniques from [20] around Mehler’s formula on the Poisson space. This saves some technical computations related to the product formula for multiple stochastic integrals (cf. [18], in this volume, as well as [19, 32]). In the case of an underlying binomial point process we use a bound for the Poisson approximation of (classical) U-statistics from [1]. As applications of our main results, we present four examples, which continue and complement those studied in [29, 30]. These are

1. Cells with small (nucleus-centered) inradius in a Voronoi tessellation.

2. Simplices generated by a class of rotation-invariant hyperplane processes.

3. Almost collinearities and flat triangles in a planar Poisson or binomial process.

4. Arbitrary length-power-proximity functionals of non-intersecting k-flats.

The rest of this chapter is organized as follows. Our main results and their framework are presented in Sect. 2. The application to problems arising in stochastic geometry is the content of Sect. 3. The proofs of the main results are postponed to the final Sect. 4.

2 Results

Let \(\eta_t\) (t > 0) be a Poisson point process on a measurable space \((\mathbb{X},\mathcal{X})\) with intensity measure \(\mu_t := t\mu\), where μ is a fixed σ-finite measure on \(\mathbb{X}\). To avoid technical complications, we shall assume in this chapter that \((\mathbb{X},\mathcal{X})\) is a standard Borel space. This ensures, for example, that any point process on \(\mathbb{X}\) can almost surely be represented as a sum of Dirac measures. Let further \(k \in \mathbb{N}\) and \(f: \mathbb{X}^{k} \rightarrow \mathbb{R}\) be a measurable symmetric function. Our aim here is to investigate the point process \(\xi_t\) on \(\mathbb{R}\) which is induced by \(\eta_t\) and f as follows:

Here \(\eta_{t,\neq}^{k}\) stands for the set of all k-tuples of distinct points of \(\eta_t\) and \(\delta_x\) is the unit Dirac measure concentrated at the point \(x \in \mathbb{R}\). We shall assume that

to ensure that ξ t is a locally finite counting measure on \(\mathbb{R}\).

For \(m \in \mathbb{N}\) we denote by \(M_t^{(m)}\) the distance from the origin to the m-th point of \(\xi_t\) on the positive half-line \(\mathbb{R}_{+}:= (0,\infty )\), and by \(M_t^{(-m)}\) the distance from the origin to the m-th point on the negative half-line \(\mathbb{R}_{-}:= (-\infty,0]\). If \(\xi_t\) has fewer than m points on the positive or negative half-line, we put \(M_t^{(m)} = \infty\) or \(M_t^{(-m)} = \infty\), respectively.
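The construction of the derived point process and its order statistics can be sketched for a finite point sample as follows. This is an illustration under assumptions: each unordered k-subset of distinct points contributes exactly one point (which corresponds to summing over ordered k-tuples and normalizing by 1/k! for symmetric f), and the function names are hypothetical.

```python
import math
from itertools import combinations


def derived_points(points, f, k):
    """Sorted values f(x_1, ..., x_k), one per unordered k-subset of the sample."""
    return sorted(f(*subset) for subset in combinations(points, k))


def order_statistic(values, m):
    """Distance from the origin to the |m|-th point.

    m > 0 refers to the positive half-line (0, inf),
    m < 0 to the negative half-line (-inf, 0].
    Returns inf if that half-line holds fewer than |m| points.
    """
    if m == 0:
        raise ValueError("m must be a nonzero integer")
    if m > 0:
        side = sorted(v for v in values if v > 0)
    else:
        side = sorted(-v for v in values if v <= 0)
    index = abs(m) - 1
    return side[index] if index < len(side) else math.inf
```

For example, with the sample `[-3.0, 1.0, 1.5]`, k = 2 and f(x, y) = x + y, the derived points are -2.0, -1.5 and 2.5, so the first positive order statistic is 2.5 and the first negative one is 1.5.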

Fix \(\gamma \in \mathbb{R}\) and for \(y_{1},y_{2} \in \mathbb{R}\) define

We remark that, as a consequence of the multivariate Mecke formula for Poisson point processes (see [18, formula (1.11)]), α t (y 1, y 2) can be interpreted as

which is the expected number of points of \(\xi_t\) in \((t^{-\gamma}y_1,t^{-\gamma}y_2]\) if \(y_1 < y_2\) and zero if \(y_1 \geq y_2\). Moreover, let, for k ≥ 2,

for y ≥ 0 and put \(r_t \equiv 0\) if k = 1.
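The interpretation of \(\alpha_t\) as an expected number of points can be illustrated in a toy setting. The sketch below assumes k = 2, μ equal to the Lebesgue measure on [0, 1], f(x, y) = |x − y| and γ = 2; it also assumes that each unordered pair is counted once, so that the Mecke formula gives \(\alpha_t(0,y) = \tfrac{t^2}{2}\,\mu^2\{|x_1 - x_2| \leq t^{-\gamma}y\}\). None of these choices come from the chapter.

```python
import random


def alpha_exact(t, y, gamma=2.0):
    """(t^2 / 2) * P(|X1 - X2| <= s) for independent uniforms, s = t^-gamma * y."""
    s = min(t ** (-gamma) * y, 1.0)
    return 0.5 * t ** 2 * (2.0 * s - s ** 2)


def sample_poisson_count(mean, rng):
    """Poisson(mean) variate: number of unit-rate arrivals before time `mean`."""
    count, total = 0, rng.expovariate(1.0)
    while total < mean:
        count += 1
        total += rng.expovariate(1.0)
    return count


def count_close_pairs(points, s):
    """Number of unordered pairs of points at distance at most s."""
    pts = sorted(points)
    total = 0
    for i, x in enumerate(pts):
        j = i + 1
        while j < len(pts) and pts[j] - x <= s:
            total += 1
            j += 1
    return total


def alpha_monte_carlo(t, y, gamma=2.0, runs=2000, seed=1):
    """Average number of points of xi_t in (0, t^-gamma * y] over simulated samples."""
    rng = random.Random(seed)
    s = t ** (-gamma) * y
    total = 0
    for _ in range(runs):
        n = sample_poisson_count(t, rng)
        total += count_close_pairs([rng.random() for _ in range(n)], s)
    return total / runs
```

In this toy setting \(\alpha_t(0,y) = y - y^2/(2t^2)\), which tends to y as t → ∞, so the natural limit candidate is a homogeneous Poisson process of unit intensity on \(\mathbb{R}_{+}\).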

Theorem 1

Let ν be a σ-finite non-atomic Borel measure on \(\mathbb{R}\) . Then, there is a constant C ≥ 1 only depending on k such that

and

for all \(m \in \mathbb{N}\) and y ≥ 0. Moreover, if

and

the rescaled point processes \((t^{\gamma}\xi_t)_{t>0}\) converge in distribution to a Poisson point process on \(\mathbb{R}\) with intensity measure ν.

Remark 1

Let us comment on the particular case k = 1. Here, the point process ξ t is itself a Poisson point process on \(\mathbb{R}\) with intensity measure derived from α t as a consequence of the famous mapping theorem, for which we refer to Sect. 2.3 in [16]. This is confirmed by our Theorem 1.
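The mapping theorem behind the case k = 1 can be illustrated numerically. The sketch below assumes μ is the Lebesgue measure on [0, 1] and f(x) = x², both chosen only for illustration. The image process then has expected number of points \(t\sqrt{a}\) in (0, a], and for a Poisson count the mean and the variance agree.

```python
import random


def sample_poisson_count(mean, rng):
    """Poisson(mean) variate: number of unit-rate arrivals before time `mean`."""
    count, total = 0, rng.expovariate(1.0)
    while total < mean:
        count += 1
        total += rng.expovariate(1.0)
    return count


def mapped_counts(t, a, runs=4000, seed=2):
    """Number of points of the image process f(eta_t) in (0, a], for f(x) = x^2."""
    rng = random.Random(seed)
    counts = []
    for _ in range(runs):
        n = sample_poisson_count(t, rng)
        images = (rng.random() ** 2 for _ in range(n))
        counts.append(sum(1 for v in images if 0.0 < v <= a))
    return counts
```

With t = 20 and a = 0.25 the empirical mean and variance of the counts should both be near 10.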

Remark 2

Theorem 1 generalizes earlier versions in [29, 30], which have a similar structure, but where the quantity

for y ≥ 0 is considered instead of r t (y). It is easy to see that r t (y) and \(\hat{r}_{t}(y)\) are related by

In particular, this means that the rate of convergence of the order statistics in Theorem 1 improves that in [29, 30] by removing a superfluous square root from \(\hat{r}_{t}(y)\). Moreover and in contrast to [29, 30], the constant C only depends on the parameter k.

In our applications presented in Sect. 3, the function f is always strictly positive so that \(\xi_t\) is concentrated on \(\mathbb{R}_{+}\). Moreover, the measure ν will be of a special form. The following corollary deals with this situation. To state it, we use the convention that \(\alpha_t(y) :=\alpha_t(0,y)\) for y ≥ 0.

Corollary 1

Let β,τ > 0. Then there is a constant C > 0 only depending on k such that

for all \(m \in \mathbb{N}\) and y ≥ 0. If, additionally,

the rescaled point processes \((t^{\gamma}\xi_t)_{t>0}\) converge in distribution to a Poisson point process on \(\mathbb{R}_{+}\) with the intensity measure

Remark 3

The limiting Poisson point process appearing in the context of Corollary 1 is usually called a Weibull process on \(\mathbb{R}_{+}\), the reason for this being that the distance from the origin to the next point follows a Weibull distribution.
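A Weibull process is easy to sample and its order statistics have explicit survival functions. The sketch below assumes the intensity measure has the power-law form \(B\mapsto \beta\tau\int_B u^{\tau-1}\,du\), in line with the example from the introduction (where τ = d); under this assumption the number of points in (0, y] is Poisson with mean \(\beta y^{\tau}\). Function names are hypothetical.

```python
import math
import random


def sample_weibull_process(beta, tau, count, rng):
    """First `count` points, obtained as (Gamma_i / beta)^(1/tau).

    Gamma_1 < Gamma_2 < ... are the arrival times of a unit-rate Poisson process.
    """
    points, arrival = [], 0.0
    for _ in range(count):
        arrival += rng.expovariate(1.0)
        points.append((arrival / beta) ** (1.0 / tau))
    return points


def mth_point_survival(y, m, beta, tau):
    """P(m-th point > y) = P(Poisson(beta * y^tau) <= m - 1)."""
    lam = beta * y ** tau
    return math.exp(-lam) * sum(lam ** i / math.factorial(i) for i in range(m))
```

For m = 1 the survival function reduces to \(e^{-\beta y^{\tau}}\), the Weibull law referred to in the remark.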

If μ is a finite measure, i.e., if \(\mu (\mathbb{X}) <\infty\), one can replace the underlying Poisson point process η t by a binomial point process ζ n having a fixed number of n points which are independent and identically distributed according to the probability measure \(\mu (\,\cdot \,)/\mu (\mathbb{X})\). Without loss of generality we assume that \(\mu (\mathbb{X}) = 1\) in what follows. In this situation, we consider instead of ξ t defined at (1) the derived point process \(\hat{\xi }_{n}\) on \(\mathbb{R}\) given by

where \(\zeta_{n,\neq}^{k}\) stands for the collection of all k-tuples of distinct points of \(\zeta_n\). For \(m \in \mathbb{N}\) let \(\widehat{M }_{n}^{(m)}\) and \(\widehat{M }_{n}^{(-m)}\) be defined in the same way as \(M_t^{(m)}\) and \(M_t^{(-m)}\) above with \(\xi_t\) replaced by \(\hat{\xi }_{n}\). For \(n,k \in \mathbb{N}\) we denote by \((n)_k\) the descending factorial \(n \cdot (n - 1) \cdot \mathop{\ldots } \cdot (n - k + 1)\). Using the notation

for \(y_{1},y_{2},y \in \mathbb{R}\), we can now present the binomial counterpart of Theorem 1.
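The descending factorial plays the role that \(t^{k}\) plays in the Poisson case. As a small sketch, assuming again that each unordered k-subset contributes one point to the derived process, the total number of points of \(\hat{\xi}_n\) is \((n)_k/k!\); the helper names are hypothetical.

```python
import math


def descending_factorial(n, k):
    """(n)_k = n * (n - 1) * ... * (n - k + 1), with (n)_0 = 1."""
    result = 1
    for i in range(k):
        result *= n - i
    return result


def number_of_derived_points(n, k):
    """(n)_k / k!, the number of unordered k-subsets of n distinct points."""
    return descending_factorial(n, k) // math.factorial(k)
```

Note that \((n)_k = 0\) whenever k > n, which matches the fact that a sample of n points has no k-tuples of distinct points in that case.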

Theorem 2

Let μ be a probability measure on \(\mathbb{X}\) and ν be a σ-finite non-atomic Borel measure on \(\mathbb{R}\) . Then, there is a constant C ≥ 1 only depending on k such that

and

for all \(m \in \mathbb{N}\) and y ≥ 0. Moreover, if

and

the rescaled point processes \((n^{\gamma }\hat{\xi }_{n})_{n\geq 1}\) converge in distribution to a Poisson point process on \(\mathbb{R}\) with intensity measure ν.

As in the Poisson case, Theorem 2 allows a reformulation as in Corollary 1 for the special situation in which f is nonnegative and ν has a power-law density. As above, we use the convention that α n (y): = α n (0, y) for y ≥ 0.

Corollary 2

Let β,τ > 0. Then there is a constant C > 0 only depending on k such that

for all \(m \in \mathbb{N}\) and y ≥ 0. If, additionally,

the rescaled point processes \((n^{\gamma }\hat{\xi }_{n})_{n\geq 1}\) converge in distribution to a Poisson point process on \(\mathbb{R}_{+}\) with intensity measure given by (5).

3 Examples

In this section we apply the results presented above to problems arising in stochastic geometry, see [11]. The minimal nucleus-centered inradius of the cells of a Voronoi tessellation is considered in Sect. 3.1. This example is inspired by the work [5] and was not previously considered in [29], although it is closely related to the minimal edge length of the random geometric graph discussed in the introduction. Our next example generalizes Example 6 of [29] from the translation-invariant case to arbitrary distance parameters r ≥ 1. In dimension two it also sheds some new light onto the area of small cells in line tessellations. Our third example is inspired by a result in [31] and deals with approximate collinearities and flat triangles induced by a planar Poisson or binomial point process. Our last example deals with non-intersecting k-flats. The result generalizes Example 1 in [29] and one of the results in [30] to arbitrary distance powers a > 0.

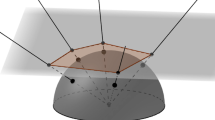

3.1 Voronoi Tessellations

For a finite set χ ≠ ∅ of points in \(\mathbb{R}^{d}\), d ≥ 2, the Voronoi cell v χ (x) with nucleus x ∈ χ is the (possibly unbounded) set

of all points in \(\mathbb{R}^{d}\) having x as their nearest neighbor in χ. The family

subdivides \(\mathbb{R}^{d}\) into a finite number of random polyhedra, which form the so-called Voronoi tessellation associated with χ, see [27, Chap. 10.2]. For χ = ∅ we put \(\mathcal{V}_{\emptyset } =\{ \mathbb{R}^{d}\}\). One characteristic measuring the size of a Voronoi cell v χ (x) is its nucleus-centered inradius R(x, χ). It is defined as the radius of the largest ball included in v χ (x) and having x as its midpoint. Note that R(x, χ) takes the value ∞ if χ = { x}. Define

for nonempty χ and \(R(\mathcal{V}_{\emptyset }):= \infty\).

In [5] the asymptotic behavior of \(R(\mathcal{V}_{\chi })\) has been investigated in the case that χ is a Poisson point process in a convex body K of intensity t > 0, as t → ∞. Using Corollary 1 we can get back one of the main results of [5] and add a rate of convergence to the limit theorem (compare with [5, Eq. (2b)] in particular). Moreover, we provide a similar result for an underlying binomial point process.

Corollary 3

Let \(\eta_t\) be a Poisson point process with intensity measure \(t\ell_d\vert_K\), where \(\ell_d\vert_K\) stands for the restriction of the Lebesgue measure to a convex body K and t > 0. Then, there exists a constant C > 0 depending on K such that

for all y ≥ 0 and t ≥ 1. In addition, if \(\zeta_n\) is a binomial point process with n ≥ 2 independent points distributed according to \(\ell_d(K)^{-1}\ell_d\vert_K\), then

for y ≥ 0 and with a constant C > 0 depending on K.

Proof

To apply Corollary 1 we first have to investigate \(\alpha_t(y)\) for fixed y > 0. For this we abbreviate \(\mathcal{V}_{\eta _{t}}\) by \(\mathcal{V}_{t}\) and observe that, by definition of a Voronoi cell, \(R(\mathcal{V}_{t})\) is half of the minimal interpoint distance of points from \(\eta_t\), i.e.

Consequently, we have

where \(B_r^d(x)\) is the d-dimensional ball of radius r > 0 around \(x \in \mathbb{R}^{d}\). From Theorem 5.2.1 in [27] (see Eq. (14) in particular) it follows that

Moreover, Steiner’s formula [27, Eq. (14.5)] yields

where \(V_0(K),\ldots,V_{d-1}(K)\) are the so-called intrinsic volumes of K, see [11] or [27]. Choosing γ = 2∕d, this implies that \(\alpha_t(y)\) is dominated by its first integral term and that

for t ≥ 1 with a constant c 1 only depending on K.

Finally, we have to deal with r t (y). Here, we have

In the binomial case, one can derive analogous bounds for α n (y) and r n (y), y > 0. Since min(2∕d, 1) = 2∕d for all d ≥ 2, application of Corollaries 1 and 2 completes the proof. □

Remark 4

We have used in the proof that \(R(\mathcal{V}_{\eta _{t}})\) is half of the minimal inter-point distance between points of \(\eta_t\) in K. Thus, Corollary 3 also makes a statement about this minimal inter-point distance. Moreover, \(2R(\mathcal{V}_{\eta _{t}})\) coincides with the shortest edge length of the random geometric graph based on \(\eta_t\) discussed in the introduction (cf. [25] and [22] for an exhaustive reference on random geometric graphs) and with the shortest edge length of the Delaunay graph (see [11] or [6, 27] for background material on Delaunay graphs or tessellations). A similar comment applies if \(\eta_t\) is replaced by a binomial point process \(\zeta_n\).
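The identity used in the proof can be made concrete for a finite point set. A Voronoi cell is an intersection of half-spaces bounded by perpendicular bisectors, so the largest ball centered at the nucleus touches the nearest bisector, at half the nearest-neighbor distance. The sketch below relies only on this fact; the function names are hypothetical.

```python
import math


def nucleus_inradius(x, chi):
    """R(x, chi): half the distance from x to its nearest neighbor in chi.

    Returns inf if x is the only point, matching the convention R(x, {x}) = inf.
    """
    others = [p for p in chi if p != x]
    if not others:
        return math.inf
    return 0.5 * min(math.dist(x, p) for p in others)


def minimal_inradius(chi):
    """R(V_chi): minimum of the nucleus-centered inradii (inf for empty chi)."""
    if not chi:
        return math.inf
    return min(nucleus_inradius(x, chi) for x in chi)
```

For the points (0, 0), (1, 0) and (0, 3) the inradii are 0.5, 0.5 and 1.5, so the minimal inradius is 0.5, half of the minimal interpoint distance 1.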

3.2 Hyperplane Tessellations

Let \(\mathcal{H}\) be the space of hyperplanes in \(\mathbb{R}^{d}\), fix a distance parameter r ≥ 1 and a convex body \(K \subset \mathbb{R}^{d}\), and define as in [12, Sect. 3.4.5] a (finite) measure μ on \(\mathcal{H}\) by the relation

where g ≥ 0 is a measurable function on \(\mathcal{H}\), \(u^{\perp}\) is the linear subspace of all vectors that are orthogonal to u, and du stands for the infinitesimal element of the normalized Lebesgue measure on the (d − 1)-dimensional unit sphere \(\mathbb{S}^{d-1}\). By \(\eta_t\) we mean in this section a Poisson point process on \(\mathcal{H}\) with intensity measure \(\mu_t := t\mu\), t > 0. Let us further write, for \(n \in \mathbb{N}\) with n ≥ d + 1, \(\zeta_n\) for a binomial process on \(\mathcal{H}\) consisting of n hyperplanes distributed according to the probability measure \(\mu (\mathcal{H})^{-1}\,\mu\).

If \(K = \mathbb{R}^{d}\) in the Poisson case, one obtains a tessellation of the whole \(\mathbb{R}^{d}\) into bounded cells. In this context one is interested in the so-called zero cell Z 0, which is the almost surely uniquely determined cell containing the origin. If r = 1, Z 0 has the same distribution as the zero-cell of a rotation- and translation-invariant Poisson hyperplane tessellation. If r = d, Z 0 is equal in distribution to the so-called typical cell of a Poisson–Voronoi tessellation as considered in the previous section, see [27]. Thus, the tessellation induced by η t interpolates in some sense between the translation-invariant Poisson hyperplane and the Poisson–Voronoi tessellation, which explains the recent interest in this model [8, 9, 12]. For more background material about random tessellations (and in particular Poisson hyperplane and Poisson–Voronoi tessellations) we refer to Chap. 10 in [27] and Chap. 9 in [6] and also to [11].

We are interested here in the simplices generated by the hyperplanes of η t or ζ n , which are contained in the prescribed convex set K. For a (d + 1)-tuple (H 1, …, H d+1) of distinct hyperplanes of η t or ζ n let us write [H 1, …, H d+1] for the simplex generated by H 1, …, H d+1 and define the point processes

and

By \(M_t^{(m)}\) and \(\widehat{M }_{n}^{(m)}\) we mean the m-th order statistics associated with \(\xi_t\) and \(\hat{\xi }_{n}\), respectively. In particular, \(M_t^{(1)}\) and \(\widehat{M } _{n}^{(1)}\) are the smallest volumes of a simplex included in K. Moreover, for fixed hyperplanes \(H_1,\ldots,H_d\) in general position let \(z(H_{1},\mathop{\ldots },H_{d}):= H_{1} \cap \ldots \cap H_{d}\) be the intersection point of \(H_{1},\mathop{\ldots },H_{d}\). By \(H_{\delta,u}\) we denote the hyperplane with unit normal vector \(u \in \mathbb{S}^{d-1}\) and distance δ > 0 to the origin. The following result generalizes [29, Theorem 2.6] from the translation-invariant case r = 1 to arbitrary distance parameter r ≥ 1.

Corollary 4

Define

Then \(t^{d(d+1)}\xi_t\) and \(n^{d(d+1)}\hat{\xi }_{n}\) converge, as t → ∞ or n → ∞, in distribution to a Poisson point process on \(\mathbb{R}_{+}\) with intensity measure given by

for Borel sets \(B \subset \mathbb{R}_{+}\). In particular, for each \(m \in \mathbb{N}\), \(t^{d(d+1)}M_t^{(m)}\) and \(n^{d(d+1)}\widehat{M } _{n}^{(m)}\) converge towards a random variable with survival function

Proof

For y > 0 we have

For fixed hyperplanes H 1, …, H d in general position we parametrize H d+1 by a pair \((\delta,u) \in [0,\infty ) \times \mathbb{S}^{d-1}\), where δ is the distance of H d+1 to the origin. Then α t (y) can be rewritten as

Since the hyperplane H δ, u has the distance \(\vert u^{T}z(H_{1},\mathop{\ldots },H_{d}) -\delta \vert\) to \(z(H_{1},\mathop{\ldots },H_{d})\), we have that

Let γ = d(d + 1) and \(M:=\max \{\| z\|^{r-1}: z \in K\}\). For fixed \(H_{1},\mathop{\ldots },H_{d} \in \mathcal{H}^{d}\) such that \(H_{1} \cap \mathop{\ldots } \cap H_{d} \cap K\neq \emptyset\) and \(u \in \mathbb{S}^{d-1}\) we can estimate the inner integral in (6) from above by

The hyperplanes \(H_{1} - z(H_{1},\mathop{\ldots },H_{d}),\ldots,H_{d} - z(H_{1},\mathop{\ldots },H_{d})\) partition the unit sphere \(\mathbb{S}^{d-1}\) into \(2^{d}\) spherical caps \(S_{1},\ldots,S_{2^{d}}\). For each \(u \in S_j\) (\(1 \leq j \leq 2^{d}\)), transformation into spherical coordinates shows that

where c d > 0 is a dimension dependent constant and ℓ d−1(S j ) is the spherical Lebesgue measure of S j . Consequently, we have

Since the last expression is finite, we can apply the dominated convergence theorem in (6). By the same arguments we used to obtain an upper bound for the inner integral in (6), we see that, for \(H_{1},\ldots,H_{d} \in \mathcal{H}^{d}\) and \(u \in \mathbb{S}^{d-1}\),

Altogether, we obtain that

By the same estimates as above, we have that, for any \(\ell\in \{ 1,\mathop{\ldots },d\}\),

Hence, r t (y) → 0 as t → ∞ so that application of Corollary 1 completes the proof of the Poisson case. The result for an underlying binomial point process follows from similar estimates and Corollary 2. □

Remark 5

Although Corollary 1 and Corollary 2 deliver rates of convergence, we cannot provide such a rate for this particular example. This is due to the fact that the exact asymptotic behavior of \(\alpha_t(y)\) or \(\alpha_n(y)\) depends in a delicate way on the smoothness behavior of the boundary of K.

Corollary 4 admits a nice interpretation in the planar case d = 2. Namely, the smallest triangle contained in K coincides with the smallest triangular cell included in K of the line tessellation induced by η t or ζ n (note that this argument fails in higher dimensions). This way, Corollary 4 also makes a statement about the area of small triangular cells, which generalizes Corollary 2.7 in [29] from the translation-invariant case r = 1 to arbitrary distance parameters r ≥ 1:

Corollary 5

Denote by \(A_t\) or \(A_n\) the area of the smallest triangular cell in K of a line tessellation generated by a Poisson line process \(\eta_t\) or a binomial line process \(\zeta_n\) with distance parameter r ≥ 1, respectively. Then \(t^{6}A_t\) and \(n^{6}A_n\) both converge in distribution, as t → ∞ or n → ∞, to a Weibull random variable with survival function \(y\mapsto \exp (-\beta y^{1/2})\), y ≥ 0, where β is as in Corollary 4.
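In the planar case the functional under study is elementary to compute. The sketch below represents a line as a pair (δ, u) with unit normal u and signed distance δ to the origin, i.e. the set of x with ⟨u, x⟩ = δ, and returns the area of the triangle spanned by three such lines. It is an illustration only: it does not check whether the triangle lies in K, and the function names are hypothetical.

```python
import math
from itertools import combinations


def line_intersection(line_a, line_b):
    """Intersection point of two lines given as (delta, (u1, u2)); None if parallel."""
    (da, (ua1, ua2)), (db, (ub1, ub2)) = line_a, line_b
    det = ua1 * ub2 - ua2 * ub1
    if abs(det) < 1e-12:
        return None
    # Cramer's rule for the 2x2 system <u_a, x> = delta_a, <u_b, x> = delta_b.
    return ((da * ub2 - ua2 * db) / det, (ua1 * db - da * ub1) / det)


def triangle_area(line_a, line_b, line_c):
    """Area of the triangle generated by three lines (inf if two are parallel)."""
    p = line_intersection(line_a, line_b)
    q = line_intersection(line_a, line_c)
    r = line_intersection(line_b, line_c)
    if p is None or q is None or r is None:
        return math.inf
    cross = (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])
    return 0.5 * abs(cross)


def smallest_triangle_area(lines):
    """Minimum triangle area over all triples of lines (inf if fewer than three)."""
    return min((triangle_area(*triple) for triple in combinations(lines, 3)),
               default=math.inf)
```

For the lines x = 0, y = 0 and x + y = 1 the function `triangle_area` returns 0.5 up to rounding.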

3.3 Flat Triangles

So-called ley lines are expected alignments of a set of locations that are of geographical and/or historical interest, such as ancient monuments, megaliths and natural ridge-tops [4]. For this reason, there is some interest, for example in archaeology, in testing a point pattern for spatial randomness against an alternative favoring collinearities. We carry out this program in case of a planar Poisson or binomial point process and follow [31, Sect. 5], where the asymptotic behavior of the number of so-called flat triangles in a binomial point process has been investigated.

Let K be a convex body in the plane and let μ be a probability measure on K which has a continuous density φ with respect to the Lebesgue measure ℓ 2 | K restricted to K. By η t we denote a Poisson point process with intensity measure μ t : = tμ, t > 0, and by ζ n a binomial process of n ≥ 1 points which are independent and identically distributed according to μ. For a triple (x 1, x 2, x 3) of distinct points of η t or ζ n we let θ(x 1, x 2, x 3) be the largest angle of the triangle formed by x 1, x 2 and x 3. We can now build the point processes

and

on the positive real half-line. The interpretation is as follows: if for a triple \((x_1,x_2,x_3)\) in \(\eta_{t,\neq}^{3}\) or \(\zeta_{n,\neq}^{3}\) the value \(\pi -\theta (x_1,x_2,x_3)\) is small, then the triangle formed by these points is flat in the sense that its height on the longest side is small.
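The flatness functional π − θ can be computed directly from the three vertices. The following sketch uses `atan2` of the cross and dot products for each interior angle; the function names are hypothetical.

```python
import math


def interior_angle(apex, p, q):
    """Interior angle of the triangle (apex, p, q) at the vertex `apex`."""
    v = (p[0] - apex[0], p[1] - apex[1])
    w = (q[0] - apex[0], q[1] - apex[1])
    cross = v[0] * w[1] - v[1] * w[0]
    dot = v[0] * w[0] + v[1] * w[1]
    return abs(math.atan2(cross, dot))


def largest_angle(x1, x2, x3):
    """theta(x1, x2, x3): the largest interior angle of the triangle."""
    return max(interior_angle(x1, x2, x3),
               interior_angle(x2, x1, x3),
               interior_angle(x3, x1, x2))


def flatness(x1, x2, x3):
    """pi - theta(x1, x2, x3); small values indicate a nearly collinear triple."""
    return math.pi - largest_angle(x1, x2, x3)
```

As a sanity check, if the largest angle sits at a vertex \(x_3\) at height h above the segment from \(x_1\) to \(x_2\), with foot point \(sx_1 + (1-s)x_2\), then the flatness equals the sum of the two other angles, \(\arctan\bigl(h/((1-s)\|x_1-x_2\|)\bigr) + \arctan\bigl(h/(s\|x_1-x_2\|)\bigr)\), by elementary trigonometry.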

Corollary 6

Define

Further assume that the density φ is Lipschitz continuous. Then the rescaled point processes \(t^{3}\xi_t\) and \(n^{3}\hat{\xi }_{n}\) both converge in distribution to a homogeneous Poisson point process on \(\mathbb{R}_{+}\) with intensity β, as t → ∞ or n → ∞, respectively. In addition, there is a constant \(C_y > 0\) depending on K, φ and y such that

and

for all t ≥ 1, n ≥ 3 and \(m \in \mathbb{N}\) .

Proof

To apply Corollary 1 we have to consider the limit behavior of α t (y) and r t (y) for fixed y > 0, as t → ∞. For x 1, x 2 ∈ K and ɛ > 0 define A(x 1, x 2, ɛ) as the set of all x 3 ∈ K such that π −θ(x 1, x 2, x 3) ≤ ɛ. Then we have

Without loss of generality we can assume that x 3 is the vertex adjacent to the largest angle. We indicate this by writing x 3 = LA(x 1, x 2, x 3). We parametrize x 3 by its distance h to the line through x 1 and x 2 and the projection of x 3 onto that line, which can be represented as sx 1 + (1 − s)x 2 for some s ∈ [0, 1]. Writing x 3 = x 3(s, h), we obtain that

The sum of the angles at x 1 and x 2 is given by

Using, for x ≥ 0, the elementary inequality \(x - x^{2} \leq \arctan x \leq x\), we deduce that

Consequently, π −θ(x 1, x 2, x 3(s, h)) ≤ yt −γ is satisfied if

and cannot hold if

and t is sufficiently large. Let A y, t be the set of all x 1, x 2 ∈ K such that

Now the previous considerations yield that, for t sufficiently large and (x 1, x 2) ∈ A y, t ,

with \(R(x_1,x_2,s)\) satisfying the estimate \(\vert R(x_{1},x_{2},s)\vert \leq 2s(1 - s)\|x_{1} - x_{2}\|y^{2}t^{-2\gamma }\). For \((x_1,x_2)\notin A_{y,t}\) the right-hand side is an upper bound. The choice γ = 3 leads to

Note that \(\ell_2^{2}(K\setminus A_{y,t})\) is of order \(t^{-3}\) so that the first integral on the right-hand side is of the same order. By the Lipschitz continuity of the density φ there is a constant \(C_{\varphi} > 0\) such that

This implies that the third integral is of order \(t^{-3}\). Combined with the fact that also the second integral above is of order \(t^{-3}\), we see that there is a constant \(C_{y,1} > 0\) such that

for t ≥ 1.

For given x 1, x 2 ∈ K, we have that

with M = sup z ∈ K φ(z). By the same arguments as above, we see that the integral over all x 3 such that the largest angle is adjacent to x 3 is bounded by

where \(\mathop{\mathrm{diam}}\nolimits (K)\) stands for the diameter of K. The maximal angle is at \(x_1\) or \(x_2\) if \(x_3\) is contained in the union of two cones with opening angle \(2t^{-3}y\) and apices at \(x_1\) and \(x_2\), respectively. The integral over these \(x_3\) is bounded by \(2M\mathop{\mathrm{diam}}\nolimits (K)^{2}t^{-3}y\). Altogether, we obtain that

This estimate implies that, for any ℓ ∈ { 1, 2},

Since the upper bound behaves like \(t^{-\ell}\) for t ≥ 1, there is a constant \(C_{y,2} > 0\) such that

for t ≥ 1. Now an application of Corollary 1 concludes the proof in case of an underlying Poisson point process. The binomial case can be handled similarly using Corollary 2. □

Remark 6

We have assumed that the density φ is Lipschitz continuous. If this is not the case, one can still show that the rescaled point processes \(t^{3}\xi_t\) and \(n^{3}\hat{\xi }_{n}\) converge in distribution to a homogeneous Poisson point process on \(\mathbb{R}_{+}\) with intensity β. However, we are then no longer able to provide a rate of convergence for the associated order statistics \(M_t^{(m)}\).

Remark 7

In [31, Sect. 5] the asymptotic behavior of the number of flat triangles in a binomial point process has been investigated, while our focus here was on the angle statistic of such triangles. However, these two random variables are asymptotically equivalent so that Corollary 6 also delivers an alternative approach to the results in [31]. In addition, it allows one to deal with an underlying Poisson point process, where it provides rates of convergence in the case of a Lipschitz density.

3.4 Non-Intersecting k-Flats

Fix a space dimension d ≥ 3 and let k ≥ 1 be such that 2k < d. By G(d, k) let us denote the space of k-dimensional linear subspaces of \(\mathbb{R}^{d}\), which is equipped with a probability measure ς. In what follows we shall assume that ς is absolutely continuous with respect to the Haar probability measure on G(d, k). The space of k-dimensional affine subspaces of \(\mathbb{R}^{d}\) is denoted by A(d, k) and for t > 0 a translation-invariant measure μ t on A(d, k) is defined by the relation

where g ≥ 0 is a measurable function on A(d, k). We will use E and F to indicate elements of A(d, k), while L and M will stand for linear subspaces in G(d, k), see [11, formula (1)] in this book. We also put \(\mu =\mu_1\). For two fixed k-flats E, F ∈ A(d, k) we denote by \(d(E,F) =\inf \{\| x_{1} - x_{2}\|: x_{1} \in E,\,x_{2} \in F\}\) the distance of E and F. For almost all E and F it is realized by two uniquely determined points \(x_E \in E\) and \(x_F \in F\), i.e. \(d(E,F) =\| x_{E} - x_{F}\|\), and we let \(m(E,F) := (x_E + x_F)/2\) be the midpoint of the line segment joining \(x_E\) with \(x_F\).
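For lines in \(\mathbb{R}^{3}\) (the smallest admissible case k = 1, d = 3) the distance, the closest points and the midpoint can be computed in closed form. The sketch below assumes each line is given by a base point and a direction vector, a parametrization chosen here for convenience rather than the one used in the chapter; the function names are hypothetical.

```python
import math


def dot(a, b):
    """Euclidean inner product of two vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))


def closest_points(p1, a, p2, b):
    """Closest points x_E on E = p1 + span(a) and x_F on F = p2 + span(b)."""
    w = tuple(x - y for x, y in zip(p1, p2))
    aa, ab, bb = dot(a, a), dot(a, b), dot(b, b)
    aw, bw = dot(a, w), dot(b, w)
    denom = aa * bb - ab * ab
    if abs(denom) < 1e-12:
        raise ValueError("lines are parallel, hence not in general position")
    # Minimize |w + s*a - r*b|^2 over (s, r) via the normal equations.
    s = (ab * bw - bb * aw) / denom
    r = (aa * bw - ab * aw) / denom
    x_e = tuple(p + s * c for p, c in zip(p1, a))
    x_f = tuple(p + r * c for p, c in zip(p2, b))
    return x_e, x_f


def flat_distance(p1, a, p2, b):
    """d(E, F) = |x_E - x_F|."""
    x_e, x_f = closest_points(p1, a, p2, b)
    return math.dist(x_e, x_f)


def flat_midpoint(p1, a, p2, b):
    """m(E, F) = (x_E + x_F) / 2."""
    x_e, x_f = closest_points(p1, a, p2, b)
    return tuple((u + v) / 2 for u, v in zip(x_e, x_f))
```

For the x-axis and the line through (0, 0, 1) with direction (0, 1, 0), the distance is 1 and the midpoint is (0, 0, 0.5).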

Let \(K \subset \mathbb{R}^{d}\) be a convex body and let η t be a Poisson point process on A(d, k) with intensity measure μ t as defined in (7). We will speak about η t as a Poisson k-flat process and denote, more generally, the elements of A(d, k) or G(d, k) as k-flats. We will not treat the binomial case in what follows since the measures μ t are not finite. We notice that in view of [27, Theorem 4.4.5 (c)] any two k-flats of η t are almost surely in general position, a fact which from now on will be used without further comment.

Point processes of k-dimensional flats in \(\mathbb{R}^{d}\) have a long tradition in stochastic geometry and we refer to [6] or [27] as well as to [11] for general background material. Moreover, we mention the works [10, 26], which deal with distance measurements and the so-called proximity of Poisson k-flat processes and are close to what we consider here. While in these papers only mean values are considered, we are interested in the point process ξ t on \(\mathbb{R}_{+}\) defined by

for a fixed parameter a > 0. A particular case arises when a = 1. Then \(M_t^{(1)}\), for example, is the smallest distance between two k-flats from \(\eta_t\) that have their midpoint in K.

Corollary 7

Define

where [L,M] is the 2k-dimensional volume of a parallelepiped spanned by two orthonormal bases in L and M. Then, as t → ∞, \(t^{2a/(d-2k)}\xi_t\) converges in distribution to a Poisson point process on \(\mathbb{R}_{+}\) with intensity measure

Moreover, there is a constant C > 0 depending on K, ς and a such that

for any t ≥ 1, y ≥ 0 and \(m \in \mathbb{N}\) .

Proof

For y > 0 and t > 0 we have that

We abbreviate \(\delta := y^{1/a}t^{-\gamma /a}\) and evaluate the integral

For this, we define V := E + F and \(U := V^{\perp}\) and write E and F as \(E = L + x_1\) and \(F = M + x_2\) with L, M ∈ G(d, k) and \(x_1 \in L^{\perp}\), \(x_2 \in M^{\perp}\). Applying now the definition (7) of the measure μ and arguing along the lines of the proof of Theorem 4.4.10 in [27], we arrive at the expression

Substituting u = x 1 − x 2, v = (x 1 + x 2)∕2 (a transformation having Jacobian equal to 1), we find that

Since U has dimension d − 2k, transformation into spherical coordinates in U gives

Moreover,

since V = U ⊥ . Combining these facts with (8) we find that

and that

Consequently, choosing γ = 2a∕(d − 2k) we have that

For the remainder term r t (y) we write

This can be estimated along the lines of the proof of Theorem 3 in [30]. Namely, using that [ ⋅ , ⋅ ] ≤ 1 and writing diam(K) for the diameter of K, we find that

where we have used that γ = 2a∕(d − 2k). This puts us in the position to apply Corollary 1, which completes the proof. □

Remark 8

A particularly interesting case arises when the distribution ς coincides with the Haar probability measure on G(d, k). Then the double integral in the definition of β in Corollary 7 can be evaluated explicitly, namely we have

according to [13, Lemma 4.4].

Remark 9

Corollary 7 generalizes Theorem 4 in [30] (where the case a = 1 has been investigated) to general length-powers a > 0. However, it should be noticed that the set-up in [30] slightly differs from the one here. In [30] the intensity parameter t was kept fixed, whereas the set K was increased by dilations. But because of the scaling properties of a Poisson k-flat process and the a-homogeneity of \(d(E,F)^{a}\), one can translate one result into the other. Moreover, we refer to [14] for closely related results including directional constraints.

Remark 10

In [29] a similar problem has been addressed in the case where ς coincides with the Haar probability measure on G(d, k). For a pair \((E,F) \in \eta_{t,\neq}^{2}\) satisfying E ∩ K ≠ ∅ and F ∩ K ≠ ∅, the distance between E and F was measured by

and it has been shown in Theorem 2.1 ibidem that the associated point process

converges, after rescaling with \(t^{2/(d-2k)}\), towards the same Poisson point process as in Corollary 7 when ς is the Haar probability measure on G(d, k) and a = 1.

4 Proofs of the Main Results

4.1 Moment Formulas for Poisson U-Statistics

We call a Poisson functional S of the form

with \(k \in \mathbb{N}_{0}:= \mathbb{N} \cup \{ 0\}\) and \(f: \mathbb{X}^{k} \rightarrow \mathbb{R}\) a U-statistic of order k of η t , or a Poisson U-statistic for short (see [17]). For k = 0 we use the convention that f is a constant and S = f. In the following, we always assume that f is integrable. Moreover, without loss of generality we assume that f is symmetric since we sum over all permutations of a fixed k-tuple of points in the definition of S.
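For a finite realization, a Poisson U-statistic is a plain sum over ordered k-tuples of distinct points. The sketch below assumes that S is this sum of f over all such tuples, which matches the remark that every permutation of a fixed k-tuple appears; the function name is hypothetical.

```python
from itertools import permutations


def u_statistic(points, f, k):
    """Sum of f over all ordered k-tuples of distinct points of the sample.

    For k = 0 the convention S = f (a constant) applies; f is then called
    without arguments.
    """
    if k == 0:
        return f()
    return sum(f(*tup) for tup in permutations(points, k))
```

Because every unordered k-subset appears k! times in the sum, a symmetric f gives k! times the sum over unordered subsets, which is the source of normalizing factors of the form 1/k! when each subset is meant to be counted once.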

In order to compute mixed moments of Poisson U-statistics, we use the following notation. For \(\ell\in \mathbb{N}\) and \(n_{1},\mathop{\ldots },n_{\ell} \in \mathbb{N}_{0}\) we define \(N_{0} = 0\), \(N_{i} =\sum _{ j=1}^{i}n_{j},\ i \in \{ 1,\mathop{\ldots },\ell\}\), and

\[ J_{i} =\{ N_{i-1} + 1,\ldots,N_{i}\},\quad i \in \{ 1,\ldots,\ell\}. \]

Let \(\varPi (n_{1},\mathop{\ldots },n_{\ell})\) be the set of all partitions σ of \(\{1,\mathop{\ldots },N_{\ell}\}\) such that for any \(i \in \{ 1,\mathop{\ldots },\ell\}\) all elements of J i are in different blocks of σ. By | σ | we denote the number of blocks of σ. We say that two blocks B 1 and B 2 of a partition \(\sigma \in \varPi (n_{1},\mathop{\ldots },n_{\ell})\) intersect if there is an \(i \in \{ 1,\mathop{\ldots },\ell\}\) such that B 1 ∩ J i ≠ ∅ and B 2 ∩ J i ≠ ∅. A partition \(\sigma \in \varPi (n_{1},\mathop{\ldots },n_{\ell})\) with blocks \(B_{1},\mathop{\ldots },B_{\vert \sigma \vert }\) belongs to \(\tilde{\varPi }(n_{1},\mathop{\ldots },n_{\ell})\) if there are no nonempty sets \(M_{1},M_{2} \subset \{ 1,\mathop{\ldots },\vert \sigma \vert \}\) with M 1 ∩ M 2 = ∅ and \(M_{1} \cup M_{2} =\{ 1,\mathop{\ldots },\vert \sigma \vert \}\) such that for any i ∈ M 1 and j ∈ M 2 the blocks B i and B j do not intersect. Moreover, we define

If there are \(i,j \in \{ 1,\mathop{\ldots },\ell\}\) with n i ≠ n j , we have \(\varPi _{\neq }(n_{1},\mathop{\ldots },n_{\ell}) =\varPi (n_{1},\mathop{\ldots },n_{\ell})\).
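The partition classes can be explored by brute force for small parameters. The sketch below enumerates all partitions of {1, …, N_ℓ} and keeps those in which no block contains two elements of the same J_i. It assumes that J_i is the i-th block of consecutive indices {N_{i−1} + 1, …, N_i}; the function names are hypothetical.

```python
def set_partitions(elements):
    """Yield every partition of the list `elements` as a list of blocks."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for partition in set_partitions(rest):
        # Put `first` into each existing block in turn ...
        for i in range(len(partition)):
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]
        # ... or let it form a block of its own.
        yield [[first]] + partition


def index_sets(ns):
    """Assumed convention: J_i = {N_{i-1} + 1, ..., N_i} with N_i = n_1 + ... + n_i."""
    sets, start = [], 0
    for n in ns:
        sets.append(set(range(start + 1, start + n + 1)))
        start += n
    return sets


def admissible_partitions(ns):
    """Partitions of {1, ..., N_l} whose blocks meet every J_i in at most one element."""
    js = index_sets(ns)
    universe = list(range(1, sum(ns) + 1))
    return [
        partition
        for partition in set_partitions(universe)
        if all(len(set(block) & j) <= 1 for block in partition for j in js)
    ]


if __name__ == "__main__":
    for ns in ([1, 1], [2, 2], [3, 3]):
        by_blocks = {}
        for sigma in admissible_partitions(ns):
            by_blocks[len(sigma)] = by_blocks.get(len(sigma), 0) + 1
        print(ns, dict(sorted(by_blocks.items())))
```

For the parameters (k − 1, k − 1) with k = 3 and k = 4, the number of admissible partitions with exactly k − 1 blocks is 2 and 6, respectively, which agrees with the count (k − 1)! used later in the proof of Proposition 1.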

For \(\sigma \in \varPi (n_{1},\mathop{\ldots },n_{\ell})\) and \(f: \mathbb{X}^{N_{\ell}} \rightarrow \mathbb{R}\) we define \(f_{\sigma }: \mathbb{X}^{\vert \sigma \vert }\rightarrow \mathbb{R}\) as the function which arises by replacing in the arguments of f all variables belonging to the same block of σ by a new common variable. Since we are only interested in the integral of this new function in the sequel, the order of the new variables does not matter. For \(f^{(i)}: \mathbb{X}^{n_{i}} \rightarrow \mathbb{R}\), \(i \in \{ 1,\mathop{\ldots },\ell\}\), let \(\otimes _{i=1}^{\ell}f^{(i)}: \mathbb{X}^{N_{\ell}} \rightarrow \mathbb{R}\) be given by

\[ \big(\otimes _{i=1}^{\ell}f^{(i)}\big)(x_{1},\ldots,x_{N_{\ell}}) =\prod_{i=1}^{\ell}f^{(i)}(x_{N_{i-1}+1},\ldots,x_{N_{i}}). \]

The following lemma allows us to compute moments of Poisson U-statistics (see also [23]). Here and in what follows, a Poisson functional F = F(η t ) means a random variable depending only on the Poisson point process η t for some fixed t > 0.

Lemma 1

For \(\ell\in \mathbb{N}\) and \(f^{(i)} \in L_{s}^{1}(\mu _{t}^{k_{i}})\) with \(k_{i} \in \mathbb{N}_{0}\) , \(i = 1,\mathop{\ldots },\ell\) , such that

let

and let F be a bounded Poisson functional. Then

Proof

We can rewrite the product as

since points occurring in different sums on the left-hand side can be either equal or distinct. Now an application of the multivariate Mecke formula (see [18, formula (1.11)]) completes the proof of the lemma. □
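For orientation, the multivariate Mecke formula invoked here is commonly stated as follows. This is a sketch in the notation of this section, where g is a generic non-negative measurable function introduced only for illustration; the precise statement and its assumptions are those of [18, formula (1.11)].

```latex
\[
\mathbb{E}\sum_{(x_{1},\ldots,x_{k})\in \eta_{t,\neq}^{k}} g(x_{1},\ldots,x_{k},\eta_{t})
 =\int_{\mathbb{X}^{k}}\mathbb{E}\, g\Big(x_{1},\ldots,x_{k},\eta_{t} +\sum_{i=1}^{k}\delta_{x_{i}}\Big)\,
 \mu_{t}^{k}\big(\mathrm{d}(x_{1},\ldots,x_{k})\big)
\]
```

Taking g independent of the point configuration reduces the right-hand side to a plain integral with respect to \(\mu_{t}^{k}\), which is the form needed to compute expectations of Poisson U-statistics.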

4.2 Poisson Approximation of Poisson U-Statistics

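The key argument of the proof of Theorem 1 is a quantitative bound for the Poisson approximation of Poisson U-statistics which is established in this subsection. From now on we consider the Poisson U-statistic

\[ S_{A} = \frac{1}{k!}\sum_{(x_{1},\ldots,x_{k})\in \eta_{t,\neq}^{k}}\mathbb{1}\{\,f(x_{1},\ldots,x_{k}) \in A\}, \]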

where f is as in Sect. 2 and \(A \subset \mathbb{R}\) is measurable and bounded. We assume that k ≥ 2 since S A follows a Poisson distribution for k = 1 (see Sect. 2.3 in [16], for example). In the sequel, we use the abbreviation

It follows from the multivariate Mecke formula (see [18, formula (1.11)]) that

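In order to compare the distributions of two integer-valued random variables Y and Z, we use the so-called total variation distance \(d_{TV}\) defined by

\[ d_{TV}(Y,Z) =\sup_{B\subset \mathbb{Z}}\big|\mathbb{P}(Y \in B) - \mathbb{P}(Z \in B)\big|. \]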
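For integer-valued random variables, the supremum over sets B in the total variation distance is attained at B = {k : P(Y = k) > P(Z = k)}, so the distance equals half the ℓ¹ distance between the two probability mass functions. The following Python sketch, with hypothetical function names and probability mass functions passed as dictionaries, computes the distance both ways on a small support.

```python
import itertools
import math


def poisson_pmf(mean, cutoff):
    """Poisson probabilities on {0, ..., cutoff - 1}; the tail mass is dropped."""
    return {k: math.exp(-mean) * mean**k / math.factorial(k) for k in range(cutoff)}


def total_variation(p, q):
    """Half the l1 distance; valid when p and q are genuine probability mass functions."""
    support = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in support)


def total_variation_by_supremum(p, q):
    """Brute-force supremum of |P(Y in B) - P(Z in B)| over all subsets B (small supports only)."""
    support = sorted(set(p) | set(q))
    best = 0.0
    for r in range(len(support) + 1):
        for subset in itertools.combinations(support, r):
            gap = abs(sum(p.get(k, 0.0) - q.get(k, 0.0) for k in subset))
            best = max(best, gap)
    return best


if __name__ == "__main__":
    p = {0: 0.5, 1: 0.3, 2: 0.2}
    q = {0: 0.2, 1: 0.3, 3: 0.5}
    print(total_variation(p, q), total_variation_by_supremum(p, q))
    # Distance between two Poisson laws, truncated at 60 (an arbitrary cutoff).
    print(total_variation(poisson_pmf(1.0, 60), poisson_pmf(1.5, 60)))
```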

Proposition 1

Let S A be as above, let Y be a Poisson distributed random variable with mean s > 0 and define

Then there is a constant C ≥ 1 only depending on k such that

Remark 11

The inequality (9) still holds if Y is almost surely zero (such a Y can be interpreted as a Poisson distributed random variable with mean s = 0). In this case, we obtain by Markov’s inequality that

\[ d_{TV}(S_{A},Y ) = \mathbb{P}(S_{A} \geq 1) \leq \mathbb{E}[S_{A}] = s_{A}. \]

Our proof of Proposition 1 is a modification of the proof of Theorem 3.1 in [21]. It makes use of the special structure of \(S_{A}\) and improves the bound in [21] in the case of Poisson U-statistics. To prepare for what follows, we need to introduce some facts about the Chen–Stein method for Poisson approximation (compare with [3]). For a function \(f: \mathbb{N}_{0} \rightarrow \mathbb{R}\) let us define \(\Delta f(k) := f(k + 1) - f(k)\), \(k \in \mathbb{N}_{0}\), and \(\Delta^{2}f(k) := f(k + 2) - 2f(k + 1) + f(k)\), \(k \in \mathbb{N}_{0}\). For \(B \subset \mathbb{N}_{0}\) let \(f_{B}\) be the solution of the Chen–Stein equation

It is known (see Lemma 1.1.1 in [2]) that f B satisfies

where \(\|\cdot \|_{\infty }\) is the usual supremum norm.
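The difference operators Δ and Δ² and the identity underlying the Chen–Stein method can be checked numerically. The sketch below relies on the standard characterization that a random variable Y is Poisson distributed with mean λ if and only if E[λ g(Y + 1) − Y g(Y)] = 0 for all bounded g. The function names and the truncation level `cutoff` are assumptions made for illustration only.

```python
import math


def forward_difference(f):
    """Return the function k -> f(k + 1) - f(k)."""
    return lambda k: f(k + 1) - f(k)


def second_difference(f):
    """Return the function k -> f(k + 2) - 2 f(k + 1) + f(k)."""
    return lambda k: f(k + 2) - 2 * f(k + 1) + f(k)


def stein_expectation(g, mean, cutoff=200):
    """Approximate E[mean * g(Y + 1) - Y * g(Y)] for Y ~ Poisson(mean) by a truncated sum."""
    total = 0.0
    pmf = math.exp(-mean)  # P(Y = 0)
    for k in range(cutoff):
        total += pmf * (mean * g(k + 1) - k * g(k))
        pmf *= mean / (k + 1)  # update to P(Y = k + 1)
    return total


if __name__ == "__main__":
    square = lambda k: k * k
    print(forward_difference(square)(3), second_difference(square)(3))
    # For a bounded test function the Stein expectation vanishes up to rounding.
    print(stein_expectation(lambda k: 1.0 / (k + 1), 2.5))
```

Applying `forward_difference` twice gives the same values as `second_difference`, which is the relation Δ² = Δ∘Δ used implicitly in Taylor-type expansions.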

Besides the Chen–Stein method we need some facts concerning the Malliavin calculus of variations on the Poisson space (see [18]). First, the so-called integration by parts formula implies that

where D stands for the difference operator and \(L^{-1}\) is the inverse of the Ornstein–Uhlenbeck generator (this step requires that \(\mathbb{E}\int _{\mathbb{X}}(D_{x}S_{A})^{2}\,\mu _{t}(\text{d}x) <\infty\), which is a consequence of the calculations in the proof of Proposition 1). The following lemma (see Lemma 3.3 in [24]) implies that the difference operator applied to a Poisson U-statistic leads again to a Poisson U-statistic.

Lemma 2

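Let \(k \in \mathbb{N}\), \(f \in L_{s}^{1}(\mu_{t}^{k})\) and

\[ S =\sum_{(x_{1},\ldots,x_{k})\in \eta_{t,\neq}^{k}} f(x_{1},\ldots,x_{k}). \]

Then

\[ D_{x}S = k\sum_{(x_{1},\ldots,x_{k-1})\in \eta_{t,\neq}^{k-1}} f(x,x_{1},\ldots,x_{k-1}),\quad x \in \mathbb{X}. \]

Proof

It follows from the definition of the difference operator and the assumption that f is a symmetric function that

\[ D_{x}S =\sum_{(x_{1},\ldots,x_{k})\in (\eta_{t}+\delta_{x})_{\neq}^{k}} f(x_{1},\ldots,x_{k}) -\sum_{(x_{1},\ldots,x_{k})\in \eta_{t,\neq}^{k}} f(x_{1},\ldots,x_{k}) = k\sum_{(x_{1},\ldots,x_{k-1})\in \eta_{t,\neq}^{k-1}} f(x,x_{1},\ldots,x_{k-1}) \]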

for \(x \in \mathbb{X}\). This completes the proof. □

In order to derive an explicit formula for the combination of the difference operator and the inverse of the Ornstein–Uhlenbeck generator of S A , we define \(h_{\ell}: \mathbb{X}^{\ell} \rightarrow \mathbb{R}\), \(\ell\in \{ 1,\mathop{\ldots },k\}\), by

We shall see now that the operator \(-DL^{-1}\) applied to \(S_{A}\) can be expressed as a sum of Poisson U-statistics (see also Lemma 5.1 in [28]).

Lemma 3

For \(x \in \mathbb{X}\) ,

Proof

By Mehler’s formula (see Theorem 3.2 in [20] and also [18, Sect. 1.7]) we have

where \(\eta_{t}^{(s)}\), s ∈ [0, 1], is an s-thinning of \(\eta_{t}\) and \(\mathbb{P}_{(1-s)\mu _{t}}\) is the distribution of a Poisson point process with intensity measure \((1 - s)\mu_{t}\). Note in particular that \(\eta_{t}^{(s)} + \chi\) is a Poisson point process with intensity measure \(s\mu_{t} + (1 - s)\mu_{t} = \mu_{t}\). The last expression can be rewritten as

By the multivariate Mecke formula (see [18, formula (1.11)]), we obtain for the first term that

To evaluate the second term further, we notice that for an ℓ-tuple \((x_{1},\ldots,x_{\ell}) \in \eta_{t,\neq}^{\ell}\) the probability of surviving the s-thinning procedure is \(s^{\ell}\). Thus

for \(\ell\in \{ 1,\mathop{\ldots },k\}\). This leads to

Finally, we may interpret χ as (1 − s)-thinning of an independent copy of η t , in which each point has survival probability (1 − s). Then the multivariate Mecke formula ([18, formula (1.11)]) implies that

Together with

we see that

Applying now the difference operator to the last equation, we see that the first term does not contribute, whereas the second term can be handled by using Lemma 2. □

Now we are prepared for the proof of Proposition 1.

Proof (of Proposition 1)

Let Y A be a Poisson distributed random variable with mean s A > 0. The triangle inequality for the total variation distance implies that

A standard calculation shows that

so that it remains to bound

For a fixed \(B \subset \mathbb{N}_{0}\) it follows from (10) and (12) that

Now a straightforward computation using a discrete Taylor-type expansion as in [21] shows that

Together with (11), we obtain that

with

Hence, we have

where the remainder term satisfies \(|R_{x}| \leq 2\varepsilon_{1,A}\max\{0,D_{x}S_{A} - 1\}\). Together with (13) and \(-D_{x}L^{-1}S_{A} \geq 0\), which follows from Lemma 3, we obtain that

It follows from Lemmas 2 and 3 that

Consequently, we can deduce from Lemma 1 that

For the particular choice ℓ = k and | σ | = k − 1 we have

Since there are (k − 1)! partitions σ ∈ Π(k − 1, k − 1) with | σ | = k − 1, we obtain that

Now (11) and the definition of ϱ A imply that, for \(\ell\in \{ 1,\mathop{\ldots },k\}\),

Hence, the first summand above is bounded by

By (11) we see that

and the multivariate Mecke formula for Poisson point processes (see [18, formula (1.11)]) leads to

where for a subset I = { i 1, …, i j } ⊂ { 1, …, k − 1} we use the shorthand notation x I for \((x_{i_{1}},\ldots,x_{i_{j}})\). Hence,

This implies that

For the second term in (14) we have

It follows from Lemmas 2 and 1 that

Since there are (k − 1)! partitions with | σ | = k − 1 and for each of them

this leads to

so that Lemma 1 yields

From the previous estimates, we can deduce that

Combining (14) with (15) and (16) shows that

which concludes the proof. □

Remark 12

As already discussed in the introduction, the proof of Proposition 1, the main tool for the proof of Theorem 1, is different from that given in [29]. One of the differences is Lemma 3, which provides an explicit representation for \(-D_{x}L^{-1}S_{A}\) based on Mehler’s formula. We took considerable advantage of this in the proof of Proposition 1 and remark that the proof of the corresponding result in [29] uses the chaotic decomposition of U-statistics and the product formula for multiple stochastic integrals (see [18]). Another difference is that our proof here does not make use of the estimates established by the Malliavin–Chen–Stein method in [21]. Instead, we directly manipulate the Chen–Stein equation for Poisson approximation and in this way improve the rate of convergence compared to [29]. A different method to show Theorems 1 and 2 is the content of the recent paper [7].

4.3 Poisson Approximation of Classical U-Statistics

In this section we consider U-statistics based on a binomial point process ζ n defined as

where f is as in Sect. 2 and \(A \subset \mathbb{R}\) is bounded and measurable. Recall that in the context of a binomial point process ζ n we assume that \(\mu (\mathbb{X}) = 1\). Denote as in the previous section by \(s_{A}:= \mathbb{E}[S_{A}]\) the expectation of S A . Notice that

with \(h(x_{1},\ldots,x_{k}) = (k!)^{-1}\mathbb{1}\{\,f(x_{1},\ldots,x_{k}) \in A\}\).
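With this choice of h, the statistic counts the k-element subsets of the sample whose f-value falls into A, since the factor 1/k! compensates for the k! orderings of each subset. A minimal Python sketch of both ways of counting follows; the function names, the planar sample points and the interval A = (0, 0.5] are hypothetical choices for illustration.

```python
import itertools
import math


def distance(p, q):
    """Euclidean distance in the plane, a symmetric kernel of order k = 2."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


def count_subsets(points, f, k, in_A):
    """Number of k-element subsets {x_1, ..., x_k} of the sample with f(x_1, ..., x_k) in A."""
    return sum(1 for subset in itertools.combinations(points, k) if in_A(f(*subset)))


def count_via_ordered_tuples(points, f, k, in_A):
    """Same count as a sum over ordered k-tuples divided by k! (requires symmetric f)."""
    hits = sum(1 for tup in itertools.permutations(points, k) if in_A(f(*tup)))
    return hits // math.factorial(k)


if __name__ == "__main__":
    sample = [(0.0, 0.0), (0.1, 0.0), (1.0, 1.0), (1.0, 1.2)]
    in_A = lambda value: 0.0 < value <= 0.5
    print(count_subsets(sample, distance, 2, in_A),
          count_via_ordered_tuples(sample, distance, 2, in_A))
```

The subset form corresponds to a sum over index sets I ⊂ {1, …, n} with | I | = k, which is convenient when dependence between summands is analysed through overlapping index sets.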

Proposition 2

Let S A be as above and let Y be a Poisson distributed random variable with mean s > 0 and define

Then there is a constant C ≥ 1 only depending on k such that

Proof

By the same arguments as at the beginning of the proof of Proposition 1 it is sufficient to assume that \(s = s_{A}\) in what follows. To simplify the presentation we put \(N := \{I \subset \{ 1,\ldots,n\}: \vert I\vert = k\}\) and rewrite \(S_{A}\) as

where X 1, …, X n are i.i.d. random elements in \(\mathbb{X}\) with distribution μ and where X I is shorthand for \((X_{i_{1}},\ldots,X_{i_{k}})\) if I = { i 1, …, i k }. In this situation it follows from Theorem 2 in [1] that

Since \(s_{A} = \mathbb{E}[S_{A}] = \frac{(n)_{k}} {k!} \mathbb{P}(\,f(X_{1},\ldots,X_{k}) \in A)\), we have that

For the second term we find that

Putting C: = 2k k! proves the claim. □

4.4 Proofs of Theorems 1 and 2 and Corollaries 1 and 2

Proof (of Theorem 1)

We define the set classes

and

From [15, Theorem 16.29] it follows that \((t^{\gamma}\xi_{t})_{t>0}\) converges in distribution to a Poisson point process ξ with intensity measure ν if

and

Note that \(\mathcal{I} \subset \mathcal{V}\) and that every set \(V \in \mathcal{V}\) can be represented in the form

For \(V \in \mathcal{V}\) we define the Poisson U-statistic

which has expectation

Since ξ(V) is Poisson distributed with mean \(\nu(V) =\sum_{i=1}^{n}\nu((a_{i},b_{i}])\), it follows from Proposition 1 that

with \(y_{\max} :=\max\{\vert a_{1}\vert,\vert b_{n}\vert\}\) and C ≥ 1. Now, assumptions (2) and (3) yield that

Consequently, the conditions (18) and (19) are satisfied so that \((t^{\gamma}\xi_{t})_{t>0}\) converges in distribution to ξ. Choosing V = (0, y] and using the fact that \(t^{\gamma}M_{t}^{(m)} > y\) is equivalent to \(S_{(0,y],t} < m\) leads to the first inequality in Theorem 1. The second one follows analogously from V = (−y, 0] and by using the equivalence of \(t^{\gamma}M_{t}^{(-m)} \geq y\) and \(S_{(-y,0],t} < m\). □
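The equivalence between an event for the m-th order statistic and an event for a counting variable, used at the end of the proof, is elementary and can be checked on a finite list of positive values. The sketch below uses hypothetical function names and assumes the convention that the m-th smallest value equals +∞ when fewer than m values exist.

```python
import math


def mth_smallest(values, m):
    """m-th smallest value, with the assumed convention +inf if fewer than m values exist."""
    ordered = sorted(values)
    return ordered[m - 1] if m <= len(ordered) else math.inf


def count_in_interval(values, y):
    """Number of values falling into the interval (0, y]."""
    return sum(1 for v in values if 0 < v <= y)


def equivalence_holds(values, m, y):
    """Check that {m-th smallest value > y} and {count in (0, y] < m} coincide."""
    return (mth_smallest(values, m) > y) == (count_in_interval(values, y) < m)


if __name__ == "__main__":
    values = [0.3, 1.2, 0.7, 0.7, 2.5]
    checks = [equivalence_holds(values, m, y)
              for m in range(1, 8)
              for y in (0.1, 0.3, 0.7, 1.0, 3.0)]
    print(all(checks))
```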

Proof (of Corollary 1)

Theorem 1 with ν defined as in (5) yields the assertions of Corollary 1. □

Proof (of Theorem 2 and Corollary 2)

Since the proofs are similar to those of Theorem 1 and Corollary 1, we skip the details. □

References

1. Barbour, A.D., Eagleson, G.K.: Poisson convergence for dissociated statistics. J. R. Stat. Soc. B 46, 397–402 (1984)

2. Barbour, A.D., Holst, L., Janson, S.: Poisson Approximation. Oxford University Press, Oxford (1992)

3. Bourguin, S., Peccati, G.: The Malliavin-Stein method on the Poisson space. In: Peccati, G., Reitzner, M. (eds.) Stochastic Analysis for Poisson Point Processes: Malliavin Calculus, Wiener-Ito Chaos Expansions and Stochastic Geometry. Bocconi & Springer Series, vol. 7, pp. 185–228. Springer, Cham (2016)

4. Broadbent, S.: Simulating the ley-hunter. J. R. Stat. Soc. Ser. A (Gen) 143, 109–140 (1980)

5. Calka, P., Chenavier, N.: Extreme values for characteristic radii of a Poisson-Voronoi tessellation. Extremes 17, 359–385 (2014)

6. Chiu, S.N., Stoyan, D., Kendall, W.S., Mecke, J.: Stochastic Geometry and its Applications, 3rd edn. Wiley, Chichester (2013)

7. Decreusefond, L., Schulte, M., Thäle, C.: Functional Poisson approximation in Kantorovich-Rubinstein distance with applications to U-statistics and stochastic geometry. Accepted for publication in Ann. Probab. (2015)

8. Hörrmann, J., Hug, D.: On the volume of the zero cell of a class of isotropic Poisson hyperplane tessellations. Adv. Appl. Probab. 46, 622–642 (2014)

9. Hörrmann, J., Hug, D., Reitzner, M., Thäle, C.: Poisson polyhedra in high dimensions. Adv. Math. 281, 1–39 (2015)

10. Hug, D., Last, G., Weil, W.: Distance measurements on processes of flats. Adv. Appl. Probab. 35, 70–95 (2003)

11. Hug, D., Reitzner, M.: Introduction to stochastic geometry. In: Peccati, G., Reitzner, M. (eds.) Stochastic Analysis for Poisson Point Processes: Malliavin Calculus, Wiener-Ito Chaos Expansions and Stochastic Geometry. Bocconi & Springer Series, vol. 7, pp. 145–184. Springer/Bocconi University Press, Milan (2016)

12. Hug, D., Schneider, R.: Asymptotic shapes of large cells in random tessellations. Geom. Funct. Anal. 17, 156–191 (2007)

13. Hug, D., Schneider, R., Schuster, R.: Integral geometry of tensor valuations. Adv. Appl. Math. 41, 482–509 (2008)

14. Hug, D., Thäle, C., Weil, W.: Intersection and proximity for processes of flats. J. Math. Anal. Appl. 426, 1–42 (2015)

15. Kallenberg, O.: Foundations of Modern Probability, 2nd edn. Springer, New York (2001)

16. Kingman, J.F.C.: Poisson Processes. Oxford University Press, Oxford (1993)

17. Lachièze-Rey, R., Reitzner, M.: U-statistics in stochastic geometry. In: Peccati, G., Reitzner, M. (eds.) Stochastic Analysis for Poisson Point Processes: Malliavin Calculus, Wiener-Ito Chaos Expansions and Stochastic Geometry. Bocconi & Springer Series, vol. 7, pp. 229–253. Springer, Cham (2016)

18. Last, G.: Stochastic analysis for Poisson processes. In: Peccati, G., Reitzner, M. (eds.) Stochastic Analysis for Poisson Point Processes: Malliavin Calculus, Wiener-Ito Chaos Expansions and Stochastic Geometry. Bocconi & Springer Series, vol. 7, pp. 1–36. Springer, Milan (2016)

19. Last, G., Penrose, M., Schulte, M., Thäle, C.: Moments and central limit theorems for some multivariate Poisson functionals. Adv. Appl. Probab. 46, 348–364 (2014)

20. Last, G., Peccati, G., Schulte, M.: Normal approximation on Poisson spaces: Mehler’s formula, second order Poincaré inequality and stabilization. Accepted for publication in Probab. Theory Relat. Fields (2015)

21. Peccati, G.: The Chen-Stein method for Poisson functionals. arXiv: 1112.5051 (2011)

22. Penrose, M.: Random Geometric Graphs. Oxford University Press, Oxford (2003)

23. Privault, N.: Combinatorics of Poisson stochastic integrals with random integrands. In: Peccati, G., Reitzner, M. (eds.) Stochastic Analysis for Poisson Point Processes: Malliavin Calculus, Wiener-Ito Chaos Expansions and Stochastic Geometry. Bocconi & Springer Series, vol. 7, pp. 37–80. Springer, Cham (2016)

24. Reitzner, M., Schulte, M.: Central limit theorems for U-statistics of Poisson point processes. Ann. Probab. 41, 3879–3909 (2013)

25. Reitzner, M., Schulte, M., Thäle, C.: Limit theory for the Gilbert graph. arXiv: 1312.4861 (2013)

26. Schneider, R.: A duality for Poisson flats. Adv. Appl. Probab. 31, 63–68 (1999)

27. Schneider, R., Weil, W.: Stochastic and Integral Geometry. Springer, Berlin (2008)

28. Schulte, M.: Normal approximation of Poisson functionals in Kolmogorov distance. J. Theoret. Probab. 29, 96–117 (2016)

29. Schulte, M., Thäle, C.: The scaling limit of Poisson-driven order statistics with applications in geometric probability. Stoch. Proc. Appl. 122, 4096–4120 (2012)

30. Schulte, M., Thäle, C.: Distances between Poisson k-flats. Methodol. Comput. Appl. Probab. 16, 311–329 (2014)

31. Silverman, B., Brown, T.: Short distances, flat triangles and Poisson limits. J. Appl. Probab. 15, 815–825 (1978)

32. Surgailis, D.: On multiple Poisson stochastic integrals and associated Markov semigroups. Probab. Math. Statist. 3, 217–239 (1984)

Copyright information

© 2016 Springer International Publishing Switzerland

About this chapter

Cite this chapter

Schulte, M., Thäle, C. (2016). Poisson Point Process Convergence and Extreme Values in Stochastic Geometry. In: Peccati, G., Reitzner, M. (eds) Stochastic Analysis for Poisson Point Processes. Bocconi & Springer Series, vol 7. Springer, Cham. https://doi.org/10.1007/978-3-319-05233-5_8

DOI: https://doi.org/10.1007/978-3-319-05233-5_8

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-05232-8

Online ISBN: 978-3-319-05233-5

eBook Packages: Mathematics and Statistics (R0)