Abstract

In this paper, we study the isotropic–nematic phase transition for the nematic liquid crystal based on the Landau–de Gennes \(\mathbf {Q}\)-tensor theory. We justify the limit from the Landau–de Gennes flow to a sharp interface model: in the isotropic region, \(\mathbf {Q}\equiv 0\); in the nematic region, the \(\mathbf {Q}\)-tensor is constrained to the manifold \(\mathcal {N}=\{s_+(\mathbf {n}\otimes \mathbf {n}-\frac{1}{3}\mathbf {I}), \mathbf {n}\in {\mathbb {S}^2}\}\) with \(s_+\) a positive constant, and the evolution of the alignment vector field \(\mathbf {n}\) obeys the harmonic map heat flow, while the interface separating the isotropic and nematic regions evolves by the mean curvature flow. This problem can be viewed as a concrete but representative case of the Rubinstein–Sternberg–Keller problem introduced in Rubinstein et al. (SIAM J. Appl. Math. 49:116–133, 1989; SIAM J. Appl. Math. 49:1722–1733, 1989).


1 Introduction

1.1 Isotropic–Nematic Phase Transition

Liquid crystal is a state of matter between liquid and solid, in which molecules tend to align along a preferred direction. There are several phases in liquid crystals. One of the most common is the nematic phase, in which the molecules tend to have the same alignment, but their positions are not correlated. Transitions between different phases give rise to a variety of mathematical questions of great interest. In this paper, we are concerned with the isotropic–nematic phase transition problem.

In physics, different order parameters are used to describe the anisotropic behavior of liquid crystals. The simplest one is the vector theory, which uses a unit vector field \(\mathbf {n}(x)\) to describe the locally preferred alignment of liquid crystal molecules near the material point x. Onsager introduced the molecular theory, in which the orientational distribution function \(f(x,\mathbf {m})\) describes the number density of molecules whose orientation is parallel to \(\mathbf {m}\) at the material point x. The \(\mathbf {Q}\)-tensor theory uses a symmetric traceless \(3\times 3\) matrix \(\mathbf {Q}\) to describe the alignment behavior of liquid crystals. Physically, \(\mathbf {Q}\) can be understood as the second moment of f:

$$\begin{aligned} \mathbf {Q}(x)=\int _{\mathbb {S}^2}\left( \mathbf {m}\otimes \mathbf {m}-\frac{1}{3}\mathbf {I}\right) f(x,\mathbf {m})\,\mathrm {d}\mathbf {m}. \end{aligned}$$

Since the order tensor \(\mathbf {Q}\) vanishes when f is the probability density \(\frac{1}{4\pi }\) for the isotropic phase, the tensor \(\mathbf {Q}\) measures how the second moments of a given probability density deviate from the isotropic value. Thus, it is convenient to use the \(\mathbf {Q}\)-tensor theory to model the isotropic–nematic phase transition problem. More precisely, we consider the following dynamical Landau–de Gennes model in \(\Omega \times (0,T), \Omega \subseteq \mathbb {R}^3\):

where

Equation (1.1) can be regarded as the gradient flow of the following Landau–de Gennes energy functional:

where the elastic energy density \(|\nabla \mathbf {Q}|^2\triangleq \sum \nolimits _{1\le i,j,k\le 3}|\partial _k\mathbf {Q}_{ij}|^2\), and the bulk energy density \(F(\mathbf {Q})\) takes the following form:

where \(b, c>0\) are material-dependent constants, and a depends on the material and the temperature. It is easy to check that \(f(\mathbf {Q})=F'(\mathbf {Q})\).

In general, \(F(\mathbf {Q})\) may attain its (local) minimum at two sets:

In the Landau–de Gennes theory, \(\mathbf {Q}=0\) corresponds to the isotropic phase, which means no orientational order, while \(\mathbf {Q}=s_+(\mathbf {n}\otimes \mathbf {n}-\frac{1}{3}\mathbf {I})\) represents the nematic phase and \(\mathbf {n}\) is the local orientation direction of liquid crystal molecules. We are interested in the case that the isotropic and nematic phases have equal energy, that is

which is equivalent to

In physics, it means that the temperature is at the isotropic–nematic transition temperature. In this case, we have \(s_+=\sqrt{\frac{3a}{c}}\).
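The equal-energy condition can be checked numerically. The following Python sketch is only a sanity check under stated assumptions: the displayed formulas for \(F\) and \(f\) are not reproduced in this text, so the code assumes the standard quartic form \(F(\mathbf {Q})=\frac{a}{2}\mathrm {Tr}(\mathbf {Q}^2)-\frac{b}{3}\mathrm {Tr}(\mathbf {Q}^3)+\frac{c}{4}(\mathrm {Tr}(\mathbf {Q}^2))^2\) and the transition condition \(b^2=27ac\), which is consistent with \(s_+=\sqrt{3a/c}\). The function names and the parameter values \(a=1\), \(c=3\) are illustrative choices.

```python
import math

I3 = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]

def matmul(A, B):
    """Ordinary product of two 3x3 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def trace(A):
    return A[0][0] + A[1][1] + A[2][2]

def uniaxial(n, s):
    """Q = s (n (x) n - I/3) for a unit vector n."""
    return [[s * (n[i] * n[j] - I3[i][j] / 3.0) for j in range(3)]
            for i in range(3)]

def bulk_energy(Q, a, b, c):
    """Assumed form: F(Q) = a/2 tr(Q^2) - b/3 tr(Q^3) + c/4 (tr(Q^2))^2."""
    Q2 = matmul(Q, Q)
    Q3 = matmul(Q2, Q)
    return 0.5 * a * trace(Q2) - b / 3.0 * trace(Q3) + 0.25 * c * trace(Q2) ** 2

def bulk_force(Q, a, b, c):
    """Assumed f(Q) = aQ - bQ^2 + c|Q|^2 Q + (b/3)|Q|^2 I (traceless gradient of F)."""
    Q2 = matmul(Q, Q)
    norm2 = trace(Q2)
    return [[a * Q[i][j] - b * Q2[i][j] + c * norm2 * Q[i][j]
             + b / 3.0 * norm2 * I3[i][j] for j in range(3)] for i in range(3)]

# Illustrative parameters at the assumed transition condition b^2 = 27ac.
a, c = 1.0, 3.0
b = math.sqrt(27.0 * a * c)
s_plus = math.sqrt(3.0 * a / c)
n = [0.0, 0.0, 1.0]

energy_nematic = bulk_energy(uniaxial(n, s_plus), a, b, c)
energy_isotropic = bulk_energy(uniaxial(n, 0.0), a, b, c)
energy_barrier = bulk_energy(uniaxial(n, 0.5 * s_plus), a, b, c)
force_max = max(abs(x) for row in bulk_force(uniaxial(n, s_plus), a, b, c)
                for x in row)
```

Under these assumptions, the two wells \(\mathbf {Q}=0\) and \(\mathbf {Q}=s_+(\mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I})\) have the same energy, \(f\) vanishes on the nematic well, and the energy is strictly positive at the intermediate order parameter \(s_+/2\).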

The statics and dynamics of the one-dimensional isotropic–nematic interface have been studied in [11, 17,18,19]. For the higher-dimensional case, it has been derived in [12] by the asymptotic expansion method (see also [5] and [21] for some related cases) that the interface, referred to as \(\Gamma\), which separates the isotropic and nematic regions, evolves by the well-known mean curvature flow. In the isotropic region, denoted by \(\Omega ^-\), \(\mathbf {Q}\equiv 0\), and there is no dynamics. In the nematic region, denoted by \(\Omega ^+\), the \(\mathbf {Q}\)-tensor is constrained to the manifold \(\mathcal {N}\), and the evolution of the alignment vector field \(\mathbf {n}\) obeys the harmonic map heat flow:

Here, \(\nu\) is the unit normal vector to \(\Gamma\), V is the normal velocity of \(\Gamma\), \(\kappa\) is the mean curvature, and \(\sigma\) is a constant representing the surface tension.

The goal of this paper is to justify the asymptotic limit \(\varepsilon \rightarrow 0\) of the system (1.1) to the system (1.4). In [16], under the hypothesis of radial symmetry, Majumdar–Milewski–Spicer analyzed this problem in a rigorous mathematical framework, and they proved that an interface can be generated by a large class of initial data and propagates according to the mean curvature.

A more general problem is to consider the Landau–de Gennes energy of the form:

where \(L_2\) is an elastic coefficient characterizing the elastic anisotropy of liquid crystal materials, and is usually not zero. The effect of the \(L_2\) term on the isotropic–nematic interface has been investigated in several studies [14, 17,18,19]. For example, when \(L_2>0\), the uniaxial assumption near the interface is not valid and biaxiality should be taken into account. In [17], it has been proved that the uniaxial solutions remain stable when \(L_2<0\). In [12], the authors derived the sharp interface model by formal matched asymptotic expansion, where the Neumann-type boundary condition should be replaced by a strong anchoring condition, that is, \(\mathbf {n}=\nu\) on \(\Gamma\). However, the rigorous analysis is very challenging.

1.2 Rubinstein–Sternberg–Keller’s Problem

Due to the physical importance and mathematical interests and challenges, the phase transition problems have attracted a great deal of interest in analysis and numerical simulations. The simplest model for phase transition is the Allen–Cahn equation:

where u is a scalar function and F(u) is a double-well potential (a simple choice is \(F(u)=(u^2-1)^2/4\)). This model was used by Allen–Cahn [2] to model the motion of antiphase boundaries in crystalline solids. It is well known that, as \(\varepsilon \rightarrow 0\), the domain separates into two regions, where \(u\rightarrow 1\) and \(u\rightarrow -1\), respectively, and the interface between these two regions moves by the mean curvature flow. This asymptotic limit has been rigorously analyzed by different authors via various approaches (see [3, 4, 7, 9, 10, 13]). In particular, the authors of [9] used asymptotic expansions of high order to construct an approximate solution and then estimated the error between the true solution and the approximate solution with the help of the spectrum of a linearized operator. Following the lines of [9], Alikakos–Bates–Chen proved in [1] that the Cahn–Hilliard equation approximates the Hele–Shaw problem.
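To illustrate the transition layer between \(u=-1\) and \(u=1\), the following Python sketch checks that \(u(z)=\tanh (z/\sqrt{2})\) solves the one-dimensional stationary equation \(u''=F'(u)\) for the double well \(F(u)=(u^2-1)^2/4\). This is a well-known fact about the Allen–Cahn profile rather than a statement taken from this paper, and it presumes the usual scaling in which the stretched variable z is the distance to the interface divided by \(\varepsilon\). The second derivative is approximated by a central difference, so the residual is small but not exactly zero.

```python
import math

def F_prime(u):
    """Derivative of the double well F(u) = (u^2 - 1)^2 / 4."""
    return u ** 3 - u

def profile(z):
    """Candidate transition profile connecting -1 (z -> -inf) to 1 (z -> +inf)."""
    return math.tanh(z / math.sqrt(2.0))

def profile_second_derivative(z, h=1e-3):
    """Central-difference approximation of u''(z)."""
    return (profile(z + h) - 2.0 * profile(z) + profile(z - h)) / h ** 2

# Residual of u'' = F'(u) at a few sample points of the stretched variable.
sample_points = [-3.0, -1.0, 0.0, 0.5, 2.0]
residuals = [abs(profile_second_derivative(z) - F_prime(profile(z)))
             for z in sample_points]
max_residual = max(residuals)
```

The profile approaches \(\pm 1\) exponentially fast, which is the scalar analogue of the exponential matching between inner and outer expansions used later in the construction.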

In [20, 21], Rubinstein–Sternberg–Keller introduced the following equations:

to study certain chemical reaction–diffusion processes, where \(\Omega\) is a subset of \(\mathbf {R}^m\), \(u:\Omega \rightarrow \mathbf {R}^k\) is a phase-indicator function, and \(F(u): \mathbf {R}^k\rightarrow \mathbf {R}\) is a bulk energy function which can attain its minimum on two (or even more) disjoint connected sub-manifolds of \(\mathbf {R}^k\). Based on the formal asymptotic expansion, they found that the interface moves by its mean curvature and u tends to a harmonic map heat flow (into the sub-manifolds) away from the interface when \(\varepsilon \rightarrow 0\).

To the authors’ knowledge, rigorous mathematical results on the limit \(\varepsilon \rightarrow 0\) for the vector-valued Rubinstein–Sternberg–Keller problem (1.7) are quite few. Some preliminary analysis was done by Bronsard and Stoth [5] for \(k=2\) under the radially symmetric setting, in which the Neumann boundary condition on the interface is also derived. The study of the asymptotic limit \(\varepsilon \rightarrow 0\) for minimizers of the time-independent problem was carried out by Lin et al. [15].

Although the problems (1.1) and (1.7) arise from different physical motivations, the system (1.1) provides a special, but concrete and physically relevant, example of the system (1.7). Therefore, our work can be viewed as a first attempt at the rigorous analysis of the asymptotic limit \(\varepsilon \rightarrow 0\) for the general time-dependent problem (1.7).

Indeed, since a symmetric traceless \(3\times 3\) matrix \(\mathbf {Q}\) can be regarded as a vector with five independent variables, the problem (1.1) can be viewed as a special case of (1.7), where \(k=5\), and the bulk energy density F attains its minimum at \(u=0\) and on a two-dimensional sub-manifold \(\mathcal {N}\). Although the dimension k and the energy function F are specified, the main feature of the problem (1.7) is preserved: the minima of F are attained not just at isolated points but at a point and on a manifold. This fact induces a non-trivial dynamics, i.e., a harmonic map heat flow into \(\mathcal {N}\), in one bulk region. As we will show, the high dimensionality of the minimum manifold brings some serious difficulties into our problem. Therefore, understanding the dynamics of the isotropic–nematic interface will shed light on the Rubinstein–Sternberg–Keller problem (1.7) with general F(u).
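The identification of \(\mathbf {Q}\) with a vector in \(\mathbf {R}^5\) can be made concrete as follows. The Python sketch below uses one possible choice of five independent entries, namely \((Q_{11},Q_{22},Q_{12},Q_{13},Q_{23})\); this particular parameterization and the helper names are assumptions made for illustration only, and any other linear coordinate system on the space of symmetric traceless matrices would serve equally well.

```python
def to_vec5(Q):
    """Five independent entries of a symmetric traceless 3x3 matrix."""
    return [Q[0][0], Q[1][1], Q[0][1], Q[0][2], Q[1][2]]

def from_vec5(v):
    """Rebuild the matrix; Q33 is fixed by the traceless constraint."""
    q11, q22, q12, q13, q23 = v
    return [[q11, q12, q13],
            [q12, q22, q23],
            [q13, q23, -(q11 + q22)]]

def uniaxial(n, s):
    """Q = s (n (x) n - I/3) for a unit vector n."""
    return [[s * (n[i] * n[j] - (1.0 / 3.0 if i == j else 0.0))
             for j in range(3)] for i in range(3)]

# A point of the nematic manifold N with s_+ = 1 (illustrative value).
Q = uniaxial([0.0, 0.6, 0.8], 1.0)
v = to_vec5(Q)
Q_back = from_vec5(v)
round_trip_error = max(abs(Q[i][j] - Q_back[i][j])
                       for i in range(3) for j in range(3))
```

In these coordinates the isotropic phase is the origin of \(\mathbf {R}^5\), while the nematic well \(\mathcal {N}\) is the image of the unit sphere under \(\mathbf {n}\mapsto s_+(\mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I})\), with \(\mathbf {n}\) and \(-\mathbf {n}\) mapped to the same point.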

1.3 Main Results

We consider the whole space case \(\Omega =\mathbb {R}^3\) or the periodic domain case \(\Omega =\mathbb {T}^3\).

Let \((\mathbf {n},\Gamma )\) be a smooth solution to the system (1.4). For \(t\in [0,T]\), we set \(\Gamma _t=\Gamma \times \{t\}\). Let \(\delta _0\le \min \{1/2, (2\Vert \Gamma _t\Vert _{C^2})^{-1}\}\), and for \(\delta \le \delta _0\), we define

We can extend \(\mathbf {n}\) to be a smooth direction field in \(\Gamma _t(\delta )\).

Let \(\varphi (x,t)\) be the signed distance to \(\Gamma _t\). Then, we have

Since \(\Gamma _t\) evolves according to the mean curvature, we have

We also introduce

Our first result is the existence of approximate solutions to (1.1) up to arbitrary order in \(\varepsilon\), which is based on the matched asymptotic method.

Theorem 1.1

Given a smooth solution \((\mathbf {n},\Gamma )\) in \(\Omega ^+\times [0,T]\) to (1.4); then, for any \(k\in \mathbb {N}\), there exists an approximate solution \(\mathbf {Q}^{[k]}(x,t)\) which is close to \(s_+(\mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I})\) and 0 in \(\Omega ^+\backslash \Gamma (\delta )\) and \(\Omega ^-\backslash \Gamma (\delta )\), respectively, and satisfies

where

In what follows, we let \(\mathbf {Q}_A^{\varepsilon }=\mathbf {Q}^{[k]}\) be the approximate solution. To estimate the error between the real solution and the approximate solution, the key ingredient is to establish the following spectral condition for a linearized operator.

Theorem 1.2

(Spectral condition) There exists a positive constant C independent of \(\varepsilon\) and \(t\in [0,T]\), such that

for any traceless and symmetric \(3\times 3\) matrix \(\mathbf {Q}\). Here, \(\mathcal {H}_{\mathbf {Q}_{A}^{\varepsilon }}\mathbf {Q}\) is defined in (2.9).

With the help of the spectral condition, we can estimate the error between the real solution and the approximate solution.

Theorem 1.3

Assume that \(k\ge 10\) and

for some positive constant c. Then, there exists a positive constant \(C>0\) independent of \(\varepsilon\), such that

In particular, there holds

As a corollary of Theorem 1.3, we have the following.

Corollary 1.4

There exists a positive constant \(C>0\) independent of \(\varepsilon\) such that

where and in what follows \(\eta \in C^\infty (\mathbb {R})\) is a cut-off function satisfying \(\eta =0\) in \((-\infty ,-1)\cup (1,+\infty )\) and \(\eta =1\) in \((-\frac{1}{2},\frac{1}{2})\), s(z) is defined by (6.1) in “Appendix 1”, and \(\varphi ^{(1)}\) is defined in (2.2).

Remark 1.5

In Theorem 1.1, we construct an explicit approximate solution to (1.1) around the local smooth solution of the system (1.4) by assuming the latter exists. The well-posedness of the system (1.4) will be studied in a future work.

1.4 Sketch of the Proof

The proof is based on the asymptotic expansion method. This method has been used to rigorously justify the hydrodynamic limit of the Boltzmann equation [6] and other singular limits in various problems, and has been developed by several authors to study the sharp interface limit of diffuse interface models such as the Allen–Cahn equation [9] and the Cahn–Hilliard equation [1]. The proof consists of three steps: the construction of approximate solutions based on smooth solutions of the limit system; the spectral estimate for the linearized system around the approximate solution; and suitable energy estimates for the remainder terms. Some new difficulties arise when we apply this scheme to the isotropic–nematic interface problem.

First of all, to construct the approximate solution, we have to deal with the degeneracy of the linearized operator, not only in the inner region but also in the outer region, when we solve for the corrective solution of each order. To overcome this difficulty, we need to split the corrective solution into two parts, the kernel part and the out-of-kernel part, and solve for them separately. In contrast, the linearized operator in the outer region is always invertible in the Allen–Cahn or Cahn–Hilliard problem. More importantly, to match these approximate solutions, we have to solve a much more complicated and subtle coupled system in the bulk region and on the sharp interface.

The most difficult part is to prove a uniform-in-\(\varepsilon\) lower bound for the first eigenvalue of the linearized operator:

around the approximate solution \(\mathbf {Q}_{A}^{\varepsilon }=\mathbf {Q}^{[k]}\). Since the operator acts on a matrix-valued function, the methods in [9] or [8], which involve the Hopf maximum principle and the Harnack inequality, may not work directly. Our proof consists of two parts: estimate the linearized operator with \(\mathbf {Q}_{A}^{\varepsilon }\) replaced by the leading order term \(\mathbf {Q}^{(0)}\), and exclude the singular effect coming from the first correction term \(\mathbf {Q}^{(1)}\).

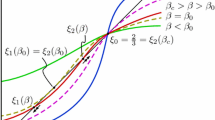

For the first part, our key observation is that, by introducing a suitable decomposition, we can reduce the problem to analyzing the spectrum of two one-dimensional linear operators acting on scalar functions [see \(\mathcal {J}_0\) defined in (3.1) and \(\mathcal {J}_1\) defined in (3.2)]. These two operators come from the kernel space of the linearized operator in the inner region. The first one \(\mathcal {J}_0\) corresponds to the translational invariance of the linearized operator, and has been studied in [8]. The second one \(\mathcal {J}_1\), which is new, corresponds to the rotational invariance due to the rotational symmetry of the minimum manifold \(\mathcal {N}\). It seems difficult to use the methods in [8] or [9] to analyze \(\mathcal {J}_1\), since its potential degenerates at \(x=+\infty\). To overcome this difficulty, we employ a new method which uses neither the Hopf maximum principle nor the Harnack inequality. This method also works for the spectral analysis of \(\mathcal {J}_0\), and thus, we present a new proof of Lemma 2.1 in [8]. In addition, somewhat surprisingly, the homogeneous Neumann boundary condition on \(\Gamma\) in (1.4) plays an important role in estimating the crossing terms (see Sects. 4.2 and 4.3).

For the second part, the key observation is that the singular effect arising from the first correction term \(\mathbf {Q}^{(1)}\) can be excluded by a cancellation relation between the leading order term \(\mathbf {Q}^{(0)}\) and the first correction term \(\mathbf {Q}^{(1)}\) (see (6.14) and Sect. 4.4).

Finally, we can follow the method in [1] and [9] to estimate the error \(\mathbf {Q}^{\varepsilon }-\mathbf {Q}_A^{\varepsilon }\). Here, we use a direct and simple way; that is, estimate the following energy:

with

for \(m=9\). The reasons for taking \(m=9\) and choosing the above \(\varepsilon\) powers in the energy come from the detailed computations in Sect. 5.

Notations. For any two vectors \(\mathbf {m}=(m_1, m_2, m_3), \mathbf {n}= (n_1, n_2, n_3)\in \mathbb {R}^3\), we denote the tensor product by \(\mathbf {m}\otimes \mathbf {n}=[m_in_j], 1\le i,j\le 3\). In the sequel, we use \(\mathbf {m}\mathbf {n}\) to denote \(\mathbf {m}\otimes \mathbf {n}\) for simplicity when no ambiguity is possible. For any two tensors A and B, A : B denotes \(\mathrm {Tr}(AB)=A_{ij}B_{ji}\) and \(|A|^2=A : A\). The divergence of a tensor is defined by \(\nabla \cdot A=\partial _jA_{ij}\).
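The conventions above can be spelled out in a few lines of Python. The sketch below is only an illustration of the notation, with 3x3 tensors stored as nested lists; the helper names `outer`, `matmul`, and `contract` are hypothetical. It also highlights that \(A:B=A_{ij}B_{ji}\) contracts the second index of A with the first index of B, so that \(|A|^2=A:A\) agrees with the entrywise sum of squares when A is symmetric but can differ from it otherwise.

```python
def outer(m, n):
    """Tensor product m (x) n with entries m_i * n_j."""
    return [[mi * nj for nj in n] for mi in m]

def matmul(A, B):
    """Ordinary matrix product AB."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def contract(A, B):
    """Double contraction A : B = Tr(AB) = A_ij B_ji."""
    return sum(A[i][j] * B[j][i] for i in range(3) for j in range(3))

m = [1.0, 2.0, 3.0]
n = [0.0, 1.0, -1.0]
mn = outer(m, n)
nm = outer(n, m)

# A : B computed from the index formula and from the trace of the product.
contraction = contract(mn, nm)
product = matmul(mn, nm)
trace_of_product = product[0][0] + product[1][1] + product[2][2]

# For the non-symmetric tensor mn, (mn):(mn) = (m . n)^2, while the
# entrywise sum of squares equals |m|^2 |n|^2.
self_contraction = contract(mn, mn)
entrywise_squares = sum(x * x for row in mn for x in row)
```

Since \(\mathbf {Q}\)-tensors are symmetric, the distinction between the two quantities does not matter for \(|\mathbf {Q}|^2\).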

2 Construction of the Approximated Solutions

For the diffuse interface models, the solutions behave differently in regions near and away from the interface. To avoid the complicated calculations near the interface, we choose the method of asymptotic matching introduced in [1] rather than using a local parameterization of the interface as in [9] to construct the approximate solution.

In the region \(\Omega ^\pm \setminus \Gamma (\delta /2)\) away from the interface, called the outer region, we make the following expansion:

and seek the solution of the form:

where k is to be determined.

In \(\Gamma (\delta )\), called the inner region, we perform the inner expansion. Let \(\Gamma ^\varepsilon\) be the smooth interface centered in the transition region and \(\varphi ^\varepsilon\) be the signed distance function to \(\Gamma ^\varepsilon\). Since \(\mathbf {Q}^{\varepsilon }\) varies rapidly from \(\Omega ^+\) to \(\Omega ^-\), we introduce a fast variable \(z=\frac{\varphi ^\varepsilon }{\varepsilon }\) and conduct the following expansions:

where \(\varphi ^{(0)}(x,t)\triangleq \varphi (x,t)\).

In the overlap region \(\Gamma (\delta )\setminus \Gamma (\delta /2)=(\Omega \setminus \Gamma (\delta /2))\cap \Gamma (\delta )\), both the outer expansion and the inner expansion should be good approximations of the solution, and thus, they have to be almost the same. This gives a matching condition or a “boundary condition” for \(\mathbf {Q}_{I}^{(i)}(z,x,t)\), that is

In \(\Gamma (\delta )\), we then truncate \(\varphi ^{\varepsilon }\) and seek the solution of the form:

with

Then, we define the approximate solution

where

and recall that \(\eta \in C^\infty (\mathbb {R})\) is a cut-off function satisfying \(\eta =0\) in \((-\infty ,-1)\cup (1,+\infty )\) and \(\eta =1\) in \((-\frac{1}{2},\frac{1}{2})\).

2.1 Outer Expansion

We introduce the notations

We perform the outer expansion in \(\Omega ^\pm\) rather than \(\Omega ^\pm \setminus \Gamma (\delta /2)\) using the Hilbert expansion method as in [23, 24]. In \(\Omega ^\pm\), we assume that

where \(\mathbf {Q}_\pm ^{(i)}(x,t)\) are smooth functions which are independent of \(\varepsilon\). Then, we have

where

Equating the \(O(\varepsilon ^k)(k\ge -2)\) system in (1.1) yields that

2.1.1 Solving \(\mathbf {Q}_{+}^{(0)}\) and \(\mathbf {Q}_{-}^{(k)}(k\ge 0)\)

Equation (2.14) implies that \(\mathbf {Q}_\pm ^{(0)}\) is a critical point of the bulk energy \(F(\mathbf {Q})\). Thus, we may take

Since \(\mathbf {Q}_-=0\) exactly solves (1.1), we can simply take

Therefore, we only need to show how to determine \(\mathbf {Q}_+^{(i)}\) in \(\Omega ^+\) for \(i\ge 1\).

Using (2.17), the linearized operator \(\mathcal {H}_{+}\) can be written as follows:

We need the following lemma from [23].

Lemma 2.1

(i) \(\text {Ker}\,\mathcal {H}_+=\{\mathbf {n}\mathbf {n}^\perp +\mathbf {n}^\perp \mathbf {n}:\mathbf {n}^\perp \in \mathbb {V}_\mathbf {n}\}\) with \(\mathbb {V}_\mathbf {n}\triangleq \{\mathbf {n}^\perp :\mathbf {n}^\perp \cdot \mathbf {n}=0\}\).

(ii) \(\mathcal {H}_+\) is a one-to-one mapping on \(\left( \text {Ker}\,\mathcal {H}_+ \right) ^\perp\); here, \(\left( \text {Ker}\,\mathcal {H}_+ \right) ^\perp \triangleq \{\mathbf {Q}: \mathbf {Q}:(\mathbf {n}\mathbf {n}^\perp +\mathbf {n}^\perp \mathbf {n})=0, \forall \mathbf {n}^\perp \in \mathbb {V}_\mathbf {n}\}\) is the orthogonal complement of \(\text {Ker}\,\mathcal {H}_+\). Moreover, the inverse operator is given by

$$\begin{aligned} \mathcal {H}_+^{-1}\mathbf {Q}=\frac{1}{bs_{+}} \left( \mathbf {Q}-\mathbf {n}\mathbf {n}\cdot \mathbf {Q}-\mathbf {Q}\cdot \mathbf {n}\mathbf {n}+\frac{2}{3}(\mathbf {n}\mathbf {n}:\mathbf {Q})\mathbf {I}\right) +\frac{14}{bs_{+}} \left( \mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I}\right) (\mathbf {n}\mathbf {n}:\mathbf {Q}). \end{aligned}\tag{2.19}$$

To solve \(\mathbf {Q}_+^{(i)}\) in \(\Omega ^+\) for \(i\ge 1\) from (2.16), we first introduce the decomposition:

and then solve \(\mathbf {P}_\top ^{(i)}\), \(\mathbf {P}_\bot ^{(i)}\) separately. To this end, we further introduce the orthogonal basis:

where \(\mathbf {l}, \mathbf {m}\) are smooth unit vectors, which together with \(\mathbf {n}\) form an orthogonal coordinate frame. It is easy to see that \(\mathbf {E}_1, \mathbf {E}_2\in \mathrm {Ker}~\mathcal {H}_{+}\) and \(\mathbf {E}_0, \mathbf {E}_3,\mathbf {E}_4\in (\mathrm {Ker}~\mathcal {H}_{+})^\bot\). Thus, one may decompose \(\mathbf {P}_\bot\) and \(\mathbf {P}_\top\) as follows:

Remark 2.2

In some situations, even though \(\mathbf {n}\) is smooth, we may not be able to find corresponding smooth unit vector fields \(\mathbf {l}, \mathbf {m}\). Therefore, we assume that such fields exist, to ensure the existence of a smooth orthogonal basis \(\mathbf {E}_0\)–\(\mathbf {E}_4\).
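Lemma 2.1 and the kernel/complement splitting can be checked numerically at a single point. The Python sketch below relies on several assumptions that are not taken from this text, because the corresponding displayed formulas are not reproduced here: it assumes \(f(\mathbf {Q})=a\mathbf {Q}-b\mathbf {Q}^2+c|\mathbf {Q}|^2\mathbf {Q}+\frac{b}{3}|\mathbf {Q}|^2\mathbf {I}\) with \(b^2=27ac\), defines \(\mathcal {H}_+\) as the linearization of this f at \(s_+(\mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I})\), and uses the hypothetical unnormalized basis \(\mathbf {E}_0=\mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I}\), \(\mathbf {E}_1=\mathbf {n}\mathbf {l}+\mathbf {l}\mathbf {n}\), \(\mathbf {E}_2=\mathbf {n}\mathbf {m}+\mathbf {m}\mathbf {n}\), \(\mathbf {E}_3=\mathbf {l}\mathbf {l}-\mathbf {m}\mathbf {m}\), \(\mathbf {E}_4=\mathbf {l}\mathbf {m}+\mathbf {m}\mathbf {l}\). Only the inverse formula (2.19) is taken from the lemma itself.

```python
I3 = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]

def outer(u, v):
    return [[ui * vj for vj in v] for ui in u]

def combine(alpha, A, beta, B):
    """Entrywise alpha*A + beta*B."""
    return [[alpha * A[i][j] + beta * B[i][j] for j in range(3)]
            for i in range(3)]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def contract(A, B):
    """A : B = Tr(AB)."""
    return sum(A[i][j] * B[j][i] for i in range(3) for j in range(3))

def max_abs(A):
    return max(abs(x) for row in A for x in row)

# Illustrative parameters with b^2 = 27ac and s_+ = sqrt(3a/c) (assumed).
a, b, c, s_plus = 1.0, 9.0, 3.0, 1.0
n, l, m = [0.0, 0.0, 1.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]
nn = outer(n, n)
Q0 = combine(s_plus, nn, -s_plus / 3.0, I3)

# Hypothetical basis E_0, ..., E_4 (see the lead-in).
E = [
    combine(1.0, nn, -1.0 / 3.0, I3),
    combine(1.0, outer(n, l), 1.0, outer(l, n)),
    combine(1.0, outer(n, m), 1.0, outer(m, n)),
    combine(1.0, outer(l, l), -1.0, outer(m, m)),
    combine(1.0, outer(l, m), 1.0, outer(m, l)),
]

def H_plus(P):
    """Linearization at Q0 of the assumed f, applied to a traceless symmetric P."""
    q0p = contract(Q0, P)
    q0q0 = contract(Q0, Q0)
    q0_sym = combine(1.0, matmul(Q0, P), 1.0, matmul(P, Q0))
    out = combine(a + c * q0q0, P, -b, q0_sym)
    out = combine(1.0, out, 2.0 * c * q0p, Q0)
    return combine(1.0, out, 2.0 * b / 3.0 * q0p, I3)

def H_plus_inverse(P):
    """Formula (2.19), intended for P in the orthogonal complement of the kernel."""
    nnP = contract(nn, P)
    sym = combine(1.0, matmul(nn, P), 1.0, matmul(P, nn))
    first = combine(1.0, combine(1.0, P, -1.0, sym), 2.0 / 3.0 * nnP, I3)
    second = combine(1.0, nn, -1.0 / 3.0, I3)
    return combine(1.0 / (b * s_plus), first, 14.0 * nnP / (b * s_plus), second)

kernel_residual = max(max_abs(H_plus(E[1])), max_abs(H_plus(E[2])))
inverse_residual = max(
    max_abs(combine(1.0, H_plus_inverse(H_plus(E[k])), -1.0, E[k]))
    for k in (0, 3, 4))
gram_offdiag = max(abs(contract(E[i], E[j]))
                   for i in range(5) for j in range(5) if i != j)
```

With these choices, \(\mathbf {E}_1\) and \(\mathbf {E}_2\) are annihilated by the assumed \(\mathcal {H}_+\), formula (2.19) inverts it on \(\mathbf {E}_0,\mathbf {E}_3,\mathbf {E}_4\), and the five tensors are mutually orthogonal with respect to the contraction \(A:B\). The check is pointwise, for one fixed frame \((\mathbf {n},\mathbf {l},\mathbf {m})\), and says nothing about the smoothness issue raised in Remark 2.2.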

2.1.2 Solving \(\mathbf {P}_\bot ^{(2)}\) and \((p_{11},p_{12})\)

From Eq. (2.15) and Lemma 2.1, we know that \(\mathbf {Q}_+^{(1)}\in \mathrm {Ker}~\mathcal {H}_{+}\). Thus

Let us define two linear operators

Lemma 2.3

If \(\mathbf {Q}\in \mathrm {Ker}~\mathcal {H}_+\) , then \(\mathcal {B}_{+}\mathbf {Q},~~ \mathcal {C}_{+}\mathbf {Q}\in (\mathrm {Ker}~\mathcal {H}_+)^\bot .\)

Proof

It suffices to prove that for \(\mathbf {P}_1, \mathbf {P}_2\in \text {Ker}~\mathcal {H}_+\), \(\mathcal {B}_{+}\mathbf {P}_1:\mathbf {P}_2= \mathcal {C}_{+}\mathbf {P}_1:\mathbf {P}_2 =0.\) As \(\mathbf {Q}_+^{(1)}, \mathbf {P}_1, \mathbf {P}_2\in \text {Ker}~\mathcal {H}_+\), we may assume that

Then, it is easy to verify that \(\mathcal {C}_{+}\mathbf {P}_1:\mathbf {P}_2=0\) and

\(\square\)

From (2.12)–(2.13) for \(k=2\), we have

Thus, by (2.16) for \(k=0\), we get

As \(\mathcal {H}_+\mathbf {P}_\bot ^{(2)}, \mathcal {B}_+\mathbf {Q}_+^{(1)}, \mathcal {C}_+\mathbf {Q}_+^{(1)}\in (\mathrm {Ker}~\mathcal {H}_+)^\bot\) , we have

This is a compatibility condition on the solvability of (2.25), which can be ensured if we choose \(\mathbf {n}(x,t)\) solving the heat flow (1.4). Using the invertibility of \(\mathcal {H}_{+}\) in \((\mathrm {Ker}~\mathcal {H}_+)^\bot\), we can write

here, \(\mathbf {A}_1\) depends on \(\mathbf {Q}_+^{(0)}\) and \(\mathbf {Q}_+^{(1)}\).

From (2.16) for \(k=1\), we have

This leads to the following compatibility condition for \(\mathbf {P}_\top ^{(1)}\) (recall \(\mathbf {P}_\bot ^{(1)}=0\)):

Substituting (2.26) into (2.28) gives an equation for \(\mathbf {P}_\top ^{(1)}\):

To solve the system, we have to know the boundary conditions for \(\mathbf {P}_\top ^{(1)}\) on \(\Gamma\), which will be fixed in the next section by matching the outer and inner expansions.

Once \(\mathbf {P}_\top ^{(1)}\) is solved, then \(\mathbf {P}_\bot ^{(2)}\) is solved by (2.26). In addition, we know from (2.27) and (2.28) that

and here, \(\mathbf {A}_2\) depends on \(\mathbf {Q}_+^{(0)},\mathbf {Q}_+^{(1)}\) and \(\mathbf {Q}_+^{(2)}\). Note that \(\mathbf {P}_\top ^{(2)}\) is still unknown which will be solved in the next step.

2.1.3 Solving \(\mathbf {P}_\bot ^{(i+1)}\) and \((p_{i1},p_{i2})\ (i\ge 2)\)

Let us assume that \(\mathbf {P}_{\top }^{(j)}, \mathbf {P}_{\bot }^{(j+1)}(1\le j\le i-1 )\) have been determined for \(i\ge 2\), and

Here, \(\mathbf {A}_i\) depends on \(\big \{\mathbf {Q}_+^{(j)}\big \}_{j=0}^{j=i}\). Then, we can write

with \(\mathbf {D}^{(i)}=\mathbf {D}^{(i)}(\mathbf {Q}_+^{(0)}, \mathbf {Q}_+^{(1)},\ldots , \mathbf {Q}_+^{(i-1)}, \mathbf {P}_\bot ^{(i)})\).

We will show how to solve \(\mathbf {P}_{\top }^{(i)}\) and \(\mathbf {P}_{\bot }^{(i+1)}\). First of all, we may write

where \({\tilde{\mathbf {B}}}^{(i)}\) only depends on \(\mathbf {Q}_+^{(0)}, \mathbf {Q}_+^{(1)},\ldots , \mathbf {Q}_+^{(i)}\). Similarly, we may write

where \({\tilde{\mathbf {C}}}^{(i)}={\tilde{\mathbf {C}}}^{(i)}(\mathbf {Q}_+^{(0)}, \mathbf {Q}_+^{(1)},\ldots , \mathbf {Q}_+^{(i)})\). Then, from (2.16), we have

which leads to the following compatibility condition for \(\mathbf {P}_\top ^{(i)}\):

This defines an equation for \(\mathbf {P}_\top ^{(i)}\) in terms of \(p_{i1}\) and \(p_{i2}\) in \(\Omega ^{+}\). Together with the boundary conditions on \(\Gamma\) for \(p_{i1}\) and \(p_{i2}\), which will be specified in the next section, we can solve for \(\mathbf {P}_\top ^{(i)}\). Once \(\mathbf {P}_\top ^{(i)}\) is determined, we can solve for \(\mathbf {P}_\bot ^{(i+1)}\) according to (2.33).

2.2 Inner Expansion

In this subsection, we perform inner expansion (2.3) in \(\Gamma (\delta )\). More precisely, we show how to construct a family of functions:

such that

is a good approximation of \(\mathbf {Q}^\varepsilon\) in \(\Gamma (\delta )\).

One can easily find from the fact \(|\nabla \varphi ^{\varepsilon }|^2=1\) that

The truncated function \(\varphi ^{[k]}(x,t)\) is no longer a distance function, as \(|\nabla \varphi ^{[k]}|\ne 1\) (although the deviation from 1 is small). Nevertheless, \(\varphi ^{[k]}(x,t)\) is the kth-order approximation of the signed distance function from x to the interface \(\Gamma ^k_t\triangleq \{x:\varphi ^{[k]}(x,t)=0\}\). Indeed, we have the following lemma.

Lemma 2.4

Let \(0\le i\le k\). For every fixed \(t\in [0,T]\), let \(r_t(x)\) be the signed distance from x to \(\Gamma _t^i\). Then, for small \(\varepsilon\),

Proof

Noting that \(|\nabla \varphi ^{[i]}|^2=1+O(\varepsilon ^{i+1})\); then, for small \(\varepsilon\), one gets

and

Choosing \(x_0\in \Gamma ^i\), i.e., \(r_t(x_0)=\varphi ^{[i]}(x_0,t)=0\), then

here, C is independent of \(t\in [0,T]\) and small \(\varepsilon\). \(\square\)

Motivated by [1], we modify the system (1.1) in the inner region as follows:

where

and \(\zeta \in C^{3}(\mathbb {R}):\mathbb {R}\rightarrow \mathbb {R}\) is a function related to the leading order profile of \(\mathbf {Q}\) in the inner region. In particular, \(\zeta (z)\) satisfies

where the monotonic function s(z) is given by (2.50). Such a function \(\zeta (z)\) will be constructed in Lemma 6.2.

Remark 2.5

Due to \(z=\frac{\varphi ^\varepsilon }{\varepsilon }\) in (2.37), we have \(\mathbf {G}^{\varepsilon }\zeta '(\varphi ^{\varepsilon }-\varepsilon z)=0\), and thus, we do not change the system (1.1). However, the equations for \(\mathbf {Q}_{I}^{(i)}\ (i\ge 1)\) have been changed in order that \(\mathbf {Q}_{I}^{(i)}\ (i\ge 1)\) are solvable in our framework. More importantly, the matching condition (2.4) and the cancellation property (6.14) could hold.

2.2.1 The Leading Order \(\mathbf {Q}_I^{(0)}\)

For \(k\ge 2\), let

Then, we have

Notice that

and define

and here, we used the notation \(\frac{\mathrm{d}^2}{\mathrm{{d}}z^2}\), since (x, t) is seen as a fixed parameter when we solve \(\mathbf {Q}_{I}^{(i)}\ (i=1,2,\ldots )\). Then, we have the following systems for order \(\varepsilon ^{-2}, \varepsilon ^{-1}\) and \(\varepsilon ^{k}\ (k\ge 0)\), respectively:

Now, we seek the solution \(\mathbf {Q}_I^{(i)}\ (i=0,1,\ldots )\), which take the form:

where

First of all, the leading order Eq. (2.44) has an explicit solution

where s(z) is defined by (6.1). Obviously, we have

for all \(j,l,m\ge 0\). In fact, we only need \(j,l,m\le k\) for some fixed k depending on the actual order of expansion.

In what follows, we derive the compatibility conditions for solving \(\mathbf {Q}_{I}^{(1)}\) and \(\mathbf {Q}_{I}^{(2)}\).

2.2.2 Compatibility Condition for Solving \(\mathbf {Q}_I^{(1)}\)

For \((x,t)\in \Gamma (\delta )\), noting that \((\nabla \varphi \cdot \nabla )\mathbf {n}\cdot \mathbf {n}=0\), let

In addition, we choose

and substitute (2.50) into (2.45), and then, the right-hand side of (2.45) can be written as follows:

In addition, one has

Accordingly, (2.45) is reduced to

Here and in what follows, for a function \(\tilde{s}=\tilde{s}(z,x,t)\), we write \(\partial _z\tilde{s}\) as \(\tilde{s}'\) for simplicity.

We denote

Combining with (2.4) (\(i=1\)), we obtain the following equations for \((x,t)\in \Gamma (\delta )\):

with

To solve \(\{s_{1j}\}\ (j=0,\ldots ,4)\), we need to study the solutions to the following ODEs in \(\mathbb {R}\) for fixed \((x,t)\in \Gamma (\delta )\) :

We present the following lemmas on the solvability of (2.59)–(2.61), which will be proved in “Appendix 1”.

Lemma 2.6

If the following decay conditions:

with some \(k\in \mathbb {N}\) and the compatibility condition

hold, then (2.59) has a unique bounded solution, such that \(s_{0}(0,x,t)=1\) and

where \(j,l,m=0,1,\ldots\), \(C>0\) is independent of z, x, t and \(s_{0}^{+}(x,t)=\frac{f_{0}^{+}(x,t)}{a}\). More concretely, we have

Lemma 2.7

If the following decay conditions:

with some \(k\in \mathbb {N}\) and the compatibility condition

hold, then for any given \(s_1^{+}(x,t)\), (2.60) has a unique bounded solution, such that

where \(j,l,m=0,1,\ldots\), and \(C>0\) is independent of z, x, t. More concretely, we have

Lemma 2.8

If the following decay conditions:

with some \(k\in \mathbb {N}\) hold, then (2.61) has a unique bounded solution, such that

where \(j,l,m=0,1,\ldots\) and \(C>0\) is independent of z, x, t.

Remark 2.9

Lemma 2.6, with some slight differences, has been proved in Lemma 4.1 in [1]. In “Appendix 1”, we present a different proof, which can also be applied to prove Lemmas 2.7–2.8.

Obviously, Eq. (2.57) has only the trivial solution \(s_{13}=s_{14}=0.\) To solve (2.55), we need to ensure the compatibility condition (2.64), which requires that for \((x,t)\in \Gamma (\delta )\),

For \((x,t)\in \Gamma\), we have \(\varphi =0\), and thus

which is the evolution of the interface (1.8). In addition, \(g_{00}\) is determined as follows:

On the other hand, if we take \(g_{00}\) as in (2.78), then we can solve \(s_{10}\) using Lemma 2.6 in the case of \(k=0\). More concretely, we have

Accordingly, we get

The compatibility condition (2.70) for solving \(s_{11}\) and \(s_{12}\) implies that, for \((x,t)\in \Gamma (\delta )\):

For \((x,t)\in \Gamma\), we have \(\varphi =0\), and then, \(\phi _1=\phi _2=0\) on \(\Gamma\). Thus, we can derive that \(\mathbf {n}\) should satisfy the Neumann condition on the sharp interface:

here, \(\nu =\nabla \varphi\) is the unit outer normal of \(\Gamma\). We then determine \(g_{01}\) and \(g_{02}\) as follows:

for \(i=1,2\).

2.2.3 Compatibility Conditions for Solving \(\mathbf {Q}_I^{(2)}\)

We first write (2.46) \((k=0)\) as follows:

where

Let

Then, by a calculation similar to that in (2.53), the system (2.83) combined with (2.4) (\(i=2\)) is reduced to the following five ODEs:

with

By Lemmas 2.6 and 2.7, to solve \(s_{20}, s_{21},\) and \(s_{22}\), we need the following equalities for \((x,t)\in \Gamma (\delta )\):

When \((x,t)\in \Gamma\), we have \(\varphi (x,t)=0\), and thus, for \((x,t)\in \Gamma\):

Careful computations yield that on \(\Gamma\)

where we have used the facts that on \(\Gamma\)

Consequently, we get that on \(\Gamma\)

and

Thus, the equality \(\mathbf {E}_0:\int _{\mathbb {R}}\mathbf {F}^{(1)}(z,x,t)\mathrm {d}z=0\) on \(\Gamma\) is equivalent to

where

(2.92) is actually the evolution equation of \(\varphi ^{(1)}\) on \(\Gamma\). Furthermore, the equalities

on \(\Gamma\) are equivalent to

on \(\Gamma\), respectively, where

We can note that, if \(p_{11}\) and \(p_{12}\) are given, then

Moreover, (2.92), (2.93), and (2.94) are equivalent to

In summary, we have a nonlinear differential system for \(\varphi ^{(1)}, p_{11}\), and \(p_{12}\) as follows:

where the fourth equation comes from (2.35) (\(i=1\)).

In “Appendix 1”, we will give a sketch of solving (2.99). Then, we solve \(\mathbf {Q}_{I}^{(1)}, \mathbf {G}^{(1)}\) and \(\mathbf {Q}_{I,\bot }^{(2)}\), and, finally, solve the inner expansion for the kth order (\(k\ge 2\)).

2.3 Proof of Theorem 1.1

From the process of our asymptotic matching expansions, we find that

Moreover, there hold

Furthermore, due to (7.24) and (7.25), if \(\varepsilon\) is small, then \(\partial ^j_z\partial ^l_x\partial ^m_t\left( \mathbf {Q}_{I}^{(k)}(z,x,t)-\mathbf {Q}_{\pm }^{(k)}(x,t)\right) =O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right)\) for \(j,l,m=0,1,\ldots ,\) and \((x,t)\in \Gamma (\delta )\backslash \Gamma (\frac{\delta }{2})\). Consequently, we can find that, for small \(\varepsilon\), \(\mathbf {Q}^{[k]}\) defined in (2.6) satisfies

This completes the proof of the theorem.

3 Spectral Analysis of the 1-D Linear Operators

In this section, we conduct the spectral analysis for two 1-D linear operators defined on the interval \(I_\varepsilon =(-\frac{1}{\varepsilon },\frac{1}{\varepsilon })\):

These two operators will play important roles in the proof of Theorem 1.2.

3.1 Spectral Estimates of \(\mathcal {J}_0\)

Let \(\Vert q\Vert =\Vert q\Vert _{L^2(I_\varepsilon )}\). We consider the following Neumann-type eigenvalue problem:

Lemma 3.1

(1) (Estimate of the first eigenvalue of \(\mathcal {J}_0\))

$$\begin{aligned} \lambda _{\theta ,1}\triangleq \inf _{\Vert q\Vert =1}\int _{I_{\varepsilon }}\left( \left( q' \right) ^2+\theta (s)q^2\right) \mathrm{{d}}z=O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) ; \end{aligned}$$ (3.4)

here, \(C\) is a positive constant independent of small \(\varepsilon\).

(2) (Estimate of the second eigenvalue of \(\mathcal {J}_0\))

$$\begin{aligned} \lambda _{\theta ,2}\triangleq \inf _{\Vert q\Vert =1,q\bot q_{\theta }}\int _{I_{\varepsilon }}\left( \left( q' \right) ^2+\theta (s)q^2\right) \mathrm{{d}}z\ge c_{\theta }>0 ; \end{aligned}$$ (3.5)

here, \(q\bot q_{\theta }\Leftrightarrow \int _{I_{\varepsilon }} qq_{\theta }\mathrm{{d}}z=0\), \(q_{\theta }\) is the normalized eigenfunction corresponding to \(\lambda _{\theta ,1}\), and \(c_{\theta }\) is a positive constant independent of small \(\varepsilon\).

(3) (Characterization of the first normalized eigenfunction of \(\mathcal {J}_0\))

$$\begin{aligned} \Vert q_{\theta }-\alpha s'\Vert ^2=O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) ; \end{aligned}$$ (3.6)

here, \(\alpha =\frac{1}{\Vert s'\Vert }\). In addition to (3.6), we have

$$\begin{aligned} \Vert (q_{\theta }-\alpha s')'\Vert ^2=O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) . \end{aligned}$$ (3.7)

In particular

$$\begin{aligned} \int _{I_\varepsilon }\big |q_{\theta }'\big |^2\mathrm{{d}}z\le C. \end{aligned}$$ (3.8)

Proof

(3.4)–(3.6) have been proved in Lemma 2.1 in [8]. Here, we will use another method to prove (3.4)–(3.5). The method is helpful for us to prove (3.19) and (3.23).

(1) Thanks to (2.6) in [8], we have

$$\begin{aligned} \lambda _{\theta ,1}\le O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) . \end{aligned}$$ (3.9)

Let \(z^*>0\), such that \(\theta (s)>\frac{3a}{4}\) for \(z\in (-\infty ,-z^*)\cup (z^*,+\infty )\) and \(\lambda _{\theta ,1}\le \frac{a}{4}\). Let \(q_{\theta }\) be the normalized eigenfunction corresponding to \(\lambda _{\theta ,1}\). Then, \(q_{\theta }>0\) and \(q_{\theta }'(\pm \frac{1}{\varepsilon })=0\) ([22]). For any fixed \(\bar{z}\in [z^*,\frac{1}{\varepsilon }]\), according to the arguments (the comparison principle) in Lemma 2.1 in [8], we have

$$\begin{aligned} |q_{\theta }(\pm z)|\le |q_{\theta }(\bar{z})|\cdot \frac{\cosh \sqrt{\frac{a}{2}}\left( \frac{1}{\varepsilon }-|z|\right) }{\cosh \sqrt{\frac{a}{2}}\left( \frac{1}{\varepsilon }-\bar{z} \right) },\quad z\in \left[ \bar{z},\frac{1}{\varepsilon } \right] . \end{aligned}$$ (3.10)

In particular, if we choose \(\bar{z}\in [z^*,z^*+1]\), such that \(|q_{\theta }(\bar{z})|\le 1\), then

$$\begin{aligned} |q_{\theta }(z)|\le O \left( \mathrm{e}^{-C|z|} \right) ,\quad z\in [-\frac{1}{\varepsilon },-\bar{z}]\cup \left[ \bar{z},\frac{1}{\varepsilon } \right] . \end{aligned}$$ (3.11)

Thus, we have

$$\begin{aligned} \lambda _{\theta ,1}&=\int _{I_{\varepsilon }}\bigg (\left( q_{\theta }'\right) ^2+\theta (s)q_{\theta }^2\bigg )\mathrm{{d}}z\nonumber \\&=\int _{I_{\varepsilon }}\bigg (\left( q_{\theta }'\right) ^2+\frac{s'''}{s'}q_{\theta }^2\bigg )\mathrm{{d}}z\nonumber \\&=\int _{I_{\varepsilon }}\bigg (\left( q_{\theta }'\right) ^2+\left( \frac{s''}{s'}\right) ^2q_{\theta }^2+\left( \frac{s''}{s'}\right) 'q_{\theta }^2\bigg )\mathrm{{d}}z\nonumber \\&=\int _{I_{\varepsilon }}\bigg (\left( q_{\theta }'\right) ^2+\left( \frac{s''}{s'}\right) ^2q_{\theta }^2-2\frac{s''}{s'}\cdot q_{\theta }q_{\theta }'\bigg )\mathrm{{d}}z+ \frac{s''}{s'}q_{\theta }^2\bigg |_{-\frac{1}{\varepsilon }}^{\frac{1}{\varepsilon }}\nonumber \\&=\int _{I_{\varepsilon }}\left( q_{\theta }'-\frac{s''}{s'}q_{\theta }\right) ^2\mathrm{{d}}z+ \frac{s''}{s'}q_{\theta }^2\bigg |_{-\frac{1}{\varepsilon }}^{\frac{1}{\varepsilon }}\nonumber \\&\ge \frac{s''}{s'}q_{\theta }^2\bigg |_{-\frac{1}{\varepsilon }}^{\frac{1}{\varepsilon }} =\sqrt{\frac{c}{3}}(s_+-2s)q_{\theta }^2\bigg |_{-\frac{1}{\varepsilon }}^{\frac{1}{\varepsilon }}=O \left( \mathrm{e}^{-\frac{C}{\varepsilon }}\right) . \end{aligned}$$ (3.12)

(2) Assume \(\lambda _{\theta ,2}\le \frac{a}{4}\) and let \(q_{\theta ,2}\) be the normalized eigenfunction corresponding to \(\lambda _{\theta ,2}\). Then, \(q_{\theta }\perp q_{\theta ,2}\), \((q_{\theta ,2})'(\pm \frac{1}{\varepsilon })=0\), and \(q_{\theta ,2}\) has exactly one zero, denoted by \(z_0\), in \(I_\varepsilon\) ([22]). By the comparison argument, (3.10) and (3.11) also hold for \(q_{\theta ,2}\). Thanks to (3.10), we have \(z_0\in (-z^*,z^*)\). Then

$$\begin{aligned} \lambda _{\theta ,2}=\lambda _{\theta ,2}\int _{I_\varepsilon }(q_{\theta ,2})^2\mathrm{{d}}z&=\int _{I_\varepsilon }\bigg (\left( (q_{\theta ,2})'\right) ^2+\theta (s)(q_{\theta ,2})^2\bigg )\mathrm{{d}}z\nonumber \\&=\int _{I_\varepsilon }\left( (q_{\theta ,2})'-\frac{s''}{s'}q_{\theta ,2}\right) ^2\mathrm{{d}}z+ \frac{s''}{s'}(q_{\theta ,2})^2\bigg |_{-\frac{1}{\varepsilon }}^{\frac{1}{\varepsilon }} \nonumber \\&=\int _{I_\varepsilon }(s')^2\bigg [\left( \frac{q_{\theta ,2}}{s'}\right) '\bigg ]^2\mathrm{{d}}z+O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) . \end{aligned}$$ (3.13)

Set \(\hat{q_2}=s'\left( \frac{q_{\theta ,2}}{s'}\right) '\) and \(z_0^{-}=\min \{z_0,0\}\), and then, for \(z\ge z_0\)

$$\begin{aligned} q_{\theta ,2}(z)=s'(z)\int _{z_0}^{z}\frac{\hat{q_2}}{s'}(\tau )\mathrm{{d}}\tau =s'(z)\int _{z_0^{-}}^{0}\frac{\hat{q_2}}{s'}(\tau )\mathrm{{d}}\tau +s'(z)\int _{0}^{z}\frac{\hat{q_2}}{s'}(\tau )\mathrm{{d}}\tau . \end{aligned}$$ (3.14)

Using \(s'(z)>0\) in \(\mathbb {R}\) and (3.13), we get

$$\begin{aligned} \bigg |q_{\theta ,2}(z)s'(z)\int _{z_0^{-}}^{0}\frac{\hat{q_2}}{s'}(\tau )\mathrm{{d}}\tau \bigg |&\le C|z_0|\big |q_{\theta ,2}(z)s'(z)\big |\bigg (\int _{z_0^{-}}^{0}|\hat{q_2}|^2(\tau )\mathrm{{d}}\tau \bigg )^{\frac{1}{2}}\nonumber \\&\le C \left( \lambda _{\theta ,2}+O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) \right) \big |q_{\theta ,2}(z)s'(z)\big |. \end{aligned}$$ (3.15)

Moreover, using \(s''<0\) in \((0,\frac{1}{\varepsilon })\) (so that \(s'\) is decreasing there) and (3.13), we get

$$\begin{aligned} \bigg |q_{\theta ,2}(z)s'(z)\int _{0}^{z}\frac{\hat{q_2}}{s'}(\tau )\mathrm{{d}}\tau \bigg |&\le \big |q_{\theta ,2}(z)\big |\int _{z_0}^{z}|\hat{q_2}(\tau )|\mathrm{{d}}\tau \le \big |q_{\theta ,2}(z)\big ||z-z_0|^{\frac{1}{2}}\bigg (\int _{z_0}^{z}|\hat{q_2}(\tau )|^2\mathrm{{d}}\tau \bigg )^{\frac{1}{2}}\nonumber \\&\le \left( \lambda _{\theta ,2}+O \left( \mathrm{e}^{-\frac{C}{\varepsilon }}\right) \right) \big |q_{\theta ,2}(z)\big ||z-z_0|^{\frac{1}{2}}. \end{aligned}$$ (3.16)

Putting (3.15) and (3.16) into (3.14), one has

$$\begin{aligned} 1&=\int _{I_\varepsilon }(q_{\theta ,2})^2(z)\mathrm{{d}}z \le C \left( \lambda _{\theta ,2}+O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) \right) \int _{I_\varepsilon }\big |q_{\theta ,2}(z)s'(z)\big |\mathrm{{d}}z \nonumber \\&\quad +\,\left( \lambda _{\theta ,2}+O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) \right) \int _{I_\varepsilon }\big |q_{\theta ,2}(z)\big ||z-z_0|^{\frac{1}{2}}\mathrm{{d}}z \le C \left( \lambda _{\theta ,2}+O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) \right) ; \end{aligned}$$ (3.17)

here, we have used the fact that \(q_{\theta ,2}(z)\) decays exponentially to zero at \(\infty\). It easily follows from (3.17) that (3.5) holds for small \(\varepsilon\).

(3) We easily find that

$$\begin{aligned} -\left( q_{\theta }-\alpha s'\right) ''+\theta (s)(q_{\theta }-\alpha s')=\lambda _{\theta ,1} q_{\theta },\ \ (q_{\theta }-\alpha s')'\big |^{{\varepsilon ^{-1}}}_{-{\varepsilon ^{-1}}}=-\alpha s''\big |^{{\varepsilon ^{-1}}}_{-{\varepsilon ^{-1}}}. \end{aligned}$$

Multiplying the above equation by \(q_{\theta }-\alpha s'\), integrating by parts and using (3.6), we have

$$\begin{aligned} \int _{I_\varepsilon }\big |\left( q_{\theta }-\alpha s'\right) '\big |^2\mathrm{{d}}z&=-\int _{I_\varepsilon }\theta (s)\left( q_{\theta }-\alpha s'\right) ^2\mathrm{{d}}z +\lambda _{\theta ,1}\int _{I_\varepsilon }q_{\theta }(q_{\theta }-\alpha s')\mathrm{{d}}z\\&\quad -\alpha ^2s''({\varepsilon ^{-1}})s'({\varepsilon ^{-1}})+\alpha ^2s''(-{\varepsilon ^{-1}})s'(-{\varepsilon ^{-1}})\\&=O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right) . \end{aligned}$$

This completes the proof of this lemma.

\(\square\)
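The upper bound (3.9) can be illustrated numerically with the trial function \(q=s'\). The sketch below is only an illustration under stated assumptions: it takes the profile \(s(z)=\frac{s_+}{2}\big (1+\tanh (s_+\sqrt{c/12}\,z)\big )\), obtained from Remark 6.1 with \(\alpha =0\), \(\beta =s_+\), \(\gamma =\frac{2c}{3}\), \(z_0=0\), and it uses the closed form \(\theta (s)=\frac{c}{3}(s_+^2-6s_+s+6s^2)\), which we derived from \(\theta (s)=s'''/s'\) and \(s'=\sqrt{c/3}\,s(s_+-s)\). The function names and the parameter values \(c=3\), \(s_+=1\) are hypothetical choices. Integrating by parts as in (3.12), the Rayleigh quotient of \(s'\) equals the boundary term \(s''s'\big |_{-1/\varepsilon }^{1/\varepsilon }/\Vert s'\Vert ^2\), which is exponentially small in \(1/\varepsilon\).

```python
import math

def profile(z, c=3.0, s_plus=1.0):
    """Return (s, s', s'') at z for the assumed tanh profile."""
    k = math.sqrt(c / 3.0)
    s = 0.5 * s_plus * (1.0 + math.tanh(0.5 * k * s_plus * z))
    ds = k * s * (s_plus - s)            # s' = sqrt(c/3) s (s_+ - s)
    dds = k * ds * (s_plus - 2.0 * s)    # s'' = sqrt(c/3) s' (s_+ - 2 s)
    return s, ds, dds

def theta(s, c=3.0, s_plus=1.0):
    """Closed form of theta(s) = s'''/s' for the assumed profile."""
    return (c / 3.0) * (s_plus ** 2 - 6.0 * s_plus * s + 6.0 * s * s)

def rayleigh_quotient(eps, n=4000, c=3.0, s_plus=1.0):
    """Midpoint-rule Rayleigh quotient of the trial function q = s' on I_eps."""
    half_width = 1.0 / eps
    h = 2.0 * half_width / n
    num = 0.0
    den = 0.0
    for i in range(n):
        z = -half_width + (i + 0.5) * h
        s, ds, dds = profile(z, c, s_plus)
        num += (dds * dds + theta(s, c, s_plus) * ds * ds) * h
        den += ds * ds * h
    return num / den

def boundary_quotient(eps, c=3.0, s_plus=1.0):
    """Same quantity after integration by parts: [s'' s'] over ||s'||^2."""
    half_width = 1.0 / eps
    k = math.sqrt(c / 3.0)
    s_lo, ds_lo, dds_lo = profile(-half_width, c, s_plus)
    s_hi, ds_hi, dds_hi = profile(half_width, c, s_plus)

    def antiderivative(s):
        # integral of (s')^2 dz = integral of s' ds, with s' = k s (s_+ - s)
        return k * (s_plus * s * s / 2.0 - s ** 3 / 3.0)

    return (dds_hi * ds_hi - dds_lo * ds_lo) / (antiderivative(s_hi) - antiderivative(s_lo))

if __name__ == "__main__":
    for eps in (0.2, 0.1):
        print(eps, rayleigh_quotient(eps), boundary_quotient(eps))
```

Halving \(\varepsilon\) should shrink the printed quotient by several orders of magnitude, in line with the \(O(\mathrm{e}^{-C/\varepsilon })\) bound; the check says nothing about the lower bound, which needs the argument in (3.12).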

3.2 Spectral Estimates of \(\mathcal {J}_1\)

Let \(\omega\) be a positive and bounded function, which decays exponentially to zero at \(z=+\infty\) and \(\Vert q\Vert _\omega =\left( \int _{I_{\varepsilon }}\omega q^2\mathrm{{d}}z\right) ^{\frac{1}{2}}\). We study the following Neumann eigenvalue problem:

Lemma 3.2

(Estimate of the first eigenvalue of \(\mathcal {J}_1\))

Proof

Set \(\beta =\frac{1}{\Vert s\Vert _\omega }\), then

Together with the fact that

we get

On the other hand, let \(z^*>0\), such that \(\kappa (s)>\frac{a}{2}\) for \(z\in (-\infty ,-z^*)\). Assume that \(\lambda _{\kappa ,1}\le \frac{\frac{a}{4}}{\sup \limits _{z\in (-{\varepsilon }^{-1},-z^*)}\omega (z)}\Leftrightarrow \lambda _{\kappa ,1}\sup \limits _{z\in (-{\varepsilon }^{-1},-z^*)}\omega (z)\le \frac{a}{4}\) and \(q_{\kappa }\) is the normalized eigenfunction corresponding to \(\lambda _{\kappa ,1}\). Then, \(q_{\kappa }>0\) and \((q_{\kappa })'(\pm {\varepsilon }^{-1})=0\) ([22]). For any fixed \(\bar{z}\in [-{\varepsilon }^{-1},-z^*]\), by applying the comparison principle in \([-{\varepsilon }^{-1},\bar{z}]\), we get

Due to \(\Vert q_{\kappa }\Vert _\omega =1\), there exists \(\hat{z}\in (-z^*-1,-z^*)\), such that \(|q_{\kappa }(\hat{z})|\le \frac{1}{\sqrt{\omega (\hat{z})}}\le \frac{1}{\inf \limits _{z\in (-z^*-1,-z^*)}\sqrt{\omega (z)}}\). Thus, we have

Therefore, we obtain

and here, we have used the fact that \(q_{\kappa }(z)\) decays exponentially to zero at \(-\infty\). Combining (3.20) and (3.22) yields the desired conclusion. \(\square\)
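The role of the trial function \(\beta s\) in this proof can be illustrated numerically. The sketch below assumes that the weighted Rayleigh quotient has the form \(\int _{I_\varepsilon }\big ((q')^2+\kappa (s)q^2\big )\mathrm{d}z/\Vert q\Vert _\omega ^2\), with \(\kappa (s)=s''/s=\frac{2c}{3}(s-\frac{s_+}{2})(s-s_+)\), and it uses the same assumed tanh profile as in Remark 6.1 with \(\alpha =0\), \(\beta =s_+\), \(\gamma =\frac{2c}{3}\). The weight `omega` is a hypothetical example of a positive bounded function decaying exponentially at \(+\infty\); it is not the weight fixed later in the text. For \(q=s\), integration by parts turns the numerator into \(ss'\big |_{-1/\varepsilon }^{1/\varepsilon }\), which is exponentially small.

```python
import math

def s_and_ds(z, c=3.0, s_plus=1.0):
    """Return (s, s') for the assumed tanh profile."""
    k = math.sqrt(c / 3.0)
    s = 0.5 * s_plus * (1.0 + math.tanh(0.5 * k * s_plus * z))
    return s, k * s * (s_plus - s)

def kappa(s, c=3.0, s_plus=1.0):
    """kappa(s) = s''/s written as a quadratic in s."""
    return (2.0 * c / 3.0) * (s - 0.5 * s_plus) * (s - s_plus)

def omega(z):
    """Hypothetical weight: equal to 1 for z <= 0 and exp(-z) for z > 0."""
    return math.exp(-z) if z > 0.0 else 1.0

def weighted_quotient(eps, n=4000, c=3.0, s_plus=1.0):
    """Midpoint-rule value of int((s')^2 + kappa s^2) / int(omega s^2) on I_eps."""
    half_width = 1.0 / eps
    h = 2.0 * half_width / n
    num = 0.0
    den = 0.0
    for i in range(n):
        z = -half_width + (i + 0.5) * h
        s, ds = s_and_ds(z, c, s_plus)
        num += (ds * ds + kappa(s, c, s_plus) * s * s) * h
        den += omega(z) * s * s * h
    return num / den

if __name__ == "__main__":
    for eps in (0.2, 0.1):
        print(eps, weighted_quotient(eps))
```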

Lemma 3.3

(Estimate of the second eigenvalue of \(\mathcal {J}_1\))

here, \(q\bot _\omega q_{\kappa }\Leftrightarrow \int _{I_{\varepsilon }}\omega qq_{\kappa }\mathrm{{d}}z=0\), \(q_{\kappa }\) is the normalized eigenfunction corresponding to \(\lambda _{\kappa ,1}\), and \(c_{\kappa }\) is a positive constant independent of small \(\varepsilon\).

Proof

For clarity, we let \(q_2\), in this proof, be the normalized eigenfunction corresponding to \(\lambda _{\kappa ,2}\). Then, \(q_{\kappa }\perp _\omega q_2\), \(q_2'(\pm \frac{1}{\varepsilon })=0\), and \(q_2\) has exactly one zero, denoted by \(z_0\), in \(I_\varepsilon\) ([22]). Thanks to (3.21), we have \(z_0\in (-z^*,+\frac{1}{\varepsilon })\). Then, we have

Moreover, set \(\hat{q_2}=s\left( \frac{q_2}{s}\right) '\), and then, for \(z\ge z_0\), \(q_2(z)=s(z)\int _{z_0}^{z}\frac{\hat{q_2}}{s}(\tau )\mathrm{{d}}\tau\) and

Furthermore, we can observe that if \(z_0\ge 0\), then

and if \(z_0\in (-z^*,0)\), then

Therefore, we get by (3.24) that

and then

This completes the proof of this lemma. \(\square\)

Lemma 3.4

(Characterization of the first normalized eigenfunction of \(\mathcal {J}_1\))

here, \(C\) is a positive constant independent of small \(\varepsilon\). In particular

Proof

Set \(\beta s=\gamma q_{\kappa }+(q_{\kappa })^{\perp _\omega }\). Then, \(\Vert (q_{\kappa })^{\perp _\omega }\Vert ^2_\omega +\gamma ^2=1\) and

Accordingly \(\Vert (q_{\kappa })^{\perp _\omega }\Vert ^2_\omega =O \left( \mathrm{e}^{-\frac{C}{\varepsilon }} \right)\) and

Next, we proceed along the same line of the proof of (3.7). One can directly find that

Multiplying the above equation by \(q_{\kappa }-\beta s\) and integrating by parts, we have

Then, (3.26) follows. \(\square\)

Remark 3.5

Following the same lines as in Lemmas 3.2–3.4, we can obtain the corresponding conclusions in the case without the weight \(\omega\) as follows:

Here, we omit the corresponding details.

From (3.2) and (3.11), we find that \(\kappa (s)\) and \(q_{\theta }\) are bounded and decay exponentially to zero at \(z=+\infty\). In what follows, we fix a positive and bounded function \(\omega (z)\) decaying exponentially to zero at \(z=+\infty\), such that

3.3 Coercive Estimates of \(\mathcal {J}_0\) and \(\mathcal {J}_1\)

Lemma 3.6

There exist two positive constants \(C_1,C_2\) independent of small \(\varepsilon\), such that for any \(u=\gamma q_{\theta }+ {p_1}\) and \(v=\delta q_{\kappa }+ {p_2}\) with \(p_1\bot q_{\theta }\) and \(p_2\bot _\omega q_{\kappa }\):

Proof

It follows from (3.5) that

Similarly, using (3.23) and the fact that \(|\kappa (s(z))|\le \omega (z)\), we have

which concludes the proof of the lemma. \(\square\)

4 The Spectral Condition of the Linearized Operator

4.1 Reduction to the Transition Region

According to the definition of the approximate solution (2.6), we have

where

in which \(z=\frac{\varphi ^{[k]}(x,t)}{\varepsilon }\) and

One can directly have

here, recall the definition of \(\mathbf {B}\) and \(\mathbf {C}\) in (2.7)–(2.8):

In addition, we have the following lemma.

Lemma 4.1

It holds that

where \(\Gamma ^k_t(\frac{\delta }{4})=\{x:|\varphi ^{[k]}(x,t)|< \frac{\delta }{4}\}\), and \(C\) is independent of small \(\varepsilon\) and \(t\in [0,T]\).

Proof

We write \(\mathbf {Q}\) as

Using (4.1) and (4.2), direct computations lead to

and

Noting that there exists a positive number \(C_0\), such that

and

Therefore, for small \(\varepsilon\)

and

where \(\Gamma ^k(\frac{\delta }{4})=\{(x,t):|\varphi ^{[k]}(x,t)|< \frac{\delta }{4}\}\).

Thus, we obtain

Noting that for small \(\varepsilon\)

then

Moreover, by Young’s inequality and (4.10), we have

Thanks to (4.7), (4.13), and (4.14), one has for small \(\varepsilon\):

With (4.10), (4.12), and (4.15), we arrive at for small \(\varepsilon\) and \((x,t)\in \left( \Omega \times [0,T]\right) \backslash \Gamma ^k(\frac{\delta }{4}),\)

Therefore, we have for \(t\in [0,T]\):

This completes the proof of this lemma. \(\square\)

As a direct consequence of the above lemma, to prove Theorem 1.2, it suffices to prove a lower bound estimate independent of small \(\varepsilon\) and \(t\in [0,T]\) in the phase transition region:

In \(\Gamma ^k(\delta )\), we have \(\partial _z\mathbf {Q}=\sum _{j=0}^4 (\partial _zq_{j}\mathbf {E}_{j}+q_{j}\partial _z\mathbf {E}_{j})\), and then, direct computations lead to

where

Due to Lemma 2.4, we may assume without loss of generality that \(\varphi ^{[k]}(x,t)\) is the signed distance to \(\Gamma _t^k=\{x:\varphi ^{[k]}(x,t)=0\}\) for \(k\ge 1\). Let \(\sigma (x,t)\) be the projection of \(x\) on \(\Gamma _t^k\) along the normal of \(\Gamma _t^k\). Then, the transformation \(x\longmapsto (\varphi ^{[k]}(x,t),\sigma (x,t))\) is a diffeomorphism for small \(\delta\) and \(t\in [0,T]\). Let \(J(\varphi ^{[k]},\sigma )=\det \frac{\partial x^{-1}(\varphi ^{[k]},\sigma )}{\partial (\varphi ^{[k]},\sigma )}\) be the Jacobian of the transformation. Then, \(J|_{\Gamma _t^k}=1\) and \(\frac{\partial J}{\partial \varphi ^{[k]}}|_{\Gamma _t^k}=0.\) Thus

As \(\delta\) is a small fixed positive constant, for convenience, we assume \(\delta =4\) in what follows. Thus, one has for \(t\in [0,T]\):

where \(\mathfrak {M}_{G}\) is the collection of “good terms” which contain \(q_3\) and \(q_4\):

\(\mathfrak {M}_{M}\) represents “mild terms” like \(q_i\partial _zq_j\ (i,j=0,1,2)\):

\(\mathfrak {M}_{B}\) contains “bad terms” given by

In the following lemma, we deal with the integral with Jacobian. The method here is different from (2.14) in [8].

Lemma 4.2

Given a function \(u=u(z,\sigma )\) defined in \((-\frac{1}{\varepsilon }, \frac{1}{\varepsilon })\times \Gamma ^k\), we have

where \(\hat{u}=u J^{\frac{1}{2}}\) and \(C\) is a constant independent of small \(\varepsilon\) and \(u\).

Proof

First, we can observe that

Let \(\hat{u}=\gamma q_{\theta }+p_1\), then

By (3.7), we get

and

Moreover, by (3.29) and Young’s inequality, one has

This gives

which together with (4.25) leads to (4.23). The proof of (4.24) is similar. \(\square\)

4.2 Estimates of \(\mathfrak {M}_G\)

In this subsection, we deal with the “good terms” defined in (4.20). In this and next two subsections, we define \(\hat{q}_{j}=q_{j}J^{\frac{1}{2}}\ (0\le j\le 4)\), and assume the decompositions:

and here, \(p_0\bot q_{\theta }\) and \(p_1,p_2\bot _\omega q_{\kappa }\). Then, \(\Vert \hat{q}_{0}\Vert ^2=\gamma ^2+\Vert p_{0}\Vert ^2\), \(\Vert \hat{q}_{1}\Vert _\omega ^2=\delta ^2+\Vert p_{1}\Vert _\omega ^2\) and \(\Vert \hat{q}_{2}\Vert _\omega ^2=\mu ^2+\Vert p_{2}\Vert _\omega ^2\).

In addition, we easily get \(\partial _{z}\mathbf {n}=\varepsilon \partial _{\varphi ^{[k]}}\mathbf {n}=O(\varepsilon )\). Similarly,

Moreover, noting that \(\partial _{\nabla \varphi }\mathbf {n}\big |_{\varphi =0}=0\) (homogeneous Neumann boundary condition in (1.4)), one has

which further implies that

Now, we start to estimate \(\mathfrak {M}_G\). Due to the decomposition of \(\hat{q}_{1}\), (4.28), and Young’s inequality, we infer that, for small \(\varepsilon\):

According to similar arguments, we have, for small \(\varepsilon\):

Therefore, for small \(\varepsilon\), we have

Furthermore, we easily see that for small \(\varepsilon\)

and

and

Combining (4.30)–(4.33) with (3.30), we immediately find that, for small \(\varepsilon\) and \(t\in [0,T]\):

4.3 Estimates of \(\mathfrak {M}_M\)

In this subsection, we deal with the “mild terms” defined in (4.21).

Step 1. Due to the decompositions of \(\hat{q}_{0}\) and \(\hat{q}_{1}\), (3.7), (4.18), (4.28), and Young’s inequality, we deduce that

and

Here, \(C_1\) is defined in Lemma 3.6, and we have used the following observations:

Similarly, there holds

Step 2. Due to the decomposition of \(\hat{q}_{1}\), (3.23), (3.26), (4.27), and Young’s inequality, we have

and

Consequently, we arrive at

Similarly, we can deduce that

Combining (4.41) with (4.42), we get

and here, we have used the fact \(\mathbf {l}\cdot \partial _z\mathbf {m}+\mathbf {m}\cdot \partial _z\mathbf {l}=\partial _z(\mathbf {l}\cdot \mathbf {m})=0.\)

In conclusion, with the help of (3.29)–(3.30), we have for small \(\varepsilon\) and \(t\in [0,T]\)

4.4 Estimates of \(\mathfrak {M}_B\)

In this subsection, we deal with the “bad terms” defined in (4.22). Based on (4.23), (4.24), (4.34), and (4.44), one has, for small \(\varepsilon\) and \(t\in [0,T]\):

To proceed, we first establish the following conclusions.

Lemma 4.3

For \((x,t)\in \Gamma ^k(1)\), we have

Proof

We only prove (4.46). The remaining results can be proved similarly. For \((x,t)\in \Gamma ^k(1)\subset \Gamma (2)\), as \(|\nabla _xs_{10}(z,x,t)|\le C\), there holds

Then, it follows from (6.14) that

Using the similar arguments, we get

Hence, this lemma is proved. \(\square\)

Due to the decomposition of \(\hat{q}_{0}\), (3.4), (3.5), (6.14), and Young’s inequality, we infer that for small \(\varepsilon\)

Due to the decomposition of \(\hat{q}_{1}\), (3.19), (3.23), (6.14), and Young’s inequality, we deduce that, for small \(\varepsilon\):

Similarly, for small \(\varepsilon\), we have

Finally, we aim to estimate \(\mathfrak {B}_7\) and \(\mathfrak {B}_8\). We only need to consider \(\mathfrak {B}_7\), as \(\mathfrak {B}_8\) can be estimated similarly. Due to the decompositions of \(\hat{q}_{1}\) and \(\hat{q}_{2}\), we have

By (6.14), (3.6), and (3.26), we infer that for small \(\varepsilon\)

From Young’s inequality and Hölder’s inequality, we deduce that

Therefore, it holds

Similarly, we have

Thanks to (4.48)–(4.52), we conclude that for small \(\varepsilon\) and \(t\in [0,T]\)

4.5 Proof of Theorem 1.2

By (4.45) and (4.53), there holds

According to (4.5), (4.19), and (4.54), we have

where C is independent of small \(\varepsilon\) and \(t\in [0,T]\).

Thus, the proof of Theorem 1.2 is completed.

5 Uniform Estimates for the Remainder Equation

This section is devoted to the proof of Theorem 1.3 and Corollary 1.4.

Proof of Theorem 1.3

We consider the error \(\mathbf {Q}_R^{\varepsilon }\triangleq \frac{\mathbf {Q}^{\varepsilon }-\mathbf {Q}_A^{\varepsilon }}{\varepsilon ^m}\) with m determined later. We introduce the energy \(\mathcal {E}(t)=\mathcal {E}_0(t)+\mathcal {E}_1(t)+\mathcal {E}_2(t)\) with

Thanks to the Sobolev embedding, we have

From (1.1) and (1.9), we find that

Multiplying (5.4) by \(\mathbf {Q}_R^{\varepsilon }\) and integrating it over \(\Omega\), we obtain

Applying the spectral condition in Theorem 1.2 and (5.2) to (5.5), we deduce that

Noting that, for \(i\in \{1,2,3\}\):

Then, we have

Using the spectral condition in Theorem 1.2 again, and noting that \(\partial _i\mathbf {Q}_A^{\varepsilon }\sim \frac{1}{\varepsilon }\) and \(\partial _i\mathfrak {R}_k^{\varepsilon }\sim \varepsilon ^{k-2}\), we can deduce that

Based on (5.8), (5.1), and (5.2), we arrive at

Similarly, we obtain

In addition, from (5.10) and (5.1)–(5.3), we infer that

Combining (5.6), (5.9), and (5.11), we get

Taking \(m=9\) and \(k=10\) in (5.12), we then have

From (1.11), we derive that \(\mathcal {E}(0)\le C_2\) for some \(C_2>0\). Define

By (5.13), there exists \(\varepsilon _0>0\) depending on \(C_1,C_2\), and \(T\), such that for \(\varepsilon <\varepsilon _0\):

which implies that

Thus, \(T_1=T\) by a continuity argument. Noting that \(\mathbf {Q}^{\varepsilon }-\mathbf {Q}_A^{\varepsilon }=\varepsilon ^m\mathbf {Q}_R^{\varepsilon }\), we complete the proof of Theorem 1.3.

Remark 5.1

This qualitative conclusion shows that a higher order expansion is necessary. As the proof indicates, the higher order expansion improves the order of decay with respect to \(\varepsilon\) of the term \(\int _{\Omega }(\mathbf {Q}_{B}^{\varepsilon }:\mathbf {Q}_R^{\varepsilon })\mathrm{{d}}x\).

Finally, let us prove Corollary 1.4.

Proof of Corollary 1.4

From (2.6), we deduce that

and

which conclude the proof. \(\square\)

References

Alikakos, N.D., Bates, P.W., Chen, X.: Convergence of the Cahn–Hilliard equation to the Hele-Shaw model. Arch. Ration. Mech. Anal. 128, 165–205 (1994)

Allen, S., Cahn, J.: A microscopic theory for antiphase boundary motion and its application to antiphase domain coarsening. Acta Metall. 27, 1084–1095 (1979)

Bronsard, L., Kohn, R.V.: On the slowness of the phase boundary motion in one space dimension. Commun. Pure Appl. Math. 43, 983–997 (1990)

Bronsard, L., Kohn, R.V.: Motion by mean curvature as the singular limit of Ginzburg–Landau dynamics. J. Differ. Equ. 90, 211–237 (1991)

Bronsard, L., Stoth, B.: The singular limit of a vector-valued reaction–diffusion process. Trans. Am. Math. Soc. 350, 4931–4953 (1998)

Caflisch, R.E.: The fluid dynamic limit of the nonlinear Boltzmann equation. Commun. Pure Appl. Math. 33(5), 651–666 (1980)

Chen, X.: Generation and propagation of interfaces for reaction–diffusion equations. J. Differ. Equ. 96, 116–141 (1992)

Chen, X.: Spectrum for the Allen–Cahn, Cahn–Hilliard, and phase-field equations for generic interfaces. Commun. Partial Differ. Equ. 19, 1371–1395 (1994)

de Mottoni, P., Schatzman, M.: Geometrical evolution of developed interfaces. Trans. Am. Math. Soc. 347, 1533–1589 (1995)

Evans, L.C., Soner, H.M., Souganidis, P.E.: Phase transitions and generalized motion by mean curvature. Commun. Pure Appl. Math. 45, 1097–1123 (1992)

De Gennes, P.G.: Short range order effects in the isotropic phase of nematics and cholesteric. Mol. Cryst. Liq. Cryst. 12, 193–214 (1971)

Fei, M., Wang, W., Zhang, P., Zhang, Z.: Dynamics of the nematic–isotropic sharp interface for the liquid crystal. SIAM J. Appl. Math. 75, 1700–1724 (2015)

Ilmanen, T.: Convergence of the Allen–Cahn equation to Brakke's motion by mean curvature. J. Differ. Geom. 38, 417–461 (1993)

Kamil, S.M., Bhattacharjee, A.K., Adhikari, R., Menon, G.I.: The isotropic–nematic interface with an oblique anchoring condition. J. Chem. Phys. 131, 174701 (2009)

Lin, F.H., Pan, X., Wang, C.: Phase transition for potentials of high-dimensional wells. Commun. Pure Appl. Math. 65, 833–888 (2012)

Majumdar, A., Milewski, P.A., Spicer, A.: Front propagation at the nematic–isotropic transition temperature. SIAM J. Appl. Math. 76, 1296–1320 (2016)

Park, J., Wang, W., Zhang, P., Zhang, Z.: On minimizers for the isotropic–nematic interface problem. Calc. Var. Partial. Differ. Equ. 56, 41 (2017)

Popa-Nita, V., Sluckin, T.J.: Kinetics of the nematic-isotropic interface. J. Phys. II (France) 6, 873–884 (1996)

Popa-Nita, V., Sluckin, T.J., Wheeler, A.A.: Statics and kinetics at the nematic-isotropic interface: effects of biaxiality. J. Phys. II (France) 7, 1225–1243 (1997)

Rubinstein, J., Sternberg, P., Keller, J.: Fast reaction, slow diffusion, and curve shortening. SIAM J. Appl. Math. 49, 116–133 (1989)

Rubinstein, J., Sternberg, P., Keller, J.: Reaction–diffusion processes and evolution to harmonic maps. SIAM J. Appl. Math. 49, 1722–1733 (1989)

Strauss, W.A.: Partial Differential Equations: An Introduction, 2nd edn. Wiley, Hoboken (2008)

Wang, W., Zhang, P., Zhang, Z.: Rigorous derivation from Landau–de Gennes theory to Ericksen-Leslie theory. SIAM J. Math. Anal. 47, 127–158 (2015)

Wang, W., Zhang, P., Zhang, Z.: The small Deborah number limit of the Doi-Onsager equation to the Ericksen–Leslie equation. Commun. Pure Appl. Math. 68, 1326–1398 (2015)

Acknowledgements

M. Fei is partly supported by NSF of China under Grant 11301005 and AHNSF grant 1608085MA13. W. Wang is partly supported by NSF of China under Grant 11501502 and “the Fundamental Research Funds for the Central Universities” No. 2016QNA3004. P. Zhang is partially supported by NSF of China under Grant 11421101 and 11421110001. Z. Zhang is partially supported by NSF of China under Grant 11425103.


Appendices

Appendix 1

1.1 The Leading Order Profile of \(\mathbf {Q}\) Near the Transition Layer

The following equation describes the profile of the leading order of \(\mathbf {Q}\) near the transition layer [see (2.44)]:

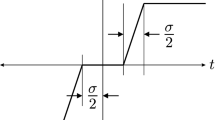

It has a uniaxial solution \(\mathbf {Q}_I^{(0)}(z)=s(z)(\mathbf {n}\mathbf {n}-\frac{1}{3}\mathbf {I})\), with s(z) given by the following:

This equation has an explicit solution

Obviously, \(s'=\sqrt{\frac{c}{3}}s(s_+-s)\) and

Remark 6.1

Here, \(\tanh x=\frac{\mathrm{e}^x-\mathrm{e}^{-x}}{\mathrm{e}^x+\mathrm{e}^{-x}}\) satisfies \(\tanh '' x=2\tanh x(\tanh x-1)(\tanh x+1)\), \(\lim_{x\rightarrow -\infty }\tanh x=-1\) and \(\lim_{x\rightarrow +\infty }\tanh x=1\). More generally, the function \(u(z)=\frac{\beta -\alpha }{2}\left( \frac{\beta +\alpha }{\beta -\alpha }+\tanh (|\beta -\alpha |\sqrt{\frac{\gamma }{8}}(z-z_0))\right)\) satisfies \(u'' =\gamma (u-\frac{\beta +\alpha }{2} )(u-\alpha )(u-\beta )\), \(\lim_{z\rightarrow -\infty }u(z)=\alpha\), \(u(z_0)=\frac{\alpha +\beta }{2}\) and \(\lim_{z\rightarrow +\infty }u(z)=\beta , \ \alpha ,\beta ,z_0\in \mathbb {R},\gamma \in \mathbb {R}^+\).
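The identities in Remark 6.1 can be spot-checked numerically. The following is a minimal sketch: it evaluates \(u\) as written in the remark, approximates \(u''\) by a central difference, and compares it with the cubic right-hand side. The function names, the step size `h`, and the sample parameter values are hypothetical choices made for the illustration.

```python
import math

def u(z, alpha, beta, gamma, z0=0.0):
    """The profile from Remark 6.1 (requires alpha != beta and gamma > 0)."""
    d = beta - alpha
    rate = abs(d) * math.sqrt(gamma / 8.0)
    return 0.5 * d * ((beta + alpha) / d + math.tanh(rate * (z - z0)))

def ode_residual(z, alpha, beta, gamma, z0=0.0, h=1e-3):
    """Central-difference u'' minus gamma (u - (alpha+beta)/2)(u - alpha)(u - beta)."""
    um, u0, up = (u(z + k * h, alpha, beta, gamma, z0) for k in (-1, 0, 1))
    second = (up - 2.0 * u0 + um) / (h * h)
    cubic = gamma * (u0 - 0.5 * (alpha + beta)) * (u0 - alpha) * (u0 - beta)
    return second - cubic

if __name__ == "__main__":
    # alpha = 0, beta = s_+ = 1, gamma = 2c/3 with c = 3 gives the profile s(z).
    for z in (-3.0, -1.0, 0.0, 0.5, 2.0):
        print(z, ode_residual(z, 0.0, 1.0, 2.0))
```

The residuals are limited by the finite-difference error of order \(h^2\), so they should be small but not exactly zero. The case \(\beta <\alpha\) also works because of the absolute value inside the \(\tanh\).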

1.2 Existence of \(\zeta\)

Lemma 6.2

There exists a smooth function\(\zeta\)satisfying (2.38)–(2.39).

Proof

First of all, we have \(s(-z)+s(z)=s_+\). Let \(\chi (x)\) be a smooth non-decreasing function with \(\chi (x)=0\) for \(x\le s_+/5\) and \(\chi (x)=1\) for \(x\ge 4s_+/5.\) In addition, we assume that \(\chi (x)+\chi (s_+-x)=1\) for \(x\in \mathbb {R}\). Define \(\zeta (z)=\chi (s(z))\), and then, \(\zeta (-z)+\zeta (z)=\chi (s(-z))+\chi (s(z))=\chi (s_+-s(z))+\chi (s(z))=1\), which implies that \(\zeta ''\) is odd. As \(s'\) is even, we know that

On the other hand, we have

and

and

then, (2.39) implies that

Let

Then, we have

Thus, there exist smooth non-decreasing functions \(\chi _1(x), \chi _2(x)\) close to \({\bar{\chi }}_1(x), {\bar{\chi }}_2(x)\), such that

Therefore, we can choose \(\chi (x)=a_1\chi _1(x)+(1-a_1)\chi _2(x)\) as a linear combination of \(\chi _1(x)\) and \(\chi _2(x)\), such that

Then, (2.38)–(2.39) are satisfied for \(\zeta (z)=\chi (s(z))\). \(\square\)
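The symmetry step at the start of this proof can be made concrete. The sketch below builds one admissible cutoff \(\chi\) from the standard \(\mathrm{e}^{-1/t}\) construction and checks \(\zeta (-z)+\zeta (z)=1\) for \(\zeta =\chi \circ s\). It is an illustration only: the helper names are hypothetical, the profile is the tanh profile assumed from Remark 6.1 with \(c=3\), \(s_+=1\), and this particular \(\chi\) is not tuned to satisfy the integral conditions (2.38)–(2.39), which in the proof are achieved by the combination \(a_1\chi _1+(1-a_1)\chi _2\).

```python
import math

def smooth_step(t):
    """Smooth non-decreasing step: 0 for t <= 0, 1 for t >= 1, step(t) + step(1 - t) = 1."""
    def g(x):
        return math.exp(-1.0 / x) if x > 0.0 else 0.0
    return g(t) / (g(t) + g(1.0 - t))

def chi(x, s_plus=1.0):
    """Cutoff with chi = 0 for x <= s_+/5, chi = 1 for x >= 4 s_+/5, chi(x) + chi(s_+ - x) = 1."""
    return smooth_step((x - s_plus / 5.0) / (3.0 * s_plus / 5.0))

def s(z, c=3.0, s_plus=1.0):
    """Assumed tanh profile, normalized so that s(-z) + s(z) = s_+."""
    return 0.5 * s_plus * (1.0 + math.tanh(0.5 * math.sqrt(c / 3.0) * s_plus * z))

def zeta(z, c=3.0, s_plus=1.0):
    """zeta(z) = chi(s(z))."""
    return chi(s(z, c, s_plus), s_plus)

if __name__ == "__main__":
    for z in (0.0, 0.7, 2.0, 6.0):
        print(z, zeta(z), zeta(-z), zeta(z) + zeta(-z))
```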

1.3 Differential Operators in the Transition Layer

Let \(X(\hat{x}_1,\hat{x}_2)\in \Gamma\) be the parameterization of \(\Gamma\), and \(\mathbf {n}=\mathbf {n}(\hat{x}_1,\hat{x}_2)=(\mathbf {n}_1,\mathbf {n}_2,\mathbf {n}_3)\) be the unit normal vector to \(\Gamma\). Two tangent vectors to \(\Gamma\) are \(\mathbf {t}_i=\frac{\partial X}{\partial \hat{x}_i}=(\mathbf {t}_{i1},\mathbf {t}_{i2},\mathbf {t}_{i3}),\ i=1,2\). Set \(\hat{x}=(\hat{x}_1,\hat{x}_2)\) and \(\hat{y}= \varphi \in (-2\delta ,2\delta )\). Then, for any \(x\in \Gamma (2\delta )\):

Let \(h(0)\) and \(b\) denote the first fundamental form and the second fundamental form on \(\Gamma\). Then, for \(i,j\in \{1,2\}\):

Moreover, \(h(0)\) is a positive definite matrix.

Define the matrix \(w(\hat{x},\hat{y})=I+\hat{y}bh^{-1}(0)\), where \(I\) is the identity matrix. Direct computations lead to \(\text {det}\,w=1+\text {tr}(bh^{-1}(0))\hat{y}+\text {det}(bh^{-1}(0))\hat{y}^2\), and hence, \(0<C_1\le \text {det}\,w\le C_2\). Moreover, set \(G(\hat{x},\hat{y}):=\text {det}\,h(0)(\text {det}\,w)^2\) and \(\gamma (\hat{x},\hat{y}):=\frac{1}{\text {det}\, w}\frac{\partial \text {det}\, w}{\partial \hat{y}}=\partial _{\hat{y}}(\text {ln}\,\text {det}\,w).\) Then, for a function \(f:\Gamma (2\delta )\rightarrow \mathbb {R},\) we have

and here, \((wh(0))^{ij}\) and \((w^2h(0))^{ij}\) are the entries of the matrix \((wh(0))^{-1}\) and \((w^2h(0))^{-1}.\)
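The determinant expansion of \(w=I+\hat{y}bh^{-1}(0)\) and the definition of \(\gamma\) above can be checked on sample \(2\times 2\) matrices. The following sketch is illustrative only: the helper names and the sample matrices `h0` and `b` are hypothetical, and the closed form used in `gamma_coeff` is simply the \(\hat{y}\)-derivative of the quadratic expression for \(\text {det}\,w\) divided by \(\text {det}\,w\).

```python
import math

def matmul2(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def det2(m):
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def inv2(m):
    d = det2(m)
    return [[m[1][1] / d, -m[0][1] / d], [-m[1][0] / d, m[0][0] / d]]

def det_w_direct(b, h0, y):
    """det(I + y b h0^{-1}) computed from the matrix itself."""
    m = matmul2(b, inv2(h0))
    w = [[1.0 + y * m[0][0], y * m[0][1]], [y * m[1][0], 1.0 + y * m[1][1]]]
    return det2(w)

def det_w_formula(b, h0, y):
    """1 + tr(b h0^{-1}) y + det(b h0^{-1}) y^2."""
    m = matmul2(b, inv2(h0))
    return 1.0 + (m[0][0] + m[1][1]) * y + det2(m) * y * y

def gamma_coeff(b, h0, y):
    """d/dy of ln det w, from the quadratic formula for det w."""
    m = matmul2(b, inv2(h0))
    tr, d = m[0][0] + m[1][1], det2(m)
    return (tr + 2.0 * d * y) / (1.0 + tr * y + d * y * y)

if __name__ == "__main__":
    h0 = [[2.0, 0.5], [0.5, 1.0]]    # sample positive definite first fundamental form
    b = [[0.3, -0.1], [-0.1, 0.7]]   # sample symmetric second fundamental form
    for y in (-0.1, 0.0, 0.05, 0.2):
        print(y, det_w_direct(b, h0, y), det_w_formula(b, h0, y), gamma_coeff(b, h0, y))
```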

1.4 Proofs of Lemmas 2.6–2.8

Proof of Lemma 2.6

By the method of variation of constants, we can solve (2.59) with \(s_{0}(0,x,t)=1\) explicitly as in (2.67). First, we check (2.65) in the case of \(j=0,1,2\) and \(l=m=0\), and other cases can be obtained by the induction argument. Fixing \(z_0\gg 1\), we have for \(z>z_0\)

Since \(s'=\sqrt{\frac{c}{3}}s(s_+-s)\) and \(s''=s(a-\frac{b}{3}s+\frac{2c}{3}s^2)=\frac{2c}{3}s(s-\frac{s_+}{2})(s-s_+)\), we can get

and

Therefore, for \(z\gg 1\)

Noting that

From (6.8), one has

In addition, we have

then there holds

Moreover, one has

Consequently, one has

where the constant C is independent of z, x, t.

Furthermore, due to (2.59), we get

where the constant C is independent of z, x, t.

Second, we check (2.66) in the case of \(j=0,1,2\) and \(l=m=0\); the other cases follow by induction. Note that for \(z<0\) and \(|z|\gg 1\), one has

Since \(s'=\sqrt{\frac{c}{3}}s(s_+-s)\) and \(s''=s(a-\frac{b}{3}s+\frac{2c}{3}s^2)\), we have

Thus,

where the constant C is independent of z, x, and t.

Noting that, for \(z<0\) and \(|z|\gg 1\):

From (6.9), one has

Moreover, we have

and then, there holds

Consequently, one has

where the constant C is independent of z, x, and t. Furthermore, due to (2.59), we get

where the constant C is independent of z, x, and t.

Furthermore, if w satisfies

then \(ws'''-s'w''=0\). Thus, \(ws''-s'w'=0\), and hence \((\frac{w}{s'})'=0\), which implies \(w=0\). Therefore, we obtain the uniqueness of the solution. \(\square\)
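
The two identities for \(s'\) and \(s''\) used in this proof can be verified by hand. The following sketch does so and records what they imply. The abbreviation \(k=\sqrt{c/3}\) and the normalization \(s(0)=s_+/2\) are our assumptions for illustration; the translation fixed in the main text may differ. Differentiating \(s'=ks(s_+-s)\) gives

\[
s''=k(s_+-2s)s'=k^2s(s_+-s)(s_+-2s)=\frac{2c}{3}s\Big (s-\frac{s_+}{2}\Big )(s-s_+).
\]

Expanding the right-hand side and comparing with \(s(a-\frac{b}{3}s+\frac{2c}{3}s^2)\) yields \(a=\frac{c}{3}s_+^2\) and \(b=3cs_+\), so that \(ks_+=\sqrt{a}\). The first-order equation is logistic, and with the normalization above

\[
s(z)=\frac{s_+}{1+e^{-\sqrt{a}z}},\qquad
0<s(z)\le s_+e^{\sqrt{a}z},\qquad
0<s_+-s(z)\le s_+e^{-\sqrt{a}z}.
\]

The profile and its distance to \(s_+\) therefore decay exponentially as \(z\rightarrow -\infty\) and \(z\rightarrow +\infty\), respectively, which is the kind of behavior one would expect behind estimates such as (2.65) and (2.66).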

Proof of Lemma 2.7

Let \(s_{1}(z,x,t)=s(z)r_{1}(z,x,t)\). From (2.60), we need to focus on the following equation for \((x,t)\in \Gamma (\delta )\):

We can easily verify that

satisfies (6.10), and then, \(s_1\) defined in (2.73) satisfies (2.60). Provided that (2.68)–(2.70) hold, we next check the behaviors (2.71) and (2.72). By the induction argument, we only need to check them in the case of \(j=0,1,2\) and \(l=m=0\).

For \(z\gg 1\), one has

which implies that (2.71) holds in the case of \(j=0\) and \(l=m=0\). Similarly, we can deduce that (2.71) holds in the case of \(j=1,2\) and \(l=m=0\).

For fixed \(z_0\ll -1\) and \(z<z_0\), we arrive at

Noting that

which implies that

Therefore,

where the constant C is independent of z, x, t.

By similar arguments, we obtain that, for \(z\ll -1\):

In addition

which implies that

Then, we get

where the constant C is independent of z, x, and t.

Due to (2.60), we get

where the constant C is independent of z, x, t.

Furthermore, if w satisfies

then \(ws''-sw''=0\). Thus, \(ws'-sw'=0\), and hence \((\frac{w}{s})'=0\), which implies \(w=0\). Therefore, we obtain the uniqueness of the solution. \(\square\)
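
The passage from the second-order identity to the first-order one in the uniqueness argument above is a Wronskian-type computation, spelled out here as a sketch. The vanishing of the constant of integration relies on w and \(w'\) remaining bounded as \(z\rightarrow -\infty\), which we assume here. We have

\[
(ws'-sw')'=w's'+ws''-s'w'-sw''=ws''-sw''=0,
\]

so \(ws'-sw'\) is constant in z, and it tends to zero as \(z\rightarrow -\infty\) because \(s,s'\rightarrow 0\) there. Since \(s>0\), it follows that

\[
\Big (\frac{w}{s}\Big )'=\frac{w's-ws'}{s^2}=0,
\]

hence \(w=Cs\) with C independent of z, and \(C=0\) is then forced by the side condition imposed on the solution. The argument at the end of the proof of Lemma 2.6 is the same with s replaced by \(s'\).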

Proof of Lemma 2.8

Since

there exist two linearly independent functions \(\varpi _1(z)\) and \(\varpi _2(z)\), which satisfy

and as \(z\rightarrow -\infty\)

and as \(z\rightarrow +\infty\)

where \(f\sim g\) means \(\frac{f}{g}\rightarrow 1.\)

Using the method of variation of constants, one has

where \(\omega _0=\varpi _1\varpi _2'-\varpi _2\varpi _1'\). In addition, the solution is unique because \(a+\frac{2b}{3}s(z)+\frac{2c}{3}s^2(z)\ge a>0\).

According to the expression of \(s_2(z,x,t)\), we find that

where the constant C is independent of z, x, t. Then, from the maximum principle and the induction argument, we can get (2.76) and

To verify (2.77), we rewrite (2.61) as

Noting that

and

Using the same arguments as in the proof of Lemma 2.7, we can get (2.77). \(\square\)

1.5 Cancellation Lemma

The following lemma is key to dealing with the “bad terms” that arise when proving the spectral condition.

Lemma 6.3

(Cancellation)

Proof

Due to (2.55), one has

Multiplying the above equation by \(s'\), integrating by parts and using (2.39), we get

Notice that \(\kappa (s)=\frac{f(s)}{s}\), and then, \(\kappa '(s)=\frac{\theta (s)s-f(s)}{s^2}\) and \(\kappa '(s)s^2=\theta (s)s-f(s).\) Multiplying (2.55) by s, integrating by parts, and using (2.39) and (2.78), we get

Due to (2.56), one has

Multiplying the above equation by s, integrating by parts and using (2.39) and (2.82), we get

Similarly, we have

This concludes the proof of the lemma. \(\square\)
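
The relation between \(\kappa\), f, and \(\theta\) used in this proof can be illustrated concretely. The sketch below assumes that \(f(s)=as-\frac{b}{3}s^2+\frac{2c}{3}s^3\) and \(\theta (s)=f'(s)\). The profile equation \(s''=s(a-\frac{b}{3}s+\frac{2c}{3}s^2)\) suggests this, but the appendix does not restate it, so it should be read as an assumption. Under it

\[
\kappa (s)=a-\frac{b}{3}s+\frac{2c}{3}s^2,\qquad
\theta (s)=a-\frac{2b}{3}s+2cs^2,\qquad
\kappa '(s)s^2=-\frac{b}{3}s^2+\frac{4c}{3}s^3=\theta (s)s-f(s).
\]

The last identity is the quotient rule applied to \(\kappa =f/s\), in agreement with the formula for \(\kappa '(s)s^2\) used in the proof.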

Appendix 2: More Details on Inner Expansion

1.1 Solving \(\varphi ^{(1)}, p_{11}\), and \(p_{12}\)

In this section, we give a sketch of solving (2.99). The proof is split into three steps.

\(\underline{\mathrm{Step\ 1}}.\) Assume that \(\varphi ^{(1)}\) is known. After simplification, we find that \(p_{11}\) and \(p_{12}\) satisfy the following system:

with the following mixed boundary values:

Here, \(\mu _{01},\ldots, \mu _{09}\) are known terms and are defined by the following:

and

Denote by \(\mathcal {P}\) the operator defined by the following:

\(\underline{\mathrm{Step\ 2}}\). We aim to get \(\varphi ^{(1)}\) in \(\Gamma (\delta )\) from the equation \(\nabla \varphi \cdot \nabla \varphi ^{(1)}=0\) which comes from (2.35). Since \(\Gamma\) is non-characteristic for the equation, we only need to obtain \(\varphi ^{(1)}\) on \(\Gamma\) by solving the following system:

where \(\mu _{010},\ldots, \mu _{014}\) are known terms and are defined by the following:

As in [1] (pp. 200–201), let \(x=X(\sigma ,t), \sigma \in \mathbb {S}^{2}, t\in [0,T]\) be a parameterization of \(\Gamma\). More concretely, we assume that

Let \(\psi ^{(1)}(\sigma ,t)=\varphi ^{(1)}(X(\sigma ,t),t)\). Then, one has

and using (6.7) in “Appendix 1”, we get

Therefore, (7.4) is reduced to the following parabolic equation:

where we used \(\mu _{010}(\sigma ,t)=\mu _{010}(X(\sigma ,t),t),\cdots ,\mu _{014}(\sigma ,t)=\mu _{014}(X(\sigma ,t),t)\) and

Note that \(\mathcal {P}\) defined in (7.3) is bounded from \(L^2(0,T;H^1(\mathbb {S}^{2}))\) to \(L^2(0,T;H^{\frac{1}{2}}(\mathbb {S}^{2}))\). Hence, there exists a solution \(\psi ^{(1)}\in L^2(0,T;H^1(\mathbb {S}^{2}))\) to (7.7), and consequently a solution \((p_{11},p_{12})\in L^2(0,T;H^1(\Omega ^{+}))\) to (7.1). Further arguments show that \(\psi ^{(1)}, p_{11}\) and \(p_{12}\) have higher regularity if \((p_{11}(x,0),p_{12}(x,0))\) is smooth.
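
The reduction to \(\Gamma\) at the beginning of Step 2 can be made explicit in the coordinates \((\hat{x},\hat{y})\) of “Appendix 1”. The sketch below assumes that \(\varphi\) is the signed distance to \(\Gamma\), so that \(|\nabla \varphi |=1\) and \(\nabla \varphi\) is the unit normal extended constantly along normal lines. This matches the choice \(\hat{y}=\varphi\) but is stated here as an assumption. In these coordinates

\[
\nabla \varphi \cdot \nabla \varphi ^{(1)}=\partial _{\hat{y}}\varphi ^{(1)}=0
\quad \Longrightarrow \quad
\varphi ^{(1)}(\hat{x},\hat{y},t)=\varphi ^{(1)}(\hat{x},0,t),\qquad |\hat{y}|<\delta .
\]

Thus \(\varphi ^{(1)}\) is constant along each normal segment and is determined in \(\Gamma (\delta )\) by its values on \(\Gamma\). The surface \(\Gamma\) is non-characteristic because the transport direction \(\nabla \varphi\) satisfies \(\nabla \varphi \cdot \mathbf {n}=1\ne 0\) there.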

\(\underline{\mathrm{Step\ 3}}\). As in [1] (p.178), we extend \(p_{11}\) and \(p_{12}\) in \(\Omega ^{+}\) smoothly to \(\Gamma (\delta )\).

1.2 Solving \(\mathbf {Q}_{+}^{(1)}, \mathbf {P}_{\bot }^{(2)}, \mathbf {Q}_{I}^{(1)}, \mathbf {G}^{(1)}\), and \(\mathbf {Q}_{I,\bot }^{(2)}\)

Once we have determined \(p_{11}\) and \(p_{12}\) (and thus, \(\mathbf {Q}_{+}^{(1)}\) by (2.23)), we can solve \(\mathbf {P}_{\bot }^{(2)}\), or equivalently \(p_{20}, p_{23}\) and \(p_{24}\), from (2.26).

\(\mathbf {Q}_{I,\bot }^{(1)}\) is determined by (2.80). \(\mathbf {Q}_{I,\top }^{(1)}\), or \(\{s_{1j}\}_{j=1}^{2}\), can be obtained using Lemma 2.7 in the case of \(k=0\) to solve (2.56) with boundary conditions (2.58). Thus, \(\mathbf {Q}_{I}^{(1)}\) is solved and satisfies

Proposition 7.1

There holds

where \(j,l,m=0,1,\ldots\) and the positive constant C is independent of z, x, and t.

As a corollary, one immediately has

Corollary 7.2

where \(j,l,m=0,1,\ldots\), \(\mathbf {A}_{1}\) is defined in (2.26) and the positive constant C is independent of z, x, and t.

In order that (2.88), (2.89), and (2.90) hold for \((x,t)\in \Gamma (\delta )\backslash \Gamma\), we define the following:

and

Furthermore, for simplicity, we take \(g_{13}=g_{14}=0\), and thus, we get \(\mathbf {G}^{(1)}\).

Finally, with the help of (2.88), (7.10) and (7.11), we can obtain \(s_{20}, s_{23}\) and \(s_{24}\), and then, \(\mathbf {Q}_{I,\bot }^{(2)}\), by solving (2.84) and (2.86) with the corresponding boundary condition (2.87). Here, we have used the fact that

which is known as we have already solved \(\mathbf {P}_{\bot }^{(2)}\).

1.3 Compatibility Conditions for Solving \(\mathbf {Q}_{I}^{(k+1)}\)

By (2.46), we get

where

Let

Then, the system (7.12) combined with (2.4) (\(i=k+1\)) reduces to the following five ODEs:

with

To solve (7.13)–(7.14), we need to verify that all the terms on their right-hand sides satisfy the compatibility conditions required by Lemmas 2.6–2.7, that is, to guarantee the following compatibility conditions for (7.13)–(7.14):

In what follows, we first ensure that (7.17) and (7.18) hold on \(\Gamma\), which will lead to the evolution law of \(\varphi ^{(k)}\) on \(\Gamma\) and the boundary values of \(p_{k1}\) and \(p_{k2}\) on \(\Gamma\). Then, we ensure that (7.17) and (7.18) hold for \((x,t)\in \Gamma (\delta )\backslash \Gamma\), which leads to the definitions of \(g_{k0}, g_{k1}\), and \(g_{k2}\).

1.4 The Equations for \(\varphi ^{(k)}, p_{k1}\) and \(p_{k2}\) on \(\Gamma\)

It is noted that on \(\Gamma\):

where

which depends on the terms up to \(k-1\) order.

Direct computations yield that on \(\Gamma\)

As \(\varphi =0\) on \(\Gamma\), (7.17) implies that \(\int _{\mathbb {R}}\left( \mathbf {F}^{(k)}:\mathbf {E}_0\right) s'\mathrm{{d}}z=0\) on \(\Gamma\), which is equivalent to

where

In addition, (7.18) implies that \(\int _{\mathbb {R}}\left( \mathbf {F}^{(k)}:\mathbf {E}_j\right) s\mathrm{{d}}z=0\) on \(\Gamma\) for \(j=1,2\), which is equivalent to

where

Furthermore, by induction, \(s_{k1}\) and \(s_{k2}\) can be solved, and the following holds (see (2.95) for \(s_{11}\) and \(s_{12}\)):

Thus, (7.19), (7.20), and (7.21) actually are three equations on \(\Gamma\) for \(\varphi ^{(k)}, p_{k1}\), and \(p_{k2}\).

1.5 Solving \(\varphi ^{(k)},\mathbf {P}_{\top }^{(k)}, \mathbf {P}_\bot ^{(k+1)}, \mathbf {Q}_{I,\top }^{(k)}, \mathbf {G}^{(k)}, \mathbf {Q}_{I,\bot }^{(k+1)}\)

In summary, up to now, we have a nonlinear differential system for \(\varphi ^{(k)}, p_{k1}\), and \(p_{k2}\) as follows:

where the fourth equation comes from (2.35) (\(i=k\)). Solving (7.23) is similar to solving (2.99), and hence, the details are omitted here. In addition, we also extend \(p_{k1}\) and \(p_{k2}\) in \(\Omega ^{+}\) smoothly to \(\Gamma (\delta )\) as in [1] (p.178). Thus, \(\mathbf {P}_\top ^{(k)}\) is solved.

By the induction hypothesis, \(\mathbf {P}_\bot ^{(k)}\) is known, and hence \(\mathbf {Q}_{+}^{(k)}\) is known. Using (2.32) (\(i=k\)), we can get

which implies that \(p_{(k+1)j}\ (j=0,3,4)\) can be solved.

From (7.22), we immediately get \(\{s_{kj}\}_{j=1,2}\), or equivalently, \(\mathbf {Q}_{I,\top }^{(k)}\). In addition, it holds that

Proposition 7.3

There holds

where \(j,l,m=0,1,\ldots\) and the positive constant C is independent of z, x, and t.

As a corollary, one has

Corollary 7.4

where \(j,l,m=0,1,\ldots\), \(\mathbf {A}_{k}\) is defined in (2.32) (\(i=k\)) and the positive constant C is independent of z, x, and t.

In order that (7.17) and (7.18) hold for \((x,t)\in \Gamma (\delta )\backslash \Gamma\), we define the following:

and

Furthermore, for simplicity, we take \(g_{k3}=g_{k4}=0\). Therefore, we arrive at \(\mathbf {G}^{(k)}\).

Now, we focus on solving (7.13) and (7.15) with the corresponding boundary conditions in (7.16) to get \(s_{(k+1)j}\ (j=0,3,4)\), and then, \(\mathbf {Q}_{I,\bot }^{(k+1)}\). For this, we need to check that all the terms on their right-hand sides satisfy the corresponding decay conditions in Lemmas 2.6 and 2.8. By (7.27), the second kind of decay condition holds. For the first kind of decay condition, we only check

since the other cases are similar. In fact, according to (7.26), one has

Moreover, from (2.32) (\(i=k\)), we obtain

Therefore, we have obtained \(\varphi ^{(k)},\mathbf {P}_{\top }^{(k)}, \mathbf {P}_\bot ^{(k+1)}, \mathbf {Q}_{I,\top }^{(k)}, \mathbf {G}^{(k)}\) and \(\mathbf {Q}_{I,\bot }^{(k+1)}\).

Fei, M., Wang, W., Zhang, P. et al. On the Isotropic–Nematic Phase Transition for the Liquid Crystal. Peking Math J 1, 141–219 (2018). https://doi.org/10.1007/s42543-018-0005-3

DOI: https://doi.org/10.1007/s42543-018-0005-3