Abstract

Artificial intelligence (AI) refers to technologies which support the execution of tasks normally requiring human intelligence (e.g., visual perception, speech recognition, or decision-making). Examples of AI systems are chatbots, robots, and autonomous vehicles, all of which have become an important phenomenon in the economy and society. Determining which AI system to trust and which not to trust is critical, because such systems carry out tasks autonomously and influence human decision-making. This growing importance of trust in AI systems has paralleled another trend: the increasing understanding that user personality is related to trust, thereby affecting the acceptance and adoption of AI systems. We developed a framework of user personality and trust in AI systems which distinguishes universal personality traits (e.g., Big Five), specific personality traits (e.g., propensity to trust), general behavioral tendencies (e.g., trust in a specific AI system), and specific behaviors (e.g., adherence to the recommendation of an AI system in a decision-making context). Based on this framework, we reviewed the scientific literature. We analyzed N = 58 empirical studies published in various scientific disciplines and developed a “big picture” view, revealing significant relationships between personality traits and trust in AI systems. However, our review also shows several unexplored research areas. In particular, we found that prescriptive knowledge about how to design trustworthy AI systems as a function of user personality lags far behind descriptive knowledge about the use and trust effects of AI systems. Based on these findings, we discuss possible directions for future research, including adaptive systems as a focus of future design science research.

Introduction

McCarthy et al. (1955) referred to Artificial Intelligence (AI) as “making a machine behave in ways that would be called intelligent if a human were so behaving” (p. 11). In his seminal book on AI history, Nilsson (2010) specifies that intelligence is “that quality that enables an entity to function appropriately and with foresight in its environment [… and] because ‘functioning appropriately and with foresight’ requires so many different capabilities, depending on the environment, we actually have several continua of intelligences” (book preface). Thus, despite the ongoing discussion on AI definitions in the scientific literature (which mainly results from the way intelligence is conceptualized), in recent years AI systems such as chatbots, recommendation agents, autonomous vehicles, and robots have become a dominating topic, both in science and practice (e.g., Berente et al., 2021; Dwivedi et al., 2021). The success of integrating AI systems into society and the economy significantly depends on users’ trust (e.g., Glikson & Woolley, 2020; Jacovi et al., 2021). AI systems increasingly carry out tasks autonomously and significantly influence human decision-making. Hence, determining which AI system to trust and which not to trust has become crucial. Trust in systems and machines which turn out not to be trustworthy may lead to undesired consequences (Lee & Moray, 1992). These may range from unfavorable purchases (e.g., when using recommendation agents in online shops) to death (e.g., when an autonomous vehicle causes a fatal accident). It follows that the decision to trust AI systems always comes with some form of vulnerability. This explains why many novel AI systems are not always embraced by people, despite their enormous potential to make organizations more efficient and human life more comfortable (Collins et al., 2021).
Considering this enormous relevance of users’ trust for the acceptance and adoption of AI systems, it is no surprise that scientific research has studied this phenomenon extensively. In the past decade, several reviews on trust in autonomous systems and AI systems were published (Glikson & Woolley, 2020; Hoff & Bashir, 2015; Siau & Wang, 2018; Thiebes et al., 2021), and also meta-analyses are available (Hancock et al., 2011; Schaefer et al., 2016).

This growing importance of trust in AI systems has paralleled another trend: the increasing relevance of personality research in the fields of AI, robotics, and autonomous systems (Matthews et al., 2021). The American Psychological Association (APA) defines personality as “individual differences in characteristic patterns of thinking, feeling and behaving”.Footnote 1 Other conceptualizations highlight the stability of the characteristics over time. For example, Mount et al. (2005) indicate that personality traits can be defined as “characteristics that are stable over time [… which] provide the reasons for the person’s behavior […] they reflect who we are and in aggregate determine our affective, behavioral, and cognitive style” (pp. 448-449, italics added). In a more recent paper, Montag and Panksepp (2017) define personality as a set of “stable individual differences in cognitive, emotional and motivational aspects of mental states that result in stable behavioral action […] especially emotional tendencies” (p. 1, italics added).

Considering these definitions, it is obvious that human personality and trust tendencies must be related, a fact that also holds true in the specific context of trust in AI systems. However, to date, the role of personality in the context of trust in AI systems has not been investigated in the form of a systematic review of existing empirical evidence. Rather, existing works only briefly mention user personality as a factor related to trust in automation and AI systems (Glikson & Woolley, 2020; Hoff & Bashir, 2015; Siau & Wang, 2018; Thiebes et al., 2021). While this confirms the relevance of this article’s topic, these brief references to user personality do not constitute systematic analysis of the existing knowledge. Moreover, recently Matthews et al. (2021) made the following statement in their paper on personality research in robotics, autonomous systems, and AI: “Person factors in trust in machines have been a relatively neglected aspect of research […]” (p. 3). User personality is a major person factor. In another recent paper, Jacovi et al. (2021) make an explicit call for personality research in the context of trust in AI systems—they write: “Personal Attributes of the Trustor […] Future work in this area may incorporate elements of the personal attributes of the trustor into the model, such as personality” (p. 633).

The fact that personality research in the context of trust in AI systems has not been examined systematically in the form of a review is problematic for at least four reasons.

First, as it has turned out after analyzing the extant literature, the existing empirical studies are published in various scientific disciplines, including computer science and robotics, ergonomics, human-computer interaction, information systems (IS), and psychology. Hence, a cumulative research tradition hardly exists. What follows is that to date a “big-picture” view of user personality and trust in the context of AI systems is not available. Thus, it is a fruitful scientific endeavor to integrate empirical results across discipline boundaries and contexts.

Second, investigation of the development of research at the nexus of user personality and trust in AI systems contributes to identity development in this important interdisciplinary and relatively new field. Based on a review of N = 58 empirical papers published in the period 2008-2021, in the current article we integrate available, but highly fragmented literature that is published in outlets across various scientific disciplines. Examination of a research field’s development may provide valuable insights into the future development. In an essay on the identity of the IS discipline, Klein and Hirschheim (2008) write that “a shared sense of history provides the ultimate grounding and background information (preunderstanding) for communication in large and diverse collectives such as societies (and by extension to diverse disciplines)” (p. 298). Bearing this argument in mind, a main motivation of the present paper is to provide insights into the status of personality research in the context of trust in AI systems, thereby contributing to identity development. Knowing the history of the research field, even if it is short, facilitates identity formation by identification of emergent thematic and methodological patterns. In short, knowing one’s past is important for coping with future challenges (Webster & Watson, 2002).

Third, personality has been identified as a major individual difference variable (along with gender, age, and culture) which affects trust in the context of automation and AI systems, and it has been argued that this fact has far-reaching design consequences (e.g., Hoff & Bashir, 2015). For example, Tapus et al.’s (2008) robot study demonstrated the feasibility of “a behavior adaptation system capable of adjusting its social interaction parameters (e.g., interaction distances/proxemics, speed, and vocal content) […] based on the user’s personality traits and task performance” (p. 169). Importantly, a sound understanding of users’ personality and trust constitutes a foundation for the successful development of adaptive systems. In general, such systems automatically adapt in real time based on users’ states or traits to improve human-technology interactions (e.g., vom Brocke et al., 2020). Development of adaptive systems has become an important topic in IS research (e.g., Astor et al., 2013; Demazure et al., 2021) and also beyond IS, as signified by developments in disciplines like affective computing (e.g., Poria et al., 2017).

Fourth, a major motivation to study personality research in the context of trust in AI systems is that IS research has ignored this personality focus thus far. Maier (2012) and recently Sindermann et al. (2020) reviewed personality research in the IS discipline and report two thematic domains in which personality has played a major role: as antecedent in technology acceptance and adoption decisions, and as characteristic of computer personnel which influences further downstream variables (e.g., willingness to change job). Regarding acceptance and adoption, it is critical to emphasize that the extant IS literature had a focus on traditional applications, including enterprise systems and online shops without any AI component (e.g., Devaraj et al., 2008; McElroy et al., 2007). Therefore, while personality research has its place in the IS discipline, the present paper’s specific thematic focus has not been addressed thus far.

The article is structured as follows. In Sect. 2, we develop a theoretical foundation for the present article. Specifically, we summarize foundations of personality research, as well as major insights into trust in the context of AI systems. In Sect. 3, we outline our review methodology. Sect. 4 presents major results of the review. Sect. 5 discusses the results, identifies several unexplored research areas, and outlines several fruitful directions for future research. In Sect. 6, we specifically discuss design implications and describe the development of adaptive systems as possible focus of future design science research. In Sect. 7, this paper’s limitations and a concluding statement are provided. Altogether, the present article contributes to a better understanding of an increasingly important phenomenon in the digital economy and society, namely the influence of user personality on trust in AI systems, which, in turn, significantly affects the acceptance and adoption decisions. Therefore, the current topic is not only essential from an academic perspective, but also from a practice point of view.

Theoretical foundations

Personality

Human personality refers to stable characteristics that determine individual differences in thoughts, emotions, and behavior (Funder, 2001). Thus, personality affects how people react to all forms of stimuli, including situations in which humans interact with AI systems.

Today, consensus exists that the Five-factor Model (in short, Big Five) constitutes the “broadest level of abstraction” to conceptualize human personality, where “each dimension summarizes a large number of distinct, more specific personality characteristics” (John & Srivastava, 1999, p. 105).Footnote 2 The model was initially described in the 1960s (Tupes & Christal, 1961), and several research groups have since contributed independently to its development (Cattell et al., 1970; Goldberg, 1990; McCrae & Costa, 1987). The model comprises the following five factors: extraversion (“an energetic approach toward the social and material world”, example traits: sociability, activity, assertiveness, positive emotionality), agreeableness (“contrasts a prosocial and communal orientation toward others with antagonism”, example traits: altruism, tender-mindedness, trust), conscientiousness (“describes socially prescribed impulse control that facilitates task- and goal-directed behavior”, example traits: thinking before acting, delaying gratification, following norms and rules, planning, organizing, and prioritizing tasks), neuroticismFootnote 3 (“contrasts emotional stability and even-temperedness with negative emotionality”, example traits: feeling anxious, nervous, sad, tense), and opennessFootnote 4 (“describes the breadth, depth, originality, and complexity of an individual’s mental and experiential life”, example traits: being imaginative, original, insightful, curious) (conceptualizations and examples taken from John et al., 2008, p. 120; for further details, see McCrae & Costa, 1997, 1999).

In addition to the Big Five, further well-known personality models exist. Based on work by Carl Jung (1923), Myers and Briggs developed a personality model referred to as Myers-Briggs Type Indicator (MBTI). In essence, this model dichotomizes four dimensions: extraversion vs. introversion, sensation vs. intuition, thinking vs. feeling, judging vs. perceiving (Myers et al., 1998). Hence, this conceptualization yields $2^4 = 16$ unique personality types. Another framework is the Eysenck personality model which is based on two dimensions: extraversion (E) and neuroticism (N) (Eysenck, 1947). Based on high (H) and low (L) levels of each dimension, four personality types emerge: $E_H + N_H$: choleric type, $E_L + N_H$: melancholic type, $E_H + N_L$: sanguine type, $E_L + N_L$: phlegmatic type. Later, psychoticism was added as a third dimension, which can be divided into impulsivity and sensation-seeking (Eysenck & Eysenck, 1976). The HEXACO model of personality was developed by Ashton et al. (2004) and is based on the work of Costa Jr. and McCrae (1992) and Goldberg (1993). Hence, this model resembles the Big Five framework. It has six dimensions: honesty-humility, emotionality, extraversion, agreeableness, conscientiousness, and openness to experience. Thus, compared to the Big Five, HEXACO has a sixth dimension, namely honesty-humility (with the sub-dimensions sincerity, fairness, greed avoidance, and modesty).Footnote 5 Finally, the Revised NEO Personality Inventory (NEO PI-R) is a model to conceptualize personality based on the Big Five. In addition, this inventory includes six sub-factors for each trait. It follows that this model uses 30 factors to conceptualize personality.
The most recent version of this inventory is referred to as NEO PI-3 (McCrae et al., 2005) and it includes the following factors: extraversion (warmth, gregariousness, assertiveness, activity, excitement seeking, positive emotions), agreeableness (trust, straightforwardness, altruism, compliance, modesty, tender-mindedness), conscientiousness (competence, order, dutifulness, achievement striving, self-discipline, deliberation), neuroticism (anxiety, hostility, depression, self-consciousness, impulsiveness, vulnerability), and openness to experience (fantasy, aesthetics, feelings, actions, ideas, values).
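
The counts behind these models can be checked with a short sketch. The Python snippet below is only an illustration and not part of the reviewed studies: it enumerates the MBTI types from the four dichotomies, maps high and low levels of extraversion and neuroticism to Eysenck's four types, and counts the NEO PI-3 factors listed above. All identifiers (`MBTI_DICHOTOMIES`, `eysenck_type`, `NEO_FACETS`) are hypothetical names chosen for this example.

```python
from itertools import product

# MBTI: four dichotomies; a type picks exactly one pole from each.
MBTI_DICHOTOMIES = [
    ("extraversion", "introversion"),
    ("sensation", "intuition"),
    ("thinking", "feeling"),
    ("judging", "perceiving"),
]
mbti_types = list(product(*MBTI_DICHOTOMIES))  # 2 * 2 * 2 * 2 combinations

# Eysenck: (level of extraversion, level of neuroticism) -> type.
EYSENCK_TYPES = {
    ("high", "high"): "choleric",
    ("low", "high"): "melancholic",
    ("high", "low"): "sanguine",
    ("low", "low"): "phlegmatic",
}


def eysenck_type(extraversion: str, neuroticism: str) -> str:
    """Look up the Eysenck type for 'high'/'low' levels of E and N."""
    return EYSENCK_TYPES[(extraversion, neuroticism)]


# NEO PI-3: six sub-factors for each of the five traits.
NEO_FACETS = {
    "extraversion": ["warmth", "gregariousness", "assertiveness",
                     "activity", "excitement seeking", "positive emotions"],
    "agreeableness": ["trust", "straightforwardness", "altruism",
                      "compliance", "modesty", "tender-mindedness"],
    "conscientiousness": ["competence", "order", "dutifulness",
                          "achievement striving", "self-discipline",
                          "deliberation"],
    "neuroticism": ["anxiety", "hostility", "depression",
                    "self-consciousness", "impulsiveness", "vulnerability"],
    "openness to experience": ["fantasy", "aesthetics", "feelings",
                               "actions", "ideas", "values"],
}
neo_facet_count = sum(len(facets) for facets in NEO_FACETS.values())

print(len(mbti_types), eysenck_type("high", "low"), neo_facet_count)
```

Note that trust appears here as a sub-factor of agreeableness, which is the link between universal and specific traits that the framework in this article builds on.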

Trust

In situations of interpersonal interaction, trust is defined as “the willingness of a party to be vulnerable to the actions of another party based on the expectation that the other will perform a particular action important to the trustor, irrespective of the ability to monitor or control that other party” (Mayer et al., 1995, p. 712). Moreover, it is an established fact that trustworthiness beliefs are significantly influenced by perceptions about the trustee’s ability, benevolence, and integrity (e.g., Mayer et al., 1995; Rousseau et al., 1998). However, trust in machines, computers, and AI systems differs from interpersonal trust. In a recent paper, Riedl (2021) analyzed work by the research groups of David Gefen (e.g., Paravastu et al., 2014) and Harrison McKnight (e.g., Lankton et al., 2015), two well-known IS scholars with a particular focus on trust research, and concludes that more appropriate trusting beliefs in human-technology relationships are performance and functionality (instead of ability), helpfulness (instead of benevolence), and predictability and reliability (instead of integrity).Footnote 6 This conclusion is consistent with works in (e.g., Siau & Wang, 2018; Söllner et al., 2012; Thiebes et al., 2021) and outside (e.g., Hancock et al., 2011; Hoff & Bashir, 2015; Glikson & Woolley, 2020) the IS discipline.Footnote 7

Disposition to trust (synonyms: trust propensity, interpersonal propensity to trust) also plays a major role in explaining behavioral intentions and actual behavior. McKnight et al. (1998) define disposition to trust as “a tendency to be willing to depend on others […] across a broad spectrum of situations and persons” (p. 474, 477). Disposition to trust is typically conceptualized as an antecedent of more situation-specific trust (e.g., Hoff & Bashir, 2015; McKnight et al., 1998). Thus, whether a user develops trusting beliefs and trusting intentions in, and shows actual trusting behavior toward, an IT artifact (e.g., AI system) is influenced by trust disposition. McKnight et al. (1998) further decompose disposition to trust into faith in humanity (defined as “one believes that others are typically well-meaning and reliable”, p. 477) and trusting stance (defined as “one believes that, regardless of whether people are reliable or not, one will obtain better interpersonal outcomes by dealing with people as though they are well-meaning and reliable”, p. 477). Complementing McKnight et al.’s (1998) work, Gefen (2000) argues that trust disposition “is not based upon experience with or knowledge of a specific trusted party […] but is the result of an ongoing lifelong experience […] and socialization […] disposition to trust is most effective in the initiation phases of a relationship when the parties are still mostly unfamiliar with each other […] and before extensive ongoing relationships provide a necessary background for the formation of other trust-building beliefs, such as integrity, benevolence, and ability” (p. 728). Consistent with these views, Mayer et al. (1995) argued that “[p]ropensity to trust is proposed to be a stable within-party factor that will affect the likelihood the party will trust […] People with different developmental experiences, personality types, and cultural backgrounds vary in their propensity to trust” (p. 715).

Framework of personality and trust in AI systems

A fundamental question is how personality is related to trust, and we focus on this important question in the context of AI systems. Moreover, it is essential to understand how both personality and trust affect acceptance and adoption.

Propensity to trust is considered to be a specific trait of agreeableness (e.g., John & Srivastava, 1999; John et al., 2008; McCrae & Costa, 1997, 1999).Footnote 8 It follows that the more specific personality trait propensity to trust can be deduced from the more abstract trait agreeableness. This fact is consistent with discourse in the literature which indicates that personality traits can be conceptualized on different abstraction levels, both in psychology (e.g., McCrae et al., 2005) and IS (e.g., Maier, 2012). Regarding abstraction levels of personality, John and Srivastava (1999) wrote with reference to the Big Five that “these five dimensions represent personality at the broadest level of abstraction, and each dimension summarizes a large number of distinct, more specific personality characteristics” (p. 105). Models like those from Myers-Briggs, Eysenck, or HEXACO conceptualize personality on a similarly high abstraction level. However, the advantage of broad categories comes with a disadvantage, namely “low fidelity” (John & Srivastava, 1999, p. 124). Decades ago, it was already argued in the personality literature that the choice of abstraction level depends on the descriptive and predictive tasks to be addressed (Hampson et al., 1987), and “[i]n principle, the number of specific distinctions one can make in the description of an individual is infinite, limited only by one’s objectives” (John & Srivastava, 1999, p. 124).

Against this background, we devise a framework of personality and trust in AI systems with different abstraction levels. In personality research, it is well-established to include—in addition to the broad category on the highest abstraction level—at least one further level with more specific personality traits, which, in turn, manifest in general behavioral tendencies and more specific behaviors (e.g., Funder, 2001; John & Srivastava, 1999; McCrae et al., 2005).

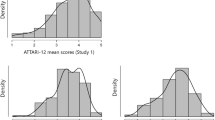

Figure 1 shows a framework of personality and trust in AI systems. This framework draws upon the logic of existing conceptualizations and models at the nexus of personality and behavior (Funder, 2001; John & Srivastava, 1999; McCrae et al., 2005; Mooradian et al., 2006). In essence, the framework specifies universal personality traits (e.g., Big Five), specific personality traits (e.g., interpersonal propensity to trust), general behavioral tendencies (e.g., trust in a specific AI system such as autonomous vehicles or speech assistants like Alexa or Siri), and specific behaviors (e.g., adherence to the recommendation of an AI system in a decision-making context).

Altogether, our framework draws upon the empirically-grounded facts that (i) personality is related to behavioral tendencies and specific behaviors and (ii) universal personality traits are related to specific personality traits (e.g., Zimbardo et al., 2021). We will refer to the framework in Fig. 1 in the following sections. First, we use the distinction between universal and specific personality traits to code the scientific literature. Second, our discussion of possible future research domains is also based on this framework.

Methodology of the literature review

Literature search

To identify publications at the nexus of user personality and trust in the context of AI systems, we conducted a literature search. The search process was based on existing recommendations, in particular vom Brocke et al. (2009). The search was conducted via Web of Science, Scopus, IEEE Xplore, and AIS eLibrary; it started on 11/28/2021, and the last query was made on 12/31/2021. No publication year restriction was applied to any of the searches.

Step 1: Because AI systems may have different manifestations, we used several keywords. Specifically, we used the following keywords: “trust”, “personalit*”, “individual difference”, “artificial intelligence”, “AI”, “machine learning”, “autonom*”, “agent”, “bot”, “chatbot”, and “robot” (Web of Science, Scopus, IEEE Xplore). For the search in the AIS eLibrary, we used “trust”, “personalit*”, and “individual difference”.Footnote 9

This search method resulted in the following number of hits: Web of Science = 39, Scopus = 327,Footnote 10 IEEE Xplore = 14, AIS eLibrary = 7. Next, we removed duplicates (as the four databases are not mutually exclusive) and read all abstracts of the remaining articles, and if necessary also the full text, to identify papers eligible for our review. We applied the following inclusion criteria: (1) the article is empirical (i.e., it presents collection, analysis, and interpretation of data), (2) the focus is on user personality, and not on the personality of the AI system,Footnote 11 (3) the article deals with at least one aspect of human personality (e.g., extraversion) in the context of trust in AI systems, (4) the paper constitutes a peer-reviewed journal or conference publication, and (5) the article is written in English. After applying these inclusion criteria, 43 papers remained. Next, we read the full texts of all 43 papers and finally decided to consider them all in our review.

Step 2: Based on the 43 papers, we also conducted a backward search. The same five inclusion criteria were applied as in Step 1. Based on this procedure, we identified an additional 15 papers, all of which were ultimately considered eligible for our review.Footnote 12 Hence, the total number of papers included in our review is N = 58.Footnote 13 Fig. 2 graphically summarizes the literature search process. Appendix 1 lists the 58 references.
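
The two search steps can be sketched as a small screening routine. The Python snippet below is a minimal illustration under stated assumptions, not the procedure actually used: the `Record` fields and the title-based duplicate rule in `deduplicate` are hypothetical, since the text does not say how duplicates were matched or how many were removed. Only the hit counts, the five inclusion criteria, and the paper counts (43, 15, 58) come from the description above.

```python
from dataclasses import dataclass

# Hits per database as reported for the first search step.
HITS = {"Web of Science": 39, "Scopus": 327, "IEEE Xplore": 14, "AIS eLibrary": 7}


@dataclass(frozen=True)
class Record:
    """Hypothetical screening record; all field names are assumptions."""
    title: str
    is_empirical: bool               # inclusion criterion (1)
    user_personality_focus: bool     # inclusion criterion (2)
    trait_in_ai_trust_context: bool  # inclusion criterion (3)
    peer_reviewed: bool              # inclusion criterion (4)
    language: str                    # inclusion criterion (5)


def deduplicate(records):
    """Keep the first record per normalized title (an assumed matching rule)."""
    seen, unique = set(), []
    for record in records:
        key = " ".join(record.title.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def is_eligible(record):
    """Return True only if all five inclusion criteria hold."""
    return (
        record.is_empirical
        and record.user_personality_focus
        and record.trait_in_ai_trust_context
        and record.peer_reviewed
        and record.language == "English"
    )


total_hits = sum(HITS.values())   # raw hits, duplicates still included
after_screening = 43              # papers remaining after the first step
from_backward_search = 15         # papers added by the backward search
final_sample = after_screening + from_backward_search

print(total_hits, final_sample)
```

The raw hit total still contains duplicates across the four databases, so it should not be read as the number of abstracts that were screened.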

Literature coding

To systematically document the existing knowledge on the relationship between user personality and trust in the context of AI systems, as well as meta-information (e.g., publication year), we extracted the following data:

(1) General information about the paper: (a) authors, (b) title, (c) publication year, (d) type of outlet (journal, conference), and (e) scientific discipline, in which a paper appeared. Due to the interdisciplinary nature of the current topic, we defined the following discipline categories ex-ante: (i) Computer Science, Informatics, Robotics, (ii) Ergonomics, Human Factors, (iii) Human-Computer Interaction, (iv) IS, (v) Psychology, and (vi) other.

(2) Investigated system: Because AI systems manifest in various forms, we used different categories, namely: (a) robot (including robot simulations), (b) autonomous vehicle (including in-vehicle agents), (c) user interface (e.g., chatbot), (d) automated agents in a security context (e.g., airport), (e) AI-driven healthcare systems, (f) autonomous system (general), and (g) other (e.g., voice assistant, intelligent tutoring). The categories were developed inductively based on the coded material.

(3) Research method: We grouped the empirical examinations into one of the following categories: (a) lab experiment, (b) survey study (typically conducted online), (c) online experiment, (d) lab study (in contrast to the lab experiment, this type of study does not manipulate an independent variable to systematically study the resulting effects on a dependent variable, but simply observes use of a technology in a laboratory environment). The categories were developed inductively based on the coded material.

(4) Sample characteristics: We documented (a) sample size, (b) mean age of the sample, (c) a sample’s gender distribution, and (d) country of data collection.

(5) Personality traits: We documented all investigated personality traits. We distinguished between (a) universal and (b) specific traits. In the category (a), we included (i) papers which investigated all Big Five traits together and (ii) one specific Big Five trait. Moreover, we captured other broad personality conceptualizations, namely (iii) Myers-Briggs type indicator, (iv) Eysenck’s model, (v) HEXACO, and (vi) NEO-PI-3. We defined these universal categories ex-ante. In the category (b), we documented all specific traits (e.g., trust propensity) which we found in the analyzed literature.

(6) Theoretical framework: We documented whether a paper included a theoretical framework in the form of a graphical representation (i.e., constructs and relationships). While the minimum requirement for a theory to exist is at least one independent and one dependent variable, our data analysis revealed that most papers in which a framework was available used more sophisticated theorizing. Specifically, we documented whether the investigated personality construct(s), as well as the trust construct, were conceptualized as independent, mediator, moderator, or dependent variable. Moreover, we documented all dependent variable(s) of a framework (e.g., adoption intention of an AI system).

(7) Relationship of personality traits with trust in AI system: We documented statistically significant relationships between universal traits, as well as specific traits, and trust in AI system. We emphasize that these relationships are typically those which the authors of a paper explicitly highlighted in the abstract and/or the discussion section of a paper. Based on this procedure, we guarantee a focus on the most important results from the perspective of the papers’ authors.
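
The coding scheme above maps naturally onto a structured record. The Python sketch below shows one possible representation, assuming one record per paper; the class and field names (`CodedPaper`, `share_female`, `trust_role`, and so on) are hypothetical and do not reflect the coding sheet actually used. The example record contains placeholder values only and does not describe any reviewed paper.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class Discipline(Enum):
    COMPUTER_SCIENCE = "Computer Science, Informatics, Robotics"
    ERGONOMICS = "Ergonomics, Human Factors"
    HCI = "Human-Computer Interaction"
    IS = "IS"
    PSYCHOLOGY = "Psychology"
    OTHER = "other"


class Method(Enum):
    LAB_EXPERIMENT = "lab experiment"
    SURVEY = "survey study"
    ONLINE_EXPERIMENT = "online experiment"
    LAB_STUDY = "lab study"


class Role(Enum):
    INDEPENDENT = "independent"
    MEDIATOR = "mediator"
    MODERATOR = "moderator"
    DEPENDENT = "dependent"


@dataclass
class CodedPaper:
    # (1) General information about the paper
    authors: str
    title: str
    year: int
    outlet_type: str                 # "journal" or "conference"
    discipline: Discipline
    # (2) Investigated system, e.g. "robot" or "autonomous vehicle"
    system: str
    # (3) Research method
    method: Method
    # (4) Sample characteristics (None when not reported)
    sample_size: Optional[int] = None
    mean_age: Optional[float] = None
    share_female: Optional[float] = None
    country: Optional[str] = None
    # (5) Personality traits, split into universal and specific traits
    universal_traits: List[str] = field(default_factory=list)
    specific_traits: List[str] = field(default_factory=list)
    # (6) Theoretical framework and the role of the trust construct in it
    has_framework: bool = False
    trust_role: Optional[Role] = None
    # (7) Significant relationships: trait -> direction ("positive"/"negative")
    significant_relationships: Dict[str, str] = field(default_factory=dict)


# Placeholder record for illustration only.
example = CodedPaper(
    authors="Placeholder et al.",
    title="Placeholder title",
    year=2021,
    outlet_type="journal",
    discipline=Discipline.IS,
    system="robot",
    method=Method.SURVEY,
    specific_traits=["trust propensity"],
)
```

Keeping universal and specific traits in separate fields mirrors the distinction used to code the literature, which makes later tallies per trait level straightforward.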

Results

We structure the presentation of our results into two sub-sections. We start with the descriptive results (i.e., points (1) to (4) as listed in the previous literature coding section), followed by our findings on the investigated personality traits and underlying theoretical models (points (5) to (7) in the list).

Descriptive results

Appendix 1 lists the 58 references. Appendix 2 summarizes the major characteristics of these 58 papers and their underlying studies. The 58 papers report 64 studies. Based on the information in Appendices 1 and 2, Table 1 summarizes descriptive statistics of our review. Specifically, we report publication year, the type of outlet in which the papers are published, and the scientific discipline in which a paper appeared.Footnote 14 Moreover, we document the investigated technology, research method, and country of data collection. For further sample characteristics (i.e., sample size, mean age of the sample, and rate of females in the sample) we refer the reader to Appendix 2.

Findings on personality traits

Table 2 summarizes our findings on the investigated personality traits, separated into universal and specific traits.

Regarding the universal traits, we observed that 18 out of the 58 papers studied the Big Five. Moreover, in 11 further papers, single Big Five traits were studied. While extraversion was studied in 5 papers, neuroticism was studied in 3, agreeableness in 2, and openness in 1 paper, respectively. No article had a focus on conscientiousness alone. Moreover, the results show that other general personality models only play a minor role in the extant literature. Specifically, the Myers-Briggs and Eysenck models, as well as HEXACO and NEO-PI-3, have only been applied once each.

Table 2 further indicates that we identified 33 specific personality traits.Footnote 15 We list all traits which were studied in at least two papers (i.e., 12 traits).Footnote 16 We found that trust propensity (i.e., the disposition to trust other people) and dispositional trust in automation/machines are the most studied specific personality traits (in 9 papers each). Also, locus of control plays a significant role in the literature (studied in 7 papers). Locus of control is the degree to which people believe that they have control over the outcome of events (as opposed to external forces beyond their influence); this locus can either be internal (a person believes that he or she can control their own life) or external (the belief that factors which cannot be influenced control life) (Rotter, 1966, 1990). Both attitude towards computers/robots and self-efficacy (the degree to which people believe that they can accomplish a particular task or activity; Bandura, 1977; Gist, 1987) were studied in 5 papers each. The following traits were examined in 3 papers each: need for cognition (“an individual’s tendency to engage in and enjoy effortful cognitive endeavors”, Cacioppo et al., 1984, p. 306), perfect automation expectation (“expectations that the automated aid [e.g., autonomous vehicle] will perform with near-perfect reliability”, Merritt et al., 2015, p. 740),Footnote 17 propensity to take risks, and sensation seeking (“the tendency to seek novel, varied, complex, and intense sensations and experiences and the willingness to take risks for the sake of such experiences”, Choi & Ji, 2015, p. 694). Finally, need for interaction and self-esteem were studied in 2 papers each. Need for interaction is a person’s preference to stay in touch with other people during the use of a service or application (Dabholkar, 1992). Self-esteem is defined as “the level of global regard one has for the self as a person” (Harter, 1993, p. 88).

Regarding the availability of theoretical frameworks, we found graphical representations with constructs and relationships in 19 out of the 58 papers.Footnote 18 Table 3 summarizes the results of our analyses. As shown in Table 3, we found that 18 out of the 19 papers conceptualize personality traits as independent variable. Another major result is that trust is conceptualized as independent variable in 3 papers only. However, trust is much more often conceptualized either as mediator (7 times) or as dependent variable (11 times). Table 3 further shows that personality traits are rarely conceptualized as mediator (2 times) or moderator (3 times). Also, we observe that in studies in which trust is not the dependent variable, often behavioral intention to use an AI system is used as outcome variable.

Regarding the relationship between personality traits and trust in AI systems, a first notable result was that many of the hypothesized relationships in the analyzed papers were not statistically significant. This concerns both the Big Five traits and the more specific traits. However, we also observed relationships which turned out to be stable across the analyzed papers.

With respect to the Big Five, we found the following results:

- Agreeableness: 9 papers found a positive relationship with trust in AI systems (Bawack et al. 2021, Böckle et al. 2021, Chien et al. 2016, Ferronato & Bashir 2020a, Huang et al. 2020, Kraus et al. 2020a, Lyons et al. 2020, Müller et al. 2019, Rossi et al. 2018).

- Openness: 8 papers found a positive relationship with trust in AI systems (Aliasghari et al. 2021, Antes et al. 2021, Böckle et al. 2021, Elson et al. 2020, Ferronato & Bashir 2020a, Oksanen et al. 2020, Schaefer & Straub 2016, Zhang et al. 2020).

- Extraversion: 5 papers found a positive relationship with trust in AI systems (Böckle et al. 2021, Haring et al. 2013, Kraus et al. 2020a, Merritt & Ilgen 2008, Müller et al. 2019), while 1 paper found a negative relationship (Ferronato & Bashir 2020a). One further study found that the relationship between extraversion and trust is more complex. Elson et al. (2018) write: “Individuals high in extraversion initially rated their trust in the agent as higher than those low in extraversion. During [... further interaction] rounds, the agent gave a recommendation contrary to what was expected in highly confident conditions [...] trust in the agent increased compared to the previous round for individual’s low in extraversion while trust in the agent decreased compared to the previous round for individuals high in extraversion [...] extraverts appeared to have their trust most dramatically affected by the conditions where the agent gave an incorrect response in the highly confident condition” (p. 436). What follows is that the level of trust in an AI system is influenced by an interaction of the degree of extraversion and whether an expectation regarding decision outcome is fulfilled.

- Conscientiousness: 3 papers found a positive relationship with trust in AI systems (Bawack et al. 2021, Chien et al. 2016, Rossi et al. 2018), while 2 papers found a negative relationship (Aliasghari et al. 2021, Oksanen et al. 2020).

- Neuroticism: 3 papers found a negative relationship with trust in AI systems (Kraus et al. 2020a, Sharan & Romano 2020, Zhang et al. 2020).

With respect to specific personality traits, we observed a positive relationship of the following constructs with trust in AI systems:

- trust propensity (Aliasghari et al. 2021, Huang & Bashir 2017, Rossi et al. 2018),

- perfect automation expectation (Lyons & Guznov 2019, Lyons et al. 2020, Matthews et al., 2020),

- dispositional trust in automation/robots (Kraus et al. 2020a, Merritt & Ilgen 2008),

- technological innovativeness (Handrich 2021),

- sensation seeking (Zhang et al. 2020), and

- self-esteem (Kraus et al. 2020a).

Moreover, we observed a negative relationship between trust in AI systems and the following traits:

- internal locus of control (Chiou et al. 2021, Sharan & Romano 2020),

- negative attitude towards computers/robots (Matthews et al., 2020, Miller et al. 2021), and

- propensity to take risks (Ferronato & Bashir 2020b).

To sum up, our review of the relationship between universal personality traits and trust in AI systems indicates that for three factors out of the Big Five (agreeableness, openness, extraversion) high values positively affect trust, while high neuroticism values negatively affect trust. With respect to conscientiousness, evidence is mixed and hence no clear statement on the relationship between this personality trait and trust in AI systems can be made based on the current research status. However, it is critical to consider that the reported results are valid only in an “all-other-things-being-equal” sense. As an example, an individual with high agreeableness trusts an AI system more than an individual with low agreeableness, but only if the two individuals do not differ in the other four personality traits (we reflect on this finding in the following Discussion section). Regarding the relationship between specific personality traits and trust in AI systems, we found that research more frequently established a positive relationship (seven factors) than a negative relationship (three factors). However, in contrast to Big Five research, the total number of available studies on most specific personality traits is lower and hence the reported results should be replicated.

Discussion and future research directions

We structure our discussion into two sub-sections. We start with a discussion of the descriptive results (as summarized in Table 1), followed by a discussion of the findings on personality traits (as summarized in Tables 2 and 3). This discussion also includes the identification of unexplored research areas and an outline of possible directions for future research.

Descriptive results

Regarding publication year, we identified the first studies in 2008 (Cramer et al. 2008; Merritt & Ilgen 2008). Since then, only a limited number of studies were published up until the recent past. Notably, while 29 out of the 58 identified papers were published in the period 2008-2019, the remaining 29 studies were published in 2020 and 2021. Thus, in the very recent past we observe a significant increase in empirical research at the nexus of user personality and trust in the context of AI systems, signifying the sharply rising academic interest in the topic.

Regarding publication outlet, we found a near balance between conference papers (30 papers) and journal publications (27 papers). Considering that in several of the disciplines in which the investigated studies were published, conference publications are of high scientific value (e.g., computer science, HCI), we consider this balance a “good sign”, predominantly because articles in conference proceedings make research results quickly available (while review processes for journals typically take much longer). Yet, this statement should not be interpreted as an argument against journal articles. Rather, the observed balance is a state that is also desirable in the future.

Regarding scientific discipline, we identified computer science, informatics, and robotics as the dominant group (18 papers), followed by ergonomics and human factors (13 papers), and HCI (11 papers). Information systems (IS, 8 papers) and psychology (4 papers) contributed less frequently to the current body of research. A major explanation for this observation is that the dominating disciplines have already concentrated research efforts on personality, trust, and intelligent systems in the past decade, while IS and psychology did not. However, considering that recent AI articles published in mainstream IS outlets (e.g., Berente et al., 2021, MIS Quarterly) explicitly refer to trust as a “managerial issue” (p. 1440), and that three of the four identified psychology papers recently appeared in a widely visible publication outlet, namely in Frontiers in Psychology (Kraus et al. 2020b, Miller et al. 2021, Oksanen et al. 2020), we foresee that the disciplines of IS and psychology will also contribute more research in the future. Considering that it is unlikely that the currently dominating disciplines will slow down their publication output (Matthews et al., 2021), it is very likely that research at the nexus of user personality and trust in the context of AI systems will experience an upward tendency in the future.

Regarding investigated systems, our analyses revealed that robot research (including robot simulations) is by far dominant (20 papers), followed by autonomous vehicle studies (12 papers) and user interface examinations (e.g., chatbots) (11 papers). Further domains identified by our review are automated agents in the security context (e.g., airports) (7 papers), AI-driven healthcare systems (3 papers), as well as autonomous systems and AI in general (3 papers), along with some other systems such as voice assistants (3 papers). At least to some extent, this finding reflects the historical fact that computer science, informatics, and robotics, as well as ergonomics and human factors, heavily focused their research on robots (e.g., Rossi et al. 2020) and autonomous vehicles (e.g., Tenhundfeld et al. 2020). However, as a consequence of the foreseen rise of IS and psychological research, AI systems embedded into user interfaces (e.g., automated decision-support in the business context), as well as intelligent health systems and home systems (e.g., speech assistants), will likely gain in importance in the future (see, for example, a recent MIS Quarterly special issue, No. 3, September 2021). We explicitly make a call for studies in these under-researched domains.

Furthermore, regarding investigated systems, we observed that the 58 papers remained vague in the reporting of the studies’ task instructions. It follows that an inherent limitation of the analyzed articles is that it is not clear whether the identified influences of personality traits on trust in AI systems are indeed specific to AI systems or hold for IT systems in general. Considering the idiosyncrasies of AI systems and their possible influences on trust (e.g., Siau & Wang, 2018; Thiebes et al., 2021), future studies should report their task instructions in detail (ideally verbatim) in order to be better able to disentangle the specific AI effects from the more general IT effects. Such a description of task instructions should also include the definition of AI that was provided to the study participants, if one was provided at all.Footnote 19

Regarding applied research method, we observe that except for some qualitative interviews which are reported as a “by-product” of larger quantitative studies (e.g., Sarkar et al., 2017),Footnote 20 the current body of research almost entirely used quantitative methods. The dominant method is the laboratory experiment (22 papers), followed by survey studies (18 papers), online experiment (14 papers), and a few laboratory studies without experimental manipulation (6 papers). We see three major implications.

First, despite the uncontested value of quantitative research which is rooted in the positivist paradigm (e.g., Chen & Hirschheim, 2004), other epistemological positions and methods, particularly interpretivism and qualitative methods (e.g., Walsham, 1995), should become more important in the future. Such an interpretivist stance is often deeply rooted in a hermeneutic tradition, thereby being of a fundamentally idiographic nature. Such research (e.g., interpreting interview data on individuals’ perceptions of their interaction with AI systems and theory building), therefore, has the objective of determining “patterns” and “richness in reality” (Mason et al., 1997, p. 308).

Second, detailed analysis of the identified online experiments revealed that these studies typically used Amazon Mechanical Turk (MTurk) for data collection. MTurk is a platform that offers access to a geographically dispersed set of respondents. While such a sample “can be more representative and diverse than locally collected samples […] respondents tend to be relatively young, digitally savvy adults” (Antes et al. 2021, p. 3). It follows that future research should replicate existing research findings with more representative populations. This call for future studies is substantiated by the fact that attitude towards AI systems (e.g., Nam, 2019), trust (e.g., Sutter & Kocher, 2007), and personality (e.g., Ashton & Lee, 2016) are related to age. As indicated in Appendix 2 (see table “mean age (years)”), we identified information on participant age in 60 out of the 64 studies. The average age of participants, or the dominating age group in studies in which the average age was not reported, is >40 years in only 5 studies (Matsui 2021: 44.6 y, Rossi et al. 2020: 61 y, Sorrentiono et al. 2021: 83.33 y, Voinescu et al. 2018: 67.52 y, Youn & Jin 2021: 40.8 y). Therefore, the findings of the current review are predominantly valid for younger age groups. Future research should more often include older participants. This call is substantiated by the fact that older people are increasingly affected by AI systems, as signified by the example of socially assistive robots (e.g., Sorrentiono et al. 2021).

Third, during the paper screening and selection process (see Fig. 2) we encountered a paper by Mühl et al. (2020) who used neurophysiological measurement (i.e., skin conductance) as a complement to self-reports. However, because this paper studied passengers’ preferences and trust while being driven by a human driver and an intelligent vehicle without any focus on user personality, we did not consider this article in our body of analyzed literature. It follows that no single article in our sample of N = 58 papers used neurophysiological measurement. Importantly, research has demonstrated “associations between personality traits and the structure and function of the nervous system” (Funder, 2001, p. 206). Thus, we consider the application of neuroscientific measurement techniques as a promising methodological avenue for future studies. In this respect, the study by Mühl et al. (2020) could be a promising starting point. This call for the use of neuroscience approaches is substantiated by two research developments: first, the increasingly important role of neuroscience approaches in IS research, referred to as NeuroIS (e.g., Dimoka et al., 2012; Riedl & Léger, 2016), and second, the increasing evidence that personality traits have a strong evolutionary, affective, and hence biological component (e.g., Montag et al., 2016; Montag & Panksepp, 2017).

Regarding country of data collection, the most striking result is that in 30 out of 60 studies in which the country is reported, data gathering took place in the United States. Then, the category “mix of different countries” follows. This category comprises MTurk studies in which subjects from more than one country participated. Six studies collected data in Germany, followed by the UK (4 studies), Canada (3 studies), Italy, Australia, and Japan (2 studies each), as well as three further countries in which one study was conducted (China, Korea, South Africa). The major implication of this finding is that more future studies should be carried out outside the United States. This call is substantiated by evidence showing that personality and culture are related (e.g., Leung & Cohen, 2011; McCrae, 2000). One study in our sample investigated the relationship between trust attitudes towards automated digital technologies, Hofstede’s cultural dimensions, and the Big Five personality traits (Chien et al. 2016). Reflecting on their results, Chien et al. write: “the U.S. population had the highest trust score and Turkish group scored the lowest, with Taiwanese population falling in between [...] Evaluations of the inter-relational aspects of personality and general trust showed that an individual with a high trait of agreeableness or conscientiousness had increased trust in automation” (p. 845). Thus, culture should be a construct of interest in future research. This call for future studies is substantiated by long existing calls for more cultural studies in a seminal psychology paper. Funder (2001) writes: “[the] direction is to try to distinguish between the psychological elements that are shared by all cultures (etics) and those that are distinctive to particular cultures (emics) […] The big five have been offered as possible etics” (p. 203). 
Against this background, fruitful avenues for future research could be to examine the possible relationship between culture and personality traits in the context of trust in AI systems.

A seminal paper by Hofstede and McCrae (2004) could serve as a starting point.Footnote 21 They found that several culture dimensions are related to the Big Five; specifically, they report the following statistically significant correlationsFootnote 22: power distance (“the extent to which the less powerful members of organizations and institutions […] accept and expect that power is distributed unequally”) correlates with extraversion (−), conscientiousness (+), and openness (−); uncertainty avoidance (“a society’s tolerance for ambiguity [… indicating] to what extent a culture programs its members to feel either uncomfortable or comfortable in unstructured situations [… which] are novel, unknown, surprising, and different than usual”) correlates with neuroticism (+) and agreeableness (−); individualism (“the degree to which individuals are integrated into groups [… and in] individualist societies, the ties between individuals are loose: everyone is expected to look after himself or herself and his or her immediate family”) correlates with extraversion (+); finally, masculinity (“the distribution of emotional roles between the sexes […] women’s values differ less among societies than men’s values [… and] men’s values vary along a dimension from very assertive and competitive and maximally different from women’s values on one side to modest and caring and similar to women’s values on the other”) correlates with openness (+), neuroticism (+), and agreeableness (−). This existing knowledge should be used in future studies on trust in AI systems.

Findings on personality traits

Regarding the universal personality traits, we found that the Big Five were studied extensively, while other general personality models were not. Thus, future research should consider these other models more frequently. In particular, we recommend future studies based on HEXACO (Ashton et al., 2004) and NEO-PI-3 (McCrae et al., 2005), as these models are both related to the Big Five and relatively novel frameworks in the personality literature (if compared to Myers-Briggs’ and Eysenck’s models). In our sample of analyzed papers, Müller et al. (2019) used HEXACO to study personality and trust in the context of human-chatbot interaction. Moreover, Rossi et al. (2020) used NEO-PI-3 to examine human interaction with socially assistive robots in a simulated home environment. These two studies may serve as a starting point for future research.

Regarding the theoretical conceptualization of personality and trust in studies on adoption and interaction with AI systems (see Table 3), our review reveals two dominating causal chains. First, personality is conceptualized as an independent variable and trust in the AI system as a dependent variable (e.g., Elson et al. 2020). Second, personality is conceptualized as an independent variable, trust in the AI system as a mediator variable, and another outcome such as behavioral intention to adopt the AI system is conceptualized as a dependent variable (Handrich 2021). Figure 3 graphically summarizes these two theoretical mechanisms, and we added a third one. This additional mechanism describes a fruitful future research focus in which emotions and affective state are conceptualized as a consequence of personality traits and as an antecedent of trust in an AI system, which in turn influences behavioral intention. A major motivation for consideration of this additional mechanism is the fact that emotions and affect have become critical constructs in IS research in general (e.g., Zhang, 2013) and in AI system adoption research in particular (e.g., Kraus et al. 2020b, Miller et al. 2021).

The Merriam-Webster Dictionary defines affect as “a set of observable manifestations of an experienced emotion: the facial expressions, gestures, postures, vocal intonations, etc., that typically accompany an emotion”.Footnote 23 Thus, emotions are closely related to physiological processes, followed by observable consequences of these processes—the affect. Interestingly, despite a few notable exceptions such as Kraus et al.’s (2020b) work on automated driving or Miller et al.’s (2021) work on human-robot interaction, emotions and affect hardly play a role in the analyzed literature. Thus, the role of emotions and affect has hardly been established in the present research context.

In Fig. 3 (bottom), we suggest positioning emotions and affective state as a consequence of personality traits and as an antecedent of trust, which in turn influences further downstream variables, such as behavioral intention to adopt an AI system. This positioning is consistent with the theoretical frameworks in Kraus et al. (2020b) and Miller et al. (2021). For example, Miller et al. (2021) write: “Besides user dispositions, users’ emotional states during the familiarization with a robot are a potential source of variance for robot trust. As the experience of emotional states has been shown to be considerably affected by personal dispositions, this research proposes a general mediation mechanism from the effects of user dispositions on trust in automation by user states […] focus[ing] on state anxiety as a specific affective state, which is expected to explain interindividual differences in trust in robots […] State anxiety is defined as “subjective, consciously perceived feelings of apprehension and tension, accompanied by or associated with activation or arousal of the autonomic nervous system” […]” (p. 5). Future empirical research should directly test this causal chain.Footnote 24 Methodologically, we recommend two things: first, to complement existing personality measurement instruments (e.g., Big Five) with the Affective Neuroscience Personality Scales (ANPS) (Montag et al., 2021; Orri et al., 2017; Reuter et al., 2017), and second, to consider measuring emotions based on neurophysiological measurement (NeuroIS). The major argument for this suggestion is that trusting beliefs in and attitudes towards AI systems are not only influenced by conscious perceptions and thoughts (which would imply the use of self-report measurement). Rather, they are also influenced by unconscious perceptions and information processing which cannot be captured based on self-reports alone (e.g., Dimoka et al., 2012; Riedl & Léger, 2016; vom Brocke et al., 2020). 
Thus, future research should consider measuring emotions, and measurement should include both self-report and neuroscience instruments.

As outlined in detail in the Results section, we examined the Big Five’s influence on trust in AI systems. In essence, overwhelming evidence shows that both agreeableness and openness positively affect trust. Moreover, while the evidence is less compelling if compared to agreeableness and openness, there is still a clear tendency that extraversion also positively affects trust. Regarding neuroticism, the findings are also clear-cut. Less neurotic people exhibit more trust in AI systems. However, this finding is based on fewer studies if compared to the other Big Five traits.

Finally, regarding the influence of conscientiousness on trust in AI systems, the evidence is mixed. While some studies (Bawack et al. 2021, Chien et al. 2016, Rossi et al. 2018) found a positive relationship, other studies found a negative relationship (Aliasghari et al. 2021, Oksanen et al. 2020). For example, based on the context of a domestic robot for food preparation, Aliasghari et al. (2021) found that more conscientious people trusted the robot less, and hence were more likely to rely on themselves as cook or on a restaurant. In contrast, another study which was carried out in the context of AI-based voice shopping (e.g., Amazon Echo) found a positive relationship between conscientiousness and trust (Bawack et al. 2021). What follows is that variation in the AI system (domestic robot vs. AI-based voice assistant) and/or in the task (food preparation vs. shopping) may have caused the different relationships between the personality trait conscientiousness and trust. Thus, it is a fruitful scientific endeavor to study more systematically the influence of specific AI systems and/or the technology-supported tasks (as moderators) on the relationship between personality (independent variable) and trust (dependent variable).

We highlight that trust can vary strongly depending on what kind of AI system a user is dealing with. For example, highly critical AI systems such as medical AI systems would be harder to trust than non-critical systems such as voice assistants or recommender systems (due to the potential harm they might cause in case of a malfunction or a poor system design). Thus, despite the fact that the current research status suggests a direct relationship between the personality traits agreeableness (positive), openness (positive), extraversion (positive), and neuroticism (negative) and trust in AI systems, more research is necessary to determine whether these findings hold in the context of highly critical AI systems. Importantly, no paper in our sample of 58 empirical articles chose the context of highly critical AI systems like those in the medical domain. Thus, today we do not know whether the current results may be generalized to this context. Also, other boundary conditions should be considered in future research, including user experience and computer self-efficacy (Matthews et al., 2021).

Moreover, the kind of collaboration process should be considered in future studies. In essence, decision automation should be distinguished from scenarios where AI acts as a decision support system. Our analysis of the extant literature (see Appendix 2, column “Context”) indicates that the available literature predominantly dealt with decision support systems rather than complete decision automation. Thus, the influence of personality traits on trust in AI systems which fully automate decisions should be studied more frequently in the future. Several researchers are aware of this necessary distinction; however, they have not yet considered this distinction in their research designs. As an example, Tenhundfeld et al. (2020) studied trust in automated parking based on a Tesla vehicle. However, they highlight that their research focus was “partially automated parking” (p. 194, italics added) and not complete decision automation.Footnote 25

Despite the fact that current knowledge on the relationship between the Big Five traits and trust in an AI system is substantial, the practical implication of this knowledge is limited. Every human can be characterized as a configuration of the Big Five values. In the simplest case in which each trait is assumed to be either high or low we have 2^5 = 32 personality types. However, the existing body of literature does not focus on investigation of these types’ influence on trust. Rather, the extant literature established a trait’s individual relationship with trust in a specific AI system. This kind of knowledge is limited as it only allows for trust prediction for some configurations. Based on the present review’s knowledge, a person with profile1 would trust an AI system more than a person with profile2 (initial letters of the Big Five, “↑” indicates a high level, while “↓” indicates a low level): profile1 = A↑ + O↑ + E↑ + N↓ + C↑, profile2 = A↓ + O↓ + E↓ + N↑ + C↑. However, what would be the prediction for the following configuration: profile3 = A↑ + O↓ + E↑ + N↑ + C↑? Based on the insights presented in the extant literature, it would be hardly possible to make a sound prediction. However, one paper in the analyzed literature used latent profile analysis to study trust in chatbots (e.g., Alexa). Based on this technique and the HEXACO model, Müller et al. (2019) not only identified extraversion, agreeableness, and honesty-humility as the three most relevant traits for trust prediction, but also determined specific personality profiles with predictive power for trust: “introverted, careless, distrusting user”, “conscientious, curious, trusting user”, and “careless, dishonest, trusting user” (p. 35). More research of this sort is necessary in the future.
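The limitation described above can be made concrete with a minimal sketch. It is not a method used in the reviewed literature; it only assumes that the review's summary is reduced to a direction per trait (positive for agreeableness, openness, and extraversion; negative for neuroticism; mixed for conscientiousness) and that one profile can be ranked above another only if every trait on which they differ points the same way. The names `DIRECTION`, `all_profiles`, and `compare` are hypothetical and introduced for illustration only.

```python
from itertools import combinations, product

# Assumed encoding of the review's summary: +1 = high values related to more
# trust, -1 = high values related to less trust, 0 = mixed evidence.
DIRECTION = {"A": +1, "O": +1, "E": +1, "N": -1, "C": 0}
TRAITS = tuple(DIRECTION)


def all_profiles():
    """Enumerate the 2^5 = 32 high/low configurations (True = high)."""
    return [dict(zip(TRAITS, levels))
            for levels in product([True, False], repeat=len(TRAITS))]


def compare(p, q):
    """Return +1 if p is predicted to trust more than q, -1 if less,
    0 if the profiles are identical, and None if no prediction follows
    from trait-by-trait directions alone (illustrative rule, see lead-in)."""
    favours_p = favours_q = False
    for t in TRAITS:
        if p[t] == q[t]:
            continue
        if DIRECTION[t] == 0:
            return None  # profiles differ on a trait with mixed evidence
        # p is favoured if it is high on a positive trait or low on a negative one
        if (p[t] and DIRECTION[t] > 0) or (not p[t] and DIRECTION[t] < 0):
            favours_p = True
        else:
            favours_q = True
    if favours_p and favours_q:
        return None  # differences point in opposite directions
    if favours_p:
        return 1
    if favours_q:
        return -1
    return 0


profile1 = {"A": True, "O": True, "E": True, "N": False, "C": True}
profile2 = {"A": False, "O": False, "E": False, "N": True, "C": True}
profile3 = {"A": True, "O": False, "E": True, "N": True, "C": True}
# Hypothetical comparison profile, not taken from the text above.
profile4 = {"A": False, "O": True, "E": True, "N": True, "C": True}

pairs = list(combinations(all_profiles(), 2))
decidable = sum(1 for p, q in pairs if compare(p, q) is not None)

print(compare(profile1, profile2))  # profile1 vs. profile2
print(compare(profile3, profile4))  # differences point in opposite directions
print(decidable, "of", len(pairs), "profile pairs can be ranked")
```

Under these assumptions, only 130 of the 496 possible profile pairs can be ranked, and even those rankings say nothing about the absolute trust level of a single profile or about effect sizes, which is the kind of gap that profile-based analyses such as latent profile analysis address.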

Regarding specific personality traits, we found some clear-cut results. First, trust propensity (Aliasghari et al. 2021, Huang & Bashir 2017, Rossi et al. 2018), perfect automation expectation (Lyons & Guznov 2019, Lyons et al. 2020, Matthews et al., 2020), and dispositional trust in automation/robots (Kraus et al. 2020a, Merritt & Ilgen 2008) positively affect situational trust in a specific AI system. Moreover, we found the following traits to also positively affect this trust: self-efficacy, technological innovativeness, sensation seeking, and self-esteem. However, as indicated in the Results section, because only a very limited number of studies have examined these constructs, future research should replicate these findings before more definitive conclusions can be drawn.

Two specific traits turned out to be negative predictors of trust in AI systems (at least two independent studies observed this effect for both factors): negative attitude towards computers/robots (Matthews et al., 2020, Miller et al. 2021) and internal locus of control (Chiou et al. 2021, Sharan & Romano 2020). It is highly plausible that people with a negative attitude towards computers and robots generally exhibit little situational trust in a specific AI system. Regarding locus of control, evidence shows that people who believe that they can control their own lives (rather than being controlled by external forces) exhibit less trust towards AI systems. This finding is also plausible because a strong belief in one’s own ability to control life may come along with less trust in everything else which could control one’s life, either other humans or technological artifacts like AI-based systems. The study by Choi & Ji (2015) provides another interesting rationale for this finding; based on an autonomous vehicle context they write: “locus of control […] selected as driving-related personality factor […] External locus of control significantly influenced behavior. This result demonstrated that someone who experiences difficulty in driving, such as older adults, has greater intention to use the autonomous vehicles” (p. 699). It follows that people who believe that they do not have sufficient capabilities and skills to interact with or handle an intelligent system (= no internal locus of control) trust an intelligent system because there is no alternative.

Overall, a major conclusion of the current review is that today only a limited number of specific personality traits were studied sufficiently so that solid knowledge exists (i.e., trust propensity, perfect automation expectation, and dispositional trust in automation/robots positively affect trust in AI systems, while negative attitude towards computers/robots and internal locus of control have a negative effect). However, more research should be conducted on the remaining traits which we found in our literature analysis (as described in detail in the column “Specific traits” in Appendix 2) as well as further traits which are described elsewhere (Matthews et al., 2021).

Our call for more research on specific personality traits is substantiated by the fact that the Big Five do not subsume “all there is to say about personality” (Funder, 2001, p. 200). As an example, consider an authoritarian personality (Adorno et al., 1950). Funder (2001) indicates that such a personality “would be high on conscientiousness and low on agreeableness and openness […] but much would be lost if we tried to reduce our understanding of authoritarianism to these three dimensions” (p. 201). It follows that while specific personality traits are definitely related to more abstract traits such as the Big Five, they feature additional information. Therefore, we reiterate for the domain of trust in AI systems what Funder (2001) already declared more than two decades ago with reference to the Big Five, namely that researchers should not be “seduced by convenience [… and] act as if they can obtain a complete portrait of personality by grabbing five quick ratings” (p. 201).

As indicated in Table 3 and Fig. 3 (see “Trust as Mediator”), some studies used behavioral intention, or adoption intention, as dependent variable. Thus, these studies provide first insights on how personality analysis can be used to improve the adoption process of AI systems. One study, for example, indicates that system transparency (e.g., “I believe that I can form a mental model and predict future behavior of the autonomous vehicle.”), technical competence (e.g., “I believe that I can depend and rely on the autonomous vehicle.”), and situation management (e.g., “I believe that the autonomous vehicle will provide adequate, effective, and responsive help.”) positively affected trust, which, in turn, increased behavioral intention to use an autonomous vehicle (Choi & Ji, 2015). In another study, it is reported that need for interaction, self-efficacy, technological innovativeness, and novelty seeking positively affected trust, which, in turn, reduced innovation resistance and increased adoption intention of intelligent personal assistants like Alexa (Handrich, 2021). These and further studies (Cohen & Sergay, 2011; Hegner et al., 2019; Zhang et al., 2020) constitute a starting point for future research that aims to develop recommendations on how to improve the adoption process of AI systems based on user personality knowledge. As signified by the examples above, current research suggests, if considered as collective evidence, that the interaction of personality traits (either the Big Five or specific traits like those investigated by Handrich, 2021) with system properties (like those investigated by Choi & Ji, 2015) determines trust. In addition to system properties, task demands (e.g., workload in partly automated tasks) should also be considered. This need is substantiated by Szalma and Taylor’s (2011) work. Based on significant empirical evidence, they write: “[T]he impact of automation and task demand on participant response depends to a substantial degree on human personality traits. A practical implication is that the identification of specific profiles to predict human–automation interaction is unlikely to be useful as a generic selection measure […] the complexity of the interactive effects suggest[s] that for selection tools to be effective, the optimal trait profiles would need to be developed separately for each level, type, and perhaps even domain of application of automation” (p. 91). We make a call for future studies on such interaction effects and their consequences for adoption decisions.

Design implications and adaptive systems as possible future design science research focus

Design science research has evolved as a major academic paradigm in the IS discipline, which aims to design innovative and useful IT artifacts (e.g., Hevner et al., 2004; March & Smith, 1995; Peffers et al., 2008). A major question in the current research context is how the presented empirical research can inform the design of AI systems in order to increase trust. In essence, though the 58 reviewed works have made valuable contributions to the literature (predominantly from a theoretical and empirical point of view, i.e., descriptive knowledge), the authors did not have an explicit intent to systematically conduct their research as design science research projects, nor was that the result. What follows is that concrete design implications (i.e., a form of prescriptive knowledge) of the current body of knowledge are limited (see Footnote 26). This finding of the present review is consistent with a recent observation by Ahmad et al. (2022) who indicate that “previous studies mainly employ empirical methods to describe behaviors in interaction with these systems and consequently generate descriptive knowledge about the use and effectiveness of personality-adaptivity CAs [Conversational Agents, a specific type of AI system]. In addition […] there is still a research gap in providing prescriptive knowledge about how to design a PACA [personality-adaptive conversational agent] to improve interactions with users” (p. 3).

The analyzed literature does offer some abstract design implications. Yet, these implications typically do not go beyond the recommendations which are already documented in existing conceptual papers or review works (e.g., Siau & Wang, 2018). This constitutes a major limitation of the current body of knowledge which should be addressed in future research. As an example, in one of our 58 analyzed papers, Aliasghari et al. (2021) found, among other things, that “the participants lost trust in the robot […] when the robot made an error after it seemed to have learned the task by making no mistakes [… and] personality traits of the participants […] affected some aspects of their trust in the robot” (p. 87). A design recommendation that would result from such an empirical finding is trivial: Developers should design AI systems which do not make errors. In a conceptual paper, Siau and Wang (2018) already indicated several years before the publication of the Aliasghari et al. study that trust “must be nurtured and maintained [… which] happens through […] competence of AI in completing tasks and finishing tasks in a consistent and reliable manner [… and] there should be no unexpected downtime or crashes” (p. 51) (see Footnote 27). Against the background of our finding that concrete design recommendations are hardly available in the current body of knowledge, we make a call for future IS design science research in the context of user personality and trust in AI systems. Such research can be expected to yield more concrete design recommendations.

As a starting point for future research, consider the following example within the mentioned domain of system errors. Foremost, it is not possible to create AI systems that do not make errors, regardless of whether they are classical knowledge-based systems, are trained using machine learning algorithms, or are hybrids (see Footnote 28). The aim must therefore be to prepare users for the possibility that errors occur and to show them how to cope with such errors (e.g., by implementing cross-check procedures or using an explanation component) (e.g., Shin, 2021). Also, expectation management is critical. Hence, it is important that the claims coming with an AI system are realistic because the gap between expectations and perceived delivery predominantly determines perceived quality and related trust perceptions (e.g., Jiang et al., 2002). However, today no scientific knowledge exists on how specific personality types perceive different preparation procedures, coping strategies, or expectation management approaches, nor do we know how effective these procedures, strategies, and approaches are regarding trust as a function of user personality. This research gap should be closed in the future. Szalma and Taylor’s (2011, p. 93) “general guidelines for incorporating individual differences into the design of human-technology interfaces” could serve as a starting point for corresponding design science research.

Moreover, a future design science research program should explicitly consider the development of adaptive systems. Recently, Matthews et al. (2021) substantiated the need for adaptive systems. They argued that task analysis is “a crucial step in the design of any system and its interfaces [… and] there should be an analogous ‘person analysis’”—moreover, they foresee “individuation in design” and “adaptive technologies” because “conventional ‘static’ products offer little or no flexibility to accommodate variation in personality and individual differences once designed” (p. 7). Against this background, future studies should contribute to the conceptualization and development of such adaptive systems. Based on publications in the literature on real-time adaptive interventions (for an overview paper, see Nahum-Shani et al., 2018), we define such adaptive systems as a technology aiming to support the execution of a task by adapting to a user’s personality, as well as to resulting neurophysiological states and behavior.

Imagine a situation in which a user interacts with an AI system. The AI system could be a chatbot or recommender system which is presented on the computer screen. In principle, however, the basic idea of adaptive systems also applies to other AI systems such as robots or autonomous vehicles. First, the system must be capable of recording the user state (e.g., emotions), its own state, context, and behavioral user data (e.g., preferences via system input in recommender systems). Second, the system must integrate this stream of data to derive the user’s personality profile (either universal personality traits like the Big Five or specific personality traits like propensity to take risks or computer anxiety). Third, based on the personality profile the AI system adapts in real-time in order to maximize the user’s trust in the system (foundations of such adaptive systems can be found, for example, in Byrne & Parasuraman, 1996, Harriott et al., 2018, or Picard, 1997).
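To make the three steps more tangible, the following minimal Python sketch illustrates one possible record, infer, and adapt loop. It is an illustration under strong assumptions and not an implementation taken from the reviewed literature: the names `Observation`, `PersonalityProfile`, `infer_profile`, and `adapt_interface` are hypothetical, the signals (an arousal proxy and the use of an explanation component) are arbitrary examples, and the weighting and threshold are placeholder values without empirical validation.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Observation:
    """Step 1: one recorded data point (all fields are illustrative assumptions)."""
    arousal: float             # user state proxy in [0, 1], e.g., from physiological sensing
    opened_explanation: bool   # behavioral data: did the user open the explanation component?


@dataclass
class PersonalityProfile:
    """Estimated specific trait; computer anxiety is used only as an example."""
    computer_anxiety: float    # in [0, 1]


def infer_profile(observations):
    """Step 2: integrate the data stream into a trait estimate.

    Toy heuristic (assumption): equal weighting of mean arousal and the
    share of interactions in which the explanation component was opened.
    """
    if not observations:
        raise ValueError("at least one observation is required")
    avg_arousal = mean(o.arousal for o in observations)
    explanation_rate = mean(1.0 if o.opened_explanation else 0.0 for o in observations)
    return PersonalityProfile(computer_anxiety=0.5 * avg_arousal + 0.5 * explanation_rate)


def adapt_interface(profile, threshold=0.6):
    """Step 3: choose system behavior based on the profile.

    The threshold is a placeholder; whether these adaptations actually
    increase trust is exactly the open research question.
    """
    anxious = profile.computer_anxiety >= threshold
    return {"show_explanations": anxious, "confirm_before_acting": anxious}


if __name__ == "__main__":
    stream = [Observation(0.9, True), Observation(0.7, True)]
    profile = infer_profile(stream)
    print(profile, adapt_interface(profile))
```

In a real system, the inference step would rely on validated personality measures or trained models, and the adaptation step would be evaluated empirically with respect to its effect on trust.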

It is critical to stress that research has already demonstrated the feasibility of adaptive systems based on user personality. Table 4 provides a summary of example works which could serve as a starting point for future design science research projects. Thus, despite the challenges which come along with the development of adaptive systems (e.g., Adam et al., 2017; Picard, 2003; vom Brocke et al., 2020), we foresee a prosperous development of research in this important domain. This positive outlook is substantiated by recent research which has demonstrated the feasibility of unobtrusive real-time measurement of trust in machines based on physiological measurement (Akash et al., 2018; Walker et al., 2019). Importantly, the development of such adaptive systems must not ignore the legal, ethical, organizational, and social aspects, which need to be explored in future studies. Because the development of adaptive systems is a highly interdisciplinary phenomenon, we expect a number of different scientific disciplines to contribute to this research (e.g., IS, informatics, organization science, and psychology). However, considering the IS discipline’s successful history in interdisciplinary research endeavors, IS researchers could take a lead role in this area.

Limitations and concluding statement

In the present article, we reviewed 58 papers published in the period 2008–2021. To the best of our knowledge, this analysis is the first systematic review of the scientific literature on the relationship between personality traits and trust in the context of AI systems. Yet, the present review has limitations: First, while we can safely assume that the literature basis of the current review is extensive (because we searched for literature with several keywords and in four databases, including several hundred relevant journals and conference proceedings), there is no formal measure to prove completeness. Second, while we consider our literature collection and analysis approaches rigorous, our interpretation of the findings, and especially the formulation of future research implications, is predominantly interpretive. Yet, because we report the analyzed literature in a very detailed way (see also Appendix 2), other researchers can directly draw upon our basis of 58 papers to complement or revise the current interpretations. Thus, while we consider our review a systematic documentation of the research status on personality and trust in the context of AI systems, we hope that this article instigates further research and serves as a “starting point” rather than the “final word”. It will be rewarding to see what insights future research will reveal.

Change history

19 November 2023

Missing year, volume, and pages have been added to the article header.

Notes

https://www.apa.org/topics/personality (accessed on January 16, 2022).

Emotional instability is used as a synonym in the scientific literature.

Intellect is used as a synonym in the scientific literature.

It is important to note that while the other five dimensions resemble the Big Five, they are not perfect matches.

Based on Riedl (2021, Table 3.I.), we define the following terms (verbatim): Performance is the belief about the capability of the technology to accomplish its designated purpose. Functionality is the belief that the technology has the capability, functions, or features to fulfil the requirements. Helpfulness is the belief that the specific technology provides adequate and responsive help for users. Predictability is the belief that the technology will do what it is claimed to do without adding anything malicious on top of it. Reliability is the belief that the technology will consistently operate properly.

Note that trusting beliefs in the context of automation technology and autonomous systems may also refer to purpose (defined as “the degree to which the automation is being used within the realm of the designer’s intent”) and process (defined as “the degree to which the automation’s algorithms are appropriate for the situation and able to achieve the operator’s goals”) (Lee & See, 2004, p. 59). For further details, please see a recent paper by Thiebes et al. (2021) who mapped trusting beliefs (performance, etc.) and AI frameworks (e.g., OECD principles on AI) to five trustworthy AI principles (beneficence, non-maleficence, autonomy, justice, and explicability).