Abstract

The objective of this study was to develop a method for exposing and elucidating ethical issues with human cognitive enhancement (HCE). The method is intended to support and facilitate open and transparent deliberation and decision making with respect to this emerging technology, which has great potential formative implications for individuals and society. A literature search was performed to identify relevant approaches. Conventional content analysis of the identified papers and methods was used to assess their suitability for assessing HCE according to four selection criteria. Based on three existing approaches in health technology assessment, a method for exposing and elucidating ethical issues in the assessment of HCE technologies was developed. The method was amended after a pilot test on smart-glasses. It consists of six steps and a guiding list of 43 questions, and it provides the groundwork for context-specific ethical assessment and analysis. Widespread use, amendments, and further developments of the method are encouraged.

Introduction

Human cognitive enhancement (HCE) technology is a group of emergent technologies that aim at altering (improving) cognitive capacities, such as attention, concentration, memory, and reasoning. A wide range of emerging technologies for HCE is making its way from mice to men (Bostrom and Sandberg 2009; Farah 2015). HCE technologies can be categorized in many ways (van Est et al. 2012; Baldwin et al. 2013). One suggestion is to differentiate between internal hardware, such as biological modifications (genetic modifications, surgery, tissue engineering, pharmaceutical or nutritional interventions and neural implants), internal software, such as mental training, and external hardware and software, such as external tools and methods to enhance memory (Sandberg and Bostrom 2006).

HCE technologies are special as they may not only change a person’s ability to reason and reflect, but also the person’s self-conception and identity, and the way that person socialises. As such, the technology has significant potential implications for individuals and society, which raise a series of ethical challenges which are discussed in the abundant literature (Butcher 2003; Cakic 2009; Chatterjee 2013; Forlini et al. 2013; Gaucher et al. 2013; Goodman 2014; Gyngell and Easteal 2015; Harris 2011; Lanni et al. 2008; Mordacci 2014; Sahakian and Morein-Zamir 2011; Santoni de Sio et al. 2014). Several technologies have now come so far in their development that they merit more systematic assessments, e.g., various drugs, smart-glasses, and external magnetic stimulators that are commercially available.

Public attitudes towards HCE have been studied (Fitz et al. 2014), and a range of policy implications were identified and discussed (Sarewitz and Karas 2012; Schermer et al. 2009). HCE is also thoroughly analyzed in light of innovation theory (Baldwin et al. 2013), and a shared European “normative framework [that] should be based on fundamental and uncontroversial values such as autonomy, fairness, and the right to physical integrity” has been suggested (Coenen et al. 2011). A wide range of perspectives and frameworks to analyze and address social, legal, and ethical aspects of HCE are available, e.g., within a responsible research and innovation (RRI) framework (Stilgoe et al. 2013) or in technology assessment, such as real-time technology assessment (Guston and Sarewitz 2002), participatory technology assessment (Klüver et al. 2000), constructive technology assessment (CTA) (Kiran et al. 2015; Rip and Te Kulve 2008), ethical technology assessment (eTA) (Palm and Hansson 2006), anticipatory ethics for emerging technologies (Brey 2012), and others (Ely et al. 2014; Klüver et al. 2015; Fisher et al. 2006).

Despite the many available frameworks, there is little agreement on how to handle ethical issues in HCE. One reason for this is that the available frameworks come from different theoretical perspectives. Another reason is that the technologies are quite different. So are the contexts. There may be different demands at a policy level compared to a consumer level. Hence, it may be difficult to find one all-purpose framework that captures it all. Nonetheless, what unites most of the perspectives or frameworks is the need to expose and elucidate the relevant ethical issues related to HCE. Every open and transparent assessment, policy- or decision-making process needs to reveal the ethical issues related to an HCE technology. Any framework that does not include a comprehensive review of the ethical issues may become biased and hamper an open and transparent appraisal.

Accordingly, the objective of this article is to develop a method for exposing and elucidating ethical issues with HCE in a systematic and comprehensive manner. The purpose of the method development is to support and facilitate open and transparent deliberation and decision making with respect to a crucial kind of emerging technology.

Exposing ethical issues here means revealing and identifying ethical issues with an HCE technology. Ethical issues may be overt or covert. They may be hidden in the presentation of facts, in concepts (such as enhancement or intelligence), and in framings. An open and transparent deliberation (of any kind) will need to take all these issues into account (in various ways).

Elucidating ethical issues goes beyond just mentioning an ethical issue: it explains why the issue is ethically relevant. For example, pointing out that trans-cranial brain stimulation may challenge authenticity is not enough. We need to know why it is an ethical challenge. In order not to frame or bypass certain steps in the subsequent deliberation process, detailed discussions of the issues or evaluations of the soundness of arguments are not included. Elucidating the ethical issues only includes what is necessary for the preparation of an open and transparent process.

Hence, the goal of this article is to present an issue-oriented method for the preparation of deliberation on HCE, both within the development of science, technology and innovation (STI) policies and for the deliberation on developing, regulating, and implementing specific HCE technologies.

Methods

A literature search on the assessment of emergent technologies was performed in order to identify relevant approaches. The search term [(“technology assessment”) AND (“method” OR “approach” OR “framework”) AND (“review” OR “overview” OR “display” OR “elucidate” OR “expose” OR “highlight” OR “reveal”) AND (“ethics” OR “moral” OR “ethical” OR “normative” OR “evaluative” OR “evaluation”)] was applied in PubMed (until December 31 2015).
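The structure of the search term above (synonyms joined by OR within a group, groups joined by AND) can be sketched in a few lines of Python. This is only an illustration of how the reported string is composed; the names `TERM_GROUPS` and `build_query` are hypothetical, straight quotation marks are assumed, and no PubMed interface is called.

```python
# Term groups copied from the search string reported in the text.
# TERM_GROUPS and build_query are illustrative names, not part of the study.
TERM_GROUPS = [
    ["technology assessment"],
    ["method", "approach", "framework"],
    ["review", "overview", "display", "elucidate", "expose", "highlight", "reveal"],
    ["ethics", "moral", "ethical", "normative", "evaluative", "evaluation"],
]


def build_query(groups):
    """Join terms with OR inside each group, and the groups with AND."""
    clauses = []
    for terms in groups:
        quoted = " OR ".join(f'"{term}"' for term in terms)
        clauses.append(f"({quoted})")
    return " AND ".join(clauses)


query = build_query(TERM_GROUPS)
print(query)
```

Keeping the groups as separate lists makes it easy to see which concept each block of synonyms covers, and to rerun the search with an amended group.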

Conventional qualitative content analysis (Hsieh and Shannon 2005) of the identified papers and methods was performed by the author to assess their suitability for assessing ethical issues in HCE technologies. The criteria for assessing the suitability of the approaches are based on Rhodes (2015) and Beekman et al. (2006) and result from a group deliberation as part of a research project (see acknowledgement). The criteria were selected with respect to whether they could promote exposing and elucidating ethical issues, as defined above. The approaches were assessed with regard to whether each (a) could contribute to open and transparent policy- and decision-making processes in a deliberative democracy setting, (b) was easy to apply, (c) was able to address the many health-related aspects of HCE, and (d) was able to address HCE’s ability to change basic human capabilities.

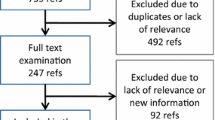

Based on these criteria, a value-based approach was selected as a starting point and was modified in a group deliberation process in order to target HCE technologies in particular. The developed method was then tested for a specific type of HCE, i.e., smart-glasses (Hofmann et al. 2016). Based on the experience from this pilot test, the method was revised and amended. A flowchart of the process is presented in Fig. 1.

Results

A wide range of approaches to address ethical issues in the assessment of existing and emerging technologies were identified in 417 references. Many of the approaches were identified within the field of health technology assessment (HTA), and most of them are summarized in a recent systematic review (Assasi et al. 2014), which was identified by the search.

Based on the selection criteria, three approaches appeared to be particularly suitable: the EUnetHTA Core model (Lampe et al. 2009), the approach of the Swedish Council on Health Technology Assessment (SBU) (Heintz et al. 2015), and the “Socratic approach” (Hofmann 2005; Hofmann et al. 2014); see Table 1.

The EUnetHTA Core model consists of nine domains, one of which is “ethical analysis”. This domain is divided into six topics (beneficence/non-maleficence, autonomy, justice and equity, respect for persons, legislation, and ethical consequences of the assessment). Each topic consists of two to four questions, adding up to nineteen issues of assessment. The SBU approach consists of twelve items, which are organized into four themes: the effects of the intervention on health, its compatibility with ethical norms, structural factors with ethical implications, and long-term ethical consequences of using the intervention. Each item is provided with sub-questions and short explanations, and the assessment ends with an overall summary. The Socratic approach consists of six steps, with 7 main questions and 33 detailed questions to guide the assessment (Hofmann et al. 2014).

As can be seen from Table 1, all three approaches appear applicable according to the criteria. Due to familiarity, the Socratic approach was selected. It was adjusted and amended during the group process with participants from the research project (see acknowledgement) and on the basis of the experience of applying the developed method to smart-glasses (Hofmann et al. 2016). Some steps were added, and some questions of the model were modified.

The final steps of the approach are presented in Table 2, and the questions in step 3 are listed in Table 3.

Depending on the result of the scoping in step 1, the presented method may be used by a single person or by a team. In principle it could be performed as an armchair exercise (with good access to the literature) or, preferably, as an interactive process (Hofmann et al. 2015b) with full stakeholder involvement, depending on the context. It is important that these choices are openly and explicitly argued for.

Questions 1–36 in Table 3 are directed toward highlighting ethical issues, while questions 37–43 point to governance issues, although there are overlaps between the questions.

Discussion

There are several challenges with this study. First, searching in PubMed obviously covers HTA approaches much better than parliamentary technology assessment (PTA) and science and technology studies (STS) approaches. Moreover, it does not cover approaches presented and discussed in books or methods developed and used in other areas, such as the Ethical Matrix for the assessment of food (Cotton 2014). However, an initial search in Google Scholar with the search term [(“technology assessment”) AND (“method” OR “approach” OR “framework”) AND ((“review ethical issues” OR “overview ethical issues” OR “display ethical issues” OR “elucidate ethical issues” OR “expose ethical issues” OR “highlight ethical issues” OR “reveal ethical issues”) OR (“review moral issues” OR “overview moral issues” OR “display moral issues” OR “elucidate moral issues” OR “expose moral issues” OR “highlight moral issues” OR “reveal moral issues”)) AND (“ethics” OR “moral” OR “ethical” OR “normative” OR “evaluative” OR “evaluation”)] only gave 6 references of little relevance. A search in PubMed with the same search term gave 70 references of higher relevance. This, together with the acknowledgement that methods for exposing and elucidating ethical issues have been much more appreciated in health technology assessment than in PTA or STS, warrants a search in PubMed.

Correspondingly, the selection criteria may be criticized, as other criteria might have identified other approaches. However, the first selection criterion is based on overall goals in modern democracies. The second criterion is straightforward and pragmatic: if the method is not fairly easy to use, it will not be applied. The third and fourth criteria are based on core characteristics of HCE found in the literature, i.e., that it can affect people’s health, and that it can alter basic human abilities and human self-conception.

Moreover, other selection criteria may have identified other approaches or frameworks as a starting point for the development of this method, and many of them are mentioned in the introduction. However, the aim of this method development was not to provide yet another STS framework, but rather to provide practical input to such frameworks. Moreover, many of the available frameworks and methods for assessing technologies have limited practical applications (Hofmann et al. 2015a). The selected approaches, however, have been applied for practical assessment of a wide range of health technologies, and the developed method was demonstrated to be useful for smart-glasses. One obvious objection is that the author has been involved in the development of the selected method, and may have been biased. Here the reader is encouraged to repeat or critically assess the study and investigate whether this is the case.

The developed method embraces a wide range of ethical approaches, such as consequentialism, deontology, casuistry, virtue ethics, and mixed approaches, such as principlism. This avoids the critique of being too narrow, but opens it up to the criticism of being eclectic. However, eclecticism is reasonable when aiming at a comprehensive and systematic approach for exposing and elucidating ethical issues.

Moreover, the steps and questions are not carved in stone. Contextual adjustments may be necessary. Not all the questions may be relevant for all types of HCE, and other questions, not mentioned, may be relevant (see step 3 and Q43). The point is that the method provides guidance for reflective application and is not a checklist for blind use.

Accordingly, the approach may not be perfectly suited to address the full diversity of future HCE technologies. Further amendments and adjustments may be necessary. However, the method is designed to be flexible, and future applications will show whether it is flexible enough to address ethical issues in the emerging range of HCE technologies. General amendments and local adjustments would be most welcome.

Another relevant critique is that there are so many other methods and frameworks available and that HCE does not merit a specific approach, and if it does, no approach is needed specifically to expose and elucidate the ethical issues. The ethical issues can be addressed directly without exposure and elucidation. However, as stated in the introduction, HCE is a special kind of technology as it alters basic human capabilities. Moreover, the various specific frameworks tend to address specific ethical issues and may not be transferable from one context to the other. Here, a broad range of ethical issues will be exposed and elucidated and can be used (as input) by a wide range of frameworks. Moreover, other and less explorative frameworks may ignore or bracket important ethical issues.

Yet another source of criticism is that the terms “exposing” and “elucidating” can be subject to a wide variety of interpretations. This is certainly true, but as defined in the introduction, these terms refer to what contributes to an open and transparent process of assessment, decision making, or policy making. That is, if a type of HCE raises issues of privacy, this will be important for the subsequent analysis. The same goes for the way in which privacy is relevant, and how the arguments are framed, challenged, or supported. However, how the arguments about the various aspects of privacy are to be taken into account in the specific assessment, policy-making, or decision-making process is beyond the scope of this method. The method only provides the basis for such processes.

Both step 1 and Q1 contain a description of (the purpose of) the technology. This may appear confusing. However, in step 1 the goal is to identify the scope of the assessment and in Q1 the goal is a more detailed description of the technology with respect to function, purpose, and intention. In Q1 characteristics and applications that were not thought of in the initial step may be identified.

Another relevant objection to the development of this method is that the assessments of HCE technologies may be performed with the Socratic approach for HTA, as this is a flexible approach where existing questions can be ignored (if justified) and new issues and questions can be added. That is, no new approach is needed. This is correct. However, the purpose of HCE is so special (e.g. in potentially altering agency) that it warrants a special version of the approach. Having a specific approach may also increase the user-friendliness and the uptake. Moreover, there are significant differences between the Socratic approach for health technologies and HCE, as illustrated in Table 3. Q2, Q3, Q7, Q9, Q18, Q26, Q28, Q31, Q34–42 are new, while other questions have been modified to be better attuned to HCE, such as Q5, Q12, Q2, and Q27. Hence, the method development for HCE can be seen as an input for a revision or expansion of the Socratic approach in HTA.

It may also be argued that there are other and more fruitful ways to group and order the questions in Table 3. Moreover, several questions are closely related and some questions overlap. Here it is important to acknowledge that the question list in Table 3 is not the end product of the approach, but only a step to provide information and content for the analysis. As illustrated by the example with smart-glasses (Hofmann et al. 2016), the context and the analysis of the literature will determine how the ethical issues will be grouped and presented. Hence, instead of being grouped by generic questions, the results should be grouped by technology-specific ethical issues. That said, the questions do follow a certain logic, where Q1–3 are related to the technology, Q4–6 concern the target group/users, Q7–12 relate to human personhood, Q13–16 address social aspects, Q17–19 are related to (potential) consequences, Q20–22 address alternative and dual use, Q23–24 are related to stakeholders, Q25–35 relate to use and implementation, and Q36–43 target governance issues. This could of course have been highlighted in Table 3. However, in order not to direct or complicate the analysis unnecessarily, this has not been done.
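The thematic logic of the question list can be written down as a simple lookup, which may help when sorting findings from step 3. The sketch below is a minimal illustration in Python, assuming the question ranges exactly as given in this paragraph (including Q36–43 for governance); the names `THEMES` and `theme_of` are hypothetical and not part of the published method.

```python
# Question ranges per theme, as listed in the paragraph above (Table 3 numbering).
# THEMES and theme_of are illustrative names only.
THEMES = {
    "technology": range(1, 4),               # Q1-3
    "target group/users": range(4, 7),       # Q4-6
    "human personhood": range(7, 13),        # Q7-12
    "social aspects": range(13, 17),         # Q13-16
    "consequences": range(17, 20),           # Q17-19
    "alternative and dual use": range(20, 23),  # Q20-22
    "stakeholders": range(23, 25),           # Q23-24
    "use and implementation": range(25, 36),  # Q25-35
    "governance": range(36, 44),             # Q36-43
}


def theme_of(question_number):
    """Return the theme that a question number (1-43) belongs to."""
    for theme, numbers in THEMES.items():
        if question_number in numbers:
            return theme
    raise ValueError(f"Q{question_number} is outside the list of 43 questions")


for q in (2, 9, 27, 40):
    print(f"Q{q}: {theme_of(q)}")
```

In line with the text, such a lookup is only a sorting aid: the final presentation should still be organized around the technology-specific issues that emerge from the analysis.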

Lastly: who can use the approach? Preferably persons skilled in ethics. However, in the HTA-setting the Socratic approach is used by HTA-experts without special training in ethics.

Conclusion

A method for exposing and elucidating ethical issues with HCE was developed. The method highlights ethical issues that are relevant for open and transparent deliberation processes when assessing HCE technologies. The identified issues can be used directly in deliberation processes or as input to other assessment frameworks. The method has been tested on smart-glasses, and hopefully it can be helpful for the practical assessment of emerging HCE technologies. Colleagues are encouraged to use, amend, and develop the method.

References

Assasi, N., Schwartz, L., Tarride, J. E., Campbell, K., & Goeree, R. (2014). Methodological guidance documents for evaluation of ethical considerations in health technology assessment: a systematic review. Expert Review of Pharmacoeconomics and Outcomes Research, 14(2), 203–220. doi:10.1586/14737167.2014.894464.

Baldwin, T., Fitzgerald, M., Kitzinger, J., Laurie, G., Price, J., Rose, N., et al. (2013). Novel neurotechnologies: Intervening in the brain. London: Nuffield Council on Bioethics.

Beekman, V., De Bakker, E., Baranzke, H., Baune, O., Deblonde, M., Forsberg, E.-M., et al. (2006). Ethical bio-technology assessment tools for agriculture and food production. Final Report Ethical Bio-TA Tools (QLG6-CT-2002-02594). LEI, The Hague.

Bostrom, N., & Sandberg, A. (2009). Cognitive enhancement: Methods, ethics, regulatory challenges. Science and Engineering Ethics, 15(3), 311–341. doi:10.1007/s11948-009-9142-5.

Brey, P. A. (2012). Anticipatory ethics for emerging technologies. Nanoethics, 6(1), 1–13.

Butcher, J. (2003). Cognitive enhancement raises ethical concerns. Academics urge pre-emptive debate on neurotechnologies. Lancet, 362(9378), 132–133.

Cakic, V. (2009). Smart drugs for cognitive enhancement: Ethical and pragmatic considerations in the era of cosmetic neurology. Journal of Medical Ethics, 35(10), 611–615. doi:10.1136/jme.2009.030882.

Chatterjee, A. (2013). The ethics of neuroenhancement. Handbook of Clinical Neurology, 118, 323–334. doi:10.1016/b978-0-444-53501-6.00027-5.

Coenen, C., Schuijff, M., & Smits, M. (2011). The politics of human enhancement and the European Union. In J. Savulescu, R. ter Meulen, & G. Kahane (Eds.), Enhancing human capacities (pp. 521–535). London: Blackwell Publishing Ltd.

Cotton, M. (2014). Ethical matrix and agriculture. In P. B. Thompson, & D. M. Kaplan (Eds.), Encyclopedia of Food and Agricultural Ethics (pp. 622–629). Dordrecht: Springer.

Droste, S., Herrmann-Frank, A., Scheibler, F., & Krones, T. (2011). Ethical issues in autologous stem cell transplantation (ASCT) in advanced breast cancer: A systematic literature review. BMC Medical Ethics, 12(1), 1–16.

Ely, A., Van Zwanenberg, P., & Stirling, A. (2014). Broadening out and opening up technology assessment: Approaches to enhance international development, co-ordination and democratisation. Research Policy, 43(3), 505–518.

Farah, M. J. (2015). The unknowns of cognitive enhancement. Science, 350(6259), 379–380.

Fisher, E., Mahajan, R. L., & Mitcham, C. (2006). Midstream modulation of technology: Governance from within. Bulletin of Science, Technology and Society, 26(6), 485–496.

Fitz, N. S., Nadler, R., Manogaran, P., Chong, E. W., & Reiner, P. B. (2014). Public attitudes toward cognitive enhancement. Neuroethics, 7(2), 173–188.

Forlini, C., Hall, W., Maxwell, B., Outram, S. M., Reiner, P. B., Repantis, D., et al. (2013). Navigating the enhancement landscape. Ethical issues in research on cognitive enhancers for healthy individuals. EMBO Reports, 14(2), 123–128. doi:10.1038/embor.2012.225.

Gaucher, N., Payot, A., & Racine, E. (2013). Cognitive enhancement in children and adolescents: Is it in their best interests? Acta Paediatrica, 102(12), 1118–1124. doi:10.1111/apa.12409.

Goodman, R. (2014). Humility pills: Building an ethics of cognitive enhancement. Journal of Medicine and Philosophy, 39(3), 258–278. doi:10.1093/jmp/jhu017.

Guston, D. H., & Sarewitz, D. (2002). Real-time technology assessment. Technology in Society, 24(1), 93–109.

Gyngell, C., & Easteal, S. (2015). Cognitive diversity and moral enhancement. Cambridge Quarterly of Healthcare Ethics, 24(1), 66–74. doi:10.1017/s0963180114000310.

Harris, J. (2011). Moral enhancement and freedom. Bioethics, 25(2), 102–111. doi:10.1111/j.1467-8519.2010.01854.x.

Heintz, E., Lintamo, L., Hultcrantz, M., Jacobson, S., Levi, R., Munthe, C., et al. (2015). Framework for systematic identification of ethical aspects of healthcare technologies: The SBU approach. International Journal of Technology Assessment in Health Care, 31(03), 124–130.

Hofmann, B. (2005). Toward a procedure for integrating moral issues in health technology assessment. International Journal of Technology Assessment in Health Care, 21(3), 312–318.

Hofmann, B., Droste, S., Oortwijn, W., Cleemput, I., & Sacchini, D. (2014). Harmonization of ethics in health technology assessment: A revision of the Socratic approach. International Journal of Technology Assessment in Health Care, 30(1), 3–9. doi:10.1017/S0266462313000688.

Hofmann, B., Lysdahl, K. B., & Droste, S. (2015a). Evaluation of ethical aspects in health technology assessment: More methods than applications? Expert Review of Pharmacoeconomics and Outcomes Research, 15(1), 5–7. doi:10.1586/14737167.2015.990886.

Hofmann, B., Oortwijn, W., Lysdahl, K., Refolo, P., Sacchini, D., Wilt, G. J. V. D., et al. (2015b). Integrating ethics in health technology assessment: Many ways to Rome. International Journal of Technology Assessment in Health Care, 31(3), 131–137. doi:10.1017/S0266462315000276.

Hofmann, B., Haustein, D., & Landeweerd, L. (2016). Smart-glasses: Exposing and elucidating the ethical issues. Science and Engineering Ethics. doi:10.1007/s11948-016-9792-z.

Hsieh, H. F., & Shannon, S. E. (2005). Three approaches to qualitative content analysis. Qualitative Health Research, 15(9), 1277–1288. doi:10.1177/1049732305276687.

Kiran, A. H., Oudshoorn, N., & Verbeek, P.-P. (2015). Beyond checklists: Toward an ethical-constructive technology assessment. Journal of Responsible Innovation, 2(1), 5–19.

Klüver, L., Nentwich, M., Peissl, W., Torgersen, H., Gloede, F., Hennen, L., et al. (2000). European participatory technology assessment. Participatory methods in technology assessment and technology decision-making. Copenhagen: The Danish Board of Technology.

Klüver, L., Nielsen, R. Ø., & Jørgensen, M. L. (2015). Policy-oriented technology assessment across Europe: Expanding capacities. London: Palgrave Macmillan.

Lampe, K., Makela, M., Garrido, M. V., Anttila, H., Autti-Ramo, I., Hicks, N. J., et al. (2009). The HTA core model: A novel method for producing and reporting health technology assessments. International Journal of Technology Assessment in Health Care, 25(Suppl 2), 9–20. doi:10.1017/S0266462309990638.

Lanni, C., Lenzken, S. C., Pascale, A., Del Vecchio, I., Racchi, M., Pistoia, F., et al. (2008). Cognition enhancers between treating and doping the mind. Pharmacological Research, 57(3), 196–213. doi:10.1016/j.phrs.2008.02.004.

Mordacci, R. (2014). Cognitive enhancement and personal identity. Humana.Mente Journal of Philosophical Studies, 26, 141–152.

Palm, E., & Hansson, S. O. (2006). The case for ethical technology assessment (eTA). Technological Forecasting and Social Change, 73(5), 543–558.

Rhodes, R. (2015). Good and not so good medical ethics. Journal of Medical Ethics, 41(1), 71–74. doi:10.1136/medethics-2014-102312.

Rip, A., & Te Kulve, H. (2008). Constructive technology assessment and socio-technical scenarios. In E. Fischer (Ed.), Presenting futures (Vol. 1, pp. 49–70). Dordrecht: Springer.

Sahakian, B. J., & Morein-Zamir, S. (2011). Neuroethical issues in cognitive enhancement. Journal of Psychopharmacology, 25(2), 197–204. doi:10.1177/0269881109106926.

Sandberg, A., & Bostrom, N. (2006). Cognitive enhancement: A review of technology. EU ENHANCE Project.

Santoni de Sio, F., Faulmuller, N., & Vincent, N. A. (2014). How cognitive enhancement can change our duties. Frontiers in Systems Neuroscience, 8, 131. doi:10.3389/fnsys.2014.00131.

Sarewitz, D., & Karas, T. H. (2012). Policy implications of technologies for cognitive enhancement. In J. Giordano (Ed.), Neurotechnology: Premises, potential, and problems (pp. 267–285). Boca Raton, FL: CRC Press.

Schermer, M., Bolt, I., de Jongh, R., & Olivier, B. (2009). The future of psychopharmacological enhancements: Expectations and policies. Neuroethics, 2(2), 75–87.

Stilgoe, J., Owen, R., & Macnaghten, P. (2013). Developing a framework for responsible innovation. Research Policy, 42(9), 1568–1580.

van Est, R., Stemerding, D., Kukk, P., Hüsing, B., van Keulen, I., Schuijff, M., et al. (2012). Making perfect life: European governance challenges in 21st century bio-engineering. Brussels: European Parliament STOA–Science and Technology Options Assessment.

Acknowledgments

I am most thankful to Ellen-Marie Forsberg, Erik Thorstensen, and Claire Shelley-Egan for valuable discussions on the method and contributions to the list of questions (Table 3). Likewise I am grateful for the wise comments and suggestions for improvement from the Editor and two anonymous reviewers. Part of the research leading to these results has received funding from the Norwegian Financial Mechanism 2009–2014 and the Ministry of Education, Youth and Sports under Project Contract No. MSMT-28477/2014, Project No. 7F14236.

Cite this article

Hofmann, B. Toward a Method for Exposing and Elucidating Ethical Issues with Human Cognitive Enhancement Technologies. Sci Eng Ethics 23, 413–429 (2017). https://doi.org/10.1007/s11948-016-9791-0

DOI: https://doi.org/10.1007/s11948-016-9791-0