Abstract

As Artificial Intelligence (AI) is rapidly integrated into existing technologies, bringing forth the fourth industrial and learning revolution, designing curriculum for AI education has become an important strategic development for education authorities throughout the world. Framed broadly by the Theory of Planned Behavior, this study examined a structural equation model to establish the interrelationships among students’ perceived usefulness, attitude towards using AI, subjective norms to learn about AI, basic literacy about AI, and their behavioral intention to learn about AI. In addition, it examined the moderation effects of readiness, social good, and optimism on the research model. The findings confirm the hypothesized model. In addition, various moderation effects were found among students’ perceptions of readiness, social good, and optimism for AI. The implications of the study point towards the need to consider these factors in designing AI curriculum to foster students’ behavioral intention to learn AI.

Introduction

Artificial Intelligence (AI) refers to technology that mimics intelligent human actions by perceiving, reasoning, acting, and interacting with its environment (Dirican, 2015). It is redrawing the boundaries of what humans and machines can do and hence redefining the relationship between human and machine (Jarrahi, 2018). Given its growing affordances, AI is being incorporated into many current organizations and technologies to optimize effectiveness and efficiency, thus requiring workers to form new relationships with the intelligence now provided by machines. This has triggered deep and profound reforms in the education sector (Seldon & Abidoye, 2018). For example, AI advancements have prompted the creation of intelligent teaching and learning machines that cater to adaptive personalization, such as intelligent tutoring systems (Fu et al., 2020; Zawacki-Richter et al., 2019), and pedagogical agents that stimulate students’ learning motivation (Veletsianos & Russell, 2014). Teaching computers to learn by providing students with teachable agents could also be an important way to foster learning (Matsuda et al., 2020). From these developments, it seems obvious that key players in today’s education have to consider the need for students to learn AI. Equipping students with basic AI literacy will provide them with a framework to understand AI as part of their epistemic resources for lifelong learning and working with AI. However, Zawacki-Richter et al. (2019) pointed out that research on AI education in K-12 settings is generally lacking. In addition, current research on AI in education is focused on exploring the creation and use of AI systems to facilitate learning from multiple angles rather than on understanding students’ engagement with AI (Hwang et al., 2020).

Recognizing the potential of AI for education, authorities and organizations have begun formulating policies and curriculum to prepare students for the AI-enhanced world. A prominent example is the effort of China’s Ministry of Education to educate its citizens about AI (Knox, 2020), which has resulted in the publication of primary and secondary textbooks on AI (e.g., Qin et al., 2019; Tang & Chen, 2018). Given the significance of AI education, Hwang et al. (2020) advocated the need to establish a research database for this nascent area of research, including understanding students’ learning experiences with AI. Unlike more traditional technologies, AI is emerging and more disruptive, and its impact is drastic and real (Seldon & Abidoye, 2018).

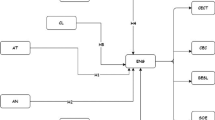

Future development of AI provides new ways of approaching problems and improving people’s lives; hence it is crucial for us to understand how AI could also affect human beings and society in disastrous ways (e.g., by changing the future of work and getting out of control). From an educational perspective, being cognizant of the potential of AI allows stakeholders to campaign for the need to foster students’ readiness and strengthen their intentions to learn AI. Accordingly, this study aims to understand students’ behavioral intention to learn AI through an extended Theory of Planned Behavior (Fishbein & Ajzen, 2010), which specifies the interrelationships among students’ perceived usefulness (PU), attitude towards using AI (ATU), subjective norms (SN) to learn AI, and basic literacy about AI (AIL). Given the importance of users’ previous knowledge and experience to the acquisition of new abilities and learning of new concepts (Holzinger et al., 2011), this study selected secondary school students who had studied AI to understand the structural relationships of their learning experiences. The model established can provide useful references for future AI curriculum design. In addition, this study also aimed to provide a nuanced understanding of students’ learning experiences through the moderation effects of students’ AI readiness (RD), social good (SG), and optimism for AI (OP). The study contributes to current concerns about AI education by providing insights on the interplay of the various psychological factors that shape students’ continuous intention to learn AI.

Literature Review

Theory of Planned Behavior and Artificial Intelligence

In this study, the theory of planned behavior (TPB) is employed together with its derivative, the technology acceptance model (TAM) (Davis, 1989), to form the theoretical underpinnings for the exploration of factors influencing students’ continuous intention to learn AI. The TPB has been widely used to account for factors that influence users’ behavioral intention to engage with technologies (e.g., Cheon et al., 2012; Chai et al., 2020; Guerin et al., 2018; Teo et al., 2016). However, its application to students learning AI has been limited. Although researchers (Chai et al., 2020, 2021) have identified some factors associated with students’ BI to learn AI using the TPB as a framework, more studies are needed to develop a comprehensive understanding of learners’ planned behavior for learning AI. Specifically, few or no moderation effects were investigated in the previous studies, creating a gap in the literature that this study aims to fill.

Fishbein and Ajzen (2010) theorized human action as an outcome of reasoning that forms the behavioral intention (BI). Such reasoning is based on “the information or beliefs people possess about the behavior under consideration” (p. 20). The information people hold shapes their understanding of the context, possible actions, and consequences. However, one’s understanding is not dictated solely by the information received. Individual differences arising from personality traits, beliefs, and experiences also shape the formation of BI. Fishbein and Ajzen identified attitude towards behaviors, subjective norms (SN), and perceived behavioral control as the three main factors that account for an individual’s BI. Past studies have extensively identified these factors as significant predictors of BI with substantial variances accounted for (Ajzen, 2012). In this study, BI is operationalized as students’ intention to learn AI. Based on the foregoing review and recent studies (Chai et al., 2020, 2021), it is reasonable to hypothesize that students’ attitude towards using AI (ATU) and their subjective norms (SN) are positively associated with their BI. The hypotheses were formulated as follows:

Hypothesis 1

ATU will be a significant influence on BI.

Hypothesis 2

SN will be a significant influence on BI.

Technology Acceptance Model and AI

Davis (1989) further contextualized the TPB as the TAM, in which attitude towards behavior was formulated as perceived usefulness (PU) and perceived behavioral control was related to perceived ease of use (Bhatti, 2007). Perceived usefulness refers to the extent to which users believe that using a certain technology would facilitate their performance, while perceived ease of use refers to the belief about the effort needed to use the system (Huang et al., 2020a). Both factors were found to be significant contributing factors to BI for the use of specific technology in many subsequent studies (e.g., Kumar & Mantri, 2021; Weng et al., 2018). Studies of TAM in the context of e-learning, mobile learning, and learning about AI provided further support for the significant influence of PU on BI (Buabeng-Andoh, 2021; Chai et al., 2020; Park, 2009). To date, two studies have employed the TAM to explore the use of AI for educational purposes. Chocarro et al. (2021) reported that PU and perceived ease of use could predict teachers’ intention to use chatbots, and Lin et al. (2021) found that, among PU, subjective norms, perceived ease of use, and attitude towards using AI, perceived ease of use and subjective norms were significant in explaining medical professionals’ intention to learn to use AI applications for precision medicine. Following the above research findings, the following hypothesis was formulated:

Hypothesis 3

PU will be a significant influence on BI.

Perceived usefulness has also been established to be positively associated with attitude towards using specific technology (Huang et al., 2020b; Kumar & Mantri, 2021; Teo et al., 2018; Weng et al., 2018). For example, Weng et al. (2018) reported that teachers’ intention to use multimedia in the classes they teach was predicted by their perceived usefulness of the multimedia teaching materials. Hence, hypothesis 4 was formulated.

Hypothesis 4

PU will be a significant influence on ATU.

Literacy and Subjective Norms as factors influencing behavioral intention

Behavioral intention is influenced by background factors arising from personal beliefs and social influences (Fishbein & Ajzen, 2010; Huang & Teo, 2020; Huang et al., 2019; 2020a). In general, beliefs about literacy refer to the knowledge and competence an individual thinks he or she possesses for a specific action. Recent research on multiple technological literacies, such as computer literacy, information literacy, and digital literacy, can be found in the literature (Nichols & Stornaiuolo, 2019; Stopar & Bartol, 2019). Being technologically literate implies that one knows how a specific type of technology works and can use it to solve problems (Davies, 2011; Moore, 2011). AI literacy (AIL) is an emerging key concept in designing AI curriculum (Watkins, 2020), and the knowledge and basic understanding of AI would form the basis upon which users’ beliefs, such as their PU of AI, are shaped. In other words, literacy may be an antecedent to PU (Mei et al., 2018). These relationships are captured below:

Hypothesis 5

AIL will be a significant influence on PU.

Hypothesis 6

AIL will be a significant influence on BI.

Social influence on students’ intention to learn is commonly denoted by subjective norms (SN). The latter refers to the extent to which people close to the user regard using technology as important. In an education context, these people include parents, peers, and teachers, whose opinions about pedagogical matters have been found to have significant influence on students’ career choice and PU (Law & Arthur, 2003; Huang & Teo, 2021). Cheng et al. (2016) investigated students’ attitude towards e-collaboration and found that SN significantly predicted students’ BI to collaborate electronically. In the context of e-learning, SN frequently exerts significant influence on students’ PU (Abdullah & Wards, 2016). Thus, hypothesis 7 was formulated.

Hypothesis 7

SN will be a significant influence on PU.

Moderators: Readiness, Social Good and Optimism

Major technological advancements bring about disruptive changes and can trigger industrial revolutions. While the third industrial revolution was initiated by the personal computer and the Internet, which has transformed the world over the last two decades, the fourth industrial revolution is driven by digitization and automation (Hirschi, 2018; Seldon & Abidoye, 2018). AI is one of the enablers of the fourth industrial revolution. In the literature, an instrument known as the Technology Readiness Index was developed to measure the extent to which one is ready to engage with technology (Parasuraman, 2000; Parasuraman & Colby, 2015). Readiness was operationalized by these researchers as an individual’s tendency to use technology as a means to fulfill selected goals. The scale of change forecasted for the AI age has similarly prompted discussion about AI readiness. For instance, Holmstrom (2021) proposed a framework for business organizations to assess the readiness of their personnel to engage in digital transformation with AI. In the education context, Chai et al. (2021a) adapted a sub-scale from the technology readiness scale to measure primary school students’ sense of readiness. Consequently, based on the previous findings among primary school students, it is reasonable to hypothesize that AI readiness could moderate the interrelationships among the five factors (PU, ATU, SN, AIL, BI) in the proposed extended TPB model in this study.

Hypothesis 8

Readiness will be a significant moderator between the paths stated in hypotheses 1–7.

Given the potential of AI, designing AI for social good (SG) has met with strong support from AI researchers (Bryson & Winfield, 2017; Tucker, 2019). Floridi et al. (2020) described AI for SG as “the design, development, and deployment of AI systems in ways that (i) prevent, mitigate or resolve problems adversely affecting human life and/or the wellbeing of the natural world, and/or (ii) enable socially preferable and/or environmentally sustainable development” (p. 1773–1774). This notion resonates with current textbooks and organizations involved with developing AI education (e.g., https://ai.google/education/social-good-guide/) that emphasize the importance of SG (Qin et al., 2019; Tang & Chen, 2018). Such emphasis is congruent with the commonly accepted aim of education in cultivating caring citizens and with the curriculum focus on computing for the common good in engineering schools (Goldweber et al., 2011). Psychologically, learning that enhances one’s ability to serve society has been found to be a strong motivation for individuals to engage in such activity (Yeager & Bundick, 2009). In addition, research has found that social good may moderate the relationships between BI and factors with a positive influence on students’ BI to learn AI, such as subjective norms and literacy (Chai et al., 2020, 2021). Hence, hypothesis 9 was formulated.

Hypothesis 9

Social Good will be a significant moderator between the paths stated in hypotheses 1–7.

Engagement with AI can kindle fears and anxiety among people (Wang & Wang, 2019). This may be attributed to the perception that AI would replace many jobs and has the ability to collect and analyze abundant personal data for use by the authorities to manipulate individuals and society (Johnson & Verdicchio, 2017). The negative effects arising from the misuse of AI may cause anxiety that could distort students’ views about AI and hence prevent meaningful learning from taking place. On the other hand, optimism (OP) towards AI may alleviate students’ fears and anxiety and enhance their BI to learn AI (Chai et al., 2020). Optimism is a psychological trait that encourages positive expectancies about future success (King & Caleon, 2021). Optimism is a factor in the Technology Readiness Index (Parasuraman, 2000) and has been reported to be associated with perceived ease of use, PU, and BI (Walczuch et al., 2007). Optimism has also been found to have an influence on AIL, SN, ATU, and BI to learn AI (Chai et al., 2020). From the above discussion, it is reasonable to expect optimism to moderate the relationships in the research model (Fig. 2).

Hypothesis 10

Optimism will be a significant moderator between the paths stated in hypotheses 1–7.

Based on the above review, two research questions were formulated to guide this study:

Research question 1

To what extent does the proposed research model (Fig. 1) explain users’ behavioral intentions to learn AI?

Research question 2

Do readiness, social good, and optimism moderate all the relationships (Fig. 2) in the proposed research model?

Method

Participants

Purposive sampling was adopted for this study. The participants were 511 students who had completed one AI enrichment program in secondary schools in northeastern cities in China such as Qingdao and Tianjin. The participants were aged between 14 and 18 (Mean = 14.3, SD = 1.52; grade 8–12; Female = 44.6%). They were invited via WeChat, informed about the purpose of the research, and provided with the link to the online survey questionnaire. Participation was voluntary and, upon completion of the online questionnaire, participants received a small token of appreciation provided over WeChat.

The AI course context

Participants attended an AI enrichment program prior to completing the survey questionnaire. In this course, students were taught to write a program to create an intelligent fire-fighting robot that could be launched to autonomously search for and extinguish fire in a building. In the process, students learnt about computer vision, heat sensors, the workings of a robot car, and coding the robots using a Python-based programming language. The success criterion was finding and extinguishing the fire in the fastest time. To do so, the robot had to be programmed to identify the sources of fire and compute the fastest route based on environmental data input. From these activities, students learnt the core concepts of data representation, visual recognition, machine learning, and the coding of algorithms, which are commonly identified as core concepts for an AI curriculum in schools (Qin et al., 2019; Tang & Chen, 2018). The 32 one-hour sessions were taught after school hours as an enrichment programme.

Instrument

The survey questionnaire in this study measured eight factors with a total of 34 items adapted from published sources (Chai et al., 2020, 2021). Table 1 shows the details of the survey instrument. Each item in the instrument was rated on a 6-point Likert scale (1 = strongly disagree to 6 = strongly agree). The internal consistency (Cronbach’s alpha) of the factors ranged from 0.82 to 0.91.

Data Analysis

The skewness and kurtosis were examined to ensure that the data did not violate the univariate normal distribution assumption. Results showed that they were within the recommended values (skewness = -0.831 to 0.188; kurtosis = -1.01 to 0.058). To test the measurement model, a confirmatory factor analysis (CFA) on a congeneric model with uncorrelated errors was performed using maximum likelihood estimation. To test for the multivariate normality of the observed variables, Mardia’s (1970) normalized multivariate kurtosis value was computed. The multivariate value in this study was 110.887, less than the recommended value of p(p + 2) proposed by Raykov & Marcoulides (2008), where p stands for the total number of observed items (21 × 23 = 483), supporting the assumption of multivariate normality of the data in this research. In addition, the goodness-of-fit indices, composite reliability (CR), average variance extracted (AVE), and discriminant validity were computed and examined. Next, the structural model and the seven hypotheses were tested. Lastly, the moderation effects of readiness, social good, and optimism on the relationships in the proposed model were tested and analyzed.
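The multivariate normality check described above reduces to a simple comparison, which can be sketched as follows. This is a minimal illustration using only the two figures reported in the text (21 observed items and a kurtosis value of 110.887); the function and variable names are illustrative assumptions and are not taken from the study's analysis scripts.

```python
def mardia_threshold(p: int) -> int:
    """Cutoff p(p + 2) for Mardia's normalized multivariate kurtosis."""
    return p * (p + 2)


# Figures reported in the text; the variable names are illustrative.
p_items = 21
mardia_kurtosis = 110.887

threshold = mardia_threshold(p_items)
is_acceptable = mardia_kurtosis < threshold

print(f"threshold = {threshold}, acceptable = {is_acceptable}")
```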

Results

Testing the measurement model

In determining the fit of the measurement model, several fit indices were used: the minimum fit function (χ2) and the ratio of the χ2 to its degrees of freedom (χ2/df), with a value lower than 3.0 regarded as acceptable (Carmines & McIver, 1981). The Comparative Fit Index (CFI) and Tucker-Lewis Index (TLI) should be above 0.90 to indicate an acceptable fit (Hair et al., 2010). In addition, a Root Mean Square Error of Approximation (RMSEA) value in the range of 0.08 to 0.10 was regarded as an acceptable fit (Browne & Cudeck, 1993). Finally, a Standardized Root Mean Square Residual (SRMR) value of less than 0.08 was used to indicate an acceptable fit (Hu & Bentler, 1999). The CFA yielded an acceptable model fit (Chi-square/df = 2.343, CFI = 0.955, GFI = 0.929, TLI = 0.948, RMSEA = 0.051 [0.045, 0.057], SRMR = 0.0738). The composite reliability (CR) and average variance extracted (AVE) were used to test the reliability and convergent validity of each variable. Fornell & Larcker (1981) stated that CR and AVE values of 0.50 or above indicate adequate reliability of the measurement model. Furthermore, the standardized estimate (SE) of each item was tested, with a value higher than 0.50 indicating that an item contributed significantly to explaining its underlying construct (Hair et al., 2010). Based on the information presented in Table 2, we find support for an acceptable measurement model fit.
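For readers unfamiliar with CR and AVE, the sketch below shows how both are conventionally computed from the standardized loadings of a single construct. It assumes the standard formulas, with each item's error variance taken as one minus its squared loading. The loadings are hypothetical and are not values from Table 2.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    # Assumed error variance of a standardized item: 1 - loading^2
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


# Hypothetical standardized loadings for one four-item construct.
hypothetical_loadings = [0.70, 0.80, 0.75, 0.85]

cr = composite_reliability(hypothetical_loadings)
ave = average_variance_extracted(hypothetical_loadings)

print(f"CR = {cr:.3f}, AVE = {ave:.3f}")
print("meets 0.50 criterion:", cr >= 0.50 and ave >= 0.50)
```

Each loading in this example also exceeds 0.50, the per-item threshold mentioned above.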

Discriminant validity, which reflects the extent to which a variable is unique and not just a reflection of other variables (Peter & Churchill, 1986), was assessed using the Fornell–Larcker criterion (Fornell & Larcker, 1981). To satisfy this criterion, the square roots of the AVEs for two latent variables must each be greater than the correlation between those two variables. In Table 3, the square roots of the AVEs are highlighted in bold along the diagonal, showing that the Fornell–Larcker criterion is met; that is, all the diagonal values are greater than the off-diagonal numbers in the corresponding rows and columns.
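The Fornell–Larcker comparison can be expressed as a short check, sketched below. The AVE and correlation values are hypothetical placeholders chosen for illustration and are not the values in Table 3. The helper name `fornell_larcker_ok` is likewise an assumption.

```python
import math


def fornell_larcker_ok(ave, correlations):
    """Return True if, for every pair of constructs, the square root of each
    construct's AVE exceeds the absolute correlation between the pair."""
    for (a, b), r in correlations.items():
        if abs(r) >= math.sqrt(ave[a]) or abs(r) >= math.sqrt(ave[b]):
            return False
    return True


# Hypothetical AVEs and inter-construct correlations.
hypothetical_ave = {"PU": 0.62, "ATU": 0.58, "BI": 0.66}
hypothetical_corr = {
    ("PU", "ATU"): 0.28,
    ("PU", "BI"): 0.55,
    ("ATU", "BI"): 0.61,
}

print(fornell_larcker_ok(hypothetical_ave, hypothetical_corr))
```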

Testing the structural model

A test of the structural model (Fig. 1) was conducted, and the fit indices indicated that the model was adequate (Chi-square/df = 3.302, CFI = 0.922, GFI = 0.902, TLI = 0.912, RMSEA = 0.067 [0.062, 0.073]) (Hair et al., 2010). In addition, the R² for BI is 0.65, the R² for ATU is 0.08, and the R² for PU is 0.04. In this study, all hypothesized relationships were supported (Table 4).

Testing the moderation effects of readiness, social good and optimism

A path-by-path method of moderation analysis revealed that readiness (RD), social good (SG), and optimism (OP) moderated some relationships in the research model, with chi-square values greater than the 90% threshold, as shown in Table 5. In particular, the results indicate that RD moderated the paths PU→ATU, AIL→PU, and PU→BI, while SG moderated the paths PU→BI, ATU→BI, and SN→BI. Finally, OP was a moderator for the paths PU→BI and SN→BI.
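One common way to run such a path-by-path test is a chi-square difference test between a multi-group model with the path constrained to be equal across groups and one with the path freed. The text does not spell out the exact procedure, so the sketch below is an assumption: it treats each comparison as a one-degree-of-freedom test at the 90% level and uses hypothetical chi-square values. For one degree of freedom, the critical value equals the square of the two-sided standard normal quantile, which the Python standard library can supply.

```python
from statistics import NormalDist


def chi2_critical_1df(confidence: float) -> float:
    """Critical chi-square value for 1 df at the given confidence level.

    A chi-square variable with 1 df is the square of a standard normal,
    so the cutoff is the squared two-sided normal quantile.
    """
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z * z


def path_is_moderated(chi2_constrained, chi2_unconstrained, confidence=0.90):
    """Assumed decision rule: the path is moderated if constraining it to be
    equal across groups worsens fit by more than the critical value."""
    delta = chi2_constrained - chi2_unconstrained
    return delta > chi2_critical_1df(confidence)


# Hypothetical chi-square values for one path comparison.
print(round(chi2_critical_1df(0.90), 3))
print(path_is_moderated(chi2_constrained=105.0, chi2_unconstrained=100.0))
```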

Discussion

This study proposed an extended Theory of Planned Behavior (TPB) and, using structural equation modeling, tested seven hypotheses that were formulated to represent the interrelationships in the proposed model. Overall, the findings supported that students’ BI to learn AI can be explained by the proposed TPB model, with 65% of the variance in BI accounted for by its antecedents (AIL, PU, SN, and ATU). The study provides support for the applicability of the TPB and TAM as theoretical underpinnings to help researchers understand students’ BI to learn AI. In general, the findings were mostly congruent with a recent study (Chai et al., 2020), although the model obtained in this study seems to be more consistent with the TPB. Given the current recognition of the importance of AI as a game changer for how we learn and work (Seldon & Abidoye, 2018), this study provides valuable insights for educators to understand students’ learning experiences. The findings in this study further address the current lack of research about AI education in primary and secondary settings (Zawacki-Richter et al., 2019). With current AI research focusing on system design and testing (Fu et al., 2020; Hwang et al., 2020), this study provides a validated model for research into teaching and learning AI in education settings. We would argue that it is important to equip students with AI knowledge in order to better prepare them to leverage emerging AI technology for lifelong learning with AI and about AI, which is necessary for the dynamic workplace they will be confronted with.

Three hypotheses associated with TAM were supported in this study. These suggest that, to foster students’ intention to learn AI, it would be important for teachers and AI program developers to foster students’ understanding of the usefulness of AI and a positive attitude towards using it. In addition, perceived usefulness was positively associated with attitude towards using AI (i.e., hypotheses 1, 3, and 4). These supported relationships are in general agreement with the myriad of studies involving the use of TAM that supported the significant role of perceived usefulness and attitude to use in predicting users’ behavioral intention (e.g., Buabeng-Andoh, 2021; Park, 2009; Kumar & Mantri, 2021; Weng et al., 2018). Technologies are created to be useful, but there is no guarantee that users will hold a positive attitude that ensures their intention to use them. For example, nuclear power, regarded by many as the epitome of human invention, is not welcomed in some parts of the world because of its destructive power (e.g., Bian et al., 2021). In contrast, despite possible abuses of AI, the Chinese secondary students in this study held positive views on the usefulness of AI and had a positive attitude towards using AI. They were trained to build an intelligent firefighting robot, which situates learning in a socio-technological context that highlights usefulness and social good. There are countless possibilities for structuring such examples as design-based challenges that require some forms and levels of intelligence to resolve. To cater to students’ individual needs, training programs should offer different challenges for students to choose from, developed through collective design by teachers from diverse backgrounds (Chiu et al., 2021). Empowering students to formulate their own challenges based on their personal concerns could also be a viable strategy.

The findings in this study also supported the notion that, to learn a specific technology as subject matter (such as AI), perceived usefulness and attitude towards using that technology are important factors. The current AI textbooks in China (Qin et al., 2019; Tang & Chen, 2018) were structured around useful applications of AI, including cancer diagnosis and language translation. However, it is more important that students understand how the affordances of AI could be harnessed to perform tasks that they can relate to and experience in their daily lives.

Subjective norms were found to be a significant influence on BI and PU (i.e., Hypotheses 2 and 7). This is consistent with the Theory of Planned Behavior (Ajzen, 2012), recent research in e-learning (Abdullah & Wards, 2016), and language teaching in China (Huang et al., 2021), where SN predicts BI. In the Chinese education context, teachers, school authorities, and the government are regarded as significant opinion leaders. With the advocacy of the Chinese government for AI education in schools (Knox, 2020) and, considering the collectivist sociocultural inclination in Chinese society (Hofstede, 2001), it follows that students’ intention to learn AI in this study had been influenced by these opinion leaders. Given the important role that subjective norms play in AI acceptance, key stakeholders in education (e.g., school leaders, teachers, and peers) who exert influence on young students’ opinions should share their positive experiences of engaging with AI, in the hope that, over time, students will form an impression that AI is useful for their learning, hence strengthening their intention to use AI. The platforms for such exchanges include classrooms, seminars, and training workshops.

In recent years, AI literacy has captured the attention of many educators (Watkins, 2020). This study found that AI literacy could predict students’ PU and BI (Hypotheses 5 and 6), which is congruent with the theory of planned behavior, which posits that knowledge about an object is key in deciding whether one would engage with that object (Fishbein & Ajzen, 2010). Consistent with previous studies (e.g., Chai et al., 2020; 2021), AI literacy was found to be a significant predictor of perceived usefulness in this study.

Three factors (Readiness, Social Good and Optimism) were hypothesized to moderate the path-to-path relationships in the proposed model (Fig. 2).

Readiness indicates a positive confidence with regard to using technologies to accomplish personal and work-related goals without uncontrollable outcomes (Parasuraman & Colby, 2015). The notion of readiness is akin to the perceived behavioral control of the TPB, which accounts for self-efficacy and controllability (Ajzen, 2002). In this study, readiness moderated the paths PU→BI and PU→ATU, indicating the role of students’ personal assessment of their ability to use and control the outcomes of using AI technologies in influencing their BI and ATU. This constitutes a more subtle understanding of usefulness in that what is perceived to be useful can be influenced by the perception of one’s ability to control the outcomes. The teaching of AI should inspire students’ confidence that the technology and its use can be managed and regulated through personal agency.

The non-moderated paths (SN→PU, SN→BI, ATU→BI, AIL→PU, AIL→BI) suggested that SN and AIL were not influenced by the notion of readiness. This suggests that the significant positive relations of these paths were strong enough that students’ perception of readiness did not moderate the statistical relationships.

Of the four paths hypothesized to influence BI, social good was found to moderate three (i.e., PU→BI, SN→BI, ATU→BI), the exception being AIL→BI. The latter finding suggests that understanding the core concepts of AI (literacy) was sufficient to trigger students’ desire to learn AI. Students who understand how AI technologies can be useful in addressing problems and challenges in society may be more inclined to learn AI (i.e., PU→BI). In addition, support from teachers, friends, and parents (i.e., subjective norms) for students to learn AI (i.e., SN→BI) is likely to be influenced by using AI for social good, given the socialist character of Chinese society (Hofstede, 2001). Being an identified focus of most AI curricula (Floridi et al., 2020; Tang & Chen, 2018), promoting social good to students learning AI is congruent with digital citizenship education. The promotion of social good would also enhance students’ attitude towards using AI (i.e., ATU→BI). For example, in a well-designed AI curriculum, the role of AI in predicting natural hazards and disasters or increasing the diagnostic precision when interpreting medical images could be highlighted (see curriculum design in Chiu et al., 2022).

The paths that were not moderated by social good (SN→PU, PU→ATU, AIL→PU, AIL→BI) may suggest that students’ perception of social good did not statistically influence these significant positive paths.

Optimism was found to moderate the relationships of perceived usefulness and subjective norms with students’ intention to learn (i.e., PU→BI, SN→BI). The moderated patterns were similar to those of social good except for ATU→BI. Optimism is a personality trait (Parasuraman, 2000; King & Caleon, 2021) that may help to reduce fears and encourage positive reactions. The finding of this study is congruent with previous studies (Walczuch et al., 2007; Chai et al., 2020) that found people to be concerned about the future development of AI technologies and worried that technologies might get out of control and affect society in disastrous ways (Johnson & Verdicchio, 2017; Wang & Wang, 2019). Therefore, when one is inclined not to view AI technologies in an optimistic way, the effects of perceived usefulness and the opinions of socially significant others would be diminished, along with his or her BI. According to the literature, AI can be abused to identify, track, monitor, and analyze individuals’ personal data at new levels of power and speed and in ways that can invade personal privacy (Bryson & Winfield, 2017; Tucker, 2019). Therefore, it is very important to emphasize AI ethics and human bias when designing AI teaching units (Chai et al., 2021) and to make sure the ethical, legal, and societal issues are fully considered (Mueller et al., 2021). In addition, some of the concerns, fears, and anxieties are caused by misunderstanding of and confusion about what AI technologies are and can do (Wang & Wang, 2019). While optimism as a personality trait may not be easily changed, it seems important to foster in students a positive outlook through well-designed curricula that keep students informed and boost their confidence in the applicability of AI technologies (Chai et al., 2020).

The paths that were not moderated by optimism (SN→PU; PU→ATU; ATU→BI; AIL→PU; AIL→BI) indicate that, as with the other moderators, the paths from AIL were not influenced, and that SN→PU, PU→ATU, and ATU→BI were not influenced by optimism. This could be because optimism is a personality trait whose level of stability differs from that of the other variables in this study.

All three moderators influence the path between PU and BI. This implies that students’ views about learning AI to advance personally selected goals (Parasuraman & Colby, 2015), to create a personally bright future (King & Caleon, 2021), and to contribute to society (Floridi et al., 2020) are related to their perception of the usefulness of AI and their intention to learn AI. The findings of this study refine our current understanding of what may constitute and influence perceived usefulness. They also provide insights to support our view that AI curriculum design should highlight how acquiring AI knowledge can put students in a strong position in an AI-enhanced world, so as to foster their intention to learn.

Limitations of the study

This study relied on students’ self-report data to measure items from eight constructs specified in a proposed model within the TPB and TAM framework. As such, the findings may be context sensitive. Given that students’ responses were curriculum-dependent, the proposed model, albeit statistically valid, may have limited generalizability to other contexts. In addition, perceived ease of use, a generic factor of TAM, was not included in this study. Similarly, perceived behavioral control, a generic factor of TPB, was not included. Future research may consider including these factors and other learning contexts in the research model. In a similar vein, there are different ways to contextualize other relevant attitudes or background factors for the learning of AI. Future research may argue for other relevant factors to shed more light on this area of research.

Conclusions

Overall, this study has contributed to the current literature by providing a psychological model of learning AI based on the TPB and TAM. It highlighted important factors that AI curriculum designers should consider. As one of the first few studies on AI education in the secondary education sector (Zawacki-Richter et al., 2019), this study has the potential to lay a foundation for subsequent studies, as issues related to AI and education are likely to continue capturing the attention of researchers in the foreseeable future.

References

Abdullah, F., & Ward, R. (2016). Developing a general extended technology acceptance model for E-learning (GETAMEL) by analysing commonly used external factors. Computers in Human Behavior, 56, 238–256. https://doi.org/10.1016/j.chb.2015.11.036

Ajzen, I. (2002). Perceived behavioral control, self-efficacy, locus of control, and the theory of planned behavior. Journal of Applied Social Psychology, 32(4), 665–683. https://doi.org/10.1111/j.1559-1816.2002.tb00236.x

Ajzen, I. (2012). The Theory of planned behavior. In P. A. M. Van Lange, A. W. Kruglanski, & E. T. Higgins (Eds.), Handbook of Theories of Social Psychology (pp. 438–459). London, UK: Sage

Bhatti, T. (2007). Exploring factors influencing the adoption of mobile commerce. Journal of Internet Banking and Commerce, 12(3), 1–13

Bian, Q., Han, Z., Veuthey, J., & Ben Ma, B. (2021). Risk perceptions of nuclear energy, climate change, and earthquake: How are they correlated and differentiated by ideologies? Climate Risk Management, 32. https://doi.org/10.1016/j.crm.2021.100297

Borenstein, J., & Howard, A. (2021). Emerging challenges in AI and the need for AI ethics education. AI and Ethics, 1(1), 61–65. https://doi.org/10.1007/s43681-020-00002-7

Browne, M. W., & Cudeck, R. (1993). Alternative ways of assessing model fit. In K. A. Bollen & J. S. Long (Eds.), Testing structural equation models (pp. 136–162). Thousand Oaks, CA: Sage

Bryson, J., & Winfield, A. (2017). Standardizing ethical design for artificial intelligence and autonomous systems. Computer, 50(5), 116–119. https://doi.org/10.1109/MC.2017.154

Buabeng-Andoh, C. (2021). Exploring University students’ intention to use mobile learning: A research model approach. Education and Information Technologies, 26, 241–256. https://doi.org/10.1007/s10639-020-10267-4

Carmines, E., & McIver, J. (1981). Analyzing models with unobserved variables: analysis of covariance structures. Social Measurement: Current Issues. Beverly Hills, CA: Sage

Chai, C. S., Wang, X., & Xu, C. (2020). An extended theory of planned behavior for the modelling of Chinese secondary school students’ intention to learn Artificial Intelligence. Mathematics, 8(11), 2089. https://doi.org/10.3390/math8112089

Chai, C. S., Lin, P. Y., Jong, M. S. Y., Dai, Y., Chiu, T. K. F., & Qin, J. J. (2021). Perceptions of and behavioral intentions towards learning artificial intelligence in primary school students. Educational Technology & Society, 24 (3), 89–101.

Chiu, T. K. F., Meng, H., Chai, C. S., King, I., Wong, S., & Yam, Y. (2022). Creation and evaluation of a pretertiary Artificial Intelligence (AI) curriculum. IEEE Transactions on Education, 65(1), 30–39

Chocarro, R., Cortinas, M., & Marcos-Matas, G. (2021). Teachers’ attitudes towards chatbots in education: a technology acceptance model approach considering the effect of social language, bot proactiveness, and users’ characteristics. Educational Studies. https://doi.org/10.1080/03055698.2020.1850426

Cheng, E. W. L., Chu, S. K. W., & Ma, C. S. M. (2016). Tertiary students’ intention to e-collaborate for group projects: Exploring the missing link from an extended theory of planned behavior model. British Journal of Educational Technology, 47(5), 958–969

Cheon, J., Lee, S., Crooks, S., & Song, J. (2012). An Investigation of mobile learning readiness in higher education based on the theory of planned behavior. Computers and Education, 59(3), 1054–1064. https://doi.org/10.1016/j.compedu.2012.04.015

Chiu, T. K., Meng, H., Chai, C. S., King, I., Wong, S., & Yam, Y. (2021). Creation and evaluation of a pretertiary artificial intelligence (AI) curriculum. IEEE Transactions on Education

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319–340

Davies, R. S. (2011). Understanding technology literacy: A Framework for evaluating educational technology integration. TechTrends, 55(5), 45–52. https://doi.org/10.1007/s11528-011-0527-3

Dirican, C. (2015). The impacts of robotics, artificial intelligence on business and economics. Procedia-Social and Behavioral Sciences, 195, 564–573

Fishbein, M., & Ajzen, I. (2010). Predicting and changing behavior: The Reasoned action approach. London, UK: Psychology Press

Floridi, L., Cowls, J., King, T. C., & Taddeo, M. (2020). How to Design AI for Social Good: Seven Essential Factors. Science and Engineering Ethics, 26, 1771–1796. https://doi.org/10.1007/s11948-020-00213-5

Fornell, C., & Larcker, D. F. (1981). Evaluating structural equation models with unobservable variables and measurement error. Journal of Marketing Research, 18(1), 39–50. https://doi.org/10.1177/002224378101800104

Fu, S., Gu, H., & Yang, B. (2020). The affordances of AI-enabled automatic scoring applications on learners’ continuous learning intention: An empirical study in China. British Journal of Educational Technology, 51(5), 1674–1692. https://doi.org/10.1111/bjet.12995

Goldweber, M., Davoli, R., Little, J. C., Riedesel, C., Walker, H., Cross, G., & Von Konsky, B. R. (2011). Enhancing the social issues components in our computing curriculum: computing for the social good. ACM Inroads, 2(1), 64–82. https://doi.org/10.1145/1929887.1929907

Guerin, R. J., Toland, M. D., Okun, A. H., Rojas-Guyler, L., & Bernard, A. L. (2018). Using a modified theory of planned behavior to examine adolescents’ workplace safety and health knowledge, perceptions, and behavioral intention: A structural equation modeling approach. Journal of Youth and Adolescence, 47, 1595–1610. https://doi.org/10.1007/s10964-018-0847-0

Hair, J. F. Jr., Black, W. C., Babin, B. J., Anderson, R. E., & Tatham, R. L. (2010). SEM: An introduction. Multivariate data analysis: A global perspective (7th ed., pp. 629–686). Upper Saddle River, NJ: Pearson Education

Hirschi, A. (2018). The fourth industrial revolution: Issues and implications for career research and practice. The Career Development Quarterly, 66(3), 192–204. https://doi.org/10.1002/cdq.12142

Hofstede, G. (2001). Culture’s consequences: Comparing values, behaviors, institutions and organizations across nations (2nd ed.). Thousand Oaks CA: Sage Publications

Holmstrom, J. (2021). From AI to digital transformation: The AI readiness framework. Business Horizons. https://doi.org/10.1016/j.bushor.2021.03.006

Hu, L. T., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling: A Multidisciplinary Journal, 6(1), 1–55

Huang, F., & Teo, T. (2020). Influence of Teacher-Perceived Organisational Culture and School Policy on Chinese Teachers’ Intention to Use Technology: An extension of Technology Acceptance Model. Educational Technology Research & Development, 68, 1547–1567

Huang, F., & Teo, T. (2021). Examining the role of technology-related policy and constructivist teaching belief on English teachers’ technology acceptance: A study in Chinese universities. British Journal of Educational Technology, 52(1), 441–460

Huang, F., Teo, T., & Zhou, M. (2019). Factors affecting Chinese English as a foreign language teachers’ technology acceptance: A qualitative study. Journal of Educational Computing Research, 57(1), 83–105

Huang, F., Teo, T., & Scherer, R. (2020a). Investigating the antecedents of university students’ perceived ease of using the Internet for learning. Interactive Learning Environments. https://doi.org/10.1080/10494820.2019.1710540

Huang, F., Teo, T., & Zhou, M. (2020b). Chinese students’ intentions to use the Internet for learning. Educational Technology Research & Development, 68(1), 575–591

Huang, F., Teo, T., & Guo, J. Y. (2021). Understanding English teachers’ non-volitional use of online teaching: A Chinese study. System, 101

Holzinger, A., Searle, G., & Wernbacher, M. (2011). The effect of previous exposure to technology on acceptance and its importance in usability and accessibility engineering. Universal Access in the Information Society, 10(3), 245–260

Hwang, G. J., Xie, H., Wah, B. W., & Gašević, D. (2020). Vision, challenges, roles and research issues of Artificial Intelligence in Education. Computers and Education: Artificial Intelligence, 1. https://doi.org/10.1016/j.caeai.2020.100001

International Business Machines Corporation (IBM) (2020). Artificial intelligence. Retrieved May 2021 from https://www.ibm.com/cloud/learn/what-is-artificial-intelligence

Jarrahi, M. H. (2018). Artificial intelligence and the future of work: Human-AI symbiosis in organizational decision making. Business Horizons, 61(4), 577–586. https://doi.org/10.1016/j.bushor.2018.03.007

Johnson, D. G., & Verdicchio, M. (2017). AI anxiety. Journal of the Association for Information Science and Technology, 68(9), 2267–2270. https://doi.org/10.1002/asi.23867

King, R. B., & Caleon, I. S. (2021). School psychological capital: Instrument development, validation, and prediction. Child Indicators Research, 14(3), 341–367. https://doi.org/10.1007/s12187-020-09757-1

Knox, J. (2020). Artificial intelligence and education in China. Learning, Media and Technology, 45(3), 298–311. https://doi.org/10.1080/17439884.2020.1754236

Kumar, A., & Mantri, A. (2021). Evaluating the attitude towards the intention to use ARITE system for improving laboratory skills by engineering educators. Education and Information Technologies. https://doi.org/10.1007/s10639-020-10420-z

Law, L., & Authur, D. (2003). What factors influence Hong Kong school students in their choice of a career in nursing? International Journal of Nursing Studies, 40(1), 23–32. https://doi.org/10.1016/S0020-7489(02)00029-9

Lin, H. C., Tu, Y. F., Hwang, G. J., & Huang, H. (2021). From precision education to precision medicine: Factors affecting medical staff’s intention to learn to use AI applications in hospitals. Educational Technology & Society, 24(1), 123–137

Mardia, K. V. (1970). Measures of multivariate skewness and kurtosis with applications. Biometrika, 57(3), 519–530

Matsuda, N., Weng, W., & Wall, N. (2020). The Effect of metacognitive scaffolding for learning by teaching a teachable agent. International Journal of Artificial Intelligence in Education, 30, 1–37. https://doi.org/10.1007/s40593-019-00190-2

Moore, D. R. (2011). Technology literacy: The Extension of cognition. International Journal of Technology and Design Education, 21(2), 185–193. https://doi.org/10.1007/s10798-010-9113-9

Mueller, H., Mayrhofer, M. H., Van Veen, E., & Holzinger, A. (2021). The Ten Commandments of Ethical Medical AI. IEEE Computer, 54(7), 119–123. https://doi.org/10.1109/MC.2021.3074263

Ng, P. T. (2020). The Paradoxes of Student Well-being in Singapore. ECNU Review of Education, 3(3), 437–451. https://doi.org/10.1177/2096531120935127

Nichols, T. P., & Stornaiuolo, A. (2019). Assembling “Digital Literacies”: Contingent pasts, possible futures. Media and Communication, 7(2), 14–24. https://doi.org/10.17645/mac.v7i2.1946

Parasuraman, A. (2000). Technology readiness index (TRI): A Multiple-item scale to measure readiness to embrace new technologies. Journal of Service Research, 2(4), 307–320. https://doi.org/10.1177/109467050024001

Parasuraman, A., & Colby, C. L. (2015). An Updated and streamlined technology readiness index: TRI 2.0. Journal of Service Research, 18(1), 59–74. https://doi.org/10.1177/1094670514539730

Park, S. Y. (2009). An analysis of the Technology Acceptance Model in understanding university students’ behavioral intention to use e-Learning. Educational Technology & Society, 12(3), 150–162

Peter, J., & Churchill, G. (1986). Relationships among research design choices and psychometric properties of rating scales: A meta-analysis. Journal of Marketing Research, 23(1), 1–10. https://doi.org/10.1177/002224378602300101

Qin, J. J., Ma, F. G., & Guo, Y. M. (2019). Foundations of Artificial Intelligence for primary school. Beijing, CN: Popular Science Press

Raykov, T., & Marcoulides, G. A. (2008). An introduction to applied multivariate analysis. New York, NY: Routledge

Seldon, A., & Abidoye, O. (2018). The Fourth education revolution. London, UK: Legend Press Ltd.

Stopar, K., & Bartol, T. (2019). Digital competences, computer skills and information literacy in secondary education: mapping and visualization of trends and concepts. Scientometrics, 118, 479–498. https://doi.org/10.1007/s11192-018-2990-5

Tang, X., & Chen, Y. (2018). Fundamentals of artificial intelligence. Shanghai, CN: East China Normal University

Teo, T., Huang, F., & Hoi, C. K. W. (2018). Explicating the influences that explain intention to use technology among English teachers in China. Interactive Learning Environments, 26(4), 460–475

Teo, T., Zhou, M., & Noyes, J. (2016). Teachers and technology: Development of an extended theory of planned behavior. Educational Technology Research and Development, 64(6), 1033–1052

Tucker, C. (2019). Privacy, Algorithms, and Artificial Intelligence. In A. Agrawal, J. Gans, & A. Goldfarb (Eds.), The Economics of Artificial Intelligence (pp. 423–438). Chicago: University of Chicago Press. https://doi.org/10.7208/9780226613475-019

Veletsianos, G., & Russell, G. (2014). Pedagogical Agents. In M. Spector, D. Merrill, J. Elen, & M. J. Bishop (Eds.), Handbook of Research on Educational Communications and Technology (4th ed., pp. 759–769). New York: Springer Academic

Walczuch, R., Lemmink, J., & Streukens, S. (2007). The effect of service employees’ technology readiness on technology acceptance. Information & Management, 44(2), 206–215. https://doi.org/10.1016/j.im.2006.12.005

Wang, Y. Y., & Wang, Y. S. (2019). Development and validation of an artificial intelligence anxiety scale: an initial application in predicting motivated learning behavior. Interactive Learning Environments. https://doi.org/10.1080/10494820.2019.1674887

Watkins, T. (2020). Cosmology of artificial intelligence project: Libraries, makerspaces, community and AI literacy. AI Matters, 5(4), 14–17. https://doi.org/10.1145/3375637.3375643

Weng, F., Yang, R. J., Ho, H. J., & Su, H. M. (2018). A TAM-based study of the attitude towards use intention of multimedia among School Teachers. Applied System Innovations, 1(3), 36. https://doi.org/10.3390/asi1030036

Yeager, D. S., & Bundick, M. J. (2009). The role of purposeful work goals in promoting meaning in life and in schoolwork during adolescence. Journal of Adolescent Research, 24(4), 423–452

Zawacki-Richter, O., Marín, V. I., Bond, M., & Gouverneur, F. (2019). Systematic review of research on artificial intelligence applications in higher education–where are the educators? International Journal of Educational Technology in Higher Education, 16(1), 39. https://doi.org/10.1186/s41239-019-0171-0

Acknowledgements

The authors would like to express gratitude to participants in this study.

Ethics declarations

None

About this article

Cite this article

Sing, C.C., Teo, T., Huang, F. et al. Secondary school students’ intentions to learn AI: testing moderation effects of readiness, social good and optimism. Education Tech Research Dev 70, 765–782 (2022). https://doi.org/10.1007/s11423-022-10111-1

DOI: https://doi.org/10.1007/s11423-022-10111-1