Abstract

Summarization and persuasive writing are important in postsecondary education and often require the use of source text. However, students entering college with low literacy skills often find this type of writing difficult. The present study compared predictors of performance on text-based summarization and persuasive writing in a sample of low-skilled adult students enrolled in college developmental education courses. The predictors were general reading and writing ability, self-efficacy, and teacher judgments. Both genre-specific and general dependent variables were used. A series of hierarchical regressions modeling participants’ writing skills found that writing ability and self-efficacy were predictive of the proportion of functional elements in the persuasive essays, reading ability predicted the proportion of main ideas from source text in the summaries, and teacher judgments were predictive of vocabulary usage. General reading and writing skills predicted written summarization and persuasive writing differently; the data showed relationships between general reading comprehension and text-based summarization on one hand, and between general writing skills and persuasive essay writing on the other.

Introduction

Writing skill depends on an intricate interweaving of cognitive, linguistic and affective processes. The writer must rapidly retrieve knowledge from long-term memory, manage working memory and attention, express ideas cohesively in verbal form, and think clearly about topics (Kellogg & Whiteford, 2009). It has been proposed that proficient writers utilize a “task environment” made up of social and physical factors including awareness of the audience for the writing, and the text written so far. Further, writers draw on processes at the level of “the individual,” including planning, drafting, and revision, as well as motivation and affect. Affective factors in writing include one’s goals, predispositions and beliefs about writing (Hayes, 1996).

At the postsecondary level, proficient writing relies on strategies intended to ensure an appropriate response to an assigned prompt (Fallahi, 2012; MacArthur, Philippakos, & Ianetta, 2015). The writer is now expected to understand the informational needs of a reader, generate text appropriate to the purpose and convey information accurately and coherently, while at the same time obeying conventions of grammar and spelling. At this point, there is a focus on discipline-specific writing that summarizes, synthesizes, analyzes and responds to information, and offers evidence to support a position (O’Neill, Adler-Kassner, Fleischer, & Hall, 2012).

For many years, two types of writing that have featured prominently in postsecondary education are persuasive writing and written summarization (Bridgeman & Carlson, 1984; Hale et al., 1996; Wolfe, 2011). These two forms of writing present difficulty for low-skilled adults in this setting (Johns, 1985; MacArthur et al., 2015), and understanding predictors of performance on the two tasks would be an important contribution to intervention planning. The purpose of this study is to determine the extent to which general reading and writing ability, self-efficacy and teacher judgments served as predictors of persuasive writing compared to summarization among low-skilled adult students enrolled in postsecondary developmental education (also known as college remedial programs). In particular, predictors were examined for persuasive writing and summaries written based on source text.

Persuasive writing

The goal of persuasive writing is to convince readers of a position. The writer should state and defend an opinion on an issue, and, at an advanced level, acknowledge and rebut an opposing position, and draw a conclusion (De La Paz, Ferretti, Wissinger, Yee, & MacArthur, 2012; Ferretti, Lewis, & Andrews-Weckerly, 2009; Wolfe, 2011). Writers of well-written persuasive essays are aware of the level of knowledge of their audience and can anticipate readers’ views (Ferretti et al., 2009). Discourse knowledge important in persuasive writing includes an awareness of customary norms of thought of members of a disciplinary community (Goldman & Bisanz, 2002; Shanahan & Shanahan, 2008; Wineburg, 1991; Yore, Hand, & Prain, 2002). Little information is available in previous literature on the persuasive writing abilities of postsecondary remedial students. However, it has been reported that the persuasive essays of students in adult literacy programs may be of low overall quality and display problems with all elements of the persuasive genre, although these weaknesses may decrease after a focused intervention (MacArthur & Lembo, 2009).

Written summarization

Summarization involves arriving at the gist of a message by condensing information to its most important ideas (Perin, Keselman, & Monopoli, 2003; Brown & Day, 1983; Kintsch & van Dijk, 1978; Westby, Culatta, Lawrence, & Hall-Kenyon, 2010). Key processes in summarization are knowing the purpose of a summary, abstracting only the most important information, integrating ideas, and creating a brief but cohesive message expressing condensed information in one’s own words (Westby et al., 2010). Several studies have reported difficulties in text-based summarization among academically underprepared students in postsecondary education, and specifically, with including important ideas from the source text and representing information accurately (Perin et al., 2003; Perin, Bork, Peverly & Mason, 2013; Johns, 1985; Selinger, 1995). For example, Johns (1985) reported that only six percent of a sample of developmental education students included two-thirds of the main ideas in a reading passage when writing a summary and that over half of the information in the summaries was inaccurate. Perin et al. (2003), in a study of written summarization in a similar population, found that approximately 15% of sentences written were extraneous to information in source text, and about one-third of sentences portrayed information inaccurately.

Text-based writing

When the stimulus for writing is written text, reading comprehension skills are required (Fitzgerald & Shanahan, 2000; Mateos, Martin, Villalon, & Luna, 2008). As students enter postsecondary education, they should be able to analyze text to identify the most important information, utilize background knowledge from specific content areas, interpret language and vocabulary appropriately for the context, use strategies to understand new concepts, and draw analogies between different pieces of information (Holschuh & Aultman, 2009; Macaruso & Shankweiler, 2010; Paulson, 2014; Wang, 2009). The ability to comprehend complex expository text is particularly important (Armstrong, Stahl, & Kantner, 2015). Competent reading at the postsecondary level depends on self-regulatory and metacognitive mechanisms involved in setting goals for reading a particular text, applying knowledge of text structure to comprehension, assessing one’s understanding while reading, and evaluating the trustworthiness of text (Bohn-Gettler & Kendeou, 2014; Bråten, Strømsø, & Britt, 2009).

From a cognitive and linguistic perspective, reading and writing are closely related (Fitzgerald & Shanahan, 2000). In higher education, students need to integrate these skills since, at this level, writing assignments tend to be text-based (Carson, Chase, Gibson, & Hargrove, 1992; Jackson, 2009; McAlexander, 2003) and require critical reading of source text (O’Neill et al., 2012; Yancey, 2009). Many students entering postsecondary education have low literacy skills (Sparks & Malkus, 2013) and do not meet college readiness standards on reading and writing (National Governors’ Association and Council of Chief State School Officers, 2010).

Self-efficacy for reading and writing

Self-efficacy, defined as the level of confidence a person has in his/her own ability to perform a challenging task (Bruning, Dempsey, Kauffman, McKim, & Zumbrunn, 2013), is an important construct in postsecondary education. This variable is associated with academic achievement and perseverance, including a tendency to increase effort or attempt new strategies in the face of academic difficulty (Conley & French, 2014).

Self-efficacy appears to mediate learning among college students (Kitsantas & Zimmerman, 2009), and systematic relationships between this construct and both reading and writing achievement have been reported (MacArthur, Philippakos, & Graham, 2016; Pajares & Valiante, 2006). College developmental (remedial) reading students report lower levels of self-efficacy than students not placed in developmental reading courses (Cantrell et al., 2013). Further, low-skilled postsecondary students may increase their self-efficacy for reading and writing tasks as their skills improve (Caverly, Nicholson, & Radcliffe, 2004; MacArthur et al., 2015).

Teacher judgments of students’ reading and writing skills

Teachers have been found to be reliable judges of their students’ reading ability (Ritchey, Silverman, Schatschneider, & Speece, 2015). An early review of studies of the relation between teacher ratings and student test scores reported correlations from r = .28 to r = .86, with a median correlation of r = .62 (Hoge & Coladarci, 1989). A more recent review corroborated this finding, with a mean effect size of .63 (Südkamp, Kaiser, & Möller, 2012). Teacher judgments of students’ general writing ability have been found to be moderate predictors of students’ motivational beliefs about writing, including self-efficacy (Troia, Harbaugh, Shankland, Wolbers, & Lawrence, 2013). However, it has also been reported that teacher ratings of reading skill are more reliable predictors for higher- than lower-achieving students (Begeny, Krouse, Brown, & Mann, 2011; Feinberg & Shapiro, 2009), and that teachers may overestimate their students’ ability, especially for students of average reading ability (Martin & Shapiro, 2011). One question that has rarely been addressed in the literature is the relation between teacher judgments and students’ self-efficacy. Especially where low-skilled adults are concerned, it is not known whether students and/or their instructors are able to predict performance levels accurately, or to what extent instructors’ judgments match their students’ estimates of their own performance.

Current study

Previous studies of persuasive writing and summarization have focused on typically-performing postsecondary students, and students of a range of ability in elementary and secondary education. To our knowledge, no study has reported on predictors of the two types of writing among low-skilled adults in higher education. Seeking to address this gap in the literature, the current study examined predictors of persuasive writing and summarization performance by developmental education students in two colleges in the U.S. The study asked, what are the contributions of general reading and writing ability, self-efficacy ratings, and teacher judgments to measures of text-based summarization and persuasive writing, controlling for college attended? College was entered as a control variable because initial analyses showed differences in reading scores as a function of the participants’ college.

Method

Participants and setting

The participants were N = 211 students attending community college developmental education courses in which reading and writing instruction were integrated. The purpose of developmental education is to provide instruction in basic skills to prepare low-skilled students for the academic demands of the postsecondary curriculum (Boylan, 2002). Entrants to community colleges (also known as two-year and junior colleges) are assessed for reading, writing and mathematics skills and referred to developmental education if test scores are below a predetermined cut point (Bailey, Jeong, & Cho, 2010). The courses attended by participants were one level or two levels below freshman English composition courses in two community colleges in a southern US state (N = 123 in College 1, N = 88 in College 2). The initial sample was N = 212 but one participant was dropped from the analysis because the written summary task was not completed. Fifty-four percent of the participants were Black or African-American, 64% were female, and 75% spoke English as a native language. All were fluent in oral English. Mean age was 24.55 years (SD = 10.66), with 71% aged 18–24 years. All participants had completed secondary education; 83% had a regular high school diploma and the others had a General Education Development (GED) equivalency diploma. Thirty-one percent of participants were responsible for children in the home, and 59% were employed.

The students’ developmental courses centered on three state-mandated goals: demonstrate the use of reading and writing processes; apply critical thinking strategies in reading and writing; and recognize and compose well-developed, coherent, and unified texts. Competencies prescribed by the state relevant to the current study were to compose a text with a consistent point of view, and summarize complex texts. These goals and competencies were developed in a statewide educational reform effort that had occurred prior to the current study and pertained only to postsecondary developmental education.

Classroom observations and interviews with instructors indicated that students did in fact write summaries and persuasive essays during the course. The courses lasted eight weeks and at the time of data collection, the participants were nearing the end of the course. Instructors reported anecdotally that almost all of the study participants were expected to pass the course, meaning that they were considered to be almost ready for postsecondary-level reading and writing.

Measures

Text-based writing tasks necessarily involve both reading comprehension and writing skills (Fitzgerald & Shanahan, 2000). Further, text-based persuasive writing and summarization may trigger different reading processes (Gil, Bråten, Vidal-Abarca, & Strømsø, 2010a, b). In addition, self-efficacy has been found to be related to writing skills among low-skilled adults in postsecondary education (MacArthur et al., 2015). For these reasons, general reading and writing ability, and self-efficacy were selected as basic measures of skill and affect in the current study, which we see as an initial foray into this area. A fourth predictor, teacher judgments, was selected for the purpose of eventual intervention planning, as previous work with children has found this variable to be systematically related to classroom literacy performance (e.g., Südkamp et al., 2012). As a practical matter, we were also interested in the extent to which self-efficacy ratings matched teacher judgments in the target population.

General reading and writing skill

The Nelson-Denny Reading Test, Comprehension subtest (Brown, Fishco, & Hanna, 1993), and the Woodcock–Johnson III (WJ-III; Woodcock, McGrew, & Mather, 2001) Writing Fluency subtest were used as measures of general reading and writing skills. Raw scores, obtained according to instructions in the test manuals, were used in the analysis.

The Nelson-Denny Comprehension subtest consists of 38 multiple-choice factual and inferential questions based on seven unrelated reading passages. The test is normed for Grades 9 through 16. The publisher reports Kuder–Richardson Formula 20 reliability coefficients of .85–.91 for the Comprehension subtest but does not provide information on validity. However, the measure is thought to have reasonable face validity for screening general reading skills (Smith, 1998).

The WJ-III Writing Fluency subtest assesses the ability to write grammatically correct sentences using target words, in speeded conditions. The WJ-III battery is normed for ages 2 through 90. The median score reliability, using a Rasch procedure appropriate for speeded measures, is .88 (reliabilities of .80 and above are interpreted as desirable; Schrank, McGrew, & Woodcock, 2001). Further, previous research with low-skilled adults found a reliable relationship between the measure and the quality of persuasive essays (MacArthur et al., 2015).

Text-based writing

Two researcher-designed, 30-min tasks were administered to measure text-based writing. Intact text from newspaper articles was used in the interest of ecological validity (Neisser, 1976). To select source text, articles from several newspapers were scanned for readability in combination with appropriateness of topic, and length. We used the readability level of typical introductory college textbooks as the standard for our search for text for the study. These textbooks tend to be written at the 12th grade readability level, although developmental reading texts, as used in the courses in which the current participants were enrolled, are generally written at a lower level (Armstrong et al., 2015). The introductory college level was considered optimal as participants were considered by their instructors to be almost ready for college reading.

Topics were selected to correspond to those taught in high-enrollment, introductory college-level general education courses in which reading and writing assignments were of importance in both learning and assessment. The highest enrollments in the participating colleges in courses with such literacy demands, which students had not yet taken, were in psychology and sociology. Interviews with content-area faculty indicated that newspaper articles were assigned regularly to supplement readings in course textbooks. Further, it was important that the articles were readable by participants within the time allowed for the task. Instructors consulted during the design of the study provided guidance on text length.

Articles meeting the readability and topic criteria were found in the newspaper USA Today, and selected as source text. The articles selected were on the psychology topic of stress experienced by teenagers and the sociology topic of intergenerational tensions in the workplace. The psychology topic was used for the persuasive essay, and the sociology topic was used for the written summarization task. Because instructors requested that assessment time be kept short, it was necessary for the research staff to reduce word length by eliminating several paragraphs in each article. The deleted paragraphs presented examples to illustrate main points in the articles and did not add new meaning. Two teachers with experience in reading instruction read the reduced-length articles and verified that neither cohesion nor basic meaning had been lost as a result of the reduction.

Word count was 650 for the psychology text and 676 for the sociology text. Flesch-Kincaid grade levels were 10–11, and Lexile levels were 1250–1340, corresponding to an approximately 12th grade level. High school students who can comprehend text at a 1300 Lexile level are considered ready for college reading (National Governors’ Association and Council of Chief State School Officers, 2010, Appendix A), and the syllabus for the top level developmental course indicated that texts were within a Lexile range of 1185–1385. Retrospective reports on the summary task (Perin, Raufman, & Kalamkarian, 2015), which included comprehension questions, indicated that students had no difficulty understanding the article used for that task. Comprehension of the other article, used for the persuasive essay, was not assessed. However, developmental course instructors stated that both articles were within students’ reading ability.
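For readers unfamiliar with the Flesch-Kincaid index, the following minimal sketch shows the standard published grade-level formula applied to raw counts. It is offered only as an illustration: the function name is hypothetical, and the sentence and syllable counts in the usage line are invented for the example (only the 650-word count comes from the study). It does not reproduce the tool that was actually used to score the articles.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid grade level from raw counts (standard published formula)."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Hypothetical counts for a 650-word passage; the sentence and syllable
# counts are assumptions chosen for illustration, not data from the study.
grade = flesch_kincaid_grade(words=650, sentences=30, syllables=1040)
print(f"Estimated grade level: {grade:.1f}")
```

Longer sentences and more syllables per word both raise the estimated grade level, which is why trimming illustrative paragraphs from an article need not change its readability much.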

Persuasive essay

Students were asked to read the article on stress experienced by teenagers and write an essay expressing their opinion on a controversy discussed in the article. The prompt instructed students to pretend that they were trying to persuade a friend to agree with them, and use their own words, with no quotations. It was also stated that students could mark the article if they wished, and that a dictionary could be used during the task.

Summary

The text-based summarization task required students to read a second newspaper article, on intergenerational conflict, and summarize it in one or two paragraphs using their own words, again with no quotations. As with the essay, students were permitted to use a dictionary and mark the source text as they wished. The task had another part, in which students stated how information in the article could be applied in a job setting. However, only the summary portion of the response was analyzed for the current study.

Self-efficacy

On a scale designed for the study based on the work of MacArthur, Philippakos, and Ianetta (2015), participants were asked to respond to 16 questions that asked them to rate the level of confidence they felt in their ability on the text-based tasks and related skills (items listed in “Appendix” section). The ratings were obtained prior to the administration of the summarization and persuasive writing tasks so that they could be used predictively in the analysis. The instructions informed respondents that they would shortly be asked to read two newspaper articles and write a summary and persuasive essay based on them. They were directed to rate their confidence on these tasks by selecting, for each of the 16 questions, one point on a 100-point scale reflecting their level of confidence. Participants had to circle one of 11 points on the scale (0, 10, 20, 30, 40, 50, 60, 70, 80, 90 and 100). Examples of points on the scale were provided in the instructions: 0 (“you are sure you cannot do it”), 50 (“there is an equal chance that you can do it or not do it”), and 100 (“you are sure you can do it”). The same 16 questions were used for teacher judgments, as described below, although the wording of the student items was simplified to ensure comprehension.

Teacher judgments

A teacher judgment scale was developed for the study based on work suggesting that teacher judgments are predictive of student performance (Hoge & Coladarci, 1989; Speece et al., 2010; Südkamp et al., 2012; Troia et al., 2013). Using the online Qualtrics platform, instructors were asked to provide ratings on the same items for which students had rated their self-efficacy, using the same 11 points on a 100-point scale. Thus, each teacher judgment was keyed to a student self-efficacy item. As with the self-efficacy scale, the teachers were asked to rate their level of confidence that the student was able to perform the task indicated. A rating of 100% indicated that the teacher was sure that the student had the skill, 50% meant that the teacher thought that there was an equal chance that the student did or did not have the skill, and 0% meant that the teacher thought that the student did not have the skill.

Scoring of text-based writing

The persuasive essays and summaries, which had been handwritten by participants, were word processed, correcting for spelling, capitalization, and punctuation (Graham, 1999; MacArthur & Philippakos, 2010; Olinghouse, 2008). Two genre-specific scores were obtained for each type of writing: holistic persuasive quality and number of persuasive essay parts for the essays, and proportion of main ideas from the source text and analytic quality for the summaries. In addition, the use of academic words and the length of the writing sample, in number of words, were obtained for both types of writing. Thus, there were four scores for each type of writing.

Holistic quality of the persuasive essay was scored using a 7-point rubric (based on MacArthur et al., 2015). Raters were asked to bear in mind the clarity of expression of ideas, the organization of the essay, the choice of words, the flow of language and variety of sentences written, and the use of grammar. For the essay parts measure (adapted from Ferretti, MacArthur, & Dowdy, 2000), each essay was first parsed into idea units (Gil et al., 2010b; Magliano, Trabasso, & Graesser, 1999), and each unit was then labeled as a functional or non-functional element. Following Ferretti et al. (2000), functional elements consisted of a proposition, or statement of belief or opinion; a reason for the position stated; an elaboration of proposition or reason; an alternative proposition, or counterargument; a reason for the alternative proposition; a rebuttal of the counterargument; or a concluding statement. Non-functional persuasive elements were defined as repetitions or information not relevant to the prompt. Almost all of the functional persuasive units in the current corpus were propositions, reasons, elaborations of propositions or reasons, or conclusions, with very few counterarguments or rebuttals. The functional and non-functional elements were summed, and functional elements were expressed as a proportion of total elements to yield a proportion-of-functional-elements score.
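The proportion score described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the label strings, the function name, and the coded essay in the usage example are all hypothetical and are not taken from the study's coding materials.

```python
# Label names are assumptions chosen to mirror the categories in the text.
FUNCTIONAL_LABELS = {
    "proposition", "reason", "elaboration", "alternative_proposition",
    "alternative_reason", "rebuttal", "conclusion",
}
NONFUNCTIONAL_LABELS = {"repetition", "irrelevant"}


def proportion_functional(labels):
    """Functional elements as a proportion of all coded idea units."""
    if not labels:
        raise ValueError("an essay needs at least one coded idea unit")
    known = FUNCTIONAL_LABELS | NONFUNCTIONAL_LABELS
    unknown = [label for label in labels if label not in known]
    if unknown:
        raise ValueError(f"unrecognized labels: {unknown}")
    functional = sum(1 for label in labels if label in FUNCTIONAL_LABELS)
    return functional / len(labels)


# Invented coding of one essay: 6 functional units out of 8 idea units.
essay = ["proposition", "reason", "elaboration", "repetition",
         "reason", "irrelevant", "conclusion", "elaboration"]
print(proportion_functional(essay))
```

Expressing the score as a proportion rather than a count keeps longer essays from scoring higher simply because they contain more idea units.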

On the summaries, the proportion of main ideas from the source text was calculated (Perin et al., 2003; Perin et al., 2013). To identify the main ideas, the first author and two research assistants who were experienced English language arts teachers each independently read the article and listed the main ideas. Then, through discussion consensus was reached on which ideas to retain, eliminate, or add to the list. Through this process, nine main ideas were identified in the source text. For the analysis, the number of main ideas found in each summary was expressed as a proportion of the nine main ideas in the source text.

The quality of the summary was assessed using an analytic summarization quality rubric with four 4-point ratings, for a maximum score of 16 (based on Westby et al., 2010). Ratings were made for four aspects of summary quality: (a) topic/key sentence, main idea; (b) text structure; (c) gist, content (quantity, accuracy, and relevance); and (d) sentence structure, grammar, and vocabulary.

Both the essays and summaries were scored for the use of academic words (Lesaux, Kieffer, Kelley, & Harris, 2014; Olinghouse & Wilson, 2013). Academic words are defined as those that appear frequently in academic texts but are not specific to any one subject area (Lesaux et al., 2014); examples include circumstances, category, debate, demonstrate, estimate, interpret, and guarantee. The percentage of academic words was obtained from the automated vocabulary profiler software program VocabProfile (Cobb, n.d.), which computes the percentage of words that occur on the Academic Word List (Coxhead, 2000), a list of words that are not among the 2,000 most frequent words in the English language. The academic word measure reflects the assumption that less mature writing contains a predominance of highly frequent words and fewer low-frequency words (McNamara, Crossley, & McCarthy, 2010; Uccelli, Dobbs, & Scott, 2013).
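The logic of a percentage-of-academic-words measure can be illustrated with a short sketch. It is not the VocabProfile program: the word set below is a stand-in containing only the seven example words quoted above rather than the full Academic Word List, matching is on exact word forms rather than word families, and the function name and sample sentence are hypothetical.

```python
import re

# Stand-in list: the seven example words from the text, NOT the full
# Academic Word List used in the study.
EXAMPLE_ACADEMIC_WORDS = {
    "circumstances", "category", "debate", "demonstrate",
    "estimate", "interpret", "guarantee",
}


def percent_academic_words(text, academic_words=EXAMPLE_ACADEMIC_WORDS):
    """Percentage of word tokens in `text` that appear in `academic_words`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in academic_words)
    return 100.0 * hits / len(tokens)


# Invented sample sentence: 3 of its 9 tokens are on the stand-in list.
sample = "Teens debate how to interpret stress in different circumstances"
print(f"{percent_academic_words(sample):.1f}% academic words")
```

Because the score is a percentage of all tokens, it is independent of the length of the writing sample, which is measured separately.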

Finally, the number of words written on the persuasive essays and summaries was obtained using the word count feature in the Word program. This measure was taken because low-achieving students often produce inappropriately short compositions (Doolan, 2014; Nelson & Van Meter, 2007; Puranik, Lombardino, & Altmann, 2008).

All of the measures were scored by three trained research assistants who were unfamiliar with the goals of the project. Each assistant scored one of the three full sets of writing samples (WJ-III Writing Fluency, essay, summary). A random sample of 30% of each set was scored by another assistant to determine the interrater reliabilities of scores on the WJ-III Writing Fluency, essay functional elements and quality, and the summary proportion of main ideas and analytic quality. Anchor papers for the essays and summaries obtained in piloting were used, and pairs of assistants were trained by scoring sets of 10 protocols until they reached a criterion of 80% exact agreement. It is noted that training was a lengthy process because the writing was poor and sometimes difficult to understand.

Interrater reliabilities (Pearson’s r) for the measures were adequate: WJ-III Writing Fluency: .98; essay functional elements: .92; essay quality: .85; summary proportion of main ideas: .72; summary quality: .85. Exact agreement for persuasive essay quality was adequate at 77%. However, exact agreement on the other variables was moderate or low: 40% for writing fluency, 43% for essay functional elements, 14% for summary main ideas, and 23% for summary quality. Agreement within one point improved to 79% for writing fluency, 68% for essay functional elements, 100% for essay quality, 63% for summary main ideas, and 44% for summary quality. It can be seen that inter-scorer agreement on the summary quality measure was problematic; however, agreement within two points on this 16-point measure improved to 71%.
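A high Pearson correlation can coexist with low exact agreement because the correlation is unaffected by a constant offset between two raters. The following minimal sketch illustrates this with invented ratings; the function names and the two score lists are hypothetical and are not data from the study.

```python
from math import sqrt


def agreement_within(scores_a, scores_b, tolerance=0):
    """Share of samples on which two raters differ by at most `tolerance` points."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("score lists must be non-empty and of equal length")
    matches = sum(1 for a, b in zip(scores_a, scores_b) if abs(a - b) <= tolerance)
    return matches / len(scores_a)


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Invented ratings on a 16-point rubric: rater B is always one point higher.
rater_a = [6, 8, 9, 11, 12, 14]
rater_b = [7, 9, 10, 12, 13, 15]

print("Pearson r:        ", pearson_r(rater_a, rater_b))
print("Exact agreement:  ", agreement_within(rater_a, rater_b, tolerance=0))
print("Within one point: ", agreement_within(rater_a, rater_b, tolerance=1))
```

In this constructed case the two raters rank every sample identically, so the correlation is perfect, yet they never assign the same score; agreement within one point captures the closeness that exact agreement misses.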

Procedure

Participants were recruited by college liaisons through classroom presentations and flyers. The measures were administered by the third and fourth authors, following a script, in 2.5 h group sessions, outside of class time, with several rest breaks. Order of administration was fixed, as follows: self-efficacy, persuasive essay, WJ-III Writing Fluency, summary, Nelson-Denny Comprehension, and a questionnaire on demographic and academic information. The teacher judgments were submitted on the Qualtrics platform. Along with the link to the scale, instructors received copies of the reading and writing tasks on which their judgments focused, and were given monetary compensation for completion of the task. Participants received gift cards. All data in the study were collected in accordance with the standards and guidelines of the Institutional Review Board of Teachers College, Columbia University.

Results

Descriptive statistics

Raw and standard scores on the general reading and writing measures (Nelson-Denny Comprehension and WJ-III Writing Fluency tests) are shown in Table 1; only raw scores were used in the correlation and regression analyses reported below. The scores verify that participants were low-skilled, and translate to the 22nd and 27th percentiles for reading and writing, respectively, using as a reference group 12th graders at the end of the school year. There were no statistically significant differences between developmental course levels on either test at either college. However, there was a statistically significant between-college difference on the comprehension scores, with higher scores at College 1 (t = 2.5, df = 210, p < .01), although there was no systematic difference in writing fluency (t = .33, df = 209, p = .744).

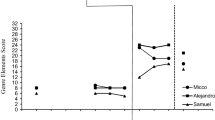

Mean (unadjusted) writing scores, and the self-efficacy ratings and teacher judgments are shown in Table 2. Intercorrelations between general reading and writing, and text-based writing scores are shown in Table 3, and between self-efficacy ratings, teacher judgments and text-based writing scores in Table 4. Pearson product-moment correlations (Pearson’s r) were performed. Data are provided for all N = 211 participants on the reading and writing variables. Instructors provided teacher judgments for N = 162 of the participants; data were missing on this variable for the remaining N = 49 participants. Only the 162 participants with teacher judgments were included in the analysis of the self-efficacy and teacher judgment data. As mentioned earlier, the teacher judgments were provided by instructors on an online platform; follow-up with instructors did not succeed in obtaining the missing information.

Table 3 shows that the correlation between reading comprehension and writing ability was statistically significant. Both of these variables correlated significantly with proportion of functional elements, holistic persuasive quality, length of the persuasive essay, and summary analytic quality. Only reading comprehension correlated significantly with proportion of main ideas in the summary and percentage of academic words in the summary. Neither variable correlated significantly with percent academic words in the persuasive essay or length of the summary.

In Table 4 it can be seen that the correlation between the self-efficacy ratings and teacher judgments was statistically significant. Both of these measures were significantly correlated with the proportion of functional persuasive elements and with summary analytic quality. Only the teacher judgment ratings correlated significantly with holistic persuasive quality, proportion of main ideas in the summary, length of the summary, and proportion of academic words in the summary. Thus, overall, the teacher judgments appeared more predictive of student performance than the self-efficacy ratings.

Contribution of general reading and writing ability, self-efficacy, and teacher judgments to text-based writing

A series of hierarchical regressions was completed in order to determine the contribution of general reading and writing ability (as measured by scores on the Nelson-Denny and WJ-III Writing Fluency tests), self-efficacy ratings, and teacher judgments to scores on the persuasive essays and written summaries. We modeled six variables: the proportion of functional persuasive elements in the essay, essay quality, the percentage of academic words in the essay, the proportion of main ideas in the summary, summary quality, and the percentage of academic words in the summary.

College was entered in the first block as a control variable, since there were statistically significant differences in general reading and writing ability (Nelson-Denny Comprehension and WJ-III Writing Fluency, which we refer to as standardized tests below) between the two sites. The scores on the standardized tests were entered in the second block, and the third block consisted of the self-efficacy ratings and teacher judgments. The results of the third block indicate whether the self-efficacy ratings and teacher judgments were statistically significant predictors of text-based writing skills over and above the contributions of the scores on the standardized tests. The results of the analyses are shown in Tables 5, 6, 7, 8, 9 and 10. Total R², or the total amount of variance accounted for, ranged from 9% (percentage of academic words in the summary) to 18% (percentage of academic words in the essay and summary quality).
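The block-by-block logic can be illustrated with the standard F test for a change in R² between nested regression models. The sketch below is an illustration under stated assumptions rather than the authors’ procedure: the function name `f_change`, the R² values, and the predictor counts are hypothetical, and the example assumes that the third block adds two predictors to a model that already contains college and the two standardized test scores.

```python
def f_change(r2_reduced, r2_full, n, k_reduced, k_full):
    """F statistic for the increase in R-squared when predictors are added.

    n is the number of cases; k_reduced and k_full are the numbers of
    predictors in the smaller and larger models.
    Returns (F, numerator df, denominator df).
    """
    df_num = k_full - k_reduced
    df_den = n - k_full - 1
    if df_num <= 0 or df_den <= 0:
        raise ValueError("full model must add predictors and leave residual df")
    if not 0 <= r2_reduced <= r2_full < 1:
        raise ValueError("expected 0 <= r2_reduced <= r2_full < 1")
    # Variance gained per added predictor, relative to the variance left
    # unexplained per residual degree of freedom in the full model.
    f_stat = ((r2_full - r2_reduced) / df_num) / ((1 - r2_full) / df_den)
    return f_stat, df_num, df_den


# Hypothetical values for illustration only (not taken from Tables 5-10):
# the reduced model has 3 predictors (college plus two test scores), the
# full model has 5 (adding self-efficacy and teacher judgments), n = 162.
f_stat, df_num, df_den = f_change(0.10, 0.15, n=162, k_reduced=3, k_full=5)
```

A significant F for the third block would indicate that the added predictors explain variance beyond what the earlier blocks already account for, which is the question the hierarchical design is meant to answer.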

Proportion of functional persuasive elements in the essay

As seen in Table 5, in the full model for the proportion of functional elements in the essay, Model 3, the change in R² was statistically significant (F = 4.580, p = .012), which can be explained by the contributions of the WJ-III Writing Fluency scores and the self-efficacy ratings. The change in R² in Model 2 (scores on standardized tests only) was also significant (F = 7.106, p = .001), which can be attributed to the contribution of the scores on the standardized writing test.

Essay quality

The change in R² in the full model for essay quality was not statistically significant, as seen in Table 6, although, again, the WJ-III Writing Fluency scores made a significant contribution. However, the change in R² in Model 2 (scores on the standardized tests only) was significant (F = 4.960, p = .008), which was a result of the contribution of the standardized writing scores.

Percentage of academic words in the essay

Neither Model 2 nor Model 3 was statistically significant in explaining the percentage of academic words used in the essay, as shown in Table 7, suggesting that performance could not be predicted by general reading or writing ability, self-efficacy, or teacher judgments.

Proportion of main ideas from the source text in the summary

As seen in Table 8, the change in R² for the proportion of main ideas in the summary was statistically significant in the full model (F = 7.659, p = .001). This result was explained by the significant contributions of the Nelson-Denny Comprehension scores and the teacher judgments. Model 2 was also significant (F = 5.190, p = .007) because of a significant contribution of the scores on the standardized reading tests.

Summary quality

The change in R² was statistically significant in the full model for summary quality (F = 8.725, p < .001) as well as Model 2 (F = 5.178, p = .007). In the full model, as seen in Table 9, only the teacher judgments made a significant contribution to summary quality, and in Model 2, only the scores on the standardized reading tests made a significant contribution.

Percentage of academic words in the summary

As shown in Table 10, the full model of the percentage of academic words in the summary was statistically significant (F = 3.901, p = .022) because of the significant contribution of teacher judgments.

Discussion

Description of skills

The present study set out to contribute information about the writing of a population often overlooked in the research literature: low-skilled adults who have completed secondary education and are in higher education. The participants tested at the lower end of the average range for end-of-year 12th graders on the general reading and writing measures. The scores can be compared with those found in previous research on developmental education students (MacArthur et al., 2016; Perin et al., 2003; Perin et al., 2013). In the Perin et al. (2015) study, top level developmental education students obtained a mean raw score of 30.95 (SD = 15.22), or 41% correct, on the Nelson-Denny Comprehension subtest, which is similar to the score of 29.32 (SD = 12.29), or 38% correct, found in the current sample of intermediate and top level developmental students. MacArthur et al. (2015) reported a mean raw score of 19.9 (SD = 4.5), or 50% correct, for upper level developmental students on WJ-III Writing Fluency, which is somewhat similar to the mean raw score of 22.50 (SD = 5.25), or 56% correct, in the present sample.

Performance on the text-based writing tasks provides insight into participants’ readiness for college-level literacy demands because they required both reading comprehension and writing skill and were typical of classroom assignments. When summarizing a newspaper article, the participants included 24% of the main ideas from the source text. Although it is not expected that all of the main ideas would necessarily be included in a summary written even by the most proficient writer, 24% of the main ideas is low if the summary is to capture the gist of the source text. Further, although norms are not available for this task, performance for this sample fell below that of two other developmental education samples, 28% in Perin et al. (2003) and 42% in Perin et al. (2013). The quality of the written summaries, measured by an analytic rubric that focused on four components of summarization, also tended to be somewhat low, with a mean score of 8.09 (SD = 2.64) on a 16-point scale.

Participants also demonstrated weakness in text-based persuasive essay writing, with a mean quality score of 2.58 (SD = .80) on a 7-point holistic scale. This is similar to the scores of 2.44-2.96 reported by MacArthur et al. (2015) on experiential (i.e., not text based) persuasive writing of top-level developmental students. Almost one half of the content written in the persuasive essays in the current study, measured in terms of functional persuasive elements, was not pertinent to the development of a persuasive argument. Comparable data on the use of persuasive elements are not available in the literature, although Ferretti et al. (2009; Table 2) reported that 50.8% of learning disabled and 25% of typically developing sixth graders included non-functional elements in their persuasive essays. Further, although there are no prior studies of the use of academic words in the writing of adults (or adolescents), a study of children found that one percent of the words used in the writing of typically-developing fifth graders were academic words (Olinghouse & Wilson, 2013). In this light, the three percent seen in the current postsecondary sample seems low.

Relationships between measures

Scores on the two text-based writing tasks had a statistically significant but relatively weak relationship to each other (r = .16, p < .05 for summary and essay quality), suggesting that the two tasks called for different skills. This possibility is supported by the different relationships of the scores on the standardized reading and writing tests to the two tasks. The reading scores had a higher correlation with summary quality (r = .31, p < .01) than with essay quality (r = .16, p < .05), and the writing scores had a higher correlation with essay quality (r = .28, p < .01) than with summary quality (r = .14, p < .05). Although both tasks required both reading and writing, it appears that reading skills were more important for the written summarization task and writing skills were more important for the text-based persuasive essay.

Another notable finding was that, although the scores on the standardized reading and writing tests were reliably related to performance on the text-based writing measures, even the highest correlations were moderate, suggesting that they were tapping different skill sets. Of the 45 correlations we ran between the scores on reading and writing skills, only five exceeded r = .40: essay quality and essay word count (r = .51, p < .01), essay quality and the percentage of academic words in the essay (r = .57, p < .01), proportion of main ideas in the summary and summary quality (r = .61, p < .01), proportion of main ideas in the summary and summary word count (r = .47, p < .01), and summary quality and summary word count (r = .50, p < .01). Of these five relatively strong correlations, three concerned word count. Although the direction of the relationship between each pair of variables is unknown, a hypothesis can be proposed that working with students to lengthen their writing samples may help them improve their writing.

The self-efficacy ratings were significantly correlated with teacher judgments, but the correlation was only moderate (r = .30, p < .01), suggesting that for individual students, there were discrepancies between students’ and teachers’ level of confidence in the student’s proficiency.

Self-efficacy ratings correlated significantly with only two of the eight text-based writing variables, whereas teacher judgments correlated significantly with six of these variables. Therefore, the current data suggest that the teachers may be better than their students at predicting the students’ reading and writing skills. Although previous research has found self-efficacy to be a significant predictor of literacy skills (MacArthur et al., 2016; Pajares & Valiante, 2006; Proctor, Daley, Louick, Leidera, & Gardner, 2014), the current study has the added advantage of being able to compare self-efficacy ratings with teacher judgments for the same sample.

Contribution of general reading and writing, self-efficacy ratings and teacher judgments to variance in text-based writing

The results of hierarchical regression analyses indicated the importance of the general writing scores in predicting the proportion of functional elements in the persuasive essay and the quality of the essay, while the general reading scores were important in predicting the proportion of main ideas in the summary and the quality of the summary. Although directionality is not established in the analysis, these findings suggest a hypothesis that improvement in text-based summarization may require particular attention to reading comprehension skills, while improvement in text-based persuasive essay writing may depend more on developing general writing skills.

Self-efficacy was only important in predicting the proportion of functional elements in the essay, and teacher judgments were only important in predicting the percentage of academic words in the summary. Thus, self-efficacy and teacher judgments had only a small explanatory role in text-based writing once the specific college attended and the standardized test scores were taken into account. Previous research suggests that self-efficacy is a reliable predictor of literacy performance (e.g., MacArthur et al., 2016; Martinez, Kock, & Cass, 2011) and that teacher judgments correlate with literacy skills, although relationships are stronger at higher skill levels (Begeny et al., 2011; Feinberg & Shapiro, 2009). Perhaps the lack of high achievers in the current sample, in conjunction with the ceiling effect on self-efficacy (with many scores at the highest part of the scale), at least partly explains the relatively small amount of variance of self-efficacy and teacher judgments, compared with standardized test scores, in accounting for the text-based writing scores.

Limitations and research directions

We see two limitations in this study. First, interscorer agreement on some of the measures was low despite extensive training of raters, which we attribute to the low quality and high variability of the writing samples analyzed. Additional research is needed to determine whether more refined scoring techniques can be developed for this population to ensure higher reliability. Second, interpretation of the current data is limited by the lack of prior literature that could be used for comparison. Future research is needed not only on text-based writing itself, but also on this particular skill among students with low writing skills.

Implications

The present findings help illuminate the challenges of supporting struggling writers at the postsecondary level and suggest some possible pathways to explore in order to improve literacy outcomes in this population. As discussed in the introduction, postsecondary writing places multiple demands on the student’s cognitive and self-regulatory skills. However, it appears from the r = .16 correlation between the written summarization and the persuasive essay quality scores that these tasks are very different and that the writers themselves have uneven writing skills. In examining the low correlations between some of the tasks and the low levels of predictiveness, it seems that there are different and unique ways to be a poor writer.

We would hypothesize that the low interrater reliability on the scoring of the writing could be related to the complexity of poor writing, making it difficult to evaluate an evidently wide range of approaches to writing among this population. While there clearly needs to be more research with this population, our data suggest there are numerous “moving parts” when it comes to understanding what makes poor writers poor writers.

However, our research can begin to suggest some of the ways poor postsecondary writers can be supported. First, it is clear that for text-based writing (a common postsecondary task), reading comprehension needs to be supported. Second, the relationship between length of writing and the quality of the writing sample warrants further exploration in order to determine whether supporting writing stamina leads to better writing or if better writing skills themselves serve to increase stamina. Additionally, the complexity of the pattern of skill displayed by the low-achieving writers in the current study constitutes a challenge to educators to develop customized interventions that take into account both the individual writer and the writing context.

Conclusions

The study revealed low levels of college-readiness on an important postsecondary literacy skill for a sample of students, many of whom were about to exit developmental (remedial) coursework and proceed to college-level courses. Further, the hierarchical regressions suggest that improvement in general reading skills may promote better text-based summarization while improvement in general writing skills may be key to better persuasive essay-writing skills.

The text-based writing measures, as examples of authentic college level assignments, may have good potential for adding to currently-used measures of college readiness such as college placement tests. Problems have been identified in the use of single measures for placing students into developmental education (Hughes & Scott-Clayton, 2011), and it is possible that the use of multiple measures might lead to more accurate course placement (Scott-Clayton, 2012). Finally, given the lack of prior data against which to compare current findings, this study may be best seen as an exploration of the writing of a large, high-need population.

References

Armstrong, S. L., Stahl, N. A., & Kantner, M. J. (2015). What constitutes ‘college-ready’ for reading? An investigation of academic text readiness at one community college (Technical Report Number 1). DeKalb, IL: Center for the Interdisciplinary Study of Literacy and Language, Northern Illinois University. Retrieved from http://www.niu.edu/cisll/_pdf/reports/TechnicalReport1.pdf.

Bailey, T. R., Jeong, D.-W., & Cho, S.-W. (2010). Referral, enrollment, and completion in developmental education sequences in community colleges. Economics of Education Review, 29(2), 255–270. doi:10.1016/j.econedurev.2009.09.002.

Begeny, J. C., Krouse, H. E., Brown, K. G., & Mann, C. M. (2011). Teacher judgments of students’ reading abilities across a continuum of rating methods and achievement measures. School Psychology Review, 40(1), 23–38.

Bohn-Gettler, C. M., & Kendeou, P. (2014). The interplay of reader goals, working memory, and text structure during reading. Contemporary Educational Psychology, 39(3), 206–219. doi:10.1016/j.cedpsych.2014.05.003.

Boylan, H. R. (2002). What works: Research-based best practices in developmental education. Boone, NC: Continuous Quality Improvement Network and the National Center for Developmental Education, Appalachian State University.

Bråten, I., Strømsø, H. I., & Britt, M. A. (2009). Trust matters: Examining the role of source evaluation in students’ construction of meaning within and across multiple texts. Reading Research Quarterly, 44(1), 6–28.

Bridgeman, B., & Carlson, S. B. (1984). Survey of academic writing tasks. Written Communication, 1(2), 247–280. doi:10.1177/0741088384001002004.

Brown, A. L., & Day, J. D. (1983). Macrorules for summarizing texts: The development of expertise. Journal of Verbal Learning and Verbal Behavior, 22, 1–14.

Brown, J. I., Fishco, V. V., & Hanna, G. S. (1993). The Nelson-Denny reading test, forms G and H. Itasca, IL: Riverside/Houghton-Mifflin.

Bruning, R., Dempsey, M. S., Kauffman, D. F., McKim, C., & Zumbrunn, S. (2013). Examining dimensions of self-efficacy for writing. Journal of Educational Psychology, 105(1), 25–38. doi:10.1037/a0029692.

Cantrell, S. C., Correll, P., Clouse, J., Creech, K., Bridges, S., & Owens, D. (2013). Patterns of self-efficacy among college students in developmental reading. Journal of College Reading and Learning, 44(1), 8–34. doi:10.1080/10790195.2013.10850370.

Carson, J. G., Chase, N. D., Gibson, S. U., & Hargrove, M. F. (1992). Literacy demands of the undergraduate curriculum. Reading Research and Instruction, 31(4), 25–50. doi:10.1080/19388079209558094.

Caverly, D. C., Nicholson, S. A., & Radcliffe, R. (2004). The effectiveness of strategic reading instruction for college developmental readers. Journal of College Reading and Learning, 35(1), 25–49.

Cobb, T. (n.d.). The compleat lexical tutor website. Retrieved November 13, 2015, from http://www.lextutor.ca.

Conley, D. T., & French, E. M. (2014). Student ownership of learning as a key component of college readiness. American Behavioral Scientist, 58(8), 1018–1034. doi:10.1177/0002764213515232.

Coxhead, A. (2000). A new academic word list. TESOL Quarterly, 34(2), 213–238.

De La Paz, S., Ferretti, R., Wissinger, D., Yee, L., & MacArthur, C. A. (2012). Adolescents’ disciplinary use of evidence, argumentative strategies, and organizational structure in writing about historical controversies. Written Communication, 29(4), 412–454. doi:10.1177/0741088312461591.

Doolan, S. M. (2014). Comparing language use in the writing of developmental generation 1.5, L1, and L2 tertiary students. Written Communication, 31(2), 215–247. doi:10.1177/0741088314526352.

Fallahi, C. R. (2012). Improving the writing skills of college students. In E. L. Grigorenko, E. Mambrino, & D. D. Preiss (Eds.), Writing: A mosaic of new perspectives (pp. 209–219). New York, NY: Psychology Press.

Feinberg, A. B., & Shapiro, E. S. (2009). Teacher accuracy: An examination of teacher-based judgments of students’ reading with differing achievement levels. Journal of Educational Research, 102(6), 453–462.

Ferretti, R. P., Lewis, W. E., & Andrews-Weckerly, S. (2009). Do goals affect the structure of students’ argumentative writing strategies? Journal of Educational Psychology, 101(3), 577–589. doi:10.1037/a0014702.

Ferretti, R. P., MacArthur, C. A., & Dowdy, N. S. (2000). The effects of an elaborated goal on the persuasive writing of students with learning disabilities and their normally achieving peers. Journal of Educational Psychology, 92(4), 694–702. doi:10.1037/0022-0663.92.4.694.

Fitzgerald, J., & Shanahan, T. (2000). Reading and writing relations and their development. Educational Psychologist, 35(1), 39–50. doi:10.1207/S15326985EP3501_5.

Gil, L., Bråten, I., Vidal-Abarca, E., & Strømsø, H. I. (2010a). Summary versus argument tasks when working with multiple documents: Which is better for whom? Contemporary Educational Psychology, 35(3), 157–173. doi:10.1016/j.cedpsych.2009.11.002.

Gil, L., Bråten, I., Vidal-Abarca, E., & Strømsø, H. I. (2010b). Understanding and integrating multiple science texts: Summary tasks are sometimes better than argument tasks. Reading Psychology, 31(1), 30–68. doi:10.1080/02702710902733600.

Goldman, S. R., & Bisanz, G. L. (2002). Toward a functional analysis of scientific genres: Implications for understanding and learning processes. In J. Otero, J. A. Leon, & A. C. Graesser (Eds.), The psychology of science text comprehension (pp. 19–50). Mahwah, NJ: Erlbaum.

Graham, S. (1999). Handwriting and spelling instruction for students with learning disabilities: A review. Learning Disability Quarterly, 22(2), 78–98. doi:10.2307/1511268.

Hale, G., Taylor, C., Bridgeman, B., Carson, J., Kroll, B., & Kantor, R. (1996). A study of writing tasks assigned in academic degree programs (RR-95-44, TOEFL-RR-54). Princeton, NJ: Educational Testing Service.

Hayes, J. R. (1996). A new framework for understanding cognition and affect in writing. In C. M. Levy & S. Ransdell (Eds.), The science of writing: Theories, methods, individual differences, and applications (pp. 1–27). Mahwah, NJ: Erlbaum.

Hoge, R. D., & Coladarci, T. (1989). Teacher-based judgments of academic achievement: A review of literature. Review of Educational Research, 59(3), 297–313. doi:10.3102/00346543059003297.

Holschuh, J. P., & Aultman, L. P. (2009). Comprehension development. In R. F. Flippo & D. C. Caverly (Eds.), Handbook of college reading and study strategy research (2nd ed., pp. 121–144). New York, NY: Routledge.

Hughes, K. L., & Scott-Clayton, J. (2011). Assessing developmental assessment in community colleges. Community College Review, 39(4), 327–351. doi:10.1177/0091552111426898.

Jackson, J. M. (2009). Reading/writing connection. In R. F. Flippo & D. C. Caverly (Eds.), Handbook of college reading and study strategy research (2nd ed., pp. 145–173). New York: Routledge.

Johns, A. M. (1985). Summary protocols of ‘underprepared’ and ‘adept’ university students: Replications and distortions of the original. Language Learning, 35(4), 495–517. doi:10.1111/j.1467-1770.1985.tb00358.x.

Kellogg, R. T., & Whiteford, A. P. (2009). Training advanced writing skills: The case for deliberate practice. Educational Psychologist, 44(4), 250–266. doi:10.1080/00461520903213600.

Kintsch, W., & van Dijk, T. A. (1978). Toward a model of text comprehension and production. Psychological Review, 85(5), 363–394. doi:10.1037/0033-295X.85.5.363.

Kitsantas, A., & Zimmerman, B. J. (2009). College students’ homework and academic achievement: The mediating role of self-regulatory beliefs. Metacognition and Learning, 4(2), 97–110.

Lesaux, N. K., Kieffer, M. J., Kelley, J. G., & Harris, J. R. (2014). Effects of academic vocabulary instruction for linguistically diverse adolescents: Evidence from a randomized field trial. American Educational Research Journal, 51(6), 1159–1194. doi:10.3102/0002831214532165.

MacArthur, C. A., & Lembo, L. (2009). Strategy instruction in writing for adult literacy learners. Reading and Writing: An Interdisciplinary Journal, 22(9), 1021–1039.

MacArthur, C. A., & Philippakos, Z. (2010). Instruction in a strategy for compare-contrast writing. Exceptional Children, 76(4), 438–456.

MacArthur, C. A., Philippakos, Z. A., & Graham, S. (2016). A multicomponent measure of writing motivation with basic college writers. Learning Disability Quarterly, 39(1), 31–43. doi:10.1177/0731948715583115.

MacArthur, C. A., Philippakos, Z. A., & Ianetta, M. (2015). Self-regulated strategy instruction in college developmental writing. Journal of Educational Psychology, 107(3), 855–867. doi:10.1037/edu0000011.

Macaruso, P., & Shankweiler, D. (2010). Expanding the simple view of reading in accounting for reading skills in community college students. Reading Psychology, 31(5), 454–471.

Magliano, J. P., Trabasso, T., & Graesser, A. C. (1999). Strategic processing during comprehension. Journal of Educational Psychology, 91(4), 615–629.

Martin, S. D., & Shapiro, E. S. (2011). Examining the accuracy of teachers’ judgments of DIBELS performance. Psychology in the Schools, 48(4), 343–356. doi:10.1002/pits.20558.

Martinez, C. T., Kock, N., & Cass, J. (2011). Pain and pleasure in short essay writing: Factors predicting university students’ writing anxiety and writing self-efficacy. Journal of Adolescent & Adult Literacy, 54(5), 351–360. doi:10.1598/JAAL.54.5.5.

Mateos, M., Martin, E., Villalon, R., & Luna, M. (2008). Reading and writing to learn in secondary education: Online processing activity and written products in summarizing and synthesizing tasks. Reading and Writing: An Interdisciplinary Journal, 21, 675–697.

McAlexander, P. J. (2003). From personal to text-based writing: The use of readings in developmental composition. Research and Teaching in Developmental Education, 19(2), 5–16.

McNamara, D. S., Crossley, S. A., & McCarthy, P. M. (2010). Linguistic features of writing quality. Written Communication, 27(1), 57–86. doi:10.1177/0741088309351547.

National Governors’ Association and Council of Chief State School Officers. (2010). Common core state standards: English language arts and literacy in history/social studies, science, and technical subjects. Washington, DC: Author. Retrieved from http://www.corestandards.org/.

Neisser, U. (1976). Cognition and reality: Principles and implications of cognitive psychology. San Francisco, CA: W.H. Freeman and Co.

Nelson, N. W., & Van Meter, A. M. (2007). Measuring written language ability in narrative samples. Reading and Writing Quarterly, 23(3), 287–309. doi:10.1080/10573560701277807.

Olinghouse, N. G. (2008). Student- and instruction-level predictors of narrative writing in third-grade students. Reading and Writing: An Interdisciplinary Journal, 21(1), 3–26. doi:10.1007/s11145-007-9062-1.

Olinghouse, N. G., & Wilson, J. (2013). The relationship between vocabulary and writing quality in three genres. Reading and Writing: An Interdisciplinary Journal, 26(1), 45–65. doi:10.1007/s11145-012-9392-5.

O’Neill, P., Adler-Kassner, L., Fleischer, C., & Hall, A. (2012). Creating the framework for success in postsecondary writing. College English, 74(6), 520–533.

Pajares, F., & Valiante, G. (2006). Self-efficacy beliefs and motivation in writing development. In C. A. MacArthur, S. Graham, & J. Fitzgerald (Eds.), Handbook of writing research (pp. 158–170). New York: Guilford Press.

Paulson, E. J. (2014). Analogical processes and college developmental reading. Journal of Developmental Education, 37(3), 2–13.

Perin, D., Keselman, A., & Monopoli, M. (2003). The academic writing of community college remedial students: Text and learner variables. Higher Education, 45(1), 19–42.

Perin, D., Bork, R. H., Peverly, S. T., & Mason, L. H. (2013). A contextualized curricular supplement for developmental reading and writing. Journal of College Reading and Learning, 43(2), 8–38.

Perin, D., Raufman, J. R., & Kalamkarian, H. S. (2015). Developmental reading and English assessment in a researcher-practitioner partnership (CCRC Working Paper No. 85). New York, NY: Community College Research Center, Teachers College, Columbia University. Available from http://ccrc.tc.columbia.edu/publications/developmental-readingenglish-assessment-researcherpractitioner-partnership.html.

Proctor, C. P., Daley, S., Louick, R., Leidera, C. M., & Gardner, G. L. (2014). How motivation and engagement predict reading comprehension among native English-speaking and English-learning middle school students with disabilities in a remedial reading curriculum. Learning and Individual Differences, 36, 76–83. doi:10.1016/j.lindif.2014.10.014.

Puranik, C. S., Lombardino, L. J., & Altmann, L. J. P. (2008). Assessing the microstructure of written language using a retelling paradigm. American Journal of Speech-Language Pathology, 17(2), 107–120.

Ritchey, K. D., Silverman, R. D., Schatschneider, C., & Speece, D. L. (2015). Prediction and stability of reading problems in middle childhood. Journal of Learning Disabilities, 48(3), 298. doi:10.1177/0022219413498116.

Schrank, F. A., McGrew, K. S., & Woodcock, R. W. (2001). Woodcock–Johnson III assessment service bulletin number 2. Retrieved from Itasca, IL: WJ III technical abstract.

Scott-Clayton, J. (2012). Do high-stakes placement exams predict college success? CCRC Working Paper No. 41. Retrieved from New York, NY.

Selinger, B. M. (1995). Summarizing text: Developmental students demonstrate a successful method. Journal of Developmental Education, 19(2), 14–20.

Shanahan, T., & Shanahan, C. (2008). Teaching disciplinary literacy to adolescents: Rethinking content-area literacy. Harvard Educational Review, 78(1), 40–59.

Smith, D. K. (1998). Review of the Nelson-Denny reading test, forms G and H. In J. C. Impara & B. S. Plake (Eds.), Thirteenth mental measurements yearbook. Buros Institute of Mental Measurement. Retrieved from the Buros Institute’s Mental Measurements Yearbook online database.

Sparks, D., & Malkus, N. (2013). Statistics in brief: First-year undergraduate remedial coursetaking: 1999–2000, 2003–04, 2007–08 (NCES 2013-013). Washington, DC: National Center for Education Statistics. Retrieved from http://nces.ed.gov/pubs2013/2013013.pdf.

Speece, D. L., Ritchey, K. D., Silverman, R., Schatschneider, C., Walker, C. Y., & Andrusik, K. N. (2010). Identifying children in middle childhood who are at risk for reading problems. School Psychology Review, 39(2), 258–276.

Südkamp, A., Kaiser, J., & Möller, J. (2012). Accuracy of teachers’ judgments of students’ academic achievement: A meta-analysis. Journal of Educational Psychology, 104(3), 743–762. doi:10.1037/a0027627.

Troia, G. A., Harbaugh, A. G., Shankland, R. K., Wolbers, K. A., & Lawrence, A. M. (2013). Relationships between writing motivation, writing activity, and writing performance: Effects of grade, sex, and ability. Reading and Writing: An Interdisciplinary Journal, 26(1), 17–44. doi:10.1007/s11145-012-9379-2.

Uccelli, P., Dobbs, C. L., & Scott, J. (2013). Mastering academic language: Organization and stance in the persuasive writing of high school students. Written Communication, 30(1), 36–62. doi:10.1177/0741088312469013.

Wang, D. (2009). Factors affecting the comprehension of global and local main idea. Journal of College Reading and Learning, 39(2), 34–52.

Westby, C., Culatta, B., Lawrence, B., & Hall-Kenyon, K. (2010). Summarizing expository texts. Topics in Language Disorders, 30(4), 275–287. doi:10.1097/TLD.0b013e3181ff5a88.

Wineburg, S. (1991). Historical problem solving: A study of the cognitive processes used in the evaluation of documentary and pictorial evidence. Journal of Educational Psychology, 83(1), 73–87. doi:10.1037/0022-0663.83.1.73.

Wolfe, C. R. (2011). Argumentation across the curriculum. Written Communication, 28(1), 193–219. doi:10.1177/0741088311399236.

Woodcock, R. W., McGrew, K. S., & Mather, N. (2001). Woodcock–Johnson III tests of achievement and tests of cognitive abilities. Itasca, IL: Riverside Publishing.

Yancey, K. B. (2009). The literacy demands of entering the university. In L. Christenbury, R. Bomer, & P. Smagorinsky (Eds.), Handbook of adolescent literacy research (pp. 256–270). New York, NY: Guilford Press.

Yore, L. D., Hand, B., & Prain, V. (2002). Scientists as writers. Science Education, 86(5), 672–692.

Acknowledgements

Funding for the research reported here was provided by the Bill & Melinda Gates Foundation to the Community College Research Center, Teachers College, Columbia University under a project entitled “Analysis of Statewide Developmental Education Reform: Learning Assessment Study.”

Appendix

Self-efficacy items

1. I can read short newspaper articles and understand them well.
2. I can read the articles carefully and form my own opinion about the issues discussed.
3. I can figure out how information in a newspaper article might be useful.
4. I can write a good summary of a short article from a newspaper.
5. I can write a summary of a newspaper article that includes only the most important information.
6. I can write an essay that expresses my opinion clearly.
7. In my essay I can persuade someone to agree with me on my opinion.
8. If I write a summary or essay based on something I have read, I can express the information from the reading accurately.
9. I can write a summary or essay in my own words, without copying directly from a reading passage.
10. I can write a summary or essay using correct grammar and spelling.
11. I can write a summary or essay that is the right length – not too long, not too short.
12. I can write a summary or essay using appropriate academic vocabulary.
13. I can revise my summary or essay to make sure what I’ve written is accurate and clear.
14. I will be able to understand the reading in the courses I take after I pass the English or reading class I’m in now.
15. I will be able to write well in the courses I take after I pass the English or reading class I’m in now.
16. When reading or writing assignments are hard, I keep going and finish the assignment.

Cite this article

Perin, D., Lauterbach, M., Raufman, J. et al. Text-based writing of low-skilled postsecondary students: relation to comprehension, self-efficacy and teacher judgments. Read Writ 30, 887–915 (2017). https://doi.org/10.1007/s11145-016-9706-0

DOI: https://doi.org/10.1007/s11145-016-9706-0