Abstract

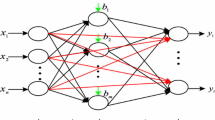

This study proposes supervised learning probabilistic neural networks (SLPNN), which have three kinds of network parameters: variable weights, representing the importance of the input variables; the reciprocal of the kernel radius, representing the effective range of the data; and data weights, representing the reliability of the data. All three kinds of parameters are adjusted through training. We tested SLPNN on three artificial functions and 15 benchmark problems and compared it with the multi-layered perceptron (MLP) and the probabilistic neural network (PNN). The results show that SLPNN is slightly more accurate than MLP and much more accurate than PNN. In addition, the data weights can identify noisy data in a data set, and the variable weights can measure the importance of the input variables; among the three kinds of network parameters, the variable weights contribute the most to model accuracy.
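To make the roles of the three parameter kinds concrete, the following is a minimal Python sketch of a kernel-weighted prediction. The abstract does not state the SLPNN equations, so the functional form used here (a Gaussian kernel over a weighted squared distance, followed by a normalized weighted average of the stored outputs) is an assumption for illustration only, and the function name `weighted_kernel_predict` is hypothetical. Training of the parameters is not shown.

```python
import numpy as np

def weighted_kernel_predict(x, samples, targets, var_w, inv_radius, data_w):
    """Illustrative kernel-weighted output for one query point.

    ASSUMED form, not taken from the paper:
        k_p = h_p * exp(-V_p^2 * sum_i (W_i * (x_i - x_i^p))^2)
        y_q = sum_p k_p * t_pq / sum_p k_p

    x          : (m,)   query input
    samples    : (n, m) stored sample inputs x_i^p
    targets    : (n, M) known outputs t_pq (e.g. one-hot class labels)
    var_w      : (m,)   variable weights W_i
    inv_radius : (n,)   reciprocals of the kernel radius V_p
    data_w     : (n,)   data weights h_p
    """
    diff = (x - samples) * var_w              # scale each input variable by its weight
    dist2 = np.sum(diff ** 2, axis=1)         # weighted squared distance to every sample
    kernel = data_w * np.exp(-(inv_radius ** 2) * dist2)
    total = max(kernel.sum(), 1e-300)         # guard against division by zero
    return kernel @ targets / total

# Two stored samples, one per class, with one-hot targets.
samples = np.array([[0.0, 0.0], [1.0, 0.0]])
targets = np.array([[1.0, 0.0], [0.0, 1.0]])
y = weighted_kernel_predict(
    np.array([0.0, 0.0]), samples, targets,
    var_w=np.ones(2), inv_radius=np.ones(2), data_w=np.ones(2),
)
print(y)  # the first class receives the larger score
```

Under this assumed form, a variable weight of zero removes an input variable from the distance, a larger reciprocal radius narrows the region a sample influences, and a data weight of zero silences a sample entirely, which is consistent with the abstract's description of data weights flagging noisy data.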

Abbreviations

- \(f_A(X)\): The probabilistic density function of Category A at point X
- \(f_p\): The weight of the p-th sample in the sample base
- \(h_p\): The data weight of the p-th sample in the sample base
- \(M\): The number of output variables
- \(m\): The number of input variables
- \(n\): The number of samples in the sample base
- \(n_A\): The number of training vectors of Category A
- \(t_{pq}\): The known value of the q-th output variable of the p-th sample in the sample base
- \(V_p\): The reciprocal of kernel radius of the p-th sample in the sample base
- \(W_i\): The i-th input variable weight
- \(X\): The testing data vectors
- \(X_{Ap}\): The p-th training data of Category A
- \(x_i\): The value of i-th input variable in the testing sample
- \(x_i^p\): The i-th input variable of the p-th sample in the sample base
- \(y\): The inference value of the output variable of the training data
- \(\sigma\): The smooth parameter
- \(\sigma_p\): The smooth parameter of the p-th sample in the sample base
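Several of the symbols above (the density for Category A, the number of training vectors of Category A, the smooth parameter, and the number of input variables) belong to the standard Parzen-window estimate on which the baseline PNN rests. The sketch below shows that standard estimate with a single shared smooth parameter and equal class priors; it is not the SLPNN itself, and the function names `parzen_density` and `pnn_classify` are hypothetical.

```python
import numpy as np

def parzen_density(x, class_samples, sigma):
    """Gaussian Parzen-window estimate of f_A(X) for one category.

    f_A(X) = 1 / ((2*pi)^(m/2) * sigma^m * n_A)
             * sum_p exp(-||X - X_Ap||^2 / (2 * sigma^2))
    """
    class_samples = np.atleast_2d(class_samples)
    n_a, m = class_samples.shape                      # n_A training vectors, m inputs
    dist2 = np.sum((class_samples - x) ** 2, axis=1)  # squared distance to each X_Ap
    norm = (2.0 * np.pi) ** (m / 2.0) * sigma ** m * n_a
    return np.exp(-dist2 / (2.0 * sigma ** 2)).sum() / norm

def pnn_classify(x, samples_by_class, sigma):
    """Pick the category with the largest estimated density (equal priors assumed)."""
    scores = {label: parzen_density(x, pts, sigma)
              for label, pts in samples_by_class.items()}
    return max(scores, key=scores.get)

# Toy data: two well-separated categories in two dimensions.
training = {
    "A": np.array([[0.0, 0.0], [0.0, 1.0]]),
    "B": np.array([[5.0, 5.0], [6.0, 5.0]]),
}
print(pnn_classify(np.array([0.2, 0.3]), training, sigma=1.0))
```

Here every sample shares one smooth parameter and every input variable counts equally; the per-sample and per-variable parameters in the list above are what distinguish the proposed network from this baseline.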

Cite this article

Yeh, IC., Lin, KC. Supervised Learning Probabilistic Neural Networks. Neural Process Lett 34, 193–208 (2011). https://doi.org/10.1007/s11063-011-9191-z

DOI: https://doi.org/10.1007/s11063-011-9191-z