Abstract

This paper reports results of a natural field experiment on the dictator game where subjects are unaware that they are participating in an experiment. Three other experiments explore, step by step, how laboratory behavior of students relates to field behavior of a general population. In all experiments, subjects display an equally high amount of pro-social behavior, whether they are students or not, participate in a laboratory or not, or are aware of their participating in an experiment or not. This paper shows that there are settings where laboratory behavior of students is predictive for field behavior of a general population.


1 Introduction

One of the most influential experiments in economics is the dictator game (Kahneman et al. 1986; Forsythe et al. 1994; Engel 2011). A ‘dictator’ is endowed with an amount of money and is matched with an anonymous recipient. The dictator has to determine how much money to give to the recipient. Behavior in this game is usually explained by altruism or a willingness to conform to social norms (the latter is also referred to as ‘manners’; Camerer and Thaler, 1995). Because such giving is at odds with pure self-interest, theorists have altered the standard model. Motivations such as altruism, fairness, inequity aversion, and reciprocity have been incorporated into new models (Footnote 1).

Most evidence regarding behavior in the dictator game has so far come from laboratory experiments (Footnote 2). Critics have argued that laboratory experiments on pro-social preferences produce biased outcomes, because of scrutiny or obtrusiveness by the experimenter (Footnote 3). Some studies have indeed shown that pro-social behavior decreases when subjects are unaware of the presence of an experimenter (List, 2006a; Benz and Meier, 2008; see Bandiera et al., 2005 for a non-experimental study on monitoring).

Little is known about whether experimenter scrutiny affects behavior in the dictator game (Footnote 4). In a study similar to this one, Franzen and Pointner (2012) look at the effects of scrutiny for student subjects. They conduct a dictator game in a lab with students and then send ‘misdirected’ envelopes with cash to the same participants. The authors find a positive correlation between dictator gifts and the likelihood that an envelope is returned. Winking and Mizer (2013) conduct a field experiment where they give money to random bystanders, who are unaware that they are taking part in an experiment. Almost none of the subjects choose to share the money they have received with another random bystander. In contrast, when subjects are informed up front that they are taking part in an experiment, sharing rates are much greater.

This paper reports results of a dictator game in a natural field experiment. A random sample of subjects in a Dutch city receives a transparent envelope with cash due to a supposed misdelivery. They are thus placed in the role of dictator, because they can decide to return part or all of the cash to the intended recipient. In this experiment, subjects are unaware of the experimenter. More experiments are conducted to identify possible differences between the natural field experiment and behavior in the laboratory. These experiments are conducted with student subjects in a laboratory, and with subjects from the same Dutch city in a laboratory or at their home. Roughly half of the subjects in the natural field experiment return the envelope. The other experiments show similar results. This paper shows that the predictive power of laboratory findings is supported in some settings.

2 From the laboratory to the field

2.1 General description of the experiments

In all experiments reported in this paper, each subject receives one transparent envelope with a ‘thank you’ card and two notes of €5. From the outside of the envelope the money is clearly visible, as well as the text written on the card. This text reads: ‘To you and all others, thank you very much for your voluntary services.—Tilburg University’. Each transparent envelope is stamped and addressed to a volunteer of Tilburg University. Subjects are told that the university wants to thank its volunteers in this way. The experimental task is whether or not to send the card to one of Tilburg University’s volunteers. The volunteers from this study are real people who have done real voluntary work (gathering data for a report by the university). All volunteers could keep any money sent by subjects.

All four experiments are carried out in Tilburg, the Netherlands, to address the external validity of altruism, in agreement with the principles of Harrison and List (2004) and List (2006b). Table 1 gives an overview.

StuLab is a conventional laboratory experiment and is conducted in a laboratory with students as subjects. In CitLab, representative citizens rather than students take part in the experiment in a laboratory. CitLab isolates differences in behavior between the student subject pool and the subjects in the natural field experiment. In CitHome, subjects participate in the experiment at home instead of in a laboratory. A comparison of CitLab and CitHome isolates the influence of the physical environment of the experiment (the laboratory versus someone’s home). In CitField, no instructions are provided, but a postmarked transparent envelope with a thank you card and two notes of €5 is slipped into a subject’s mailbox. The postmarked stamp makes it seem that the envelope has been misdelivered. Sending the card back to the volunteer comes naturally. Because subjects in CitField are unaware of the experimenter, comparing CitHome with CitField shows the effects of scrutiny on behavior.

2.2 Design of the StuLab, CitLab, and CitHome experiments

This section describes the information and conditions for subjects in the StuLab, CitLab, and CitHome experiments (for instructions, see Electronic supplementary material (ESM)).

First, the addressed recipient on the envelope has the property rights of the card in CitField. Property rights have an impact on pro-social behavior in dictator game giving. The party with the property rights gets the largest surplus (Fahr and Irlenbusch, 2000; Cherry et al., 2002; Oxoby and Spraggon, 2008). Hence, the property rights in the three other experiments also belong to the volunteers. Each card in those experiments is addressed to a volunteer. Subjects get no information on the type of voluntary work.

Second, because the subjects in CitField are unaware of the experimenter, they think that they are anonymous (in fact, they are not anonymous: the serial numbers on the bank notes allow me to track where returned letters come from). Therefore, subjects in all the other experiments have to be anonymous as well (in these, it is impossible to track where returned envelopes come from). From previous research on social preferences, we know that the degree of anonymity influences pro-social behavior. A higher degree of anonymity makes people more selfish (Hoffman et al., 1996; Cadsby et al., 2010; Barmettler et al., 2012). Anonymity is ensured by a double-blind procedure. Subjects are told that they will not have to give their name, that more participants will be taking part in the experiment, and that the experimenter can observe all returned envelopes, but that envelopes cannot be linked to subjects personally.

Third, in CitField, someone who wants to return the envelope has to make an effort. A subject must either physically go to the address of the volunteer, or go to a post office box so the mail company returns the envelope. To keep these costs equal in the other experiments, subjects in the StuLab, CitLab, and CitHome experiments have to mail or deliver the envelope themselves. Subjects cannot give the envelope to the experimenter.

Fourth, subjects in CitField do not receive a show-up fee. Therefore, none of the subjects in the other experiments receive a show-up fee.

Fifth, in the CitField experiment, subjects are made to believe that the envelope is obtained randomly due to a misdelivery. The text on the card reveals that a card has been sent to ‘all other’ volunteers as well. Believing that a card has been delivered randomly, and that other volunteers receive similar cards, may affect pro-social behavior (but the direction is unclear). In each of the StuLab, CitLab, and CitHome sessions, 40 subjects randomly take their instructions and ‘thank you’ card from a pile of 45 envelopes. They are told that some envelopes are left over after the last subject has taken an envelope, and that the experimenter sent out all these envelopes. This way, subjects have randomly received a card while knowing that similar cards have been sent to other volunteers, comparable to the CitField experiment.

Sixth, in the StuLab experiment, students know that the money used in the experiment comes from a university. Knowing who is funding the experiment may influence behavior. Therefore, the name of the university is explicitly mentioned on all the cards in all experiments.

Seventh, in CitField only, the same name and address of one volunteer is used on all transparent envelopes. This volunteer has a Dutch last name and an address close to the city center of Tilburg. The volunteer is male (but this is not revealed). The information that subjects have in CitField influences the design of the three other experiments threefold. First, volunteers taking part in these experiments also have to live close to the city center of Tilburg. Pro-social behavior may be influenced by the district of the recipient (Falk and Zehnder, 2007; Etang et al., 2011). Second, all volunteers must have a Dutch last name. Ethnicity of the receiver may influence decisions (Buchan et al., 2006; Charness et al., 2007). Third, all volunteers must be male (subjects remain unaware of the volunteer’s gender). Studies show that giving to males or females may differ (Eckel and Grossman 2001).

Eighth, 88 % of the citizens in Tilburg have the Dutch nationality (CBS 2010). Therefore, the majority of subjects in CitField is Dutch as well. Previous research has shown that people from different cultures behave differently in social preference experiments (Henrich et al. 2004; Herrmann et al. 2008). Only Dutch subjects are invited to participate in the other experiments. Subjects are considered Dutch if they can read the Dutch instructions.

Ninth, Tilburg has a 49.64 % share of males (CBS 2010). Thus, the division of subjects in the StuLab, CitLab, and CitHome experiments is gender balanced (Andreoni and Vesterlund 2001; Aguiar et al. 2009).

2.3 Procedure of StuLab, CitLab and CitHome

Students were recruited for StuLab at the campus square of Tilburg University, which is a gathering place for students of all disciplines (economics, law, the social sciences, humanities, and theology). From 10 a.m. to 5 p.m., six students per hour were asked to participate in a short experiment. No mention was made about earnings or show-up fee. In the lab, subjects were asked to randomly pick one opaque A4 sized envelope from a pile. Each envelope contained a set of instructions and a stamped transparent envelope addressed to a volunteer. After reading the instructions in private, subjects left with all materials they were given.

Subjects were recruited for CitLab in the downtown city area of Tilburg where the city hall is located. This is where the CitField experiment is conducted, and where all the volunteers live. Here, representative citizens of Tilburg can be recruited. Only people who were at the city hall to extend or renew their passports or ID cards were asked to take part in the experiment. By Dutch law, all citizens are required to carry one or the other. Therefore, there is no selection effect of citizens visiting the city hall. The city hall is closed at weekends, and opens daily from 10 a.m. to 6 p.m., but on Thursdays it closes at 8 p.m. From 10 a.m. to 8 p.m., four subjects per hour took part in the CitLab experiment. Subjects were asked if they were at city hall to extend their passport or identification card and if they wanted to participate in an experiment. Eligible subjects were then asked to randomly take an opaque A4 sized envelope from a pile, and were shown directions to a lab. The envelope contained instructions and a stamped transparent envelope addressed to one of the volunteers. Once the subjects had read the instructions, they were asked to leave the lab, and take along all that they had been given.

CitHome used exactly the same procedure as CitLab. However, rather than being directed to a lab, these subjects were asked to take the opaque A4 sized envelope with them and to read the instructions once they were at home. One wave of the StuLab, CitLab, and CitHome experiments was conducted in spring 2011. Another wave was conducted in winter 2012–2013.

2.4 Procedure of the CitField experiment

In CitField, the ‘misdirected letter technique’ is used (Howitt et al. 1977; Howitt and McCabe 1978; Kremer et al. 1986; Franzen and Pointner 2012). A postmarked transparent envelope with two notes of €5 was slipped into a subject’s mailbox. The name and address on the envelope were different from those of the subject. An envelope delivered this way suggests a misdelivery. The transparency of the envelope is important, because the money is clearly visible. If an opaque envelope had been used, subjects might have returned or kept it (possibly to throw it away) without knowing about the money. Either way, with an opaque envelope control is lost over whether subjects actively decide to return or keep money (rather, they would have decided over ‘just an envelope’). Note that the volunteer’s address ends with ‘… street 27’. As a randomization technique, each envelope was delivered at an address in the center of Tilburg that ends in ‘…street 27’. Only streets that are located near the city hall were used so that subjects would likely go to the same city hall as subjects recruited for CitLab and CitHome. Eighty streets from around the center were randomly drawn.
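The random draw of delivery addresses described above can be sketched in a few lines. This is only an illustration under stated assumptions: the paper does not list the candidate streets or say how the draw was implemented, so the street names, the function name `draw_delivery_addresses`, and the seed below are hypothetical.

```python
import random


def draw_delivery_addresses(candidate_streets, n_streets=80, house_number=27, seed=None):
    """Draw n_streets streets without replacement and attach the fixed house number."""
    rng = random.Random(seed)  # local generator so the draw is reproducible
    drawn = rng.sample(candidate_streets, n_streets)
    return [f"{street} {house_number}" for street in drawn]


# HYPOTHETICAL placeholder names; the real list of streets near the city hall
# is not given in the paper.
candidate_streets = [f"Street{i:03d}" for i in range(1, 201)]

addresses = draw_delivery_addresses(candidate_streets, seed=2011)
print(len(addresses), "addresses drawn, for example:", addresses[:3])
```

Sampling without replacement guarantees that no street receives more than one envelope, which matches one envelope per address ending in number 27.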

The postmark on the stamp makes subjects believe this is a misdelivery. This postmark was acquired by sending the envelope to the volunteer first, before slipping it into a subject’s mailbox. The envelope was misdelivered the same day the volunteer received it, because of the date shown on the postmark. Envelopes were delivered in the afternoon, in line with the mail company’s usual delivery time. CitField was conducted in spring 2011 and winter 2012–2013.

2.5 Errors in the delivery process

In all four experiments, an envelope that is not returned to a volunteer can be in one of two places: with the subject, or lost in the delivery process of the (monopolist) mail company. It is impossible to separate the two alternatives.

To study the noise in the delivery process, transparent envelopes with cash were mailed and it was checked whether they arrived. Of 150 envelopes, each with two notes of €5, 147 were delivered. In a pilot study, 58 envelopes, each with two notes, one of €10 and one of €5, were sent and all were delivered. The combined delivery rate of 98.56 % (205 of 208 envelopes) shows that there is some noise in the experimental data. This noise is independent of the experiment and does not confound the causal effects of interest.
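The 98.56 % figure is consistent with pooling the two delivery checks. A minimal sketch of that arithmetic follows, assuming (the text does not say so explicitly) that the main check and the pilot are simply added together. The variable names are illustrative.

```python
# Counts reported in the text.
main_sent, main_delivered = 150, 147    # envelopes with two EUR 5 notes
pilot_sent, pilot_delivered = 58, 58    # pilot envelopes with one EUR 10 and one EUR 5 note

# Assumption: the reported rate pools both checks.
total_sent = main_sent + pilot_sent
total_delivered = main_delivered + pilot_delivered
delivery_rate = total_delivered / total_sent

print(f"{total_delivered}/{total_sent} = {delivery_rate:.2%}")  # prints "205/208 = 98.56%"
```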

2.6 Self-selection

The subjects of the CitField experiment were randomly selected whereas the subjects of the other experiments are self-selected. This may cause different behavior across experiments. However, laboratory research and self-selection are inextricably linked. The purest test of lab-field generalizability should therefore use self-selection in the laboratory experiments, but randomized selection in the natural field experiment. If no differences between laboratory and field are found under this design, the result is the most relevant evidence that the laboratory predicts field behavior well.

Previous research shows no evidence that self-selection has an impact on pro-social behavior. Using social preference experiments, Cleave et al. (2011) and Falk et al. (2012) test for participation biases within student subjects and find none. Anderson et al. (2010) come to a similar conclusion for truck drivers. Bellemare and Kröger (2007) investigate selection effects in a sample of the Dutch population. They find no correlation between deciding to participate in an experiment (trust game) and being pro-social.

3 Data analysis

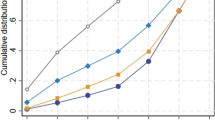

Figure 1 shows the percentage of returned envelopes (Footnote 5). Result 1 summarizes:

Result 1: There is no difference in return rates of envelopes that contain money across all four experiments. The subject pool, physical environment in which the experiment is conducted, and awareness of scrutiny have no effect on pro-social behavior.

Support for Result 1: A two-sided Kruskal-Wallis test on returned envelopes that contain money cannot reject the hypothesis that the four experiments are equal ($N_1 = N_2 = N_3 = N_4 = 80$, $p = 0.23$). A $\chi^2$-test on this data reveals a p-value of 0.29. Next, a pairwise comparison is made across all four experiments with two-sided Mann-Whitney tests. The return rates are similar in StuLab and CitLab ($p = 0.82$), CitLab and CitHome ($p = 0.11$), CitHome and CitField ($p = 0.52$), and StuLab and CitField ($p = 0.23$). Results are qualitatively similar when empty returned envelopes are added to the analysis. Only now, the pairwise comparison of CitLab versus CitHome has a p-value of 0.08. Finally, a Kruskal-Wallis test on empty returned envelopes shows a p-value of 0.74.
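To make the logic of the homogeneity test concrete, the sketch below computes a Pearson chi-square statistic for a table of four groups by two outcomes (returned versus kept), using only the standard library. It is an illustration, not the author's analysis: the counts are hypothetical placeholders (the underlying data appear only in Fig. 1), the function names are invented here, and the closed-form tail probability is valid only for exactly 3 degrees of freedom.

```python
import math


def chi_square_homogeneity(returned, n_per_group):
    """Pearson chi-square statistic for a k x 2 table (returned vs. kept)."""
    total_n = sum(n_per_group)
    pooled_rate = sum(returned) / total_n  # return rate under the null of equal rates
    stat = 0.0
    for r, n in zip(returned, n_per_group):
        expected_returned = n * pooled_rate
        expected_kept = n * (1 - pooled_rate)
        stat += (r - expected_returned) ** 2 / expected_returned
        stat += ((n - r) - expected_kept) ** 2 / expected_kept
    return stat, len(returned) - 1  # statistic and degrees of freedom


def chi2_sf_df3(x):
    """Upper-tail probability of a chi-square variable with exactly 3 df."""
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


# HYPOTHETICAL counts of returned envelopes, NOT the paper's data.
placeholder_returned = [44, 42, 34, 38]  # StuLab, CitLab, CitHome, CitField
group_sizes = [80, 80, 80, 80]           # N = 80 per experiment, as in the text

stat, df = chi_square_homogeneity(placeholder_returned, group_sizes)
p_value = chi2_sf_df3(stat)
print(f"chi2({df}) = {stat:.2f}, p = {p_value:.2f}")
```

A large p-value in such a test means only that equal return rates cannot be rejected, which is why the text goes on to discuss statistical power.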

Because the main result is based on the non-rejection of the null hypothesis, power analyses were carried out. A priori, a sample of 80 allows an effect size of at least 40 percent to be detected with a significance level of 5 percent and a power of 80 percent (Faul et al. 2007). The differences in behavior reported in Fig. 1 are so small that even if they were statistically significant, they would be economically insignificant. The StuLab and CitLab experiments show a difference with CitField of approximately 7.5 percentage points. To make such a difference statistically significant (with a significance level of 5 percent and a power of 80 percent), the number of observations in each experiment would have to be 577.

Electronic supplementary material (ESM) presents analyses that look at issues other than return rates.

4 Conclusion

This paper reports results of a dictator game in a natural field experiment. In the main experiment, a random sample in a Dutch city receives a transparent envelope with cash in their mailbox, due to a suggested misdelivery. These dictators can choose to return the envelope, but are unaware that an experiment is taking place. Other experiments explore possible differences between the laboratory and the field. First, students in a laboratory have to decide to send an envelope with cash to a volunteer addressed on it. Second, the student subjects in the laboratory are replaced by subjects from a Dutch city. Third, the laboratory is replaced by a subject’s home, and the subject is aware that behavior is monitored. These experiments identify the influences of the subject pool (students versus citizens), physical environment (the laboratory versus home), and awareness of the experimenter.

The results show that behavior is the same in all four experiments: roughly half of the subjects in each experiment return the full amount of money. Student behavior is thus predictive for behavior of a more general population. In a similar spirit, Franzen and Pointner (2012) show in a within-subject design that students who are pro-social in a laboratory dictator game are more likely to return a misdirected letter with cash. These findings are of importance to theorists, because they motivate the need to model other-regarding preferences. The results are also of importance to experimental economists, because they support the relevance of findings in the laboratory for the field.

Notes

Some studies correlate behavior in a laboratory dictator game with field behavior, such as Carpenter and Myers (2010). Alternatively, one can think of dictator game behavior to be reflected in philanthropy (see Andreoni, 2006 for an overview of philanthropy). See Benz and Meier (2008) for a non-laboratory study on philanthropy.

Dana et al. (2006, 2007), and Lazear et al. (2012) find that dictators are more selfish when their role or intentions are hidden from scrutiny by the recipient (as opposed to scrutiny of the experimenter). Andreoni and Bernheim (2009) find similar results when dictators are scrutinized by an audience.

Data were gathered in two waves of N=40 per experiment. Mann-Whitney tests show no differences between the two waves of a given experiment, which allows the data to be pooled.

References

Aguiar, F., Brañas-Garza, P., Cobo-Reyes, R., Jimenez, N., & Miller, L. (2009). Are women expected to be more generous? Experimental Economics, 12(1), 93–98.

Anderson, J., Burks, S., Carpenter, J., Götte, L., Maurer, K., Nosenzo, D., Potter, R., Rocha, K., & Rustichini, A. (2010). Self selection does not increase other-regarding preferences among adult laboratory subjects, but student subjects may be more self-regarding than adults. IZA Discussion Paper, 5389.

Andreoni, J. (2006). Philanthropy (Vol. 2, Chap. 18, pp. 1201–1269). Amsterdam: Elsevier.

Andreoni, J., & Bernheim, B. (2009). Social image and the 50–50 norm: a theoretical and experimental analysis of audience effects. Econometrica, 77, 1607–1636.

Andreoni, J., & Miller, J. (2002). Giving according to GARP: an experimental test of the consistency of preferences for altruism. Econometrica, 70, 737–753.

Andreoni, J., & Vesterlund, L. (2001). Which is the fair sex? Gender differences in altruism. The Quarterly Journal of Economics, 116(1), 293–312.

Bandiera, O., Barankay, I., & Rasul, I. (2005). Social preferences and the response to incentives: evidence from personnel data. The Quarterly Journal of Economics, 120(3), 917–962.

Barmettler, F., Fehr, E., & Zehnder, C. (2012). Big experimenter is watching you! Anonymity and prosocial behavior in the laboratory. Games and Economic Behavior, 75(1), 17–34.

Bellemare, C., & Kröger, S. (2007). On representative social capital. European Economic Review, 51, 183–202.

Benz, M., & Meier, S. (2008). Do people behave in experiments as in real life? Evidence from donations. Experimental Economics, 11(3), 268–281.

Buchan, N., Johnson, E., & Croson, R. (2006). Let’s get personal: an international examination of the influence of communication, culture, and social distance on other regarding preferences. Journal of Economic Behavior & Organization, 60, 373–398.

Cadsby, B., Servátka, M., & Song, F. (2010). Gender and generosity: does degree of anonymity or group gender composition matter? Experimental Economics, 13(3), 299–308.

Camerer, C. (2011). The promise and success of lab-field generalizability in experimental economics: a critical reply to Levitt and List. Working Paper.

Camerer, C., & Thaler, R. (1995). Anomalies: ultimatums, dictators and manners. The Journal of Economic Perspectives, 9(2), 209–219.

Carpenter, J., & Myers, C. (2010). Why volunteer? Evidence on the role of altruism, image, and incentives. Journal of Public Economics, 94(11), 911–920.

CBS (2010). Bevolking op 1 januari. Centraal Bureau voor de Statistiek.

Charness, G., Haruvy, E., & Sonsino, D. (2007). Social distance and reciprocity: an Internet experiment. Journal of Economic Behavior & Organization, 63, 88–103.

Charness, G., & Rabin, M. (2002). Understanding social preferences with simple tests. The Quarterly Journal of Economics, 117(3), 817–869.

Cherry, T., Frykblom, P., & Shogren, J. (2002). Hardnose the dictator. The American Economic Review, 92(4), 1218–1221.

Cleave, B., Nikiforakis, N., & Slonim, R. (2011). Is there selection bias in laboratory experiments? IZA Discussion Paper, 5488.

Dana, J., Cain, D., & Dawes, R. (2006). What you don’t know won’t hurt me: costly (but quiet) exit in dictator games. Organizational Behavior and Human Decision Processes, 100, 193–201.

Dana, J., Weber, R., & Kuang, J. (2007). Exploiting moral wiggle room: experiments demonstrating an illusory preference for fairness. Economic Theory, 33, 67–80.

Eckel, C., & Grossman, P. (2001). Chivalry and solidarity in ultimatum games. Economic Inquiry, 39(2), 171–188.

Engel, C. (2011). Dictator games: a meta study. Experimental Economics, 14(4), 583–610.

Etang, A., Fielding, D., & Knowles, S. (2011). Does trust extend beyond the village? Experimental trust and social distance in Cameroon. Experimental Economics, 14(1), 15–35.

Fahr, R., & Irlenbusch, B. (2000). Fairness as a constraint on trust in reciprocity: earned property rights in a reciprocal exchange experiment. Economics Letters, 66, 275–282.

Falk, A., & Heckman, J. (2009). Lab experiments are a major source of knowledge in the social sciences. Science, 326, 535–538.

Falk, A., & Zehnder, C. (2007). Discrimination and in-group favoritism in a citywide trust experiment. IZA Discussion Paper, 2765.

Falk, A., Meier, S., & Zehnder, C. (2012). Did we overestimate the role of social preferences? The case of self-selected student samples. Journal of the European Economic Association, forthcoming.

Faul, F., Erdfelder, E., Lang, A., & Buchner, A. (2007). G*power 3: a flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behavior Research Methods, 39, 1.

Fehr, E., & Schmidt, K. (1999). A theory of fairness, competition and cooperation. The Quarterly Journal of Economics, 114, 817–868.

Forsythe, R., Horowitz, J., Savin, N., & Sefton, M. (1994). Fairness in simple bargaining experiments. Games and Economic Behavior, 6, 347–369.

Franzen, A., & Pointner, S. (2012). The external validity of giving in the dictator game: a field experiment using the misdirected letter technique. Experimental Economics. doi:10.1007/s10683-012-9337-5.

Harrison, G., & List, J. (2004). Field experiments. Journal of Economic Literature, XLII, 1009–1055.

Henrich, J., Boyd, R., Bowles, S., Camerer, C., Fehr, E., & Gintis, H. (2004). Foundations of human sociality: economic experiments and ethnographic evidence from fifteen small-scale societies. New York: Oxford University Press.

Herrmann, B., Thöni, C., & Gächter, S. (2008). Antisocial punishment across societies. Science, 319, 1362–1367.

Hoffman, E., McCabe, K., & Smith, V. (1996). Social distance and other-regarding behavior in dictator games. The American Economic Review, 86(3), 653–660.

Howitt, D., & McCabe, J. (1978). Attitudes do predict behaviour—in mails at least. British Journal of Social and Clinical Psychology, 17, 285–286.

Howitt, D., Craven, G., Iveson, C., Kremer, J., McCabe, J., & Rolph, T. (1977). The misdirected letter. British Journal of Social and Clinical Psychology, 16, 285–286.

Kahneman, D., Knetsch, J., & Thaler, R. (1986). Fairness as a constraint on profit seeking: entitlements in the market. The American Economic Review, 76(4), 728–741.

Kessler, J., & Vesterlund, L. (2011). External validity of laboratory experiments. London: Oxford University Press.

Kremer, J., Barry, R., & McNally, A. (1986). The misdirected letter and the quasi-questionnaire: unobtrusive measures of prejudice in Northern Ireland. Journal of Applied Social Psychology, 16(4), 303–309.

Lazear, E., Malmendier, U., & Weber, R. (2012). Sorting in experiments with application to social preferences. American Economic Journal: Applied Economics, 4(1), 136–163.

Levitt, S., & List, J. (2007a). Viewpoint: on the generalizability of lab behaviour to the field. Canadian Journal of Economics, 40, 347–370.

Levitt, S., & List, J. (2007b). What do laboratory experiments measuring social preferences reveal about the real world? The Journal of Economic Perspectives, 21(2), 153–174.

Levitt, S., & List, J. (2008). Homo economicus evolves. Science, 319(5865), 909–910.

List, J. (2006a). The behavioralist meets the market: measuring social preferences and reputation effects in actual transactions. Journal of Political Economy, 114(1), 1–37.

List, J. (2006b). Field experiments: a bridge between lab and naturally occurring data. Advances in Economic Analysis & Policy, 6(2), 1–45.

List, J. (2009). Social preferences: some thoughts from the field. Annual Review of Economics, 1, 563–579.

Oxoby, R., & Spraggon, J. (2008). Mine and yours: property rights in dictator games. Journal of Economic Behavior & Organization, 65, 703–713.

Winking, J., & Mizer, N. (2013). Natural-field dictator game shows no altruistic giving. Evolution and Human Behavior. doi:10.1016/j.evolhumbehav.2013.04.002.

Zizzo, D. (2010). Experimenter demand effects in economic experiments. Experimental Economics, 13(1), 75–98.

Additional information

I would like to thank Aurélien Baillon, Han Bleichrodt, Enrico Diecidue, Dennie van Dolder, Ido Erev, Emir Kamenica, John List, Steven Levitt, Wieland Müller, Charles Noussair, Rogier Potters van Loon, Drazen Prelec, Kirsten Rohde, Ingrid Rohde, three anonymous referees and participants from seminars at the Erasmus University Rotterdam and the University of Chicago for their useful comments. Special thanks to Jan Potters and Peter Wakker for some significant contributions. I would like to thank Thibault van Heeswijk and Bart Stoop for their excellent research assistance. Finally, I would like to thank ERIM for financial support.

Cite this article

Stoop, J. From the lab to the field: envelopes, dictators and manners. Exp Econ 17, 304–313 (2014). https://doi.org/10.1007/s10683-013-9368-6

DOI: https://doi.org/10.1007/s10683-013-9368-6