Abstract

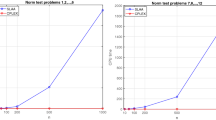

Given a nonempty polyhedral set, we consider the problem of finding a vector that belongs to it and has the minimum number of nonzero components, i.e., a feasible vector with minimum zero-norm. This combinatorial optimization problem is NP-hard and arises in various fields such as machine learning, pattern recognition, and signal processing. One contribution of this paper is the proposal of two new smooth approximations of the zero-norm function, in which the approximating functions are separable and concave. We first formally prove the equivalence between the approximating problems and the original nonsmooth problem. To this end, we begin by stating, in a general setting, theoretical conditions sufficient to guarantee the equivalence between pairs of problems. Moreover, we define an effective and efficient version of the Frank-Wolfe algorithm for minimizing concave separable functions over polyhedral sets, in which variables that are null at an iteration are eliminated from all subsequent iterations, yielding significant savings in computational time, and we prove the global convergence of the method. Finally, we report numerical results on test problems showing both the usefulness of the new concave formulations and the computational efficiency of the implemented minimization algorithm.
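The Frank-Wolfe scheme with variable elimination can be illustrated by a small sketch. It is not the authors' implementation and rests on several assumptions. First, the polyhedral set is a bounded polytope contained in the nonnegative orthant and listed explicitly by its vertices, so the linear subproblem of each iteration reduces to picking the vertex with the smallest linearized objective (a practical code would solve a linear program instead). Second, the concave surrogate is the exponential function sum_i (1 - exp(-alpha * x_i)) known from earlier concave-minimization approaches to feature selection, not either of the two new approximations proposed in the paper. Third, the helper names (`zero_norm`, `concave_approx`, `frank_wolfe_vertices`) and parameters (`alpha`, `tol`, `max_iter`) are hypothetical. Because the objective is concave, a unit step to the selected vertex never increases it, so no line search is needed. Once a coordinate is zero it is fixed at zero, so only vertices on the face where every eliminated coordinate vanishes remain candidates.

```python
import math


def zero_norm(x, tol=1e-12):
    """Number of components of x whose magnitude exceeds tol."""
    return sum(1 for xi in x if abs(xi) > tol)


def concave_approx(x, alpha):
    """Exponential concave surrogate sum_i (1 - exp(-alpha * x_i)), for x >= 0."""
    return sum(1.0 - math.exp(-alpha * xi) for xi in x)


def concave_grad(x, alpha):
    """Gradient of the surrogate: alpha * exp(-alpha * x_i), always positive."""
    return [alpha * math.exp(-alpha * xi) for xi in x]


def frank_wolfe_vertices(vertices, x0, alpha=5.0, tol=1e-9, max_iter=100):
    """Unit-step Frank-Wolfe for min concave_approx(x) over conv(vertices).

    Assumes every vertex is >= 0 and x0 lies in conv(vertices). Coordinates that
    become zero are fixed at zero afterwards: only vertices on the face
    {x_i = 0 for every eliminated i} stay candidates.
    """
    x = list(x0)
    n = len(x)
    eliminated = set()
    candidates = list(vertices)
    for _ in range(max_iter):
        # Eliminate variables that are null at the current iterate.
        newly_zero = {i for i in range(n) if i not in eliminated and abs(x[i]) <= tol}
        if newly_zero:
            eliminated |= newly_zero
            candidates = [v for v in candidates
                          if all(abs(v[i]) <= tol for i in eliminated)]
        g = concave_grad(x, alpha)
        active = [i for i in range(n) if i not in eliminated]
        # Linear subproblem: minimize g^T v over the remaining vertices
        # (eliminated coordinates are zero on all of them, so they are skipped).
        best = min(candidates, key=lambda v: sum(g[i] * v[i] for i in active))
        decrease = sum(g[i] * (best[i] - x[i]) for i in active)
        if decrease >= -tol:
            break  # no descent direction left: stopping test satisfied
        # Concavity gives f(best) <= f(x) + g^T (best - x) < f(x), so a unit step is safe.
        x = list(best)
    return x


if __name__ == "__main__":
    # Vertices of the segment {x >= 0 : x1 + x2 = 1, x2 + x3 = 1}.
    V = [(1.0, 0.0, 1.0), (0.0, 1.0, 0.0)]
    x = frank_wolfe_vertices(V, [0.5, 0.5, 0.5])
    print(x, zero_norm(x))  # [0.0, 1.0, 0.0] 1
```

From the interior starting point (0.5, 0.5, 0.5), the first step moves to the sparsest feasible vector (0, 1, 0). Starting from the vertex (1, 0, 1) instead, the second coordinate is eliminated at once and the method stops there with zero-norm 2. The procedure thus reaches a point satisfying its stopping test, not necessarily a global minimizer of the zero-norm.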

Cite this article

Rinaldi, F., Schoen, F. & Sciandrone, M. Concave programming for minimizing the zero-norm over polyhedral sets. Comput Optim Appl 46, 467–486 (2010). https://doi.org/10.1007/s10589-008-9202-9