Abstract

In this paper, we compare the performance of two non-parametric methods, classification and regression trees (CART) and the more recent multivariate adaptive regression splines (MARS), in forecasting bankruptcy. The models are tested on a large universe of US banks over a complete market cycle and run under K-fold cross validation. A hybrid model which combines K-means clustering and MARS is then tested as well. Our findings highlight that (i) in both the training and testing samples, MARS provides, on average, a better correct classification rate than the CART model; (ii) the hybrid approach significantly increases the classification accuracy rate in the training sample; (iii) MARS underperforms when the misclassification rate of bankrupt banks is adopted as the criterion; (iv) finally, the results show that non-parametric models are more suitable for bank failure prediction than the corresponding Logit model.

1 Introduction

The financial crisis, which started in 2007, has dramatically affected the banking sector throughout the world. The epicenter of the shock wave was in the US, and it took a long time for regulators to stop the chain of defaults and save big banks. Therefore, preventing systemic risk (the failure of the banking system) has become an unavoidable concern, and the need for new forecasting tools is of major importance not only to regulators but also to academics.

In this sense, federal banking supervisors [the Federal Reserve, the Federal Deposit Insurance Corporation (FDIC), and the Office of the Comptroller of the Currency (OCC)] and other financial supervisory agencies provide a supervisory rating (a convenient summary of a bank’s condition at the time of an exam). This helps investors to detect banks that have a high probability of default. A key outcome of such an on-site exam is a CAMELS rating. The acronym “CAMEL” refers to the five components of a bank’s condition that are evaluated: Capital adequacy, Asset quality, Management, Earnings, and Liquidity. A sixth component, a bank’s Sensitivity to market risk, was added in 1997; hence the acronym was changed to CAMELS.

The FDIC also developed a Statistical CAMELS Off-site Rating system (SCOR) to evaluate banks’ stability. Collier et al. (2003) examine the performance of this model over the period 1986–2002 and point out its limitations, despite the usefulness of SCOR, which is based only on financial ratios. Cole and Gunther (1995) report similar results and find that the CAMELS ratings decay rapidly.

Predicting bank bankruptcy has attracted particular interest in the financial literature. Numerous models have thus been developed since the early 1970s. All proposed models are based on classification methods in a multidimensional space defined by a set of specific variables.

The literature is rich in non-parametric and parametric models. With regard to the latter, Beaver (1966) was one of the first researchers to use univariate analysis to study bankruptcy prediction. He tested the power of financial ratios to classify and predict bankrupt firms. Cash flow and debt ratios appeared to be the important predictors of bankruptcy. Altman (1968) used Multivariate Discriminant Analysis (MDA) to develop a five-factor model to calculate the well-known “Z-score” and predict the bankruptcy of manufacturing firms.

Examples of well-known statistical methods used to predict failure include logistic regression (logit) (Ohlson 1980; Demirgüç-Kunt and Detragiache 1997; Affes and Hentati-Kaffel 2017), Probit analysis (Zmijewski 1984; Hanweck et al. 1977) and factor analysis. West et al. (1985) demonstrated that the combination of factor analysis and Logit estimation was promising in evaluating banks’ condition. The factors identified by the Logit model as important descriptive variables for the banks’ operations are similar to those used for CAMELS ratings.

Martin (1977) used both Logit and MDA statistical methods to predict bank failures. The results showed that the two models produce similar classifications of defaulted and healthy banks. In the same sense, Jones and Hensher (2004) presented a mixed Logit model for firm distress prediction and compared it with Multinomial Logit Models (MNL). They concluded that mixed Logit obtained substantially better predictive accuracy than Multinomial Logit models.

Affes and Hentati-Kaffel (2017) identified, over the period 2008–2013, US banks heading for bankruptcy by conducting a comparative analysis based on both Canonical Discriminant Analysis and Logit models, and also highlighted that the suitability of the models is improved by comparing different optimal cut-off scores.

A second stream of literature tested non-statistical methods to set up a model for default prediction. For example, Kolari et al. (2002) use a Trait Recognition Model (TRA) (a kind of image recognition algorithm). Empirical results showed that these methods perform better than the statistical approach. Lanine and Vander Vennet (2006) showed that the TRA approach outperforms Logit in predicting failures among Russian commercial banks.

Other non-statistical methods include intelligence techniques such as induction of classification trees and Neural Network methods (NM). NM procedures follow a process similar to the human brain and contain mathematical and algorithmic elements that mimic the biological neural networks of the human nervous system (see Odom and Sharda 1990; Lenard et al. 1995; Zhang et al. 1999; McKee and Greenstein 2000; Anandarajan et al. 2001). Boyacioglu et al. (2009) applied various neural network techniques and multivariate statistical methods to the problem of predicting bank failures in Turkey and presented a comparison of the classification performances of the techniques tested. They used financial ratios similar to those used in CAMELS ratings.

More recently, Decision Trees (DT) and classification and regression tree (CART) methods have been used for financial distress prediction. Chen (2011) empirically compared DT with Logit for Taiwanese firms, and found that DT achieved higher accuracy than Logit in the short run (less than 1 year), while Logit performed better in the long run (above one and a half years).

Iturriaga and Sanz (2015) developed a hybrid neural network model to study the bankruptcy of US banks by combining a Multilayer Perceptron (MLP) network and Self-Organizing Maps (SOM). They found that the MLP-SOM can detect 96.15% of the failures in the period between May 2012 and December 2013 and outperforms traditional models of bankruptcy forecasting.

De Andrés et al. (2011) and Sánchez-Lasheras et al. (2012) combined the Multivariate Adaptive Regression Splines (MARS) model with fuzzy clustering and Self Organized Map (SOM). They found that these hybrid models outperform single classification models, namely (i) LDA, NN (feed-forward neural networks) and single MARS and (ii) NN (back propagation neural networks) and MARS, in terms of correct classification and of the identification of the companies that go bankrupt.

In this paper, we aim to model the relationship between ten financial variables and the default probability of US banks by using so-called non-parametric or flexible models. The main purpose is to test the accuracy of non-parametric classification methods, to increase their predictive ability and to reduce the misclassification problem. We suggest, for the first time in the bank failure literature, the use of MARS and of a hybrid model which combines K-means and MARS.

In addition to Classification And Regression Trees (CART), the method consists in validating the Multivariate Adaptive Regression Splines (MARS) model, which has gained increasing interest in the financial literature. MARS was first proposed by Friedman (1991). The main advantage of this model is its capacity to explore the complex nonlinear relationships between the response variable and various predictor variables.

Beyond the methodology, the empirical part contributes to the existing literature by applying all these models to a large universe of US banks over a period spanning 6 years, from 2008 to 2013, under K-fold cross validation. Given the size of our data set, we apply a 10-fold cross validation to separate it into training and testing sets. In order to evaluate the fit of the models, we consider the confusion matrix for both the training and the testing samples. We also use the Receiver Operating Characteristic (ROC) curves to evaluate classification success. Finally, we evaluate the performance of each model according to the Area under the ROC Curve.

Our main findings highlight the promising functionality of the MARS model and suggest that:

- (i) In both the training and the testing samples, MARS provides on average a better correct classification rate than the CART model (97.88–97.58% versus 95.02–93.4%).
- (ii) The hybrid approach enhances the classification accuracy for the training sample.
- (iii) Judged by the misclassification rate of bankrupt banks, MARS underperforms, especially in 2008, 2009 and 2010.
- (iv) According to the AUC of the Receiver Operating Characteristic curve, the hybrid model is superior.
- (v) Finally, CART provides a better interpretation of the splitting values of the root node variables. Splitting values can be considered as early warning signals. In this sense, the CART method makes it possible to define the target values that regulators should take into account in order to identify banks in difficulty at an early stage.

The paper is organized as follows. Section 2 presents the methodology and data used. Section 3 describes the results of the CART and MARS models. In Sect. 4, we analyze the predictive ability of the models used. Section 5 concludes. The models’ outcomes are in the Appendix.

2 The model

For empirical validation, we consider a large panel of US banks. We collect data for active banks (AB) from BankScope and non-active (NAB) ones from FDIC, over the period from 2008 to 2013.

We extract all ratios to build 10 financial variables, detailed as follows:

| CAMEL category | Variable | Definition |
|---|---|---|
| Capital adequacy | EQTA | Total equity/total assets |
| | EQTL | Total equity/total loans |
| Assets quality | NPLTA | Non performing loans/total assets |
| | NPLGL | Non performing loans/gross loans |
| | LLRTA | Loan loss reserves/total assets |
| | LLRGL | Loan loss reserves/gross loans |
| Earnings ability | ROA | Net income/total assets |
| | ROE | Net income/total equity |
| Liquidity | TLTD | Total loans/total customer deposits |
| | TDTA | Total customer deposits/total assets |

The choice of these ten ratios is motivated by an abundant literature (Sinkey 1979; Martin 1977; Thomson 1991; Barr and Siems 1994; Pantalone et al. 1987; Godlewski et al. 2003).

We adopt the same rule of bank status selection as in Affes and Hentati-Kaffel (2017). Thus, the number of (NAB) banks was 410 over the entire period 2008–2013. The total number of active banks obtained in 2013 is 835, 850 in 2012, 883 in 2011, 955 in 2010, 1077 in 2009 and 1205 in 2008.

However, it has been shown that classification tends to favor the dominant category, here active banks (AB), and the original database has a highly skewed distribution. To create homogeneous groups, we apply K-fold cross validation.

We apply a 10-fold cross validation to separate our data set into training and testing sets; 10 is the most widely used number of folds in cross validation.

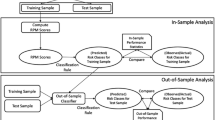

The procedure for each model is the same and is summarized as follows:

1. For each of the 10 experiments, we use 9 folds for training and the remaining one for testing,
2. we repeat each 10-fold cross validation experiment 10 times,
3. we select the parameters of the best model, namely those that minimize the cross validation error rate.
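The splitting procedure above can be sketched as follows. This is a minimal illustration under stated assumptions, not the code used in the paper: the function name `repeated_kfold`, the seed and the plain (non-stratified) shuffling are choices made here, and the sample size of 1242 is the number of banks analyzed in 2008.

```python
import random

def repeated_kfold(n_samples, n_folds=10, n_repeats=10, seed=0):
    """Yield (repeat, fold, train_idx, test_idx) for repeated K-fold CV."""
    rng = random.Random(seed)
    for repeat in range(n_repeats):
        indices = list(range(n_samples))
        rng.shuffle(indices)  # a fresh partition for every repetition
        # Fold j receives every n_folds-th shuffled index, starting at j.
        folds = [indices[j::n_folds] for j in range(n_folds)]
        for j, test_idx in enumerate(folds):
            # The 9 remaining folds form the training set.
            train_idx = [i for k, fold in enumerate(folds) if k != j for i in fold]
            yield repeat, j, train_idx, test_idx

# 10 repetitions of 10 folds give 100 train/test splits.
splits = list(repeated_kfold(n_samples=1242))
```

Each model would then be fitted on `train_idx` and evaluated on `test_idx`, and the parameter setting with the lowest average test error retained.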

In order to evaluate the classification suitability of the models, we establish the confusion matrix for the training and the testing samples. Moreover, we use the Receiver Operating Characteristic (ROC) curves to evaluate classification performance.

The ROC curve presents the possible distributions of scores for the banks. We determine the optimal cut-off value that maximizes the correct classification rate (default and healthy banks correctly classified) and then classify a bank as a potential default when its score is higher than the cut-off, or as healthy when its score is lower than the cut-off value.
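The cut-off rule can be illustrated with a short sketch. The function `best_cutoff`, the candidate grid (the observed scores) and the toy scores are assumptions made for illustration only; the paper does not describe its search procedure.

```python
def best_cutoff(scores, labels):
    """Return (cutoff, accuracy) maximizing the correct classification rate.

    labels: 1 for a failed bank (NAB), 0 for an active bank (AB).
    A bank is predicted to default when its score is strictly above the cutoff.
    """
    candidates = sorted(set(scores))
    # Add one candidate below the minimum so "everything defaults" is tested too.
    candidates = [candidates[0] - 1.0] + candidates
    best = (None, -1.0)
    for c in candidates:
        correct = sum((s > c) == bool(y) for s, y in zip(scores, labels))
        acc = correct / len(scores)
        if acc > best[1]:  # ties keep the lowest cutoff
            best = (c, acc)
    return best

# Hypothetical scores for seven banks (not data from the paper)
scores = [0.05, 0.10, 0.20, 0.35, 0.60, 0.80, 0.90]
labels = [0, 0, 0, 1, 0, 1, 1]
cutoff, accuracy = best_cutoff(scores, labels)
```

On this toy sample, six of the seven banks are correctly classified at the selected cut-off.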

3 Running classifications methods

3.1 Multivariate adaptive regression splines (MARS) implementation

Introduced by Jerome Friedman in 1991, Multivariate Adaptive Regression Splines is a form of stepwise linear regression which can model non-linearity between variables.

MARS is based on three parameters:

1. the maximum number of basis functions (terms),
2. the smoothing parameter (also called the penalty parameter), which is recommended to be equal to 3,
3. and the maximum degree of interaction between variables (equal to 2) (see Andalib and Atry 2009).

In MARS, the basis function (term) is fitted to segregate independent variable intervals by using recursive splits. In this model, all possible splitting points are estimated with a linear spline (also called piecewise polynomials). A knot is the point at which two polynomial pieces connect. The best splitting point (knot position) is the one for which the model extension minimizes a squared error criterion. Knots use two-sided truncated power functions as spline basis functions, described in Eqs. (1) and (2)

where \(\left[ q\right] _{+}=\max \left\{ 0,q\right\} \) and t is a univariate knot. MARS is represented as a combination of piecewise linear or hinge functions. The latter have a knot or hinge at t, are zero on one side of the knot and are linear on the other side.

MARS fits a linear model in the basis functions \(\left\{ h_{m}\left( \mathbf {\varkappa }\right) \right\} _{m=1}^{M}\):

where \(h_{m}\left( \mathbf {\varkappa }\right) \) is a basis function of the form described in Eqs. (1) and (2), M is the number of linearly independent basis functions, and \(\beta _{m}\) is the unknown coefficient for the mth basis function.
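To make the hinge functions and the linear model in basis functions concrete, the sketch below uses the standard first-order form, in which a pair of hinges \([x-t]_{+}\) and \([t-x]_{+}\) share a knot t and the fit is an intercept plus a weighted sum of basis functions. The helper names, the intercept and the coefficients are hypothetical and are not taken from the paper.

```python
def hinge_pos(x, t):
    """[x - t]_+ : zero to the left of the knot t, linear to the right."""
    return max(0.0, x - t)

def hinge_neg(x, t):
    """[t - x]_+ : linear to the left of the knot t, zero to the right."""
    return max(0.0, t - x)

def mars_predict(x, intercept, terms):
    """Evaluate f(x) = beta_0 + sum_m beta_m * h_m(x).

    terms: list of (beta_m, h_m) pairs, each h_m a function of the input x.
    """
    return intercept + sum(beta * h(x) for beta, h in terms)

# Hypothetical one-variable model with a single knot at t = 2.0
terms = [(1.5, lambda x: hinge_pos(x, 2.0)),
         (-0.5, lambda x: hinge_neg(x, 2.0))]
left = mars_predict(1.0, 0.25, terms)   # only the left hinge is active
right = mars_predict(4.0, 0.25, terms)  # only the right hinge is active
```

The slope of the fitted curve changes at the knot: it is 0.5 to the left of t = 2.0 and 1.5 to the right.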

As mentioned above, a knot is the point in a range at which the slope of the curve changes. Both the number of the knots and their placement are unknown at the beginning of the process. A stepwise procedure is used to find the best points to place the spline knots. In its most general form, each value of the independent variable is tested as a possible point for the placement of a knot. The model initially developed is overfit (forward phase). A statistical criterion (generalized cross-validation) that tests for a significant impact on a goodness of fit measure is used to remove knots. Only those that have a significant impact on the regression are retained (backward phase).
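The criterion used in the backward phase can be sketched as follows. The paper does not spell out its exact formula, so this is an assumption: it uses a common simplified form of generalized cross-validation, in which the mean squared residual is inflated by an effective number of parameters equal to the number of basis functions plus a penalty (3 by default) times the number of knots.

```python
def gcv(residuals, n_basis, n_knots, penalty=3.0):
    """Generalized cross-validation score of a fitted spline model.

    residuals: observed minus fitted values on the training sample
    n_basis:   number of basis functions kept in the model
    n_knots:   number of knots selected in the forward phase
    penalty:   cost charged per knot (assumed form, see the lead-in)
    """
    n = len(residuals)
    effective = n_basis + penalty * n_knots
    if effective >= n:
        raise ValueError("model too complex for the sample size")
    mse = sum(r * r for r in residuals) / n
    return mse / (1.0 - effective / n) ** 2

# Same residuals, two model sizes: the larger model is penalized more.
residuals = [0.1, -0.2, 0.1, 0.0] * 25
small = gcv(residuals, n_basis=3, n_knots=2)
large = gcv(residuals, n_basis=10, n_knots=9)
```

During pruning, a basis function would be dropped whenever its removal lowers this score.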

MARS can perform regressions on binary variables. In binary mode, the dependent variable is converted into a 0 (non-failed bank) or a 1 (failed bank). Ordinary Least Squares (OLS) regression is then performed.

We conduct our methodology in three steps:

1. We apply a 10-fold cross validation to divide our data sets into training and testing sets, and we repeat this process 10 times to obtain different partitions of the database.
2. We choose the best number of basis functions according to the model that minimizes the misclassification rate. MARS does not display relationships in terms of the original 10 financial ratios, but recasts the target-predictor dependencies as a set of basis functions (BFs) that represent the calculated splines. To find the optimal number of BFs and target values, MARS employs a forward/backward stepwise approach to determine the knot points in the data set. At the beginning, the model is tested by allowing more basis functions than are needed (100 BFs). Afterward, this model is shrunk to obtain an optimal model. During this stage, basis functions are removed one by one from the over-fit model based on a ‘residual sums of squares’ criterion. The best model will have a GCV \(\hbox {R}^{2}\) score converging to 1 (see Table 1).
3. We conduct the analysis of variance (ANOVA) decomposition procedure to assess the relative importance of the parameters, based on the contributions from the input variables and the BFs (see Tables 2 and 3). In fact, interpretation of the MARS model is greatly facilitated by its ANOVA decomposition.

For simplicity, only the 2013 basis functions and corresponding equations are detailed in this section. Results for other years are detailed in the Appendix.

We start our analysis by detecting interaction between variables.

Table 1 reports the best MARS model, selected on the basis of the 10 times repeated K-fold cross validation by minimizing the misclassification rate and maximizing the ROC. The optimal model has the lowest value of GCV, an index for measuring generalized mean square errors. We use a backward method that minimizes the value of GCV.

Tables 2 and 3 display the ANOVA decomposition of the built MARS models and exhibit the importance of each ratio in the model. Generalized Cross Validation (GCV) gives the amount of degradation in the model when a ratio is deleted. A model with minimum GCV should be chosen. In fact, GCV reaches its lowest value where the error in the model is smallest.

For 2013, the liquidity ratio (TDTA) is the most important variable. The two capital adequacy variables and the asset quality proxy (NPLGL) have importance scores of 51.22, 30.61 and 40.53 percent, respectively, whereas the importance of the remaining variables is null.

According to the ANOVA decomposition outputs, we note that function 1 has the greatest effect on the model with a GCV score of 0.01452 which means that the most important variable (TDTA) impacts significantly the dependent variable.

Basis functions (BF) in 2013 are as follows:

The above basis functions show the non-linear relationships between the dependent and independent variables.

The final model is expressed as follows:

It appears that, for example, in BF1, on the variable TDTA (liquidity proxy), the data are grouped into two sets: the first is assigned 0 for all TDTA values below a threshold (e.g., c: 0.9180) and the second contains the values exceeding 0.9180. BF1 does not appear in the final model but it contributes to the construction of BF6. Indeed, BF6 is defined as a combination of the two variables EQTA and TDTA. This basis function has a negative effect on the target variable only when the value of EQTA is less than 0.0132 and the value of TDTA is greater than 0.9180.
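The behaviour described for BF1 and BF6 can be sketched as below. Only the two thresholds (0.9180 and 0.0132) come from the text; the product form of BF6, the usual way MARS builds an interaction from a parent basis function, is an assumption, and the function names are hypothetical.

```python
TDTA_KNOT = 0.9180  # threshold quoted in the text for BF1
EQTA_KNOT = 0.0132  # threshold quoted in the text for BF6

def bf1(tdta):
    """Zero for TDTA below the knot, linear in TDTA above it."""
    return max(0.0, tdta - TDTA_KNOT)

def bf6(eqta, tdta):
    """Assumed interaction term: a hinge on EQTA multiplied by BF1.

    Non-zero only when EQTA < 0.0132 and TDTA > 0.9180 at the same time.
    """
    return max(0.0, EQTA_KNOT - eqta) * bf1(tdta)

active = bf6(eqta=0.010, tdta=0.950)          # both conditions hold
high_capital = bf6(eqta=0.050, tdta=0.950)    # EQTA above its knot
low_deposits = bf6(eqta=0.010, tdta=0.900)    # TDTA below its knot
```

With a negative coefficient on BF6 in the final equation, only the first of these three banks would see its score affected by this term.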

In the final model MARS produces a single regression equation, taking into account only BF6, BF28, BF37 and BF48 (see Table 4). Thus, BF1 and BF32 were removed from the regression model because they have an indirect effect and they especially create BFs assigned in the model.

The viability of the bank depends positively on the (TDTA) variable with a positive beta coefficient of 19.3976. Thus, the greater the liquidity caused by the total customer deposit, the better is the financial health of the bank. The MARS model also suggests a negative correlation with BF6. Thus, if a bank has a TDTA > 0.9180 and a level of (Total equity/Total asset) < 0.0132 then this has a negative influence on the score attributed to each bank studied.

3.2 CART algorithm to build tree classifiers

The CART (Classification and Regression Trees) algorithm proposed by Breiman et al. (1984) is a widely used statistical procedure. It produces classification and regression models via tree-based structure. It is based on a hierarchy of univariate binary decisions and operates by selecting the best variable for splitting the data into two groups at the root node. CART is a form of binary recursive partitioning in which partitions can be split into sub-partitions.

This classifier assigns a predicted class membership based on a specific measurement (\(x_{1}, x_{2}, \ldots , x_{k}\)). Let X be the measurement space of all possible values of x. Tree classifiers are constructed by making repetitive splits of X and of the subsequently obtained subsets of X. As a consequence, a hierarchical structure is formed.

In finance, Frydman et al. (1985) were the first to employ decision trees to forecast default. Since then, many studies have highlighted the accuracy of this method in predicting bankruptcy (Carter and Catlett 1987; Gepp et al. 2010).

To build a tree with CART, the procedure should specify a number of parameters:

1. the splitter, which sends a case to the left branch if the splitter’s variable < split value.
2. the competitor identifier variable.

In our bank failure problem, the dependent variable is either bankrupt or non-bankrupt, so a classification tree is suitable for our case. Under these assumptions and rules, we implement CART on our data.

CART methodology consists of three steps: (i) Construction of maximum tree (ii) Choice of the right tree size (iii) and classification of new data using constructed tree.

Table 5 identifies the node competitors by order of improvement. Thus, at the upper levels of the tree there are more significant variables, and less significant at the bottom of the tree. Splitting in regression trees is made in accordance with squared residuals minimization algorithm which implies that expected sum variances for two resulting nodes should be minimized.

In fact, the process of finding the smallest tree that fits the data could reduce the number of important variables. The tree obtained is the one that yields the lowest cross-validated error.

Table 2 exhibits the importance of each ratio in the building of CART tree. For example, from 2009 to 2013, capital adequacy variables are the most important ones. However, in 2008, the (NPLGL) is the most important variable.

Figures 1, 2, 3, 4, 5 and 6 illustrate the final classification trees.

In 2008, as shown in Fig. 1, the first node splits on the (NPLGL) ratio at a particular location (0.08). NPLGL produces the greatest “separation”.

This tree is split at each branch by a decision boundary [left is Yes (class “0”), right is No (class “1”)]. Thus, if a bank has a ratio \( NPLGL\le 0.08\), then the bank is considered as healthy (AB) and non-active (NAB) otherwise.

Classification must normally converge to the following clusters: among the 1242 banks analyzed in 2008, 97% are in the (AB) group (1205 banks) and only 37 banks (3%) are in the (NAB) group. According to the right branch of the tree (\(\hbox {NPLGL} > 0.08\)), 160 of the 1242 banks are classified as (NAB) (class 1). However, only 33 of them were actually (NAB), so that 127 (AB) are misclassified. Looking at the left branch of the tree (\( NPLGL\le 0.08\)), 1082 banks are classified in the (AB) group. Among these 1082 banks, 1078 are actually active banks, meaning that 4 (NAB) are misclassified.

In the left branch of the second level, a second distinction is based on the target value of “\(-\,0.05\)” of the ratio ROA. Among the 1082 banks in this branch, the 25 banks with a ROA less than \(-\,0.05\) are classified as (NAB) and the 1057 banks with a ROA \(> -\,0.05\) are classified as (AB). Among the 25 banks classified as (NAB), only 2 are actually (NAB), meaning that 23 (AB) are misclassified. Among the 1057 banks classified as (AB), 1055 are actually (AB), meaning that 2 (NAB) are misclassified.

In the right branch of the second level, a second distinction is based on the target value of “0.07” of the ratio EQTA. Among the 160 banks in this branch, the 82 banks with an EQTA less than 0.07 are classified as (NAB) and the 78 banks with an EQTA > 0.07 are classified as (AB). Among the 82 banks classified as (NAB), 32 are actually (NAB), meaning that 50 (AB) are misclassified. Among the 78 banks classified as (AB), 77 are actually (AB), meaning that only 1 (NAB) is misclassified.

In addition to this hierarchical clustering, CART classification provides regulators with further information by pointing to banks that show signs of financial fragility. Indeed, in our case we have checked that banks that were misclassified by CART actually default in the following years (from 2008 to 2013).

A second interpretation is linked to the early warning system: the values of the splitters allow the regulator to set target values that should be used to detect these suspected banks.

For 2008, we can consider that banks with simultaneously an \(NPLGL \le 0.08 \) and \(ROA \le -0.05\) or \(\hbox {NPLGL} > 0.08\) and \(EQTA \le 0.07\) should be classified in the group of fragile banks (bordering on default zone) which must be controlled.
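The 2008 decision rule can be written as a small function. This is a sketch built only from the thresholds and leaf counts quoted in the text; the function name and the treatment of values exactly on a threshold (assigned to the fragile side, as in the inequalities above) are assumptions.

```python
def classify_2008(nplgl, roa, eqta):
    """Return 'NAB' (fragile) or 'AB' following the two-level 2008 tree."""
    if nplgl <= 0.08:
        # Left branch: the second split is on ROA
        return "NAB" if roa <= -0.05 else "AB"
    # Right branch: the second split is on EQTA
    return "NAB" if eqta <= 0.07 else "AB"

# Leaf counts quoted in the text: (predicted class, actual NAB, actual AB)
leaves = [
    ("NAB", 2, 23),    # NPLGL <= 0.08 and ROA below -0.05
    ("AB", 2, 1055),   # NPLGL <= 0.08 and ROA above -0.05
    ("NAB", 32, 50),   # NPLGL > 0.08 and EQTA below 0.07
    ("AB", 1, 77),     # NPLGL > 0.08 and EQTA above 0.07
]
total = sum(nab + ab for _, nab, ab in leaves)
correct = sum(nab if pred == "NAB" else ab for pred, nab, ab in leaves)
```

The four leaves add up to the 1242 banks analyzed in 2008, which is a quick consistency check on the counts reported for each branch.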

4 Models accuracy and prediction results

In this section, the analysis of the models’ accuracy relies on the estimated confusion matrix. A type I error occurs when the model incorrectly predicts a (NAB) to survive, whereas a type II error occurs when the model predicts an (AB) to go bankrupt. Thus, the predictive capacity of a model’s classification is based on sensitivity and specificity rates. The accuracy rate is the proportion of the total number of predictions that were correct, and sensitivity is calculated as the number of correct (NAB) predictions divided by the total number of (NAB). The best sensitivity is 1, whereas the worst is 0. We notice that the choice of the cut-off is crucial. Indeed, in a crisis period the type I error decreases but, at the same time, the number of (AB) classified as (NAB) increases, which entails an opportunity cost in terms of economic policy.
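These rates can be computed from a confusion matrix as sketched below, taking failed banks (NAB) as the positive class so that sensitivity is the share of (NAB) correctly identified, which is how the rates are used in Sect. 4.1. The function name is hypothetical, and the counts (15 NAB, 835 AB, three errors of each type) are inferred from the 2013 testing-sample rates reported for MARS rather than taken from the tables.

```python
def confusion_rates(actual, predicted):
    """Rates from binary labels: 1 = failed bank (NAB), 0 = active bank (AB)."""
    pairs = list(zip(actual, predicted))
    tp = sum(a == 1 and p == 1 for a, p in pairs)  # NAB correctly flagged
    fn = sum(a == 1 and p == 0 for a, p in pairs)  # NAB predicted to survive
    fp = sum(a == 0 and p == 1 for a, p in pairs)  # AB predicted to fail
    tn = sum(a == 0 and p == 0 for a, p in pairs)  # AB correctly classified
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "type_I": fn / (tp + fn),   # share of NAB missed
        "type_II": fp / (tn + fp),  # share of AB wrongly flagged
    }

# Inferred counts: 15 NAB with 3 missed, 835 AB with 3 wrongly flagged
actual = [1] * 15 + [0] * 835
predicted = [1] * 12 + [0] * 3 + [1] * 3 + [0] * 832
rates = confusion_rates(actual, predicted)
```

Under these inferred counts, the sketch gives a type I error of 20%, a type II error of about 0.36% and an accuracy of about 99.29%.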

Additionally, in this paper the quality of the models is measured using the Receiver Operating Characteristic (ROC) curves and the Area under the ROC Curve. The ROC curve shows the relation between the specificity and the sensitivity of a given test or detector for all allowable values of the threshold (cut-off). In a ROC curve, the true positive rate (sensitivity) is plotted as a function of the false positive rate (1-specificity) for different cut-off points of a parameter. Each point on the ROC curve represents a (sensitivity/specificity) pair corresponding to a particular decision threshold.

In this section, we test the performance of the MARS, CART and K-MARS models by comparing their classifications and predictions with the actual bankruptcies between 2008 and 2016.
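The construction of the ROC curve and of its area can be sketched in a few lines. The function names and the six toy scores are illustrative assumptions; the area is obtained with the trapezoidal rule over the (1-specificity, sensitivity) points.

```python
def roc_points(scores, labels):
    """(false positive rate, true positive rate) for every cut-off.

    labels: 1 = failed bank (NAB), 0 = active bank (AB).
    A bank is flagged when its score is at or above the cut-off.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for cut in sorted(set(scores), reverse=True):
        tp = sum(s >= cut and y == 1 for s, y in zip(scores, labels))
        fp = sum(s >= cut and y == 0 for s, y in zip(scores, labels))
        points.append((fp / n_neg, tp / n_pos))
    return points

def auc(points):
    """Area under the curve by the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area

# Hypothetical scores for three failed and three active banks
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
labels = [1, 1, 0, 1, 0, 0]
area = auc(roc_points(scores, labels))
```

In this toy sample, eight of the nine (failed, active) pairs are ranked correctly, so the area equals 8/9.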

4.1 MARS versus CART

Tables 6, 13 and 12 display sensitivity, accuracy rates and misclassification rates (Type I and II errors) in both the Testing Sample and the Training Sample.

For 2013, in the Testing Sample (TS), the MARS model correctly classified 99.29% of banks. Only three (NAB) were misclassified (type I error: 20%) and three (AB) banks were considered by the model as (NAB) (type II error: 0.36%). The same results were observed in the “Training Sample, (TRS)”. The results for 2012 and 2011 were much better in terms of type I error, both in the “Testing Sample” and the “Training Sample, (TRS)”.

For 2008, 2009 and 2010, MARS underperformed CART in terms of sensitivity. For example, the Type I error rate was relatively high in 2008 [59.46% in the (TS)]. However, the percentage of (AB) correctly predicted was 100, 98.05 and 98.32% respectively for the years 2008, 2009 and 2010 in the (TS). On the other hand, in terms of correct classification rate, the MARS model performed better for 2008 (98.23%) than for 2009 (94.7%) and 2010 (96.94%).

To summarize, we conclude that the MARS model has a good predictive performance, measured by its ability to reduce the type I error and also by generating the best signal to monitor fragile banks among the (AB). In this sense, MARS would be a powerful tool to enhance the identification of the most salient patterns for forecasting banking distress.

Tables 7, 13 and 12 also show that CART produces a high level of correct classification for 2013.

We observe that 98.59% of the banks in the (TNS) are correctly classified and 97.53% of the banks in the (TS) are classified in their correct groups. For the (TNS), CART correctly classifies all the failed banks (sensitivity: 100%), and for the testing sample only one (NAB) was predicted as (AB) (type I error: 6.67%). We also notice that, in the (TNS), the Type I error is null for 2012.

However, the results obtained for 2008, 2009 and 2010 show that CART did not achieve a high correct classification rate. In fact, for 2009 we note a correct classification rate of about 89% in both the (TNS) and the (TS). Moreover, for this period, the CART model produced a high misclassification rate. We observed an average of 8.99% for the Type II error and 8.59% for the Type I error in the (TS).

Finally, in order to validate the failure prediction efficiency of the two non-parametric models, we propose to analyze the type II error as follows: From 2008 to 2013, we retrieve the (NAB) banks classified by MARS and CART models. We identify banks that are misclassified ((AB) that the model has considered as failing).

We check the survival of each bank for the next (N + 5) years. The results are summarized in Tables 8 and 9.

For example, for the CART model, 95 banks were incorrectly classified in 2009. Of these, 69.30% defaulted in 2010, 21.05% in 2011, 8.77% in 2012, and 0.88% in 2013. For MARS, the number of misclassified banks is smaller. Among the 19 misclassified banks that failed in the next 5 years, 94.74% defaulted in 2010 and the rest in 2012.

We can thus draw the following conclusions: the models studied provide a powerful EWS (Early Warning System). On average, CART puts a red flag on 66.11% of unstable banks in year \(\hbox {N} + 1\). The MARS model is much more efficient and anticipates the failure, in year \(\hbox {N} + 1\), of 80.81% of the analyzed cases.

The CART model is much more penalizing in terms of classification. This explains the greater number of misclassified (AB).

4.2 Hybrid model accuracy

In order to improve the results of both models CART and MARS, we propose to build a hybrid model based on the classification model K-means and MARS.

Clustering is a method of grouping a set of objects into groups according to predefined similarity criteria between objects (Anderberg 2014; Hartigan 1975; Jain and Dubes 1988). Most clustering methods are based on a distance measure between two objects. Technically, clustering can be regarded as a minimization problem.

Let X be the data matrix of dimension (N, n):

N corresponds to the number of banks, n to the number of years and \(x_{ij}\) to the ratio variables.

From the \(\hbox {N} \times \hbox {n}\) dimensional data set, the K-means algorithm allocates each data point to one of c clusters so as to minimize the within-cluster sum of squares:

where \(A_{i}\) is the set of banks in cluster i and \(v_{i}\) is the mean of the banks in cluster i. This equation actually denotes a distance norm. In K-means clustering, the \(v_{i}\) are called the cluster prototypes, i.e. the cluster centers:

where \(N_{i}\) is the number of banks in \(A_{i}\).
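For reference, under the definitions above the within-cluster sum of squares and the cluster centers take the standard K-means form shown below. This is the textbook formulation rather than a quotation from the paper, and \(x_{k}\), the vector of ratios of bank k, is a symbol assumed here.

```latex
J(X; V) = \sum_{i=1}^{c} \sum_{k \in A_{i}} \left\| x_{k} - v_{i} \right\|^{2},
\qquad
v_{i} = \frac{1}{N_{i}} \sum_{k \in A_{i}} x_{k}
```

The inner sum runs over the banks assigned to cluster i, so each bank contributes its squared distance to the center of its own cluster.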

In our paper, Z-score standardization is applied before finding the clusters, and the number of clusters is two.

To implement the hybrid model, we proceed as follows:

- Step 1: We apply the K-means clustering method to all banks. The number of clusters is two (AB and NAB). Table 10 provides the results of the classification based only on K-means.
- Step 2: We do not run the MARS model on the data with their actual identifications; instead, we use the classification generated by K-means. The hybrid model’s results are summarized in Table 11.
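Step 1 can be sketched with a small K-means routine on Z-score standardized ratios. Everything below is illustrative: the helper names, the farthest-point initialization and the seven (EQTA, NPLGL) pairs are assumptions made here, not the paper's data or software. In Step 2, the resulting labels would replace the actual bank status as the target passed to MARS, which is not implemented in this sketch.

```python
import statistics

def zscore_columns(rows):
    """Standardize each column to zero mean and unit standard deviation."""
    cols = list(zip(*rows))
    means = [statistics.fmean(c) for c in cols]
    sds = [statistics.pstdev(c) or 1.0 for c in cols]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]

def sqdist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(rows, k=2, n_iter=100):
    """Plain K-means with a deterministic farthest-point initialization."""
    centers = [rows[0]]
    while len(centers) < k:
        centers.append(max(rows, key=lambda r: min(sqdist(r, c) for c in centers)))
    labels = None
    for _ in range(n_iter):
        new_labels = [min(range(k), key=lambda i: sqdist(r, centers[i])) for r in rows]
        if new_labels == labels:  # assignments stable: converged
            break
        labels = new_labels
        for i in range(k):
            members = [r for r, lab in zip(rows, labels) if lab == i]
            if members:  # each center becomes the mean of its members
                centers[i] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centers

# Hypothetical (EQTA, NPLGL) pairs: four sound banks, then three fragile ones
ratios = [(0.10, 0.02), (0.12, 0.01), (0.11, 0.03), (0.09, 0.02),
          (0.02, 0.15), (0.01, 0.20), (0.03, 0.18)]
labels, centers = kmeans(zscore_columns(ratios), k=2)
```

On this toy sample the two clusters coincide with the sound and fragile groups; on real data the cluster labels and the actual status can differ, which is what Table 10 documents.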

For 2013, the hybrid model provides a satisfactory rate of correct classification but we notice a slight gap between the training and the testing sample (98% against 96%). The model classifies correctly all the bankrupt banks both in (TNS) and (TS) testing sample (sensitivity: 100%). Misclassification rate of (AB) in (TS) is higher than those of TNS (4.20% against 2.10%).

For the other years, we observe broadly the same results in terms of correct classification rate. Moreover, the hybrid model provides a lower Type I error. However, the misclassification of (AB) (Type II error) is higher in the testing sample than in the learning sample.
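The rates discussed in this section can all be read off a two-by-two confusion matrix. The sketch below is illustrative only and assumes the conventions the text appears to use: (NAB) denotes bankrupt banks and (AB) active banks, the Type I error is the share of (NAB) classified as (AB), the Type II error is the share of (AB) classified as (NAB), sensitivity is the share of (NAB) correctly classified, and specificity is the share of (AB) correctly classified. The function name `classification_rates` and the sample labels are hypothetical.

```python
def classification_rates(actual, predicted, failed="NAB", active="AB"):
    """Compute the rates used in the text from actual and predicted labels."""
    pairs = list(zip(actual, predicted))
    n_failed = sum(1 for a, _ in pairs if a == failed)
    n_active = sum(1 for a, _ in pairs if a == active)
    failed_ok = sum(1 for a, p in pairs if a == failed and p == failed)
    active_ok = sum(1 for a, p in pairs if a == active and p == active)
    return {
        "correct_classification": (failed_ok + active_ok) / len(pairs),
        "sensitivity": failed_ok / n_failed,        # NAB correctly classified
        "specificity": active_ok / n_active,        # AB correctly classified
        "type_I_error": 1 - failed_ok / n_failed,   # NAB classified as AB
        "type_II_error": 1 - active_ok / n_active,  # AB classified as NAB
    }


# Made-up labels for six banks (illustrative values only).
actual = ["NAB", "NAB", "AB", "AB", "AB", "AB"]
predicted = ["NAB", "AB", "AB", "AB", "AB", "NAB"]
rates = classification_rates(actual, predicted)
print(rates)
```

Under these conventions, the Type I error is the complement of sensitivity and the Type II error is the complement of specificity, which is why a sensitivity of 100% corresponds to a Type I error of zero.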

Finally, according to the area under the ROC curve (AUC) (see Table 12), we conclude that, on average, the MARS model is more accurate than the CART model in (TS) (94.84% against 93.3%). MARS outperforms CART over the whole period except for 2013, when the AUC of CART is slightly greater than that of MARS in the testing sample (95.89% against 95.10%).

In terms of AUC, the hybrid K-MARS model outperforms both CART and MARS in the training and testing samples.
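For readers unfamiliar with the AUC, it can be computed without drawing the ROC curve: it equals the probability that a randomly chosen bankrupt bank receives a higher failure score than a randomly chosen active bank, with ties counted as one half. The following sketch illustrates this pairwise definition; the function name `auc` and the scores are hypothetical and are not taken from the paper's tables.

```python
def auc(scores_failed, scores_active):
    """AUC as the share of (failed, active) pairs ranked in the right order."""
    wins = 0.0
    for s_failed in scores_failed:
        for s_active in scores_active:
            if s_failed > s_active:
                wins += 1.0
            elif s_failed == s_active:
                wins += 0.5  # ties count as half a correctly ordered pair
    return wins / (len(scores_failed) * len(scores_active))


# Made-up model scores (higher means more likely to fail).
failed_scores = [0.9, 0.8, 0.4]
active_scores = [0.5, 0.3, 0.2]
print(auc(failed_scores, active_scores))
```

A value of 1 means every bankrupt bank is scored above every active bank, and 0.5 corresponds to a ranking no better than chance.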

To sum up, in terms of average rates over the entire period, MARS provides better results than CART for the testing sample, both in correct classification rate and in AUC. However, it fails to classify the bankrupt banks correctly.

The combined K-means and MARS model is the most accurate in both the training and testing samples when judged by average sensitivity (see Table 13; Figs. 7, 8, 9). Results for the training sample show the supremacy of the K-MARS model: it correctly classifies 98.91% of banks. It also provides a low type I error, meaning that only 0.19% of the bankrupt banks are misclassified. In (TS), the results show the supremacy of MARS in terms of correct classification rate (97.58% against 93.4% for CART and 97.04% for K-MARS) and in terms of type II error (1.15% against 6.7% for CART and 3.2% for K-MARS).

In (TS), K-MARS has the lowest type I error (1.16%), which highlights the model's ability to better classify (NAB). We also note that the CART model delivers the highest type II error. This means that CART was able to predict the failure of banks in advance (the predictive power of the model). Also, in (TS), we observe the same trend during the periods P1 (2008–2010) and P2 (2011–2013). Indeed, the MARS model outperforms the other models in terms of specificity, but it performs worse in terms of type I error. K-MARS classified banks more accurately during P1 than during P2. In fact, in P2, we observe an improvement in the correct classification rate only for MARS and CART, and a decrease in type I error for all the models.

5 Conclusion

In this paper, we developed a blended model based on two non-parametric classification models to study the bankruptcy of US banks. We provide a comparative analysis of CART, MARS and K-means-MARS. Our main objective is to predict bank defaults some time before the bankruptcy occurs, and to build an early warning system based on CAMEL ratios.

We based our empirical validation on a large panel of US banks gathered from both Bankscope and the Federal Deposit Insurance Corporation.

The main contribution of our paper with regard to the existing literature is twofold:

- Methodological and conceptual: We propose, for the first time, a hybrid model that combines the K-means and MARS models. We provide a comparative framework that covers not only non-parametric models but also the parametric models Logit and CDA (Affes and Hentati-Kaffel 2017).

- Empirical validation:

(i) Our study focuses on a large sample of US banks of different sizes. The paper analyses the behavior of banks over a 6-year period rich in events (it encompasses two sub-periods, a stress period 2008–2009 and a recovery period 2010–2013).

(ii) The comparative approach highlighted the supremacy of the proposed hybrid model in terms of classification accuracy for both training and validation samples.

(iii) The model enhanced the classification accuracy by 1% for the training sample.

(iv) We established that MARS underperforms when judged by the misclassification rate of the bankrupt banks, notably for 2008 and 2009. Also, according to the area under the ROC curve (AUC), the MARS model showed better accuracy than the CART model.

(v) The results differ from one year to another, but a general pattern for all distressed banks can be identified. The CART classification shows that, among the 10 tested ratios, the most important predictors are the capital adequacy variables. We also note that the asset quality ratios (NPLTA) and (NPLGL) are much more important than the other two components (LLRTA) and (LLRGL). According to MARS, the most important variables were also the components of capital adequacy. The liquidity variables (TLTD and TDTA) are important in detecting bank failure only in 2010 and 2013. We note that, in the parametric models (see Affes and Hentati-Kaffel 2017), asset quality was also an important component in explaining the financial condition of banks (except for 2009 and 2010).

Finally, as mentioned in the introduction, the ultimate goal of this paper is to provide regulators and investors an early warning model. The study we carried out meets this objective in two ways:

First, our detailed study shows how a CART model makes it possible to identify target variables that allow banks in fragile financial situations to be detected in advance. For example, in 2008 the banks in fragile and alarming situations are those that satisfy either of the following pairs of conditions: (i) \(NPLGL \le 0.08 \) and \(ROA \le -0.05\), or (ii) \(NPLGL > 0.08\) and \(EQTA \le 0.07\).
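The 2008 rule quoted above can be written as a small decision function. This is only a sketch of the two leaves mentioned in the text, not the full CART tree; it reads conditions (i) and (ii) as alternative branches, since they split on NPLGL and cannot hold at the same time. The function name `is_fragile_2008` and the example values are hypothetical.

```python
def is_fragile_2008(nplgl, roa, eqta):
    """Return True if a bank falls into either fragile leaf quoted for 2008."""
    if nplgl <= 0.08:
        # Branch (i): low non-performing loans but strongly negative ROA.
        return roa <= -0.05
    # Branch (ii): high non-performing loans combined with thin equity.
    return eqta <= 0.07


# Made-up banks (illustrative values only).
print(is_fragile_2008(nplgl=0.05, roa=-0.06, eqta=0.10))
print(is_fragile_2008(nplgl=0.10, roa=0.01, eqta=0.09))
```

Writing the rule this way makes explicit that ROA matters only on the low-NPLGL branch and EQTA only on the high-NPLGL branch.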

Second, the MARS and CART models are useful tools for identifying in advance financial institutions under stress, which therefore deserve special attention from supervisors. On average, CART puts a red flag on 66.11% of unstable banks in year N + 1. The MARS model is much more efficient and anticipates the failure, in year N + 1, of 80.81% of the analyzed cases.

Finally, we believe that further extensions can be developed by including more financial variables and macroeconomic variables.

Notes

The specificity represents the proportion of the (AB) correctly classified in the (AB) group.

The sensitivity represents the percentage of the (NAB) correctly classified.

References

Affes, Z., & Hentati-Kaffel, R. (2017). Predicting US banks bankruptcy: Logit versus canonical discriminant analysis. Computational Economics, 1–46. https://doi.org/10.1007/s10614-017-9698-0.

Altman, E. I. (1968). Financial ratios, discriminant analysis and the prediction of corporate bankruptcy. The Journal of Finance, 23(4), 589–609.

Anandarajan, M., Lee, P., & Anandarajan, A. (2001). Bankruptcy prediction of financially stressed firms: An examination of the predictive accuracy of artificial neural networks. Intelligent Systems in Accounting, Finance and Management, 10(2), 69–81.

Andalib, A., & Atry, F. (2009). Multi-step ahead forecasts for electricity prices using NARX: A new approach, a critical analysis of one-step ahead forecasts. Energy Conversion and Management, 50(3), 739–747.

Anderberg, M. R. (2014). Cluster analysis for applications: Probability and mathematical statistics: A series of monographs and textbooks (Vol. 19). New York: Academic Press.

Barr, R. S., Siems, T. F., et al. (1994). Predicting bank failure using DEA to quantify management quality. Federal Reserve Bank of Dallas: Technical report.

Beaver, W. H. (1966). Financial ratios as predictors of failure. Journal of Accounting Research, 4, 71–111.

Boyacioglu, M. A., Kara, Y., & Baykan, Ö. K. (2009). Predicting bank financial failures using neural networks, support vector machines and multivariate statistical methods: A comparative analysis in the sample of savings deposit insurance fund (SDIF) transferred banks in Turkey. Expert Systems with Applications, 36(2), 3355–3366.

Breiman, L., Friedman, J., Stone, C. J., & Olshen, R. A. (1984). Classification and regression trees. Boca Raton: CRC Press.

Carter, C., & Catlett, J. (1987). Assessing credit card applications using machine learning. IEEE Expert, 2(3), 71–79.

Chen, M.-Y. (2011). Predicting corporate financial distress based on integration of decision tree classification and logistic regression. Expert Systems with Applications, 38(9), 11261–11272.

Cole, R. A., & Gunther, J. (1995). A CAMEL rating’s shelf life. Available at SSRN 1293504.

Collier, C., Forbush, S., Nuxoll, D., & O’Keefe, J. P. (2003). The SCOR system of off-site monitoring: Its objectives, functioning, and performance. FDIC Banking Review Series, 15(3), 17.

De Andrés, J., Lorca, P., de Cos Juez, F. J., & Sánchez-Lasheras, F. (2011). Bankruptcy forecasting: A hybrid approach using fuzzy c-means clustering and multivariate adaptive regression splines (MARS). Expert Systems with Applications, 38(3), 1866–1875.

Demirgüç-Kunt, A., & Detragiache, E. (1997). The determinants of banking crises-evidence from developing and developed countries (Vol. 106). Washington, D.C.: World Bank Publications.

Friedman, J. H. (1991). Multivariate adaptive regression splines. The Annals of Statistics, 19(1), 1–67.

Frydman, H., Altman, E. I., & Kao, D.-L. (1985). Introducing recursive partitioning for financial classification: The case of financial distress. The Journal of Finance, 40(1), 269–291.

Gepp, A., Kumar, K., & Bhattacharya, S. (2010). Business failure prediction using decision trees. Journal of Forecasting, 29(6), 536–555.

Godlewski, C. J., et al. (2003). Modélisation de la prévision de la défaillance bancaire une application aux banques des pays emergents. Document de travail LARGE: Université Robert Schuman Strasbourg.

Hanweck, G. A., et al. (1977). Predicting bank failure. Board of Governors of the Federal Reserve System (US): Technical report.

Hartigan, J. A. (1975). Clustering algorithms. New York: Wiley.

Iturriaga, F. J. L., & Sanz, I. P. (2015). Bankruptcy visualization and prediction using neural networks: A study of US commercial banks. Expert Systems with Applications, 42(6), 2857–2869.

Jain, A. K., & Dubes, R. C. (1988). Algorithms for clustering data. Englewood Cliffs: Prentice-Hall Inc.

Jones, S., & Hensher, D. A. (2004). Predicting firm financial distress: A mixed logit model. The Accounting Review, 79(4), 1011–1038.

Kolari, J., Glennon, D., Shin, H., & Caputo, M. (2002). Predicting large US commercial bank failures. Journal of Economics and Business, 54(4), 361–387.

Lanine, G., & Vander Vennet, R. (2006). Failure prediction in the Russian bank sector with logit and trait recognition models. Expert Systems with Applications, 30(3), 463–478.

Lenard, M. J., Alam, P., & Madey, G. R. (1995). The application of neural networks and a qualitative response model to the auditor’s going concern uncertainty decision. Decision Sciences, 26(2), 209–227.

Martin, D. (1977). Early warning of bank failure: A logit regression approach. Journal of Banking & Finance, 1(3), 249–276.

McKee, T. E., & Greenstein, M. (2000). Predicting bankruptcy using recursive partitioning and a realistically proportioned data set. Journal of Forecasting, 19(3), 219–230.

Odom, M. D., & Sharda, R. (1990). A neural network model for bankruptcy prediction. In IJCNN International Joint Conference on neural networks, 1990 (pp. 163–168).

Ohlson, J. A. (1980). Financial ratios and the probabilistic prediction of bankruptcy. Journal of Accounting Research, 18(1), 109–131.

Pantalone, C. C., Platt, M. B., et al. (1987). Predicting commercial bank failure since deregulation. New England Economic Review, 37–47.

Sánchez-Lasheras, F., de Andrés, J., Lorca, P., & de Cos Juez, F. J. (2012). A hybrid device for the solution of sampling bias problems in the forecasting of firms’ bankruptcy. Expert Systems with Applications, 39(8), 7512–7523.

Sinkey, J. F. (1979). Problem and failed institutions in the commercial banking industry. London: Wiley.

Thomson, J. B. (1991). Predicting bank failures in the 1980s. Economic Review-Federal Reserve Bank of Cleveland, 27(1), 9.

West, M., Harrison, P. J., & Migon, H. S. (1985). Dynamic generalized linear models and Bayesian forecasting. Journal of the American Statistical Association, 80(389), 73–83.

Zhang, G., Hu, M. Y., Patuwo, B. E., & Indro, D. C. (1999). Artificial neural networks in bankruptcy prediction: General framework and cross-validation analysis. European Journal of Operational Research, 116(1), 16–32.

Zmijewski, M. E. (1984). Methodological issues related to the estimation of financial distress prediction models. Journal of Accounting Research, 22, 59–82.

Additional information

This work was achieved through the Laboratory of Excellence on Financial Regulation (Labex ReFi) supported by PRES heSam under the reference ANR-10-LABX-0095.

Appendix A: Basis function

Basis functions for 2008

The final model is expressed as follows:

The basis function BF2 does not appear in the model, but it contributes to the construction of other basis functions (BF3 and BF7). BF2 splits the variable EQTL (a capital adequacy proxy) into two sets: the first is assigned 0 for all EQTL values above 0.08, and the second contains the EQTL values below the threshold (c = 0.08). BF3 is then defined as a combination of NPLGL and EQTL. This basis function has a negative effect on the target variable only when the value of NPLGL exceeds 0.109 and the value of EQTL is lower than 0.08. The basis function (BF7), which is a combination of (BF1) with NPLGL, has a positive impact on the output. In other words, a value of NPLGL greater than 0.08 multiplied by a value of EQTL lower than 0.08 negatively affects the target ‘Y’. Moreover, a decrease in the value of NPLGL above 0.316 (in BF12) will decrease the variable ‘Y’. The negative effect of (BF36) appears only when the value of TLTD is at least 0.787 and BF10 is positive (for EQTA lower than 0.0248).

Basis functions for 2009

The final model is expressed as follows:

We note that BF15 is zero for values of EQTA lower than 0.0129. A negative sign for the estimated beta coefficient of BF15 indicates a decrease in the output variable. On the other hand, a value of EQTA greater than 0.0296 (BF17) positively affects the target variable. We also note the presence of interactions between predictor variables, which means that the effect of a predictor on the target variable may depend on the value of another predictor. The definition of BF45 shows that the effect of the variable ROA on the output variable depends on the value of the ratio EQTA. The effect of this interaction can be explained as follows: if the value of EQTA is lower than 0.054 and the value of ROA is above \(-\,0.022\), it has a positive impact on the target variable.

Basis functions for 2010

The final model is expressed as follows:

BF2 does not appear in the model, but it is used to compute BF10. Indeed, for values of TLTD and EQTL lower than 0.94 and 0.0798 respectively, BF10 has a positive effect on the output variable. A value of ROA below \(-\,0.00827\) (BF14) negatively impacts the target value. We also note that the interaction between EQTA and ROA (BF18) has a negative effect on the target variable for a value of EQTA lower than 0.033 and a value of ROA above \(-\,0.008\). Moreover, the negative impact of BF34 appears only when EQTA is below 0.0143 and EQTA is lower than 0.028. BF49 and BF50 together define a piecewise linear function of EQTA with a knot at 0.0635. These basis functions are positive when the value of EQTA is above 0.0635 and below 0.0635, respectively. The basis function BF50 is not involved in the model, but it is used to compute BF51, BF53, BF55 and BF59. Indeed, when the value of EQTL is above 0.0314 and EQTA is lower than 0.0635, the output variable increases. On the other hand, a value of EQTL greater than 0.03 combined with EQTA below 0.0635 has a negative effect on the target variable. A positive impact of BF55 and BF59 on the target variable appears, respectively, for ROA greater than \(-\,0.0135\) and TLTD higher than 0.516, each multiplied by BF50. BF63 has a positive effect on the output variable for a value of TDTA above 0.9 and an EQTA higher than 0.0635.
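The mirrored pair BF49 and BF50, and an interaction such as BF59, can be illustrated with the standard MARS hinge functions \(\max (0, x - c)\) and \(\max (0, c - x)\). The sketch below is a hedged illustration under that assumption: the knots (0.0635 for EQTA, 0.516 for TLTD) come from the text, but the model coefficients are not reproduced here, so only the basis functions themselves are evaluated. The function names `hinge_pair` and `bf59`, and the input values, are hypothetical.

```python
def hinge_pair(x, knot):
    """Return the mirrored hinge pair (max(0, x - knot), max(0, knot - x))."""
    return max(0.0, x - knot), max(0.0, knot - x)


def bf59(tltd, eqta):
    """Interaction term: hinge on TLTD above 0.516 multiplied by BF50."""
    _, bf50 = hinge_pair(eqta, 0.0635)   # BF50 is positive when EQTA < 0.0635
    tltd_above, _ = hinge_pair(tltd, 0.516)
    return tltd_above * bf50


# Made-up bank with EQTA below the knot (illustrative values only).
bf49, bf50 = hinge_pair(0.05, 0.0635)
print(bf49, bf50, bf59(tltd=0.6, eqta=0.05))
```

Because each hinge is zero on one side of its knot, a product such as BF59 is non-zero only in the region where both conditions hold, which is how MARS encodes the interactions described in this appendix.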

Basis functions for 2011

The final model is expressed as follows:

As can be seen, the positive effect of BF2 appears only when the value of EQTL is lower than 0.07. BF2 was used in the construction of other basis functions (BF4 and BF12). In fact, BF4 and BF12 have a negative impact on the target variable for values of NPLGL below 0.077 and ROA lower than \(-\,0.0248\), multiplied by BF2. BF27 is a function of the variable ROA with a knot at \(-\,0.0216\), multiplied by BF25. This means that when the value of ROA is lower than \(-\,0.0216\) and the value of EQTA is below 0.0367, we note a positive impact on the target variable.

Basis functions for 2012

The final model is expressed as follows:

The basis functions BF2, BF12 and BF14 enter the construction of other basis functions (BF19, BF30, BF43, BF45 and BF49) only when the values of EQTA are below 0.0667, 0.00495 and 0.0325, respectively. BF30 has a positive impact on the target when the value of NPLTA is lower than 0.137, multiplied by BF2. A positive impact on the output appears for values of NPLGL above 0.076 and a value of LLRTA above 0.197, multiplied by BF14. However, for a value of NPLGL above 0.159 and an EQTA lower than 0.0325, we note a negative effect on the target variable. Moreover, we note the presence of interactions between the LLRTA ratio and two other variables (EQTA and ROE). The positive effect of BF45 appears for a value of LLRTA higher than 0 and EQTA lower than 0.00495. BF74 has a positive effect on the target value when LLRTA is above 0 and ROE is less than \(-\,1.925\). We also note that a value of ROA higher than \(-\,1.925\) (BF15) negatively impacts the target.

Cite this article

Affes, Z., Hentati-Kaffel, R. Forecast bankruptcy using a blend of clustering and MARS model: case of US banks. Ann Oper Res 281, 27–64 (2019). https://doi.org/10.1007/s10479-018-2845-8

DOI: https://doi.org/10.1007/s10479-018-2845-8