Abstract

In this paper, we propose a cancelable multi-biometric face recognition method that uses multiple convolutional neural networks (CNNs) to extract deep features from different facial regions. We also propose a new CNN architecture that exploits batch normalization, depth concatenation and a residual learning framework. The proposed method adopts a region-based technique in which the face, eyes, nose and mouth regions are detected in the original face images. A separate CNN extracts deep features from each region, and a fusion network then combines these features. Moreover, to protect users’ privacy and increase the system’s resistance to spoof attacks, a cancelable biometric technique based on bio-convolving encryption is applied to the final facial descriptor. Our experiments on the FERET, LFW and PaSC datasets show results that are competitive with state-of-the-art methods in terms of recognition accuracy, specificity, precision, recall and F-score.

1 Introduction

Biometric traits such as the face, fingerprint and iris are physiological characteristics of an individual. There is a growing need for efficient biometric systems that can be embedded easily in smart devices such as tablets and mobile phones. The human face is considered one of the most effective biometric traits. Face recognition (FR) is characterized by its low cost, contactless nature and high acceptability during acquisition [1,2,3]. However, the performance of FR systems is affected by changes in resolution, pose and illumination [4, 5]. To overcome this problem, most FR techniques extract features from face images using well-known algorithms such as the scale-invariant feature transform (SIFT) [6], speeded-up robust features (SURF) [7] or the histogram of oriented gradients (HOG) [8]. In the last few years, FR researchers have shown that CNNs yield more robust, representative and detailed features that improve overall system performance. Furthermore, to reach the best results, a CNN should be trained on a large number of face images without external pre-processing methods, because failures of these methods can degrade the system accuracy [9,10,11,12,13,14,15,16,17,18].

Enhancing the recognition accuracy should not make us overlook the protection of biometric data from hackers. For this purpose, encryption techniques could be applied [19]. In practice, however, illumination variations have a great effect on biometric templates, so a minor change in the input produces a different digest. Another drawback of using encryption with biometrics is that the data must be decrypted, which creates an attack point. Recently, cancelable biometrics has attracted great attention. In cancelable biometrics, a one-way function transforms the biometric template, and the transformed template is stored instead of the original one. This approach provides revocability, since a different transformation can be used to re-enroll a compromised biometric, and privacy, since the original biometric cannot be recovered easily from the transformed one. Additionally, the recognition accuracy is not degraded, as the statistical characteristics of the features are approximately maintained after transformation [20,21,22].

Motivated by the previous observations, we propose a cancelable multi-biometric FR method in which a fusion network combines deep features which are extracted using multiple CNNs. Security and privacy of biometric data are provided by performing a bio-convolving encryption step on the final facial descriptor.

Our main contributions are as follows:

1. We propose a new, simple and efficient CNN architecture.
2. We present an FR method that achieves remarkable results compared to the state-of-the-art techniques in terms of recognition accuracy, specificity, precision, recall and F-score.
3. We provide protection of biometric data without degradation of the recognition accuracy.
4. We perform extensive experiments on different datasets.

This paper is organized as follows. Section 2 reviews the related work. Section 3 describes the proposed method. Section 4 presents the experimental results, and Section 5 gives the concluding remarks.

2 Related work

Recently, CNN-based FR methods have achieved remarkable results on different datasets [23, 24]. When studying these methods, the following aspects should be considered: (a) the number and architecture of the CNNs; (b) the dataset used for training; (c) the type of loss function; and (d) the incorporated learning strategies. To enhance system performance, some operations are applied to face images to generate different types of inputs for training multiple CNNs. These operations include: (a) cropping certain face regions [25,26,27,28,29]; (b) using synthesized or frontalized faces [30,31,32]; or (c) using different numbers of training images from different datasets [26, 33]. Kurban et al. [34] proposed an approach in which score-level fusion is applied between two different datasets to create a new one. In addition, an energy imaging method is used for gesture feature extraction, and principal component analysis (PCA) is used for dimensionality reduction. They achieved encouraging performance, with a high genuine match rate (GMR) and a low false acceptance rate (FAR). Li et al. [35] designed a method that improves recognition accuracy by updating the classification model in real time using self-detection, decision and learning (S-DDL).

Many studies are concerned with improving FR performance, but few address the protection of biometric patterns and users’ privacy. Generating cancelable biometric templates [36,37,38] is one approach to protecting users’ data. With traditional techniques, biometric templates can be reconstructed through reverse engineering [39]. Cancelable FR systems can be classified by the number of biometrics into: (a) unimodal systems, in which cancelability is achieved using a single biometric trait, and (b) multi-biometric systems, in which multiple traits are used [22]. Cancelable biometric templates can be produced by one of two approaches: (a) the cryptosystem approach [40,41,42], in which a key is obtained from the original data, or (b) the transformation-based approach, in which discrimination between templates is enhanced using the original templates with a specific key [43]. In practice, several techniques are used to provide cancelability for both unimodal and multi-biometric systems, such as bio-convolving with random kernels, non-invertible geometric transforms, random projections and cancelable biometric filters [44]. In this work, bio-convolving with random kernels is adopted due to its simplicity and its success with CNN-based FR methods.

3 The proposed method

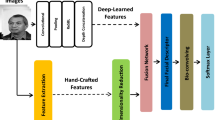

Figure 1 presents the pipeline of the proposed approach, which consists of four main stages: (a) detection of different facial regions; (b) extraction of deep features using multiple CNNs; (c) use of a fusion network to form a discriminative facial descriptor; and (d) application of bio-convolving with random kernels to protect biometric data from different attacks.

3.1 Detection of facial regions

Face, nose, eyes and mouth regions are detected from the original face images [45]. Figure 2 shows examples of the detection and separation operations. Eyes, nose and mouth are very effective regions on which several changes are clearly noticed. These changes include laughing, closing eyes, opening mouth or wearing glasses. Figure 3 depicts the visual activations of the first five convolutional layers of the proposed CNN model for each facial region.
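The separation step can be pictured as cropping one sub-image per region once the detector has returned bounding boxes. The sketch below covers only that cropping step and does not implement the detector of [45]; the image size, the box coordinates and the function name `crop_regions` are hypothetical values chosen for illustration.

```python
import numpy as np


def crop_regions(image, boxes):
    """Return one sub-image per named region.

    `boxes` maps a region name to (top, left, height, width) in pixels.
    """
    crops = {}
    for name, (top, left, height, width) in boxes.items():
        # Copy so that each region can be processed independently.
        crops[name] = image[top:top + height, left:left + width].copy()
    return crops


# Hypothetical 128x128 grayscale face and hand-picked boxes
# (placeholders, not the output of a real detector).
image = np.arange(128 * 128, dtype=np.float32).reshape(128, 128)
boxes = {
    "face": (0, 0, 128, 128),
    "eyes": (30, 20, 30, 88),
    "nose": (50, 44, 40, 40),
    "mouth": (90, 34, 26, 60),
}
regions = crop_regions(image, boxes)
for name, patch in regions.items():
    print(name, patch.shape)
```

Each cropped region would then be resized to the input size of its CNN; that resizing is omitted here.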

3.2 Deep feature extraction

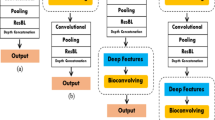

Multiple CNNs of the same architecture are used to extract deep features from the detected facial regions. We propose a CNN model that includes 22 convolutional [46], 8 max pooling [46], 1 batch normalization [47], 2 residual learning framework (ResBL) [48], 3 depth concatenation [49], 1 fully connected [50], 1 feature normalization [51] and 1 softmax [52] layers, as illustrated in Table 1. A multi-GPU platform could be used to reduce the computation time.
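Two of the building blocks named above, residual learning and depth concatenation, can be illustrated with a small NumPy sketch. It is not the architecture of Table 1: the pointwise (1x1) convolutions, the channel counts and all function names are simplifying assumptions chosen to keep the example short.

```python
import numpy as np


def conv1x1(x, weight):
    """Pointwise convolution: x is (C_in, H, W), weight is (C_out, C_in)."""
    return np.einsum("oc,chw->ohw", weight, x)


def relu(x):
    return np.maximum(x, 0.0)


def residual_block(x, w1, w2):
    """Residual learning: the block outputs relu(x + F(x)).

    F is two pointwise convolutions that keep the channel count,
    so the identity shortcut can be added without projection.
    """
    f = conv1x1(relu(conv1x1(x, w1)), w2)
    return relu(x + f)


def depth_concat(branches):
    """Depth concatenation: stack parallel branches along the channel axis."""
    return np.concatenate(branches, axis=0)


rng = np.random.default_rng(0)
x = rng.standard_normal((8, 16, 16))          # 8 channels, 16x16 feature map
w1 = rng.standard_normal((8, 8)) * 0.1
w2 = rng.standard_normal((8, 8)) * 0.1
y = residual_block(x, w1, w2)

branch_a = conv1x1(x, rng.standard_normal((4, 8)))   # 4-channel branch
branch_b = conv1x1(x, rng.standard_normal((6, 8)))   # 6-channel branch
merged = depth_concat([branch_a, branch_b])

print(y.shape, merged.shape)
```

The shortcut leaves the tensor shape unchanged, while depth concatenation adds the channel counts of its branches (4 + 6 = 10 here) and keeps the spatial size.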

3.3 Fusion of deep features

We adopt a fusion network to combine the extracted features into a more representative, reliable, useful and detailed facial descriptor. This network consists of two layers: a local layer and a fusion layer. The local layer is composed of four parallel CNNs. If \( F^{\left( i \right)}(\cdot) \) denotes the deep feature vector extracted by CNN i, then the output of the fusion layer is computed as in Eq. (1):

where the corresponding weights and bias in the fusion layer are denoted by \( {\bf W}^{\left( i \right)} \) and \( {\bf b}^{\left( i \right)} \), respectively. The number of CNNs is represented by N; here, N = 4.
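Eq. (1) itself is not reproduced in this text, so the following is only a minimal sketch under an assumed form: the fused descriptor is taken to be the sum over the N regional CNNs of \( {\bf W}^{\left( i \right)} F^{\left( i \right)} + {\bf b}^{\left( i \right)} \). The feature dimensions, the random weights and the function name `fuse_features` are hypothetical, and the actual fusion layer may include an activation or normalization that is not modelled here.

```python
import numpy as np


def fuse_features(features, weights, biases):
    """Assumed fusion rule: sum over i of (W_i @ F_i + b_i)."""
    fused = np.zeros_like(biases[0], dtype=float)
    for f_i, w_i, b_i in zip(features, weights, biases):
        fused += w_i @ f_i + b_i
    return fused


rng = np.random.default_rng(0)
N, d_in, d_out = 4, 256, 128   # N = 4 regional CNNs; dimensions are made up

# Stand-ins for the deep features of the face, eyes, nose and mouth CNNs.
features = [rng.standard_normal(d_in) for _ in range(N)]
weights = [rng.standard_normal((d_out, d_in)) * 0.01 for _ in range(N)]
biases = [np.zeros(d_out) for _ in range(N)]

descriptor = fuse_features(features, weights, biases)
print(descriptor.shape)
```

Under this assumption the fusion layer is equivalent to a single fully connected layer applied to the concatenation of the four feature vectors, which is one common way to implement it.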

3.4 Bio-convolving encryption

This method [44] uses convolution to generate cancelable biometric templates. A transformed sequence \( f \left[ i \right], \;\;i = 1, \ldots ,F \) is obtained from an original sequence \( r \left[ i \right], \;\;i = 1, \ldots ,F \) through a convolution with a random kernel h[i].
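The transformation can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the implementation used in the experiments: the kernel length, the Gaussian kernel distribution, the use of a seed as the user-specific key and the `mode="same"` boundary handling (chosen so that the output keeps F samples) are all hypothetical choices, and the sketch says nothing about how hard the transformation is to invert.

```python
import numpy as np


def random_kernel(length, seed):
    """Draw a unit-norm random kernel h from a seed acting as the user key."""
    rng = np.random.default_rng(seed)
    h = rng.standard_normal(length)
    return h / np.linalg.norm(h)


def bio_convolve(r, h):
    """Transform the descriptor r into f by convolving it with the kernel h."""
    return np.convolve(r, h, mode="same")


# Stand-in for a facial descriptor with F = 128 entries.
r = np.random.default_rng(1).standard_normal(128)

template_a = bio_convolve(r, random_kernel(9, seed=42))
template_b = bio_convolve(r, random_kernel(9, seed=43))  # re-issued key

print(template_a.shape, np.allclose(template_a, template_b))
```

Changing the seed yields a different template from the same descriptor, which is the revocability property: a compromised template can be replaced by re-enrolling with a new kernel.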

4 Experimental results

To verify the performance of the proposed method, we present experiments on three datasets: FERET [53], LFW [54] and PaSC [55]. Recognition accuracy, specificity, precision, recall and F-score are used for evaluation; see Eqs. 3, 4, 5, 6 and 7. All experiments were performed on a platform with the specifications shown in Table 2. We trained the CNN using stochastic gradient descent with a momentum of 0.9 and L2 regularization with a weight decay of \( 5 \times 10^{-4} \). Training started with a learning rate of 0.1 and stopped after 5 epochs.

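\( \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \)  (3)

\( \text{Specificity} = \frac{TN}{TN + FP} \)  (4)

\( \text{Precision} = \frac{TP}{TP + FP} \)  (5)

\( \text{Recall} = \frac{TP}{TP + FN} \)  (6)

\( \text{F-score} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \)  (7)

where TP = true positive, FN = false negative, FP = false positive and TN = true negative.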
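The five measures can be computed directly from the four confusion-matrix counts. The sketch below uses the standard definitions, which are assumed to correspond to Eqs. 3 to 7; the counts in the example are invented for illustration and are not taken from the experiments.

```python
def classification_metrics(tp, fn, fp, tn):
    """Compute the five evaluation measures from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    fscore = 2 * precision * recall / (precision + recall)
    return {
        "accuracy": accuracy,
        "specificity": specificity,
        "precision": precision,
        "recall": recall,
        "fscore": fscore,
    }


# Made-up counts: 100 genuine and 100 impostor comparisons.
m = classification_metrics(tp=90, fn=10, fp=5, tn=95)
for name, value in m.items():
    print(f"{name}: {value:.4f}")
```

With these counts the accuracy is 0.925, the specificity 0.95, the precision about 0.9474, the recall 0.9 and the F-score about 0.9231.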

4.1 Evaluation of unimodal and multi-biometric FR methods

As mentioned before, the proposed method adopts an FR technique in which four regions are detected in the original face images. First, we studied the performance of the unimodal FR method to identify the part of a face image that contributes most to recognition. The unimodal FR method uses a single region (face, nose, eyes or mouth) to train a single CNN. Table 3 illustrates the results of the unimodal FR method using different facial regions.

We observe from Table 3 that the unimodal FR system based on the face region achieves better results than those based on other regions, as the face region contains the most features. The nose region comes next, as the nose changes less than the eyes and mouth under different positions and expressions. Table 4 compares the unimodal FR system based on the face region with state-of-the-art CNNs. To guarantee a fair comparison, hyper-parameters are tuned for all methods.

Table 4 shows that the proposed CNN model is superior to other state-of-the-art CNN models. With the proposed CNN, the recognition accuracy reaches 97.14% on the FERET dataset compared to 97.02% for CoCo loss, 97.94% on the LFW dataset compared to 97.81% for CoCo loss, and 95.42% on the PaSC dataset compared to 95.27% for CoCo loss.

The performance of the proposed multi-biometric FR system has been studied. Table 5 provides the comparison results of the proposed multi-biometric FR system using the state-of-the-art CNN models.

Table 5 shows that the proposed CNN achieves remarkable results compared to the state-of-the-art CNN models. With the proposed model, the recognition accuracy reaches 98.89% on the FERET dataset compared to 98.73% for CoCo loss, 98.93% on the LFW dataset compared to 98.82% for CoCo loss, and 97.38% on the PaSC dataset compared to 97.27% for CoCo loss. Figure 4 depicts the receiver operating characteristic (ROC) curves of the proposed CNN model and the CoCo loss model for different datasets.

As shown in Fig. 4, the proposed model gives better results than those of CoCo loss CNN on FERET and LFW datasets, while on PaSC dataset the performance of both CNN models is almost the same. Furthermore, Fig. 5 illustrates the ROC plots of both multi-biometric and unimodal systems on different datasets.

Figure 5 confirms the superiority of the proposed multi-biometric FR method to the unimodal one as the area under the curve for the multi-biometric system is greater than that of the unimodal system.
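The area under the ROC curve used in this comparison can be computed from match scores and ground-truth labels. The following is a minimal sketch with a threshold sweep and the trapezoidal rule; the scores and labels are invented for illustration and are unrelated to Figs. 4 and 5.

```python
def roc_curve(scores, labels):
    """Return (fpr, tpr) lists obtained by sweeping a threshold over scores.

    `labels` holds 1 for genuine pairs and 0 for impostor pairs.
    Tied scores are grouped so that they produce a single ROC point.
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    positives = sum(labels)
    negatives = len(labels) - positives
    fpr, tpr = [0.0], [0.0]
    tp = fp = 0
    for rank, i in enumerate(order):
        if labels[i] == 1:
            tp += 1
        else:
            fp += 1
        is_last = rank == len(order) - 1
        if is_last or scores[order[rank + 1]] != scores[i]:
            fpr.append(fp / negatives)
            tpr.append(tp / positives)
    return fpr, tpr


def auc(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum(
        (fpr[k] - fpr[k - 1]) * (tpr[k] + tpr[k - 1]) / 2
        for k in range(1, len(fpr))
    )


# Made-up similarity scores for three genuine and three impostor pairs.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4]
labels = [1, 1, 0, 1, 0, 0]
fpr, tpr = roc_curve(scores, labels)
print(round(auc(fpr, tpr), 4))
```

For this toy example the area is 8/9, because eight of the nine genuine-impostor pairs are ranked in the correct order.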

4.2 Evaluation of bio-convolving method

The proposed method uses bio-convolving encryption to provide protection of biometric data without degradation in the system accuracy. To prove that, Table 6 illustrates the change in recognition accuracy for both unimodal and multi-biometric FR methods after applying bio-convolving and bloom filter [64] methods.

Table 6 shows that the recognition accuracy is slightly affected after applying bio-convolving. In addition, Fig. 6 shows a graphical comparison between different cancelable techniques and their influence on recognition accuracy for both unimodal and multi-biometric FR systems.

The ability of the bio-convolving method to provide security and privacy for users’ data can be verified by performing encryption and decryption operations on a number of facial images using different de-convolution masks, as shown in Fig. 7. Figure 8 presents the probability density functions (PDFs) of the mean square error (MSE) and normalized absolute error (NAE) for both correct and slightly different de-convolution masks.
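The two error measures can be illustrated on a one-dimensional signal. In the sketch below, MSE is the mean of the squared differences and NAE is the sum of absolute differences divided by the sum of absolute reference values. The circular convolution, the FFT-based de-convolution, the kernel with a dominant first tap and the size of the mask perturbation are assumptions made so that the example is short and numerically stable; they are not the procedure behind Figs. 7 and 8.

```python
import numpy as np


def mse(reference, estimate):
    """Mean square error between a reference and its reconstruction."""
    return float(np.mean((reference - estimate) ** 2))


def nae(reference, estimate):
    """Normalized absolute error: sum|ref - est| / sum|ref|."""
    return float(np.sum(np.abs(reference - estimate)) / np.sum(np.abs(reference)))


def circular_convolve(x, h):
    """Circular convolution of x with h (h is zero-padded to len(x))."""
    return np.real(np.fft.ifft(np.fft.fft(x) * np.fft.fft(h, n=len(x))))


def circular_deconvolve(y, h):
    """Undo circular_convolve, assuming the spectrum of h has no zeros."""
    return np.real(np.fft.ifft(np.fft.fft(y) / np.fft.fft(h, n=len(y))))


rng = np.random.default_rng(0)
x = rng.uniform(0.0, 1.0, size=256)                 # stand-in for image data

# Kernel with a dominant first tap so that its spectrum stays away from zero.
h = np.concatenate(([1.0], rng.uniform(-0.1, 0.1, size=8)))
wrong_h = h + rng.uniform(-0.02, 0.02, size=h.shape)  # slightly different mask

encrypted = circular_convolve(x, h)
good = circular_deconvolve(encrypted, h)
bad = circular_deconvolve(encrypted, wrong_h)

print("correct mask:", mse(x, good), nae(x, good))
print("wrong mask:  ", mse(x, bad), nae(x, bad))
```

With the correct mask both errors are at the level of floating-point round-off, while even a small perturbation of the mask gives clearly larger values, which is the kind of separation the PDFs are meant to show.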

As illustrated in Figs. 7 and 8, correct and incorrect de-convolution results can be distinguished easily. Overall, the experimental results show that the proposed multi-biometric FR method is superior to the unimodal one, because it exploits multiple CNNs to obtain a variety of features from different facial regions and applies fusion to obtain a more appropriate and sufficient facial descriptor. On the other hand, the use of four CNNs adds complexity to the proposed FR method. Furthermore, training a single CNN model takes about 4 h, so the training time of the proposed method is a limitation.

5 Conclusions

In this paper, we presented a cancelable multi-biometric FR method that achieves promising performance across different datasets. We also proposed an efficient CNN architecture built on depth concatenation and the residual learning framework. In the proposed method, multiple CNNs extract deep features from different facial regions, a fusion network combines these features into a more representative, reliable and detailed output, and finally, a bio-convolving method maintains the privacy and security of biometric templates with only a slight effect on the recognition accuracy. The experiments on the FERET, LFW and PaSC datasets demonstrated that (a) the proposed method outperforms the state-of-the-art techniques in the presence of cancelability and (b) the multi-biometric system achieves better results than the unimodal one.

References

Choi, J.Y.: Spatial pyramid face feature representation and weighted dissimilarity matching for improved face recognition. Vis. Comput. 34, 1535–1549 (2018)

Yongjie, Ch., Lindu, Z., Touqeer, A.: Multiple feature subspaces analysis for single sample per person face recognition. Vis. Comput. 35(2), 239–256 (2019)

Li, K., Yi, J., Muhammad, W., Ruize, H., Jiongwei, Ch.: Facial expression recognition with convolutional neural networks via a new face cropping and rotation strategy. Vis. Comput. (2019). https://doi.org/10.1007/s00371-019-01627-4

Cheng, Y., Jiao, L., Cao, X., Li, Z.: Illumination-insensitive features for face recognition. Vis. Comput. 33, 1483–1493 (2017)

Yueming, W., Gang, P., Jianzhuang, L.: A deformation model to reduce the effect of expressions in 3D face recognition. Vis. Comput. 27(5), 333–345 (2011)

Yujie, L., Deng, Y., Xiaoming, Ch., Zongmin, L., Jianping, F.: TOP-SIFT: the selected SIFT descriptor based on dictionary learning. Vis. Comput. 35, 667–677 (2019)

Leila, K., Mehrez, A., Ali, D.: Image classification by combining local and global features. Vis. Comput. 35(5), 679–693 (2019)

Baozhen, L., Hang, W., Weihua, S., Wenchang, Z., Jinggong, S.: Rotation-invariant object detection using Sector-ring HOG and boosted random ferns. Vis. Comput. 34(5), 707–719 (2018)

Fengping, A., Zhiwen, L.: Facial expression recognition algorithm based on parameter adaptive initialization of CNN and LSTM. Vis. Comput. (2019). https://doi.org/10.1007/s00371-019-01635-4

Jianfeng, Z., Xia, M., Jian, Z.: Learning deep facial expression features from image and optical flow sequences using 3D CNN. Vis. Comput. 34(10), 1461–1475 (2018)

He, K., Zhang, X., Ren, S., et al.: Deep residual learning for image recognition. In: Proceedings of Computer Vision and Pattern Recognition (CVPR), pp. 770–778 (2016)

Gaurav, T., Kuldeep, S., Dinesh, V.: Convolutional neural networks for crowd behaviour analysis: a survey. Vis. Comput. 35(5), 753–776 (2019)

Schroff, F., Kalenichenko, D., Philbin, J.: Facenet: A unified embedding for face recognition and clustering. In: CVPR, pp. 815–823 (2015)

Heekyung, Y., Kyungha, M.: Classification of basic artistic media based on a deep convolutional approach. Vis. Comput. (2019). https://doi.org/10.1007/s00371-019-01627-4

Wu, X., He, R., Sun, Z., Tan, T.: A light CNN for deep face representation with noisy labels. IEEE Trans. Inf. Forensics Secur. 13(11), 2884–2896 (2018)

Deng, J., Guo, J., Zafeiriou, S.: Arcface: additive angular margin loss for deep face recognition. arXiv preprint arXiv:1801.07698 (2018)

Liu, Y., Li, H., Wang, X.: Rethinking feature discrimination and polymerization for large-scale recognition. arXiv preprint arXiv:1710.00870 (2017)

Zheng, Y., Pal, D., Savvides, M.: Ring loss: convex feature normalization for face recognition. In: CVPR (2018)

Lobache, O.: Direct visualization of cryptographic keys for enhanced security. Vis. Comput. 34, 1749–1759 (2018)

Ratha, N., Chikkerur, S., Connell, J., Bolle, R.: Generating cancelable fingerprint templates. IEEE Trans. Pattern Anal. Mach. Intell. 29, 561–572 (2007)

Teoh, A., Goh, A., Ngo, D.: Random multispace quantization as an analytic mechanism for biohashing of biometric and random identity inputs. IEEE Trans. Pattern Anal. Mach. Intell. 28, 1892–1901 (2006)

Polash, P., Gavrilova, M., Klimenko, S.: Situation awareness of cancelable biometric system. Vis. Comput. 30, 1059–1067 (2014)

Klare, B., Klein, B., Jain, A.: Pushing the frontiers of unconstrained face detection and recognition: Iarpa janus benchmark a. In: Proceedings of the IEEE CVPR, pp. 1931–1939 (2015)

Je-Kang, P., Dong, K.: Unified convolutional neural network for direct facial keypoints detection. Vis. Comput. (2018). https://doi.org/10.1007/s00371-018-1561-3

Li, L., Jun, Z., Fei, J., Li, S.: An incremental face recognition system based on deep learning. In: Proceedings of the Fifteenth IAPR International Conference on Machine Vision Applications (MVA) Nagoya University, Nagoya, Japan, pp. 238–241 (2017)

Xujie, L., Hui, H., Hanli, Z., Yandan, W., Mingxiao, H.: Learning a convolutional neural network for propagation-based stereo image segmentation. Vis. Comput. (2018). https://doi.org/10.1007/s00371-018-1582-y

Xiaoxiao, L., Qingyang, X., Ning, W.: A survey on deep neural network-based image captioning. Vis. Comput. 35(3), 445–470 (2019)

Sun, Y., Wang, X., Tang, X.: Deeply learned face representations are sparse, selective, and robust. In: Proceedings of the IEEE CVPR, pp. 2892–2900 (2015)

Sun, Y., Wang, X., Tang, X.: Sparsifying neural network connections for face recognition. In: Proceedings of the IEEE CVPR (2016)

Taigman, Y., Yang, M., Ranzato, M., Wolf, L.: Deepface: closing the gap to human-level performance in face verification. In: Proceedings of the IEEE CVPR, pp. 1701–1708 (2014)

Hao, G., Baozhong, C.: How do deep convolutional features affect tracking performance: an experimental study. Vis. Comput. 34(12), 1701–1711 (2018)

Masi, I., Rawls, S., Natarajan, P.: Pose-aware face recognition in the wild. In: Proceedings of the IEEE CVPR, pp. 4838–4846 (2016)

Taigman, Y., Yang, M., Wolf, L.: Web-scale training for face identification. In: Proceedings of the IEEE CVPR, pp. 2746–2754 (2015)

Kurban, O., Yildirim, T., Bilgiç, A.: A multi-biometric recognition system based on deep features of face and gesture energy image. In: Proceedings of the IEEE International Conference on Innovations in Intelligent Systems and Applications (INISTA), pp. 361–364 (2017)

Li, L., Jun, Z., Fei, J., Li, S.: An incremental face recognition system based on deep learning. In: Proceedings of the Fifteenth IAPR International Conference on Machine Vision Applications (MVA), pp. 238–241 (2017)

Feng, Y.C., Yuen, P.C., Jain, A.K.: A hybrid approach for generating secure and discriminating face template. IEEE Trans. Inf. Forensics Secur. 5(1), 103–117 (2010)

Yang, W., Wang, S., Zheng, G., Chaudhry, J., Valli, C.: ECB4CI: an enhanced cancelable biometric system for securing critical infrastructures. J. Supercomput. 74, 4893–4909 (2018)

Paul, P.P., Gavrilova, M.: A novel cross folding algorithm for multimodal cancelable biometrics. Int. J. Softw. Sci. Comput. Intell. 4(3), 21–38 (2012)

Alder, A.: Sample images can be independently restored from face recognition templates. Electr. Comput. Eng. 2, 1163–1166 (2003)

Bedad, F., Adjoudj, R.: Multi-biometric template protection: an overview. In: Proceedings of International Conference in Artificial Intelligence in Renewable Energetic Systems, pp. 70–79 (2018)

Natgunanathan, I., Mehmood, A., Xiang, Y., Hua, G., Li, G., Bangay, S.: An overview of protection of privacy in multibiometrics. Multimed. Tools Appl. 77, 6753–6773 (2018)

Droandi, G., Barni, M., Lazzeretti, R., Pignata, T.: SEMBA: SEcure multi-biometric authentication. arXiv:1803.10758 (2018)

Teoh, A., Ngo, D., Goh, A.: Biohashing: two factor authentication featuring fingerprint data and tokenised random number. Pattern Recognit. 37(11), 2245–2255 (2004)

Patel, V.M., Ratha, N.K., Chellappa, R.: Cancelable biometrics: a review. IEEE Signal Process. Mag. 32(5), 54–65 (2015)

Marco, C., Oscar, D., Cayetano, G., Mario, H.: ENCARA2: real-time detection of multiple faces at different resolutions in video streams. J. Vis. Commun. Image Represent. 18(2), 130–140 (2007)

Yuan, Z., Liu, Y., Yue, J., Li, J., Yang, H.: CORAL: coarse-grained reconfigurable architecture for convolutional neural networks. In: Proceedings of the IEEE/ACM International Symposium on Low Power Electronics and Design (ISLPED), pp. 1–6 (2017)

Ioffe, S., Szegedy, Ch.: Batch normalization: accelerating deep network training by reducing internal covariate shift. arXiv: 1502.03167v3 [cs.LG], pp. 1–11 (2015)

Zhang, X., Ren, Sh., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016)

Liu, W., Jia, Y., Sermanet, P., Rabinovich, A.: Going deeper with convolutions. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1–9 (2015)

Xiaoliang, Z., Zijian, C.: Dual-modality spatiotemporal feature learning for spontaneous facial expression recognition in e-learning using hybrid deep neural network. Vis. Comput. (2019). https://doi.org/10.1007/s00371-019-01660-3

Dandan, L., Huagang, L., Zhenbo, Y., Yipu, Z.: Deep convolutional BiLSTM fusion network for facial expression recognition. Vis. Comput. (2019). https://doi.org/10.1007/s00371-019-01636-3

Hasnat, A., Bohné, J., Milgram, J., Gentric, S., Chen, L.: DeepVisage: making face recognition simple yet with powerful generalization skills. In: Proceedings of the CVPR, pp. 1–12 (2017)

https://www.nist.gov/itl/iad/image-group/color-feret-database

Huang, G., Ramesh, M., Berg, T., Learned-Miller, E.: Labeled faces in the wild: a database for studying face recognition in unconstrained environments. Technical Report 07-49, University of Massachusetts, Amherst (2007)

Beveridge, J., Phillips, J., Bolme, D., Draper, B., Givens, G., Lui, Y., Teli, M., Zhang, H., Scruggs, W., Bowyer, K.: The challenge of face recognition from digital point-and-shoot cameras. In: Proceedings of IEEE International Conference on Biometrics: Theory, Applications Systems, pp. 1–8 (2013)

Wu, X., He, R., Sun, Z., Tan, T.: A Light CNN for deep face representation with noisy labels. In: CVPR, pp. 1–13 (2017)

Liu, W., Wen, Y., Yu, Z., Li, M., Raj, B., Song, L.: SphereFace: deep hypersphere embedding for face recognition. In: CVPR (2017)

Liu, J., Deng, Y., Huang, C.: Targeting ultimate accuracy: face recognition via deep embedding. arXiv:1506.07310 (2015)

Sun, Y., Liang, D., Wang, X., Tang, X.: DeepID3: face recognition with very deep neural networks. arXiv:1502.00873 (2015)

Ding, C., Tao, D.: Trunk-branch ensemble convolutional neural networks for video-based face recognition. IEEE Trans. Pattern Anal. Mach. Intell. 40, 1002–1014 (2017)

Shu, X., Liyan, Z., Jinhui, T., Guo-Sen, X., Shuicheng, Y.: Computational face reader. In: MMM, Part I LNCS 9516, pp. 114–126 (2016)

Shu, X., Jinhui, T., Zechao, L., Hanjiang, L., Liyan, Z., Shuicheng, Y.: Personalized age progression with bi-level aging dictionary learning. IEEE Trans. Pattern Anal. Mach. Intell. (2017)

Shu, X., Jinhui, T., Hanjiang, L., Zhiheng, N., Shuicheng, Y.: Kinship-guided age progression. Pattern Recognit. 59, 156–167 (2016). https://doi.org/10.1016/j.patcog.2015.12.015

Lin, Y., Xun, L.: A Cancelable multi-biometric template generation algorithm based on bloom filter. In: International Conference on Algorithms and Architectures for Parallel Processing, pp. 547–559 (2018)

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki Declaration and its later amendments or comparable ethical standards.

Informed consent

Informed consent was obtained from all individual participants included in the study.

Cite this article

Abdellatef, E., Ismail, N.A., Abd Elrahman, S.E.S.E. et al. Cancelable multi-biometric recognition system based on deep learning. Vis Comput 36, 1097–1109 (2020). https://doi.org/10.1007/s00371-019-01715-5

DOI: https://doi.org/10.1007/s00371-019-01715-5