Abstract

Right-hand preference has been demonstrated for visually guided reaching and grasping. Grasping, however, requires the integration of both visual and haptic cues. To what extent does vision influence hand preference for grasping? Is there a hand preference for haptically guided grasping? Two experiments were designed to address these questions. In Experiment 1, individuals were tested in a reaching-to-grasp task with vision (sighted condition) and with hapsis (blindfolded condition). Participants were asked to put together 3D models using building blocks scattered on a tabletop. The models were simple, composed of ten blocks of three different shapes. Starting condition (Vision-First or Hapsis-First) was counterbalanced among participants. Right-hand preference was greater in visually guided grasping but only in the Vision-First group. Participants who initially built the models while blindfolded (Hapsis-First group) used their right hand significantly less for the visually guided portion of the task. To investigate whether grasping using hapsis modifies subsequent hand preference, participants received an additional haptic experience in a follow-up experiment. While blindfolded, participants manipulated the blocks in a container for 5 min prior to the task. This additional experience did not affect right-hand use on visually guided grasping but had a robust effect on haptically guided grasping. Together, the results demonstrate first that hand preference for grasping is influenced by both vision and hapsis, and second, they highlight how flexible this preference could be when modulated by hapsis.


Introduction

Many studies have investigated hand preference during visually guided grasping. Tasks that require the use of one hand to pick up an object (i.e., unimanual) have shown that participants will reach and grasp with the hand closest to the object (i.e., ipsilateral; e.g., Annett et al. 1979; Bryden et al. 1994). When the target is located at the midline, however, participants prefer their right hand to pick it up (Bryden et al. 2000; Gabbard and Rabb 2000). This right-hand preference for grasping has been observed when picking up different objects: geometric 3D shapes (Gabbard et al. 2003), cards (Bishop et al. 1996; Calvert and Bishop 1998; Carlier et al. 2006), toys (Bryden and Roy 2006; Sacrey et al. 2012), tools (Mamolo et al. 2004, 2005, 2006), or blocks (Gonzalez et al. 2007; Stone et al. 2013), and it has also been shown in studies that employ bimanual tasks (Fagard and Marks 2000; Stone et al. 2013). An example of a bimanual task is the block-building task, where participants are asked to reproduce an exact copy of a given model using the numerous blocks available from a tabletop. The interaction of the two hands is essential in order to complete the task. Using this task, a marked right-hand preference has been shown, even for contralateral grasps (Gonzalez et al. 2007; Stone et al. 2013). However, all these previous studies have documented hand preference for grasping when vision is available, that is when the movement is guided by vision. To what extent does vision contribute to this right-hand preference?

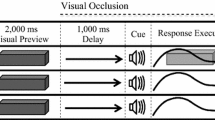

To our knowledge, no study has investigated hand preference for grasping without vision. Kinematic studies have shown differences in hand use when vision is occluded: Peak velocities are lower, maximum grip apertures are larger, overall movement time is longer (Connolly and Goodale 1999; Kritikos et al. 2002; Pettypiece et al. 2010; Schettino et al. 2003; Winges et al. 2003). Even when vision of one eye is occluded, individuals reach slower and grasp with less accuracy (Melmouth et al. 2009). These studies emphasize the critical role that vision plays during reaching and grasping movements. Perhaps, it should not be surprising that in the absence of vision, hand preference would also be affected. Studies investigating object recognition without vision (i.e., with hapsis) have shown a left-hand advantage during haptic discrimination (Benton et al. 1978; De Renzi et al. 1969; Fagot et al. 1993; Milner and Taylor 1972; Riege et al. 1980; Tomlinson et al. 2011). For example, Fagot et al. (1993) presented participants with a shape identification task and found that the left hand was better at identifying the correct shape. Similarly, Tomlinson et al. (2011) asked blindfolded participants to lift a dowel with a textured knob and to rate the “coarseness” of the knob on a 3-point scale. After an equivalent number of lifts with each hand, they found that participants were significantly more accurate with their left hand. These studies demonstrate the left-hand advantage during haptic recognition. With this information in mind, one might ask what hand would be used when trying to pick up an object in the dark (e.g., in the middle of the night). Would it be the hand that is used more often for grasping (i.e., right hand) or the one that is more proficient for haptic object recognition (i.e., left hand)? Two experiments were conducted to address these questions.

In both experiments, participants were tested on the block-building task (Gonzalez et al. 2007; Stone et al. 2013) and hand preference for grasping was documented. In Experiment 1, participants built models sighted and blindfolded (counterbalanced). In Experiment 2, participants received an additional haptic experience (i.e., blindfolded) by manipulating the blocks in a container before completing the task as in Experiment 1.

Methods and procedures

Experiment 1: grasping with and without vision

Participants

Thirty-eight self-reported right-handed individuals from the University of Lethbridge, between the ages of 18 and 35, participated. Nineteen (eight males) and nineteen (four males) were assigned to the Vision-First and the Hapsis-First groups, respectively. No gender differences were found previously in this task (Gonzalez and Goodale 2009), so no action was taken to balance the genders. The studies were approved by the local ethics committee, and all participants gave written informed consent before participating in the study. Participants were naïve to the purposes of the study.

Apparatus and stimuli

Handedness Questionnaire

A modified version of the Edinburgh (Oldfield 1971) and Waterloo (Brown et al. 2006) Handedness Questionnaires was given to all participants at the end of the block-building task (see Stone et al. 2013 for a full version of the questionnaire). This version of the questionnaire included questions on hand preference for 22 different tasks. Participants rated which hand they preferred for each task on a scale of +2 (right always), +1 (right usually), 0 (equal), −1 (left usually), or −2 (left always). Each response was scored accordingly (2, 1, 0, −1, or −2), and a total score was obtained by adding all values. Possible scores range from +44 for exclusive right-hand use to −44 for exclusive left-hand use.
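The scoring rule described above can be sketched in a few lines. This is only an illustration of the arithmetic (22 items, each rated from −2 to +2, summed); the function name `handedness_score` and the example ratings are hypothetical and do not come from the questionnaire itself.

```python
from typing import Sequence

N_ITEMS = 22                      # number of questionnaire items stated in the text
VALID_RATINGS = {-2, -1, 0, 1, 2}  # left always ... right always


def handedness_score(ratings: Sequence[int]) -> int:
    """Sum the per-item ratings into a total score between -44 and +44."""
    if len(ratings) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} ratings, got {len(ratings)}")
    for rating in ratings:
        if rating not in VALID_RATINGS:
            raise ValueError(f"invalid rating: {rating}")
    return sum(ratings)


# Hypothetical respondent: 15 items "right always", 5 "right usually", 2 "equal".
example = [2] * 15 + [1] * 5 + [0] * 2
print(handedness_score(example))    # 35
print(handedness_score([2] * 22))   # 44, exclusive right-hand use
print(handedness_score([-2] * 22))  # -44, exclusive left-hand use
```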

Block-building task

A total of five models built with MEGA BLOKS® were used for the experiment. The blocks ranged in size from 3.1 L × 3.1 W × 2.0 cm H to 6.3 L × 3.1 W × 2.0 cm H. Each model contained ten blocks of various colors and three types of shapes. The blocks that made up the models were scattered on a table with a working space of 122 L × 122 W × 74 cm H 70 L × 122 W × 74 cm H. A strip of clear tape was used to divide the workspace in half, and an equal number of pieces were distributed onto the left and right sides.

Procedures

Participants were comfortably seated centrally in front of a table and were instructed to replicate five different models. Participants started the task either sighted (Vision-First group) or wearing a blindfold (Hapsis-First group). In the Vision-First group, the 50 blocks that made up all five models were available on the tabletop. One model at a time, participants replicated two 10-piece models sighted followed by three 10-piece models blindfolded. In the Hapsis-First group, only 30 blocks were available on the tabletop. Participants were asked to replicate three models blindfolded. After the completion of the three models, the investigator returned all 50 blocks to the tabletop and allowed the participant to build two models while sighted. This procedure was followed to keep the number of blocks that participants would use to build each model consistent between the Vision-First and Hapsis-First groups. In both conditions, the 10-piece model to be replicated was placed on a building plate directly in front of the participant within arm’s reach. Participants were instructed to replicate the model as quickly and accurately as possible on a second building plate positioned in front of them (see Figs. 1, 2). No other information was given to the participants. Following the replication of the model, both models were removed and a different model to be replicated was provided. The same five models were used for all participants. Starting condition (Vision-First/Hapsis-First) was counterbalanced, and model presentation order was randomized between participants. The task was recorded on a JVC HD Everio video recorder approximately 160 cm away from the individual with a clear view of the tabletop, building blocks, and participants’ hands.

Fig. 1 a Photograph of a participant engaging in the building task in Experiment 1. Please note that the participant is blindfolded and cannot see her working space. b This graph demonstrates the average right-hand use in percentage for all participants in both the sighted and blindfolded conditions. Note the significant difference in right-hand use between these conditions. c This graph demonstrates right-hand use in percentage for starting condition: Vision-First and Hapsis-First. Both groups are divided into sighted (white bars) and blindfolded (black bars) conditions. Note the significant differences within the Vision-First group and between the sighted conditions.

Fig. 2 a Photograph of a participant from Experiment 2 manipulating the blocks in a container prior to the building task. b This graph demonstrates the average right-hand use in percentage for all participants in both the sighted and blindfolded conditions. Note the significant difference in right-hand use between these conditions. c This graph demonstrates right-hand use in percentage for starting condition: Vision-First and Hapsis-First. Both groups are divided into sighted (white bars) and blindfolded (black bars) conditions. Note the significant differences within both starting conditions and between the sighted conditions.

Data analysis

All recorded videos were analyzed offline. Each grasp was recorded as a left- or right-hand grasp in the participants’ ipsilateral or contralateral space. The total number of grasps was calculated to determine the percentage of right-hand use (number of right-hand grasps/total number of grasps × 100). The time it took participants to construct each model was recorded with a stopwatch and reported in seconds. Data were assessed, and no violations of homogeneity of variance or normality were found prior to analysis. Partial eta-squared values are reported as the effect size (ES).
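The two quantities named in this section can be written out as a short sketch. The first function is the percentage formula given above. The second uses the standard identity that recovers partial eta squared from an F ratio and its degrees of freedom, which is an assumption about how the reported ES values relate to the reported F values rather than a description of the authors' software. Function names and the grasp tally are hypothetical.

```python
def percent_right_hand_use(right_grasps: int, left_grasps: int) -> float:
    """Percentage of all grasps that were made with the right hand."""
    total = right_grasps + left_grasps
    if total == 0:
        raise ValueError("no grasps recorded")
    return 100.0 * right_grasps / total


def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared computed from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Hypothetical tally for one participant: 7 right-hand and 3 left-hand grasps.
print(percent_right_hand_use(7, 3))  # 70.0

# F(1, 36) = 6.4 is the main effect of visual availability reported for
# hand use in Experiment 1, where the stated ES is 0.151.
print(round(partial_eta_squared(6.4, 1, 36), 3))  # 0.151
```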

Results

Means and standard error are reported in percentage and seconds.

Handedness Questionnaire

All participants self-reported as right handers, and this was confirmed by the Handedness Questionnaire. Overall, the average score on the questionnaire was +30.5 (±1.0 SE; range +15 to +40) out of the maximum possible score of +44/−44. The Vision-First group scored an average of +31.0 (±1.6 SE; range +15 to +40), and the Hapsis-First group scored an average of +30.0 (±1.1 SE; range +18 to +37). The difference between the two groups was not significant (p = 0.6).

Hand use for grasping

Analysis using a 2 (visual availability) × 2 (starting condition) repeated-measures ANOVA was performed on the percentage of right-hand use for grasping during the task. Visual availability (sighted, blindfolded) was a within-subject factor and starting condition (Vision-First, Hapsis-First) a between-subject factor. Overall, we found a main effect of visual availability (F(1, 36) = 6.4; p = 0.01; ES = 0.151) as shown in Fig. 1b. Please note that during the blindfolded condition, participants could only use their sense of touch (hapsis) to construct the models. Participants used their right hand significantly more to grasp the blocks when they were sighted (66.3 ± 1.8 %) than when they were blindfolded (61.2 ± 2.1 %). There was no main effect of starting condition (F(1, 36) = 0.4; p > 0.1; ES = 0.01). However, the interaction between visual availability and starting condition was significant (F(1, 36) = 9.1; p = 0.005; ES = 0.203; see Fig. 1c). Follow-up analysis (pairwise t test) revealed that in the Vision-First group, right-hand use significantly decreased when participants were blindfolded (t(18) = 4.6; p < 0.001), yet this was not the case in the Hapsis-First group (p > 0.1). In addition, when comparing both sighted conditions, the right hand was used significantly less in the Hapsis-First group (t(36) = 2.3; p < 0.05; independent-samples t test). Figure 1c shows that even though in both groups participants were sighted, right-hand use was 70.5 ± 2.8 % in the Vision-First group but only 62.2 ± 2.8 % in the Hapsis-First group. In none of the conditions did hand use decrease to chance level [revealed by performing a pairwise t test on the values of right-hand use for grasping against 50; Vision-First: t(18) = 3.82; p = 0.001; Hapsis-First: t(18) = 3.84; p = 0.001]. In other words, regardless of the sensory domain being utilized, a right-hand preference for grasping remained.

Number of grasps: To investigate whether the number of grasps executed differed between the sighted and blindfolded conditions, we conducted a repeated-measures ANOVA with visual availability (sighted, blindfolded) as a within-subject factor and starting condition as a between-subject factor. Results showed a main effect of visual availability: Participants executed more grasps per model while blindfolded (13.6) than while sighted (10.4; F(1, 36) = 58.1; p < 0.0001; ES = 0.61). There was no main effect of starting condition (F(1, 36) = 0.007; p = 0.93) or significant interaction (F(1, 36) = 0.002; p = 0.96).
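The comparison of right-hand use against the chance value of 50 reported above can be illustrated with a one-sample t statistic. This is a minimal sketch under the assumption that the comparison amounts to testing each group's mean percentage against 50; the text does not specify the software or exact procedure. The function name and the five data values are hypothetical, chosen only to make the arithmetic easy to follow.

```python
import math
import statistics
from typing import Sequence, Tuple

CHANCE_LEVEL = 50.0  # percent right-hand use expected with no hand preference


def one_sample_t(values: Sequence[float],
                 reference: float = CHANCE_LEVEL) -> Tuple[float, int]:
    """Return the t statistic and degrees of freedom for mean(values) vs. reference."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    standard_error = statistics.stdev(values) / math.sqrt(n)
    if standard_error == 0:
        raise ValueError("all observations are identical")
    t_stat = (statistics.mean(values) - reference) / standard_error
    return t_stat, n - 1


# Hypothetical right-hand-use percentages for five participants (not study data).
percentages = [60.0, 70.0, 50.0, 65.0, 55.0]
t_stat, df = one_sample_t(percentages)
print(f"t({df}) = {t_stat:.2f}")  # t(4) = 2.83
```

With 19 participants per group, as in the study, the same calculation yields 18 degrees of freedom, matching the t(18) values reported in the text.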

Build times for models

Overall, participants were faster at building models while sighted than while blindfolded (F(1, 36) = 44.8; p < 0.001; ES = 0.886). During the sighted trials, it took participants in the Vision-First group on average 29.7 ± 2.0 s to complete one model, whereas it took participants in the Hapsis-First group on average 27.3 ± 1.4 s. These values were not significantly different from one another (p = 0.3). During the blindfolded trials, participants in the Vision-First group completed one model in 142.3 ± 9.5 s on average, whereas participants in the Hapsis-First group completed one model in 114.1 ± 9.2 s on average. There was a significant difference between these two values (t(36) = 2.1; p < 0.05; independent-samples t test).

Discussion

The results showed a decrease in right-hand use for haptically guided grasping. Participants used their right hand less often to grasp the blocks when blindfolded than when they were sighted. This change, however, was modulated by starting condition. Participants in the Vision-First group used their right hand 70.5 % of the time while sighted, but once they were blindfolded, right-hand use decreased significantly to 59.2 %. On the other hand, participants in the Hapsis-First group demonstrated similar hand preference regardless of visual condition (62.2 % while sighted; 63.2 % while blindfolded). One puzzling finding was the significant decrease (~8 %) in right-hand use in the Hapsis-First group when participants were sighted. In other words, even though participants were locating the pieces and guiding their movements using vision, they used their right hand less often if they had completed the hapsis portion of the task first. Could a brief haptic experience modulate subsequent hand preference for grasping? We tested this suggestion in Experiment 2.

Experiment 2: grasping with an added haptic experience

Participants

Thirty-eight self-reported right-handed individuals from the University of Lethbridge, between the ages of 18 and 35, participated. Nineteen (five males) and nineteen (six males) were assigned to the Vision-First and the Hapsis-First groups, respectively. These studies were approved by the local ethics committee, and all participants gave written informed consent before participating in the study. Participants were naïve to the purposes of the study.

Apparatus and stimuli

All the display material and equipment were the same as in Experiment 1, except that one model was added to the sighted condition. Thus, participants built three models sighted and three models blindfolded.

Procedures

All procedures were the same as Experiment 1 except that participants were blindfolded and asked to manipulate the MEGA BLOKS® in a container (42 L × 30 W × 30 cm H; see Fig. 2a) for 5 min prior to starting the block-building task.

Data analysis

Data analysis was the same as Experiment 1.

Results

Means and standard error are reported in percentage and seconds.

Handedness Questionnaire

The average score on the Handedness Questionnaire was +33.5 (±1.1 SE; range: +20 to +44) out of a total possible +44/−44. The Vision-First group scored an average of +32.5 (±1.5 SE; range: +20 to +42), and the Hapsis-First group scored an average of +34.5 (±1.7 SE; range: +23 to +44). The difference between the two groups was not significant (p = 0.3).

Hand use for grasping

Analysis using a 2 (visual availability) × 2 (starting condition) repeated-measures ANOVA was performed on the percentage of right-hand use for grasping during the task. Visual availability (sighted, blindfolded) was a within-subject factor and starting condition (Vision-First, Hapsis-First) a between-subject factor. Overall, we found a main effect of visual availability, as shown in Fig. 2b. Participants used their right hand significantly more to grasp the blocks while sighted (67.7 ± 2.3 %) than while blindfolded (52.6 ± 1.9 %). There was no main effect of starting condition (F(1, 36) = 1.4; p > 0.1; ES = 0.039) but once again, the interaction between visual availability and starting condition was significant (F(1, 36) = 7.3; p = 0.01; ES = 0.170). Follow-up analysis (independent-samples t test) showed that, as in Experiment 1, when comparing both sighted conditions, the right hand was used significantly less in the Hapsis-First condition (t(36) = 2.1; p < 0.05). In contrast to Experiment 1, however, the reduction in right-hand use when blindfolded was significant regardless of starting condition (t(18) = 5.7; p < 0.001; t(18) = 3.2; p < 0.01, for the Vision-First and Hapsis-First groups, respectively). The prior haptic experience of touching the blocks while blindfolded thus affected hand preference within its own sensory domain. In contrast to Experiment 1, hand use in Experiment 2 did decrease to chance level, but only for the Vision-First group. Once again, we performed a pairwise t test on the values of right-hand use for grasping against 50. For the Vision-First group, these values were not significantly different from 50 % (t(18) = 0.58; p = 0.56). However, for the Hapsis-First group, the values were significantly different from 50 % (t(18) = 2.6; p = 0.015). These results suggest that the brief haptic experience had a different effect on hand use with hapsis depending on the starting condition: if participants started with vision, right-hand use was reduced to chance.

Number of grasps: Again, to investigate whether the number of grasps executed differed between the sighted and blindfolded conditions, we conducted a repeated-measures ANOVA with visual availability (sighted, blindfolded) as a within-subject factor and starting condition as a between-subject factor. Results showed a main effect of visual availability: Participants executed more grasps per model while blindfolded (14.1) than while sighted (10.3; F(1, 36) = 87.1; p < 0.001; ES = 0.708). There was no main effect of starting condition (F(1, 36) = 0.211; p = 0.64) or significant interaction (F(1, 36) = 0.92; p = 0.34).

Build times for models

Overall, participants were faster at building models while sighted than while blindfolded (F(1, 36) = 68.0; p < 0.001; ES = 0.901). During the sighted trials, it took participants in the Vision-First group approximately 27.6 ± 1.2 s to complete one model, whereas it took participants in the Hapsis-First group approximately 26.2 ± 1.3 s. These values were not significantly different from one another (p = 0.4). During the blindfolded trials, participants in the Vision-First group completed one model in 139.6 ± 10.0 s on average, whereas participants in the Hapsis-First group completed one model in 141.4 ± 8.5 s on average. Again, these values were not significantly different (p = 0.8).

Comparison between Experiment 1 and Experiment 2

To gain a better understanding of how the added haptic experience affected hand use, we directly compared Experiments 1 and 2. Visual availability (sighted, blindfolded) was a within-subject factor, and starting condition (Vision-First, Hapsis-First) and experience (additional haptic experience, no additional haptic experience) were between-subject factors. The repeated-measures ANOVA showed a main effect of visual availability (F(1, 74) = 43.3; p < 0.001). Participants used their right hand significantly more when they were sighted (67.0 ± 1.4 %) than when they were blindfolded (56.9 ± 1.2 %). There was no main effect of starting condition or experience, but there was a significant interaction between visual availability and starting condition (F(1, 74) = 16.2; p < 0.001). While sighted, the Hapsis-First group used their right hand significantly less (62.5 ± 2.0 %) than the Vision-First group (71.5 ± 2.0 %). This interaction is illustrated in Fig. 3a. There was also a significant interaction between visual availability and experience (F(1, 74) = 10.4; p = 0.002). Follow-up analysis (independent-samples t test) revealed that this interaction was due to the significant reduction in right-hand use during the blindfolded condition when participants had additional haptic experience with the blocks (t(74) = −3.3; p < 0.05). If participants manipulated the blocks in the container before starting the building task (Experiment 2), they used their right hand 52.6 ± 1.7 % of the time (see Fig. 3b). Without this experience (Experiment 1), participants used their right hand 61.2 ± 1.7 % of the time. This was regardless of starting condition as the three-way interaction (visual availability, starting condition, and experience) was not significant (F(1, 74) = 0.001; p = 0.9).
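As a consistency check on the pooled means reported above, the sketch below combines the per-experiment means with a sample-size-weighted average. It assumes the pooled values are simple weighted means over the 38 participants in each experiment, which the text does not state explicitly. The function name `pooled_mean` is hypothetical.

```python
from typing import Sequence


def pooled_mean(means: Sequence[float], sizes: Sequence[int]) -> float:
    """Sample-size-weighted mean of several group means."""
    if len(means) != len(sizes) or not means:
        raise ValueError("means and sizes must be non-empty and of equal length")
    return sum(m * n for m, n in zip(means, sizes)) / sum(sizes)


N_PER_EXPERIMENT = 38  # participants in each of Experiments 1 and 2

# Right-hand use (%) reported per experiment: (Experiment 1, Experiment 2).
sighted = (66.3, 67.7)
blindfolded = (61.2, 52.6)

sizes = (N_PER_EXPERIMENT, N_PER_EXPERIMENT)
print(round(pooled_mean(sighted, sizes), 1))      # 67.0
print(round(pooled_mean(blindfolded, sizes), 1))  # 56.9
```

Both results agree with the sighted (67.0 %) and blindfolded (56.9 %) means reported for the combined analysis.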

Fig. 3 a This graph demonstrates right-hand use in percentage for Experiments 1 and 2 while sighted. White bars represent the Vision-First groups. Black bars represent the Hapsis-First groups. Note the significant reduction in right-hand use for the Hapsis-First group. b This graph demonstrates right-hand use in percentage for Experiments 1 and 2 while blindfolded. White bars represent the Vision-First groups. Black bars represent the Hapsis-First groups. Note the significant reduction in right-hand use in both groups during Experiment 2.

General discussion

The series of experiments assessed hand use for grasping with and without vision. Many studies have investigated the kinematics of grasping when vision is occluded (e.g., Connolly and Goodale 1999; Kritikos et al. 2002; Pettypiece et al. 2010; Schettino et al. 2003; Winges et al. 2003), but none, to our knowledge, have assessed whether hand preference is affected when vision is prevented. Participants were asked to replicate 3D block models from a tabletop containing numerous blocks, while sighted and while blindfolded. Hand use for grasping the blocks was documented. The results of Experiment 1 showed that when blindfolded, participants used their right hand significantly less than when they were sighted. Interestingly, starting condition played a significant role in the modulation of hand use. Participants that started the task with vision demonstrated a significant decrease in right-hand use when blindfolded. When compared with participants that started the task with vision, participants that started the task blindfolded displayed a significant decrease in right-hand use while sighted. Experiment 2 investigated the possibility that the haptic experience of touching the blocks without vision had influenced hand use during the sighted portion of the task. To this end, blindfolded participants manipulated the blocks in a container prior to the building task. This haptic experience did not affect right-hand use while sighted regardless of starting condition. The experience, however, significantly affected hand use while blindfolded: When compared to Experiment 1, participants used their right hand less often in both starting conditions. Together, these results demonstrate first that hand preference for grasping is influenced by vision, and second, they highlight how flexible this preference could be when modulated by hapsis.

The results demonstrated a decrease in right-hand preference for haptically guided grasping when compared with visually guided grasping. Numerous studies have shown a right-hand preference for grasping during uni- and bimanual tasks (Bishop et al. 1996; Bryden and Roy 2006; Calvert and Bishop 1998; Carlier et al. 2006; Gabbard et al. 2003; Mamolo et al. 2004, 2005, 2006; Sacrey et al. 2012). In the course of our investigations using the block-building task, we have shown the robustness of this preference in right handers and even in some left handers (Gonzalez et al. 2007; Gonzalez and Goodale 2009; Stone et al. 2013). The reduction in right-hand use when vision is unavailable shown in the current study is therefore noteworthy. We consider two possible explanations for this reduction. First, studies have shown a left-hand advantage for object discrimination when guided by hapsis (Benton et al. 1978; De Renzi et al. 1969; Fagot et al. 1993; Milner and Taylor 1972; Riege et al. 1980; Squeri et al. 2012; Tomlinson et al. 2011). Squeri et al. (2012), for example, found that during a passive discrimination haptic task, right handers performed better when they used their left hand. In another haptic discrimination task, Tomlinson et al. (2011) found that the left hand was more specialized for identifying the haptic-related properties of the object (e.g., texture). Based on these studies, it is possible to speculate that when grasping for an object that you cannot see, one would resort to the hand with greater discriminatory abilities (i.e., the left hand). In the current experiments, there was a significant decrease in right-hand use when participants were blindfolded (and an even greater decrease with prior haptic manipulation). It is possible that left-hand use for grasping increased because it was being recruited for the discrimination of the blocks.

A second factor that could have contributed to the reduction in right-hand use for haptically guided grasping is the difference in spatial demands between sighted and blindfolded conditions. Arguably, understanding the spatial characteristics of the environment would be more demanding without vision. In a review on spatial representations, Thinus-Blanc and Gaunet (1997) assert that perceiving the environment without vision takes significantly more cognitive resources than with vision. Millar and Al-Attar (2005) tested participants on a spatial task with and without vision. Participants were presented with a tactile map and asked to remember the location of six landmarks. They traced the map with their right index finger under both conditions. Participants were significantly more accurate when using vision. The authors suggested that spatial demands of the task had a greater effect on haptic condition. A fundamental aspect of the block-building task used in the current experiments is to recognize the spatial arrangement of the blocks on the tabletop and to construct a spatial map in which to guide their movements. This would be particularly relevant when participants are blindfolded. With these increased spatial demands, it is possible that both hands would be recruited more in order to gain a better understanding of space. Furthermore, it has been suggested that the right hemisphere, which controls movement of the left hand, is responsible for encoding the spatial aspects of the environment (Bartolomeo 2006; Serrien et al. 2006; Vallar 1997; Vogel et al. 2003). One could speculate that increased spatial demands would lead to an increase in left-hand use. Future experiments could investigate whether hand use changes as a function of spatial demands with and without vision.

The next finding, present in both experiments, was the interaction between starting condition and visual availability. That is, in the Vision-First group right-hand use decreased in the subsequent blindfolded part of the task. However, hand use in the Hapsis-First group was not significantly different between the vision and blindfolded condition. This was due to the 10 % reduction in right-hand use during the sighted portion of the task. This is intriguing as in both groups (Vision-First, Hapsis-First), participants were building the same models, performing the task with full vision, and the only difference was that one group had completed the blindfolded condition first. This finding suggests that haptically guided grasping has a powerful effect on subsequent hand use behavior. Other studies have also found interactions between visual and haptic modalities. For example, Wismeijer et al. (2012) found that information in the haptic domain is transferred more readily to the visual domain than vice versa. The authors suggest that learning between the senses depends on its direction (Wismeijer et al. 2012). It is possible that in our experiment, the increase in left-hand use during the haptic portion of the task influenced subsequent hand use in the visual domain. Our results align with Wismeijer and colleagues suggesting that learning can be unidirectional across sensory modalities. Other studies have also shown that information extracted in one sensory modality is later utilized by a different modality. Participants that had received experience identifying the properties of an object either by touch or vision were better at identifying the same property but in the other sensory domain (Förster 2011). This suggests that the initial focus of a task, whether it be in the visual or haptic domain, could be carried over into the next part of the task. 
Again, we could speculate that increased left-hand use during the haptic portion of the task carried over into the visual condition. The initial focus of the task also seemed to affect build times in the blindfolded condition. In Experiment 1, we found that participants were faster to complete the models if they had started the task with hapsis. That is, it took participants longer to build the models while blindfolded if they had built the models while sighted first. This suggests that perhaps the visual experience interfered with the subsequent performance of building the models with hapsis. Intriguingly, however, this was not found in the second experiment. Future studies investigating visual and haptic interactions that focus on performance time should examine the effects of starting condition on this variable.

Experiment 2 was designed to assess the possibility that touching the blocks blindfolded prior to the building task could lead to the increase in left-hand use seen during the visual condition in the Hapsis-First group. Contrary to our prediction, we did not find this to be the case. When comparing Experiments 1 and 2, participants did not further reduce their right-hand use in the visual condition, and hand use between the two experiments was, in fact, virtually identical (Fig. 3a). It is possible that right-hand preference for grasping when vision is available could not reach lower levels (i.e., “floor effect”) and that is why the additional haptic experience did not further decrease its use. The results suggest that other factors, besides the haptic feedback, modulate hand preference across the two sensory modalities. In other words, simply “feeling” the blocks while blindfolded was not sufficient to change hand use in a different sensory domain (vision). Transfer of information between sensory domains has been shown in some studies (Bratzke et al. 2012; Gottfried et al. 1977; Held 2009; Norman et al. 2008; Volcic et al. 2010), but not in others. For example, Newell et al. (2001) showed that participants perform worse when transferring information from one sensory modality to the other (i.e., vision to haptics) than within sensory modalities. In their experiment, participants had to visually or haptically assess a platform in which various wooden objects were placed. For the cross-modal condition, participants studied the platform in one sensory modality and were tested in the other sensory modality. Errors were significantly higher in the cross-modal condition. In our experiment, we did not find that additional experience in the haptic domain (i.e., feeling the blocks in the container) influenced hand preference in the vision domain. However, this additional experience did have a significant impact on hand preference within the haptic domain. 
That is, right-hand preference for participants in the Vision-First group was significantly reduced to the point that the right and the left hands were used equally (i.e., 51 % right-hand preference) to grasp the blocks while blindfolded. These results suggest the possibility that there is a transfer of information within the haptic domain. Evidence for transfer within sensory modalities has been shown before (Butler and James 2011; Easton et al. 1997; Hupp and Sloutsky 2011; Reales and Ballesteros 1999), but none of these studies documented a change in hand use or preference.

Regardless of starting condition and compared with Experiment 1, participants in Experiment 2 displayed an additional and substantial reduction in right-hand use when they were blindfolded. This amounts to a 10 and 11 % reduction in right-hand use in the Vision-First and the Hapsis-First groups, respectively. We speculate that the additional ~10 % decrease in the blindfolded conditions was due specifically to the haptic manipulation of the blocks in the container. The results of some studies have shown that experience within a sensory modality significantly influences subsequent behavior. Craddock and Lawson, for example, conducted an identification task where participants studied specific objects using either vision or hapsis. During the test period, they were asked to identify these objects using hapsis only. The group who had used hapsis to study the objects were significantly faster at identifying them. These results suggest that experience within one modality affects later performance in the same modality. In our study, the haptic experience of touching the blocks in the container altered hand use while blindfolded. Because participants were blindfolded and used both hands to explore the blocks in the container, it is possible that they adopted the same strategy when presented with the blindfolded condition. It is also likely that if there is a left-hand advantage for haptic discrimination (Benton et al. 1978; De Renzi et al. 1969; Fagot et al. 1993; Milner and Taylor 1972; Riege et al. 1980; Squeri et al. 2012; Tomlinson et al. 2011), prior exposure to the details of the blocks (in the container) prompted its use.

In conclusion, hand preference for grasping is modulated by vision and by hapsis. Haptically guided grasping remains lateralized but to a lesser extent than visually guided grasping, particularly if preceded by a brief haptic experience. Future research in special populations such as congenitally blind individuals and deafferented patients would bring insight into the sensorimotor control of hand actions and the preferences that follow.

References

Annett J, Annett M, Hudson PTW, Turner A (1979) The control of movement in the preferred and the non-preferred hands. Q J Exp Psychol 31:641–652

Bartolomeo P (2006) A parietofrontal network for spatial awareness in the right hemisphere of the human brain. Arch Neurol 63:1238–1241

Benton AL, Varney NR, de Hamsher KS (1978) Lateral differences in tactile directional perception. Neuropsychologia 16:109–114

Bishop DV, Ross VA, Daniels MS, Bright P (1996) The measurement of hand preference: a validation study comparing three groups of right-handers. Br J Psychol 87:269–285

Bratzke D, Seifried T, Ulrich R (2012) Perceptual learning in temporal discrimination: asymmetric cross-modal transfer from audition to vision. Exp Brain Res 221:205–210

Brown S, Roy E, Rohr L, Bryden P (2006) Using hand performance measures to predict handedness. Laterality 11:1–14

Bryden PJ, Roy EA (2006) Preferential reaching across regions of hemispace in adults and children. Dev Psychobiol 48:121–132

Bryden MP, Singh M, Steenhuis R, Clarkson KL (1994) A behavioral measure of hand preference as opposed to hand skill. Neuropsychologia 32:991–999

Bryden PJ, Pryde KM, Roy EA (2000) A performance measure of the degree of hand preference. Brain Cogn 44:402–414

Butler AJ, James KH (2011) Cross-modal versus within-modal recall: differences in behavioral and brain responses. Behav Brain Res 224:387–396

Calvert GA, Bishop DV (1998) Quantifying hand preference using a behavioural continuum. Laterality 3:255–268

Carlier M, Doyen A-L, Lamard C (2006) Midline crossing: developmental trend from 3 to 10 years of age in a preferential card reaching task. Brain Cogn 61:255–261

Connolly JD, Goodale MA (1999) The role of visual feedback of hand position in the control of manual prehension. Exp Brain Res 125:281–286

De Renzi E, Faglioni P, Scotti G (1969) Impairment of memory for position following brain damage. Cortex 5:274–284

Easton RD, Greene AJ, Srinivas K (1997) Transfer between vision and haptics: memory for 2-D patterns and 3-D objects. Psychon Bull Rev 4:403–410

Fagard J, Marks A (2000) Unimanual and bimanual tasks and the assessment of handedness in toddlers. Dev Sci 3:137–147

Fagot J, Hopkins WD, Vauclair J (1993) Hand movements and hemispheric specialization in dichhaptic explorations. Perception 22:847–853

Förster J (2011) Local and global cross-modal influences between vision and hearing, tasting, smelling, or touching. J Exp Psychol 140:364–389

Gabbard C, Rabb C (2000) What determines choice of limb for unimanual reaching movements? J Gen Psychol 127:178–184

Gabbard C, Tapia M, Helbig CR (2003) Task complexity and limb selection in reaching. Int J Neurosci 113:143–153

Gonzalez CL, Goodale MA (2009) Hand preference for precision grasping predicts language lateralization. Neuropsychologia 47:3182–3189

Gonzalez CL, Whitwell RL, Morrissey B, Ganel T, Goodale MA (2007) Left handedness does not extend to visually guided precision grasping. Exp Brain Res 182:275–279

Gottfried AW, Rose SA, Bridger WH (1977) Cross-modal transfer in human infants. Child Dev 48:118–123

Held R (2009) Visual-haptic mapping and the origin of cross-modal identity. Optom Vis Sci 86:595–598

Hupp JM, Sloutsky VM (2011) Learning to learn: from within-modality to cross-modality transfer in infancy. J Exp Child Psychol 110:408–421

Kritikos A, Beresford M, Castiello U (2002) Tactile interference in visually guided reach-to-grasp movements. Exp Brain Res 144:1–7

Mamolo CM, Roy EA, Bryden PJ, Rohr LE (2004) The effects of skill demands and object position on the distribution of preferred hand reaches. Brain Cogn 55:349–351

Mamolo CM, Roy EA, Bryden PJ, Rohr LE (2005) The performance of left-handed participants on a preferential reaching test. Brain Cogn 57:143–145

Mamolo CM, Roy EA, Rohr LE, Bryden PJ (2006) Reaching patterns across working space: the effects of handedness, task demands, and comfort levels. Laterality 11:465–492

Melmoth DR, Finlay AL, Morgan MJ, Grant S (2009) Grasping deficits and adaptations in adults with stereo vision losses. Invest Ophthalmol Vis Sci 50:3711–3720

Millar S, Al-Attar Z (2005) What aspects of vision facilitate haptic processing? Brain Cogn 59:258–268

Milner B, Taylor L (1972) Right-hemisphere superiority in tactile pattern-recognition after cerebral commissurotomy: evidence for nonverbal memory. Neuropsychologia 10:1–15

Newell FN, Ernst MO, Tjan BS, Bülthoff HH (2001) Viewpoint dependence in visual and haptic object recognition. Psychol Sci 12:37–42

Norman JF, Clayton AM, Norman HF, Crabtree CE (2008) Learning to perceive difference in solid shape through vision and touch. Perception 37:185–196

Oldfield RC (1971) The assessment and analysis of handedness: the Edinburgh inventory. Neuropsychologia 9:97–113

Pettypiece CE, Goodale MA, Culham JC (2010) Integration of haptic and visual cues in perception and action revealed through cross-modal conflict. Exp Brain Res 201:863–873

Reales JM, Ballesteros S (1999) Implicit and explicit memory for visual and haptic objects: cross-modal priming depends on structural descriptions. J Exp Psychol 25:644–663

Riege WH, Metter EJ, Williams MV (1980) Age and hemispheric asymmetry in nonverbal tactual memory. Neuropsychologia 18:707–710

Sacrey LR, Karl JM, Whishaw IQ (2012) Development of rotational movements, hand shaping, and accuracy in advance and withdrawal for the reach-to-eat movement in human infants aged 6 to 12 months. Infant Behav Dev 35:543–560

Schettino LF, Adamovich SV, Poizner H (2003) Effects of object shape and visual feedback on hand configuration during grasping. Exp Brain Res 151:158–166

Serrien DJ, Ivry RB, Swinnen SP (2006) Dynamics of hemispheric specialization and integration in the context of motor control. Nat Rev Neurosci 7:160–167

Squeri V, Sciutti A, Gori M, Masia L, Sandini G, Konczak J (2012) Two hands, one perception: how bimanual haptic information is combined by the brain. J Neurophysiol 107:544–550

Stone KD, Bryant DC, Gonzalez CLR (2013) Hand use for grasping in a bimanual task: evidence for different roles? Exp Brain Res 224:455–467

Thinus-Blanc C, Gaunet F (1997) Representation of space in blind persons: vision as a spatial sense? Psychol Bull 121:20–42

Tomlinson SP, Davis NJ, Morgan HM, Bracewell RM (2011) Hemispheric specialisation in haptic processing. Neuropsychologia 49:2703–2710

Vallar G (1997) Spatial frames of reference and somatosensory processing: a neuropsychological perspective. Philos Trans R Soc Lond B Biol Sci 352:1401–1409

Vogel JL, Bowers CA, Vogel DS (2003) Cerebral lateralization of spatial abilities: a meta-analysis. Brain Cogn 52:197–204

Volcic R, Wijntjes MW, Kool EC, Kappers AM (2010) Cross-modal visuo-haptic mental rotation: comparing objects between the senses. Exp Brain Res 203:621–627

Winges SA, Weber DJ, Santello M (2003) The role of vision on hand pre-shaping during reach to grasp. Exp Brain Res 152:489–498

Wismeijer DA, Gegenfurtner KR, Drewing K (2012) Learning from vision-to-touch is different than learning from touch-to-vision. Front Integr Neurosci 6:105

Cite this article

Stone, K.D., Gonzalez, C.L.R. Grasping with the eyes of your hands: Hapsis and vision modulate hand preference. Exp Brain Res 232, 385–393 (2014). https://doi.org/10.1007/s00221-013-3746-3

DOI: https://doi.org/10.1007/s00221-013-3746-3