Abstract

Transsaccadic integration (TSI) refers to the perceptual integration of visual information collected across separate gaze fixations. Current theories of TSI disagree on whether it relies solely on visual algorithms or also uses extra-retinal signals. We designed a task in which subjects had to rely on internal oculomotor signals to synthesize remembered stimulus features presented within separate fixations. Using a mouse-controlled pointer, subjects estimated the intersection point of two successively presented bars, in the dark, under two conditions: Saccade task (bars viewed in separate fixations) and Fixation task (bars viewed in one fixation). Small, but systematic biases were observed in both intersection tasks, including position-dependent vertical undershoots and order-dependent horizontal biases. However, the magnitude of these errors was statistically indistinguishable in the Saccade and Fixation tasks. Moreover, part of the errors in the Saccade task were dependent on saccade metrics, showing that egocentric oculomotor signals were used to fuse remembered location and orientation features across saccades. We hypothesize that these extra-retinal signals are normally used to reduce the computational load of calculating visual correspondence between fixations. We further hypothesize that TSI may be implemented within dynamically updated recurrent feedback loops that interconnect a common eye-centered map in occipital cortex with both the “dorsal” and “ventral” streams of visual analysis.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

Humans typically make about two to four saccades per second (Rayner 1978). Since visual input is largely suppressed during saccades (e.g. Matin 1974), inter-saccadic fixations provide the brain with only discrete visual “snapshots” of the world from spatially and temporally separated perspectives. Despite this, we perceive the visual world as stable and unified (MacKay 1973; Rayner and Pollatsek 1983; for review see Simons et al. 2003). But perhaps the more important question is how do we make use of the visual information gathered from these various fixations? Ideally, the visual system would retain and integrate visual features from separate fixations in order to build up a complete internal construct of the world—much like piecing together a jigsaw puzzle. The mechanism for this hypothetical process is called transsaccadic integration (TSI) (e.g. Irwin 1996).

The capacity and mechanism of TSI is currently unclear. Early theories assumed that the brain retains highly detailed visual information from one fixation and then integrates them with similar information from another fixation according to their spatial coordinates, that is, in a point-by-point manner (Helmholtz 1963; McConkie and Rayner 1976). However, many subsequent studies have questioned the memory capacity of TSI (Bridgeman and Mayer 1983; Irwin et al. 1983, 1988, 1990; McConkie and Zola 1979; O’Regan and Levy-Schoen 1983; Rayner and Pollatsek 1983). The current consensus seems to be that the brain cannot store highly detailed representations across saccades, but may retain certain key features, especially those that are attended and task-related (Irwin 1991, 1992; Pollatsek et al. 1984; Palmer and Ames 1992; Irwin and Andrews 1996; Henderson and Hollingworth 1999; Henderson and Siefert 1999; Germeys et al. 2002).

However, it is important to distinguish between transsaccadic memory and TSI. Transsaccadic memory of visual features is a necessary condition for TSI, but TSI further implies that these features are integrated to form some more complete representation. However, compared to transsaccadic memory, very little is known about the capacity and mechanisms of TSI (Verfaillie et al. 2001).

One possibility is that TSI is implemented through purely visual (i.e. retinally derived) mechanisms, whereby contents from separate fixations are spatially fitted together like a jigsaw puzzle (i.e. Haywood 1986; Zheng and Chellappa 1993). A recently popular variation of this scheme is the “saccade-target theory” (e.g. Irwin et al. 1994). In this theory, intended saccade targets are encoded and stored as an “object file,” and then compared to post-saccadic parafoveal information in order to align the stored and perceived visual information (Currie et al. 2000; Henderson and Hollingworth 1999; McConkie and Currie 1996). One possible way to implement this model might be to rely solely on visual input to derive the abstractions needed for the pre- and post-saccadic representations, disregarding attributes of the saccade itself.

However, in some cases retinal information may be insufficient to establish the overlap of successive visual fixations. In such cases, the visual system would have to rely on egocentric information about the eye rotation. For example, it is well known that primates (both humans and monkeys) can accurately aim saccades to remembered targets in complete darkness after an intervening eye movement has changed its retinal coordinates (Blohm et al. 2003; Hallett and Lightstone 1976; Mays and Sparks 1980). Furthermore, several areas of the primate visual system are capable of “remapping” the spatial representations of saccade and arm movement targets in an eye-centered frame during eye movements (Duhamel et al. 1992; Medendorp et al. 2003; Nakamura and Colby 2002; Umeno and Goldberg 1997; Walker et al. 1995). But these studies deal only with the most rudimentary form of TSI (updating point locations) in purely visuomotor saccade and pointing tasks.

To our knowledge, only two studies have directly demonstrated TSI in a task that might be called perceptual. Hayhoe et al. (1991) presented subjects with three dots—separately and in succession—either within a single fixation or by fixating each dot in turn. Subjects were required to judge whether these dots formed either an acute or obtuse triangle. As long as a constant reference point was present during each dot presentation, judgments for the saccade trials were as accurate as that of the no-saccade trials. Based on these findings one can conclude that under certain conditions the spatial positions of simple stimuli can be integrated across saccades to form more complex representations. However, this study did not measure eye movements and used a forced-choice measure, so it did not allow for a quantitative evaluation of the input–output relations between TSI and a motor behavior.

More recently, Melcher and Morrone (2003) showed that visual motion can be spatiotopically and retinotopically integrated across saccades. In their task, subjects indicated the direction of two briefly presented motion signals consisting of a subset of dots moving in one coherent direction among a larger group of random motion dots. These motion signals were either presented within a single fixation or separated by a saccade. Sensitivity to motion coherence was not affected by an intervening saccade so long as the pre- and post-saccadic motion signals shared the same retinal or spatial locations. But again, to our knowledge, the accuracy of TSI, with respect to integrating extra-retinal measures of saccade metrics with the “retinal” aspects of coding stimulus location and orientation, has never been measured.

In the present study, we introduce a new experimental task for examining TSI. Two oriented bars were successively presented, either within one ocular fixation (Fixation task) or within two different fixations separated by a saccade (Saccade task). Subjects were required to judge the spatial position where these bars would intersect if the bars had been presented simultaneously. Thus, to perform this task accurately, subjects had to integrate remembered information about both the orientation and spatial location of the pre- and the post-saccadic bars. Moreover, to perform this task accurately they had to account for the amount and direction of eye rotation during the saccade. Therefore, eye orientation was monitored during this task to quantify the degree to which subjects accounted for their saccades in the integration process.

Methods

Subjects

A total of seven subjects (four males and three females; mean age was 27.25) participated in this study. All subjects had normal or corrected-to-normal visual acuity. All procedures were approved by the York University Human Participates Review Sub-Committee. Informed consent was obtained from each subject. Two subjects were aware of the purpose of the experiments but their data followed the same trends as the naïve subjects.

Apparatus

A customized network system of three personal computers was used for on-line monitoring of eye position, stimulus presentation, and data recording. An LCD projector back-projected stimuli onto a 1.9 m×1.4 m display screen spanning 100° of visual angle horizontally by 90° of visual angle vertically. The image was filtered to remove background illumination. The screen background was thus unlit (appearing black) with a luminance level of 0.015 cd/m2. Moreover, due to the size of the screen and the location at which subjects sat in front of it, the edges of the screen were not potentially visible for subjects to use as an aid in judging the orientation or spatial position of the stimuli. Eye position was monitored using the scleral search coil technique (Robinson 1963) with a sampling rate of 1,000 Hz. At the beginning of each experiment subjects fixated a spatial grid of visual targets and a program was run for on-line calibration of the eye-coil signals (Niemeier et al. 2003). Saccades were detected using a velocity criterion of 36° per second and eye position criterion of 1.5° of visual angle from the fixation point. The subject’s head was stabilized using a bite-plate made from dental compound.

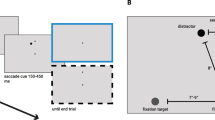

Design

Figure 1 illustrates the experimental design for this experiment. The staggered rectangles of each panel show the temporal order for presentation of ocular fixation targets and probes, and their relative spatial locations for each condition. This experiment consisted of three tasks: Control, Fixation, and Saccade (explained in more detail below). The tasks were presented in a block design with two blocks per task. Each block consisted of 60 trials for a total of 360 trials for the entire experimental session. The blocks were ordered as A–B–C–C–B–A, C–A–B–B–A–C, and A–C–B–B–C–A. These orders were counter-balanced between subjects. Each experimental session began with the calibration sequence and a block of 180 practice trials, consisting of trials from each task.

Stimuli

Figure 2 illustrates the fixation crosses and the 12 possible target positions where successively presented bars might either virtually intersect (in Saccade and Fixation tasks) or where a dot might appear (in Control task). Ocular fixation positions were indicated by a cross, subtending 0.4°×0.4°. The central fixation position (for the Control and Fixation tasks), was located directly in front of the subject’s right eye (0°, 0°). Fixation positions for the Saccade task were located 4° left and 4° right. The probe for the Control task was a white dot subtending 0.75° in diameter. Probes for both the Saccade and Fixation tasks were white bars that passed through either the left or right fixation position and extended across the entire length of the display with a width of 0.5° visual angle. These probes always tilted inward above center so that their intersection points fell in the region above the center fixation cross. Their orientations were varied to virtually intersect at one of the same 12 target positions used in the Control task. These targets were located in the grid described by 1°, 3°, 5°, and 7° upwards on the vertical plane, to the left −1° and −3° and to the right +1° and +3°. Many other possible configurations of stimuli could be possible in such a task with intersections to the left, right, or below a fixation point at any point in the oculomotor range. This particular configuration was chosen simply for the sake of simplicity and to give a range of intersection points that could be repeated a sufficient number of times during the duration of an eye-coil experiment for statistical analysis.

All probes were presented for 40 ms. This relatively short stimulus duration was used to minimize the possibility that eye movements or light adaptation would contaminate the data. The luminance level for the probes and mask were 27.2 and 32.3 cd/m2, respectively. The mask was a blank white screen covering the entire visual display (100°×90°) presented for 300 ms to eliminate visual persistence (see Kahneman 1968; Schiller 1965; Stewart and Purcell 1974). The mask was employed to eliminate iconic afterimages that might occur in a dark lab, but presumably would not come into play during saccades in normal day-light vision. Subjects reported that they were unable to consciously see the horizontal borders of the screen while fixating and attending to the task-related stimuli near the middle of our screen. Moreover, the mask was never presented at the same time as the probe stimuli. Therefore, it is unlikely that the edges of the mask provided a useful allocentric cue to help with the TSI task. However, to be certain that this did not influence our results, we repeated a control study on all of our subjects in which the second mask was missing (i.e. so that there was no common visual cue between the initial and final fixation points, but there was still a mask between the probes). The section “Results” reports data from the two-mask version (Fig. 1), unless declared otherwise.

Experimental paradigm. a Control task: subjects were required to maintain eye fixation on the fixation cross (plus mark) throughout the trial. A probe (filled circle) was briefly presented followed by a mask (filled rectangle). Subjects responded by moving a mouse-cursor (vertical and horizontal crossed dots) to estimate the location of the probe. Subjects’ eyes remained fixated on the fixation cross throughout the trial. b Fixation task: subjects were presented by two probes shown as the white bars (slanting line). Each probe was followed by a mask. Subjects viewed both probes while maintaining eye fixation on the fixation cross at head-center throughout the trial. Subjects moved the mouse-cursor to the location they estimated the probes would have intersected. c Saccade task: trials began with a fixation cross randomly presented either 4° left or 4° right of head-center. Subjects were required to maintain eye fixation with the fixation cross during the brief presentation of the first probe and mask. After the first probe and mask, subjects saccaded to the second fixation cross at the opposite side of the display. When subjects were fixated on the second fixation cross, the second probe and mask were presented. As in the Fixation task, subjects moved the mouse-cursor to the location they estimated to be the point the probes would have intersected

Procedure

As shown in Fig. 1a, Control trials began with fixation of the center fixation cross. One of the 12 control targets then flashed in random order. A mouse-cursor always appeared straight ahead (i.e. 0°, 0°). The fixation cross then disappeared but subjects were still required to maintain central eye fixation while manually moving the mouse-cursor to the remembered location of the target. This location was indicated by pressing the computer’s mouse button. Subjects were instructed to make their best guess if they were unsure. The Control task was included to provide a baseline measure for pointing accuracy at remembered target locations using the computer mouse.

During Fixation trials (Fig. 1b), subjects maintained ocular fixation on the center cross during probe and mask presentations. The first probe was presented randomly at either 4° left or 4° right from the fixation cross followed by a mask. After the mask and a brief delay (matched to the subject’s saccade latency from previous Saccade trials), the second probe was presented in the opposite location. Saccade trials (Fig. 1c) were similar to Fixation trials with the exception that the first fixation cross was presented randomly at either 4° left or 4° right from subjects’ straight ahead.

Once fixation was detected, the first probe was presented and immediately followed by the mask. The second fixation-cross was presented at the opposite spatial position as the first fixation cross. Subjects made a saccade to fixate on the second fixation cross. Upon re-fixation, the second probe was presented after a short delay (200 ms) at the same location as the second fixation cross. Each probe in the Saccade task intersected the fixation cross so that subjects fixated at a point on the first probe and then the second probe. At the end of Fixation and Saccade trials, subjects were required to judge where the two probes would have intersected, had they appeared simultaneously. As in the Control task, subjects responded manually by moving a mouse-cursor to the desired location and pressing the computer’s mouse button and they were instructed to make their best guess if they were unsure. The mouse-cursor was presented after the second mask at straight ahead. Again, no fixation cross was present as subjects made their response but subjects were still required to maintain eye fixation. Trials with errors in eye movements or eye position were removed from the data.

In an additional experiment, we varied the distance between fixation points in the Saccade task. This was done on four subjects (three of which had previously participated in the main experiment). In this Variable Saccade task, we used the same Saccade task but varied the horizontal distance between the pre- and post-saccadic fixation crosses to ±2°, 4°, and 6°, that is, to provide 4°, 8°, and 12° horizontal saccades, presented in random order. The inter-stimulus interval separating pre- and post-saccadic probes was held constant at 400 ms. As in the main Saccade task, the probe lines intersected the initial and final fixation crosses, so that foveal vision was constant across tasks, but note that this also meant that the angles between the two probes tended to be more acute or obtuse depending on the distance between fixation points.

Results

Basic observations

Subjects’ performance was determined by recording their mouse-pointing errors measured in degrees of visual angle. Here, pointing error is defined as the absolute distance from the true target position to the position indicated by the subject. For all statistical analyses we adopted a P-value of 0.05 for significance.

Figure 2 shows one typical subject’s pointing performance for one target location in each task. Each black dot is a possible target position and the “plus-signs” shown at the bottom of each panel represent the locations where the fixation crosses were presented before each probe was presented. In the Control task (Fig. 2a), the subject pointed to the target indicated by a briefly presented probe shown as the star. Pointing positions (shown as gray circles) clustered close to the target but tend to underestimate the target’s vertical location. Targets in the Fixation task (Fig. 2b) are indicated by the intersection point of the probes shown as gray bars. As in the Control task, pointing positions were clustered around the target with slight downward bias. Finally, the Saccade task is shown in Fig. 2c. If the subject correctly remembered the orientation of the first presented line, but stored this information in retinal coordinates, they would judge the intersection point to be equal to the second fixation point. As this figure shows, however, there was no tendency for this to happen. Instead, the subject pointed with the same accuracy as in the Fixation task.

One subject’s pointing positions for one target in each task. The target is indicated by a probe illuminated at the same location in the Control task (a) or where the two probes would have intersected in the Fixation (b) and Saccade task (c). The probes of the Fixation and Saccade tasks are shown as they would appear if they were presented simultaneously. Individual pointing positions are shown as gray open circles in each panel. Pointing performance for this target shows a similar trend for each task

Figure 3 shows a summary of averaging the pointing positions for each target in each task. The left column’s panels show the average pointing positions for one typical subject while the right column show the results of average pointing positions across all subjects. The open circles are the mean pointing position for a particular target (joined by dashed lines to the actual target). Standard deviations for vertical and horizontal pointing directions are indicated by the length of the lines superimposed on the open circles. In the left column, standard deviations are calculated from the subject’s raw pointing positions, whereas in the right column the standard deviations are calculated from each subject’s mean pointing positions.

Performance in the three tasks was similar with some small differences. Generally, pointing was most accurate in the Control task (Fig. 3a) and about the same between the Fixation task (Fig. 3b) and Saccade task (Fig. 3c). For each task, the subject tended to underestimate the height of the target position, and pointing accuracy decreased as a function of target height. Horizontal pointing errors, manifested primarily as a slight overall tendency to compress toward the center of the target display, were similar in the Fixation and Saccade tasks, but less evident in the Control task.

Average pointing positions for each target. Panels of the left column show the results from one typical subject. The mean pointing position is indicated by open circles for each target position (specified by the dashed lines). The horizontal and vertical standard deviations are shown by the length of the bars (vertical and horizontal crossed lines). Pointing was most accurate for the Control task (a) and about the same between the Fixation task (b) and Saccade task (c). Furthermore, both Fixation and Saccade tasks show some compression of mean pointing positions. For all conditions, mean vertical pointing error increased as a function of the target’s vertical position. These findings were found for the mean pointing positions across all subjects as shown by the panels of the right column

Table 1 provides a quantitative summary of the data across all subjects. Separate one-way analyses of variance (ANOVA) were performed for mean pointing error and mean standard deviation to compare the conditions. The ANOVA for mean pointing error was significant: F (2,18)=9.297; P=0.002. Planned comparisons between each task yielded no significant difference between the Fixation task and the Saccade task: t (6)=−0.648; P=0.541. Mean pointing error of the Control task were significantly less than both the Fixation and Saccade tasks: t (6)=2.504; P=0.0046 and t (6)=5.850; P=0.001, respectively.

The ANOVA for comparing the tasks’ mean standard deviation of pointing errors was significant: F (2,18)=4.284; P=0.03. No significant difference was found in a planned comparison between the Fixation task and the Saccade task: t (6)=1.215; P=0.27. However, significant differences were found when comparing the Control task with the Fixation task [t (6)=4.914; P=0.003] and the Saccade task [t (6)=2.394; P=0.009].

Effect of the mask

To account for the possibility that the second mask following the post-saccadic bar stimulus may provide allocentric information that may allow subjects to judge the length of their eye movements we conducted an additional control experiment that was identical to the basic study except we removed the second mask. Comparing data from all six subjects, we again found no significant difference between the Saccade and Fixation tasks in this control experiment: t (5)=−0.362; P=0.732. Furthermore, the results from the control experiment’s Saccade task was not significantly different from the Saccade task of our original study: t (5)=1.41; P=0.22. Similarly, no difference was found between the Fixation tasks: t (5)=1.19; P=0.35. These findings suggest that subjects’ performance was not influenced by the second mask and eliminate the possibility that our subjects required any sort of allocentric information to solve the task. Therefore, to avoid redundancy, henceforth we provide a more detailed analysis of the basic two-mask version of our task.

Component analysis of errors

In order to guide a more detailed comparison of the Saccade and Fixation tasks, we analyzed the errors in each task. Since the saccade was always horizontal and the mouse pointer movement was largely vertical, it is natural to break the errors down into these two components. But note that in an intersection task with obliquely tilted bars (used here to force subjects to encode orientation information), a systematic mistake in encoding one line’s location along one dimension could influence both dimensions of the perceived intersection point. Figure 4a, b shows two (purely hypothetical) ways in which this might influence subjects behavior, selected specifically because each predicts a pattern of errors that resembles some—but not all—aspects of the error patterns shown below. We will call this phenomenon the “oblique interaction effect.”

The fundamental prediction of these two models, or any related “oblique interaction effect” models, is that errors observed in one dimension (horizontal or vertical) of the intersection point should correlate with errors observed in the other dimension. However, we found no significant correlations between horizontal and vertical errors in any task (Fig. 4c, Table 2). This suggests that the variable errors observed in both tasks did not arise from the oblique effect described above, but rather from some “higher level” source. Henceforth we will quantify those errors in more detail, breaking them down into their horizontal and vertical components.

Two possible explanations of pointing bias. a The primacy model suggests that the second probe may be perceived as orthogonally shifted down the axis of the first probe (indicated by the gray dashed bar). Thus, the perceived target position would be shifted downward and in the direction of the first probe as shown by our data. b The retinal exaggeration model is based on the findings of previous studies on pointing at remembered peripheral targets. The first probe is shown as the dark bar and the second probe is shown as the gray bar. According to this model, subjects miss-localize a remembered target because of retinal eccentricity and the apparent target-location is exaggerated. Thus, in the example shown in the figure, the first probe is perceived as being more rightward (dark dashed bar) so that the perceived target is shifted in the same direction which is in agreement with our data. However, inconsistent with our data, this model would predict that subjects would perceive the target as being above its true position especially during the Fixation task. c Mean horizontal and vertical errors of each target for a typical subject in the Saccade task. Ordinate values indicate vertical errors: positive for upward errors and negative for downward errors. Abscissa values indicate horizontal errors: positive for rightward errors and negative for leftward errors. Mean errors of each target during left-probe-first trials are shown as the open circles. Mean errors of each target during right-probe-first trials are shown as the closed circles. Horizontal pointing errors were systematically shifted in the direction of the first presented probe in a trial. Vertical pointing errors tended to undershoot the target. No significant correlations between downward and horizontal errors were found to validate the “primacy” model in (a)

Figure 5 shows the pointing performance from one typical subject when we separate the overall pointing performance into its horizontal (left column) and vertical (right column) components. Average pointing positions are plotted as a function of actual target position. Again, the three tasks (Control, Fixation, and Saccade) showed similar patterns. In general, the scatter of pointing positions is larger in the Fixation task (Fig. 5b) and Saccade task (Fig. 5c) than in the Control task (Fig. 5a) in both pointing directions. In addition, subjects’ pointing was most accurate in the Control task particularly in the vertical direction (right column) which showed systematic undershooting. Vertical pointing errors increased as a function of the targets’ vertical position in all tasks (Tables 3, 4).

One typical subject’s horizontal and vertical pointing performance for each task. The panels on the left show horizontal pointing and the panels on the right show vertical pointing. The graphs plot the subject’s pointing positions with respect to the targets true position. Actual pointing positions are represented as gray dots and the mean pointing position for each target are shown as the black open circles. The dashed diagonal line indicates perfect pointing performance. Overall, horizontal pointing (left panels) was more accurate than vertical pointing (right panels). Mean vertical pointing errors increased as a function of the target’s vertical position. As shown in (a), pointing performance was most accurate in the Control task. The Fixation task (b) and the Saccade task (c) showed the same trends of pointing performance

To quantify this vertical “undershooting” in pointing, we separated the vertical pointing component from overall pointing performance and analyzed it independently. We performed a two-way ANOVA for repeated measures to find any differences among the tasks and target positions with respect to mean vertical pointing error. Significance was found for both main effects: task [F (2,12)=4.715; P=0.031] and target position [F (3,18)=10.447; P=0.000]. The interaction was not significant, F (6,36)=1.669; P=0.157. Planned comparisons among all three tasks were significant: Fixation vs. Saccade [t (27)=−2.354; P=0.026]; Control vs. Saccade [t (27)=2.377; P=0.025]; and, Control vs. Fixation [t (27)=3.82; P=0.001]. Four comparisons for target position were significant: 1° vs. 5° [t (20)=−2.255; P=0.035]; 1° vs. 7° [t (20)=−3.76; P=0.001]; 3° vs. 7° [t (20)=−2.875; P=0.009]; and, 5° vs. 7° [t (20)=−3.73; P=0.001]. These findings show that (1) the higher target positions elicited greater undershooting in pointing, and (2) vertical pointing was most accurate in the Control task, least accurate in the Fixation task.

Horizontal pointing was also systematically biased, that is, in the direction of the first probe presented. Figure 6 is similar to Figure 3, but now we show the results when the data is separated into trials that began with either the left-probe or the right-probe in both the Fixation (Fig. 6a) and the Saccade tasks (Fig. 6b). In general, pointing was slightly biased to the right of the target’s position when the right-probe was presented first in a trial. Likewise, pointing was biased more leftward of the target for trials that began with the left probe. Moreover, this effect was position-dependent: the rightward bias had the greatest effect on leftward targets, and vice versa.

Pointing bias in the direction of the first probe. The results of one typical subject are shown for the Fixation task (a) and Saccade task (b). Generally, pointing was biased to the left of the target for left-probe-first trials (left column). Likewise, right-probe-first trials led to biased rightward pointing (right column)

The observations were quantified in a similar way as in Fig. 5, but now separating the data into left-probe-first trials and right-probe-first trials (Fig. 7). Horizontal pointing is shown in the left column and vertical pointing is shown in the right column. The downward bias of pointing was found to be independent of which probe was first presented. As Fig. 7a shows, however, horizontal pointing in the Fixation task for left-probe-first trials was shifted in the leftward direction. Likewise, right-probe-first trials of the Fixation task elicited a rightward shift of pointing (Fig. 7b). The same trend is shown in the Saccade task for left-probe-first trials (Fig. 7c) and right-probe-first trials (Fig. 7d). But once again, we found no significant difference between the Fixation task (mean bias=0.90°) and the Saccade task (mean bias=0.72°): t (13)=−0.932; P=0.368.

Horizontal and vertical pointing for left-probe-first trials and right-probe-first trials. Like Fig. 5, the panels on the left show horizontal pointing and the panels on the right show vertical pointing. As shown earlier, mean vertical pointing errors increased as a function of the target’s vertical position and does not show to be influenced by the order of probe presentation. For left-probe-first trials of the Fixation task (a), pointing tended to be shifted to the left of the targets. These shifts were most evident with the middle two targets at positions −1° and +1°. The same trends were found in the Saccade task when we separate left-probe-first trials (c) and right-probe-first trials (d)

Variations in saccade size

How much did the pattern of these errors depend on the details of the stimulus configuration and saccade size? In a variation of our main Saccade task, we varied saccadic amplitude to see if pointing errors were influenced by the distance between the initial and final fixation points. In this Variable Saccade task, subjects made either 4°, 8°, or 12° saccades between line probes intersecting pre- and post-saccadic fixation crosses.

Figure 8 shows the average pointing positions across all subjects (n=4) for each saccade amplitude: 4° saccade condition (Fig. 8a), 8° saccade condition (Fig. 8b), and 12° saccade condition (Fig. 8c). Left-probe-first trials are shown in the left column and right-probe-first trials in the right column. In general, the patterns were similar to those observed above but there was also an effect of saccade size (or probe orientation, since this co-varied with fixation). Horizontal pointing errors were larger with larger saccades than those with smaller saccades (e.g. Fig. 8a vs. Fig. 8c). Separately for horizontal and vertical pointing, we calculated the mean correlation (Pearson’s r) of pointing errors for every pair of saccade amplitude (i.e. 4° vs. 8°, 8° vs. 12°, and 4° vs. 12°). Horizontal pointing errors yielded moderate mean correlations: r(4° vs. 8°)=0.46, r(8° vs. 12°)=0.48, and r(4° vs. 12°)=0.34. Mean correlations of vertical pointing errors were weaker: r(4° vs. 8°)=0.47, r(8° vs. 12°)=0.37, and r(4° vs. 12°)=0.16.

Mean pointing performance across subjects for a 4° horizontal saccades, b 8° saccades, and c 12° saccades. Pointing performance continued to be biased leftward for left-probe-first trials (left column) and rightward for right-probe-first trials (right column). Horizontal pointing errors increased slightly as a function of saccade amplitude (or distance between the probe lines), whereas vertical pointing yielded the same undershoot bias in each saccade condition

Within each subject, we performed pairwise t-tests to compare the pointing errors for each of these pairs. For all subjects, horizontal pointing errors in the 12° saccade condition were significantly larger than those in the 4° and 8° saccade conditions. The results from one typical subject, for example, were 8° vs. 12°: t (23)=−3.949, P=0.001; and 4° vs. 12°: t (23)=−4.53, P<0.00. Conversely, only half of our subjects showed significant differences in horizontal pointing errors between the 8° and 4° saccade conditions: AK t (23)=3.296, P=0.003; JP t (23)=4.168, P<0.00; RR t (23)=1.923, P=0.067; JG t (23)=0.427, P=0.427. Most of comparisons of vertical pointing errors did not differ significantly: for example, AK 8° vs. 12°: t (23)=−1.275, P=0.22, 4° vs. 8°: t (23)=0.048, P=0.96. These results suggest that the general pattern of the results remained constant for different saccade sizes, but a portion of the errors was influenced either by the size of the saccade or the distance and angle between the probes. In the following sections, we attempt to disambiguate the sources of these errors and focus on the contribution of the saccade.

Source of the errors

To summarize our main result so far, we have found minor task-dependent variations in subjects’ errors, but no significant differences between the amount of errors in the Fixation and Saccade tasks when the stimuli are otherwise held constant. Given this, a natural question to ask is whether the errors observed in these two tasks originated from the same source, or were due to two different (but approximately equal) sources of variability. In particular, was the main source of the error simply the intersection judgment, independent of the eye movement?

If a single mechanism were the cause the Fixation and Saccade task errors, we should expect to find significant correlations between these errors for similar targets. Table 5 shows each subject’s correlation using Pearson’s r and r 2 (how much variance of Saccade task errors can be accounted for by Fixation task errors). Correlations were calculated from each target’s right-probe-first and left-probe-first mean pointing errors of the Saccade task with those of the Fixation task. For horizontal pointing, the lowest correlation (i.e. r and r 2) was a moderate relationship (e.g. r=0.36) and highest was a strong relationship (e.g. r=0.76). Generally, correlations of vertical pointing were higher with the lowest correlation at r=0.52 and the highest at r=0.89. These data suggest that some of the source of error may have been shared between the two tasks, but some proportion of the errors in the Saccade task could not be accounted for by similar errors in the Fixation task.

Accounting for eye rotation

Finally, the latter section raises the possibility that subjects may have made some errors specific to judging the metrics of the eye rotation in the Saccade task. To test this directly, we plotted the subjects’ pointing errors as a function of predicted pointing errors if subjects remembered the probes’ orientation but completely failed to account for the saccade, that is, if they had simply used retinal inputs to calculate the intersection point. Figure 9a–g shows the actual horizontal errors vs. predicted horizontal errors for each subject in the Saccade task after we subtract out Fixation task errors from Saccade task errors. Predicted errors were calculated using the retinal position of the resulting intersection points of the lines relative to the final eye position (which varied slightly). Each circle corresponds to the mean horizontal pointing error from the Saccade task minus the mean horizontal pointing error from the Fixation task for the same target (thus, this figure only shows the errors that are specific to the Saccade task). Standard error bars are shown for both the actual and predicted pointing errors. Positive values represent rightward pointing errors whereas negative values signify leftward errors. Pointing errors averaged across all subjects are shown in Fig. 9 h.

Actual errors vs. errors predicted if subjects failed to account for saccadic eye movements after subtracting away average horizontal pointing errors from the Fixation task. a–g Individual subjects’ results of average horizontal pointing errors of every target’s horizontal position (i.e. −3°, −1°, +1°, +3°). Standard error bars are given for each target position. Rightward pointing errors correspond to positive values and leftward pointing errors given by negative values. h Average horizontal pointing errors for each horizontal target position across all subjects. i–j Average vertical pointing errors of every target’s vertical position (i.e. +1°, +3°, +5°, +7°) for one typical subject and average pointing across all subjects, respectively. Open circles represent right-probe-first trials as closed circles represent left-probe-first trials. Negative values correspond to downward pointing errors. The thick dashed lines in panels a, h, i, and j represent how the pointing errors would appear if subjects do not account for saccadic eye movements

The thick dashed lines in Fig. 9a, h show how the data should be plotted if subjects failed to account for eye movements according to the “retinal” model. As this figure illustrates, subjects’ pointing errors did not follow this prediction. However, as one can see, after subtracting out the baseline errors for this task there was virtually no systematic error related to the saccade, but rather, the data follow the predictions of a spatially correct model of TSI.

The “retinal” model also predicts systematic vertical errors, due to the oblique interaction effect described above. Figure 9i shows the actual errors vs. predicted of vertical pointing for one typical subject in the Saccade task. Each circle corresponds to the Saccade task errors minus the Fixation task errors from a single target. Right-probe-first trials (open circles) and left-probe-first trials (closed circles) were separated to minimize the variance in the data. Positive values represent upward pointing errors whereas negative values correspond to downward errors. Again, the thick dashed line shows the predicted data if subjects failed to account for eye movements. As shown in Fig. 9i, pointing errors did not follow this prediction in the subject shown. This is also evident in the average errors of vertical pointing across all subjects (Fig. 9j). Thus, it appears that our subjects used egocentric information to completely compensate for the eye movement in the Saccade task.

Discussion

The results of our study suggest that humans are able to spatially and temporally integrate visual orientation and location information across saccades at least as well as they do within a single fixation. Compared to our Control task, subjects made more errors in both the Fixation and Saccade line intersection tasks, presumably because they required subjects to retain and integrate more information—that is, both orientation and spatial location. However, our results show that mouse-pointing in the Saccade task was just as accurate as in the Fixation task.

These results are in accord with previous studies that show retention for orientation in transsaccadic memory (Henderson and Hollingworth 1999; Henderson and Siefert 1999; Landman et al. 2003; Verfaillie et al. 1994; Verfaillie and De Graef 2000). However, our results go beyond these studies to show how this information can be spatially integrated over time—true TSI. Our results are also generally consistent with those of Hayhoe et al. (1991), who were the first to demonstrate integration of simple dot stimuli across saccades to produce a higher level internal construct. But our results expand on these, not only because the stimuli and task were different, but also because our direct measures of eye orientation allowed for a quantitative measure of the incorporation of oculomotor signals into TSI.

Importantly, our Saccade task was designed so that it could only be correctly performed if subjects integrated extra-retinal signals about the direction and size of the eye movement into the process of TSI. Notably, this was also true in our single-mask control, in which subjects were never allowed to witness the same allocentric cue across the initial and final fixation points. The presence or absence of a second mask had no effect on our results. Therefore, since the visual probes were presented at successive fixations in an otherwise dark room with no retinal information about their relative alignment, correct judgment of their intersection point required an internal knowledge of the intervening eye movement. Although subjects showed some errors in this task, these errors failed to follow the predictions of a model that relies solely on input derived from retinal signals.

Indeed, once the baseline errors from the Fixation task were subtracted from the corresponding errors in the Saccade task, there was hardly any systematic error left to attribute to the saccade itself. The errors observed in the two tasks were not perfectly correlated—suggesting that an intervening saccade did (not surprisingly) have some effect on storage, retrieval, or integration of visual information. However, these errors were not systematic. Thus, it appears that the human visual system can use extra-retinal signals to account, nearly perfectly, for shifts in gaze between successive fixations and incorporate this into an overall transsaccadic percept.

Errors in the intersection task

Although the main point of our study was to compare the Fixation and Saccade tasks, some unexpected errors arose in both these versions of our line intersection task. These include two systematic biases that merit consideration. First, subjects tended to underestimate the target’s true vertical position. This undershooting increased as a function of the targets’ vertical positions. Second, pointing was biased in the direction of the first bar probe. Subjects tended to point to the left of the target in the left-probe-first trials and right of the target in right-probe-first trials. In the Variable Saccade task, these errors tended to increase with the distance between the initial and final fixation points, either because of the increase in saccade size, or perhaps more likely (given the rest of our analysis), simply because this changed the orientation and location of the stimulus probes. When we were able to make a direct comparison, the errors in the two tasks (Fixation and Saccade) were moderately correlated, and not significantly different, suggesting that much of the error in the Saccade task was due to the same perceptual elements of the Fixation task. Again, the detailed reasons for these errors are orthogonal to our main goal, but since this was such an obvious by-product of the task, we shall attempt to address them.

The vertical pointing biases are consistent with previous studies of finger-pointing to remembered peripheral targets after making an eye movement (Henriques et al. 1998; Henriques and Crawford 2000). Similar horizontal effects have also been observed in previous pointing studies (Bock 1986; Henriques and Crawford 2000). Such errors might be attributed to a bias toward initial hand position (Vindras et al. 1998). Other explanations for similar results include the idea of “cognitive gravity” (Hubbard 1995; Kerzel 2002) and (since our targets were located above the fovea) a localization bias toward the fovea (Mateeff and Gourevich 1983; Kerzel 2002; Musseler et al. 1999; Sheth and Shimojo 2001; van der Heijden et al. 1999). However, other studies have reported a “retinal exaggeration” bias toward pointing away from the fovea, in both the vertical or horizontal directions (Bock 1986; Henriques et al. 1998; Henriques and Crawford 2000).

It is less clear why the order of stimulus presentation had such a strong effect on the judged horizontal location of the line intersection point. The “retinal exaggeration effect” (Bock 1986; Henriques et al. 1998; Henriques and Crawford 2000) predicts some aspects of this in the Saccade task (Fig. 4b), but fails to account for it in the Fixation task (because the distance of both probes would be exaggerated centrifugally). If subjects showed a greater downward bias in encoding the first probe, an “oblique interaction effect” predicts this observation fairly well (Fig. 4a), but we failed to find evidence for such an effect when we correlated vertical and horizontal pointing errors. Some of these questions might be resolved by varying the fixation points, hand position, and intersection points in our task, but this is more relevant to understanding the basic aspects of memory and synthesis of linear features, as opposed to TSI, which was our goal.

Finally, it is likely that some or all of these pointing biases were a product of an impoverished visual environment and lack of allocentric cues in our experiment (Lemay et al. 2004). This was necessary to probe the existence of egocentric extra-retinal signals, but clearly the exocentric cues present in a normal visual environment influence and improve performance (Barry et al. 1997; Lemay et al. 2004; Schoumans et al. 2000). This would likely be true for the basic aspects of our line intersection task in the Fixation condition and for TSI in the Saccade condition. For example, Hayhoe et al. (1991) found an improvement subjects’ performance in their TSI task simply by adding a single visual reference point. In the following section, we consider the relevance of our study for understanding TSI in more natural visual conditions.

Mechanisms for TSI: egocentric vs. exocentric cues

Based on our findings we conclude that subjects are able to retain and integrate orientation and location information across saccades. This is potentially important for normal perception and short-term memory, because the perception of large objects or complex scenes may require the integration of information across several gaze fixations. In as much as the synthesis of information about lines (edges, contours, etc.) is thought to be crucial for object perception (Hess and Field 1999; Kourtzi et al. 2003), TSI of such features—as demonstrated in the current study—would be critical in such situations.

The question again arises, how is this TSI implemented? The design of our experiment does not differentiate between “early visual,” “cognitive,” and “visuomotor” mechanisms; all of these come into play in our task. In particular, this task involves spatial vision, short-term memory, spatial updating across saccades, and the visuomotor transformations for pointing. Further, our task might be solved using a number of internal algorithms that could not be distinguished during the present experiment. However, distinguishing between all of the possible algorithms for TSI was not our goal. We instead focus on distinguishing between the possible sources of information for solving such tasks: retinal signals (which we will refer to as a visual mechanism) and extra-retinal signals based on egocentric measures of self-motion.

The first possibility is that TSI could be done using a purely visual matching algorithm. For example, some recent studies suggest that visual stability occurs by the visual system comparing the pre-saccadic representation of a saccade-target with its post-saccadic foveated image to realign the other contents of the visual scene (Currie et al. 2000; Henderson and Hollingworth 1999; Irwin et al. 1994; McConkie and Currie 1996). However, these theories do not account for our data, first, because our task required integration of information retained from beyond the foveal target zone. Second, because we observed TSI in a situation where subjects had no common visual cues to align the remembered stimuli. This result shows that TSI can rely on internal oculomotor signals to align the stimuli—presumably within some egocentric frame of reference.

Conversely, our data cannot prove that the egocentric mechanism used here is always used in natural situations where more visual information is available. It is possible that our subjects developed some short-term memory mechanism that is never used in real life. But it does seem unlikely that the brain would develop such a mechanism just in case of such an experiment. In general, it makes sense for an optimal visual system to retain some information across saccades, saving the time and energy required to reprocess this information (Henderson 1992; Salthouse et al. 1981). Even so, our data would not contradict the co-existence of other TSI mechanisms such as that proposed in the saccade-target theory.

So then, what role would egocentric signals play in normal vision when both egocentric and exocentric cues are available? An optimal visual system would use all available signals to do its job, including both retinal and extra-retinal signals (Schreiber et al. 2001; Niemeier et al. 2003). One possibility is that in normal behavior the TSI system uses an egocentric mechanism—like that demonstrated in our experiment—to reduce the degrees of freedom and workload of the visual search for corresponding pre- and post-saccadic visual stimuli (Deubel et al. 1996, 1998). This egocentric component of the TSI mechanism would continue to function in the sparse conditions of our experiment, but in normal life it could provide the rough guess that is then refined by visual matching mechanisms—such as the saccade-target theory.

Possible neural mechanisms

The question remains, what is the physiological substrate for these egocentric/exocentric mechanisms? Since so little is known about TSI, we may look to other systems for clues. Psychophysical studies suggest that visuomotor systems do this for simple goals by spatially updating target position in an eye-centered map (Henriques et al. 1998; Crawford et al. 2004). Physiological studies have shown evidence of such “remapping” in area V3 (Nakamura and Colby 2000), lateral intra-parietal cortex (Duhamel et al. 1992; Medendorp et al. 2003), frontal eye fields (Umeno and Goldberg 1997), and the superior colliculus’s intermediate layer (Walker et al. 1995). There is also evidence that a similar remapping mechanism could contribute to some aspects of perception (Colby et al. 1996; Pisella and Mattingley 2004).

It is not known if “dorsal stream” remapping signals contribute directly to TSI or if “ventral stream” perceptual structures develop a parallel updating mechanism, or if they use an entirely different mechanism. Since TSI requires the synthesis of both spatial “dorsal stream” and object recognition “ventral stream” processes, it poses a fundamental visual binding problem that is not present in spatial updating for saccades. In other words, based on our current understanding of the visual system (e.g. Milner and Goodale 1995; Mishkin et al. 1983), it does not seem likely that either the dorsal spatio-motor system or the ventral recognition system could solve this individually, or in isolation from each other. This again begs the question—where is the egocentric map that allows TSI to solve problems like that posed in our experiment in conjunction with more normal and complete perceptual demands?

One possibility is that recurrent oculomotor signals leading from the dorsal stream back to early visual areas (Moore and Armstrong 2003), and eye-centered updating (Nakamura and Colby 2000) provide the basis for a common, coordinated eye-centered map that contributes to both “dorsal stream” and “ventral stream” processes, like transsaccadic perceptual integration. In such a scheme, early visual areas like V2/V3 are not only the source of information for the “dorsal” and “ventral” streams—through feed-forward connections, but also the target, through recurrent connections. This would make those earlier visual areas the common visuospatial “sketch pad” wherein both “dorsal” and “ventral” type information could be compared in a common, eye-centered, updateable frame, and then shared back to both streams.

Such a scheme would not limit the system to eye-centered calculations. Other, more complex allocentric and “object-centered” elements of the task (e.g. Hayhoe et al. 2003) could be elaborated in the higher level visual association areas (Chen and Nakamura 1998; Dimattia and Kesner 1988). Moreover, it does not preclude the possibility that further calculations are implemented in “higher level” centers like frontal cortex, particularly in a task like that used here. However, a common, early eye-centered map working through recurrent feedback connections to both streams of vision does seem to provide a parsimonious neural substrate for the particular types of egocentric binding problems that commonly occur in TSI.

References

Barry SR, Bloomberg JJ, Huebner WP (1997) The effects of visual context on manual localization of remembered targets. Neuroreport 8:469–473

Blohm G, Missal M, Lefevre P (2003) Interaction between smooth anticipation and saccades during ocular orientation in darkness. J Neurophysiol 89(3):1423–1433

Bock O (1986) Contribution of retinal versus extraretinal signals towards visual localization in goal-directed movements. Exp Brain Res 64:476–482

Bridgeman B, Mayer M (1983) Failure to integrate visual information from successive fixations. Bull Psychon Soc 21:285–286

Chen LL, Nakamura K (1998) Head-centred representation and spatial memory in rat posterior parietal cortex. Psychobiology 26:119–127

Colby CL, Duhamel JR, Goldberg ME (1996) Visual, presaccadic and cognitive activation of single neurons in monkey lateral intraparietal area. J Neurophysiol 76(5):2841–2852

Crawford JD, Medendorp WP, Marotta JJ (2004) Spatial transformations for eye–hand coordination. J Neurophysiol 92:10–19

Currie CB, McConkie GW, Carlson-Radvansky LA, Irwin DE (2000) The role of the saccade target object in the perception of a visually stable world. Percept Psychophys 62:673–683

Deubel H, Schneider WX, Bridgeman B (1996) Postsaccadic target blanking prevents saccadic suppression of image displacement. Vision Res 36:985–996

Deubel H, Bridgeman B, Schneider WX (1998) Immediate post-saccadic information mediates space constancy. Vision Res 38:3147–3159

DiMattia BV, Kesner RP (1988) Spatial cognitive maps: differential role of parietal cortex and hippocampal formation. Behav Neurosci 102(4):471–480

Duhamel J, Colby CL, Goldberg ME (1992) The updating of the representation of visual space in parietal cortex by intended eye movements. Science 255:90–92

Germeys F, De Graef P, Verfaillie K (2002) Transsaccadic perception of saccade target and flanker objects. J Exp Psychol Hum Percept Perform 28:868–883

Hallett PE, Lightstone AD (1976) Saccadic eye movements towards stimuli triggered by prior saccades. Vision Res 16:99–106

Hayhoe M, Lachter J, Feldman J (1991) Integration of form across saccadic eye movements. Perception 20:393–402

Hayhoe MM, Shrivastava A, Mruczek R, Pelz JB (2003) Visual memory and motor planning in a natural task. J Vis 3(1):49–63

Haywood R (1986) Acquisition of a micro scale photographic survey using an autonomous submersible. IEEE Oceans 5:1423–1426

von Helmholtz H (1963) Hanbuch der Physiologischen Optik [Handbook of physiological optics]. In: Southall JPC (ed. 2nd Trans.) Helmholtz’s treatise on physiological optics, vol 3. Dover, New York (Original published 1866; English translation originally published 1925), pp 247–270

Henderson JM (1992) Identifying objects across saccades: effects of extrafoveal preview and flanker object context. J Exp Psychol Learn Mem Cogn 18(3):521–530

Henderson JM, Hollingworth A (1999) The role of fixation position in detecting scene changes across saccades. Psychol Sci 10:438–443

Henderson JM, Siefert ABC (1999) The influence of enantiomorphic transformation on transsaccadic object integration. J Exp Psychol Hum Percept Perform 25:243–255

Henriques DYP, Crawford JD (2000) Direction-dependent distortions of retinocentric space in the visuomotor transformation for pointing. Exp Brain Res 132:179–194

Henriques DYP, Klier EM, Smith MA, Lowy D, Crawford JD (1998) Gaze-centered remapping of remembered visual space in an open-loop pointing task. J Neurosci 18(4):1583–1594

Hess R, Field D (1999) Integration of contours: new insights. Trends Cogn Sci 3:480–486

Hubbard TL (1995) Cognitive representation of motion: evidence for friction and gravity analogues. J Exp Psychol Learn Mem Cogn 21:241–254

Irwin DE (1991) Information integration across saccadic eye movements. Cogn Psychol 23:420–456

Irwin DE (1992) Memory for position and identity across eye movements. J Exp Psychol Learn Mem Cogn 18:307–317

Irwin DE (1996) Integrating information across saccadic eye movements. Curr Dir Psychol Sci 5:94–100

Irwin DE, Andrews R (1996) Integration and accumulation of information across saccadic eye movements. In: Inui T, McClelland JL (eds) Attention and performance XVI: information integration in perception and communication. MIT Press, Cambridge, pp 125–155

Irwin DE, Yantis S, Jonides J (1983) Evidence against visual integration across saccadic eye movements. Percept Psychophys 34:49–57

Irwin DE, Brown J, Sun J (1988) Visual masking and visual integration across saccadic eye movements. J Exp Psychol Gen 117:276–287

Irwin DE, Zacks J, Brown J (1990) Visual memory and the perception of a stable visual environment. Percept Psychophys 47:35–46

Irwin DE, McConkie GW, Carlson-Radvansky L, Currie C (1994) A localist evaluation solution for visual stability across saccades. Behav Brain Sci 17:265–266

Kahneman D (1968) Method, findings, and theory in studies of visual masking. Psychol Bull 70:404–425

Kerzel D (2002) The locus of “memory displacement” is at least partially perceptual: effects of velocity, expectation, friction, memory averaging, and weight. Percept Psychophys 64:680–692

Kourtzi Z, Tolias AS, Altman CF, Augath M, Logothetis NK (2003) Integration of local features into global shapes: monkey and human fMRI studies. Neuron 37:333–346

Landman R, Spekreijse H, Lamme VAF (2003) Large capacity storage of integrated objects before change blindness. Vision Res 43:149–164

Lemay M, Bertram CP, Stelmach GE (2004) Pointing to an allocentric and egocentric remembered target. Motor Control 8(1): 16–32

MacKay DM (1973) Visual stability and voluntary eye movements. In: Jung R (ed) Handbook of sensory physiology, vol 8. Springer, Berlin Heidelberg New York, pp 307–331

Mateeff J, Gourevich A (1983) Peripheral vision and perceived visual direction. Biol Cybern 49:111–118

Matin E (1974) Saccadic suppression: a review and an analysis. Psychol Bull 81:899–917

Mays LE, Sparks DL (1980) Saccades are spatially, not retinocentrically, coded. Science 208(4448):1163–1165

McConkie GW, Currie C (1996) Visual stability across saccades while viewing complex pictures. J Exp Psychol Hum Percept Perform 22:563–581

McConkie GW, Rayner K (1976) Identifying the span of the effective stimulus in reading: literature review and theories in reading. In: Singer H, Ruddell RB (eds) Theoretical models and processes of reading. International Reading Association, Newark, pp 137–162

McConkie GW, Zola D (1979) Is visual information integrated across successive fixations in reading? Percept Psychophys 25:21–224

Melcher D, Morrone MC (2003) Spatiotopic temporal integration of visual motion across saccadic eye movements. Nat Neurosci 8:877–881

Medendorp WP, Goltz H, Vilis T, Crawford JD (2003) Gaze-centered updating of visual space in human parietal cortex. J Neurosci 23:6209–6214

Milner AD, Goodale MA (1995) The visual brain in action. Oxford University Press, London

Mishkin M, Ungerleider LG, Macko KA (1983) Object vision and spatial vision: two cortical pathways. Trends Neurosci 6(10):414–417

Moore T, Armstrong KM (2003) Selective gating of visual signals by microstimulation of frontal cortex. Nature 421:370–373

Musseler J, van der Heijden AHC, Mahmud SH, Deubel H, Ertsey S (1999) Relative mislocalization of briefly presented stimuli in the retinal periphery. Percept Psychophys 61: 1646–1661

Nakamura K, Colby CL (2000) Visual, saccade-related, and cognitive activation of single neurons in monkey extrastriate area V3A. J Neurophysiol 84(2):677–692

Nakamura K, Colby CL (2002) Updating of the visual representation in monkey striate and extrastriate cortex during saccades. Proc Natl Acad Sci 99:4026–4031

Niemeier M, Crawford JD, Tweed DB (2003) Optimal transsaccadic integration explains distorted spatial perception. Nature 422(6927):76–80

O’Regan JK, Levy-Schoen A (1983) Integrating visual information from successive fixations: does transsaccadic fusion exist? Vision Res 23:765–768

Palmer J, Ames CT (1992) Measuring the effect of multiple eye fixations on memory for visual attributes. Percept Psychophys 52:295–306

Pisella L, Mattingley JB (2004) The contribution of spatial remapping impairments to unilateral visual neglect. Neurosci Biobehav Rev 28:181–200

Pollatsek A, Rayner K, Collins W (1984) Integrating pictorial information across eye movements. J Exp Psychol Gen 113:426–442

Rayner K (1978) Eye movements in reading and information processing. Psychol Bull 85:618–660

Rayner K, Pollatsek A (1983) Is visual information integrated across saccades? Percept Psychophys 34:39–48

Robinson DA (1963) A method of measuring eye movement using a sclera search coil in a magnetic field. IEEE Trans Biomed Eng 10:137–145

Salthouse TA, Ellis CL, Diener DC, Somberg BL (1981) Stimulus processing during eye fixations. J Exp Psychol Hum Percept Perform 7(3):611–623

Schiller PH (1965) Monotopic and dichoptic visual masking by patterns and flashes. J Exp Psychol 69:193–199

Schoumans N, Koenderink JJ, Kappers AML (2000) Change in perceived spatial directions due to context. Percept Psychophys 63(3) 532–539

Schreiber K, Crawford JD, Fetter M, Tweed D (2001) The motor side of depth vision. Nature 410(6830):819–822

Simons DJ, Mitroff SR, Franconeri SL (2003) Scene perception: what we can learn from visual integration and change detection. In: Rhodes G, Peterson MA (eds) Perception of faces, objects, and scenes: analytic and holistic processes. Oxford University Press, London, pp 335–351

Sheth BR, Shimojo S (2001) Compression of space in visual memory. Vision Res 41:329–341

Stewart AL, Purcell DG (1974) Visual backward masking by a flash of light: a study of U-shaped detection functions. J Exp Psychol 103:553–566

Umeno MM, Goldberg ME (1997) Spatial processing in the monkey frontal eye field I. Predictive visual responses. J Neurophysiol 78:1373–1383

van der Heijden AHC, van der Geest JN, de Leeuw F, Krikke K, Muessler J (1999) Sources of position-perception error for small isolated targets. Psychol Res 62(1):20–35

Verfaillie K, De Graef P (2000) Transsaccadic memory for position and orientation of saccade source and target. J Exp Psychol Hum Percept Perform 26:1243–1259

Verfaillie K, De Troy A, Van Rensbergen J (1994) Transsaccadic integration of biological motion. J Exp Psychol Learn Mem Cogn 20:649–670

Verfaillie K, De Graef P, Germeys F, Gysen V, Van Eccelpoel C (2001) Selective transsaccadic coding of object and event-diagnostic information. Psychol Belg 41(1–2):89–114

Vindras P, Desmurget M, Prablanc C, Viviani P (1998) Pointing errors reflect biases in the perception of the initial hand position. J Neurophysiol 79:3290–3294

Walker MF, Fitzgibbon EJ, Goldberg ME (1995) Neurons in the monkey superior colliculus predict the visual result of impending saccadic eye movements. J Neurophysiol 73:1988–2003

Zheng Q, Chellappa R (1993) A computational vision approach to image registration. IEEE Trans Image Process 2(3):311–325

Acknowledgments

The authors thank Saihong Sun and Dr. Hongying Wang for technical assistance. This work was supported by grants from the Natural Sciences and Engineering Research Council of Canada and the Canadian Institutes of Health Research. J.D. Crawford holds a Canada Research Chair.

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

Prime, S.L., Niemeier, M. & Crawford, J. Transsaccadic integration of visual features in a line intersection task. Exp Brain Res 169, 532–548 (2006). https://doi.org/10.1007/s00221-005-0164-1

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00221-005-0164-1