Abstract

Purpose

To assess the feasibility, validity, and reliability of a multi source feedback program for anesthesiologists.

Methods

Surveys with 11, 19, 29 and 29 items were developed for patients, coworkers, medical colleagues and self, respectively, using five-point scales with an ‘unable to assess’ category. The items addressed communication skills, professionalism, collegiality, continuing professional development and collaboration. Each anesthesiologist was assessed by eight medical colleagues, eight coworkers, and 30 patients. Feasibility was assessed by response rates for each instrument. Validity was assessed by rating profiles, the percentage of participants unable to assess the physician for each item, and exploratory factor analyses to determine which items grouped together into scales. Cronbach’s alpha and generalizability coefficient analyses assessed reliability.
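As an illustration of the internal consistency statistic named above, the following is a minimal sketch of Cronbach’s alpha for a respondents-by-items matrix of ratings. It is not the authors’ analysis code: the function name `cronbach_alpha` and the sample ratings are hypothetical, and the sketch assumes that ‘unable to assess’ responses have already been removed or imputed so that the matrix has no missing values.

```python
import numpy as np


def cronbach_alpha(scores):
    """Cronbach's alpha for a (respondents x items) matrix of ratings.

    Assumes a complete matrix: 'unable to assess' responses must be
    handled (dropped or imputed) before calling this function.
    """
    scores = np.asarray(scores, dtype=float)
    n_items = scores.shape[1]
    # Sample variance of each item across respondents.
    item_variances = scores.var(axis=0, ddof=1)
    # Sample variance of each respondent's summed score.
    total_variance = scores.sum(axis=1).var(ddof=1)
    return (n_items / (n_items - 1)) * (1 - item_variances.sum() / total_variance)


# Hypothetical data: 5 respondents rating 4 items on a five-point scale.
ratings = [
    [4, 5, 4, 5],
    [3, 4, 3, 4],
    [5, 5, 5, 5],
    [2, 3, 2, 3],
    [4, 4, 4, 4],
]
alpha = cronbach_alpha(ratings)
print(round(alpha, 3))
```

Values close to 1 indicate that the items vary together across respondents, which is the sense in which an instrument is described as internally consistent.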

Results

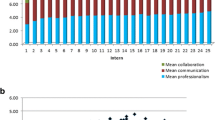

One hundred and eighty-six physicians participated. The mean number of respondents per physician (and percentage return rate) was 17.7 (56.2%) for patients, 7.8 (95.1%) for coworkers, and 7.8 (94.6%) for medical colleagues. The mean ratings ranged from four to five for each item on each scale. Relatively few items had high percentages of ‘unable to assess’ responses. The factor analyses revealed a two-factor solution for the patient survey, a two-factor solution for the coworker survey, and a three-factor solution for the medical colleague survey, accounting for at least 70% of the variance. All instruments had high internal consistency reliability (Cronbach’s α > 0.95). The generalizability coefficients were 0.65 for patients, 0.56 for coworkers and 0.69 for peers.

Conclusion

It is feasible to develop multi source feedback instruments for anesthesiologists that are valid and reliable.

Résumé

Objectif

Évaluer la faisabilité, la validité et la fiabilité d’un programme de rétroaction multisources pour les anesthésiologistes.

Méthode

Des sondages comportant 11, 19, 29 et 29 éléments ont été élaborés pour les patients, les collègues de travail, les collègues médecins et nous-mêmes respectivement, en utilisant des échelles en cinq points dont une catégorie «impossible d’évaluer». Les éléments concernaient les habiletés de communications, le professionnalisme, la collégialité, la formation professionnelle continue et la collaboration. Chaque anesthésiologiste était évalué par huit médecins, huit collègues de travail et 30 patients. La faisabilité a été évaluée par les taux de réponses pour chaque instrument. La validité a été évaluée par les profils de cotation, le pourcentage des participants incapables d’évaluer le médecin pour chaque élément et les analyses factorielles exploratrices pour déterminer quels étaient les éléments regroupables avec l’usage d’une échelle. La fiabilité a été évaluée par les analyses du coefficient alpha de Cronbach et de généralisabilité.

Résultats

On a compté 186 médecins participants. Le nombre moyen de répondants et de pourcentage de questionnaires retournés par médecin a été de 17,7 (56,2 %) pour les patients, 7,8 (95,1 %) pour les collègues de travail et 7,8 (94,6 %) pour les collègues médecins. Les scores moyens étaient de quatre ou cinq pour chaque élément de chaque échelle. Il y a eu relativement peu d’éléments avec de hauts pourcentages de réponses «impossible d’évaluer». Les analyses factorielles ont révélé une solution bifactorielle au sondage des patients, une bifactorielle à celui des collègues de travail et une trifactorielle à celui des collègues médicaux, ce qui constitue au moins 70 % de la variance. Tous les instruments avaient une fiabilité de forte cohérence interne (coefficient α de Cronbach > 0,95). Les coefficients de généralisabilité ont été de 0,65 pour les patients, 0,56 pour les collègues de travail et 0,69 pour les pairs.

Conclusion

Il est faisable d’élaborer des instruments valides et fiables de rétroaction multisources pour les anesthésiologistes.

Cite this article

Lockyer, J.M., Violato, C. & Fidler, H. A multi source feedback program for anesthesiologists. Can J Anesth 53, 33–39 (2006). https://doi.org/10.1007/BF03021525
