Abstract

Objective: To determine the degree and sources of variability in faculty evaluations of residents for the American Board of Internal Medicine (ABIM) Clinical Evaluation Exercise (CEX).

Design: Videotaped simulated CEX containing programmed resident strengths and weaknesses, shown to faculty evaluators, with responses elicited using the open-ended form recommended by the ABIM, followed by detailed questionnaires.

Setting: University hospital.

Participants: Thirty-two full-time faculty internists.

Intervention: After the open-ended form was completed and collected, faculty members rated the resident’s performance on a five-point scale and rated the importance of various aspects of the history and physical examination for the patient shown.

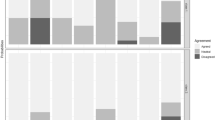

Measurements and Main Results: Very few of the resident’s strengths and weaknesses were mentioned on the open-ended form, although responses to specific questions revealed that faculty members actually had observed many errors and some strengths that they had failed to document. Faculty members also displayed wide variance in the global assessment of the resident: 50% rated him marginal, 25% failed him, and 25% rated him satisfactory. Only for performance areas not directly related to the patient’s problems could substantial variability be explained by disagreement on standards.

Conclusions: Faculty internists vary markedly in their observations of a resident and document little. To be useful for resident feedback and evaluation, exercises such as the CEX may need to use more specific and detailed forms to document strengths and weaknesses, and faculty evaluators probably need to be trained as observers.

Author information

Additional information

Received from the Fellowship Program in General Internal Medicine, Departments of Medicine, Walter Reed Army Medical Center, Washington, D.C., and the Uniformed Services University of the Health Sciences, Bethesda, Maryland.

Supported in part by the Department of Clinical Investigation, Walter Reed Army Medical Center.

The opinions or assertions contained herein are the private views of the authors and are not to be construed as official or as reflecting the views of the Department of the Army or the Department of Defense.

About this article

Cite this article

Herbers, J.E., Noel, G.L., Cooper, G.S. et al. How accurate are faculty evaluations of clinical competence? J Gen Intern Med 4, 202–208 (1989). https://doi.org/10.1007/BF02599524

DOI: https://doi.org/10.1007/BF02599524