Abstract

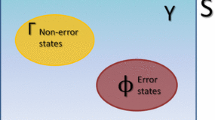

We have developed a reinforcement learning technique called Bayesian-discrimination-function-based reinforcement learning (BRL). BRL is unique in that it not only learns within predefined state and action spaces but also changes their segmentation at the same time. BRL has proven more effective than standard RL algorithms on multi-robot system (MRS) problems, where the learning environment is inherently dynamic. This paper introduces an extended form of BRL that improves its learning efficiency. When a robot encounters an unknown situation, the extended BRL does not generate a random action; instead, it computes an action by linear interpolation among the rules that are highly similar to the current sensory input. In both physical experiments and computer simulations, the extended BRL showed higher search efficiency than the standard BRL.
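The interpolation idea can be illustrated with a minimal Python sketch. It is not the authors' implementation: the abstract does not specify the similarity measure, the rule representation, or the selection threshold, so the Gaussian `similarity` function, the `(state_center, action)` rule format, the `threshold` value, and the random fallback when no rule is similar enough are all assumptions made for illustration.

```python
import math
import random


def similarity(x, center, width=1.0):
    """Gaussian similarity between a sensory input and a rule's state center.

    Assumption: the paper's actual similarity measure is not given in the abstract.
    """
    d2 = sum((a - b) ** 2 for a, b in zip(x, center))
    return math.exp(-d2 / (2.0 * width ** 2))


def select_action(x, rules, threshold=0.1, action_dim=2, rng=random):
    """Return an action for input x from a list of (state_center, action) rules.

    Rules whose similarity to x reaches `threshold` (a hypothetical parameter)
    are blended by similarity-weighted linear interpolation of their actions.
    If no rule qualifies, fall back to a uniformly random action.
    """
    scored = [(similarity(x, center), action) for center, action in rules]
    close = [(w, action) for w, action in scored if w >= threshold]
    if not close:
        # Assumed fallback: random exploration when nothing is similar.
        return [rng.uniform(-1.0, 1.0) for _ in range(action_dim)]
    total = sum(w for w, _ in close)
    dim = len(close[0][1])
    # Weighted average of the similar rules' actions, one component at a time.
    return [sum(w * action[i] for w, action in close) / total for i in range(dim)]


# Two hypothetical rules; the input lies exactly halfway between their centers.
rules = [([0.0, 0.0], [1.0, 0.0]), ([2.0, 0.0], [0.0, 1.0])]
print(select_action([1.0, 0.0], rules))  # [0.5, 0.5]
```

With the input equidistant from both rule centers, the two weights are equal and the result is the midpoint of the two stored actions; moving the input toward one center shifts the action toward that rule's action.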

Copyright information

© 2007 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Yasuda, T., Ohkura, K. (2007). Improving Search Efficiency in the Action Space of an Instance-Based Reinforcement Learning Technique for Multi-robot Systems. In: Almeida e Costa, F., Rocha, L.M., Costa, E., Harvey, I., Coutinho, A. (eds) Advances in Artificial Life. ECAL 2007. Lecture Notes in Computer Science, vol 4648. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-74913-4_33

DOI: https://doi.org/10.1007/978-3-540-74913-4_33

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-74912-7

Online ISBN: 978-3-540-74913-4

eBook Packages: Computer Science, Computer Science (R0)