Abstract

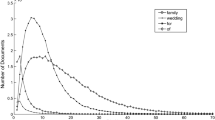

There is increasing interest in improving the robustness of IR systems, i.e., their effectiveness on difficult queries. A system is robust when it achieves both a high Mean Average Precision (MAP) over the entire set of topics and a significant MAP over its worst X topics (MAP(X)). It is well known that Query Expansion (QE) increases global MAP but hurts performance on the worst topics. Applying QE selectively is therefore a natural way to obtain a more robust retrieval system.
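The two quantities in this definition of robustness can be illustrated with a minimal Python sketch. It assumes that per-topic average precision values are already available and that the "worst X topics" are the X topics with the lowest average precision in the run being evaluated. The function names and the sample values are hypothetical and are not taken from the paper.

```python
from statistics import mean


def mean_average_precision(ap_by_topic):
    """Mean of the per-topic average precision values (MAP)."""
    return mean(ap_by_topic.values())


def map_worst(ap_by_topic, x):
    """MAP(X): mean average precision over the x lowest-scoring topics.

    Assumption: "worst" is judged by this run's own per-topic scores.
    """
    worst = sorted(ap_by_topic.values())[:x]
    return mean(worst)


# Hypothetical per-topic average precision values for a single run.
ap = {"t1": 0.50, "t2": 0.10, "t3": 0.30, "t4": 0.70}

overall = mean_average_precision(ap)  # mean over all four topics
worst_two = map_worst(ap, 2)          # mean over the topics scoring 0.10 and 0.30
```

A run can improve `overall` while lowering `worst_two`, which is the trade-off the abstract attributes to full QE.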

We define two information-theoretic functions, which are shown to be correlated with the average precision and with the increase in average precision under QE, respectively. The second measure is used to apply QE selectively. This method achieves performance similar to that of the unexpanded method on the worst topics, and better performance than full QE on the whole set of topics.
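The selection step can be sketched as a simple per-topic decision rule. The abstract does not give the form of the second measure or say how it is turned into a decision, so everything below is an assumption made for illustration: the predictor is a single number per topic, a higher value suggests that QE will help, and a fixed threshold separates the two cases. The names `selective_run`, `score`, and `threshold`, and all sample values, are hypothetical.

```python
def selective_run(topics, threshold):
    """Pick, per topic, the expanded or the unexpanded result.

    topics maps a topic id to (score, ap_unexpanded, ap_expanded).
    Assumption: QE is applied only when score > threshold.
    Returns a dict of topic id -> (choice, average precision).
    """
    chosen = {}
    for topic, (score, ap_plain, ap_expanded) in topics.items():
        if score > threshold:
            chosen[topic] = ("expanded", ap_expanded)
        else:
            chosen[topic] = ("unexpanded", ap_plain)
    return chosen


# Hypothetical predictor scores and average precision values.
topics = {
    "t1": (0.9, 0.40, 0.55),  # high score: QE applied
    "t2": (0.2, 0.10, 0.05),  # low score: QE skipped, avoiding a loss
    "t3": (0.6, 0.30, 0.35),  # high score: QE applied
}

result = selective_run(topics, threshold=0.5)
```

In this toy data the rule keeps the unexpanded result for the difficult topic `t2`, where expansion would have lowered average precision, and keeps the gains from expansion on the other two topics.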

Copyright information

© 2004 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Amati, G., Carpineto, C., Romano, G. (2004). Query Difficulty, Robustness, and Selective Application of Query Expansion. In: McDonald, S., Tait, J. (eds) Advances in Information Retrieval. ECIR 2004. Lecture Notes in Computer Science, vol 2997. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-24752-4_10

DOI: https://doi.org/10.1007/978-3-540-24752-4_10

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-21382-6

Online ISBN: 978-3-540-24752-4

eBook Packages: Springer Book Archive