Abstract

Corrosion circuit mark up in engineering drawings is one of the most crucial tasks performed by engineers. This process is currently done manually, which can result in errors and misinterpretations depending on the person assigned to the task. In this paper, we present a semi-automated framework which allows users to upload an undigitised Piping and Instrumentation Diagram, i.e. without any metadata, so that two key shapes, namely pipe specifications and connection points, can be localised using deep learning. Afterwards, a heuristic process is applied to obtain the text, orient it and read it with minimal error rates. Finally, a user interface allows the engineer to mark up the corrosion sections based on these findings. Experimental validation shows promising accuracy in locating the two shapes of interest and improved optical character recognition performance when reading the text of interest.

1 Introduction

Experienced corrosion and material engineers have the task of defining corrosion circuits within a system based on construction materials, operating conditions and active damage mechanisms [1]. This is part of a recommended practice developed by the American Petroleum Institute (API), which outlines the basic elements needed to maintain a credible risk-based inspection (RBI) programme. Once the circuits have been defined, the Condition Monitoring Locations (CMLs) and the Thickness Monitoring Locations (TMLs) are installed and documented on a type of engineering drawing known as a Piping and Instrumentation Diagram (P&ID). This process involves a manual mark up which is time-consuming and error-prone, as shown in Fig. 1.

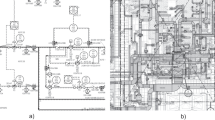

To define a corrosion circuit, engineers need to identify two key elements within the piping system on the P&IDs. The first one is the pipe specification (pipe spec), which is a character string formed by seven sections divided by hyphens. The second one is the connection point. This is a pair of text lines and arrows pointing towards a division line. In a specific P&ID, both the pipe spec and the connection point can be oriented in different directions (see Fig. 2a and 2b). The more complex a piping system is, the larger the amount of information displayed in the drawing. At first, our aim is to detect the text in both pipe specs and connection points, to then read it and identify the limits of the corrosion sections.

In this paper, we propose a framework to develop a semi-automatic novel tool that allows the user to mark up the corrosion circuits in P&IDs based on automatically locating pipe specs and the connection points. The paper is organised as follows: Sect. 2 presents the related work, Sect. 3 the methodology, Sect. 4 the experiments and results, and Sect. 5 concludes and presents future directions.

2 Related Work

Text detection is one of the cornerstones of document image analysis, as it can lead to the location and identification of the depicted shapes. Later on, it can also help with the mapping of the structural representation, as the labels usually contain relevant information such as the direction of flow, sectioning, and other useful data [2].

Many attempts to digitise P&IDs have been presented in the literature, mostly following two lines of work. The first one involves the use of heuristics to detect certain well-known shapes, such as geometrical symbols, arrows, connectors, tables and even text [3,4,5,6]. The second and most recent one relies on deep learning techniques in which the algorithms are trained to recognise shapes based on the collection and tagging of numerous samples [7,8,9,10]. Both approaches have advantages and disadvantages depending on the use case. The first family of methods is better suited when the characteristics of the P&ID follow a certain standard; however, if this is not the case, then the latter option can be used.

In the previous edition of this workshop, we presented a paper addressing the challenges and future directions of P&ID digitisation [11]. In that work, we addressed three main challenges: image quality, class imbalance and information contextualisation. While being able to digitise drawings with reduced quality is still a work in progress, in this new challenge we focus on the latter two. On the one hand, there is a need to locate symbols and text strings which do not appear often in these drawings. Therefore, we must resort to considering heuristic and automatic solutions to properly locate the text strings and symbols which depict pipe specs and connection points, respectively. On the other hand, by correctly identifying these pointers, we are able to allow the user to manually mark up the corrosion sections, bringing us one step closer to identifying the structures depicted within the engineering diagram [12].

3 Methodology

The digitisation workflow of our method comprises five steps:

I. Train two different deep learning models to detect pipe specs and connection points using the YOLOv5 framework and store the detection coordinates.

II. Create a binarised image for each detection and search for connected components based on pixel connectivity to locate the region of interest (ROI) containing the text.

III. Get the component statistics from the ROIs and apply a heuristic method to align the detections horizontally.

IV. Apply the Tesseract Optical Character Recognition (OCR) engine with a custom configuration.

V. Link the codes found in both pipe specs and connection points with their respective locations on the drawing.

3.1 Connection Points and Pipe Specs Detection

A pre-processing step was applied to the dataset to standardise the P&IDs and convert them into grayscale images to reduce noise. Subsequently, two different models were built to detect the pipe specs and connection points separately (see Fig. 3). The convolutional neural network selected was YOLOv5x with the default configuration. Each model was trained for 3000 epochs with a batch size of 8. The marker tool runs the trained models on PyTorch to generate a cropped image of every single detection and store its positional coordinates relative to the P&ID.
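The cropping and coordinate-storing step can be illustrated with a minimal sketch. It assumes the detector has already returned boxes as `(x1, y1, x2, y2, confidence)` tuples in pixel coordinates and that the image is a plain list of pixel rows; the function name `crop_detections` and the record layout are hypothetical, and the default threshold of 0.4 is the value reported in Sect. 4.1.

```python
from typing import Dict, List, Tuple

Box = Tuple[int, int, int, int, float]  # x1, y1, x2, y2, confidence


def crop_detections(image: List[List[int]],
                    boxes: List[Box],
                    conf_threshold: float = 0.4) -> List[Dict]:
    """Crop every sufficiently confident detection and keep its position.

    `image` is a grayscale image stored as a list of rows (an assumption
    made to keep the sketch dependency-free).
    """
    records = []
    for x1, y1, x2, y2, conf in boxes:
        if conf < conf_threshold:
            continue  # discard low-confidence detections
        crop = [row[x1:x2] for row in image[y1:y2]]
        records.append({
            "box": (x1, y1, x2, y2),  # coordinates relative to the P&ID
            "confidence": conf,
            "crop": crop,
        })
    return records
```

Keeping the box next to each crop is what later allows the recognised code to be mapped back onto the drawing.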

3.2 Text Block Localisation

The next step is to localise the text block regions. While the text in pipe specs tends to have a consistent shape, the text in the connection points can vary considerably in size and orientation. Depending on the amount of information shown on the P&ID, textual and non-textual elements can overlap and appear on the detection boxes. Thus, to extract the ROI, a noise removal technique was introduced. Firstly, a binarisation process is applied to all the cropped images to reduce noise. Secondly, a connected component labelling (CCL) technique is used to get the shape and size information of the elements in the image. Finally, the components that fulfil a heuristic threshold criterion are kept (see Figs. 4a and 4b for more details).
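As an illustration of the labelling and filtering idea, the following sketch labels 8-connected foreground components in a binarised crop and keeps those whose size looks text-like. The threshold values and the names `connected_components` and `keep_text_like` are assumptions for illustration only; the heuristic criterion actually used is not specified here.

```python
from collections import deque
from typing import Dict, List


def connected_components(binary: List[List[int]]) -> List[Dict[str, int]]:
    """Label 8-connected foreground pixels and return per-component stats."""
    height = len(binary)
    width = len(binary[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    components = []
    for y in range(height):
        for x in range(width):
            if not binary[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill from this unvisited foreground pixel.
            queue = deque([(y, x)])
            seen[y][x] = True
            xs, ys = [], []
            while queue:
                cy, cx = queue.popleft()
                ys.append(cy)
                xs.append(cx)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if (0 <= ny < height and 0 <= nx < width
                                and binary[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            components.append({
                "x": min(xs), "y": min(ys),
                "width": max(xs) - min(xs) + 1,
                "height": max(ys) - min(ys) + 1,
                "area": len(xs),
            })
    return components


def keep_text_like(components, min_area=2, max_width=4, max_height=4):
    """Keep components within hypothetical size limits (assumed values)."""
    return [c for c in components
            if c["area"] >= min_area
            and c["width"] <= max_width
            and c["height"] <= max_height]
```

In this sketch, isolated specks fail the minimum area and long pipeline strokes fail the maximum width or height, so only character-sized blobs remain to define the ROI.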

3.3 Text Alignment

One of the limitations of the Tesseract OCR engine is that it can only interpret the text when horizontally aligned. Although this engine has a built-in configuration to assess the orientation of the text in an image, the number of characters in the detections is not enough to implement this feature. Hence, an additional method is applied to adjust those detections that are misaligned.

This method consists of a two-step process. First, the marker tool identifies the nonaligned detections, and then it determines the direction to rotate them. Given the height and width of the cropped image, we can classify which detections are vertically oriented. While this is a general rule for pipe specs, it does not apply to connection points (see Fig. 2b). Thus, a conditional criterion is added.

Figure 5 shows the rotation conditions for connection points. For each ROI, the x-coordinates of the components are calculated and checked for collinearity. If their standard deviation falls below a heuristically determined threshold, the image is considered vertically oriented. Subsequently, if the ROI is on the left side of the image, a clockwise rotation is applied. Conversely, if it is located on the right side, the rotation is counter-clockwise.
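The two decisions described in this section can be sketched as follows. The threshold value, the use of component x-centres, the sign convention (positive for clockwise) and both function names are assumptions made for illustration; they are not taken from the marker tool itself.

```python
from statistics import pstdev
from typing import Sequence


def pipe_spec_is_vertical(width: int, height: int) -> bool:
    """General rule for pipe specs: a crop taller than wide is vertical."""
    return height > width


def connection_point_rotation(component_xs: Sequence[float],
                              roi_centre_x: float,
                              image_width: float,
                              std_threshold: float = 2.0) -> int:
    """Return the rotation in degrees: 90 clockwise, -90 counter-clockwise, 0 none.

    Characters stacked vertically share almost the same x-coordinate, so a
    small spread of x values signals a vertically oriented text block.
    `std_threshold` is a hypothetical value.
    """
    if len(component_xs) < 2:
        return 0  # not enough components to judge collinearity
    if pstdev(component_xs) >= std_threshold:
        return 0  # x values are spread out: text is already horizontal
    # ROI on the left half: rotate clockwise; on the right half: counter-clockwise.
    return 90 if roi_centre_x < image_width / 2 else -90
```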

3.4 Text Recognition

The text strings inside the detected areas contain the code which delimits the corrosion circuits; therefore, the next stage is to read them as accurately as possible. The ROI in the image can contain text in a single or a double line (see Fig. 2b). Connection points are composed of two text blocks with three characters each, whereas pipe specs contain a long code string. These aspects can affect recognition accuracy. Hence, different experiments were run on Tesseract OCR to find the custom configuration which delivered the highest accuracy. Given that the detections are significantly smaller than the original image, different filters were tested to remove the noise caused by this distortion. The results of these configurations are discussed in detail in Sect. 4.2.

3.5 Linkage

The last step is to link the codes extracted from the connection points and pipe specs with their respective positional coordinates relative to the P&ID. The pair of codes on each connection point is processed and stored as three-character strings (see Fig. 6). For pipe specs, since the code is right next to the fourth hyphen, it is extracted by iterating over the string of characters. Finally, both codes and coordinates are stored in a dictionary.
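A minimal sketch of this linkage step is given below. It assumes that the code of interest is the section immediately following the fourth hyphen of the pipe spec; the example string, the function names and the dictionary layout are all hypothetical.

```python
from typing import Dict, Iterable, List, Optional, Tuple

Box = Tuple[int, int, int, int]


def pipe_spec_code(pipe_spec: str) -> Optional[str]:
    """Collect the characters between the fourth and fifth hyphen."""
    hyphens = 0
    code = []
    for ch in pipe_spec:
        if ch == "-":
            hyphens += 1
            if hyphens > 4:
                break  # the section after the fourth hyphen has ended
            continue
        if hyphens == 4:
            code.append(ch)
    return "".join(code) if hyphens >= 4 else None


def link_codes(detections: Iterable[Tuple[str, Box]]) -> Dict[str, List[Box]]:
    """Map every code to the list of boxes where it was found."""
    index: Dict[str, List[Box]] = {}
    for code, box in detections:
        index.setdefault(code, []).append(box)
    return index


# Hypothetical seven-section pipe spec, used only to exercise the sketch.
example_code = pipe_spec_code("6-PL-1001-A1B-C3X-N-01")
```

Storing a list of boxes per code lets the interface highlight every occurrence of a selected code on the drawing.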

4 Experiments and Results

The private dataset consists of 85 P&IDs provided by our industrial partner Archimech Limited. A total of 75 images were labelled: 70% were used for training, 20% for validation and 10% for testing, with 1653 and 537 annotations for pipe specs and connection points, respectively. The remaining 10 images from our dataset were used to test the end-to-end performance of the marker tool.

4.1 Detection

Several experiments were run with different hyperparameters. The optimal results were attained by setting the input image size to 2048 × 1080 pixels, a batch size of 8, and 3000 epochs. The confidence threshold used to run the detection was 0.4 for both models. In the end, we achieved an accuracy of 96.23% for detecting pipe specs and 92.68% for connection points (see Table 1).

4.2 Text Recognition

The text recognition accuracy is 98.72% for pipe specs and 93.55% for connection points. Due to the variation in shape and the overlapping of text with graphics, it is more challenging to recognise the codes on the connection points. Different experiments were run in order to improve the text recognition accuracy. The optimal performance was achieved by applying a median blur filter to the detections and setting a custom page segmentation mode in the Tesseract OCR engine. Tables 2 and 3 show how this method improves the text recognition accuracy for connection points and pipe specs, respectively.
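To make the two ingredients concrete, here is a dependency-free sketch of a median blur and of a Tesseract configuration string. The kernel size, the chosen page segmentation modes (`--psm 7` for a single line, `--psm 6` for a uniform block) and the character whitelist are assumptions; the specific settings that gave the reported accuracy are not stated in this section.

```python
from typing import List


def median_blur(image: List[List[int]], ksize: int = 3) -> List[List[int]]:
    """Replace each pixel by the median of its ksize x ksize neighbourhood.

    Borders are handled by replicating the nearest edge pixel.
    """
    radius = ksize // 2
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(height):
        for x in range(width):
            window = [
                image[min(max(y + dy, 0), height - 1)][min(max(x + dx, 0), width - 1)]
                for dy in range(-radius, radius + 1)
                for dx in range(-radius, radius + 1)
            ]
            out[y][x] = sorted(window)[len(window) // 2]
    return out


def tesseract_config(text_lines: int) -> str:
    """Build a hypothetical Tesseract config string for a cropped detection."""
    psm = 7 if text_lines == 1 else 6  # assumed choice of segmentation mode
    whitelist = "ABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789-"
    return f"--psm {psm} -c tessedit_char_whitelist={whitelist}"
```

In practice, a library routine such as OpenCV's `cv2.medianBlur` would likely replace the pure-Python filter, and the configuration string could be passed to the engine through a wrapper such as `pytesseract.image_to_string(image, config=...)`.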

4.3 Final Output

The marker tool allows the user to upload either a single P&ID or a set of multiple P&IDs at once. After running the application, a list of all the codes detected in the drawing is displayed. The user can then select the code to visualise on the drawing and the colour of the marker. Finally, the user can mark up the corrosion circuits and save the document as a jpg file. Figure 7 shows an example of a P&ID section with three different corrosion circuits marked with our novel tool. The company works with many stakeholders which have even more P&IDs to digitise.

5 Conclusion and Future Work

In this paper, we presented an end-to-end framework which allows Oil & Gas engineers to load undigitised P&IDs and locate the corrosion circuits with minimal intervention and error. We used two models trained with a state-of-the-art deep learning technique (i.e. YOLOv5) to find the two shapes of interest, namely the pipe specs and the connection points. Once these are located, additional post-processing steps have to be performed prior to presenting these symbols to the user. In the case of the connection points, the text needs to be found, oriented properly and read with total accuracy. This allows the system to identify which symbols of interest will be shown to the engineer so that the corrosion circuits can be marked up.

In future work, we would like to explore the scalability of our system so that it performs appropriately on P&IDs produced to other standards and of varying quality. Moreover, we aim to test more novel deep learning frameworks which allow us to increase the accuracy of the detection and OCR tasks by using techniques that work with limited character sets [13]. Finally, we aim to automate the last step so that the engineer is shown the corrosion circuits automatically.

References

1. Mohammed, M.: Corrosion looping for down stream petroleum plants: An Enigma for RBI Engineers A Perspective from the Review of Mechanical Integrity Systems. AMPP. Corrosion and Monitoring Control (2016)

2. Moreno-García, C.F., Elyan, E., Jayne, C.: New trends on digitisation of complex engineering drawings. Neural Comput. Appl. 31(6), 1695–1712 (2019)

3. Howie, C., Kunz, J., Binford, T., Chen, T., Law, K.H.: Computer interpretation of process and instrumentation drawings. Adv. Eng. Softw. 29, 563–570 (1998)

4. Arroyo, E., Hoernicke, M., Rodríguez, P., Fay, A.: Automatic derivation of qualitative plant simulation models from legacy piping and instrumentation diagrams. Comput. Chem. Eng. 92, 112–132 (2016)

5. Moreno-García, C.F., Elyan, E., Jayne, C.: Heuristics-based detection to improve text/graphics segmentation in complex engineering drawings. In: Engineering Applications of Neural Networks (EANN), pp. 87–98 (2017)

6. Sinha, A., Bayer, J., Bukhari, S.S.: Table localization and field value extraction in piping and instrumentation diagram images. In: Graphics Recognition Methods and Applications (GREC), pp. 26–31 (2019)

7. Mani, S., Haddad, M.A., Constantini, D., Douhard, W., Li, Q., Poirier, L.: Automatic digitization of engineering diagrams using deep learning and graph search. In: Computer Vision and Pattern Recognition (CVPR) (2020)

8. Yun, D.Y., Seo, S.K., Zahid, U.: Deep neural network for automatic image recognition of engineering diagrams. Appl. Sci. 10, 1–16 (2020)

9. Sierla, S., Azangoo, M., Fay, A., Vyatkin, V., Papakonstantinou, N.: Integrating 2D and 3D digital plant information towards automatic generation of digital twins. In: 2020 IEEE 29th International Symposium on Industrial Electronics (ISIE), pp. 460–467 (2020)

10. Elyan, E., Jamieson, L., Ali-Gombe, A.: Deep learning for symbols detection and classification in engineering drawings. Neural Netw. 129, 91–102 (2020)

11. Moreno-García, C.F., Elyan, E.: Digitisation of assets from the oil & gas industry: challenges and opportunities. In: International Conference on Document Analysis and Recognition Workshops (ICDARW), pp. 16–19 (2019)

12. Rica, E., Moreno-García, C.F., Álvarez, S., Serratosa, F.: Reducing human effort in engineering drawing validation. Comput. Ind. 117, 103198 (2020)

13. Majid, N., Barney Smith, E.H.: Performance comparison of scanner and camera-acquired data for Bangla offline handwriting recognition. In: International Conference on Document Analysis and Recognition Workshops (ICDARW), pp. 31–36 (2019)

Acknowledgements

We would like to thank Innovate UK for funding this research under the Innovation Voucher scheme.

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Toral, L., Moreno-García, C.F., Elyan, E., Memon, S. (2021). A Deep Learning Digitisation Framework to Mark up Corrosion Circuits in Piping and Instrumentation Diagrams. In: Barney Smith, E.H., Pal, U. (eds) Document Analysis and Recognition – ICDAR 2021 Workshops. ICDAR 2021. Lecture Notes in Computer Science, vol 12917. Springer, Cham. https://doi.org/10.1007/978-3-030-86159-9_18

DOI: https://doi.org/10.1007/978-3-030-86159-9_18

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-86158-2

Online ISBN: 978-3-030-86159-9

eBook Packages: Computer Science, Computer Science (R0)