Abstract

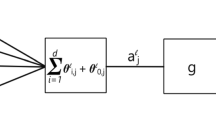

As shown by Russell et al. (1995) [7], Bayesian networks can be equipped with a gradient descent learning method similar to the training method for neural networks. The required gradients can be calculated locally, as part of propagation. We review how this can be done, and we show how the gradient descent approach can be used for tasks such as tuning and training, with training sets of definite as well as non-definite classifications. We introduce tools for resistance and damping to guide the direction of convergence, and we use them for a new adaptation method which can also handle situations where parameters in the network covary.
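As a minimal sketch of what "local" gradient calculation means here, consider a hypothetical two-node network A → B in which only B is observed. For a conditional probability entry w = P(B=b | A=a), the derivative of the log-likelihood is the sum over cases of the posterior P(A=a, B=b | case) divided by w, which is the form of the result generally attributed to [7]. The network, the function names, and the data below are assumptions made for illustration and do not come from the paper; all table entries are assumed to be strictly positive.

```python
import math


def marginal_b(p_a, p_b_given_a, b):
    """P(B=b) = sum over a of P(A=a) * P(B=b | A=a)."""
    return sum(p_a[a] * p_b_given_a[a][b] for a in range(len(p_a)))


def log_likelihood(p_a, p_b_given_a, data):
    """Log-likelihood of a list of observed B values (A is hidden)."""
    return sum(math.log(marginal_b(p_a, p_b_given_a, b)) for b in data)


def gradient_cpt_b(p_a, p_b_given_a, data):
    """Gradient of the log-likelihood w.r.t. each entry P(B=b | A=a).

    Entries are treated as independent parameters; any renormalisation
    of the table after an update step is left to the caller.
    """
    n_a, n_b = len(p_a), len(p_b_given_a[0])
    grad = [[0.0] * n_b for _ in range(n_a)]
    for b in data:
        p_b = marginal_b(p_a, p_b_given_a, b)
        for a in range(n_a):
            # Posterior P(A=a, B=b | case), obtained by "propagating"
            # the evidence B=b through this tiny network.
            posterior = p_a[a] * p_b_given_a[a][b] / p_b
            # Local gradient contribution: posterior divided by the entry.
            grad[a][b] += posterior / p_b_given_a[a][b]
    return grad


if __name__ == "__main__":
    p_a = [0.3, 0.7]
    p_b_given_a = [[0.9, 0.1], [0.2, 0.8]]
    data = [0, 1, 1, 0, 1]
    print(gradient_cpt_b(p_a, p_b_given_a, data))
```

Each gradient entry needs only the posterior over a node and its parent, which is the quantity a propagation pass already produces. In a larger network the same per-family computation would be repeated for every conditional probability table.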

References

Enrique Castillo, José Manuel Gutiérrez, and Ali S. Hadi. A new method for efficient symbolic propagation in discrete Bayesian networks. Networks, An International Journal, 28(1):31–43, August 1996.

Finn V. Jensen. An introduction to Bayesian Networks. UCL Press Limited, 1996.

K. B. Laskey. Adapting connectionist learning to Bayes network. International Journal of Approximate Reasoning, (4):261–282, 1990.

K. B. Laskey. Sensitivity analysis for probability assessments in Bayesian networks. In David Heckerman and Abe Mamdani, editors, Proceedings of the Ninth Conference on Uncertainty in Artificial Intelligence, pages 136–142. Morgan Kaufmann Publishers, 1993.

Anders L. Madsen and Finn V. Jensen. Lazy propagation in junction trees. In Gregory F. Cooper and Serafin Moral, editors, Proceedings of the Fourteenth Conference on Uncertainty in Artificial Intelligence, pages 362–369. Morgan Kaufmann Publishers, 1998.

Kristian G. Olesen, Steffen L. Lauritzen, and Finn V. Jensen. aHUGIN: A system creating adaptive causal probabilistic networks. In Proceedings of the Eighth Conference on Uncertainty in Artificial Intelligence, pages 223–229. Morgan Kaufmann Publishers, 1992.

Stuart Russell, John Binder, Daphne Koller, and Keiji Kanazawa. Local learning in probabilistic networks with hidden variables. In Chris S. Mellish, editor, Proceedings of the Fourteenth International Joint Conference on Artificial Intelligence, volume 2, pages 1146–1152. Morgan Kaufmann Publishers, 1995.

David J. Spiegelhalter and Steffen L. Lauritzen. Sequential updating of conditional probabilities on directed graphical structures. Networks, 20:579–605, 1990.

Copyright information

© 1999 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Jensen, F.V. (1999). Gradient Descent Training of Bayesian Networks. In: Hunter, A., Parsons, S. (eds) Symbolic and Quantitative Approaches to Reasoning and Uncertainty. ECSQARU 1999. Lecture Notes in Computer Science, vol 1638. Springer, Berlin, Heidelberg. https://doi.org/10.1007/3-540-48747-6_18

DOI: https://doi.org/10.1007/3-540-48747-6_18

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-66131-3

Online ISBN: 978-3-540-48747-0

eBook Packages: Springer Book Archive