Abstract

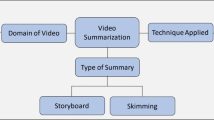

Video summarization (VS) techniques have garnered immense interest in recent years, leading to numerous applications in computer vision domains such as video extraction, image captioning, indexing, and browsing. Conventional VS studies often aim to improve VS algorithms by adding high-quality features and clustering to pick representative visual elements. Many existing VS mechanisms consider only the visual aspect of the video input, thereby ignoring the influence of audio features on the generated summary. To address this issue, we propose an efficient video summarization technique that processes both visual and audio content while extracting key frames from the raw video input. The structural similarity index (SSIM) is used to measure similarity between frames, while mel-frequency cepstral coefficients (MFCC) are used to extract features from the corresponding audio signals. By combining these two features, the redundant frames of the video are removed. The resulting key frames are refined using a deep convolutional neural network (CNN) model to retrieve a list of candidate key frames, which finally constitute the summary of the data. The proposed system is evaluated on video datasets from YouTube that contain events, which helps in better understanding the video summary. Experimental observations indicate that the inclusion of audio features and an efficient refinement technique, followed by an optimization function, provides better summary results than standard VS techniques.
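The redundancy-removal step described in the abstract can be illustrated with a minimal sketch. The abstract does not state how the SSIM and MFCC signals are combined, so everything specific here is an assumption: the function names `global_ssim` and `select_key_frames`, both thresholds, the rule that a frame is dropped only when it is redundant in both modalities, and the use of a single-window (global) SSIM rather than the usual sliding-window form. The audio vectors stand in for per-frame MFCC features, which a real pipeline would compute with an audio library.

```python
import math


def global_ssim(a, b, dynamic_range=255.0):
    """Single-window SSIM between two grayscale frames given as flat pixel lists.

    Simplification (assumption): statistics are taken over the whole frame,
    not over local sliding windows as in standard SSIM implementations.
    """
    if len(a) != len(b) or not a:
        raise ValueError("frames must be non-empty and of equal size")
    n = len(a)
    c1 = (0.01 * dynamic_range) ** 2  # stabilising constants from the SSIM definition
    c2 = (0.03 * dynamic_range) ** 2
    mu_a = sum(a) / n
    mu_b = sum(b) / n
    var_a = sum((x - mu_a) ** 2 for x in a) / n
    var_b = sum((y - mu_b) ** 2 for y in b) / n
    cov = sum((x - mu_a) * (y - mu_b) for x, y in zip(a, b)) / n
    numerator = (2 * mu_a * mu_b + c1) * (2 * cov + c2)
    denominator = (mu_a ** 2 + mu_b ** 2 + c1) * (var_a + var_b + c2)
    return numerator / denominator


def select_key_frames(frames, audio_feats, ssim_thresh=0.9, audio_thresh=1.0):
    """Return indices of frames kept after removing redundant ones.

    Hypothetical combination rule: a frame is redundant only if it is both
    visually similar (SSIM >= ssim_thresh) and acoustically similar
    (Euclidean distance <= audio_thresh) to the last kept frame.
    """
    if len(frames) != len(audio_feats):
        raise ValueError("need one audio feature vector per frame")
    if not frames:
        return []
    kept = [0]  # always keep the first frame as the initial reference
    for i in range(1, len(frames)):
        last = kept[-1]
        visually_redundant = global_ssim(frames[last], frames[i]) >= ssim_thresh
        audio_redundant = math.dist(audio_feats[last], audio_feats[i]) <= audio_thresh
        if not (visually_redundant and audio_redundant):
            kept.append(i)
    return kept


# Toy data: four 4x4 frames (flattened) with placeholder audio vectors.
frames = [[10] * 16, [10] * 16, [200] * 16, [200] * 16]
audio = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0], [5.0, 5.0]]
kept_indices = select_key_frames(frames, audio)
```

In this toy input, the second frame duplicates the first in both modalities and is dropped, while the fourth frame is visually identical to the third but is kept because its audio vector differs. This is the kind of case a visual-only summarizer would miss.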

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Rhevanth, M., Ahmed, R., Shah, V., Mohan, B.R. (2022). Deep Learning Framework Based on Audio–Visual Features for Video Summarization. In: Gupta, D., Sambyo, K., Prasad, M., Agarwal, S. (eds) Advanced Machine Intelligence and Signal Processing. Lecture Notes in Electrical Engineering, vol 858. Springer, Singapore. https://doi.org/10.1007/978-981-19-0840-8_17

DOI: https://doi.org/10.1007/978-981-19-0840-8_17

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-0839-2

Online ISBN: 978-981-19-0840-8

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)