Abstract

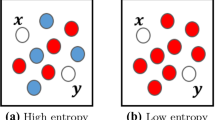

For complex data sets, the pairwise similarity or dissimilarity of data often serves as the interface between the application scenario and the machine learning tool. Hence, the final result of training is strongly influenced by the choice of the dissimilarity measure. While dissimilarity measures for supervised settings can ultimately be compared by the classification error, the situation is less clear in unsupervised domains, where a clear objective is lacking. The question arises how to compare dissimilarity measures and their influence on the final result in such cases. In this contribution, we propose to use a recent quantitative measure, introduced in the context of unsupervised dimensionality reduction, to assess whether and on which scale dissimilarities coincide for an unsupervised learning task. Essentially, the measure evaluates to what extent neighborhood relations are preserved when they are evaluated based on rankings, which makes the measure robust against scaling of the data. Apart from a global comparison, local versions make it possible to highlight regions of the data where two dissimilarity measures induce the same results.
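To make the idea of a rank-based comparison concrete, the following is a minimal sketch and not the exact measure used in the paper. It assumes that agreement is scored as the average overlap of K-nearest-neighbor sets under two dissimilarity matrices, in the spirit of rank-based quality criteria from dimensionality reduction. The function names `knn_sets` and `neighborhood_agreement`, the toy data, and the choice of K are illustrative assumptions.

```python
import numpy as np

def knn_sets(D, k):
    """For each point, return the index set of its k nearest neighbors under D."""
    D = np.asarray(D, dtype=float)
    neighbors = []
    for i in range(D.shape[0]):
        order = np.argsort(D[i], kind="stable")  # ranks depend only on the ordering
        order = order[order != i][:k]            # exclude the point itself
        neighbors.append(set(order.tolist()))
    return neighbors

def neighborhood_agreement(D1, D2, k):
    """Hypothetical helper: local (per point) and global K-neighborhood overlap."""
    sets1 = knn_sets(D1, k)
    sets2 = knn_sets(D2, k)
    local = np.array([len(a & b) / k for a, b in zip(sets1, sets2)])
    return local, float(local.mean())

# Toy data (assumption): 30 random points in 5 dimensions.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 5))
diff = X[:, None, :] - X[None, :, :]
D_euclid = np.sqrt((diff ** 2).sum(axis=-1))
D_scaled = 2.0 * D_euclid                 # same ranking, different scale
D_manhattan = np.abs(diff).sum(axis=-1)   # a genuinely different measure

local_same, global_same = neighborhood_agreement(D_euclid, D_scaled, k=5)
local_diff, global_diff = neighborhood_agreement(D_euclid, D_manhattan, k=5)
print("Euclidean vs. scaled Euclidean:", global_same)
print("Euclidean vs. Manhattan:", global_diff)
```

Because only rankings enter the score, rescaling a dissimilarity leaves the result unchanged. The per-point values in `local` correspond to the local view mentioned above: they indicate where in the data two measures induce the same neighborhoods. Varying `k` probes agreement at different neighborhood scales.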

Keywords

- Dimensionality Reduction

- Unsupervised Learning

- Neighborhood Size

- Dissimilarity Measure

- Intelligent Tutoring System

These keywords were added by machine and not by the authors.

Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Mokbel, B., Gross, S., Lux, M., Pinkwart, N., Hammer, B. (2012). How to Quantitatively Compare Data Dissimilarities for Unsupervised Machine Learning? In: Mana, N., Schwenker, F., Trentin, E. (eds) Artificial Neural Networks in Pattern Recognition. ANNPR 2012. Lecture Notes in Computer Science, vol 7477. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-33212-8_1

DOI: https://doi.org/10.1007/978-3-642-33212-8_1

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-33211-1

Online ISBN: 978-3-642-33212-8

eBook Packages: Computer Science, Computer Science (R0)