Abstract

For an adequate comprehension of scientific phenomena, concepts, and experiments, it is necessary to understand several different representations and their interconnections. From a scientific point of view, representational competence (RC) can be understood as students’ ability to correctly generate, interconnect, and translate several representations and to use them as problem-solving tools. As a theoretical basis for assessing RC, it is not sufficient to know only how students work with one or several single representations. It is equally important to know how they interconnect representations, for example by translating information from one representation type to another or by adapting representations. Thus, students’ ability to achieve consistency between the overlapping information of a set of representations is a central part of RC and can be called representational coherence ability (RCA). The goal of the study is to develop and evaluate test items for RCA whose solution processes focus on representations and their interconnections. To vary this focus, two item variants were developed. One variant requires students to relate representations more implicitly and to work with only two representations; the other requires students to compare or modify them more explicitly and to use a larger number of representations to solve the tasks. By combining these variants, the spectrum of RC from single or few representations to a system of interconnected representations can be covered. An evaluation of 15 items (488 students) showed acceptable discrimination indices and reliability overall. In particular, the items can be used to assess RCA, and in general, the proposed strategy for item construction was successful and can be used to develop further RCA instruments.

Theory

For a proper understanding of scientific experiments, phenomena, and concepts, various mental representations and their interplay are vitally important (Gilbert and Treagust 2009; Mayer 2005). The term ‘representation’ is understood as a tripartite relation of a referent (or object), its representation, and the meaning (or interpretation) of referent, representation, and their interaction. This relation is referred to in various ways (e.g. ‘Peircean triangle’ or ‘triangle of meaning’), and a detailed discussion of its underpinnings in epistemology and semiotics can be found in Tytler et al. (2013, Ch. 6). In line with dual coding theory, Schnotz (2002, 2005) distinguishes two types of representations in his model of integrated text and picture comprehension. Photographs or schematic drawings are depictive representations. Formulas, tables, and verbal descriptions are descriptive representations. All forms of representation can appear internally (in the mind) or externally (e.g., on paper or on a screen). Representations are selective; therefore, they can differ in content and can be useful for solving different tasks (see Schnotz 1994; Herrmann 1993). A representation is not necessarily only an illustrative picture; it can also be used as a tool for problem solving and is an essential means of reasoning. Schnotz and Mayer describe in detail the theory of cognitive processes that occur when working with multiple representations (Schnotz 2005; Mayer 2005). Information from different representations can be processed via the auditory or visual channel and integrated into propositions and mental models in working memory (Schnotz 2005; Mayer 2005). They also provide task-construction strategies to reduce the cognitive overload (Sweller 1999) that can arise when working with multiple representations (e.g., multimedia principle and coherence principle, Mayer 2005; Schnotz 2005).

The skilled use of several representations as problem-solving tools is well known in physics and science education (Ainsworth 1999; Tsui and Treagust 2013; Schnotz et al. 2011). The ability to generate and use different specific depictive or descriptive representations (Schnotz and Bannert 2003) of a situation or a problem in a skilled and interconnected way is called representational competence (RC, Guthrie 2002; Kozma and Russell 2005); the ability to change and translate between different forms of representations (Ainsworth 1999) and communicate about underlying, not obviously perceived, physical entities (e.g. radiation or atoms) and processes is included in the set of skills and practices of RC (Kozma and Russell 1997; Kozma 2000; Dolin 2007). A translation of information between representations is often necessary (Ainsworth 1999); in a scientific context, it means that some parts of the represented information can be expressed in different forms of representation. Experts perform significantly better than novices when translating the content of a graph, a video, or an animation about molecules into any other type of representation (Kozma and Russell 1997). Of course, the overlapping content of a set of representations should be translated without contradictions. Each representation type needs a specific way of thinking and has its assets and drawbacks; therefore a skilled combination of different representations is assumed to be beneficial for the learning process (Leisen 1998a; Leisen 1998b). In view of its importance for scientific thinking and based on a considerable body of evidence (e.g. Kozma et al. 2000; Dunbar 1997; Roth and McGinn 1998), Kozma (2000) emphasized that the development of RC should be included in the chemistry curriculum. In Denmark, it is already implemented in the school curriculum (Dolin 2007).

Many studies have shown that students’ levels of RC are low. Kozma and Russell (2005) reported that students with different levels of RC differ in how they work with representations. They pointed out that persons with a low level of RC work on the surface level of a representation (Chi et al. 1981; diSessa et al. 1991; Kozma and Russell 1997), whereas those with a high level of RC show features of deep-level processing; for instance, they use a higher number of formal and informal representations to solve problems, make predictions, or explain phenomena (Dunbar 1997; Goodwin 1995; Kozma et al. 2000; Kozma and Russell 1997; Roth and McGinn 1998). Research has identified possible reasons for the low level of students’ RC in chemistry (Devetak et al. 2004): Secondary school students (average age: 18 years) had to solve several tasks in their high school examination. To do this, they had to be able to connect macroscopic, submicroscopic, and symbolic levels of chemical concepts (Thiele and Treagust 1994; Devetak et al. 2004). However, the authors concluded that teachers do not usually focus on teaching students how to connect several representations; they concentrate on it only during preparation for the high school examination. If students are not able to connect levels of representations sufficiently, their knowledge is fragmented and can only be remembered temporarily. Moreover, the problem is not only students’ lack of ability to interconnect different representation levels; students also do not clearly see the role of symbolic and submicroscopic levels of representation (Treagust et al. 2003). Research has shown that students of physics also have a low level of RC (Saniter 2003): Even advanced learners (seventh semester of university) were no better at connecting the meanings of formulas with phenomena and with practical implementation in experiments than less advanced learners in the fifth semester.
Problems occurred especially when students tried to explain an experiment with only one type of representation (e.g., with the symmetric form of Coulomb’s law for a single source point charge, Saniter 2003). Only when students made a connection with a representation on the phenomenological level were they able to estimate the measurement value correctly. Other representational problems have also been found (Saniter 2003). When students were not very familiar with a topic at a given representation level, they could not use it to solve the task, even if they had worked on that content in the immediately preceding task (Saniter 2003). A kind of content-specific blindness is presumed to be the reason for this. A danger for students is that they continue to operate on the surface-level features of representations (Chi et al. 1981; diSessa et al. 1991; Kozma and Russell 1997). Another possible reason for the low level of students’ RC might be the way teachers deal with representations in class. Lee (2009) analyzed lessons in three eighth-grade classes on ray optics and found that representations were used in an implicit, brief, partly inaccurate, and receptive way. Accordingly, students are not sufficiently cognitively activated with regard to representations (for an activating learning strategy for the development of RC, see Scheid et al. 2015; Scheid 2013).

Not only does the lack of RC lead to deficits in the learning process; students have also not met teachers’ expectations with regard to learning with experiments (Novak 1990; Harlen 1999). In school lessons, students seldom had the opportunity to talk about experiments or to design or analyze them on their own. Therefore, they were not able to make connections to the experiments (Tesch 2005, seventh to ninth grade, video study about mechanics and electricity). For this reason, students had few opportunities to process the different types of representations in greater depth. An appropriate level of understanding of science experiments generally requires a certain level of RC, because information is usually spread over several representations of different types, or of the same type, and has to be connected. In particular, the ability to connect the contents of different representations with each other and to translate the overlapping contents from one representation into another is a key competence for achieving connected knowledge. This competence is called representational coherence ability (RCA, Scheid et al. 2015; Scheid 2013). Translating information between several representations is inherently susceptible to misinterpretation or failure, which can lead to unwanted contradictions and inconsistencies. Therefore, RCA can be seen as a central part of RC: it is the level of students’ ability to achieve scientifically correct consistency between the overlapping information of a set of representations. RCA is essential for the use of multiple representations; it also includes translating information between different types of representations or adapting representations, and it has a fundamental connection to achievement in the subject matter (e.g., physics).
The importance of representational abilities for understanding, combined with the low representational abilities of students (see above), creates an urgent need for a diagnostic instrument for RCA. Therefore, the goal of the study is to develop test items for RCA and to examine their reliability by means of psychometric indices.

Methods

The research took place in several grammar schools in Germany (federal state of Rhineland-Palatinate). All schools were located in small towns. The subject of the study was physics, in particular ray optics and image formation by a convex lens. According to the curriculum, this topic is taught in the seventh grade. There were three measurement times: directly before and directly after six optics lessons, and six weeks after these lessons. The data were analyzed with classical methods of item analysis: factor analysis, Cronbach’s alpha (\( \alpha_C \)), item-total correlation, item difficulty, and, for the expert rating, intra-class correlation. We recruited 488 students in 17 classes at six schools. The students were between 12 and 14 years of age (M = 13.3; SD = 0.5); 54% were boys and 46% girls.

Design of the RCA Test

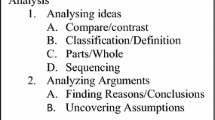

The ability to design and adequately work with representations is closely connected with physics achievement, because scientific representations are often domain specific and describe, depict, or relate to scientific content. The ability to handle representations is at the same time a foundation for developing physics achievement and a consequence of it. The two are thus interdependent, and their development goes hand in hand. Therefore, tasks that assess RCA are inherently related to physics achievement, even though they focus on RCA. This focus is set by the multiplicity of experiment- or phenomenon-related representations. RCA was measured through lesson-related assessment tasks that require working with two representations (item-type A) and through tasks that mostly require working with three or more representations (item-type B; for both, see Table 1). By combining these variants, the spectrum of RC from single or few representations to a system of interconnected representations can be covered. Items 1b, 1c, 4a, and 7 are discussed in this chapter. To illustrate the different representations used in the items, the test is included in the appendix.

There are six items of type A and nine of type B. The test has an open-answer format and is interval scaled (Scheid 2013; Scheid et al. 2017; complexity of the answers was considered, Kauertz 2008).

As an example of item-type A, Fig. 1 shows a task that assesses RCA via performance with a representational focus and uses a text and a ray diagram (Table 1); students were asked to determine the focus of a convex lens by constructing a ray diagram of an image-formation process with given numeric parameters. Students could solve the task only if they understood the verbal part of the task, worked out how to obtain the solution, translated the relevant part of the verbal information into a depictive schematic drawing, and applied the physics rules of ray diagram construction. Figure 2 also shows an item that assesses RCA with two representations.

It looks like a traditional text task focusing on the magnification equation, but solving it also requires RCA (i.e., a level of coherence between the verbal representation and the mathematical formula, Table 1). The students had to be able to assign the numeric parameters given in the text to the correct physics quantities; otherwise, they could not insert the values into the formula correctly and, therefore, could not apply the formula correctly.

In addition, when students were asked to express the answer to the task verbally, they needed to translate the mathematical representation into a text. For this purpose, they had to know at least the meaning of the physical parameters in the equation.
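The translation step described above can be made concrete with a short worked example. This is only an illustrative sketch: the task text, the numbers, and the symbols \( h_o \), \( h_i \) (object and image height) and \( d_o \), \( d_i \) (object and image distance) are assumptions introduced here and are not taken from the test items.

```latex
% Magnification equation (magnitudes only; symbols are assumptions of this sketch)
M = \frac{h_i}{h_o} = \frac{d_i}{d_o}

% Hypothetical task text: "A 2 cm tall object stands 15 cm in front of the lens;
% the sharp image appears 30 cm behind it."
% Step 1 (text -> quantities): h_o = 2\,\text{cm},\; d_o = 15\,\text{cm},\; d_i = 30\,\text{cm}
% Step 2 (quantities -> formula):
h_i = h_o \cdot \frac{d_i}{d_o} = 2\,\text{cm} \cdot \frac{30\,\text{cm}}{15\,\text{cm}} = 4\,\text{cm}
% Step 3 (formula -> text): "The image is 4 cm tall, twice as large as the object."
```

Steps 1 and 3 are the translations between the verbal and the mathematical representation; an error in step 1 (for example, swapping \( d_o \) and \( d_i \)) leads to a wrong result even if the formula itself is known.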

In contrast, items of type B measure RCA with sets of tasks that normally require three or more coherent mental representations related to experiments or phenomena and that therefore require deep, under-the-surface processing.

Figure 3 shows an item set that required the student to develop a representational mental model to produce the correct answer. This model has to contain the relevant information shown in the ray diagram of the experimental setting in the picture. To do this, it is necessary to know the physics law describing how certain rays are changed by a convex lens when the object distance changes. This model was not usually available to students, but they had basic knowledge and therefore an opportunity to construct the mental model. They knew how to draw a ray diagram; the next step was to develop an appropriate mental model that compares and describes the outcomes of several ray diagrams with different image-lens distances, and then to derive the required relation at the end of the representational thinking process (the ray construction had to be altered mentally, Table 1). In this way it was possible to estimate how the image distance changes in connection with the object distance, both in particular and in general. In the second part of the task, students were asked to explain verbally how they had solved the tasks above. This approach was intended to give insight into their thinking processes and made it possible to identify students who gave correct answers by chance. For this reason, the task was considered correct only if a coherent mental model and three types of adequate representations were used: the schematic drawing of the printed task, a mental model of the schematic drawing in connection with a useful physics law (e.g., how the rays change), and a produced text describing the outcomes of the mental model as part of the answer.
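The relation that students are expected to derive mentally from several ray diagrams can also be checked numerically. The following is a minimal sketch that assumes the thin-lens equation 1/f = 1/d_o + 1/d_i as the underlying physics law; the function name, the focal length, and the object distances are hypothetical and not taken from the test.

```python
def image_distance(object_distance, focal_length):
    """Image distance from the thin-lens equation 1/f = 1/d_o + 1/d_i.

    Assumption of this sketch: a thin convex lens and a real image,
    i.e. the object lies outside the focal length.
    """
    if object_distance <= focal_length:
        raise ValueError("no real image: object must lie outside the focal length")
    return focal_length * object_distance / (object_distance - focal_length)


focal_length = 10.0                          # cm, hypothetical lens
object_distances = [15.0, 20.0, 30.0, 60.0]  # cm, hypothetical settings
image_distances = [image_distance(d, focal_length) for d in object_distances]

for d_o, d_i in zip(object_distances, image_distances):
    print(f"object distance {d_o:5.1f} cm -> image distance {d_i:5.1f} cm")
```

Under these assumptions the image distance shrinks toward the focal length as the object distance grows, which is the kind of general relation the item set asks students to state in words.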

Results of Item and Test Analysis

An exploratory factor analysis (principal component analysis, quartimax rotation, Eid et al. 2011) shows that the first factor has a large eigenvalue, whereas the eigenvalues of the other factors are at or below the level of individual items. Factors can be regarded as relevant if their eigenvalues are clearly higher than those of the other factors (Cattell 1966) and higher than those of single items (Kaiser and Dickman 1959; Eid et al. 2011). Thus, only one factor is relevant, and the instrument can be regarded as one-dimensional.
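The phrase "level of individual items" can be read as follows. This is a general property of principal component analysis on standardized items, written out here as a sketch; the symbols \( k \), \( R \), and \( \lambda_j \) are introduced for illustration, and \( k = 15 \) is the number of items reported for this test.

```latex
% k standardized items with correlation matrix R and eigenvalues
% \lambda_1 \ge \lambda_2 \ge \dots \ge \lambda_k
\sum_{j=1}^{k} \lambda_j = \operatorname{tr}(R) = k
\qquad\Rightarrow\qquad
\text{share of total variance explained by factor } j = \frac{\lambda_j}{k}

% Each standardized item contributes variance 1, so a factor is only
% worth more than a single item if
\lambda_j > 1

% For this test: k = 15, so the eigenvalues sum to 15.
```

A factor with an eigenvalue at or below 1 therefore summarizes no more variance than one item does on its own.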

With regard to reliability and validity for the curriculum, we obtained internal consistencies of the RCA test of \( \alpha_C = 0.8 \) (N(post) = 488, N(follow-up) = 484, Cronbach and Snow 1977). Pretest values cannot be expected to be in the desired ranges, as there is no consistent knowledge yet (Nersessian 1992; Ramlo 2008; Nieminen et al. 2010). Excluding individual items does not increase the internal consistency. The corrected item-total correlation of the RCA test was calculated, that is, the correlation of each single item with the total score of the remaining items. With the exception of item 3a (\( r_{it} = 0.2 \)), every item shows the desired correlation of \( r_{it} > 0.3 \) with the total score of the remaining items (Weise 1975).
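The two reliability indices named above can be sketched in a few lines. This is not the analysis code of the study: it uses the standard textbook formulas for Cronbach's alpha and the corrected item-total correlation, and the function names and the small data set are hypothetical.

```python
from statistics import mean, pvariance


def cronbach_alpha(scores):
    """Cronbach's alpha for a list of per-student rows of item scores."""
    k = len(scores[0])                          # number of items
    items = list(zip(*scores))                  # one tuple of scores per item
    item_var_sum = sum(pvariance(col) for col in items)
    total_var = pvariance([sum(row) for row in scores])
    return k / (k - 1) * (1 - item_var_sum / total_var)


def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def corrected_item_total(scores, i):
    """Correlation of item i with the summed score of all other items."""
    item = [row[i] for row in scores]
    rest = [sum(row) - row[i] for row in scores]
    return pearson(item, rest)


# Hypothetical data: 5 students x 3 items (not from the study).
demo = [
    [1, 1, 1],
    [2, 2, 1],
    [3, 2, 3],
    [4, 4, 3],
    [5, 4, 5],
]
alpha = cronbach_alpha(demo)
r_it = [corrected_item_total(demo, i) for i in range(3)]
print(f"alpha = {alpha:.2f}, r_it = {[round(r, 2) for r in r_it]}")
```

The item's own score is removed from the total before correlating; otherwise the item would correlate with itself and the index would be inflated, particularly in short tests.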

In the pretest, the item difficulty is within the desired range of \( 0.2 < P_i < 0.8 \) for eight items and lower than 0.2 for seven items (\( \overline{P}_i \)(pretest) = 0.16). For the other measurement times, no item has a difficulty outside the desired range except for item 1c (post and follow-up) and item 6 (follow-up). The mean item difficulty of the posttest (\( \overline{P}_i \)(post) = 0.36) is 17% higher than the mean of the follow-up test (\( \overline{P}_i \)(follow-up) = 0.30).
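The range check on item difficulty can be illustrated as follows. The chapter does not state how the index was computed for the open-answer items; the sketch assumes the common definition for graded items, namely the mean achieved score divided by the maximum score. Item labels, scores, and the maximum of 2 points are hypothetical.

```python
def item_difficulty(item_scores, max_score):
    """Mean achieved share of the maximum score for one item (0 to 1).

    Assumed definition for graded items; low values mean a hard item.
    """
    return sum(item_scores) / (len(item_scores) * max_score)


def flag_out_of_range(difficulties, low=0.2, high=0.8):
    """Return labels of items whose difficulty is not strictly inside (low, high)."""
    return [label for label, p in difficulties.items() if not low < p < high]


# Hypothetical scores of five students on three items (maximum 2 points each).
scores = {
    "item_x": [0, 0, 1, 0, 0],
    "item_y": [1, 2, 0, 1, 1],
    "item_z": [2, 2, 2, 1, 2],
}
difficulties = {label: item_difficulty(s, max_score=2) for label, s in scores.items()}
flagged = flag_out_of_range(difficulties)
print(difficulties, flagged)
```

In this toy data, one item is flagged as too hard and one as too easy; in the study, the flagged items were all on the hard side.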

The results of the expert rating showed that the RCA test is seen as “valid for the curriculum” or “completely valid for the curriculum”. The intra-class correlations were highly significant, with values in the range 0.5 < ICC < 0.7.
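An intra-class correlation for a rating table can be sketched as below. The chapter does not say which ICC model was used, so the one-way random-effects, single-rater form ICC(1,1) is an assumption chosen for brevity; the function name and the ratings are hypothetical.

```python
def icc_oneway(ratings):
    """ICC(1,1): one-way random effects, single rater.

    `ratings` holds one row per rated target (e.g. a test item) and one
    column per rater. This model choice is an assumption of the sketch.
    """
    n = len(ratings)                 # number of rated targets
    k = len(ratings[0])              # number of raters
    grand = sum(map(sum, ratings)) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    ss_between = k * sum((m - grand) ** 2 for m in row_means)
    ss_within = sum((x - m) ** 2 for row, m in zip(ratings, row_means) for x in row)
    ms_between = ss_between / (n - 1)
    ms_within = ss_within / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical validity ratings (1 to 4) of five items by two experts.
expert_ratings = [[4, 4], [3, 4], [2, 2], [4, 3], [1, 2]]
icc = icc_oneway(expert_ratings)
print(f"ICC(1,1) = {icc:.2f}")
```

Other ICC variants (two-way models, average of raters) give different values for the same table, so the model has to be reported together with the coefficient.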

Discussion

Regarding the item analysis, a possible explanation for the low item difficulties in the RCA pretest is that the physics content tested was the subject of the lessons that followed. Nevertheless, it made sense to assess RCA before the lessons started, because students showed variance in RCA in the pretest and this information could be used in the statistical analysis for measuring changes. Because of the low item difficulties in the pretest and several missing values in the data, the corrected item-total correlation and \( \alpha_C \) could not be calculated for that measurement time. Altogether, three items were conspicuous at the other measurement times. The item difficulty of item 1c is low because the item requires logical reasoning about an abstract interrelation between the object distance and the image distance in an image-formation experiment. Item 6 also showed a low item difficulty (but only in the follow-up test); it asks for reasons why it is not possible to derive the magnification equation from two triangles that were marked in a ray diagram. For both items, the contents are difficult from the physics point of view and known to be difficult from teaching practice; however, precisely because of these contents the items are interesting and important for measuring high levels of RCA. Therefore, they are useful and may remain in the test. For the item-total correlation, only item 3a had a low value and appeared to be an exception. A possible explanation is that the topic of the item differed from the topics of the remaining items: it asked about the possible applications of the magnification equation and therefore required metacognition. Whether this item should remain in the instrument is questionable, and it may be excluded. The values of the corrected item-total correlations and of \( \alpha_C \) for the post- and follow-up tests were acceptable (Kline 2000). In sum, the RCA test can be considered reliable.

The intra-class correlation of the expert rating was highly significant with acceptable values (Wirtz and Caspar 2002). So the expert rating showed clearly that the RCA test is valid for the curriculum and can, therefore, be used in schools to diagnose students’ individual levels of RCA and also, if needed, for grading purposes.

Implications, Limitations, and Recommendations for Future Research

The development of a theory-based strategy for designing items for an RCA instrument was successful with respect to the aspects considered. With knowledge of the outcomes of this study, instructors can either assess in general whether lessons that foster RCA are necessary or, in particular, identify which students need individual help to develop RCA and how much help they need. This can help to overcome well-known problems students face in understanding science concepts, phenomena, and experiments. The test instrument for RCA also makes it possible to investigate the effects of future strategies to foster the development of RCA (e.g. by using RATs, see Scheid et al. 2015; Scheid 2013). A limitation is that the instrument is available only for the domain of ray optics. We recommend designing RCA tests for other topics of science education that use multiple representations, for example thermodynamics or genetics.

References

Ainsworth, S. (1999). The functions of multiple representations. Computers & Education, 33, 131–152.

Cattell, R. B. (1966). The scree test for the number of factors. Multivariate Behavioral Research, 1, 245–276.

Chi, M., Feltovich, P., & Glaser, R. (1981). Categorization and representation of physics problems by experts and novices. Cognitive Science, 5, 121–152.

Cronbach, L., & Snow, R. (1977). Aptitudes and instructional methods: A handbook for research on interactions. New York: Irvington.

Devetak, I., Urbančič, M. W., Grm, K. S., Krnel, D., & Glažar, S. A. (2004). Submicroscopic representations as a tool for evaluating students’ chemical conceptions. Acta Chimica Slovenica, 51, 799–814.

Dolin, J. (2007). Science education standards and science assessment in Denmark. In D. Waddington, P. Nentwig, & S. Schanze (Eds.), Making it comparable. Standards in science education (pp. 71–82). Münster: Waxmann.

Dunbar, K. (1997). How scientists really reason: Scientific reasoning in real-world laboratories. In R. Sternberg & J. Davidson (Eds.), The nature of insight (pp. 365–396). Cambridge, MA: MIT Press.

Eid, M., Gollwitzer, M., & Schmitt, M. (2011). Statistik und Forschungsmethoden. (2. Aufl.) [Statistics and research methods] (2nd ed.). Weinheim: Beltz.

Gilbert, J. K., & Treagust, D. (Eds.). (2009). Multiple representations in chemical education. The Netherlands: Springer.

Goodwin, C. (1995). Seeing in depth. Social Studies of Science, 25, 237–274.

Guthrie, J. W. (Ed.). (2002). Encyclopedia of education. New York: Macmillan.

Harlen, W. (1999). Effective teaching of science. Edinburgh: The Scottish Council for Research in Education (SCRE).

Herrmann, T. (1993). Mentale Repräsentation ein erläuterungsbedürftiger Begriff [Mental representation: A term in need of explanation]. In J. Engelkamp & T. Pechmann (Eds.), Mentale Repräsentation (pp. 17–30). Bern: Huber.

Kaiser, H. F., & Dickman, K. W. (1959). Analytic determination of common factors. American Psychologist, 14, 425.

Kauertz, A. (2008). Schwierigkeitserzeugende Merkmale physikalischer Leistungstestaufgaben [Difficulty-generating features of physics assessment tasks]. In H. Niedderer, H. Fischler, & E. Sumfleth (Eds.), Studien zum Physik- und Chemielernen Band 79. Berlin: Logos Verlag.

Kline, P. (2000). The handbook of psychological testing (2nd ed.). London: Routledge.

Kozma, R. (2000). Representation and language: The case for representational competence in the chemistry curriculum. Paper presented at the Biennial Conference on Chemical Education, Ann Arbor, MI.

Kozma, R. B., & Russell, J. (1997). Multimedia and understanding: Expert and novice responses to different representations of chemical phenomena. Journal of Research in Science Teaching, 34(9), 949–968.

Kozma, R. B., & Russell, J. (2005). Students becoming chemists: Developing representational competence. In J. Gilbert (Ed.), Visualization in science education. Dordrecht: Springer.

Kozma, R. B., Chin, E., Russell, J., & Marx, N. (2000). The role of representations and tools in the chemistry laboratory and their implications for chemistry learning. Journal of the Learning Sciences, 9(2), 105–143.

Lee, V. (2009). Examining patterns of visual representation use in middle school science classrooms. Proceedings of the National Association for Research in Science Teaching (NARST) annual meeting compact disc, Garden Grove, CA: Omnipress.

Leisen, J. (1998a). Physikalische Begriffe und Sachverhalte. Repräsentationen auf verschiedenen Ebenen [physical notions and concepts. Representations on different levels]. Praxis der Naturwissenschaften Physik, 47(2), 14–18.

Leisen, J. (1998b). Förderung des Sprachlernens durch den Wechsel von Symbolisierungsformen im Physikunterricht [Fostering language learning by switching between forms of symbolization in physics lessons]. Praxis der Naturwissenschaften Physik, 47(2), 9–13.

Mayer, R. E. (2005). Cognitive theory of multimedia learning. In R. E. Mayer (Ed.), The Cambridge handbook of multimedia learning (pp. 31–48). New York: Cambridge University Press.

Nersessian, N. J. (1992). How do scientists think? Capturing the dynamics of conceptual change in science. In R. N. Giere (Ed.), Cognitive models of science, Minnesota studies in the philosophy of science (Vol. 15, pp. 129–186). Minneapolis: University of Minnesota Press.

Nieminen, P., Savinainen, A., & Viiri, J. (2010). Force concept inventory-based multiple-choice test for investigating students’ representational consistency. Physical Review Special Topics - Physics Education Research, 6(2), 1–12.

Novak, J. D. (1990). The interplay of theory and methodology. In E. Hegarty-Hazel (Ed.), The student laboratory and the science curriculum. London, New York: Routledge.

Ramlo, S. (2008). Validity and reliability of the force and motion conceptual evaluation. American Journal of Physics, 76(9), 882–886.

Roth, W. M., & McGinn, M. (1998). Inscriptions: A social practice approach to representations. Review of Educational Research, 68, 35–59.

Saniter, A. (2003). Spezifika der Verhaltensmuster fortgeschrittener Studierender der Physik [The specifics of behavior patterns in advanced students of physics]. In H. Niedderer & H. Fischler (Eds.), Studien zum Physiklernen Band 28. Berlin: Logos.

Scheid, J. (2013). Multiple Repräsentationen, Verständnis physikalischer Experimente und kognitive Aktivierung: Ein Beitrag zur Entwicklung der Aufgabenkultur [Multiple representations, understanding physics experiments and cognitive activation: A contribution to developing a task culture]. In H. Niedderer, H. Fischler, & E. Sumfleth (Eds.), Studien zum Physik- und Chemielernen, Band 151. Berlin: Logos Verlag.

Scheid, J., Müller, A., Hettmannsperger, R., & Schnotz, W. (2015). Scientific experiments, multiple representations, and their coherence. A task based elaboration strategy for ray optics. In W. Schnotz, A. Kauertz, H. Ludwig, A. Müller, & J. Pretsch (Eds.), Multiple perspectives on teaching and learning. Basingstoke: Palgrave Macmillan.

Scheid, J., Müller A., Hettmannsperger, R. & Kuhn, J. (2017). Erhebung von repräsentationaler Kohärenzfähigkeit von Schülerinnen und Schülern im Themenbereich Strahlenoptik [Assessment of Students Representational Coherence Ability in the Area of Ray Optics]. Zeitschrift für Didaktik der Naturwissenschaften, 23, 181–203.

Schnotz, W. (1994). Aufbau von Wissensstrukturen. Untersuchungen zur Kohärenzbildung bei Wissenserwerb mit Texten [Construction of knowledge structures. Research on coherence development during knowledge acquisition with texts]. Beltz, Psychologie-Verl.Union: Weinheim.

Schnotz, W. (2002). Towards an integrated view of learning from text and visual displays. Educational Psychology Review, 14(1), 101–119.

Schnotz, W. (2005). An integrated model of text and picture comprehension. In R. E. Mayer (Ed.), The Cambridge handbook of multimedia learning (pp. 49–70). New York: Cambridge University Press.

Schnotz, W., & Bannert, M. (2003). Construction and interference in learning from multiple representation. Learning and Instruction, 13(2), 141–156.

Schnotz, W., Baadte, C., Müller, A., & Rasch, R. (2011). Kreatives Denken und Problemlösen mit bildlichen und beschreibenden Repräsentationen [Creative thinking and problem solving with depictive and descriptive representations]. In R. Sachs-Hombach & R. Totzke (Eds.), Bilder Sehen Denken (pp. 204–252). Köln: Halem Verlag.

diSessa, A., Hammer, D., Sherin, B., & Kolpakowski, T. (1991). Inventing graphing: Metarepresentational expertise in children. Journal of Mathematical Behavior, 10(2), 117–160.

Sweller, J. (1999). Instructional design in technical areas. Melbourne: ACER Press.

Tesch, M. (2005). Das Experiment im Physikunterricht [The experiment in physics education]. In H. Niedderer, H. Fischler, & E. Sumfleth (Eds.), Studien zum Physik- und Chemielernen Band 42. Berlin: Logos.

Thiele, R. B., & Treagust, D. F. (1994). An interpretive examination of high school chemistry teachers’ analogical explanations. Journal of Research in Science Teaching, 31, 227–242.

Treagust, D. F., Chittleborough, G. D., & Mamiala, T. L. (2003). The role of sub-microscopic and symbolic representations in chemical explanations. International Journal of Science Education, 25(11), 1353–1369.

Tsui, C., & Treagust, D. (Eds.). (2013). Multiple representations in biological education. Dordrecht: Springer.

Tytler, R., Prain, V., Hubber, P., & Waldrip, B. (Eds.). (2013). Constructing representations to learn in science. Rotterdam: Sense.

Weise, G. (1975). Psychologische Leistungstests [Psychological performance tests]. Göttingen: Hogrefe.

Wirtz, M., & Caspar, F. (2002). Beurteilerübereinstimmung und Beurteilerreliabilität [Rater agreement and rater reliability]. Göttingen: Hogrefe.

Acknowledgments

We thank all teachers and students from the schools for participating in the study and the federal state of Rhineland-Palatinate for permission to carry it out. We are grateful to the German Research Foundation (DFG, Graduate School GK1561), which funded this research. The opinions reported do not represent the views of the funding body.

Appendix

Copyright information

© 2018 Springer International Publishing AG, part of Springer Nature

About this chapter

Cite this chapter

Scheid, J., Müller, A., Hettmannsperger, R., Schnotz, W. (2018). Representational Competence in Science Education: From Theory to Assessment. In: Daniel, K. (eds) Towards a Framework for Representational Competence in Science Education. Models and Modeling in Science Education, vol 11. Springer, Cham. https://doi.org/10.1007/978-3-319-89945-9_13

DOI: https://doi.org/10.1007/978-3-319-89945-9_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-89943-5

Online ISBN: 978-3-319-89945-9

eBook Packages: Education, Education (R0)