Abstract

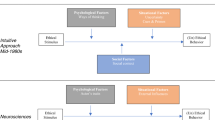

Validation studies that seek validity evidence based on response processes still lack clear recommendations, established best practices, and a sufficient base of experience. Cognitive interviewing and think aloud methods can provide such validity evidence. However, the overlapping labels and the blurred boundary between the two methods can lead researchers to consolidate bad practices and keep them from taking full advantage of these methods. The aim of this chapter is to help researchers make informed decisions about which method is the best option when planning a validation study to obtain validity evidence based on response processes. First, we describe the state of the art in conducting think aloud and cognitive interviewing studies; second, we describe the main procedural issues in conducting both methods. Similarities and differences between the two methods are highlighted throughout the chapter.

Copyright information

© 2017 Springer International Publishing AG

About this chapter

Cite this chapter

Padilla, J.L., Leighton, J.P. (2017). Cognitive Interviewing and Think Aloud Methods. In: Zumbo, B., Hubley, A. (eds) Understanding and Investigating Response Processes in Validation Research. Social Indicators Research Series, vol 69. Springer, Cham. https://doi.org/10.1007/978-3-319-56129-5_12

DOI: https://doi.org/10.1007/978-3-319-56129-5_12

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-56128-8

Online ISBN: 978-3-319-56129-5

eBook Packages: Social Sciences, Social Sciences (R0)