Abstract

The automation of visual quality inspection is becoming increasingly important in manufacturing industries. The objective is to ensure that manufactured products meet specific quality characteristics. Manual inspection by trained personnel is the preferred method in most industries due to the difficulty of identifying defects of various types and sizes. Sensor placement for 3D automatic visual inspection is a growing computer vision and robotics area. Although some methods have been proposed, they struggle to provide high-speed inspection and complete coverage. A fundamental requirement is to inspect the product with a certain specific resolution to detect all defects of a particular size, which is still an open problem. Therefore, we propose a novel model-based approach to automatically generate optimal viewpoints guaranteeing maximal coverage of the object’s surface at a specific spatial resolution that depends on the requirements of the problem. This is done by ray tracing information from the sensor to the object to be inspected once the sensor model and the 3D mesh of the object are known. In contrast to existing algorithms for optimal viewpoints generation, our approach includes the spatial resolution within the viewpoint planning process. We demonstrate that our approach yields optimal viewpoints that achieve complete coverage and a desired spatial resolution at the same time, while the number of optimal viewpoints is kept small, limiting the time required for inspection.

Access provided by Autonomous University of Puebla. Download conference paper PDF

Similar content being viewed by others

Keywords

- Ray tracing

- Sampling density matrix

- Set coverage problem

- Spatial resolution

- Viewpoint planning

- Visibility matrix

1 Introduction

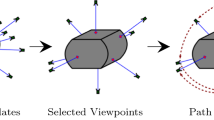

In the last decades, the automation of industrial production has emerged to play a pivotal role in the manufacturing industries. Companies have made substantial investments toward realizing fully automated processes, driven by optimizing production efficiency. An important process to maximize production performance is visual quality inspection. Automated visual inspection planning can be conceptualized as a Coverage Path Planning Problem, see [1, 18], which can be divided into two sub-problems, namely the Viewpoint Planning Problem (VPP) and the Path Planning Problem (PPP). The VPP involves generating a set of viewpoint candidates (VPCs) and selecting the minimum number of viewpoints by solving the Set Coverage Problem (SCP). The optimal solution consists of the smallest number of viewpoints necessary to achieve full coverage. The PPP involves finding a collision-free and time-optimal trajectory for a given mechanical stage or robotic manipulator that connects the optimal viewpoints generated by solving the VPP. In this work, we introduce a novel metric for selecting optimal viewpoints that ensures comprehensive object coverage at a desired spatial resolution. In industrial inspection, it is important to cover an object at a specific spatial resolution for identifying all defects with a minimal size that the user defines. The state of the art in literature for solving the VPP does not take into account any information about how each surface element is covered. Moreover, the object to be inspected is often provided as a non-homogeneous 3D mesh, and all surface elements are treated similarly, regardless of their actual size. To overcome these issues, we define a new matrix to provide maximum coverage and minimum spatial resolution. This is done by considering the area of each surface element and the number of ray intersections. We emphasize that the primary focus of this work is not on generating viewpoint candidates since many methods are already available and offer good performance. Instead, our focus is on selecting optimal viewpoints to achieve complete coverage and provide the desired spatial resolution, given a predefined set of candidates and specific requirements for the inspection task. This paper is organized as follows: In Sect. 2, we introduce the mathematical formulation of the VPP. The state of the art is presented in Sect. 3. Then, in Sect. 4, we describe our novel formulation for viewpoints evaluation and optimal viewpoints selection. In Sect. 5, we show the results obtained when applying our new formulation of the VPP compared to the standard definition. Finally, conclusions are drawn in Sect. 6.

2 Viewpoint Planning Problem Formulation

The VPP consists of a generation and a selection step. We consider a meshed object with \(s_i~\in ~\mathcal {S},\; i=1,2,\ldots , M\) surface elements and a set of VPCs defined as \(\mathcal {V}~=~\{\textbf{v}_1, \textbf{v}_2, ..., \textbf{v}_N \}\), with cardinality \(N = |\mathcal {V} |\). Each viewpoint is formulated as \(\textbf{v}_j^{\textrm{T}} = \begin{bmatrix} \textbf{c}_j^{\textrm{T}}&\textbf{g}_j^{\textrm{T}} \end{bmatrix} \in \mathbb {R}^6\) for \(j=1,\ldots ,N\) in world coordinates \(\mathcal {W}\) given by the camera position \(\textbf{c}_j\) and the gaze direction \(\textbf{g}_j\). Typically, \(M \gg N\) holds. In the context of VPP, see [14], \(\textbf{V}\in \mathbb {R}^{M\times N}\) is the so-called visibility matrix with matrix elements

Note that the row \(\textbf{V}[i,\mathcal {V}]\) of the visibility matrix corresponds to the surface element \(s_i\) of the mesh, and the column \(\textbf{V}[\mathcal {S},j]\) to the viewpoint \(\textbf{v}_j\). This is the input of the SCP together with \(\mathcal {V}\) that reads as

The SCP () constitutes a linear integer programming problem that aims to minimize (2a) subject to the constraints of (2b) and (2c). Its optimal solution is the smallest set of viewpoints necessary to fully cover the surface of the object, i.e., \(\mathcal {V^*}~=~\{\textbf{v}_k, \ldots ,\textbf{v}_l, \ldots , \textbf{v}_m \} \), with cardinality \(K \le N\) and \(\mathcal {J}^*=\{k,\ldots , l,\ldots , m\} \subseteq \{1,2, ..., N\}\). The binary variable \(y_j\) is the j-th element of the vector \(\textbf{y}\) and is weighted by the coefficient \(w_j\). Without loss of generality, we assume \(w_j=1\), which means that all viewpoints are of equal cost. Following [13], the coverage of a single viewpoint \(\textbf{v}_j\) is defined as

and the coverage due to all the optimal viewpoints computes as

This is the maximum achievable coverage considering all the viewpoint candidates.

3 State of the Art of the VPP

Over more than three decades, extensive research has been conducted on generating optimal viewpoints. Numerous algorithms have been developed for this purpose. Despite these efforts, the problem of generating optimal viewpoints still needs to be solved. No existing method can simultaneously achieve complete coverage of an object, utilize a small set of viewpoints, maintain low computational costs, and provide a high spatial resolution [6, 10, 15, 19]. A comprehensive overview of viewpoint generation methods can be found in [4]. The optimal viewpoint generation methods can be broadly classified into three main groups: space sampling [6, 12, 19], vertex sampling [3, 5, 13], and patch sampling [10, 11, 16]. Space sampling methods are often combined with Next Best View (NBV) approaches, initially introduced in [2]. NBV approaches do not require a detailed model of the object under inspection, but certain information, such as its size, can be beneficial. Various variants of NBV algorithms are documented in [7]. NBV algorithms involve determining a candidate viewpoint as the starting point of the inspection and selecting subsequent viewpoints based on the percentage of the unseen surface area of the object they offer. When the model of the object to be inspected is available, it is advantageous to leverage this knowledge and adopt an alternative approach to NBV. This alternative approach revolves around solving the SCP. The core component of this formulation is the visibility matrix, which we introduced in Sect. 2. The visibility matrix is generated by performing sight ray tracing using the sensor model, viewpoint information, and the 3D model of the object.

In the literature, a surface element is typically considered visible if it satisfies four conditions: (i) it falls within the field of view of the sensor, (ii) it is within the sensor’s depth of field, (iii) there are no occlusions along the line of sight between the viewpoint and the surface element, and (iv) the incident angle between the line of sight and the surface’s normal is below a certain threshold. This approximation helps to determine if a surface element is intersected by at least one ray originating from a given viewpoint. However, it does not provide information about the surface element’s spatial resolution or level of coverage. For an overview of criteria commonly used to evaluate view planning outcomes, we refer to [13]. The author of the aforementioned paper proposes a suitable approach for line-scan range cameras, which considers the precision and sampling density provided by the viewpoints. In this approach, a surface element is deemed visible if it falls within the sensor’s frustum, has no occlusions along the line of sight, and the estimated precision and sampling density for that specific surface element and viewpoint meet the problem’s requirements. However, one drawback of this approach is that the sampling density is evaluated per surface element from a specific viewpoint. As a result, it may identify optimal viewpoints with excessively high sampling density, leading to an unnecessarily dense representation of surface elements that does not provide significant additional information or contribute to the analysis. Moreover, this high density incurs substantial computational costs. To address these issues, we propose to define a new matrix to replace the visibility matrix to consider the spatial resolution of each surface element in relation to the entire set of VPCs.

4 Sampling Density Matrix

This paper presents a novel formulation to determine optimal viewpoints. We propose a new metric that measures not only visibility but also spatial resolution. In what follows, we will often use the term (spatial) density, which is closely related to spatial resolution. We propose a new formulation that aims to achieve high spatial resolution in the sense of a small distance between the rays striking a face and thus a high spatial density. To this end, we introduce a new matrix called the ”sampling density” matrix, which serves a similar role as the visibility matrix in the SCP but incorporates additional measurement features. This enables us to resolve the VPP to select optimal viewpoints that maximize coverage while ensuring a minimum spatial resolution \(\bar{r}_s\), tailored to the problem’s requirements. This is particularly important in visual inspections, where the objective is to detect defects of varying sizes based on the specific use case.

The visual inspection of an object involves two main components. On the left side, we have the generation of rays in the case of a perspective projection. Each ray originates from a viewpoint \(\textbf{v}_j\) and its direction is determined by the line connecting the viewpoint and the location of a pixel on the image plane denoted as \(\varDelta _\textrm{image}\). The image plane consists of \(n_x \times n_y\) pixels, with each pixel separated from one another by distances \(r_{s_x}\) and \(r_{s_y}\) along the two directions of the image plane. On the right side, we have the representation of the object to be inspected in the form of a 3D mesh with non-homogeneous surface elements \(s_i\), each having an area \(\varDelta _{s_i}\).

To accurately capture the object’s geometry and detect defects, it is necessary to consider the number of rays intersecting each surface element. However, this information is not captured by the visibility matrix. It only distinguishes between visible and non-visible surface elements based on whether at least one ray intersects with a given surface element. One drawback of relying on the visibility matrix is that in the case of a 3D model represented by non-uniform triangular faces (or surface elements), a single ray intersect may be sufficient to identify small faces, especially if they are smaller than the defect of interest. However, this may not hold for larger surface elements since more rays are required to cover larger areas. Moreover if a defect is smaller than the distance between two rays (spatial resolution), it may go undetected. One potential solution to overcome this issue would be to re-mesh the object to have uniform faces, all with a size comparable to the desired spatial resolution. However, this approach introduces geometric changes to the meshed object, negatively impacting viewpoint planning for defect detection.

The objective of this paper is to address the mentioned challenges while avoiding the need for object re-meshing. Additionally, we aim to overcome the lack of information about the achieved spatial resolution, which is influenced by both the surface element’s area and the number of rays intersecting it. In order to integrate spatial resolution in the VPP, we consider four crucial factors: (i) the image place with area \(\varDelta _\textrm{image}\), (ii) the surface area \(\varDelta _{s_i}\) of the surface element \(s_i\), (iii) the number of rays \(R_{ij}\) associated with \(\textbf{v}_j\) intersecting with \(s_i\), and (iv) the spatial resolution \(r_{s_x}\) and \(r_{s_y}\) in both directions.

As shown in Fig. 1, we consider an image with \(n_x \times n_y\) pixels. This is fitted into a rectangle of size \((r-l)\times (t-b)\) that corresponds to the image plane area \(\varDelta _\textrm{image}\) at a reference distance \(d_r\) equal to the effective focal length from the viewpoint \(\textbf{v}_j\). Mathematically, this relationship can be expressed as \(\varDelta _\textrm{image} = (r-l) \times (t-b)\). Furthermore, the spatial resolution of the sensor at the reference distance can be represented as \(r_{s_x} = (r-l) / n_x\) and \(r_{s_y} = (t-b) / n_y\), as detailed in [8]. For notation purpose, we define the inverse of the spatial resolution at reference distance d as

which we refer to as the (spatial) sampling density. To determine if a surface element. In the following, we assume the reference distance d to be equal to the working distance of the sensor. To determine if a surface element \(s_i\) is intersected by rays originating from viewpoint \(\textbf{v}_j\) and to track the number of rays hitting it, we draw inspiration from Eq. (5). We introduce the ray matrix \(\textbf{R}\). Each matrix element \(\textbf{R}_{ij}\) represents the number of rays originating from the viewpoint \(\textbf{v}_j\) that intersect the surface element \(s_i\). In addition, we introduce the surface element density as \(\delta _{s_{ij}}\), where the index i pertains to the surface element \(s_i\), and the index j pertains to the viewpoint \(\textbf{v}_j\). This variable relates the number of rays \(\textbf{R}_{ij}\) that intersect with a surface element \(s_i\) to its surface area \(\varDelta _{s_i}\). Mathematically, the surface element sampling density due to a single viewpoint \(\textbf{v}_j\) can be expressed as

Furthermore, we introduce the surface element sampling density \(\delta _{s_i}\), which is the cumulative sum of \(\delta _{s_{ij}}\) over all viewpoints \(\textbf{v}_j\), with \(i=1,\ldots ,N\), i.e.

In the problem at hand, we aim not only at full coverage but at a specific minimal sampling density per surface element \(s_i\), i.e. \(\delta _{s_i}\ge \bar{\delta }_s\), with target surface element sampling density \(\bar{\delta }_s\). At this point, we make use of the relations (6) and (7) as well as of the definition of the visibility matrix (1) to introduce the so-called sampling density matrix \(\textbf{S}~\in ~\mathbb {R}^{M \times N}\). This reads as

where \(\textbf{V}_{ij}=\{0,1\}\) are the elements of the visibility matrix \(\textbf{V}\) introduced in Sect. 2 and \(\bar{\delta }_s\) is the target surface element sampling density. In particular, \(\textbf{S}_{ij} = 0\) if there is no ray originating from \(\textbf{v}_j\) intersecting with the surface element \(s_i\) and \(\textbf{S}_{ij}=1\) if the viewpoint \(\textbf{v}_j\) can provide the required spatial density of \(s_i\) or if there is no combination of the VPCs that can guarantee \(\bar{\delta }_s\). The reason for this is that we want to determine a set of optimal viewpoints which are able to cover all the visible surface elements, even when they cannot be covered with target spatial density. In this case, we use the definition of visible as it is commonly used in the literature and as described in Sect. 3.

In the subsequent steps, we aim to utilize the sampling density matrix to identify the optimal set of viewpoints \(\mathcal {V^*}\). These viewpoints should not only maximize coverage but also provide a sampling density that exceeds the target sampling density, i.e., \(\delta _{s_i} \ge \bar{\delta _s}\) and therefore offer the required spatial resolution. To achieve this, we replace the visibility matrix with the sampling density matrix in the SCP (). Additionally, the sampling density per surface element is considered as the sum of the spatial density offered by each viewpoint. This approach helps to avoid excessively high sampling densities that could be computationally expensive.

Evaluation of the sampling density matrix \(\textbf{S}\): (left) Achieved coverage with respect to the number of surface elements of the mesh used to generate the viewpoints candidates (VPCs). Coverage is evaluated adopting the visibility matrix \(\textbf{V}\) (dashed lines) and the novel sampling density matrix (solid lines) as introduced in this work. (right) Relationship between spatial resolution and number of optimal viewpoints (#OVP) when the sampling density matrix is adopted. We indicate with a star the values that have been achieved when the visibility matrix was used.

5 Results and Discussions

Histograms showing the resolution per surface element in the case of the crankshaft (left) and hirt (right) when the optimal viewpoints are determined using the visibility matrix \(\textbf{V}\) and the novel sampling density matrix \(\textbf{S}\). The vertical line indicates the target spatial resolution \(\bar{r}_s={0.18}\,\textrm{mm}\). (Color figure online)

The simulation results were conducted using a sensor model with dimensions of \(1200 \times 1920\) pixels. The sensor has a field of view of \(38.7^\circ \times 24.75^\circ \), a depth of field ranging from 300 to \({700}\,\textrm{mm}\), and a maximum incidence angle of \(75^\circ \). Our evaluation metric considered two objectsFootnote 1 with distinct geometric characteristics, namely a hirth and a crankshaft. These objects are depicted in Fig. 2. Without loss of generality, in the following we assume a target spatial resolution \(\bar{r}_s={0.18}\,\textrm{mm}\) and a set of 500 viewpoint candidates (VPCs). These were generated with the method proposed in [17] because it achieves a higher coverage than the one achieved with the other methods for VPCs generation. Firstly, our objective is to demonstrate that using our metric allows us to generate candidates starting from a decimated mesh, resulting in higher coverage compared to when the visibility matrix is adopted. In Fig. 3, the coverage is evaluated on the original 3D mesh of the target objects, while the VPCs are generated using different decimated meshes. Typically, when decimated mesh models are used for generating VPCs, there are losses in the coverage of the original target object due to geometric differences between the meshes. However, this effect is mitigated when our metric is utilized, as demonstrated in the left subfigure of Fig. 3. The achieved coverage, shown by the solid lines representing the sampling matrix, consistently surpasses the coverage obtained with the visibility matrix (indicated by the dashed lines). Notably, the solid lines are always positioned to the right of their corresponding dashed lines, highlighting the superior coverage achieved when our metric is applied. The difference between the coverage obtained using the two metrics is more pronounced when the VPCs are generated from highly decimated meshes. The number of optimal viewpoints (#OVP) obtained using our metric is relatively small, ranging from 4 to 12 for a higher spatial resolution (smaller values) depending on the mesh model used for generating the viewpoint candidates. The number of viewpoints decreases as the spatial resolution decreases (larger values), eventually reaching a value equivalent to the number of OVPs required when the visibility matrix is employed (indicated by a star in the plots of Fig. 3), as shown in the right subfigure of the same figure. It is important to emphasize that the primary objective of our formulation for the VPP is to provide optimal viewpoints for generating a dense point cloud that satisfies the spatial resolution constraint. This is evident in Fig. 4, where a scalar field representing the number of neighbours within a sphere of radius equal to the target resolution is presented. For both objects, the hirth and crankshaft, the number of neighbours is higher when our formulation is adopted. This indicates that a higher spatial resolution is obtained, enabling the detection of smaller defects. In addition, two histograms are shown in Fig. 5 to compare the spatial resolution achieved with the visibility matrix (blue) and the novel sampling density matrix (red) per surface element. The spatial resolution is larger when our VPP formulation is employed, as shown by the concentration of red bins on the left side of the plots. On the other hand, when the visibility matrix is used, only a few surface elements meet the required spatial resolution (indicated by blue bins between 0 and \(\bar{r}_s\)), while the majority of surface elements do not meet the requirement (blue bins to the right of \(\bar{r}_s\) in Fig. 5).

6 Conclusions and Outlook

In this paper, we introduce a novel metric for evaluating viewpoint candidates by utilizing a new matrix called the sampling density matrix. This replaces the state of the art visibility matrix in the set coverage problem. Our approach takes into account both the surface area of each element of the mesh and the number of rays intersecting it, allowing it to be adapted to homogeneous and non-homogeneous 3D meshes of the target object. The new formulation of the viewpoint planning problem presented in this paper can be integrated into various model-based pipelines that employ sight ray tracing to evaluate viewpoint candidates and solve the SCP. Our method generates optimal viewpoints that ensure desired spatial resolution on a per-surface element basis. The method is versatile and well-suited for different types of visual inspection tasks, including surface and dimensional inspection [9]. The simulation experiments conducted in this study demonstrate that decimated meshes can be used to compute viewpoints for models with a high number of faces, thereby accelerating the computation of optimal viewpoints without sacrificing the achievable coverage. This is in contrast to the standard VPP formulation, where decimated meshes worsen the performance in terms of coverage. Considering that the appearance of the target object can impact visibility during inspection tasks, we propose further research to incorporate photometric information into the viewpoint evaluation process. This would require extending the ray tracing capabilities of our method to account for the reflectivity of the object and to consider the relative positions of the camera and of the illumination points with respect to the position of the object being inspected. By incorporating photometric considerations, a more comprehensive evaluation of viewpoints can be achieved.

Notes

- 1.

https://owncloud.fraunhofer.de/index.php/s/H8jV9rwGN84knzP (Accessed August 19, 2023).

References

Almadhoun, R., Taha, T., Seneviratne, L., Dias, J., Cai, G.: A survey on inspecting structures using robotic systems. Int. J. Adv. Rob. Syst. 13(6), 1–18 (2016)

Connolly, C.: The determination of next best views. In: IEEE International Conference on Robotics and Automation, vol. 2, pp. 432–435 (1985)

Englot, B.J.: Sampling-based coverage path planning for complex 3D structures. Ph.D. thesis, Massachusetts Institute of Technology (2012). http://hdl.handle.net/1721.1/78209

Gospodnetić, P., Mosbach, D., Rauhut, M., Hagen, H.: Viewpoint placement for inspection planning. Mach. Vis. Appl. 33(1), 1–21 (2022)

Gronle, M., Osten, W.: View and sensor planning for multi-sensor surface inspection. Surf. Topogr. Metrol. Prop. 4(2), 777–780 (2016)

Jing, W., Polden, J., Lin, W., Shimada, K.: Sampling-based view planning for 3D visual coverage task with unmanned aerial vehicle. In: IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 1808–1815 (2016)

Karaszewski, M., Adamczyk, M., Sitnik, R.: Assessment of next-best-view algorithms performance with various 3D scanners and manipulator. ISPRS J. Photogramm. Remote Sens. 119, 320–333 (2016)

Marschner, S., Shirley, P.: Fundamentals of Computer Graphics. CRC Press (2018)

Mohammadikaji, M.: Simulation-Based Planning of Machine Vision Inspection Systems with an Application to Laser Triangulation, vol. 17. KIT Scientific Publishing (2020)

Mosbach, D., Gospodnetić, P., Rauhut, M., Hamann, B., Hagen, H.: Feature-driven viewpoint placement for model-based surface inspection. Mach. Vis. Appl. 32(1), 1–21 (2021)

Prieto, F., Lepage, R., Boulanger, P., Redarce, T.: A CAD-based 3D data acquisition strategy for inspection. Mach. Vis. Appl. 15(2), 76–91 (2003)

Sakane, S., Sato, T.: Automatic planning of light source and camera placement for an active photometric stereo system. In: IEEE International Conference on Robotics and Automation, pp. 1080–1081 (1991)

Scott, W.R.: Model-based view planning. Mach. Vis. Appl. 20(1), 47–69 (2009)

Scott, W.R., Roth, G., Rivest, J.F.: Performance-oriented view planning for model acquisition. In: International Symposium on Robotics, pp. 212–219 (2000)

Scott, W.R., Roth, G., Rivest, J.F.: View planning for automated three-dimensional object reconstruction and inspection. ACM Comput. Surv. (CSUR) 35(1), 64–96 (2003)

Sheng, W., Xi, N., Tan, J., Song, M., Chen, Y.: Viewpoint reduction in vision sensor planning for dimensional inspection. In: IEEE International Conference on Robotics, Intelligent Systems and Signal Processing, vol. 1, pp. 249–254 (2003)

Staderini, V., Glück, T., Schneider, P., Mecca, R., Kugi, A.: Surface sampling for optimal viewpoint generation. In: 2023 IEEE 13th International Conference on Pattern Recognition Systems (ICPRS), pp. 1–7 (2023)

Tan, C.S., Mohd-Mokhtar, R., Arshad, M.R.: A comprehensive review of coverage path planning in robotics using classical and heuristic algorithms. IEEE Access 9, 119310–119342 (2021)

Tarbox, G.H., Gottschlich, S.N.: Planning for complete sensor coverage in inspection. Comput. Vis. Image Underst. 61(1), 84–111 (1995)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Staderini, V., Glück, T., Mecca, R., Schneider, P., Kugi, A. (2023). Spatial Resolution Metric for Optimal Viewpoints Generation in Visual Inspection Planning. In: Christensen, H.I., Corke, P., Detry, R., Weibel, JB., Vincze, M. (eds) Computer Vision Systems. ICVS 2023. Lecture Notes in Computer Science, vol 14253. Springer, Cham. https://doi.org/10.1007/978-3-031-44137-0_17

Download citation

DOI: https://doi.org/10.1007/978-3-031-44137-0_17

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-44136-3

Online ISBN: 978-3-031-44137-0

eBook Packages: Computer ScienceComputer Science (R0)