Abstract

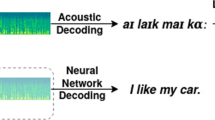

Automatic speech recognition (ASR) is a challenging deep learning problem, and transformer architectures have substantially improved performance on this task. However, transformer-based models are computationally expensive and comparatively large, which makes them difficult to deploy on memory-constrained devices. Quantization is one of the most promising approaches for reducing a neural network's size and latency. In this paper, we focus on optimizing an ASR transformer model by applying quantization and knowledge distillation. We apply state-of-the-art (SotA) quantization methods to the baseline ASR model and identify the sensitive layers that contribute most to the performance drop. We propose improvements that accelerate the convergence of quantization methods and enhance the quality of the quantized representation. Our modified 2-bit model shows less than a 1% drop in word error rate (WER) compared to the float model on the LibriSpeech dataset.
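To make the notion of a 2-bit model concrete, the following is a minimal sketch of generic uniform symmetric quantization, in which every weight is mapped to one of four integer levels and then rescaled. It is an illustration only and not the method used in the paper; the function name `quantize_symmetric` and the max-based choice of step size are assumptions made for this example.

```python
def quantize_symmetric(weights, num_bits=2):
    """Uniformly quantize a list of floats to signed integer codes.

    Hypothetical helper for illustration; not the paper's algorithm.
    Returns the integer codes, the dequantized values, and the step size.
    """
    qmin = -(2 ** (num_bits - 1))     # lowest code, e.g. -2 for 2 bits
    qmax = 2 ** (num_bits - 1) - 1    # highest code, e.g. 1 for 2 bits
    max_abs = max(abs(w) for w in weights)
    # Assumed step size: the largest magnitude lands on the grid edge |qmin|.
    scale = max_abs / -qmin if max_abs > 0 else 1.0
    # Round to the nearest level, then clamp into the representable range.
    codes = [min(qmax, max(qmin, round(w / scale))) for w in weights]
    dequantized = [c * scale for c in codes]
    return codes, dequantized, scale


if __name__ == "__main__":
    codes, dequantized, scale = quantize_symmetric([-1.0, -0.4, 0.1, 0.8])
    print(codes, dequantized, scale)
```

With only four levels available, the value 0.8 is clamped to the top level and reconstructed as 0.5, which illustrates why low-bit quantization can degrade accuracy unless the step size and sensitive layers are handled carefully.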

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Zharikov, I., Krivorotov, I., Alexeev, V., Alexeev, A., Odinokikh, G. (2023). Low-Bit Quantization of Transformer for Audio Speech Recognition. In: Kryzhanovsky, B., Dunin-Barkowski, W., Redko, V., Tiumentsev, Y. (eds) Advances in Neural Computation, Machine Learning, and Cognitive Research VI. NEUROINFORMATICS 2022. Studies in Computational Intelligence, vol 1064. Springer, Cham. https://doi.org/10.1007/978-3-031-19032-2_12

DOI: https://doi.org/10.1007/978-3-031-19032-2_12

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19031-5

Online ISBN: 978-3-031-19032-2

eBook Packages: Intelligent Technologies and Robotics (R0)