Abstract

The user’s palm plays an important role in object detection and manipulation. The design of a robust multi-contact tactile display must consider the sensation and perception of the stimulated area, aiming to deliver the right stimuli at the correct location. To the best of our knowledge, no study has collected human palm data for this purpose. The objective of this work is to introduce a method to investigate the user’s palm sensations during the interaction with objects. An array of fifteen Force Sensitive Resistors (FSRs) was placed on the user’s palm to capture the area of interaction and the normal force delivered to four different convex surfaces. Experimental results showed the active areas of the palm during the interaction with each of the surfaces at different forces. The obtained results were verified in a pattern recognition experiment in which users discriminated the applied force. The patterns were delivered in correlation with the data acquired in the previous experiment. The overall recognition rate is 84%, which means that users can distinguish the four patterns with high confidence. The obtained results can be applied in the development of multi-contact wearable tactile and haptic displays for the palm, and in training a machine-learning algorithm to predict stimuli, aiming to achieve a highly immersive experience in Virtual Reality.

1 Introduction

Virtual Reality (VR) experiences are used by an increasing number of people, through the introduction of devices that are more accessible to the market. Many VR applications have been launched and are becoming parts of our daily life, such as simulators and games. To deliver a highly immersive VR experience, a significant number of senses have to be stimulated simultaneously according to the activity that the users perform in the VR environment.

The tactile information from haptic interfaces improves the user’s perception of virtual objects. The haptic devices introduced in [1,2,3,4,5] provide haptic feedback at the fingertips and increase immersion in VR.

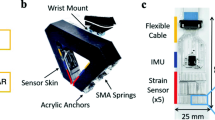

Fig. 1. a) Sensor array at the user’s palm to record the tactile perception of objects. Fifteen FSRs were located according to the physiology of the hand, using the points over the joints of the bones, and the location of the pollicis and digiti minimi muscles. b) Experimental setup. Each object was placed on the top of the FT300 force sensor to measure the applied normal force. The data from the fifteen FSRs and the force sensor are visualized in real-time in a GUI and recorded for future analysis.

Many operations with the hands involve more than one contact point between the user’s fingers, palm, and the object, e.g., grasping, detection, and manipulation of objects. To improve immersion and to keep the interaction natural, multiple interactive contact points have to be implemented [6, 7]. Choi et al. [8, 9] introduced devices that successfully deliver the sensation of weight and grasping of objects in VR using multi-contact stimulation. Nevertheless, the proposed haptic displays provide stimuli only at the fingers and not on the palm.

The palm of the users plays an essential role in the manipulation and detection of objects. The force provided by the objects to the user’s palms determines the contact, weight, shape, and orientation of the object. At the same time, the displacement of the objects on the palm can be perceived by the slippage produced by the forces in different directions.

A significant number of Rapidly Adapting (RA) tactile receptors are present on the glabrous skin of the hand, 17,023 units on average [10]. The density of receptors in the fingertips is \(141\ \mathrm{units/cm}^2\), which is higher than the density in the palm, \(25\ \mathrm{units/cm}^2\). However, the lower density of the palm is compensated by its large area: the palm holds \(30\%\) of all the RA receptors in the glabrous skin of the hand. Reaching the same number of receptors at the fingers would require covering the surface of all five fingertips. For this reason, it is imperative to take advantage of the palm and to develop devices that stimulate the largest possible area with multi-contact points and multi-modal stimuli. Son et al. [11] introduced a haptic device that provides haptic feedback to the thumb, the middle finger, the index finger, and the palm. This multi-contact device provides kinesthetic stimuli (to the fingers) and tactile stimuli (at the palm) to improve the haptic perception of a large virtual object.

The real object perception using haptic devices depends on the mechanical configuration of the devices, the contact point location on the user’s hands, and the correctness of the information delivered. To deliver correct information by the system introduced by Pacchierotti et al. [12], their haptic display was calibrated using a BioTac device.

There are some studies on affective haptics engaging the palm area. In [13], the pressure distribution on the human back generated by the palms of the partner during hugging was analyzed. Toet et al. [14] studied the palm area that has to be stimulated during the physical experience of holding hands. They presented the areas of the hands that are stimulated during hand-holding in diverse situations. However, the pattern extraction method is not defined, and the results cover only parent-child hand-holding conditions.

Son et al. [15] presented a set of patterns that represent the interaction of the human hand with five objects. Nevertheless, the investigated contact point distribution is limited to the points available in their device, and the different sizes of the human hands and the force in the interaction are not considered.

In the present work, we study the tactile engagement of the user’s palm during interaction with large surfaces. The objective of this work is to introduce a method to investigate the sensation on the user’s palm during the interaction with objects by finding the area of interaction and the normal force delivered. This information is used for the reproduction of tactile interaction and the development of multi-contact wearable tactile displays. The relation between the applied normal force and the location of the contact area should be found, considering the deformation of the palm as it interacts with different surfaces.

2 Data Acquisition Experiment

A sensor array was developed to investigate the palm area that is engaged during the interaction with the different surfaces. Fifteen FSRs were located according to the physiology of the hand, using the points over the bones’ joints, and the location of the pollicis and digiti minimi muscles. In Fig. 1 the distribution of the fifteen FSRs is shown.

The fifteen FSRs are held by a transparent adhesive contact paper that is attached to the user’s skin. The transparent adhesive paper is flexible enough to allow users to open and close their hands freely. The shape of the user’s hand is taken into account every time the array is used by a different user. The FSRs are attached to the user’s palm as follows: a square of transparent adhesive paper is attached to the skin of the user, and the points where the FSRs must be placed are marked with a permanent marker. The transparent adhesive paper is then removed from the hand, and the fifteen FSRs are placed at the marked points. The FSRs are connected to an ESP8266 microcontroller through a multiplexer. The microcontroller receives the data from the FSRs and sends it to the computer by serial communication.
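On the computer side, the serial stream from the microcontroller has to be split into one reading per sensor. The paper does not specify the serial protocol, so the following is only a minimal sketch under an assumed frame format: one text line per sample containing fifteen comma-separated integer readings. The function name `parse_fsr_frame` and the frame layout are hypothetical.

```python
from typing import List

NUM_FSRS = 15  # number of sensors in the palm array (from the text)


def parse_fsr_frame(line: str, num_sensors: int = NUM_FSRS) -> List[int]:
    """Parse one serial line into a list of raw FSR readings.

    Assumed (hypothetical) frame format: comma-separated integers,
    one per sensor, terminated by a newline. A ValueError is raised
    for malformed frames so corrupted lines are not silently recorded.
    """
    fields = line.strip().split(",")
    if len(fields) != num_sensors:
        raise ValueError(f"expected {num_sensors} values, got {len(fields)}")
    return [int(field) for field in fields]
```

Rejecting frames with the wrong number of fields is a deliberate choice here: a dropped byte on the serial link would otherwise shift readings between sensors.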

The hand deformation caused by the force applied to the objects is measured in this study. The force applied to the objects is detected by a Robotiq FT300 6-DOF force/torque sensor. This sensor was chosen because of its 100 Hz data output rate and its low signal noise of \(0.1\ \mathrm{N}\) in \(F_z\), which provided enough data for the purposes of this study. The sensor was fixed to a massive and stiff table (Siegmund Professional S4 welding table) using an acrylic base. An object holder was designed to mount the objects on top of the FT300 force sensor.

2.1 Experimental Procedure

Four surfaces with different diameters were selected: three of them are balls, and one is a flat surface. The different diameters are used to vary the posture of the hand: as the diameter of the surface increases, the palm opens further. The diameters of the balls are 65 mm, 130 mm, and 240 mm.

Participants: Ten volunteers completed the tests, four women and six men, aged from 21 to 30 years. None of them reported any deficiencies in sensorimotor function, and all of them were right-handed. The participants signed informed consent forms.

Experimental Setup: Each object was placed on the top of the FT300 force sensor to measure the applied normal force. The data from the fifteen FSRs and the force sensor are visualized in real-time in a graphical user interface (GUI) and recorded for future analysis.

Method: We measured the size of each participant’s hand to create a sensor array matched to the hand size. The participants were asked to wear the sensor array on the palm and to interact with the objects. Subjects were asked to press each object in the normal direction of the sensor five times, increasing the force gradually up to the maximum force they could apply. After five repetitions, the object was changed, and the force sensor was re-calibrated.

2.2 Experimental Results

The force from each of the fifteen FSRs and from the FT300 force sensor was recorded at a rate of 15 Hz. To present the results, the FSR data were analyzed at normal forces of 10 N, 20 N, 30 N, and 40 N. For each force level and surface, the average value of each FSR was calculated. The average sensor values corresponding to the normal force for each surface are presented in Fig. 2.

Fig. 2. Number and location of contact points engaged during the palm-object interaction. The data from the FSRs were analyzed according to the normal force on each surface. The average values of each FSR were calculated. The active points are represented on a scale from white to red. The maximum recorded value for each surface was used as the normalization factor; thus, the maximum recorded value represents \(100\%\). Rows A, B, C, and D represent the results for a small-size ball, a medium-size ball, a large-size ball, and a flat surface, respectively. (Color figure online)
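The averaging and normalization steps described above can be sketched as follows. This is an illustration rather than the authors' processing code: the tolerance window around each target force (`tolerance`) is an assumption, since the paper does not state how samples were assigned to a force level, and the normalization here uses the largest averaged value for the surface, which may differ from the exact maximum used in the paper. All function names are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

# One recorded sample: (normal force in N, readings of all FSR channels)
Sample = Tuple[float, Sequence[float]]


def average_at_forces(
    samples: Sequence[Sample],
    targets: Sequence[float] = (10.0, 20.0, 30.0, 40.0),
    tolerance: float = 1.0,  # assumed window in N, not from the paper
) -> Dict[float, List[float]]:
    """Average each FSR channel over samples close to each target force."""
    result: Dict[float, List[float]] = {}
    for target in targets:
        selected = [fsr for force, fsr in samples
                    if abs(force - target) <= tolerance]
        if not selected:
            continue  # no data recorded near this force level
        n_channels = len(selected[0])
        result[target] = [
            sum(frame[i] for frame in selected) / len(selected)
            for i in range(n_channels)
        ]
    return result


def normalize_by_surface_max(
    averages: Dict[float, List[float]]
) -> Dict[float, List[float]]:
    """Scale values so the largest one for this surface equals 100 %."""
    peak = max(max(values) for values in averages.values())
    if peak == 0:
        return {t: [0.0] * len(v) for t, v in averages.items()}
    return {t: [100.0 * v / peak for v in values]
            for t, values in averages.items()}
```

Running both functions once per surface would yield one row of color-coded values per force level, on the same 0 to 100 % scale as the figure.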

From Fig. 2 we can see that the number of contact points increases with the applied force. The maximum number of sensors is activated when 40 N is applied to the medium-size ball, and the minimum number when 10 N is applied to the large-size ball and the flat surface.

The surface dimension plays an important role in the activation of the FSRs. The surfaces that conform better to the posture of the hand activate more points. The shape of the palm is related to the applied normal force and to the object surface. When a normal force of 10 N is applied to the small-size ball, the number of contact points is almost twice that of the other surfaces, but it increases only to seven at 40 N. The number of contact points with the large-size ball is two at 10 N and increases to eight at 40 N. In contrast, the flat surface does not activate the points at the center of the palm.
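Counting contact points requires a rule for when a sensor is considered active. The paper does not state this rule, so the sketch below assumes a simple threshold on values normalized to 0 to 100 %; the function name `count_contact_points` and the default threshold of 5 % are hypothetical.

```python
from typing import Sequence


def count_contact_points(
    normalized_values: Sequence[float],
    threshold_percent: float = 5.0,  # assumed activation threshold
) -> int:
    """Count the sensors whose normalized value reaches the threshold."""
    return sum(1 for value in normalized_values
               if value >= threshold_percent)
```

The resulting count depends directly on the chosen threshold, so any comparison between surfaces should use the same value throughout.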

3 User’s Perception Experiment

To verify whether the data obtained in the previous experiment can be used to render haptic feedback in other applications, an array of fifteen vibromotors was developed. The vibromotors were placed at the same positions as the FSRs, as shown in Fig. 1. The vibration intensity was delivered in correlation with the data acquired in Sect. 2, with the aim of discriminating the force applied by the hand to the large-size ball (object C).

3.1 Experimental Procedure

The results from the large-size ball were selected for the design of four patterns (Fig. 3). The patterns simulate the palm-ball interaction from 0.0 N to 10 N, 20 N, 30 N, and 40 N, respectively. Each pattern was delivered in three steps of 0.5 s each. For instance, in the 0.0 N to 10 N interaction, the FSR values at 3 N were first mapped to a vibration intensity and delivered to each vibromotor for 0.5 s; the same process was then repeated for the FSR values at 6 N and 10 N.
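The three-step pattern construction can be sketched as below. The paper does not describe the mapping from FSR values to vibration intensity, so a linear mapping onto an 8-bit PWM range is assumed here purely for illustration; `MAX_PWM`, `fsr_to_intensity`, and `build_pattern` are hypothetical names. Only the 0.5 s step duration and the three-step structure come from the text.

```python
from typing import List, Sequence, Tuple

MAX_PWM = 255          # assumed 8-bit drive range for the vibromotors
STEP_DURATION_S = 0.5  # duration of each step (from the text)


def fsr_to_intensity(
    fsr_values: Sequence[float], fsr_max: float, max_pwm: int = MAX_PWM
) -> List[int]:
    """Linearly map FSR values to intensities in [0, max_pwm].

    Values are clamped to [0, fsr_max] before scaling (assumed mapping).
    """
    if fsr_max <= 0:
        raise ValueError("fsr_max must be positive")
    return [
        round(max_pwm * min(max(value, 0.0), fsr_max) / fsr_max)
        for value in fsr_values
    ]


def build_pattern(
    step_frames: Sequence[Sequence[float]], fsr_max: float
) -> List[Tuple[float, List[int]]]:
    """Build a pattern as a list of (duration in s, intensities) steps.

    step_frames holds one frame of average FSR values per step, e.g. the
    frames at 3 N, 6 N, and 10 N for the 0.0 N to 10 N pattern.
    """
    return [(STEP_DURATION_S, fsr_to_intensity(frame, fsr_max))
            for frame in step_frames]
```

A playback loop would then write each intensity list to the motor drivers and hold it for the step duration before moving to the next step.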

Participants: Seven volunteers completed the tests, two women and five men, aged from 23 to 30 years. None of them reported any deficiencies in sensorimotor function, and all of them were right-handed. The participants signed informed consent forms.

Experimental Setup: The user was asked to sit in front of a desk and to wear the array of fifteen vibromotors on the right palm. An application was developed in Python to deliver the four patterns and to record the users’ answers for later analysis.

Method: We measured the size of each participant’s hand to create a vibromotor array matched to the hand size. Before the experiment, a training session was conducted in which each of the patterns was delivered three times. During the experiment, each pattern was delivered to the participant’s palm five times in random order. After the delivery of each pattern, the subject was asked to specify the number that corresponds to the delivered pattern. A table with the patterns and the corresponding numbers was provided for the experiment.
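The randomized presentation order described in the method (four patterns, five repetitions each) can be generated as in the following sketch. The paper does not say how the order was randomized, so a plain shuffle of the full trial list is assumed; `make_trial_sequence` and its `seed` parameter are hypothetical.

```python
import random
from typing import List, Optional


def make_trial_sequence(
    n_patterns: int = 4, repetitions: int = 5, seed: Optional[int] = None
) -> List[int]:
    """Return pattern numbers (1..n_patterns) in random order.

    Every pattern appears exactly `repetitions` times. A seed can be
    passed to make the order reproducible for a given participant.
    """
    rng = random.Random(seed)
    trials = [pattern
              for pattern in range(1, n_patterns + 1)
              for _ in range(repetitions)]
    rng.shuffle(trials)
    return trials
```

With the default arguments this yields 20 trials per participant.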

3.2 Experimental Results

The results of the patterns experiment are summarized in a confusion matrix (see Table 1).
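The overall recognition rate follows from a confusion matrix as the sum of its diagonal divided by the total number of trials. The sketch below illustrates that calculation; the 2x2 matrix in the usage lines is a toy placeholder and is not data from this study. With seven participants, four patterns, and five repetitions there are \(7 \times 4 \times 5 = 140\) trials, so a rate of 84.29% would correspond to 118 correct answers; this count is inferred from the reported rate, not stated in the paper.

```python
from typing import Sequence


def recognition_rate(confusion: Sequence[Sequence[int]]) -> float:
    """Fraction of correct answers in a square confusion matrix.

    Rows are delivered patterns and columns are answered patterns, so
    the diagonal holds the correctly recognized trials.
    """
    total = sum(sum(row) for row in confusion)
    if total == 0:
        raise ValueError("confusion matrix contains no trials")
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    return correct / total


# Toy placeholder matrix (NOT the study's Table 1): 9 of 10 correct.
toy_rate = recognition_rate([[4, 1], [0, 5]])

# Consistency check on the trial count inferred above.
inferred_percent = round(100 * 118 / 140, 2)
```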

The perception of the patterns was analyzed using one-factor ANOVA without replication with a chosen significance level of \(\alpha = 0.05\). The \(p\)-value obtained in the ANOVA is 0.0433, the critical value \(F_{\mathrm{crit}}\) is 3.0088, and the \(F\) value is 3.1538. Since \(F > F_{\mathrm{crit}}\) and \(p < \alpha\), we can confirm that a statistically significant difference exists between the recognized patterns. The paired t-tests showed statistically significant differences between pattern 1 and pattern 2 (\(p=0.0488 < 0.05\)), and between pattern 1 and pattern 3 (\(p=0.0082 < 0.05\)). The overall recognition rate is 84%, which means that users can distinguish the four patterns with high confidence.
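For readers who want to reproduce this kind of test, the \(F\) statistic of a one-factor ANOVA can be computed as in the sketch below. It is a generic textbook formulation written without external libraries, not the authors' analysis script, and the per-participant scores are not given in the paper, so no study data are used. With four patterns and seven participants the degrees of freedom are \((3, 24)\), which is consistent with the reported critical value of 3.0088 at \(\alpha = 0.05\).

```python
from typing import Sequence, Tuple


def one_way_anova_f(
    groups: Sequence[Sequence[float]]
) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-factor ANOVA.

    Each inner sequence holds the observations of one group, e.g. the
    per-participant recognition scores for one pattern.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    means = [sum(g) / len(g) for g in groups]
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Variation of group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, means))
    # Variation of observations around their own group mean.
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, means) for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_value = (ss_between / df_between) / (ss_within / df_within)
    return f_value, df_between, df_within
```

The null hypothesis of equal group means is rejected when the returned \(F\) exceeds the critical value for the two degrees of freedom at the chosen significance level.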

4 Conclusions and Future Work

The sensations of the users were analyzed to design robust multi-contact tactile displays. An array of fifteen Interlink Electronics FSR™ 400 Force Sensitive Resistors (FSRs) was developed for the user’s palm, and an FT300 force sensor was used to detect the normal force applied by the users to the surfaces. Using the designed FSR array, the interaction between the hand and four convex surfaces with different diameters was analyzed over a discrete range of normal forces. It was observed that the hand deforms under the normal force. The experimental results revealed the active areas of the palm during the interaction with each of the surfaces at different forces. This information leads to the optimal contact point locations in the design of a multi-contact wearable tactile and haptic display to achieve a highly immersive experience in VR. The experiment on tactile pattern detection revealed a high recognition rate of 84.29%.

In the future, we are planning to run a new human subject study to validate the results of this work with a psychophysics experiment. Moreover, we will extend the collected data by sensing new objects. With the new dataset and our device, we can design an algorithm capable of predicting the contact points of an unknown object. The obtained results can be applied in the development of multi-contact wearable tactile and haptic displays for the palm.

The results of the present work can be applied to telexistence technology: the FSR array senses objects, and the interaction points are rendered by a haptic display to a second user. The same approach can be used for affective haptics.

References

1. Chinello, F., Malvezzi, M., Pacchierotti, C., Prattichizzo, D.: Design and development of a 3RRS wearable fingertip cutaneous device. In: IEEE/ASME International Conference on Advanced Intelligent Mechatronics, pp. 293–298 (2015)

2. Kuchenbecker, K.J., Ferguson, D., Kutzer, M., Moses, M., Okamura, A.M.: The touch thimble: providing fingertip contact feedback during point-force haptic interaction. In: HAPTICS 2008: Proceedings of the 2008 Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems (March), pp. 239–246 (2008)

3. Gabardi, M., Solazzi, M., Leonardis, D., Frisoli, A.: A new wearable fingertip haptic interface for the rendering of virtual shapes and surface features. In: Haptics Symposium (HAPTICS), pp. 140–146. IEEE (2016)

4. Prattichizzo, D., Chinello, F., Pacchierotti, C., Malvezzi, M.: Towards wearability in fingertip haptics: a 3-DoF wearable device for cutaneous force feedback. IEEE Trans. Haptics 6(4), 506–516 (2013)

5. Minamizawa, K., Prattichizzo, D., Tachi, S.: Simplified design of haptic display by extending one-point kinesthetic feedback to multipoint tactile feedback. In: 2010 IEEE Haptics Symposium, pp. 257–260, March 2010

6. Frisoli, A., Bergamasco, M., Wu, S.L., Ruffaldi, E.: Evaluation of multipoint contact interfaces in haptic perception of shapes. In: Barbagli, F., Prattichizzo, D., Salisbury, K. (eds.) Multi-point Interaction with Real and Virtual Objects. Springer Tracts in Advanced Robotics, vol. 18, pp. 177–188. Springer, Heidelberg (2005). https://doi.org/10.1007/11429555_11

7. Pacchierotti, C., Chinello, F., Malvezzi, M., Meli, L., Prattichizzo, D.: Two finger grasping simulation with cutaneous and kinesthetic force feedback. In: Isokoski, P., Springare, J. (eds.) Haptics: Perception, Devices, Mobility, and Communication, pp. 373–382. Springer, Heidelberg (2012). https://doi.org/10.1007/978-3-642-31401-8_34

8. Choi, I., Culbertson, H., Miller, M.R., Olwal, A., Follmer, S.: Grabity: a wearable haptic interface for simulating weight and grasping in virtual reality. In: Proceedings of the 30th Annual ACM Symposium on User Interface Software and Technology - UIST 2017, pp. 119–130 (2017)

9. Choi, I., Hawkes, E.W., Christensen, D.L., Ploch, C.J., Follmer, S.: Wolverine: a wearable haptic interface for grasping in virtual reality. In: IEEE International Conference on Intelligent Robots and Systems, pp. 986–993 (2016)

10. Johansson, R.S., Vallbo, A.B.: Tactile sensibility in the human hand: relative and absolute densities of four types of mechanoreceptive units in glabrous skin. J. Physiol. 286, 283–300 (1979)

11. Son, B., Park, J.: Haptic feedback to the palm and fingers for improved tactile perception of large objects. In: Proceedings of the 31st Annual ACM Symposium on User Interface Software and Technology, UIST 2018, pp. 757–763. Association for Computing Machinery, New York (2018)

12. Pacchierotti, C., Prattichizzo, D., Kuchenbecker, K.J.: Cutaneous feedback of fingertip deformation and vibration for palpation in robotic surgery. IEEE Trans. Biomed. Eng. 63(2), 278–287 (2016). https://doi.org/10.1109/TBME.2015.2455932

13. Tsetserukou, D., Sato, K., Tachi, S.: ExoInterfaces: novel exosceleton haptic interfaces for virtual reality, augmented sport and rehabilitation. In: Proceedings of the 1st Augmented Human International Conference, pp. 1–6. USA (2010)

14. Toet, A., et al.: Reach out and touch somebody’s virtual hand: affectively connected through mediated touch (2013)

15. Son, B., Park, J.: Tactile sensitivity to distributed patterns in a palm. In: Proceedings of the 20th ACM International Conference on Multimodal Interaction, ICMI 2018, pp. 486–491. ACM, New York (2018)

Rights and permissions

Open Access This chapter is licensed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if changes were made.

The images or other third party material in this chapter are included in the chapter's Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the chapter's Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Copyright information

© 2020 The Author(s)

About this paper

Cite this paper

Altamirano Cabrera, M., Heredia, J., Tsetserukou, D. (2020). Tactile Perception of Objects by the User’s Palm for the Development of Multi-contact Wearable Tactile Displays. In: Nisky, I., Hartcher-O’Brien, J., Wiertlewski, M., Smeets, J. (eds) Haptics: Science, Technology, Applications. EuroHaptics 2020. Lecture Notes in Computer Science, vol 12272. Springer, Cham. https://doi.org/10.1007/978-3-030-58147-3_6

DOI: https://doi.org/10.1007/978-3-030-58147-3_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-58146-6

Online ISBN: 978-3-030-58147-3

eBook Packages: Computer Science, Computer Science (R0)