Abstract

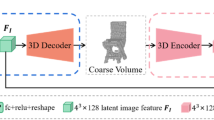

Reconstructing a 3D model from a single image is challenging. Nevertheless, recent advances in deep learning have demonstrated exciting progress toward single-view 3D object reconstruction. However, successful training of a deep learning model requires an extensive dataset of geometrically aligned pairs of 3D models and color images. While manual dataset collection using photogrammetry or laser scanning is challenging, 3D modeling provides a promising method for data generation. Still, a deep model should be able to generalize from synthetic to real data. In this paper, we evaluate how synthetic data in the training dataset affects the performance of the trained model. We use the recently proposed Z-GAN model as the starting point for our research. The Z-GAN model leverages generative adversarial training and a frustum voxel model to provide state-of-the-art results in single-view voxel model prediction. We generated a new dataset with 2k synthetic color images and voxel models. We train the Z-GAN model on synthetic, real, and mixed images, and compare the performance of the trained models on real and synthetic images. We provide a qualitative and quantitative evaluation in terms of the Intersection over Union between the ground truth and predicted voxel models. The evaluation demonstrates that the model trained only on synthetic data fails to generalize to real color images. Nevertheless, a combination of synthetic and real data improves the performance of the trained model. We have made our training dataset publicly available (http://www.zefirus.org/SyntheticVoxels).
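The Intersection over Union metric mentioned above can be illustrated with a minimal sketch. It assumes each voxel model is represented as a set of occupied integer voxel indices; the function name `voxel_iou`, the sparse set representation, and the handling of two empty models are illustrative assumptions, not the evaluation code used in the paper.

```python
from typing import Iterable, Set, Tuple

# A voxel is identified by its integer (x, y, z) index in the grid (assumed representation).
Voxel = Tuple[int, int, int]


def voxel_iou(predicted: Iterable[Voxel], ground_truth: Iterable[Voxel]) -> float:
    """Return the Intersection over Union of two sets of occupied voxels."""
    pred: Set[Voxel] = set(predicted)
    truth: Set[Voxel] = set(ground_truth)
    union = pred | truth
    if not union:
        # Both models are empty; scoring this as a perfect match is an assumption.
        return 1.0
    return len(pred & truth) / len(union)


# Toy example: two 2x2x1 slabs, the prediction shifted by one voxel along x.
ground_truth = {(x, y, 0) for x in range(2) for y in range(2)}
predicted = {(x, y, 0) for x in range(1, 3) for y in range(2)}

# Two voxels are shared and six are occupied in total.
print(voxel_iou(predicted, ground_truth))
```

For dense occupancy grids, the same ratio is typically computed with element-wise logical AND and OR over boolean arrays; the set form is used here only to keep the sketch free of external dependencies.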

Acknowledgments

The reported study was funded by the Russian Foundation for Basic Research (RFBR) according to project No. 17-29-04410, and by the Russian Science Foundation (RSF) according to research project No. 19-11-11008.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Kniaz, V.V., Moshkantsev, P.V., Mizginov, V.A. (2020). Deep Learning a Single Photo Voxel Model Prediction from Real and Synthetic Images. In: Kryzhanovsky, B., Dunin-Barkowski, W., Redko, V., Tiumentsev, Y. (eds) Advances in Neural Computation, Machine Learning, and Cognitive Research III. NEUROINFORMATICS 2019. Studies in Computational Intelligence, vol 856. Springer, Cham. https://doi.org/10.1007/978-3-030-30425-6_1

DOI: https://doi.org/10.1007/978-3-030-30425-6_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-30424-9

Online ISBN: 978-3-030-30425-6

eBook Packages: Intelligent Technologies and Robotics (R0)