Abstract

In healthcare, a considerable number of patients experience incidents, and accident models have been used to understand them. However, it is mostly traditional accident models that have been used to understand how incidents occur and how future incidents can be prevented. While other industries also use traditional accident models and have built incident investigation techniques on them, such models and techniques have been criticised as insufficient for understanding and investigating incidents in complex systems. This paper provides insight into the understanding of patient safety incidents by highlighting the problems with using traditional accident models to investigate them, and gives a number of recommendations. We hope that this paper will trigger further discussion on the fundamental concepts of incident investigation in healthcare.

Introduction

In healthcare, the incident rate is estimated to be between 6 and 25% of patient admissions (Baker 2004; Landgrigan et al. 2010). Incidents lead not only to patient harm, but also to financial loss, psychological harm to healthcare staff, reputational damage and so on (Davies 2014; Barach and Small 2000). Considerable efforts have, therefore, been made to minimise the occurrence of incidents in healthcare (Hayes et al. 2014; Vincent and Amalberti 2016; Sujan et al. 2015). For instance, hospitals have adopted incident reporting systems from aviation (Mitchell et al. 2016), and have used a range of techniques to investigate incidents (NHS England 2015; Woodward et al. 2004; House of Commons 2015; Center for Chemical Process Safety 2010). However, such efforts have not demonstrated a significant contribution to preventing incidents, and have been criticised for underreporting, the quality of the reports, inadequate use of techniques and insufficient investigations (Sari et al. 2007; NHS 2015; Vincent 2007; Macrae 2016; Lawton and Parker 2002; Barach and Small 2000). Indeed, researchers have discussed problems with incident investigations, often focusing on the use of Root Cause Analysis (Peerally et al. 2016; Card 2017). However, there is little evidence highlighting the problems with the accident models on which such techniques were built (Perneger 2005; Reason et al. 2006).

Accident Models

Accident models have been developed to understand incidents, and so to prevent similar ones. Hollnagel (2004) divides these models into three categories: sequential (e.g. the domino model) (Heinrich 1931), epidemiological (e.g. the Swiss Cheese Model) (Reason 1997a, b) and systemic (e.g. FRAM and STAMP) (Hollnagel 2004; Leveson 2004). Figure 1 shows representative examples of their use when investigating a wrong-medication incident in a hospital.

Sequential models imply that accidents transpire as a result of events occurring in a specific order (Hollnagel 2004). A specific type of accident potentially follows the same route and series of events (Huang et al. 2004). The domino model was the first model to express this way of thinking. According to the domino model, all accidents result from the social environment leading to a fault of the person, which results in unsafe acts, which lead to an accident and, in turn, an injury (Heinrich 1931). The model suggests that accidents can be prevented by removing one of these five blocks so that the domino effect is interrupted (Hollnagel 2004). Among these five blocks, Heinrich focused on the removal of the fault of the person. His study found that 88% of accidents result from the unsafe acts of persons, 10% result from unsafe machines and 2% are unavoidable (Heinrich 1931). Thus, this model considers humans to be the main cause of accidents.
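The logic of the domino model can be illustrated with a minimal sketch. The block names below paraphrase the five blocks described above, and the function names are hypothetical, introduced only for illustration; this is not a representation of any investigation technique.

```python
# Illustrative sketch only: the five blocks paraphrase the domino model
# described in the text; function names are hypothetical.
DOMINOES = [
    "social environment",
    "fault of the person",
    "unsafe act",
    "accident",
    "injury",
]


def fallen_blocks(removed=None):
    """Return the blocks that fall, in order, until a removed block stops the chain."""
    fallen = []
    for block in DOMINOES:
        if block == removed:
            break  # the gap interrupts the domino effect
        fallen.append(block)
    return fallen


def injury_occurs(removed=None):
    """True only if the chain runs all the way to the final block."""
    return fallen_blocks(removed)[-1:] == ["injury"]


if __name__ == "__main__":
    print(injury_occurs())                       # no block removed
    print(injury_occurs(removed="unsafe act"))   # chain interrupted
    print(fallen_blocks(removed="unsafe act"))
```

In this sketch, removing any single block prevents the injury, which is exactly the strictly linear assumption that later models call into question.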

The principles behind the sequential models have been used to build incident investigation techniques, including 5 Whys, Root Cause Analysis, Bow-Tie or Barrier Analysis and Fault Tree Analysis (Mullai and Paulsson 2011; Hollnagel 2004). While these techniques have been successfully applied in a range of industries, the sequential models have still been criticised as insufficient to explain incidents in complex systems. Epidemiological models were, therefore, developed to understand and investigate incidents more adequately.

Epidemiological models view accidents as the result of a combination of factors, which include environmental conditions, performance deviations leading to unsafe acts, and latent conditions. Such factors pass through system barriers and defences and, in turn, can lead to accidents. In these models, adding barriers can therefore prevent accidents (Hollnagel 2004; Hollnagel et al. 2014; Reason 2000). The Swiss Cheese Model (SCM) is a well-known epidemiological model (Reason 1997a, b) and has been widely accepted in healthcare (Perneger 2005). In this model, an accident trajectory starts from a triggering event and passes through different levels of barriers, from the institutional to the technical. Since these barriers might not be perfect, weaknesses may exist due to latent conditions and active failures. If hazards break through all the “holes”, this could lead to harm or loss (Vincent 2010). However, epidemiological models still follow the principles of sequential models and, therefore, limit the understanding of incidents. In turn, both sequential and epidemiological models came to be regarded as traditional models, and systemic models have been developed to understand incidents more fully (Hollnagel et al. 2014).
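The barrier logic of the SCM can be sketched in a few lines. The representation below (each barrier as a set of hole positions, a hazard as a single position) and all names and values are assumptions made purely for illustration; they are not taken from Reason's work.

```python
# Illustrative sketch only: each barrier is modelled as a set of "hole"
# positions; the layer labels and hole positions are hypothetical.
def breaches(hazard_position, layers):
    """True if the hazard finds a hole at its position in every layer."""
    return all(hazard_position in holes for holes in layers)


def harmful_positions(layers, n_positions):
    """List the positions at which a hazard would pass through all layers."""
    return [p for p in range(n_positions) if breaches(p, layers)]


layers = [
    {1, 3, 4},  # institutional barrier (hypothetical holes)
    {3, 4, 7},  # intermediate barrier (hypothetical holes)
    {0, 3, 9},  # technical barrier (hypothetical holes)
]

if __name__ == "__main__":
    print(harmful_positions(layers, 10))             # holes line up at one position
    print(harmful_positions(layers + [{1, 2}], 10))  # an added barrier blocks it
```

Harm is possible only where holes line up across every layer, so adding one more barrier without a hole at that position closes the trajectory. The sketch treats layers as static and unrelated to each other, which is the simplification criticised later in the paper.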

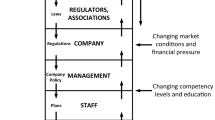

Systemic accident models have been built on systems theory. In systems theory, multiple factors act concurrently and accidents arise from a combination of mutually interacting factors (Klockner and Toft 2015; Leveson et al. 2016). Therefore, the interaction of these factors must be considered to understand accidents and prevent similar ones (SIA 2012; Hollnagel 2004). Accimap (Rasmussen 1997), the Functional Resonance Accident Model (FRAM) (Hollnagel et al. 2014) and the Systems-Theoretic Accident Model and Processes (STAMP) (Leveson 2004) are examples of systemic accident models.

In the healthcare literature, a few studies have used systemic models (Alm and Woltjer 2010; Clay-Williams and Colligan 2015; Pawlicki et al. 2016; Leveson et al. 2016; Chatzimichailidou et al. 2017). For instance, Clay-Williams et al. (2015) used FRAM and revealed the difference between ‘work as done’ and ‘work as imagined’. Alm and Woltjer (2010) used FRAM and uncovered a number of systematic interdependencies within a surgical procedure. Pawlicki et al. (2016) used Systems Theoretic Process Analysis (STPA) and revealed a comprehensive list of causal scenarios as well as a number of unsafe control actions.

Indeed, hospitals are complex by nature, and accidents occur due to several interacting factors. It can, therefore, be expected that hospitals should use incident investigation techniques that are built on systemic models.

The Problems with Traditional Accident Models

Safety researchers have long been accustomed to explaining accidents with traditional accident models, which apply a cause-effect way of thinking that has been in use for centuries. However, the sequence of events defined for an accident does not always lead to the accident itself (Hollnagel et al. 2014). The limitations of the traditional models are summarised as follows:

- They are not adequate for complex systems, and might not explain today’s accidents (Leveson 2011).

- They define accidents as a chain of events (Leveson 2011).

- Understanding is limited where active failures play a major role in leading to an accident (Shorrock et al. 2005).

- These models do not provide relationships among causal factors (Luxhoj and Kauffeld 2003).

- Defence layers are not independent and, therefore, one layer may erode another (Dekker 2002).

- Holes in the layers do not explain where the errors specifically are, what they consist of, and how the holes lead to an incident when there is a change in the system (Dekker 2002; Shappell and Wiegmann 2000).

- Latent conditions can be too difficult to control (Shorrock et al. 2005).
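The point about non-independent defence layers can be made concrete with a small worked example. The probability model below is a hypothetical illustration, not drawn from the cited sources: it assumes that a single shared latent condition, present with probability `q_common`, defeats every layer at once, and that the remaining failures are independent and scaled so that each layer keeps the same marginal failure probability `p_layer`.

```python
from fractions import Fraction


def joint_failure_independent(p_layer, n_layers):
    """Probability that all layers fail when failures are independent."""
    return p_layer ** n_layers


def joint_failure_common_cause(p_layer, q_common, n_layers):
    """Probability that all layers fail when a shared latent condition
    (probability q_common) defeats every layer at once.

    Hypothetical model: residual failures are independent and scaled so
    that each layer's marginal failure probability remains p_layer.
    """
    if not 0 <= q_common <= p_layer < 1:
        raise ValueError("require 0 <= q_common <= p_layer < 1")
    residual = (p_layer - q_common) / (1 - q_common)
    return q_common + (1 - q_common) * residual ** n_layers


if __name__ == "__main__":
    p = Fraction(1, 10)   # each layer fails 10% of the time (assumed value)
    q = Fraction(1, 20)   # shared latent condition present 5% of the time (assumed value)
    print(joint_failure_independent(p, 2))      # 1/100
    print(joint_failure_common_cause(p, q, 2))  # 1/19
```

With these assumed values, two layers that each fail one time in ten let a hazard through one time in a hundred if they are independent, but roughly one time in nineteen if they share a common latent condition. An analysis that counts barriers as independent can therefore substantially overstate the protection they provide.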

Additionally, Reason himself comments on the SCM by saying:

“the pendulum may have swung too far in our present attempts to track down possible errors and accident contributions that are widely separated in both time and place from the events themselves” (Reason 1997b, p. 234).

Given all these limitations, the use of traditional models may result in overlooking some important accident causation factors or in using resources ineffectively, and may thus contribute to more serious incidents in the future. Consequently, the question that arises is how to prevent this from happening. The safety literature addresses this challenge by introducing new models, including FRAM (Hollnagel 2004) and STAMP (Leveson 2004). While FRAM sees the glass as half-full by considering how to sustain success in daily work, STAMP sees the glass as half-empty by considering how to sustain a sufficient safety control structure in systems.

FRAM suggests that accidents occur when systems are unable to tolerate the system variances. This model focuses on performance variances in system functions and links among these functions by considering how things go right rather than how things go wrong (Hollnagel 2004).

STAMP considers that accidents occur when systems are poorly controlled or individual controllers do not fulfil their responsibilities (Leveson 2004; Leveson et al. 2016). This model focuses on changes over time in technology and software, human behaviour, organisational culture and processes (Leveson 2004).

On the one hand, systemic models can help to understand fully the incidents that occur in the complex settings of the healthcare system. On the other hand, not all parts of the healthcare system are complex, and so some accidents may be understood through the use of epidemiological models. Furthermore, many other complex industries also use epidemiological models and even use methods that were built on sequential models. For instance, Fault Tree Analysis and Event Tree Analysis are still successfully used in the nuclear industry. Accident models can, therefore, be selected depending on the system needs, the safety objectives and the complexity of the situation. Different parts of the healthcare system may thus require different accident models or a combination of them.

It should also be noted that while a few papers have been published regarding the effectiveness and use of systemic models in healthcare (Clay-Williams et al. 2015; Alm and Woltjer 2010; Pawlicki et al. 2016; Leveson et al. 2016), these models were found to be difficult to implement as well as time-consuming to use (Larouzée and Guarnieri 2015; Carthey 2013; Roelen et al. 2011).

Discussion and Conclusion

In healthcare, incidents have been explained by the use of traditional accident models. Consequently, it is likely that patient safety incidents are not well understood, and that the true lessons from the incidents are not learnt. To pave the way towards addressing this problem, incident investigators are recommended to consider the following:

- Be aware that accidents may occur at any time.

- Recognise that accidents may result from changes in the system, system failures, inadequate interactions among system elements, performance variances, system design and inadequate controls, as well as latent conditions and active failures.

- Determine whether to use systemic accident models or a combination of traditional and systemic accident models to understand incidents.

- Consider the limitations of the existing patient safety investigation techniques, as they were built on traditional accident models.

While traditional accident models are claimed to have passed their sell-by date (Reason et al. 2006), the systemic models have not yet passed quality control. Thus, there is no ideal way to understand and investigate incidents. Perhaps there is still a need to develop a simple but effective accident model for healthcare use alone. Or perhaps these models should be combined with each other depending on the nature of the situation and the extent of the system under analysis. Either way, experience from other industries has shown that accidents occur as a result of multiple interacting factors.

The recommendations provided in this paper might offer insight into how to approach the understanding of patient safety incidents. We hope that this paper will trigger further discussion on the fundamental concepts of incident investigation in healthcare.

References

Alm, H., & Woltjer, R. (2010). Patient safety investigation through the lens of FRAM. In D. de Waard, A. Axelsson, M. Berglund, B. Peters, & C. Weikert (Eds.), Human factors: A system view of human, technology and organisation (pp. 153–165). Maastricht, The Netherlands.

Baker, G. R. (2004). The Canadian adverse events study: The incidence of adverse events among hospital patients in Canada. Canadian Medical Association Journal, 170, 1678–1686.

Barach, P., & Small, S. D. (2000). Reporting and preventing medical mishaps: Lessons from non-medical near miss reporting systems. BMJ, 320(7237), 759–763.

Card, A. J. (2017). The problem with ‘5 Whys’. BMJ Quality and Safety, 26, 671–677.

Carthey, J. (2013). Understanding safety in healthcare: The system evolution, erosion and enhancement model. Journal of Public Health Research, 2(e25), 144–149.

Center for Chemical Process Safety. (2010). Incident investigation phase an illustration of the FMEA and HRA methods. In Guidelines for hazard evaluation procedures (3rd ed., pp. 435–450). Hoboken, NJ: Wiley.

Chatzimichailidou, M. M., Ward, J., Horberry, T., & Clarkson, P. J. (2017). A comparison of the bow-tie and STAMP approaches to reduce the risk of surgical instrument retention. Risk Analysis.

Clay-Williams, R., & Colligan, L. (2015). Back to basics: Checklists in aviation and healthcare. BMJ Quality and Safety, 24, 428–431.

Clay-Williams, R., Hounsgaard, J., & Hollnagel, E. (2015). Where the rubber meets the road: Using FRAM to align work-as-imagined with work-as-done when implementing clinical guidelines. Implementation Science, 10, 125.

Davies, P. (2014). The concise NHS handbook. London: NHS Confederation.

Dekker, S. (2002). The field guide to human error investigations. Aldershot: Ashgate.

NHS England. (2015). Serious incident framework: Supporting learning to prevent recurrence. London: NHS England.

Hayes, C. W., Batalden, P. B., & Goldmann, D. A. (2014). A ‘work smarter, not harder’ approach to improving healthcare quality. BMJ Quality and Safety, 24, 100–102.

Heinrich, H. W. (1931). Industrial accident prevention: A scientific approach. New York: McGraw-Hill.

Hollnagel, E. (2004). Barriers and accident prevention. Surrey: Ashgate.

Hollnagel, E., Hounsgaard, J., & Colligan, L. (2014). FRAM—The functional resonance analysis method—A handbook for the practical use of the method. Middelfart.

House of Commons. (2015). HC 886: Investigating clinical incidents in the NHS. London: The Stationery Office.

Huang, Y.-H., Ljung, M., Sandin, J., & Hollnagel, E. (2004). Accident models for modern road traffic: Changing times creates new demands. IEEE International Conference on Systems, Man and Cybernetics, 1, 276–281.

Klockner, K., & Toft, Y. (2015). Accident modelling of railway safety occurrences: The safety and failure event network (SAFE-Net) method. Procedia Manufacturing, 3, 1734–1741.

Landgrigan, C. P., Parry, G. J., Bones, C. B., Hackbarth, A. D., Goldmann, D. A., Sharek, P. J., et al. (2010). Temporal trends in rates of patient harm resulting from medical care. New England Journal of Medicine, 363(22), 2124–2134.

Larouzée, J., & Guarnieri, F. (2015). From theory to practice: Itinerary of Reason’s Swiss Cheese Model. In L. Podofillini, B. Sudret, B. Stojadinovic, E. Zio, & W. Kroger (Eds.), Safety and reliability of complex engineered systems: ESREL 2015 (pp. 817–824). Zurich, Switzerland.

Lawton, R., & Parker, D. (2002). Barriers to incident reporting in a health care system. Quality & Safety in Health Care, 11, 15–18.

Leveson, N. (2004). A new accident model for engineering safer systems. Safety Science, 42(4), 237–270.

Leveson, N. (2011). Engineering a safer world: Systems thinking applied to safety. Massachusetts: The MIT Press.

Leveson, N., Samost, A., Dekker, S., Finkelstein, S., & Raman, J. (2016). A systems approach to analyzing and preventing hospital adverse events. Journal of Patient Safety, 1–6.

Luxhoj, J. T., & Kauffeld, K. (2003). Evaluating the effect of technology insertion into the national airspace system. The Rutgers Scholar, 5.

Macrae, C. (2016). The problem with incident reporting. BMJ Quality and Safety, 25, 71–75.

Mitchell, I., Schuster, A., Smith, K., Pronovost, P., & Wu, A. (2016). Patient safety incident reporting: A qualitative study of thoughts and perceptions of experts 15 years after ‘to err is human’. BMJ Quality and Safety, 25, 92–99.

Mullai, A., & Paulsson, U. (2011). A grounded theory model for analysis of marine accidents. Accident Analysis and Prevention, 43, 1590–1603.

NHS. (2015). About reporting patient safety incidents. National Health Service. 2015. http://www.nrls.npsa.nhs.uk/report-a-patient-safety-incident/about-reporting-patient-safety-incidents/.

Pawlicki, T., Samost, A., Brown, D. W., Manger, R. P., Kim, G.-Y., & Leveson, N. (2016). Application of systems and control theory-based hazard analysis to radiation oncology. Medical Physics, 43(3), 1514–1530.

Peerally, M. F., Carr, S., Waring, J., & Dixon-Woods, M. (2016). The problem with root cause analysis. BMJ Quality and Safety, 1–6.

Perneger, T. V. (2005). The Swiss Cheese Model of safety incidents: Are there holes in the metaphor? BMC Health Services Research, 5(71).

Rasmussen, J. (1997). Risk management in a dynamic society: A modelling problem. Safety Science, 27, 183–213.

Reason, J. (1997a). The organisational accident. New York: Ashgate.

Reason, J. (1997b). Managing the risks of organisational accidents. Aldershot: Ashgate.

Reason, J. (2000). Human error: Models and management. BMJ, 320, 768–770.

Reason, J., Hollnagel, E., & Paries, J. (2006). Revisiting the Swiss Cheese Model of accidents. Eurocontrol.

Roelen, A. L. C., Lin, P. H., & Hale, A. R. (2011). Accident models and organisational factors in air transport: The need for multi-method models. Safety Science, 49, 5–10.

Sari, A. B.-A., Sheldon, T. A., Cracknell, A., & Turnbull, A. (2007). Sensitivity of routine system for reporting patient safety incidents in an NHS hospital: Retrospective patient case note review. BMJ, 334(79).

Shappell, S. A., & Wiegmann, D. A. (2000). The human factors analysis and classification system-HFACS. Virginia.

Shorrock, S., Young, M., & Faulkner, J. (2005, January). Who moved my (Swiss) Cheese? Aircraft and Aerospace, 31–33.

SIA. (2012). Models of causation: Safety. Victoria: Safety Institute of Australia.

Sujan, M., Spurgeon, P., Cooke, M., Weale, A., Debenham, P., & Cross, S. (2015). The development of safety cases for healthcare services: Practical experiences, opportunities and challenges. Reliability Engineering and System Safety, 140, 200–207.

Vincent, C. (2007). Incident reporting and patient safety. BMJ, 334(7584), 51.

Vincent, C. (2010). Patient safety (2nd ed.). Oxford: Wiley Blackwell.

Vincent, C., & Amalberti, R. (2016). Safer healthcare: Strategies for the real world (pp. 1–157). London: Springer Open.

Woodward, S., Randall, S., Hoey, A., & Bishop, R. (2004). Seven steps to patient safety. London: National Patient Safety Agency.

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Kaya, G.K., Canbaz, H.T. (2019). The Problem with Traditional Accident Models to Investigate Patient Safety Incidents in Healthcare. In: Calisir, F., Cevikcan, E., Camgoz Akdag, H. (eds) Industrial Engineering in the Big Data Era. Lecture Notes in Management and Industrial Engineering. Springer, Cham. https://doi.org/10.1007/978-3-030-03317-0_39

DOI: https://doi.org/10.1007/978-3-030-03317-0_39

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-03316-3

Online ISBN: 978-3-030-03317-0

eBook Packages: Engineering, Engineering (R0)