Abstract

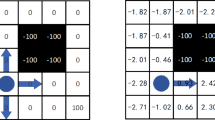

This paper presents a Q-learning method that works in continuous domains. Two other characteristics of our approach are the use of an incremental topology-preserving map (ITPM) to partition the input space and the incorporation of bias to initialize the learning process. Each unit of the ITPM represents a limited region of the input space and maps it onto the Q-values of M possible discrete actions. The resulting continuous action is the average of the discrete actions of the “winning unit”, weighted by their Q-values. TD(λ) then updates the Q-values of the discrete actions according to their contribution to that continuous action. Units are created incrementally, and their associated Q-values are initialized by means of domain knowledge. Experimental results in robotics domains show that the proposed continuous-action Q-learning outperforms the standard discrete-action version in both asymptotic performance and learning speed. The paper also compares discounted-reward and average-reward Q-learning on an infinite-horizon robotics task.
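The Python sketch below illustrates the mechanism the abstract describes: a nearest-unit lookup, the Q-weighted continuous action, a TD(λ) update that credits each discrete action by its contribution, and incremental unit creation with Q-values biased by domain knowledge. It is a minimal illustration under stated assumptions, not the authors' implementation. The names `ContinuousQSketch`, `bias_fn` and `radius` are hypothetical, as are the choices of Euclidean distance, clipping negative Q-values before weighting, scalar actions, accumulating traces, and using the contribution-weighted value of the next winning unit as the bootstrap target. The topology links of a real ITPM and any exploration scheme are omitted.

```python
import math
from dataclasses import dataclass, field
from typing import Callable, List, Sequence, Tuple


@dataclass
class Unit:
    center: List[float]  # prototype of the input region this unit covers
    q: List[float]  # one Q-value per discrete action
    trace: List[float] = field(default_factory=list)  # eligibility trace per action

    def __post_init__(self) -> None:
        if not self.trace:
            self.trace = [0.0] * len(self.q)


class ContinuousQSketch:
    """Hypothetical sketch of Q-weighted continuous actions over incrementally created units."""

    def __init__(self, actions: Sequence[float],
                 bias_fn: Callable[[List[float]], List[float]],
                 radius: float = 0.5, alpha: float = 0.1,
                 gamma: float = 0.9, lam: float = 0.7) -> None:
        self.actions = list(actions)  # the M discrete actions (scalars in this sketch)
        self.bias_fn = bias_fn  # assumed domain knowledge: initial Q-values for a new unit
        self.radius = radius  # assumed rule: new unit if no center lies within this distance
        self.alpha, self.gamma, self.lam = alpha, gamma, lam
        self.units: List[Unit] = []

    def winner(self, x: List[float]) -> Unit:
        # Nearest unit by Euclidean distance; create a biased unit if none is close enough.
        best = min(self.units, key=lambda u: math.dist(u.center, x), default=None)
        if best is None or math.dist(best.center, x) > self.radius:
            best = Unit(center=list(x), q=list(self.bias_fn(x)))
            self.units.append(best)
        return best

    @staticmethod
    def contributions(unit: Unit) -> List[float]:
        # Normalized weights from Q-values; negatives clipped to 0, uniform if all are 0.
        w = [max(q, 0.0) for q in unit.q]
        total = sum(w)
        if total == 0.0:
            return [1.0 / len(w)] * len(w)
        return [wi / total for wi in w]

    def act(self, x: List[float]) -> Tuple[Unit, List[float], float]:
        # Continuous action = Q-weighted average of the winning unit's discrete actions.
        unit = self.winner(x)
        c = self.contributions(unit)
        action = sum(ci * ai for ci, ai in zip(c, self.actions))
        return unit, c, action

    def value(self, unit: Unit) -> float:
        # Value of a unit's continuous action: contribution-weighted Q-values.
        return sum(ci * qi for ci, qi in zip(self.contributions(unit), unit.q))

    def update(self, unit: Unit, c: List[float], reward: float,
               next_unit: Unit, terminal: bool = False) -> float:
        # TD error for the executed continuous action.
        q_taken = sum(ci * qi for ci, qi in zip(c, unit.q))
        target = reward if terminal else reward + self.gamma * self.value(next_unit)
        delta = target - q_taken
        # Accumulating traces: decay every trace, then credit actions by their contribution.
        for u in self.units:
            u.trace = [self.gamma * self.lam * e for e in u.trace]
        unit.trace = [e + ci for e, ci in zip(unit.trace, c)]
        for u in self.units:
            u.q = [q + self.alpha * delta * e for q, e in zip(u.q, u.trace)]
        return delta


if __name__ == "__main__":
    agent = ContinuousQSketch(actions=[-1.0, 0.0, 1.0],
                              bias_fn=lambda x: [0.1, 0.1, 0.8])
    unit, c, a = agent.act([0.0, 0.0])
    next_unit, _, _ = agent.act([0.1, 0.0])  # within radius, so the same unit is reused
    delta = agent.update(unit, c, reward=1.0, next_unit=next_unit)
    print(f"action={a:.3f} units={len(agent.units)} delta={delta:.3f}")
```

Because the continuous action is a blend, every discrete action of the winning unit receives credit in proportion to its weight rather than only the single action that was chosen. Traces are never reset in this sketch; in an episodic setting they would normally be zeroed at the start of each episode.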
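To make the discounted-reward versus average-reward comparison concrete, the sketch below contrasts the standard one-step discounted Q-learning update with the R-learning update proposed by Schwartz, one well-known form of average-reward Q-learning, written in the form given in Sutton and Barto's textbook. It is tabular for brevity and is only an assumed illustration of the two objectives; the average-reward variant used in the paper may differ in its details. The function names and default step sizes are hypothetical.

```python
from typing import List

QTable = List[List[float]]  # QTable[state][action], tabular for illustration only


def discounted_q_update(q: QTable, s: int, a: int, r: float, s_next: int,
                        alpha: float = 0.1, gamma: float = 0.9) -> None:
    # Discounted Q-learning: target = r + gamma * max_a' Q(s', a').
    target = r + gamma * max(q[s_next])
    q[s][a] += alpha * (target - q[s][a])


def r_learning_update(q: QTable, rho: float, s: int, a: int, r: float, s_next: int,
                      alpha: float = 0.1, beta: float = 0.01) -> float:
    # Average-reward (R-learning): no discount; rho estimates the average reward per step.
    q[s][a] += alpha * (r - rho + max(q[s_next]) - q[s][a])
    # rho is adjusted only when the executed action is greedy in s.
    if q[s][a] == max(q[s]):
        rho += beta * (r - rho + max(q[s_next]) - max(q[s]))
    return rho


if __name__ == "__main__":
    q_disc = [[0.0, 0.0], [0.0, 1.0]]
    discounted_q_update(q_disc, s=0, a=1, r=1.0, s_next=1)
    q_avg = [[0.0, 0.0], [0.0, 1.0]]
    rho = r_learning_update(q_avg, rho=0.0, s=0, a=1, r=1.0, s_next=1)
    print(q_disc[0][1], q_avg[0][1], rho)
```

In the discounted form, gamma shrinks the weight of distant rewards; in R-learning there is no discount, and subtracting the running estimate rho keeps the relative action values bounded on an infinite-horizon task.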




Cite this article

Millán, J.d.R., Posenato, D. & Dedieu, E. Continuous-Action Q-Learning. Machine Learning 49, 247–265 (2002). https://doi.org/10.1023/A:1017988514716
