Abstract

When interpolating incomplete data, one can choose a parametric model, or opt for a more general approach and use a non-parametric model which allows a very large class of interpolants. A popular non-parametric model for interpolating various types of data is based on regularization, which looks for an interpolant that is both close to the data and also “smooth” in some sense. Formally, this interpolant is obtained by minimizing an error functional which is the weighted sum of a “fidelity term” and a “smoothness term”.
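For a function of one variable, the error functional described above can be written in the following standard form. This is a sketch for orientation only: the sample locations $x_i$, the measurements $d_i$, and the noise standard deviation $\sigma$ are notation assumed here, not taken from the abstract, and the squared second derivative is used as the smoothness measure to match the prior stated later in the abstract.

```latex
E_{\lambda}(f) \;=\;
  \underbrace{\frac{1}{2\sigma^{2}} \sum_{i=1}^{n} \bigl(f(x_i) - d_i\bigr)^{2}}_{\text{fidelity term}}
  \;+\;
  \underbrace{\lambda \int f_{uu}^{2}\,du}_{\text{smoothness term}}
```

Under this form, the relative size of $\lambda$ and $1/(2\sigma^{2})$ plays the role of the weights assigned to the two terms.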

The classical approach to regularization is: select “optimal” weights (also called hyperparameters) that should be assigned to these two terms, and minimize the resulting error functional.

However, using only the “optimal weights” does not guarantee that the chosen function will be optimal under a given criterion, such as maximum likelihood or minimal square error. For that, we have to consider all possible weights.

The approach suggested here is to use the full probability distribution on the space of admissible functions, as opposed to the probability induced by using a single combination of weights. The reason is as follows: the weight actually determines the probability space in which we are working. For a given weight $\lambda$, the probability of a function $f$ is proportional to $\exp(-\lambda \int f_{uu}^{2}\,du)$ (for the case of a function of one variable). For each different $\lambda$, there is a different solution to the restoration problem; denote it by $f_\lambda$. Now, if we knew $\lambda$, it would not be necessary to use all the weights; however, all we are given are some noisy measurements of $f$, and we do not know the correct $\lambda$. Therefore, the mathematically correct solution is to calculate, for every $\lambda$, the probability that $f$ was sampled from a space whose probability is determined by $\lambda$, and average the different $f_\lambda$'s weighted by these probabilities. The same argument holds for the noise variance, which is also unknown.
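The averaging over weights described above can be illustrated with a small discrete sketch. It is not the paper's algorithm: it assumes a function sampled on a unit-spaced grid, a second-difference matrix standing in for the second derivative, a known noise standard deviation `sigma`, and a uniform prior over a finite grid of candidate weights. All function and parameter names are hypothetical.

```python
import numpy as np


def second_difference(n):
    """(n-2) x n matrix whose rows are [1, -2, 1]; with unit grid spacing,
    ||L f||^2 is a discrete stand-in for the integral of f_uu^2."""
    L = np.zeros((n - 2, n))
    for i in range(n - 2):
        L[i, i:i + 3] = [1.0, -2.0, 1.0]
    return L


def restore_for_lambda(y, idx, n, lam, sigma):
    """Return (f_lambda, log evidence up to a lambda-independent constant).

    y holds noisy measurements at the integer grid positions idx
    (at least two distinct positions are needed for a unique solution).
    """
    L = second_difference(n)
    A = np.zeros((len(idx), n))
    A[np.arange(len(idx)), idx] = 1.0      # A picks out the sampled grid points
    # Hessian of ||A f - y||^2 / (2 sigma^2) + lam * ||L f||^2
    Q = A.T @ A / sigma**2 + 2.0 * lam * (L.T @ L)
    b = A.T @ y / sigma**2
    f_lam = np.linalg.solve(Q, b)          # minimizer of the error functional
    _, logdet = np.linalg.slogdet(Q)
    # Gaussian integral over f; lam^((n-2)/2) normalizes the rank-(n-2) prior
    log_ev = (0.5 * (n - 2) * np.log(lam) - 0.5 * logdet
              - 0.5 * (y @ y / sigma**2 - b @ f_lam))
    return f_lam, log_ev


def averaged_restoration(y, idx, n, lambdas, sigma):
    """Average the f_lambda's, weighted by the posterior probability of each
    lambda (assumed uniform prior over the supplied grid of lambdas)."""
    results = [restore_for_lambda(y, idx, n, lam, sigma) for lam in lambdas]
    fs = np.array([f for f, _ in results])
    log_ev = np.array([e for _, e in results])
    w = np.exp(log_ev - log_ev.max())      # subtract the max for numerical stability
    w /= w.sum()
    return w @ fs, w
```

A call such as `averaged_restoration(y, idx, 50, np.logspace(-2, 3, 30), 0.1)` returns the averaged restoration together with the normalized weight of each candidate $\lambda$. Because the grid of weights is finite and the noise level is fixed, this only approximates the full marginalization the abstract describes.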

Three basic problems are addressed in this work:

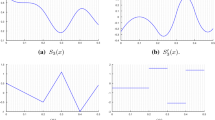

• Computing the MAP estimate, that is, the function $f$ maximizing $\Pr(f \mid D)$ when the data $D$ is given. This problem is reduced to a one-dimensional optimization problem.

• Computing the MSE estimate. This function is defined at each point $x$ as $\int f(x)\,\Pr(f \mid D)\,\mathcal{D}f$. This problem is reduced to computing a one-dimensional integral.

In the general setting, the MAP estimate is not equal to the MSE estimate.

• Computing the pointwise uncertainty associated with the MSE solution. This problem is reduced to computing three one-dimensional integrals.
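One way to see why a one-dimensional integral can suffice for the MSE estimate is sketched here, under the simplifying assumption that the noise variance is known and only $\lambda$ is uncertain. The paper treats both as unknown, and its actual derivation may differ. For fixed $\lambda$ and a quadratic error functional, the posterior over $f$ is Gaussian with mean $f_\lambda$, so integrating over $f$ first leaves an integral over $\lambda$ alone. The symbol $v_\lambda(x)$, the pointwise variance of that fixed-$\lambda$ posterior, is notation introduced here for illustration; the second line is the law of total variance.

```latex
\begin{aligned}
f_{\mathrm{MSE}}(x)
  &= \int f(x)\,\Pr(f \mid D)\,\mathcal{D}f
   = \int f_{\lambda}(x)\,\Pr(\lambda \mid D)\,d\lambda, \\
\operatorname{Var}\bigl[f(x) \mid D\bigr]
  &= \int \bigl(v_{\lambda}(x) + f_{\lambda}(x)^{2}\bigr)\,\Pr(\lambda \mid D)\,d\lambda
     \;-\; f_{\mathrm{MSE}}(x)^{2}.
\end{aligned}
```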




Cite this article

Keren, D., Werman, M. A Full Bayesian Approach to Curve and Surface Reconstruction. Journal of Mathematical Imaging and Vision 11, 27–43 (1999). https://doi.org/10.1023/A:1008317210576


DOI: https://doi.org/10.1023/A:1008317210576