Abstract

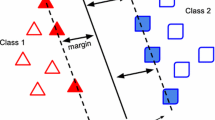

Feature selection is an important combinatorial optimisation problem in the context of supervised pattern classification. This paper presents four novel continuous feature selection approaches directly minimising the classifier performance. In particular, we include linear and nonlinear Support Vector Machine classifiers. The key ideas of our approaches are additional regularisation and embedded nonlinear feature selection. To solve our optimisation problems, we apply difference of convex functions programming which is a general framework for non-convex continuous optimisation. Experiments with artificial data and with various real-world problems including organ classification in computed tomography scans demonstrate that our methods accomplish the desired feature selection and classification performance simultaneously.

Article PDF

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

References

Bach, F., Lanckriet, G., & Jordan, M. (2004). Multiple kernel learning, conic duality, and the SMO algorithm. In Proceedings of the 21st International Conference on Machine Learning. New York, NY: ACM Press.

Ben-Tal, A., & Zibulevsky, M. (1997). Penalty/Barrier multiplier methods for convex programming problems. SIAM Journal on Optimization, 7:2, 347–366.

Bennett, K. P., & Mangasarian, O. L. (1992). Robust linear programming discrimination of two linearly inseparable sets. Optimization Methods and Software, 1, 23–34.

Blake, C. L., & Merz, C. J. (1998). UCI repository of machine learning databases.

Bradley, P. S. (1998). Mathematical programming approaches to machine learning and data mining. Ph.D. thesis, University of Wisconsin, Computer Sciences Dept., Madison, WI, TR-98-11.

Bradley, P. S., & Mangasarian, O. L. (1998). Feature selection via concave minimization and support vector machines. In J. Shavlik (Ed.), Proceedings of the 15th international conference on machine learning (pp. 82–90). San Francisco, CA: Morgan Kaufmann.

Chapelle, O., Haffner, P., & Vapnik, V. N. (1999). SVMs for histogram-based image classification. IEEE Transactions on Neural Networks, 10:5, 1055–1064.

Cristianini, N., Shawe-Taylor, J., Elisseeff, A., & Kandola, J. (2002). On kernel-target alignment. In T. G. Dietterich, S. Becker, & Z. Ghahramani (Eds.), Advances in neural information processing systems 14 (pp. 367–373). Cambridge, MA: MIT Press.

Duda, R., Hart, P., & Stork, D. (2000). Pattern classification. New York, NY: John Wiley & Sons, second edition.

Guyon, I., & Elisseeff, A. (2003). An introduction to variable and feature selection. Journal of Machine Learning Research, 3, 1157–1182.

Haasdonk, B., & Bahlmann, C. (2004). Learning with distance substitution kernels. In C. E. Rasmussen, H. H. Bülthoff, M. A. Giese, & B. Schölkopf (Eds.), Pattern recognition, proc. of 26th DAGM symposium, Vol. 3175 of LNCS. (pp. 220–227). Berlin: Springer.

Heiler, M., Cremers, D., & Schnörr, C. (2001). Efficient feature subset selection for support vector machines. Technical Report TR-01-021, Comp. science series, Dept. of Mathematics and Computer Science, University of Mannheim.

Hermes, L., & Buhmann, J. M. (2000). Feature selection for support vector machines. In Proc. of the International Conference on Pattern Recognition (ICPR'00), Vol. 2 (pp. 716–719).

Ilog, Inc.: 2001, ‘ILOG CPLEX 7.5’.

Jakubik, O. J. (2003). Feature selection with concave minimization. Master's thesis, Dept. of Mathematics and Computer Science, University of Mannheim.

Jebara, T., & Jaakkola, T. (2000). Feature selection and dualities in maximum entropy discrimination. In I. Bratko & S. Dzeroski (Eds.), Proceedings of the 16th international conference on machine learning (pp. 291–300). San Francisco, CA: Morgan Kaufmann.

John, G. H., Kohavi, R., & Pfleger, K. (1994). Irrelevant features and the subset selection problem. In R. S. Michalski & G. Tecuci (Eds.), Proc. of the 11th international conference on machine learning (pp. 121–129). San Francisco, CA: Morgan Kaufmann.

Mangasarian, O. L. (1997). Minimum-support solutions of polyhedral concave programs. Technical Report TR-1997-05, Mathematical Programming, University of Wisconsin.

MathWorks. (2002). Optimization toolbox user's Guide. The MathWorks, Inc.

Neumann, J., Schnörr, C., & Steidl, G. (2004). SVM-based feature selection by direct objective minimisation. In C. E. Rasmussen, H. H. Bülthoff, M. A. Giese, & B. Schölkopf (Eds.), Pattern recognition, proc. of 26th DAGM symposium, Vol. 3175 of LNCS (pp. 212–219). Berlin: Springer.

Pham Dinh, T., & Elbernoussi, S. (1988). Duality in d.c. (difference of convex functions optimization. Subgradient Methods. In Trends in Mathematical Optimization, Vol. 84 of Int. Series of Numer. Math. Basel: Birkäuser Verlag (pp. 277–293).

Pham Dinh, T., & Hoai An, L. T. (1998). A D.C. Optimization Algorithm for Solving the Trust-Region Subproblem. SIAM Journal on Optimization, 8:2, 476–505.

Rockafellar, R. T. (1970). Convex analysis. Princeton, NJ: Princeton University Press.

Schmidt, S. (2004). Context-sensitive image labeling based on logistic regression. Master's thesis, Dept. of Mathematics and Computer Science, University of Mannheim.

Schölkopf, B., & Smola, A. J. (2002). Learning with kernels. Cambridge, MA: MIT Press.

Tibshirani, R. (1996). Regression shrinkage and selection via the Lasso. Journal of the Royal Statistical Society, Series B, 58:1, 267–288.

Weston, J., Elisseeff, A., Schölkopf, B., & Tipping, M. (2003). Use of the zero-norm with linear models and kernel methods. Journal of Machine Learning Research, 3, 1439–1461.

Weston, J., Mukherjee, S., Chapelle, O., Pontil, M., Poggio, T., & Vapnik, V. (2001). Feature Selection for SVMs. In T. K. Leen, T. G. Dietterich, & V. Tresp (Eds.), Advances in neural information processing systems 13 (pp. 668–674). Cambridge, MA: MIT Press.

Yuille, A., & Rangarajan, A. (2003). The convex-concave procedure. Neural Computation, 15, 915–936.

Zhu, J., Rosset, S., Hastie, T., & Tibshirani, R. (2004). 1-norm support vector machines. In S. Thrun, L. Saul, & B. Schölkopf (Eds.), Advances in neural information processing systems 16. Cambridge, MA: MIT Press.

Author information

Authors and Affiliations

Corresponding author

Additional information

Editor: Dale Schuurmans

Rights and permissions

About this article

Cite this article

Neumann, J., Schnörr, C. & Steidl, G. Combined SVM-Based Feature Selection and Classification. Mach Learn 61, 129–150 (2005). https://doi.org/10.1007/s10994-005-1505-9

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10994-005-1505-9