Abstract

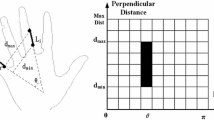

A vital requirement of any recognition system that claims to run in real time is the ability to perform feature extraction in real time. In this paper, we propose a fuzzy approach to real-time dynamic gesture recognition and spotting, in which a compact local descriptor models moving gesture skeletons as a time series of fuzzy statistical features. A set of one-vs-rest SVMs is then trained on these features for gesture recognition and spotting. The approach spots meaningful hand movements while concurrently removing unintentional hand movements from the input video sequence. When evaluated on a gesture data set comprising a relatively large and diverse collection of video data, the proposed method yields promising results that compare very favorably with those reported in the literature, while retaining real-time performance.
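
The pipeline summarized above can be illustrated with a minimal sketch. The abstract does not specify the descriptor or the decision rule, so everything here is an assumption made for illustration: triangular membership functions over overlapping temporal windows, membership-weighted mean and variance as the "fuzzy statistical features", and a simple rejection threshold on one-vs-rest scores to stand in for spotting. The names `triangular_membership`, `fuzzy_stats`, `fuzzy_descriptor`, and `spot_and_recognize` are hypothetical, and the scores would in practice come from trained SVMs rather than being supplied by hand.

```python
from math import fsum


def triangular_membership(t, center, width):
    """Triangular fuzzy membership of time index t in a window centred at `center`."""
    return max(0.0, 1.0 - abs(t - center) / width)


def fuzzy_stats(values, center, width):
    """Membership-weighted mean and variance of a 1-D sequence (assumed feature form)."""
    weights = [triangular_membership(t, center, width) for t in range(len(values))]
    total = fsum(weights)
    if total == 0.0:
        return 0.0, 0.0
    mean = fsum(w * v for w, v in zip(weights, values)) / total
    var = fsum(w * (v - mean) ** 2 for w, v in zip(weights, values)) / total
    return mean, var


def fuzzy_descriptor(track, n_windows=3):
    """Describe a track of (x, y) skeleton points as a fixed-length feature vector.

    For each overlapping temporal window the vector holds
    [mean_x, var_x, mean_y, var_y], so its length is 4 * n_windows.
    """
    n = len(track)
    xs = [p[0] for p in track]
    ys = [p[1] for p in track]
    width = n / n_windows  # half-width of each triangular window
    features = []
    for k in range(n_windows):
        center = (k + 0.5) * n / n_windows
        for seq in (xs, ys):
            features.extend(fuzzy_stats(seq, center, width))
    return features


def spot_and_recognize(scores, threshold=0.0):
    """One-vs-rest decision with rejection.

    `scores` maps each gesture label to the decision value of its binary
    classifier. The best-scoring label is returned only if its score exceeds
    `threshold`; otherwise the segment is rejected as a non-gesture (None).
    """
    label, best = max(scores.items(), key=lambda kv: kv[1])
    return label if best > threshold else None


if __name__ == "__main__":
    track = [(float(t), 2.0) for t in range(6)]  # hand moving right at constant height
    print(fuzzy_descriptor(track))
    print(spot_and_recognize({"wave": 0.8, "swipe": -0.3}))
    print(spot_and_recognize({"wave": -0.2, "swipe": -0.5}))
```

Because each feature is a weighted sum over a short window, the descriptor can be updated frame by frame at low cost, which is consistent with the real-time constraint emphasized in the abstract. The rejection threshold is one simple way to discard unintentional movements; the paper itself may use a different spotting rule.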

Cite this article

Bakheet, S. A Fuzzy Framework for Real-Time Gesture Spotting and Recognition. J Russ Laser Res 38, 61–75 (2017). https://doi.org/10.1007/s10946-017-9620-1

DOI: https://doi.org/10.1007/s10946-017-9620-1