Abstract

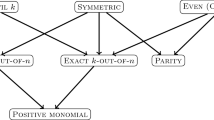

Approximate solution of optimization tasks that can be formalized as minimization of error functionals over admissible sets computable by variable-basis functions (i.e., linear combinations of n-tuples of functions from a given basis) is investigated. Estimates of rates of decrease of infima of such functionals over sets formed by linear combinations of an increasing number n of elements of the bases are derived, for the case in which such admissible sets consist of Boolean functions. The results are applied to target sets of various types (e.g., sets containing functions representable either by linear combinations of a "small" number of generalized parities or by "small" decision trees, and sets satisfying smoothness conditions defined in terms of Sobolev norms).
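To make the notion of a linear combination of n generalized parities concrete, the following minimal sketch expands a real-valued function on {0,1}^d in the parity basis and measures the error left after keeping only the n largest coefficients. It is an illustration under stated assumptions, not the method of the paper: the function names (`parity`, `fourier_coefficients`, `n_term_error`, `majority`) are hypothetical, the error is measured in the normalized l2 norm, and the example relies only on the standard fact that the parities form an orthonormal basis with respect to the inner product 2^-d Σ f(x)g(x), so that keeping the largest coefficients gives the best n-term approximation in that norm.

```python
import itertools
import math


def parity(u, x):
    """Generalized parity p_u(x) = (-1)^(u . x) for u, x in {0,1}^d."""
    return -1.0 if sum(ui * xi for ui, xi in zip(u, x)) % 2 else 1.0


def fourier_coefficients(f, d):
    """Coefficients of f in the parity basis, using the normalized
    inner product <f, g> = 2^-d * sum_x f(x) g(x)."""
    points = list(itertools.product((0, 1), repeat=d))
    return {
        u: sum(f(x) * parity(u, x) for x in points) / len(points)
        for u in points
    }


def n_term_error(f, d, n):
    """Normalized l2 error of the approximation of f that keeps the
    n parities with the largest absolute coefficients."""
    points = list(itertools.product((0, 1), repeat=d))
    coeffs = fourier_coefficients(f, d)
    kept = sorted(coeffs, key=lambda u: abs(coeffs[u]), reverse=True)[:n]
    squared = sum(
        (f(x) - sum(coeffs[u] * parity(u, x) for u in kept)) ** 2
        for x in points
    ) / len(points)
    return math.sqrt(squared)


def majority(x):
    """Hypothetical target: 1.0 if at least two of three bits are set."""
    return 1.0 if sum(x) >= 2 else 0.0


if __name__ == "__main__":
    for n in range(6):
        print(n, n_term_error(majority, 3, n))
```

For the 3-bit majority function only five parity coefficients are nonzero, so the error reaches zero at n = 5; for general targets the paper's concern is how fast such errors, and the infima of error functionals, decrease as n grows.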

Additional information

Mathematics Subject Classifications (2000)

49K40, 41A25, 90B99, 90C90, 05C05, 62C99.

Collaboration between V.K. and P.C.K. was supported by an NSF COBASE grant and between V.K. and M.S. by a Scientific Agreement between Italy and the Czech Republic, Area MC 6, Project 22 ("Functional Optimization and Nonlinear Approximation by Neural Networks").

V. Kůrková: Partially supported by GA ČR Grants 201/02/0428 and 201/05/0557 and by the Institutional Research Plan AV0Z10300504.

M. Sanguineti: Partially supported by a PRIN Grant of the Italian Ministry of University and Research (Project "New Techniques for the Identification and Adaptive Control of Industrial Systems").

Cite this article

Kainen, P.C., Kůrková, V. & Sanguineti, M. Rates of Minimization of Error Functionals over Boolean Variable-Basis Functions. J Math Model Algor 4, 355–368 (2005). https://doi.org/10.1007/s10852-005-1625-z

DOI: https://doi.org/10.1007/s10852-005-1625-z