Abstract

Eye gaze plays a fundamental role in social interaction and facial recognition. However, interference processing between gaze and other facial variants (e.g., expression) and invariant information (e.g., gender) remains controversial and unclear, especially the role of facial information discriminability in interference. A Garner paradigm was used to conduct two experiments. This paradigm allows simultaneous investigation of the mutual influence of two kinds of facial information in one experiment. In Experiment 1, we manipulated facial expression discriminability and investigated its role in interference processing of gaze and facial expression. The results show that individuals were unable to ignore expression when classifying gaze with both high and low discriminability but could ignore gaze when classifying expression with high discriminability only. In Experiment 2, we manipulated gender discriminability and investigated its function in interference processing of gaze and gender. Participants were unable to ignore gender when classifying gaze with both high and low discriminability but could ignore gaze when classifying gender with low discriminability only. The results indicate that gaze categorization is affected by facial expression and gender regardless of facial information discriminability, whereas interference of gaze on facial expression and gender depends on the degree of discriminability. The present study provides evidence that the processing of gaze and other variant and invariant information is interdependent.


The face is the most important visual stimulus for human social and nonverbal interactions because it conveys not only invariant information (e.g., gender and identity) but also variant information (e.g., expression and gaze; Haxby et al., 2000). The eyes are the most significant facial features (Firestone et al., 2007; Henderson et al., 2005; Schyns et al., 2002; Vinette et al., 2004) because they direct our attention with the gaze. Numerous studies have shown that gaze plays a fundamental role in recognizing facial emotion, gender, and identity (Itier & Batty, 2009, for a review). However, interference processing of gaze and other variant or invariant information remains inconsistent across studies. Interference processing refers to how processing of one facial dimension influences the perception of the other. In other words, individuals are unable to pay selective attention to one facial dimension while ignoring the other (Atkinson et al., 2005).

A growing body of studies has focused on the processing of gaze and expression since expressions are important social communicative cues and can convey mental state or intention. According to the distributed neural model for face perception (Haxby et al., 2000), there is interactive processing of gaze and expression, which are variant information. The imaging evidence supports functional overlapping regions (i.e., the superior temporal sulcus) of gaze and expression (Haxby et al., 2002). However, the nature of the combined processing of expression and gaze remains unclear in imaging evidence. More direct behavioral studies using different paradigms found that the perception of gaze influences the processing of expression (Adams & Kleck, 2003, 2005; Bindemann et al., 2008; Rigato et al., 2013), and also the opposite (Adams & Franklin, 2009; Bayless et al., 2011; McCrackin & Itier, 2019; Milders et al., 2011).

Notably, symmetrical interference between expression and gaze processing was observed using the Garner paradigm (Ganel et al., 2005; see Experiment 1). This paradigm allows simultaneous investigation of the mutual influence of two different aspects of facial information in one experiment by manipulating which information in the same stimulus is task relevant and which is task irrelevant (Garner, 1976; Karnadewi & Lipp, 2011). To assess this, baseline and orthogonal blocks are used for each categorization task. In the baseline block, information relevant to the task (e.g., expression) varies while the irrelevant information (e.g., gaze) is held constant (e.g., happy-direct and sad-direct). In the orthogonal block, both relevant and irrelevant information vary so that all possible combinations of the two occur (e.g., happy-direct, happy-averted, sad-direct, sad-averted). The logic of this paradigm is that if relevant and irrelevant information are processed independently, it is easy to ignore the irrelevant information and respond to the relevant information, so performance should be equal in the baseline and orthogonal blocks. Alternatively, worse performance in the orthogonal block than in the baseline block (i.e., the Garner effect) indicates that the irrelevant information interferes with processing of the relevant information.
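The block logic described above can be sketched in a few lines of Python. This is only an illustration of the paradigm, not the authors' experiment code: the function names are hypothetical, and the reaction times in the usage note are invented solely to show the arithmetic.

```python
from itertools import product
from statistics import mean

EXPRESSIONS = ("happy", "sad")    # task-relevant dimension in this example
GAZES = ("direct", "averted")     # task-irrelevant dimension in this example


def baseline_blocks(relevant, irrelevant):
    """Return one baseline block per fixed level of the irrelevant dimension.

    Within each block the relevant dimension varies and the irrelevant
    dimension is held constant.
    """
    return [[(r, fixed) for r in relevant] for fixed in irrelevant]


def orthogonal_block(relevant, irrelevant):
    """Return every combination of the relevant and irrelevant dimensions."""
    return list(product(relevant, irrelevant))


def garner_interference(baseline_rts, orthogonal_rts):
    """Mean orthogonal RT minus mean baseline RT (in ms).

    A positive value means slower responses when the irrelevant dimension
    varies, i.e. a Garner effect in reaction time.
    """
    return mean(orthogonal_rts) - mean(baseline_rts)


expression_baselines = baseline_blocks(EXPRESSIONS, GAZES)
expression_orthogonal = orthogonal_block(EXPRESSIONS, GAZES)
```

For example, `garner_interference([500, 520], [530, 550])` gives 30 (made-up values), which would be read as 30 ms of interference from the irrelevant dimension.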

However, not all studies employing the Garner paradigm found symmetrical interference of expression and gaze. Asymmetrical interference was observed by Graham and LaBar (2007; see Experiments 1 and 2): expression affected gaze processing, but not the reverse. Graham and LaBar proposed that the asymmetrical interference might arise because facial expression is more discriminable than gaze, so that expression is processed before gaze has time to interfere. Accordingly, symmetrical interference emerged when the difficulty of judging the expression increased. This difficulty was induced in two ways: by morphing expressions and by using expressions that are often confused with one another (e.g., fear and surprise; Graham & LaBar, 2007; see Experiments 3 and 4). These findings suggest that relative discriminability might contribute to the inconsistencies among previous studies. Meanwhile, the asymmetrical interference of expression and gaze in other studies might also result from expression discriminability. Different actors were photographed expressing emotions, so the emotions were expressed with differing degrees of intensity (Adams & Franklin, 2009; Adams & Kleck, 2005), which may affect discriminability. Thus, discriminability should be considered in interference processing of expression and gaze.

Another controversial issue in the facial perception literature addressed in this study is interference processing of invariant and variant information. A large body of evidence demonstrates that they are processed independently (Abbruzzese et al., 2019; Dores et al., 2020; Hester, 2019; Li & Tse, 2016; Murphy & Ward, 2017; H. Wang et al., 2017), but other studies demonstrate symmetrical or asymmetrical interference (Karnadewi & Lipp, 2011; Schuch et al., 2012; Yankouskaya et al., 2012). Importantly, Y. Wang et al. (2013) observed an effect of discriminability on interference processing of facial identity (invariant information) and expression (variant information) with the Garner paradigm. Their results support the earlier view that a more discriminable facial dimension interferes with the processing of a less discriminable dimension, but not vice versa. This finding suggests that discriminability should not be neglected when analyzing the processing of invariant and variant information.

Additionally, individuals show sexual dimorphism, an important classification in an encounter with a new person. Gender categorization moderates social interactions and leads to stereotype behaviors (though often inaccurate; Zhang et al., 2018). However, mixed results were also found regarding interference processing of gender and gaze. An imaging study found that assessing gaze and gender evoked a greater activation of the right superior temporal sulcus and the left fusiform gyrus, respectively (Cloutier et al., 2008). Behavioral studies observe that gender categorization is facilitated by direct (Macrae et al., 2002) or averted gaze (Vuilleumier et al., 2005). Recently, McCrackin and Itier (2019) examined how gaze was processed differently as a function of the task being performed. As a result, gender discrimination was not affected by gaze direction at the behavioral level, but more positive amplitudes (220–290 ms) were observed for averted gaze than for direct gaze at the neural level in the gender discrimination task. Taken together, these studies show that processing of gender and gaze is not completely independent. The symmetrical or asymmetrical interference of facial gender and gaze is poorly understood, especially in the Garner paradigm. More importantly, the effect of discriminability on interference processing of gender and gaze is unclear.

The current study manipulates the discriminability of expression and gender to investigate, with the Garner paradigm, whether interference processing of expression and gaze and of gender and gaze is asymmetrical or symmetrical, and especially whether it is influenced by discriminability. Specifically, Experiment 1 investigates the role of expression discriminability in interference processing of expression and gaze, and Experiment 2 investigates the role of gender discriminability in interference processing of gender and gaze. According to previous studies (Atkinson et al., 2005; Graham & LaBar, 2007), the Garner effect and the interaction between two facial dimensions (Experiment 1: gaze vs. expression; Experiment 2: gaze vs. gender) are both taken as evidence of interference processing. We hypothesize that a Garner effect or an interaction effect will be observed if there is interference processing of expression and gaze and of gender and gaze. Following previous studies (Graham & LaBar, 2007; Y. Wang et al., 2013), we expect the less discriminable dimension to be affected by the more discriminable dimension, but not vice versa. In addition, to examine whether interference from invariant and variant information on another kind of variant information (gaze) is modulated by discriminability, we focus on whether interference in gaze from expression and gender differs as a function of discriminability.

Experiment 1: Expression versus gaze

The interactions between expression and gaze have been widely investigated using the Garner paradigm (Ganel et al., 2005; Graham & LaBar, 2007). Experiment 1 attempts to extend the previous results in two directions. First, the discriminability of expression indicates the difficulty of classifying different expressions. Morphed and confusing expressions were used to manipulate the difficulty of classifying expressions in previous studies (Graham & LaBar, 2007). However, different actors may display different degrees of intensity when demonstrating expressions and may influence expression discriminability. To this end, we define and directly manipulate the expression discriminability using the assessment of participants. Second, previous studies indicate that the approach-related expressions (e.g., happiness and anger) benefit from direct gaze, while the avoidance-related expressions (e.g., sad and fearful) benefit from averted gaze (Adams & Kleck, 2003; Ganel et al., 2005). Few studies have used surprise as an expression to investigate interference processing of expression and gaze. “Surprise” shares many facial features with “fear” and has ambiguous valence (Fontaine et al., 2007; Gosselin & Simard, 1999; Lassalle & Itier, 2013). Thus, we chose the combination of anger and surprise to investigate the interaction of expression and gaze.

Method

Participants

Based on the effect size of ηp² = 0.065–0.084 for the Garner effect (P. Wang et al., 2018), an a priori power analysis conducted with G*Power 3.1 (Faul et al., 2007) indicated that 24–31 participants would be required for 80% power to detect the Garner effect at an alpha level of 0.05. Thirty-two participants (21 females, 18–22 years old, mean age = 19.4 years) were recruited. All participants were healthy and right-handed, with normal or corrected-to-normal vision. The research protocol was approved by the local Institutional Review Board (IRB) of the School of Psychology, Shandong Normal University, and all participants gave written informed consent before the experiment.
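G*Power expresses ANOVA effect sizes as Cohen's f, so a partial eta squared is usually converted first with the standard relation f = sqrt(ηp² / (1 − ηp²)). The sketch below applies that conversion to the two effect sizes quoted above. The function name is hypothetical, and because the remaining G*Power settings (for example, the assumed correlation among repeated measures) are not reported here, the sketch does not attempt to reproduce the 24–31 sample-size range.

```python
import math


def cohens_f_from_partial_eta_squared(eta_p2):
    """Convert partial eta squared to Cohen's f via f = sqrt(eta / (1 - eta))."""
    if not 0.0 <= eta_p2 < 1.0:
        raise ValueError("partial eta squared must lie in [0, 1)")
    return math.sqrt(eta_p2 / (1.0 - eta_p2))


# Effect sizes quoted from P. Wang et al. (2018) in the text above.
f_low = cohens_f_from_partial_eta_squared(0.065)
f_high = cohens_f_from_partial_eta_squared(0.084)
print(f"f = {f_low:.3f} to {f_high:.3f}")
```

Both values fall roughly between 0.26 and 0.30, which lies between Cohen's conventional medium (0.25) and large (0.40) benchmarks for f.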

Apparatus and materials

Forty actors (20 females) were randomly generated by FaceGen Modeller (Singular Inversions, 2006; https://facegen.com). Twelve stimuli were produced from each actor by combining expression (anger, surprise), expression discriminability (high, low), and gaze direction (direct, right, left). Left and right gaze served as the averted gaze stimuli. The discriminability of expression was modulated in FaceGen: high expression discriminability was achieved by setting the expression intensity to 1, and low expression discriminability by setting it to 0.4. Thirty people who did not participate in the formal experiment rated the expression discriminability (i.e., intensity) of the 480 facial stimuli on a 7-point scale (1 = very low, 7 = very high). To eliminate contamination by facial identity, only actors showing a significant difference in discriminability for both anger and surprise were used in the formal experiment. As a result, only four actors (two females, two males) were used. The mean difference between high and low discriminability for the angry expression was significant (5.52 ± 1.21 vs. 3.35 ± 1.27), t(29) = 9.08, p < .01, Cohen’s d = 1.75, as was that for surprise (3.56 ± 1.08 vs. 2.74 ± .97), t(29) = 1.77, p < .01, Cohen’s d = .80. Thus, a total of 48 pictures (females:males = 1:1) were used in Experiment 1.

All images were converted to grayscale and cropped to eliminate background, ears, hair, and neck, using Adobe Photoshop CS5 software (California, USA). These images were set against a white background and presented on a 14-inch monitor (resolution: 1,024 × 768; refresh rate: 60 Hz) with E-Prime 2.0 software. The viewing distance was 60 cm. The stimulus size was 4° × 5.5° (113 × 156 pixels).
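The relation between visual angle, viewing distance, and on-screen size can be made explicit with the usual formula size = 2 · d · tan(θ / 2). The sketch below applies it to the reported 4° × 5.5° stimulus at 60 cm. The function name is hypothetical, and the conversion to pixels is deliberately left out because it depends on the physical pixel pitch of the monitor, which is not stated.

```python
import math


def size_from_visual_angle(angle_deg, distance_cm):
    """Physical extent (cm) subtending `angle_deg` at `distance_cm`."""
    return 2.0 * distance_cm * math.tan(math.radians(angle_deg) / 2.0)


VIEWING_DISTANCE_CM = 60.0  # viewing distance reported in the text

width_cm = size_from_visual_angle(4.0, VIEWING_DISTANCE_CM)
height_cm = size_from_visual_angle(5.5, VIEWING_DISTANCE_CM)
print(f"{width_cm:.2f} cm x {height_cm:.2f} cm")
```

Under these assumptions the stimulus would span about 4.2 cm by 5.8 cm on the screen.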

Design and procedure

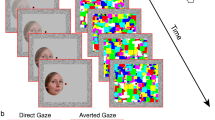

The experiment adopted a 2 (block: baseline, orthogonal) × 2 (gaze: direct, averted) × 2 (expression: angry, surprise) × 2 (task: gaze, expression) × 2 (expression discriminability: high, low) within-subjects design. In the baseline block, the task relevant dimension (e.g., gaze) varies while the task irrelevant dimension (e.g., expression) is constant (i.e., angry-direct and angry-averted, Fig. 1a; or surprise-direct and surprise-averted). In the orthogonal block, both the task relevant and irrelevant dimensions vary so that all four possible combinations of the two dimensions are contained (i.e., angry-direct, angry-averted, surprise-direct, and surprise-averted, Fig. 1a). In the expression task, participants were required to make a two-alternative judgment of expression (surprise or angry). In the gaze task, participants were required to make a two-alternative judgment of gaze direction (direct or averted). Half of Experiment 1 employed high expression discriminability faces (Experiment 1a); the other half used low expression discriminability faces (Experiment 1b).

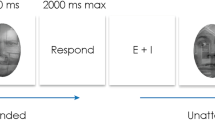

Each trial began with a fixation cross for 400 ms, immediately followed by a facial stimulus presented in the center of the screen for up to 1,500 ms. Participants were required to press a key according to the categorization task (e.g., “F” for direct gaze, “J” for averted gaze) after the onset of the facial stimulus. The face disappeared after a response or after 1,500 ms, whichever came first, so participants had to respond within 1,500 ms. The intertrial interval varied randomly from 200 to 300 ms (Fig. 1b). Reaction time (RT) and accuracy (ACC) were recorded, and the data were analyzed with SPSS 21.0 software (IBM Corp, Armonk, NY). Each condition had 32 trials, for a total of 1,024 trials. The order of block presentation was random for each participant. The order of high and low expression discriminability was counterbalanced across participants.
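The trial total follows directly from the five two-level within-subjects factors of the design: 2⁵ = 32 conditions, each with 32 trials. A minimal Python sketch of that bookkeeping is given below. The dictionary keys and level labels are illustrative names taken from the design description, not identifiers from the authors' E-Prime script.

```python
from itertools import product

# Five two-level within-subjects factors of Experiment 1.
FACTORS = {
    "block": ("baseline", "orthogonal"),
    "gaze": ("direct", "averted"),
    "expression": ("angry", "surprise"),
    "task": ("gaze", "expression"),
    "discriminability": ("high", "low"),
}
TRIALS_PER_CONDITION = 32  # reported in the text

# Fully crossing the factors yields every design cell.
conditions = [dict(zip(FACTORS, levels)) for levels in product(*FACTORS.values())]
total_trials = len(conditions) * TRIALS_PER_CONDITION
print(len(conditions), total_trials)
```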

Results

High and low expression discriminability

RT and ACC were subjected to a repeated-measures analysis of variance (ANOVA), with expression discriminability (high vs. low), task (gaze vs. expression), block (baseline vs. orthogonal), gaze (direct vs. averted), and expression (anger vs. surprise) as within-subjects variables. The Expression Discriminability × Task interaction was significant, RT: F(1, 31) = 36.76, p < .001, ηp² = .54; ACC: F(1, 31) = 28.64, p < .001, ηp² = .48. Participants made expression judgments faster than gaze judgments under high expression discriminability (p < .001). The RT of the gaze task in Experiments 1a and 1b was 551 ± 11.32 and 553 ± 11.02 ms, respectively. The ACC of the gaze task in Experiments 1a and 1b was 91.6% ± .8% and 93.0% ± .5%, respectively. The RT of the expression task in Experiments 1a and 1b was 476 ± 8.21 and 538 ± 10.22 ms, respectively (p < .001). The ACC of the expression task in Experiments 1a and 1b was 94.5% ± .8% and 89.0% ± 1.1%, respectively (p < .001). No speed–accuracy trade-off was found for the gaze and expression tasks. These results suggest that expression judgments were faster and more accurate under high than under low expression discriminability. The ANOVA also revealed a significant Expression Discriminability × Task × Gaze × Expression interaction, RT: F(1, 31) = 8.98, p < .01, ηp² = .23; ACC: F(1, 31) = 12.00, p < .01, ηp² = .28, and a significant Task × Block × Gaze × Expression interaction, RT: F(1, 31) = 20.18, p < .001, ηp² = .39; ACC: F(1, 31) = 13.24, p < .001, ηp² = .30.
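The partial eta squared values reported with each F test can be recovered from the F statistic and its degrees of freedom with the standard identity (F · df_effect) / (F · df_effect + df_error). The short sketch below applies it to the Expression Discriminability × Task interaction. The function name is hypothetical, and this is offered as a reader's consistency check rather than the authors' SPSS procedure.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared implied by an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Expression Discriminability x Task interaction, F(1, 31), as reported above.
eta_rt = partial_eta_squared(36.76, 1, 31)
eta_acc = partial_eta_squared(28.64, 1, 31)
print(round(eta_rt, 2), round(eta_acc, 2))
```

Rounded to two decimals, these reproduce the reported effect sizes of .54 for RT and .48 for ACC.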

To further evaluate the role of discriminability in interference processing of gaze and expression, a 2 (block: baseline, orthogonal) × 2 (gaze: direct, averted) × 2 (expression: anger, surprise) repeated-measures ANOVA was performed (Greenhouse–Geisser correction) on Experiments 1a and 1b for each task. LSD correction was used for multiple-comparison and post hoc analysis. Table 1 shows the main effect of block and the Gaze × Expression interaction for gaze and expression task under high (Exp. 1a) and low (Exp. 1b) expression discriminability.

Experiment 1a: High expression discriminability

Gaze task

The RT results exhibit significant main effects of block, F(1, 31) = 9.79, p < .01, ηp² = .24; expression, F(1, 31) = 26.30, p < .001, ηp² = .46; and gaze, F(1, 31) = 6.33, p < .05, ηp² = .17. RT was faster in the baseline than in the orthogonal block (543 ± 12.43 vs. 560 ± 10.78 ms), indicating a Garner effect (Fig. 2). Meanwhile, the Gaze × Expression interaction was significant, F(1, 31) = 71.63, p < .001, ηp² = .70, showing that the RT of surprise-direct faces was faster than that of surprise-averted faces (530 ± 10.69 vs. 548 ± 10.55 ms, p < .001) and that the RT of angry-averted faces was faster than that of angry-direct faces (544 ± 12.54 vs. 583 ± 13.48 ms, p < .001; Fig. 3). Additionally, the three-way Block × Gaze × Expression interaction was significant, F(1, 31) = 19.53, p < .001, ηp² = .39. Further analysis showed that, in the baseline block, the RT of surprise-direct faces was faster than that of surprise-averted faces (524 ± 10.94 vs. 533 ± 11.75 ms, p < .029) and the RT of angry-averted faces was faster than that of angry-direct faces (546 ± 14.38 vs. 570 ± 15.82 ms, p < .009). In the orthogonal block, the RT of surprise-direct faces was faster than that of surprise-averted faces (538 ± 11.80 vs. 563 ± 10.25 ms, p < .001) and the RT of angry-averted faces was faster than that of angry-direct faces (543 ± 11.82 vs. 596 ± 12.07 ms, p < .001).

Mean of the reaction times obtained in Experiment 1 for the baseline and orthogonal conditions across gaze and expression tasks. Error bars show the standard error. “*” indicates the difference was < .05, “**” indicates the difference was < .01

The ACC results exhibit significant main effects of block, F(1, 31) = 9.26, p < .01, ηp² = .23, and expression, F(1, 31) = 22.15, p < .001, ηp² = .42. ACC was higher in the baseline than in the orthogonal block (92.8% ± .8% vs. 90.5% ± .9%), indicating a Garner effect. Meanwhile, the Gaze × Expression interaction was significant, F(1, 31) = 56.18, p < .001, ηp² = .64, showing that the ACC of surprise-direct faces was higher than that of surprise-averted faces (96.6% ± .5% vs. 89.4% ± 1.0%, p < .001) and that the ACC of angry-averted faces was higher than that of angry-direct faces (92.3% ± .9% vs. 88.3% ± 1.3%, p < .01). Likewise, the three-way Block × Gaze × Expression interaction was significant, F(1, 31) = 29.05, p < .001, ηp² = .48. Further analysis showed that, in the baseline block, the ACC of surprise-direct faces was higher than that of surprise-averted faces (95.7% ± .7% vs. 91.9% ± .8%, p < .001). In the orthogonal block, the ACC of surprise-direct faces was higher than that of surprise-averted faces (97.5% ± .6% vs. 86.8% ± 1.4%, p < .001), and the ACC of angry-averted faces was higher than that of angry-direct faces (92.3% ± 1.0% vs. 85.4% ± 1.7%, p < .002).

Expression task

No RT effects were significant, Fs ≤ 2.71, ps ≥ .110. The ACC results exhibit a significant main effect of expression, F(1, 31) = 4.56, p < .05, ηp² = .13. The ACC of surprise was higher than that of anger (95.1% ± .8% vs. 93.9% ± .9%).

Experiment 1b: Low expression discriminability

Gaze task

The RT results exhibit significant main effects of block, F(1, 31) = 7.85, p < .01, ηp² = .20; expression, F(1, 31) = 26.42, p < .001, ηp² = .46; and gaze, F(1, 31) = 13.35, p = .001, ηp² = .30. RT was faster in the baseline than in the orthogonal block (542 ± 11.75 vs. 564 ± 11.60 ms), indicating a Garner effect (Fig. 2). Meanwhile, the Gaze × Expression interaction was significant, F(1, 31) = 67.78, p < .001, ηp² = .69, showing that the RT of angry-averted faces was faster than that of angry-direct faces (543 ± 11.29 vs. 580 ± 12.24 ms, p < .001; Fig. 3). Also, the three-way Block × Gaze × Expression interaction was significant, F(1, 31) = 23.28, p < .001, ηp² = .43. Further analysis showed that, in the orthogonal block, the RT of surprise-direct faces was faster than that of surprise-averted faces (546 ± 11.93 vs. 564 ± 12.35 ms, p < .004), and the RT of angry-averted faces was faster than that of angry-direct faces (547 ± 10.89 vs. 599 ± 13.13 ms, p < .001).

The ACC results exhibit a significant main effect of expression, F(1, 31) = 9.04, p < .01, ηp² = .23. Meanwhile, the Gaze × Expression interaction was significant, F(1, 31) = 28.75, p < .001, ηp² = .48, showing that the ACC of surprise-direct faces was higher than that of surprise-averted faces (95.9% ± .5% vs. 92.0% ± .8%, p < .001). Moreover, the three-way Block × Gaze × Expression interaction was significant, F(1, 31) = 11.36, p < .01, ηp² = .27. Further analysis showed that, in the orthogonal block, the ACC of surprise-direct faces was higher than that of surprise-averted faces (97.1% ± .5% vs. 91.3% ± 1.0%, p < .001) and the ACC of angry-averted faces was higher than that of angry-direct faces (94.2% ± .8% vs. 89.4% ± 1.4%, p < .008).

Expression task

The RT results exhibit a significant main effect of block, F(1, 31) = 5.84, p < .05, ηp² = .16, showing that RT was faster in the baseline than in the orthogonal block (530 ± 11.54 vs. 547 ± 9.92 ms), indicating a Garner effect (Fig. 2). The ACC results exhibit a significant main effect of expression, F(1, 31) = 22.70, p < .001, ηp² = .42. The ACC of surprise was higher than that of anger (93.2% ± .9% vs. 84.8% ± 1.8%).

Based on these results, the Garner effect was observed in the gaze task of Experiment 1a (high expression discriminability) and in both the gaze and expression tasks of Experiment 1b (low expression discriminability). This pattern suggests asymmetrical interference between expression and gaze processing under high expression discriminability and symmetrical interference under low expression discriminability.
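The step from per-task Garner effects to the labels "symmetrical" and "asymmetrical" is a simple decision rule, sketched below. The function and dictionary names are hypothetical. The boolean entries merely restate the summary of Experiment 1 given above (whether a Garner effect was observed in each task) and are not a reanalysis of the data.

```python
def interference_pattern(interference_in_task_a, interference_in_task_b):
    """Label the pattern implied by interference observed in each of two tasks."""
    if interference_in_task_a and interference_in_task_b:
        return "symmetrical"
    if interference_in_task_a or interference_in_task_b:
        return "asymmetrical"
    return "none"


# Garner effect observed? (gaze task, expression task), per the summary above.
EXPERIMENT_1 = {
    "1a (high expression discriminability)": (True, False),
    "1b (low expression discriminability)": (True, True),
}

patterns = {
    name: interference_pattern(gaze_task, expression_task)
    for name, (gaze_task, expression_task) in EXPERIMENT_1.items()
}
for name, label in patterns.items():
    print(f"Experiment {name}: {label} interference")
```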

Experiment 2: Gender versus gaze

Previous studies show that the direction of gaze affects facial gender processing (Macrae et al., 2002; Vuilleumier et al., 2005), suggesting that individuals are unable to ignore gaze when responding to facial gender. However, it is unclear whether individuals can ignore facial gender when responding to gaze. If they cannot, the influence of gaze on facial gender and of facial gender on gaze would be found, which would contradict the proposal that facial variant and invariant information are processed in parallel mode (Haxby et al., 2000). Furthermore, we are interested in the role of facial gender discriminability in interference processing of gender and gaze because previous studies have observed that the discriminability affected interference processing of variant and invariant information (i.e., expression and identity; Y. Wang et al., 2013).

Method

Participants

A new group of 32 participants took part in Experiment 2; one was excluded because accuracy was below 30%. Hence, 31 participants (21 females, 18–22 years old, mean age = 19.81 years) were included in the analysis. All participants were right-handed with normal or corrected-to-normal vision. The research protocol was approved by the local Institutional Review Board (IRB) of the School of Psychology, Shandong Normal University, and all participants gave written informed consent before the experiment.

Apparatus and materials

Forty actors (20 females) with neutral expressions were randomly generated by FaceGen Modeller (Singular Inversions, 2006). Twelve stimuli were produced from each actor by combining facial gender (male, female), gender discriminability (high, low), and gaze direction (direct, right, and left). The discriminability of gender was modulated in FaceGen: high gender discriminability was obtained by setting gender intensity to very male/female, and low gender discriminability by setting it to male/female. Thirty people who did not participate in the formal experiment rated the gender discriminability (i.e., intensity) of the 480 facial stimuli on a 7-point scale (1 = very low, 7 = very high). To eliminate contamination by facial identity, only actors showing a significant difference in discriminability were used in the formal experiment. As a result, four actors (two females, two males) were used. The mean difference between high and low discriminability for male facial stimuli was significant (6.47 ± 1.03 vs. 3.35 ± 1.27), t(29) = 4.33, p < .001, Cohen's d = .89, as was that for female facial stimuli (5.10 ± 1.15 vs. 3.24 ± 1.33), t(29) = 6.09, p < .001, Cohen's d = 1.50. Forty-eight pictures (females:males = 1:1) were used in Experiment 2. Stimulus details and apparatus were the same as in Experiment 1.

Design and procedure

The experiment adopted a 2 (block: baseline, orthogonal) × 2 (gaze: direct, averted) × 2 (gender: male, female) × 2 (task: gaze, gender) × 2 (gender discriminability: high, low) within-subjects design. In the baseline block, the task relevant dimension (e.g., gender) varies while the task irrelevant dimension (e.g., gaze) is constant (i.e., male-direct and female-direct). In the orthogonal block, both the task relevant and irrelevant dimensions vary so that all four possible combinations of the two dimensions are contained (i.e., male-direct, male-averted, female-direct, and female-averted). In the gender task, participants were required to make a two-alternative judgment of gender (male or female), and in the gaze task, they were required to make a two-alternative judgment of gaze direction (direct or averted). Half of Experiment 2 used high gender discriminability faces (i.e., Experiment 2a); the other half used low discriminability faces (i.e., Experiment 2b). Other settings were the same as in Experiment 1.

Results

High and low gender discriminability

RT and ACC were subjected to a repeated-measures ANOVA, with gender discriminability (high vs. low), task (gaze vs. gender), block (baseline vs. orthogonal), gaze (direct vs. averted), and gender (male vs. female) as within-subjects variables. The Gender Discriminability × Task interaction was significant, RT: F(1, 30) = 117.652, p < .001, ηp² = .79; ACC: F(1, 30) = 30.842, p < .001, ηp² = .51. Participants made gender judgments faster than gaze judgments with high gender discriminability (p < .001). The RT of the gaze task in Experiments 2a and 2b was 561 ± 12.27 and 546 ± 13.14 ms, respectively. The ACC of the gaze task in Experiments 2a and 2b was 91.4% ± .7% and 93.4% ± .6%, respectively. The RT of the gender task in Experiments 2a and 2b was 431 ± 9.53 and 517 ± 8.88 ms, respectively (p < .001). The ACC of the gender task in Experiments 2a and 2b was 97.2% ± .4% and 93.0% ± 1.0%, respectively (p < .001). No speed–accuracy trade-off was found for the gaze and gender tasks. These results show that gender judgments were faster and more accurate with high than with low gender discriminability. The ANOVA revealed a significant Gender Discriminability × Gaze × Gender interaction, F(1, 30) = 5.976, p < .05, ηp² = .16, a significant Task × Gaze × Gender interaction on RT, F(1, 30) = 7.720, p < .01, ηp² = .20, and a significant Gender Discriminability × Task × Block × Gaze × Gender interaction on ACC, F(1, 30) = 5.315, p < .05, ηp² = .15.

Consistent with Experiment 1, to evaluate the role of discriminability in interference processing of gaze and gender, a 2 (block: baseline, orthogonal) × 2 (gaze: direct, averted) × 2 (gender: male, female) repeated-measures ANOVA was performed (Greenhouse–Geisser correction) on Experiments 2a and 2b for each task. LSD correction was used for multiple-comparison and post hoc analysis. Table 2 shows the main effect of block and the Gaze × Gender interaction for gaze and gender task with high (Exp. 2a) and low (Exp. 2b) gender discriminability.

Experiment 2a: High gender discriminability

Gaze task

The RT results show a significant main effect of gender, F(1, 30) = 38.56, p < .001, ηp² = .56. The Block × Gender interaction was significant, F(1, 30) = 6.53, p < .05, ηp² = .18; post hoc analysis indicated that the RT of female faces was faster than that of male faces in both the baseline and orthogonal blocks (baseline: 540 ± 10.83 vs. 582 ± 16.29 ms, p < .001; orthogonal: 555 ± 11.50 vs. 577 ± 13.46 ms, p < .001). Although there was no significant main effect of block, the Gender × Gaze interaction was significant, F(1, 30) = 19.30, p < .001, ηp² = .39, indicating that gender affects gaze processing. Further analysis shows that the RT of male-averted faces was faster than that of male-direct faces (569 ± 14.22 vs. 591 ± 15.01 ms, p < .001; Fig. 4). The ACC results show a significant main effect of gender, F(1, 30) = 24.97, p < .001, ηp² = .45, indicating that ACC was higher for female than for male faces (93.5% ± .5% vs. 89.4% ± 1.1%).

Gender task

The RT results show significant main effects of block, F(1, 30) = 6.69, p < .05, ηp² = .18, and gaze, F(1, 30) = 7.45, p < .05, ηp² = .20. RT was faster in the baseline than in the orthogonal block (421 ± 7.99 vs. 438 ± 12.19 ms), indicating a Garner effect (Fig. 5). The ACC results did not show significant effects, Fs ≤ 4.00, ps ≥ .055.

Mean of the reaction times obtained in Experiment 2 for the baseline and orthogonal conditions across gaze and gender tasks. Error bars show the standard error. “*” indicates the difference was < .05

Experiment 2b: Low gender discriminability

Gaze task

The RT results show a significant main effect of gaze, F(1, 30) = 20.61, p < .001, ηp² = .41. The Gaze × Gender interaction was significant, F(1, 30) = 4.69, p < .05, ηp² = .14. Post hoc analysis showed that the RT of averted gaze was faster than that of direct gaze for both male and female faces (male: 539 ± 14.23 vs. 560 ± 13.75 ms, p < .001; female: 541 ± 13.04 vs. 554 ± 13.44 ms, p < .01; Fig. 4), indicating that facial gender influenced the processing of gaze. The ACC results did not show significant effects, Fs ≤ 4.13, ps ≥ .051.

Gender task

The RT and ACC results did not show significant effects, RT: Fs ≤ 1.95, ps ≥ .173; ACC: Fs ≤ 4.01, ps ≥ .054.

In sum, the Garner effect was observed in the gender task of Experiment 2a (high gender discriminability). The Garner effect was not observed in the gaze task of either Experiment 2a (high gender discriminability) or Experiment 2b (low gender discriminability), but a significant interaction between gaze and gender was revealed in both, indicating that gender influences gaze processing under both high and low gender discriminability. Taken together, the results suggest symmetrical interference between gender and gaze processing with high gender discriminability and asymmetrical interference with low discriminability.

Comparison of Experiments 1 and 2

To explore whether interference of expression and gender on gaze processing varies across high and low discriminability, a 2 (facial information: expression, gender) × 2 (discriminability: high, low) × 2 (block: baseline, orthogonal) ANOVA was performed on gaze task RT and ACC. The Facial Information × Block interaction was significant on RT, F(1, 30) = 6.33, p < .05, ηp² = .18, showing that there was interference from expression (baseline: 545 ± 11.65 vs. orthogonal: 565 ± 10.65 ms, p < .001), but not from gender. Additionally, the Facial Information × Discriminability interaction on RT was significant, F(1, 30) = 6.39, p < .05, ηp² = .18. Further analysis found that gaze judgment RTs did not differ between high and low expression discriminability but did differ between high and low gender discriminability (564 ± 12.44 vs. 548 ± 13.33 ms, p < .05). The results indicate that the effect of expression on gaze processing is relatively stable, while the effect of gender on gaze is modulated by discriminability.

General discussion

Using the Garner paradigm, a useful tool for demonstrating how different aspects of facial processing interact, we investigated the effect of discriminability on interference processing of expression and gaze and of gender and gaze. The pattern of interference differed between the two pairs of facial dimensions. There was asymmetrical/unidirectional interference processing of expression and gaze with high expression discriminability and symmetrical/bidirectional interference with low discriminability. The asymmetric interference with high expression discriminability indicates that participants were able to focus on expression while ignoring gaze, but unable to ignore expression when focusing on gaze. In contrast, symmetrical/bidirectional interference processing of gender and gaze was found with high gender discriminability and asymmetrical/unidirectional interference with low discriminability. The asymmetric interference with low gender discriminability indicates that participants were able to focus on facial gender while ignoring gaze, but unable to ignore facial gender when focusing on gaze. In addition, unlike the influence of facial gender, the influence of expression on gaze processing was not modulated by discriminability. Taken together, interference processing of facial information depends on the degree of discriminability.

Interference processing of expression and gaze

The findings of Experiment 1 extend and support those of previous studies. Using stimuli with high discriminability, expression interfered with gaze judgment, but gaze did not interfere with expression judgment. However, using stimuli with low discriminability, expression interfered with gaze judgment, and gaze interfered with expression judgment. This is consistent with the model of facial processing, where there is an interaction between the processing of variant facial features (Haxby et al., 2000).

These results support the speed-of-processing account, which holds that easily discriminable information is processed before less discriminable information can interfere with it, whereas the easily discriminable information interferes with the processing of less discriminable information (Atkinson et al., 2005; Graham & LaBar, 2007; Le Gal & Bruce, 2002). The current study found a faster response for expression judgment than for gaze judgment with high expression discriminability, and no difference between expression and gaze judgment with low discriminability. Accordingly, unidirectional interference of expression and gaze was found with high expression discriminability and bidirectional interference with low expression discriminability.

ERP results may also provide partial support for our observations. Previous studies observed that expression but not gaze modulated the P1 and N170 components, and that the interaction of expression and gaze appears at about 300 ms, indicating that expression and gaze are processed independently before they are processed interactively (Nomi et al., 2013). In our study, it was easier and faster to distinguish each expression with high discriminability than with low discriminability. Therefore, we found symmetrical interference processing of expression and gaze with low discriminability since more time is required to distinguish expression with low discriminability. In addition, previous studies showed that expressions can be processed automatically (Kreegipuu et al., 2013; Luo et al., 2010; Zhao et al., 2015). Thus, as task-irrelevant information, expression interferes with gaze judgment regardless of the degree of discriminability.

In addition, previous studies observed that direct gaze was processed more quickly and accurately than averted gaze when coupled with angry faces (Adams & Franklin, 2009; Artuso et al., 2012). In the current gaze task, by contrast, the advantage for averted gaze occurred for angry faces, while the advantage for direct gaze occurred for surprised faces. This discrepancy may be related to the relative approach-avoidance motivation of the expression. According to the shared signal hypothesis (Adams & Kleck, 2003, 2005), anger and direct gaze are associated with approach motivation, while fear and averted gaze are associated with avoidance motivation. However, the motivation of an expression might change with context. Previous studies suggest that the surprise expression is closely related to reward seeking, which is associated with approach motivation (Murty et al., 2016), and that the angry expression can convey avoidance motivation (Sass et al., 2010; Watson, 2009). This may explain why, in our results, averted gaze was processed more quickly and accurately for angry faces and direct gaze was processed more quickly and accurately for surprised faces. The latter was also observed by Graham and LaBar (2007).

Interference processing of gender and gaze

Experiment 2 showed a Garner effect in the gender task with high discriminability. Garner effects were not found in the gaze task, but significant interactions between gender and gaze were found with both high and low gender discriminability. Together, these results indicate that when using stimuli with high discriminability in facial gender, gaze interfered with gender judgment, and gender interfered with gaze judgment. However, using stimuli with low gender discriminability, gaze did not interfere with gender judgment, but gender did interfere with gaze judgment. These findings are not consistent with the notion of independence between processing of variant and invariant facial features (Haxby et al., 2000).

Note that these results cannot be attributed to speed of processing. According to this account, no interference should be observed in the gender task with high discriminability, because there was an overall reaction time advantage for gender judgment relative to gaze judgment. An alternative explanation for the present findings is that classifying gender mainly relies on parts of the face, especially the eye and eyebrow regions (Dupuis-Roy et al., 2009; Yamaguchi et al., 2013). The eye region might be more salient with high gender discriminability, resulting in symmetrical interference processing of facial gender and gaze. Correspondingly, the eye region might be less salient with low gender discriminability, so that individuals could not effectively classify gender based on the eye region. As a result, interference of gaze on gender weakened or even disappeared. However, there was still an interference of gender on gaze with low gender discriminability. The reason might lie in the neural mechanisms of gender and gaze processing. Previous studies showed that gender categorization is highly automatic and can be reflected in the N170 component (around 130 ms; Hügelschäfer et al., 2016; Reddy et al., 2004), while gaze processing occurs later (220–350 ms; McCrackin & Itier, 2019; Schweinberger et al., 2007). Taken together, with low discriminability, facial gender could affect gaze categorization, but gaze could not affect gender categorization.

In addition, we observed that averted gaze was processed more quickly than direct gaze, especially when coupled with male faces. This was partially consistent with Vuilleumier et al. (2005), who used a gender task and found this effect for faces of the opposite gender to the participants; most of our participants were female. It is well known that direct gaze, which activates the approach motivational brain systems, has an important role in social communication and behavior (Hietanen et al., 2008). As mentioned by Vuilleumier et al. (2005), this importance might underlie the prolonged analysis of direct gaze, resulting in a faster response for averted than for direct gaze. Alternatively, our results might be attributed to the facial gender classification mechanism. Potential cues that allow individuals to discriminate male faces from female faces involve the eye and eyebrow regions, such as the distance between the eyelid and eyebrow (Burton et al., 1993). A smaller distance between the eyelid and eyebrow is a salient feature of male faces (Campbell, 1996). In contrast to direct gaze, averted gaze also seems to be affected by the distance between the eyelid and eyebrow, which should be investigated further.

Effects of expression and gender discriminability on gaze

Comparing Experiments 1 and 2, we found that the influence of expression on gaze was relatively stable and was not modulated by expression discriminability, while the effect of gender on gaze was modulated by gender discriminability. This may be related to the neural mechanisms of facial information processing. Expression and gaze appear to share similar neural mechanisms. The superior temporal sulcus, which is sensitive to changes of gaze, can also be activated by expression (Furl et al., 2007). The amygdala, which is involved in expression processing, is also sensitive to gaze (Dumas et al., 2013). In contrast, gender and gaze processing are located in the fusiform gyrus and superior temporal sulcus, respectively (Cloutier et al., 2008). Together, these results suggest that expression is more closely associated with gaze than gender information is.

Limitations

To eliminate contamination by facial identity, only the actor who had significant differences in discriminability for both anger and surprise expressions was used in the formal experiment. As a consequence, the number of stimuli was low, and the surprise expression had lower discriminability than the anger expression. Nevertheless, we found that identification of surprise had a higher accuracy than anger in the expression task. This might be related to the salient features of the surprise expression, such as eye widening (Bayless et al., 2011). Even so, the unbalanced discriminability of anger and surprise should be considered in further work. The other issue is the imbalance between males and females in the sample tested, since previous studies reported that females outperform males on the recognition of expression and gender (Herlitz & Lovén, 2013, for a review; Olderbak et al., 2019).

Conclusion

Using the Garner paradigm, the current study confirmed that gaze affects the categorization of expression and gender according to expression and gender discriminability, whereas expression and gender affect the categorization of gaze regardless of expression and gender discriminability. The results provide further evidence that the processing of gaze and other facial variant and invariant information is interdependent and depends on the degree of discriminability. Relative to gender, expression steadily affected the categorization of gaze, suggesting a unitary system underlying the processing of gaze and expression. What remains to be delineated is the time interval with regard to interference processing of gaze, expression and gender.

References

Abbruzzese, L., Magnani, N., Robertson, I. H., & Mancuso, M. (2019). Age and gender differences in emotion recognition. Frontiers in Psychology, 10, Article 2371. https://doi.org/10.3389/fpsyg.2019.02371

Adams, R. B., & Franklin, R. G. (2009). Influence of emotional expression on the processing of gaze direction. Motivation and Emotion, 33(2), 106–112. https://doi.org/10.1007/s11031-009-9121-9

Adams, R. B., & Kleck, R. E. (2003). Perceived gaze direction and the processing of facial displays of emotion. Psychological Science, 14(6), 644–647. https://doi.org/10.2307/40063926

Adams, R. B., & Kleck, R. E. (2005). Effects of direct and averted gaze on the perception of facially communicated emotion. Emotion, 5(1), 3–11. https://doi.org/10.1037/1528-3542.5.1.3

Artuso, C., Palladino, P., & Ricciardelli, P. (2012). How do we update faces? Effects of gaze direction and facial expressions on working memory updating. Frontiers in Psychology, 3, Article 362. https://doi.org/10.3389/fpsyg.2012.00362

Atkinson, A. P., Tipples, J., Burt, D. M., & Young, A. W. (2005). Asymmetric interference between sex and emotion in face perception. Perception & Psychophysics, 67(7), 1199–1213. https://doi.org/10.3758/BF03193553

Bayless, S. J., Glover, M., Taylor, M. J., & Itier, R. J. (2011). Is it in the eyes? Dissociating the role of emotion and perceptual features of emotionally expressive faces in modulating orienting to eye gaze. Visual Cognition, 19(4), 483–510. https://doi.org/10.1080/13506285.2011.552895

Bindemann, M., Burton, A. M., & Langton, S. R. H. (2008). How do eye gaze and facial expression interact? Visual Cognition, 16(6), 708–733. https://doi.org/10.1080/13506280701269318

Burton, A. M., Bruce, V., & Dench, N. (1993). What’s the difference between men and women? Evidence from facial measurement. Perception, 22(2), 153–176. https://doi.org/10.1068/p220153

Campbell, R. (1996). Real men don’t look down: Direction of gaze affects sex decisions on faces. Visual Cognition, 3(4), 393–412. https://doi.org/10.1080/135062896395643

Cloutier, J., Turk, D. J., & Macrae, C. N. (2008). Extracting variant and invariant information from faces: The neural substrates of gaze detection and sex categorization. Social Neuroscience, 3(1), 69–78. https://doi.org/10.1080/17470910701563483

Dores, A. R., Barbosa, F., Queiros, C., Carvalho, I. P., & Griffiths, M. D. (2020). Recognizing emotions through facial expressions: A largescale experimental study. International Journal of Environmental Research and Public Health, 17, Article 7420. https://doi.org/10.3390/ijerph17207420

Dumas, T., Dubal, S., Attal, T., Chupin, M., Jouvent, R., Morel, S., & George, N. (2013). MEG evidence for dynamic amygdala modulations by gaze and facial emotions. PLOS ONE, 8(9), Article e74145. https://doi.org/10.1371/journal.pone.0074145

Dupuis-Roy, N., Fortin, I., Fiset, D., & Gosselin, F. (2009). Uncovering gender discrimination cues in a realistic setting. Journal of Vision, 9(2), 1–8. https://doi.org/10.1167/9.2.10

Faul, F., Erdfelder, E., Lang, A. G., & Buchner, A. (2007). G*Power 3: A flexible statistical power analysis program for the social behavioral and biomedical sciences. Behavior Research Methods, 39(2), 175–191. https://doi.org/10.3758/BF03193146

Firestone, A., Turk-Browne, N. B., & Ryan, J. D. (2007). Age-related deficits in face recognition are related to underlying changes in scanning behavior. Aging, Neuropsychology, and Cognition, 14(6), 594–607. https://doi.org/10.1080/13825580600899717

Fontaine, J. R., Scherer, K. R., Roesch, E. B., & Ellsworth, P. C. (2007). The world of emotions is not two-dimensional. Psychological Science, 18(12), 1050–1057. https://doi.org/10.1111/j.1467-9280.2007.02024.x

Furl, N., Rijsbergen, N. J. V., Treves, A., Friston, K. J., & Dolan, R. J. (2007). Experience-dependent coding of facial expression in superior temporal sulcus. Proceedings of the National Academy of Sciences of the United States of America, 104(33), 13485–13489. https://doi.org/10.1073/pnas.0702548104

Ganel, T., Goshen-Gottstein, Y., & Goodale, M. A. (2005). Interactions between the processing of gaze direction and facial expression. Vision Research, 45(9), 1191–1200. https://doi.org/10.1016/j.visres.2004.06.025

Garner, W. R. (1976). Interaction of stimulus dimensions in concept and choice processes. Cognitive Psychology, 8(1), 98–123. https://doi.org/10.1016/0010-0285(76)90006-2

Gosselin, P., & Simard, J. (1999). Children’s knowledge of facial expressions of emotions: Distinguishing fear and surprise. The Journal of Genetic Psychology, 160(2), 181–193. https://doi.org/10.1080/00221329909595391

Graham, R., & LaBar, K. S. (2007). Garner interference reveals dependencies between emotional expression and gaze in face perception. Emotion, 7(2), 296–313. https://doi.org/10.1037/1528-3542.7.2.296

Haxby, J. V., Hoffman, E. A., & Gobbini, M. I. (2000). The distributed human neural system for face perception. Trends in Cognitive Sciences, 4(6), 223–233. https://doi.org/10.1016/S1364-6613(00)01482-0

Haxby, J. V., Hoffman, E. A., & Gobbini, M. I. (2002). Human neural systems for face recognition and social communication. Biological Psychiatry, 51(1), 59–67. https://doi.org/10.1016/S0006-3223(01)01330-0

Henderson, J. M., Williams, C. C., & Falk, R. J. (2005). Eye movements are functional during face learning. Memory & Cognition, 33(1), 98–106. https://doi.org/10.3758/BF03195300

Herlitz, A., & Lovén, J. (2013). Sex differences and the own-gender bias in face recognition: A meta-analytic review. Visual Cognition, 24(1), 1306–1336. https://doi.org/10.1080/13506285.2013.823140

Hester, N. (2019). Perceived negative emotion in neutral faces: Gender-dependent effects on attractiveness and threat. Emotion, 19(8), 1490–1494. https://doi.org/10.1037/emo0000525

Hietanen, J. K., Leppänen, J. M., Peltola, M. J., Linna-Aho, K., & Ruuhiala, H. J. (2008). Seeing direct and averted gaze activates the approach-avoidance motivational brain systems. Neuropsychologia, 46(9), 2423–2430. https://doi.org/10.1016/j.neuropsychologia.2008.02.02

Hügelschäfer, S., Jaudas, A., & Achtziger, A. (2016). Detecting gender before you know it: How implementation intentions control early gender categorization. Brain Research, 1649, 9–22. https://doi.org/10.1016/j.brainres.2016.08.026

Itier, R. J., & Batty, M. (2009). Neural bases of eye and gaze processing: The core of social cognition. Neuroscience & Biobehavioral Reviews, 33(6), 843–863. https://doi.org/10.1016/j.neubiorev.2009.02.004

Karnadewi, F., & Lipp, O. V. (2011). The processing of invariant and variant face cues in the garner paradigm. Emotion, 11(3), 563–571. https://doi.org/10.1037/a0021333

Kreegipuu, K., Kuldkepp, N., Sibolt, O., Toom, M., Allik, J., & Näätänen, R. (2013). vMMN for schematic faces: Automatic detection of change in emotional expression. Frontiers in Human Neuroscience, 7, Article 714. https://doi.org/10.3389/fnhum.2013.00714

Lassalle, A., & Itier, R. J. (2013). Fearful, surprised, happy, and angry facial expressions modulate gaze-oriented attention: behavioral and ERP evidence. Social Neuroscience, 8(6), 583–600. https://doi.org/10.1080/17470919.2013.835750

Le Gal, P. M., & Bruce, V. (2002). Evaluating the independence of sex and expression in judgments of faces. Perception & Psychophysics, 64(2), 230–243. https://doi.org/10.3758/BF03195789

Li, Y., & Tse, C. (2016). Interference among the processing of facial emotion, face race, and face gender. Frontiers in Psychology, 7, Article 1700. https://doi.org/10.3389/fpsyg.2016.01700

Luo, Q., Holroyd, T., Majestic, C., Cheng, X., Schechter, J., & Blair, R. J. (2010). Emotional automaticity is a matter of timing. Journal of Neuroscience, 30(17), 5825–5829. https://doi.org/10.1523/JNEUROSCI.BC-5668-09.2010

Macrae, C. N., Hood, B. M., Milne, A. B., Rowe, A. C., & Mason, M. F. (2002). Are you looking at me? Eye gaze and person perception. Psychological Science, 13(5), 460–464. https://doi.org/10.1111/1467-9280.00481

McCrackin, S. D., & Itier, R. J. (2019). Perceived gaze direction differentially affects discrimination of facial emotion, attention, and gender—An ERP study. Frontiers in Neuroscience, 13, Article 517. https://doi.org/10.3389/fnins.2019.00517

Milders, M., Hietanen, J. K., Leppänen, J. M., & Braun, M. (2011). Detection of emotional faces is modulated by the direction of eye gaze. Emotion, 11(6), Article 1456. https://doi.org/10.1037/a0022901

Murphy, K., & Ward, Z. (2017). Repetition blindness for faces: A comparison of face identity, expression, and gender judgments. Advances in Cognitive Psychology, 13(3), 214–223. https://doi.org/10.5709/acp-0221-3

Murty, V. P., Labar, K. S., & Adcock, R. A. (2016). Distinct medial temporal networks encode surprise during motivation by reward versus punishment. Neurobiology of Learning and Memory, 134, 55–64. https://doi.org/10.1016/j.nlm.2016.01.018

Nomi, J. S., Frances, C., Nguyen, M. T., Bastidas, S., & Troup, L. J. (2013). Interaction of threat expressions and eye gaze: An event-related potential study. NeuroReport, 24(14), 813–817. https://doi.org/10.1097/WNR.0b013e3283647682

Olderbak, S., Wilhelm, O., Hildebrandt, A., & Quoidbach, J. (2019). Sex differences in facial emotion perception ability across the lifespan. Cognition and Emotion, 33(3), 579–588. https://doi.org/10.1080/02699931.2018.1454403

Reddy, L., Wilken, P., & Koch, C. (2004). Face-gender discrimination is possible in the near-absence of attention. Journal of Vision, 4(2), 4–4. https://doi.org/10.1167/4.2.4

Rigato, S., Menon, E., Farroni, T., & Johnson, M. H. (2013). The shared signal hypothesis: Effects of emotion-gaze congruency in infant and adult visual preferences. British Journal of Developmental Psychology, 31(1), 15–29. https://doi.org/10.1111/j.2044-835X.2011.02069.x

Sass, S. M., Heller, W., Stewart, J. L., Silton, R. L., Edgar, J. C., Fisher, J. E., & Miller, G. A. (2010). Time course of attentional bias in anxiety: Emotion and gender specificity. Psychophysiology, 47(2), 247–259. https://doi.org/10.1111/j.1469-8986.2009.00926.x

Schuch, S., Werheid, K., & Koch, I. (2012). Flexible and inflexible task sets: Asymmetric interference when switching between emotional expression, sex, and age classification of perceived faces. Quarterly Journal of Experimental Psychology, 65(5), 994–1005. https://doi.org/10.1080/17470218.2011.638721

Schweinberger, S. R., Kloth, N., & Jenkins, R. (2007). Are you looking at me? Neural correlates of gaze adaptation. NeuroReport, 18(7), 693–696. https://doi.org/10.1097/wnr.0b013e3280c1e2d2

Schyns, P. G., Bonnar, L., & Gosselin, F. (2002). Show me the features! Understanding recognition from the use of visual information. Psychological Science, 13(5), 402–409. https://doi.org/10.1111/1467-9280.00472

Vinette, C., Gosselin, F., & Schyns, P. G. (2004). Spatio-temporal dynamics of face recognition in a flash: It’s in the eyes. Cognitive Science, 28(2), 289–301. https://doi.org/10.1207/s15516709cog2802_8

Vuilleumier, P., George, N., Lister, V., Armony, J., & Driver, J. (2005). Effects of perceived mutual gaze and gender on face processing and recognition memory. Visual Cognition, 12(1), 85–101. https://doi.org/10.1080/13506280444000120

Wang, Y., Fu, X., Johnston, R. A., & Yan, Z. (2013). Discriminability effect on Garner interference: Evidence from recognition of facial identity and expression. Frontiers in Psychology, 4, Article 943. https://doi.org/10.3389/fpsyg.2013.00943

Wang, H., Ip, C., Fu, S., & Sun, P. (2017). Different underlying mechanisms for face emotion and gender processing during feature-selective attention: Evidence from event-related potential studies. Neuropsychologia, 99, 306–313. https://doi.org/10.1016/j.neuropsychologia.2017.03.017

Wang, P., Zhang, Q., & Zhang, K. (2018). Older adults show less interference from task-irrelevant social categories: evidence from the garner paradigm. Cognitive Processing, 19(3), 1–8. https://doi.org/10.1007/s10339-017-0843-4

Watson, D. (2009). Locating anger in the hierarchical structure of affect: Comment on Carver and Harmon-Jones (2009). Psychological Bulletin, 135(2), 205–208. https://doi.org/10.1037/a0014413

Yamaguchi, M. K., Hirukawa, T., & Kanazawa, S. (2013). Judgment of gender through facial parts. Perception, 42(11), 1253–1265. https://doi.org/10.1068/p240563n

Yankouskaya, A., Booth, D. A., & Humphreys, G. (2012). Interactions between facial emotion and identity in face processing: Evidence based on redundancy gains. Attention, Perception, & Psychophysics, 74(8), 1692–1711. https://doi.org/10.3758/s13414-012-0345-5

Zhang, X., Li, Q., Sun, S., & Zuo, B. (2018). The time course from gender categorization to gender-stereotype activation. Social Neuroscience, 13(1), 52–60. https://doi.org/10.1080/17470919.2016.1251965

Zhao, M., Liu, T., Chen, G., & Chen, F. (2015). Are scalar implicatures automatically processed and different for each individual? A mismatch negativity (MMN) study. Brain Research, 1599, 137–149. https://doi.org/10.1016/j.brainres.2014.11.049

Acknowledgements

This study is supported by the National Natural Science Foundation of China [Grant No. 32171042, 31700940] for Hailing Wang. The authors thank Jinqi Dong and Lianxian Liu for their hard work in collecting data. The authors would like to express their gratitude to EditSprings (https://www.editsprings.cn/) for the expert linguistic services provided.

Ethics declarations

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Additional information

Open practices statements

None of the data or materials for the experiment reported here is available online, but the data and materials can be provided upon request. The experiment was not preregistered.

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Chen, E., Xia, B., Lian, Y. et al. The role of discriminability in face perception: Interference processing of expression, gender, and gaze. Atten Percept Psychophys 84, 2281–2292 (2022). https://doi.org/10.3758/s13414-022-02561-9

DOI: https://doi.org/10.3758/s13414-022-02561-9