Abstract

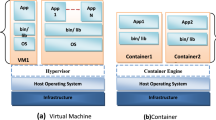

The fast-paced development of computational tools has enabled tremendous scientific progress in recent years. However, this rapid surge of technological capability also comes at a cost, as it increases the complexity of software environments and the potential for compatibility issues across systems. Advanced workflows in processing or analysis often require specific software versions and operating systems to run smoothly, and discrepancies across machines and researchers can impede reproducibility and efficient collaboration. As a result, scientific teams are increasingly relying on containers to implement robust, dependable research ecosystems. Originally popularized in software engineering, containers have become common in scientific projects, particularly in large collaborative efforts. In this Primer, we describe what containers are, how they work and the rationale for their use in scientific projects. We review state-of-the-art implementations in diverse contexts, with examples from various scientific fields. Finally, we discuss the possibilities enabled by the widespread adoption of containerization, especially in the context of open and reproducible research, and propose recommendations to facilitate seamless implementation across platforms and domains, including within high-performance computing clusters such as those typically available at universities and research institutes.


Acknowledgements

D.M. and K.W. are supported by a Marsden grant from the Royal Society of New Zealand and a University of Auckland Early Career Research Excellence Award awarded to D.M.

Author information

Contributions

Introduction (D.M., K.W. and C.B.); Experimentation (D.M., K.W. and C.B.); Results (D.M., K.W. and C.B.); Applications (D.M., K.W. and C.B.); Reproducibility and data deposition (D.M., K.W. and C.B.); Limitations and optimizations (D.M., K.W. and C.B.); Outlook (D.M., K.W. and C.B.).

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Reviews Methods Primers thanks Beth Cimini, Stephen Piccolo and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Related links

ACM Digital Library: https://dl.acm.org

Amazon Web Services: https://aws.amazon.com

Ansible: https://ansible.com

Astropy: https://astropy.org

ATAC-seq Pipeline: https://github.com/ENCODE-DCC/atac-seq-pipeline

BCI2000 project: https://bci2000.org/

Binder: https://mybinder.org/

Bioconductor: https://bioconductor.org

BioContainers: https://biocontainers.pro

Bismark: https://www.bioinformatics.babraham.ac.uk/projects/bismark/

Breakdancer: https://github.com/genome/breakdancer

CERN Container Registry: https://hub.docker.com/u/cern

Chef: https://chef.io

CodeOcean: http://codeocean.com

Containerd: https://containerd.io/

Docker Hub: https://hub.docker.com

EarthData: https://earthdata.nasa.gov

EcoData Retriever: https://ecodataretriever.org

Ecological Niche Modelling on Docker: https://github.com/ghuertaramos/ENMOD

Ecopath: https://ecopath.org/

EIGENSOFT: https://hsph.harvard.edu/alkes-price/software/eigensoft

Environmental Data Commons: https://edc.occ-data.org

Experiment Factory: https://expfactory.github.io

F1000Research guidelines: https://f1000research.com/for-authors/article-guidelines/software-tool-articles

fmriprep: https://fmriprep.org

FSL project: https://fsl.fmrib.ox.ac.uk/fsl/fslwiki

GATK: https://gatk.broadinstitute.org

gdb: https://github.com/haggaie/docker-gdb

GEANT4: https://geant4.web.cern.ch

GeoServer: https://geoserver.org

GitHub Actions: https://github.com/features/actions

GitHub Container Registry: https://github.com/features/packages

Google Cloud Platform: https://cloud.google.com

GRASS GIS: https://grass.osgeo.org

Jenkins: https://jenkins.io

liftr: https://liftr.me/

LIGO Open Science Centre: https://losc.ligo.org

LXC: https://linuxcontainers.org

Marble Station: https://github.com/marblestation/docker-astro

Mesos: https://mesos.apache.org

NEST: https://nest-simulator.org

NeuroDebian: https://neuro.debian.net

NEURON: https://neuron.yale.edu/neuron

OpenShift: https://openshift.com/

Planet Research Data Commons: https://ardc.edu.au/program/planet-research-data-commons

Podman: https://podman.io/

Puppet: https://puppet.com

QGIS: https://qgis.org

Quay: https://quay.io

Rocker project: https://rocker-project.org/

Rocket: https://github.com/rkt/rkt

Salmon: https://combine-lab.github.io/salmon

SciServer: https://sciserver.org

Singularity: https://sylabs.io/

STAR: https://github.com/alexdobin/STAR

strace: https://github.com/amrabed/strace-docker

SVTyper: https://github.com/hall-lab/svtyper

Glossary

- Clusters: Groups of machines that work together to run containerized applications.

- Compute resources: The resources required by a container to run, including central processing units, memory and storage.

- Containerization platform: A complete system for building, deploying and managing containerized applications, typically including a container runtime and additional tools and services for tasks such as container orchestration, networking, storage and security.

- Container runtime: The software responsible for running and managing containers on a host machine, involving tasks such as starting and stopping containers, allocating resources to them and providing an isolated environment for them to run in.

- Continuous Integration/Continuous Deployment (CI/CD): A software development practice that involves continuously integrating code changes into a shared repository and continuously deploying changes to a production environment.

- Dependencies: Software components that a particular application relies on to run properly, including libraries, tools and frameworks.

- Distributed-control model: A deployment model in which control is distributed among multiple independent nodes, rather than being centralized in a single control node.

- Docker engine: The containerization technology that Docker uses, consisting of the Docker daemon running on the computer and the Docker client that communicates with the daemon to execute commands.

- Dockerfiles: Scripts that contain instructions for building Docker images.

- Environment variables: Variables that are passed to a container at runtime, allowing the container to configure itself on the basis of their values.

- High-performance computing: The use of supercomputers and parallel processing techniques to solve complex computational problems that require a large amount of processing power, memory and storage capacity.

- Host operating system: The primary operating system running on the physical computer or server on which virtual machines or containers are created and managed.

- Image: A preconfigured package that contains all the necessary files and dependencies for running a piece of software in a container.

- Namespaces: Virtualization mechanisms for containers, which allow multiple containers to share the same system resources without interfering with each other.

- Networking: The process of connecting multiple containers together and to external networks, allowing communication between containers and the outside world.

- Orchestration: The process of automating the deployment, scaling and management of containerized applications in a cluster.

- Orchestration platform: A system for automating the deployment, scaling and management of containerized applications.

- Port mapping: The process of exposing the network ports of a container to the host machine, allowing communication between the container and the host or other networked systems.

- Production environment: The live, operational system in which software applications are deployed and used by end-users.

- Runtime environment: The specific set of software and hardware configurations that are present and available for an application to run on, including the operating system, libraries, system tools and other dependencies.

- Scaling: The process of increasing or decreasing the number of running instances of a containerized application to meet changing demand.

- Shared-control model: A deployment model in which a single central entity has control over multiple resources or nodes.

- Volumes: A storage mechanism for containers, which allows data to persist outside the file system of the container, including after a container has been deleted or replaced.
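
Three of the glossary terms (environment variables, port mapping and volumes) correspond to standard options of the `docker run` command: `-e`, `-p` and `-v`. As a minimal sketch, not taken from the article, the following Python helper assembles such a command without executing it. The function name `build_docker_run`, the image name, the variable name, the port and the paths are all hypothetical and chosen only for illustration.

```python
import shlex


def build_docker_run(image, env=None, ports=None, volumes=None):
    """Assemble a `docker run` argument list without executing it."""
    args = ["docker", "run", "--rm"]
    # Environment variables: passed to the container at runtime (-e KEY=VALUE).
    for key, value in sorted((env or {}).items()):
        args += ["-e", f"{key}={value}"]
    # Port mapping: publish a container port on the host (-p HOST:CONTAINER).
    for host_port, container_port in sorted((ports or {}).items()):
        args += ["-p", f"{host_port}:{container_port}"]
    # Volumes: keep data outside the container file system (-v SOURCE:TARGET).
    for source, target in sorted((volumes or {}).items()):
        args += ["-v", f"{source}:{target}"]
    args.append(image)
    return args


command = build_docker_run(
    "example/analysis:1.0",  # hypothetical image name
    env={"N_THREADS": "4"},  # hypothetical variable read by the analysis
    ports={8787: 8787},  # hypothetical port of a service in the container
    volumes={"/data/project": "/home/analysis/data"},  # hypothetical paths
)
print(shlex.join(command))
```

Building the argument list separately from running it keeps the sketch free of side effects. On a machine with Docker installed, the list could be passed to `subprocess.run`.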

About this article

Cite this article

Moreau, D., Wiebels, K. & Boettiger, C. Containers for computational reproducibility. Nat Rev Methods Primers 3, 50 (2023). https://doi.org/10.1038/s43586-023-00236-9
