Abstract

Mental workload refers to the cognitive effort required to perform tasks, and it is an important factor in various fields, including system design, clinical medicine, and industrial applications. In this paper, we propose a method for assessing mental workload from EEG data that uses effective brain connectivity for feature extraction, a hierarchical feature selection algorithm to select the most significant features, and machine learning models for classification. We used the Simultaneous Task EEG Workload (STEW) dataset, an open-access collection of raw EEG data from 48 subjects. We estimated effective brain connectivity with the direct directed transfer function and selected the top 30 connections for each standard frequency band. We then applied three feature selection algorithms (forward feature selection, Relief-F, and minimum-redundancy-maximum-relevance) to the resulting 150 features from all frequency bands. Finally, we evaluated four machine learning models (support vector machine (SVM), linear discriminant analysis, random forest, and decision tree) with sevenfold cross-validation. The SVM combined with forward feature selection outperformed the other combinations and classified the mental workload levels with an accuracy of 89.53% (± 1.36).

Introduction

Mental workload (MWL) is a concept that refers to how hard the brain is working to meet task demands1. It reflects the amount of cognitive resources required to perform a task and can be influenced by factors such as task complexity, time pressure, and environmental conditions. It is a complex, person-specific, dynamic, non-linear, and multidimensional construct2. Several theories have been suggested to define, explain, and measure MWL, but a single reliable and valid measurement framework has not yet been established. MWL can have adverse effects on work ability, so identifying and optimizing the factors that affect MWL and work ability is crucial3,4,5. The measurement of MWL is important from both a scientific and a human perspective. From a scientific perspective, quantifying MWL allows researchers to predict operator and system responses, optimize human–machine interactions, and determine the sources of error to enhance work performance in various industries, including medicine6. This is crucial for developing effective strategies to manage MWL and improve task performance. From a human perspective, understanding and managing MWL is essential for maintaining well-being and preventing the negative effects of excessive mental demands, such as stress, fatigue, and performance decrements. Therefore, the measurement of MWL plays a vital role in both scientific research and the improvement of human work conditions and performance2.

There are many methods for measuring MWL, including information processing studies, time-line analysis, operator activation level studies, subjective questionnaires, physiological measures, and modeling7. This abundance of methods can lead to inconsistent results, and there is currently no consensus on a single method suitable for all applications. Despite these challenges, the measurement of MWL remains a crucial aspect of scientific research and human factors. Subjective measures are the most commonly used, as they are low-cost, easy to administer, and have a high degree of face validity. A common approach is a questionnaire that asks subjects to rate the difficulty of the task6. Well-known indices include the National Aeronautics and Space Administration Task Load Index (NASA-TLX)8 and the Subjective Workload Assessment Technique (SWAT)9. However, subjective measures are prone to bias and may not accurately reflect the actual MWL10. Performance measures evaluate how well individuals perform a task and use this as an indirect indicator of their cognitive workload11.

Finally, psychophysiological measures assess MWL by analyzing physiological signals such as heart rate variability, electroencephalography (EEG), and functional near-infrared spectroscopy (fNIRS)12. Among these, EEG is extensively used because of its quick data acquisition, convenience, real-time assessment, lack of subject bias, portability, high temporal resolution, and non-invasiveness13,14. Traditionally, EEG-based machine learning methods have been used to classify MWL levels with algorithms such as support vector machine (SVM)15,16,17,18,19,20,21,22,23 and linear discriminant analysis (LDA)24,25,26,27. These methods involve feature extraction, feature selection, and classification of EEG signals. Recently, a framework for assessing MWL was proposed28 that uses the discrete wavelet transform (DWT) to decompose the EEG signal and extract the non-stationary features of task-wise EEG signals. Another study29 investigated the cognitive workload of fighter pilots during different flight phases using physiological signals such as ECG and EEG. The researchers employed classification algorithms, including LDA, SVM, and k-nearest neighbor (k-NN), to classify the pilots' cognitive workload. LDA and SVM, with an accuracy of 75%, were more consistent classifiers than k-NN, which achieved an accuracy of 60%. A further study investigated the impact of theta-to-alpha and alpha-to-theta band ratios on models for discriminating self-reported perceptions of MWL. Using the STEW dataset, it found that models trained with high-level features extracted from these ratios achieved high classification accuracy, which indicates the richness of the temporal, spectral, and statistical information in these EEG band ratios for distinguishing self-reported perceptions of MWL30.

Many studies have used various methods to assess MWL. However, there is still no single robust method that can assess MWL accurately. To address this issue, we explore a brain connectivity-based approach, which has shown promising results in various domains31,32,33. We use neural activity flow based on the direct directed transfer function (dDTF) to explore the regions and networks that distinguish between different levels of MWL. We also use neural activity flow as a feature in machine learning (ML) models, namely SVM, LDA, decision tree (DT), and random forest (RF), to classify MWL levels. Finally, we use feature selection to select features from all frequency bands and filter out irrelevant or redundant variables in order to improve model accuracy. This approach aims to enhance the understanding of the neuronal mechanisms underlying MWL. The main novelties and contributions of our study are (i) the use of dDTF as a measure of effective connectivity for extracting features from EEG data, (ii) a hierarchical feature selection method for selecting the most significant features, and (iii) a comparison of several ML models.

Material and methods

Participants and EEG recording

We utilized the Simultaneous Task EEG Workload (STEW) dataset, an open-access collection of raw EEG data from 48 male subjects who participated in a multitasking workload experiment utilizing the SIMKAP multitasking test. The SIMKAP multitasking assessment involves participants in identifying and marking identical items across two panels, all while answering auditory questions that vary in type, such as arithmetic, comparison, or data retrieval. Certain auditory tasks may necessitate delayed responses, prompting individuals to monitor a clock positioned in the upper right corner. This multitasking segment follows a predetermined sequence of questions34. By focusing solely on male participants, the dataset minimizes variability arising from gender-related physiological differences that could impact EEG data collection and analysis. This approach allows for a more controlled examination of mental workload patterns and EEG responses, particularly in multitasking scenarios like those assessed in the SIMKAP experiment. The experiment consisted of two stages:

1. Information was gathered for 2.5 min when the participants were not engaged in any activity, referred to as ‘low’ MWL.

2. The participants took the SIMKAP test while their brain activity was monitored, and the last 2.5 min were considered the ‘high’ MWL condition.

The EEG signals were recorded with the Emotiv EPOC EEG headset, which has a 16-bit A/D resolution and a 128 Hz sampling frequency. Fourteen channels (AF3, F7, F3, FC5, T7, P7, O1, O2, P8, T8, FC6, F4, F8, and AF4) were placed according to the international 10–20 system, with CMS and DRL serving as reference channels. The STEW dataset is valuable for studying multitasking workload and analyzing brain activity during different cognitive tasks. Researchers can use this dataset to develop and evaluate algorithms and models for MWL classification and prediction.

Preprocessing

We implemented the preprocessing pipeline recommended in the database-providing paper34. The pipeline involved:

1. High-pass filtering the raw data at 1 Hz to remove low-frequency noise, which can come from sources such as movement of the head and electrode wires, perspiration on the scalp, or slow drifts in the EEG signal over many seconds.

2. Removing line noise, which is caused by electrical equipment, such as power lines, that emits electromagnetic fields interfering with the EEG signal.

3. Performing Artifact Subspace Reconstruction (ASR) to automatically detect and remove unusual noise or artifacts from the EEG signals.

4. Re-referencing the data to the average, which transforms the data from a fixed or common reference to an 'average reference'. This is advocated by some researchers, especially when the electrode montage covers nearly the whole head.

The application of ASR was emphasized because of the presence of large-amplitude artifacts in the data. ASR is a method designed to remove non-stationary, large-amplitude artifacts35. The preprocessing was conducted using the EEGLAB toolbox in MATLAB (version 2019a).
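The filtering and re-referencing steps of the pipeline can be sketched in Python with SciPy, purely as an illustration (the study itself used EEGLAB). The filter order, the notch quality factor, and the 50 Hz line frequency are assumptions that are not stated in the text, and ASR is deliberately left out because it has no SciPy equivalent.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, iirnotch, filtfilt

FS = 128.0         # sampling rate of the Emotiv EPOC recordings (from the text)
LINE_FREQ = 50.0   # ASSUMPTION: mains frequency; use 60.0 where applicable


def preprocess(eeg, fs=FS, line_freq=LINE_FREQ):
    """eeg: array of shape (n_channels, n_samples). Returns a filtered copy."""
    # 1) Zero-phase 1 Hz high-pass (4th-order Butterworth is an assumption).
    sos = butter(4, 1.0, btype="highpass", fs=fs, output="sos")
    out = sosfiltfilt(sos, eeg, axis=1)
    # 2) Narrow notch at the assumed line frequency (Q = 30 is an assumption).
    b, a = iirnotch(line_freq, Q=30.0, fs=fs)
    out = filtfilt(b, a, out, axis=1)
    # 3) ASR is not reproduced here (an EEGLAB plugin in the original pipeline).
    # 4) Average reference: subtract the instantaneous mean across channels.
    return out - out.mean(axis=0, keepdims=True)


# Synthetic stand-in for one 2.5-min, 14-channel recording:
# white noise plus a DC offset and a line-noise sinusoid.
rng = np.random.default_rng(0)
t = np.arange(int(150 * FS)) / FS
raw = rng.standard_normal((14, t.size)) + 5.0 + 2.0 * np.sin(2 * np.pi * LINE_FREQ * t)
clean = preprocess(raw)
```

Zero-phase filtering (`sosfiltfilt`, `filtfilt`) is chosen here so that the filters do not shift the signal in time, which matters when directed connectivity is estimated afterwards.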

Effective connectivity

Effective connectivity refers to the directional or unequal dependencies between distinct brain regions36. The primary technique for assessing effective connectivity is Granger causality (GC), which can also be calculated in the frequency domain. To accomplish this, the parameters of a multivariate autoregressive (MVAR) model must be estimated for each EEG recording. Two crucial parameters for estimating the MVAR model from EEG signals are the window length and the model order. The window length is determined using the Variance-Ratio Test so that the EEG signal within each window remains stationary. The model order is chosen based on the Akaike Information Criterion (AIC), and the estimated model is then validated based on the whiteness of the residuals, the consistency percentage, and stability. For M channels of EEG data of length T, denoted \(X = \{x_1, x_2, \dots, x_T\}\) with each \(x_t\) an (M × 1) vector, the MVAR process of order p is represented as follows37:

\[x_t = v + \sum_{k=1}^{p} A_k\, x_{t-k} + u_t\]

where \(v\) is an (M × 1) vector of intercept terms, \(v = [v_1 \dots v_M]^{\prime}\), \(A_k\) are (M × M) matrices of model coefficients, and \(u_t\) is a white noise process with zero mean and a non-singular covariance matrix \(\Sigma\).
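As an illustration of how the intercept \(v\), the coefficient matrices \(A_k\), and the noise covariance \(\Sigma\) of an MVAR model of order p can be estimated, the following minimal sketch uses ordinary least squares on a simulated two-channel process. It is not the estimator used by SIFT; the function name `fit_mvar` and the toy coefficients are hypothetical.

```python
import numpy as np


def fit_mvar(x, p):
    """Least-squares fit of x_t = v + sum_k A_k x_{t-k} + u_t.

    x: array of shape (T, M). Returns v (M,), A (p, M, M), Sigma (M, M).
    """
    T, M = x.shape
    # Regressor rows: [1, x_{t-1}, ..., x_{t-p}] for t = p .. T-1.
    lags = [x[p - k:T - k] for k in range(1, p + 1)]
    Z = np.hstack([np.ones((T - p, 1))] + lags)
    Y = x[p:]
    B, *_ = np.linalg.lstsq(Z, Y, rcond=None)       # shape (1 + p*M, M)
    v = B[0]
    A = B[1:].reshape(p, M, M).transpose(0, 2, 1)   # A[k-1] multiplies x_{t-k}
    resid = Y - Z @ B
    Sigma = resid.T @ resid / (T - p - (1 + p * M))
    return v, A, Sigma


# Toy VAR(1): channel 0 drives channel 1, unit-variance white noise.
rng = np.random.default_rng(0)
A_true = np.array([[0.5, 0.0], [0.4, 0.3]])
x = np.zeros((5000, 2))
for t in range(1, len(x)):
    x[t] = A_true @ x[t - 1] + rng.standard_normal(2)

v_hat, A_hat, Sigma_hat = fit_mvar(x, p=1)
```

In practice the order p would be chosen by comparing an information criterion such as AIC across candidate orders, as described above.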

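Assuming a zero-mean process (\(v = 0\)) and rearranging terms results in:

\[u_t = \sum_{k=0}^{p} \widehat{A}_{k}\, x_{t-k}\]

where \({\widehat{A}}_{k}=-{A}_{k}\) for \(k = 1, \dots, p\) and \({\widehat{A}}_{0}=I\).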

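After applying the Fourier transform to both sides:

\[A(f)\,X(f) = U(f)\]

where, with \(f\) expressed as a fraction of the sampling frequency,

\[A(f) = \sum_{k=0}^{p} \widehat{A}_{k}\, e^{-i 2\pi f k}\]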

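Multiplying the frequency-domain relation \(A(f)X(f) = U(f)\) on the left by \(A(f)^{-1}\) and rearranging terms gives:

\[X(f) = A(f)^{-1}\,U(f) = H(f)\,U(f)\]

where \(X(f)\) is the spectral matrix of the multivariate process, \(U(f)\) is a matrix of random sinusoidal shocks, and \(H(f) = A(f)^{-1}\) is the (M × M) transfer matrix of the system. The spectral density matrix of the process is determined as follows:

\[S(f) = X(f)\,X(f)^{*} = H(f)\,\Sigma\,H(f)^{*}\]

where \(^{*}\) denotes the conjugate transpose.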

The matrices \(S(f)\), \(A(f)\), and \(H(f)\) are used to define various measures of effective connectivity. dDTF explicitly captures directional interactions between brain regions, distinguishing between driving and response regions. This directional information is valuable for understanding the flow of information within neural networks. dDTF also allows frequency-specific analysis of directed connectivity, providing insight into how different frequency bands contribute to information processing within the brain. This can be particularly useful in studying cognitive processes that are associated with specific frequency ranges. In addition, dDTF has proven effective in neuroscience investigations31,32,37,38.
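To make the roles of \(A(f)\), \(H(f)\), and \(S(f)\) concrete, the sketch below computes them from given MVAR coefficients with NumPy. It assumes the sign convention \(A(f) = I - \sum_{k} A_k e^{-i 2\pi f k / f_s}\) with frequencies in Hz and sampling rate \(f_s\); the function name `spectral_matrices` and the toy model are hypothetical.

```python
import numpy as np


def spectral_matrices(A, Sigma, freqs, fs):
    """A: (p, M, M) MVAR coefficients. Returns A(f), H(f), S(f), each (F, M, M)."""
    p, M, _ = A.shape
    k = np.arange(1, p + 1)
    # A(f) = I - sum_k A_k exp(-i 2 pi f k / fs)
    phase = np.exp(-2j * np.pi * np.outer(freqs, k) / fs)   # shape (F, p)
    Af = np.eye(M) - np.einsum("fk,kij->fij", phase, A)
    Hf = np.linalg.inv(Af)                                  # transfer matrix
    Sf = Hf @ Sigma @ np.conj(np.swapaxes(Hf, 1, 2))        # H Sigma H^*
    return Af, Hf, Sf


# Toy VAR(1) in which channel 0 drives channel 1 but not the reverse.
A = np.array([[[0.5, 0.0], [0.4, 0.3]]])
freqs = np.arange(1.0, 51.0)
Af, Hf, Sf = spectral_matrices(A, np.eye(2), freqs, fs=128.0)
```

In this toy model the entry of \(H(f)\) from channel 1 to channel 0 is zero at every frequency, which is the property that directed measures built on \(H(f)\) exploit.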

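The dDTF from channel j to channel i at frequency f is the product of the full-frequency DTF \(\eta_{ij}\) and the partial coherence \(P_{ij}\), the latter computed from the inverse spectral matrix \(\widehat{S}(f) = S(f)^{-1}\):

\[\delta_{ij}^{2}(f) = \eta_{ij}^{2}(f)\,P_{ij}^{2}(f), \qquad \eta_{ij}^{2}(f) = \frac{|H_{ij}(f)|^{2}}{\sum_{f}\sum_{k=1}^{M} |H_{ik}(f)|^{2}}, \qquad P_{ij}^{2}(f) = \frac{|\widehat{S}_{ij}(f)|^{2}}{\widehat{S}_{ii}(f)\,\widehat{S}_{jj}(f)}\]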

where \(H(f)\) is the transfer matrix of the system at a specific frequency and \(S(f)\) is the spectral density matrix. We extract five frequency bands for the dDTF measure by averaging it over the following frequency ranges: delta (2–4 Hz), theta (4–8 Hz), alpha (8–13 Hz), beta (13–32 Hz), and gamma (32–50 Hz). All steps of the dDTF computation were performed in MATLAB with the Source Information Flow Toolbox (SIFT), version 0.1a36.
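The following sketch shows one way to compute a dDTF-style measure and its band averages from \(H(f)\) and \(S(f)\). It assumes the common definition of dDTF as the full-frequency DTF multiplied by the squared partial coherence, and it treats each band as a half-open interval in Hz; both are assumptions, and SIFT's implementation may differ in normalization details. The names `ddtf` and `band_average` are hypothetical.

```python
import numpy as np

BANDS = {"delta": (2, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 32), "gamma": (32, 50)}


def ddtf(Hf, Sf):
    """Squared dDTF, shape (F, M, M); entry [f, i, j] is flow from j to i."""
    H2 = np.abs(Hf) ** 2
    # Full-frequency DTF: normalise inflow to channel i over sources and freqs.
    eta2 = H2 / H2.sum(axis=(0, 2))[None, :, None]
    # Squared partial coherence from the inverse spectral matrix.
    Sinv = np.linalg.inv(Sf)
    d = np.real(np.einsum("fii->fi", Sinv))
    pcoh2 = np.abs(Sinv) ** 2 / (d[:, :, None] * d[:, None, :])
    return eta2 * pcoh2


def band_average(values, freqs, bands=BANDS):
    """Average a (F, M, M) measure over each band [lo, hi) in Hz."""
    return {name: values[(freqs >= lo) & (freqs < hi)].mean(axis=0)
            for name, (lo, hi) in bands.items()}


# Toy VAR(1) with unit noise covariance: channel 0 drives channel 1.
fs = 128.0
freqs = np.arange(2.0, 50.0, 1.0)
A1 = np.array([[0.5, 0.0], [0.4, 0.3]])
z = np.exp(-2j * np.pi * freqs / fs)
Hf = np.linalg.inv(np.eye(2) - z[:, None, None] * A1)
Sf = Hf @ np.conj(np.swapaxes(Hf, 1, 2))
bands = band_average(ddtf(Hf, Sf), freqs)
```

For the toy model, every band matrix has a zero entry for the flow from channel 1 to channel 0 and a positive entry for the flow from channel 0 to channel 1, which matches the simulated direction of influence.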

Feature Selection

Feature selection plays a crucial role in the interpretability of machine learning models: restricting a model to a small set of informative features makes it easier to see what drives its predictions. In MWL assessment from EEG data, it also clarifies which brain regions and connections carry workload-related information, and removing less important features can improve classification performance.

To distinguish between the high-MWL and low-MWL groups, we proceeded as follows. First, one-seventh of the data was set aside for testing. Next, the area under the receiver operating characteristic curve (AUC) of each neural activity flow in every band was calculated using LDA. The AUC was taken as the mean over all cross-validation sets, so that it serves as a valid measure of performance in a generalized setting. The 30 connections with the highest AUC were then selected in each frequency band, giving 150 features across the five bands, and the feature selection algorithms were applied to this set. We used three popular algorithms: forward feature selection, minimum-redundancy-maximum-relevance (mRMR), and Relief-F.

Forward feature selection is a stepwise method that starts with an empty feature set and iteratively adds one feature at a time based on classifier performance. The process begins by evaluating the individual predictive power of each feature and selecting the best one. Additional features are then added sequentially, each chosen to maximize the improvement in model performance39. The mRMR algorithm selects features that are highly relevant to the class label while being minimally redundant with the features already selected40,41. Relief-F is a supervised filter method that weights each feature by how well it separates neighboring samples of different classes: for each sampled instance, the weight of a feature increases when its value differs from that of the nearest neighbors of other classes and decreases when it differs from that of the nearest neighbors of the same class. The features with the highest weights are selected as the most relevant42,43.
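Forward feature selection wrapped around an SVM can be sketched with scikit-learn's `SequentialFeatureSelector`. The synthetic data, the linear kernel, and the fixed target of two features are assumptions made only to keep the example small; the text does not state the stopping rule that led to the final feature count.

```python
import numpy as np
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for connectivity features: 12 features, 2 informative.
rng = np.random.default_rng(0)
n, d = 140, 12
y = np.repeat([0, 1], n // 2)
X = rng.standard_normal((n, d))
X[:, 3] += 2.0 * y   # informative feature
X[:, 7] -= 2.0 * y   # informative feature

# Greedy forward search: at each step, add the feature that gives the best
# sevenfold cross-validated accuracy of the SVM.
svm = make_pipeline(StandardScaler(), SVC(kernel="linear"))
selector = SequentialFeatureSelector(
    svm, n_features_to_select=2, direction="forward", cv=7
)
selector.fit(X, y)
selected = np.flatnonzero(selector.get_support())
print("selected feature indices:", selected)
```

Because the classifier is re-trained for every candidate feature at every step, this wrapper approach is considerably more expensive than filter methods such as mRMR or Relief-F, which score features without fitting the final model.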

Classification

In this paper, we used four classifiers: SVM, LDA, decision tree (DT), and random forest (RF). SVM is a supervised machine learning model used for classification and regression tasks. It works by finding the hyperplane that separates the data points with the largest margin. SVM can handle both linear and nonlinear input spaces and can be more accurate than other algorithms in certain cases44. LDA is a supervised method used for classification, dimensionality reduction, and data visualization. It is a probabilistic model that finds the linear combination of input features that maximizes the separation between classes. LDA is commonly used in various applications, such as sentiment analysis and spam detection45. A decision tree is a supervised learning algorithm that builds models for classification and regression tasks. It uses a tree-like structure in which each internal node represents a decision based on an attribute, leading to leaf nodes that represent outcomes. Decision trees are interpretable and widely used because of their simplicity and effectiveness in predicting values from input features46. RF is an ensemble learning method used for both classification and regression tasks. It constructs multiple decision trees and combines their predictions to improve overall accuracy. RF is known for its simplicity, scalability, and performance in various applications47.
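A minimal scikit-learn sketch of comparing the four classifiers under sevenfold cross-validation is given below. The synthetic data and every hyperparameter (RBF kernel, 100 trees, fixed random seeds, shuffled stratified folds) are assumptions, since the text does not report the settings used in the study.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Synthetic two-class data: 20 features, the first 4 carry class information.
rng = np.random.default_rng(1)
y = np.repeat([0, 1], 105)
X = rng.standard_normal((210, 20))
X[:, :4] += 1.0 * y[:, None]

models = {
    "SVM": make_pipeline(StandardScaler(), SVC(kernel="rbf")),
    "LDA": LinearDiscriminantAnalysis(),
    "DT": DecisionTreeClassifier(random_state=0),
    "RF": RandomForestClassifier(n_estimators=100, random_state=0),
}

# Sevenfold stratified cross-validation, reporting mean and spread of accuracy.
cv = StratifiedKFold(n_splits=7, shuffle=True, random_state=0)
scores = {name: cross_val_score(model, X, y, cv=cv, scoring="accuracy")
          for name, model in models.items()}
for name, s in scores.items():
    print(f"{name}: {s.mean():.3f} +/- {s.std():.3f}")
```

Placing the scaler inside the pipeline ensures that it is fitted on the training folds only, so no information from the held-out fold leaks into the SVM.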

Statistical analysis

We used k-fold cross-validation, a resampling method commonly used in machine learning to estimate how well a model generalizes, which is particularly useful when data are limited. The data were partitioned into k equally sized segments, followed by k iterations of training and validation. In each iteration, one of the k segments was held out as the test set while the model was trained on the remaining data; performance was then averaged over the k iterations. After a trial-and-error analysis, k = 7 was found to be the most suitable value. Further analysis was based on the results of the sevenfold cross-validation. The flowchart of the proposed method is provided in Fig. 1.

In addition, we used the AUC to select the most important connections. The AUC, commonly used to evaluate binary classification models, does not rely on assumptions about the distribution of the underlying data. Instead, it assesses the ability of a classifier to distinguish between positive and negative instances across all possible decision thresholds. Its estimate does, however, presuppose that the observations used to evaluate the classifier are independent of each other; violations such as autocorrelation or clustering of observations can bias it. The AUC is also designed for binary classification tasks with two distinct classes (here, low-MWL and high-MWL) and is not directly applicable to multi-class problems without appropriate modification.
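The idea of ranking individual connections by AUC can be illustrated with a rank-based AUC computed directly on each feature. This is a simplification: the study derived the AUC from a cross-validated LDA, whereas the sketch below uses the Mann-Whitney statistic of the raw feature and takes max(AUC, 1 − AUC) so that the direction of the effect does not matter. The function names and the synthetic data are hypothetical.

```python
import numpy as np


def auc_score(scores, labels):
    """AUC via the Mann-Whitney rank statistic (assumes no tied scores)."""
    order = np.argsort(scores)
    ranks = np.empty(len(scores))
    ranks[order] = np.arange(1, len(scores) + 1)
    n_pos = int(labels.sum())
    n_neg = len(labels) - n_pos
    u = ranks[labels == 1].sum() - n_pos * (n_pos + 1) / 2
    return u / (n_pos * n_neg)


def top_k_by_auc(X, y, k):
    """Return indices of the k most separable features and all AUC values."""
    aucs = np.array([auc_score(X[:, j], y) for j in range(X.shape[1])])
    aucs = np.maximum(aucs, 1.0 - aucs)   # direction-agnostic separability
    return np.argsort(aucs)[::-1][:k], aucs


# Small hand-checkable case: 3 of the 4 positive/negative pairs are ordered
# correctly, so the AUC is 0.75.
example_auc = auc_score(np.array([0.1, 0.4, 0.35, 0.8]), np.array([0, 0, 1, 1]))

# Synthetic features: 37 windows per condition, 10 candidate connections.
rng = np.random.default_rng(0)
y = np.repeat([0, 1], 37)
X = rng.standard_normal((74, 10))
X[:, 2] += 2.0 * y
X[:, 5] -= 2.0 * y
top2, aucs = top_k_by_auc(X, y, k=2)
```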

The flowchart of the proposed method. First, the raw EEG data were preprocessed, and the effective connectivity was calculated with the dDTF index in 5 frequency bands. Next, the top 30 features in each frequency band were selected based on AUC, and three feature selection algorithms were applied to them. Finally, the features selected by each feature selection algorithm were classified.

Results

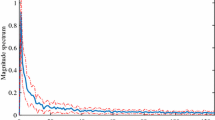

The EEG data from each subject’s 14 channels were pre-processed using the EEGLAB toolbox in MATLAB (version 2019a). A sample of data before and after the preprocessing pipeline is provided in Fig. 2. Subsequently, we computed the effective connectivity of all EEG data using dDTF. The dDTF connectivities were derived from sequential 6-s segments of the 14-channel data for each subject in 5 frequency bands: a 6-s window was slid along the EEG signals with a step size of 4 s. With 150 s of EEG signal, this yields (150 − 6)/4 + 1 = 37 dDTF matrices per EEG recording. Figure 3 shows sample dDTF matrices extracted from the ‘high’ and ‘low’ MWL recordings of subject 16 for each frequency band; the horizontal and vertical axes represent channels.

The AUC value of each directed connection was then computed from its dDTF values. These AUC values were ranked independently, and the top 30 connections were identified according to their AUC values (Table 1, Fig. 4). In addition, Table 3 provides the number of connections within each region; according to this table, the frontal lobe has the highest number of connections.

The classification results and computational efficiency of the four machine learning models (SVM, LDA, RF, and DT) for each frequency band and for the combination of the top 30 AUC-based features from all bands (top 150) are shown in Table 4. The SVM and DT models showed the best and weakest performance, respectively. The top 150 features gave the highest accuracy, specificity, sensitivity, and F1-measure in all models. The RF model was the most time-consuming of the four models, while the LDA model was the least time-consuming.

We applied a hierarchical feature selection: after selecting the top 150 features based on AUC, we applied three feature selection algorithms in parallel, namely Relief-F, forward feature selection, and mRMR. According to Table 5, forward feature selection gave the best results, and the SVM model achieved 89.53% accuracy with the 41 features selected by this algorithm. These 41 features are listed in Table 6.
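The number of windows per recording follows directly from the segment length, window size, and step. A short sketch of the segmentation, using the 128 Hz sampling rate stated for the headset (the helper name `window_bounds` is hypothetical):

```python
FS = 128  # sampling rate in Hz


def window_bounds(n_samples, fs=FS, win_s=6, step_s=4):
    """Return (start, stop) sample indices of each sliding window."""
    win, step = win_s * fs, step_s * fs
    return [(start, start + win)
            for start in range(0, n_samples - win + 1, step)]


bounds = window_bounds(150 * FS)   # 150 s of signal per condition
print(len(bounds))                 # 37
print(bounds[0], bounds[-1])       # (0, 768) (18432, 19200)
```

Consecutive windows overlap by 2 s, so neighboring dDTF matrices share one third of their samples.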

The top 30 neural activity patterns, selected by AUC, that differ in propagation between the high MWL and low MWL groups. Nodes represent electrodes in the 10–20 system, edges indicate connections between channels, and the color of each edge represents its AUC value.

Discussion

In this study, we investigated a new method for classifying low and high MWL from 14-channel EEG data of 48 participants. In the proposed model, we extracted features from the EEG signals using effective brain connectivity, which gave 37 matrices of dimension 14 × 14 for each EEG recording. To extract the most significant connectivities, in the first step we calculated the AUC of all connectivities and selected the 30 connectivities with the highest AUC in each band, which provided 150 features across the 5 frequency bands. According to Tables 2 and 3, the connectivities that best distinguish the high MWL and low MWL classes are from the frontal lobe. This result is in line with the findings of previous studies48,49,50. Table 4 compares all features in each frequency band with the top 150 selected features; the best accuracy achieved with the top 150 features, obtained with SVM, was 88.96%. In the next step of the feature selection, we applied three feature selection methods (forward feature selection, Relief-F, and mRMR) to the top 150 features. Finally, we used four machine learning algorithms (SVM, LDA, DT, and RF) with sevenfold cross-validation to classify the data. Cross-validation provided a more reliable estimate of out-of-sample accuracy, helped guard against overfitting, and allowed more efficient use of the data. After this second layer of feature selection, the number of selected features decreased. Table 5 compares the accuracy obtained with each algorithm and indicates that forward feature selection was the most successful of the three. It selected the 41 most significant features, listed in Table 6, which achieved an accuracy of 89.53% with SVM, higher than the accuracy of the top 150 features selected by AUC.

The proposed framework for classifying MWL from EEG data achieved high accuracy, placing it in the top range among studies that used machine learning methods to classify MWL into two classes (Table 7). By combining effective brain connectivity analysis through dDTF with hierarchical feature selection and several machine learning models, and by identifying and visualizing the brain regions most important for MWL assessment, this work contributes to more precise, robust, and interpretable models for MWL assessment. The study has some limitations, especially regarding the dataset. STEW is a well-known dataset in this field, but it has a small number of participants, all of whom are male, which may limit the generalizability of the findings to the broader population. Future studies could benefit from a more diverse and representative sample that includes participants of different genders and demographics, which would improve the robustness and applicability of the findings. In addition, the current study used only four machine learning algorithms; future work can explore a wider range of algorithms, including advanced deep learning models, which may provide a more comprehensive analysis and uncover additional insights from the data.

Conclusion

In this paper, we proposed a framework for classifying MWL into two classes. For this purpose, we used effective brain connectivity for feature extraction and applied four machine learning algorithms: SVM, LDA, RF, and DT. In addition, we investigated a hierarchical feature selection method. In the first step, we extracted the top 30 features based on AUC in each frequency band, and we then applied three feature selection algorithms: forward feature selection, Relief-F, and mRMR. The results suggest that machine learning algorithms, especially SVM, combined with the proposed feature extraction and hierarchical feature selection can classify MWL levels from EEG data with high accuracy (89.53%). In future investigations, deep learning models can be used to construct a robust framework for evaluating MWL from effective brain connectivity images.

Data availability

The data used in this study is the Simultaneous Task EEG Workload (STEW) dataset, an open-access collection of raw EEG data from 48 male subjects who participated in a multitasking workload experiment utilizing the SIMKAP multitasking test34. The raw dataset is available for download via: https://ieee-dataport.org/open-access/stew-simultaneous-task-eeg-workload-dataset. The data are available to qualified investigators for purposes of scientific research.

References

Mingardi, M., Pluchino, P., Bacchin, D., Rossato, C. & Gamberini, L. Assessment of implicit and explicit measures of mental workload in working situations: Implications for Industry 4.0. Appl. Sci. 10(18), 6416. https://doi.org/10.3390/app10186416 (2020).

Longo, L., Wickens, C. D., Hancock, G., & Hancock, P. A. Corrigendum: Human mental workload: A survey and a novel inclusive definition. Front. Psychol. 13, 969140. https://doi.org/10.3389/fpsyg.2022.969140 (2022).

Marchand, C., De Graaf, J. B. & Jarrassé, N. Measuring mental workload in assistive wearable devices: A review. J. Neuroeng. Rehabil. 18, 160. https://doi.org/10.1186/s12984-021-00953-w (2021).

Ghanavati, F., Choobineh, A., Keshavarzi, S., Nasihatkon, A. & Jafari Roodbandi, A. S. Assessment of mental workload and its association with work ability in control room operators. La Medicina del Lavoro 110, 389–397. https://doi.org/10.23749/mdl.v110i5.8115 (2019).

Soria-Oliver, M., Lopez, S. & Torrano, F. Relations between mental workload and decision-making in an organizational setting. Psicologia: Reflexão e Crítica 30(14), 23. https://doi.org/10.1186/s41155-017-0061-0 (2017).

Byrne, A. Measurement of mental workload in clinical medicine: A review study. Anesth. Pain Med. 1(2), 90–94. https://doi.org/10.5812/kowsar.22287523.2045 (2011).

Meshkati, N., Hancock, P. A., Rahimi, M. & Dawes, S. Techniques in mental workload assessment. Evaluation of Human Work: A Practical Ergonomics Methodology (1995).

Hart, S. G. & Staveland, L. E. Development of NASA-TLX (Task Load Index): Results of empirical and theoretical research. Adv. Psychol. 52, 139–183 (1988).

Reid, G. B. & Nygren, T. E. The subjective workload assessment technique: A scaling procedure for measuring mental workload. Adv. Psychol. 52, 185–218 (1988).

Schnotz, W. & Kürschner, C. A reconsideration of cognitive load theory. Educ. Psychol. Rev. 19(4), 469–508 (2007).

Sevcenko, N., Ninaus, M., Wortha, F., Moeller, K. & Gerjets, P. Measuring cognitive load using in-game metrics of a serious simulation game. Front. Psychol. 12, 572437. https://doi.org/10.3389/fpsyg.2021.572437 (2021).

Chen, S., Epps, J., & Chen, F. A comparison of four methods for cognitive load measurement, in Proceedings of the 23rd Australian Computer-Human Interaction Conference (OzCHI '11) 76–79 (Association for Computing Machinery, 2011). https://doi.org/10.1145/2071536.2071547.

Zhu, G., Zong, F., Zhang, H., Wei, B. & Liu, F. Cognitive load during multitasking can be accurately assessed based on single channel electroencephalography using graph methods. IEEE Access 9, 33102–33109. https://doi.org/10.1109/ACCESS.2021.3058271 (2021).

Zhou, Y. et al. Cognitive workload recognition using EEG signals and machine learning: A review. IEEE Trans. Cogn. Dev. Syst. https://doi.org/10.1109/TCDS.2021.3090217 (2021).

Sciaraffa, N. et al. On the use of machine learning for EEG-based workload assessment: Algorithms comparison in a realistic task. In Human Mental Workload: Models and Applications 170–185 (Springer, 2019). https://doi.org/10.1007/978-3-030-32423-0_11.

Dimitrakopoulos, G. et al. Task-independent mental workload classification based upon common multiband EEG cortical connectivity. IEEE Trans. Neural Syst. Rehabil. Eng. https://doi.org/10.1109/TNSRE.2017.2701002 (2017).

Mazher, M., Aziz, A. A., Malik, A. S. & Amin, H. U. An EEG-based cognitive load assessment in multimedia learning using feature extraction and partial directed coherence. IEEE Access 5, 14819–14829 (2017).

Almogbel, M. A., Dang, A. H. & Kameyama, W. Cognitive workload detection from raw EEG-signals of vehicle driver using deep learning, in International Conference on Advanced Communication Technology (ICACT) 1–6 (2018).

Yu, K., Prasad, I., Mir, H., Thakor, N. & Al-Nashash, H. Cognitive workload modulation through degraded visual stimuli: A single-trial EEG study. J. Neural Eng. 12(4), 046020 (2015).

Zarjam, P., Epps, J. & Chen, F. Characterizing working memory load using EEG delta activity, in Proceedings of the 19th European Signal Processing Conference (EUSIPCO) 1554–1558 (2011).

Walter, C., Schmidt, S., Rosenstiel, W., Gerjets, P. & Bogdan, M. Using cross-task classification for classifying workload levels in complex learning tasks, in 2013 Humaine Association Conference on Affective Computing and Intelligent Interaction 876–881 (2013).

Zarjam, P., Epps, J. & Chen, F. Spectral EEG features for evaluating cognitive load, in International Conference of the IEEE Engineering in Medicine and Biology Society (EMBS), Boston, Massachusetts USA 3841–3844 (2011).

So, W. K. Y., Wong, S. W. H., Mak, J. N., Chan, R. H. M. & Emmanuel, M. An evaluation of mental workload with frontal EEG. PLoS ONE 12(4), e0174949 (2017).

Dehais, F. et al. Monitoring pilot’s mental workload using ERPs and spectral power with a six-dry-electrode EEG system in real flight conditions. Sensors 19(6), 1324. https://doi.org/10.3390/s19061324 (2019).

Aricò, P., Borghini, G., Flumeri, G. D., Colosimo, A. & Babiloni, F. A passive brain-computer interface application for the mental workload assessment on professional air traffic controllers during realistic air traffic control tasks. Prog. Brain Res. 228, 295–328 (2016).

Roy, R. N., Charbonnier, S., Campagne, A. & Bonnet, S. Efficient mental workload estimation using task-independent EEG features. J. Neural Eng. 13(2), 026019 (2016).

Kakkos, I. et al. Mental workload drives different reorganizations of functional cortical connectivity between 2D and 3D simulated flight experiments. IEEE Trans. Neural Syst. Rehabil. Eng. 27(9), 1704–1713 (2019).

Khanam, F., Hossain, A. A. & Ahmad, M. Electroencephalogram-based cognitive load level classification using wavelet decomposition and support vector machine. Brain-Comput. Interfaces 10(1), 1–15. https://doi.org/10.1080/2326263X.2022.2109855 (2023).

Mohanavelu, K., Srinivasan, P., Arivudaiyanambi, J. & Vinutha, S. Machine learning-based approach for identifying mental workload of pilots. Biomed. Signal Process. Control 75, 103623. https://doi.org/10.1016/j.bspc.2022.103623 (2022).

Raufi, B. & Longo, L. An evaluation of the EEG alpha-to-theta and theta-to-alpha band ratios as indexes of mental workload. Front. Neuroinform. 16, 861967. https://doi.org/10.3389/fninf.2022.861967 (2022).

Bagherzadeh, S., Maghooli, K., Shalbaf, A. & Maghsoudi, A. Emotion recognition using effective connectivity and pre-trained convolutional neural networks in EEG signals. Cogn. Neurodyn. 16, 1–20. https://doi.org/10.1007/s11571-021-09756-0 (2022).

Saeedi, A., Saeedi, M., Maghsoudi, A. & Shalbaf, A. Major depressive disorder diagnosis based on effective connectivity in EEG signals: A convolutional neural network and long short-term memory approach. Cogn. Neurodyn. 15, 239 (2021).

Nobakhsh, B. et al. An effective brain connectivity technique to predict repetitive transcranial magnetic stimulation outcome for major depressive disorder patients using EEG signals. Phys. Eng. Sci. Med. https://doi.org/10.1007/s13246-022-01198-0 (2022).

Lim, W., Sourina, O. & Wang, L. STEW: Simultaneous task EEG workload dataset. IEEE Trans. Neural Syst. Rehabil. Eng. 26(10), 2106–2114. https://doi.org/10.1109/TNSRE.2018.2872924 (2018).

Mullen, T. R. et al. Real-time neuroimaging and cognitive monitoring using wearable dry EEG. IEEE Trans. Biomed. Eng. 62, 2553–2567 (2015).

Mullen, T. Source Information Flow Toolbox (SIFT). Swartz Center for Computational Neuroscience, California, San Diego (2010).

Korzeniewska, A., Mańczak, M., Kamiński, M., Blinowska, K. J. & Kasicki, S. Determination of information flow direction among brain structures by a modified directed transfer function (dDTF) method. J. Neurosci. Methods 125(1–2), 195–207 (2003).

Maghsoudi, A. & Shalbaf, A. Hand motor imagery classification using effective connectivity and hierarchical machine learning in EEG signals. J. Biomed. Phys. Eng. 12(2), 161–170. https://doi.org/10.31661/jbpe.v0i0.1264 (2020).

Han, M. & Liu, X. Forward feature selection based on approximate Markov blanket. In Advances in Neural Networks—ISNN 2012. Lecture Notes in Computer Science Vol. 7368 (eds Wang, J. et al.) (Springer, Berlin, Heidelberg, 2012). https://doi.org/10.1007/978-3-642-31362-2_8.

Şen, B., Peker, M., Çavuşoğlu, A. & Çelebi, F. V. A comparative study on classification of sleep stage based on EEG signals using feature selection and classification algorithms. J. Med. Syst. 38(3), 1–21. https://doi.org/10.1007/s10916-014-0018-0 (2014).

Ding, C. & Peng, H. Minimum redundancy feature selection from microarray gene expression data. J. Bioinform. Comput. Biol. 3(2), 185–205 (2005).

Peker, M., Arslan, A., Şen, B., Çelebi, F. V. & But, A. A novel hybrid method for determining the depth of anesthesia level: Combining ReliefF feature selection and random forest algorithm (ReliefF+RF), in INISTA 2015 - 2015 International Symposium on Innovations in Intelligent SysTems and Applications, Proceedings (2015).

Al-Nafjan, A. Feature selection of EEG signals in neuromarketing. PeerJ Comput. Sci. 8, e944 (2022).

Meyer, D., Leisch, F. & Hornik, K. The support vector machine under test. Neurocomputing 55(1–2), 169–186. https://doi.org/10.1016/S0925-2312(03)00431-4 (2003).

Tharwat, A., Gaber, T., Ibrahim, A. & Hassanien, A. E. Linear discriminant analysis: A detailed tutorial. AI Commun. 30(2), 169–190. https://doi.org/10.3233/AIC-170729 (2017).

Rokach, L. & Maimon, O. Decision Trees (Springer, 2005). https://doi.org/10.1007/0-387-25465-X_9.

Breiman, L. Random forests. Mach. Learn. 45(1), 5–32. https://doi.org/10.1023/A:1010950718922 (2001).

Dehais, F., Lafont, A., Roy, R. & Fairclough, S. A neuroergonomics approach to mental workload, engagement and human performance. Front. Neurosci. https://doi.org/10.3389/fnins.2020.00268 (2020).

Causse, M., Chua, Z., Peysakhovich, V., Del Campo, N. & Matton, N. Mental workload and neural efficiency quantified in the prefrontal cortex using fNIRS. Sci. Rep. https://doi.org/10.1038/s41598-017-05378-x (2017).

Causse, M. et al. Facing successfully high mental workload and stressors: An fMRI study. Hum. Brain Mapp. 43(3), 1011–1031. https://doi.org/10.1002/hbm.25703 (2022).

Pandey, V., Choudhary, D. K., Verma, V., Sharma, G., Singh, R. & Chandra, S. Mental workload estimation using EEG, in 2020 Fifth International Conference on Research in Computational Intelligence and Communication Networks (ICRCICN) 83–86 (IEEE, 2020). https://doi.org/10.1109/ICRCICN50933.2020.9296150.

Chakladar, D., Dey, S., Roy, P. P. & Dogra, D. P. EEG-based mental workload estimation using deep BLSTM-LSTM network and evolutionary algorithm. Biomed. Signal Process. Control 60, 101989. https://doi.org/10.1016/j.bspc.2020.101989 (2020).

Acknowledgements

This research was financially supported by Shahid Beheshti University of Medical Sciences (Grant No. 43004564).

Author information

Contributions

MohammadReza Safari: conceptualization, methodology, software, validation. Sara Bagherzadeh: software, formal analysis, review and editing. Ahmad Shalbaf and Reza Shalbaf: conceptualization, methodology, writing—review and editing, supervision, project administration. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Safari, M., Shalbaf, R., Bagherzadeh, S. et al. Classification of mental workload using brain connectivity and machine learning on electroencephalogram data. Sci Rep 14, 9153 (2024). https://doi.org/10.1038/s41598-024-59652-w

DOI: https://doi.org/10.1038/s41598-024-59652-w