Abstract

Background

Random Forests is a popular classification and regression method that has proven powerful for various prediction problems in biological studies. However, its performance often deteriorates as the number of features increases. To address this limitation, feature elimination Random Forests was proposed, which uses only the features with the largest variable importance scores. Yet the performance of this method is unsatisfactory, possibly because of its rigid feature selection and the increased correlation between the trees of the forest.

Methods

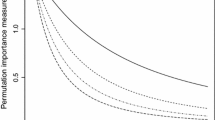

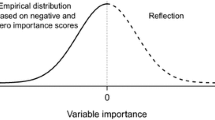

We propose variable importance-weighted Random Forests. Instead of sampling features with equal probability at each node when building trees, the method samples features according to their variable importance scores and then selects the best split from the randomly selected features.
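The node-level sampling step can be sketched as follows. This is a minimal illustration, not the code of the viRandomForests package: the function name `sample_candidate_features`, the parameter names `mtry` and `floor`, and the choice to draw features with probability proportional to their (floored) importance scores are all assumptions made for the example.

```python
import random
from typing import List, Optional, Sequence


def sample_candidate_features(
    importance: Sequence[float],
    mtry: int,
    rng: Optional[random.Random] = None,
    floor: float = 1e-8,
) -> List[int]:
    """Draw `mtry` distinct feature indices, favouring high-importance features."""
    rng = rng or random.Random()
    if not 0 < mtry <= len(importance):
        raise ValueError("mtry must be between 1 and the number of features")
    # Assumption: non-positive scores are raised to a small floor so that
    # every feature keeps a nonzero chance of being drawn.
    weights = [max(w, floor) for w in importance]
    # Exponential race: feature j gets an arrival time ~ Exp(rate = w_j).
    # Taking the mtry earliest arrivals is equivalent to drawing features
    # one at a time without replacement, each time proportionally to weight.
    arrivals = sorted((rng.expovariate(w), j) for j, w in enumerate(weights))
    return [j for _, j in arrivals[:mtry]]


# Hypothetical importance scores for five features.
rng = random.Random(0)
importance = [5.0, 0.1, 0.1, 3.0, 0.0]
counts = [0] * len(importance)
for _ in range(2000):
    for j in sample_candidate_features(importance, mtry=2, rng=rng):
        counts[j] += 1
print(counts)  # features 0 and 3 should dominate the candidate sets
```

In a full tree-growing routine, the returned indices would be the only features searched for the best split at that node; the rest of the tree construction is unchanged from standard Random Forests.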

Results

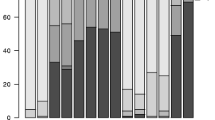

We evaluate the performance of our method through comprehensive simulations and real data analyses, for both regression and classification. Compared with standard Random Forests and feature elimination Random Forests, our proposed method shows improved performance in most cases.

Conclusions

By incorporating variable importance scores into the random feature selection step, our method makes better use of the more informative features without completely ignoring the less informative ones, and hence achieves improved prediction accuracy in the presence of weak signals and high noise. We have implemented an R package “viRandomForests” based on the original R package “randomForest”; it can be freely downloaded from http://zhaocenter.org/software.
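One way to read this contrast is through the probability $p_j$ that feature $j$ is picked in a single draw at a node. The symbols $M$ (total number of features), $k$ (number of features retained by feature elimination), $S_k$ (the retained set), and $w_j$ (a positive weight derived from the importance score) are notation introduced for this illustration, and proportional weighting is an assumption rather than a documented detail of the package.

```latex
\begin{aligned}
\text{standard:} \quad & p_j = \frac{1}{M}, \\
\text{feature elimination:} \quad & p_j =
  \begin{cases} 1/k, & j \in S_k \\ 0, & j \notin S_k \end{cases} \\
\text{importance-weighted:} \quad & p_j = \frac{w_j}{\sum_{l=1}^{M} w_l},
  \qquad w_j > 0 .
\end{aligned}
```

Under the third scheme a weakly informative feature is drawn rarely but never with probability zero, whereas feature elimination excludes it outright.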

Acknowledgements

This study was supported in part by the National Institutes of Health grants R01 GM59507, P01 CA154295, and P50 CA196530.

Cite this article

Liu, Y., Zhao, H. Variable importance-weighted random forests. Quant Biol 5, 338–351 (2017). https://doi.org/10.1007/s40484-017-0121-6

DOI: https://doi.org/10.1007/s40484-017-0121-6