Abstract

Original data envelopment analysis models treat decision-making units as independent entities. This feature of data envelopment analysis results in significant diversity in input and output weights, which is irrelevant and problematic from the managerial point of view. In this regard, several methodologies have been developed to measure the efficiency scores based on common weights. Specifically, Ruiz and Sirvant (Omega 65:1–9, 2016) formulated an aggregated DEA model to minimize the gap between actual performances and best practices and identify a common best practice frontier. Their model is capable of determining target units for all units under evaluation, simultaneously, with the property that all of them are located on a common best practice frontier. However, in practice it is difficult for some units to achieve that specified target in a single step. Consequently, developing a methodology for assisting units to reach their corresponding targets, through a path of intermediate improving targets, is useful. This problem is investigated in this paper, and we propose a stepwise target setting approach which provides a path of intermediate targets for each unit. We study efficient and inefficient units separately and provide two distinct models for each category, although both of them are intrinsically similar. A simple numerical example and an application are also provided to illustrate our approach.

Introduction

Data envelopment analysis (DEA) is a nonparametric linear programming-based technique first developed by Charnes et al. (1978) for evaluating the performance of homogeneous decision-making units (DMUs) having multiple inputs and multiple outputs. Since then, DEA has been implemented in various applications such as service quality evaluation (Najafi et al. 2015), evaluation of academic performance (Monfared and Safi 2013) and the facility location problem (Razi 2018). In DEA, the efficiency score of each unit is generally defined as the ratio of the weighted sum of outputs to the weighted sum of inputs. The corresponding input and output weights for each unit are then calculated by solving individual linear programming problems that maximize this ratio, provided that the corresponding ratio for all the units does not exceed one. Units with an efficiency score of one are classified as efficient and lie on the efficient frontier of the technology, whereas a score less than one signals inefficiency. Zamani and Borzouei (2016) investigated the stability region for preserving this classification in the presence of variable returns to scale. Another main function of DEA is benchmarking: for each inefficient unit, DEA determines a (virtual) target unit lying on the frontier that indicates the levels of inputs and outputs at which that unit would perform efficiently. The first research on this subject was developed by Frei and Harker (1999), who investigated benchmarking based on projecting inefficient units onto the strongly efficient frontier of DEA, considering Euclidean distance. After that, some researchers generalized the idea of using Euclidean distance in order to define an efficiency measure and also to project units onto the strongly efficient frontier, e.g., see Baek and Lee (2009), Amirteimoori and Kordrostami (2010), and Aparicio and Pastor (2014a, b).
The issue of least distance to the frontier has been used in other applications of DEA, such as ranking units (e.g., see Ziari 2016; Aghayi and Tavana 2018). The concept of benchmarking based on least distance has also been developed by Pastor and Aparicio (2010), Ando et al. (2012, 2017), Aparicio and Pastor (2013, 2014a, b) and Aparicio et al. (2014). On the other hand, some authors like Cherchye and Van Puyenbroeck (2001) and Silva Portela et al. (2003) have developed benchmarking approaches based on similarity and closeness. Their motivation was that a benchmark which is closer to the inefficient unit is easier to reach. Other measures of efficiency, like the modified Russell measure and the slack-based measure, have also been utilized for benchmarking, e.g., see Gonzalez and Alvarez (2001) and Aparicio et al. (2007). Benchmarking remains an important field of research in DEA and can be viewed from different perspectives, such as neural networks as discussed in Shokrollahpour et al. (2016), artificial units in Didehkhani et al. (2018) or fuzzy De-Novo programming by Sarah and Khalili-Damghani (2018). For a complete review of other benchmarking models, the reader is referred to Aparicio (2016), Aparicio et al. (2017a, b).

An important feature of DEA in evaluating units is that it is inherently optimistic, in the sense that it treats units as individual and independent entities: the optimal weights for each unit are calculated by separate programs. Therefore, the input and output weights are likely to differ considerably across units, which is hard to justify from a managerial point of view. In particular, flexibility in choosing each unit’s optimal weights reduces the discrimination power of DEA in distinguishing efficient and inefficient units. This issue has been widely debated among researchers, and a variety of means have been proposed to overcome it. For an early review of methods on improving the power of discrimination in DEA see Angulo-Meza and Lins (2002). One basic approach suggested by Li and Reeves (1999) is the multiple criteria DEA (MCDEA) technique. They formulated DEA in the framework of multi-criteria decision making (MCDM) and tried to evaluate units applying MCDM techniques. Recently, Chaves et al. (2016) studied the main properties of the model proposed by Li and Reeves (1999). On the other hand, Bal and Orkcu (2007) formulated a goal programming problem for weight dispersion in DEA. Their approach was later improved by Bal et al. (2010). Furthermore, Ghasemi et al. (2014) suggested an approach for improving the discrimination power in MCDEA. This study was recently supplemented by Rubem et al. (2017), who introduced a weighted goal programming formulation to solve the MCDEA problem.

Another basic approach to improve the discrimination power of DEA is to determine a common set of weights for all units under assessment. This approach was first proposed by Roll et al. (1991). The common set of weights (CSWs) can be regarded as the coefficients of a supporting hyperplane of the DEA technology at some efficient units. However, the question of how to determine such a hyperplane has been approached from different perspectives. One approach is to minimize the difference between the DEA efficiency scores and those obtained from the associated CSWs. This method was investigated by Despotis (2002) and Kao and Hung (2005). Other formulations have also been proposed, such as maximizing the sum of the efficiency ratios of all the units by Ganley and Cubbin (1992), maximizing the minimum of the efficiency ratios by Troutt (1997), or introducing ideal and anti-ideal virtual units by Khalili-Damghani and Fadaei (2018).

Additionally, Ruiz and Sirvant (2016) formulated a CSW model in the framework of benchmarking, i.e., they formulated a program to globally minimize a weighted \(L_1\)-distance of all the units to their corresponding benchmarks, which are located on a common hyperplane of the DEA technology. In this regard, they suggested that only a facet of the DEA technology should be considered as the best practice frontier. The coefficients of this hyperplane are then used to calculate efficiency scores. Therefore, it is reasonable to establish benchmarks on this frontier. However, it should be noted that applying this method for target setting may involve deterioration of some of the observed input and output levels. In other words, in the common benchmarking approach the dominance criterion does not necessarily hold. Moreover, this approach provides benchmark activities for all units under evaluation simultaneously. Even an efficient unit that is not located on the common best practice frontier may be assigned a different benchmark unit located on the underlying hyperplane. The issue highlighted here is that, as all the benchmarks lie on a common supporting hyperplane of the technology, the determined best practice target is likely to be very far from the corresponding unit. Therefore, it may be practically impossible for that unit to achieve its (final) target in one single step, because large-scale input and output adjustments are problematic and very demanding for an inefficient unit. This issue is also important when the final target is obtained via a conventional DEA model for individual units separately. In this regard, some researchers have investigated the problem of stepwise target setting, which involves developing a set of intermediate targets that constitute a path toward the strongly efficient frontier on which the final target is located.
The methods of stepwise target setting can be categorized into two types. The first category involves the approaches in which all intermediate targets and the final target are chosen from among the set of observed (or existing) units. In contrast, the approaches that allow virtual units to be selected as intermediate and final targets belong to the second category. In this regard, Seiford and Zhu (2003) were the first to develop a model for type one stepwise benchmarking. Their model is formulated based on context-dependent DEA and on arranging units into successive layers. After that, most of the existing models in this category adopted this idea, e.g., Lim et al. (2011) established a path of intermediate benchmarks by clustering units and layering them. Lozano and Villa (2005a, b) investigated the concept of efficiency improvement in DEA, which leads to a stepwise target setting model. Lozano and Villa (2005a) proposed a model based on bounded adjustments of inputs and outputs, such that only a limited portion of the corresponding input and output levels is allowed for reduction and expansion at each step, respectively. Their model also guarantees that each intermediate target dominates the previous one. The algorithm terminates when an efficient target is reached. Also, Suzuki and Nijkamp (2011) developed a stepwise projection DEA model for public transportation in Japan. Khodakarami et al. (2014) formulated a two-stage DEA model which was applied to industrial parks. Additionally, Fang (2015) developed a centralized resource allocation model based on gradual efficiency improvement. For a complete review of stepwise target setting models see Lozano and Calzada-Infante (2017). Moreover, Nasrabadi et al. (2018) investigated the concept of stepwise benchmarking in the presence of interval scale data in DEA.

The above discussion shows that the problem of sequential benchmarking, when all final benchmarks are located on a common hyperplane, is of great importance in DEA. In this study, we investigate this subject in the framework of the CSW model proposed by Ruiz and Sirvant (2016). We also incorporate the idea of bounded adjustments of Lozano and Villa (2005a, b) in our proposed method. Recall that in the approach of Ruiz and Sirvant (2016) even efficient units that do not lie on the common best practice frontier are assigned a benchmark. A suitable model for determining a sequence of benchmarks should therefore be applicable to both efficient and inefficient units. We study these two cases separately and establish a different formulation for each one, although both models are basically similar.

This paper unfolds as follows. In “A common benchmarking model in DEA” section, we present some preliminary considerations of DEA and review the common benchmarking model developed by Ruiz and Sirvant (2016). Our proposed approach for sequential benchmarking is illustrated in “Developing a benchmark path toward the common best practice frontier” section, followed by a simple numerical example in “Numerical example” section. Then, “Application” section includes an empirical application and finally “Conclusion” section provides discussion and concluding remarks.

A common benchmarking model in DEA

In this section, we briefly present the common benchmarking model developed by Ruiz and Sirvant (2016). Consider a set of n decision-making units (DMUs), each consuming m inputs to produce s outputs. For \(j=1,\ldots ,n\), we denote unit j by the activity vector \((X_j,Y_j)\), where \(X_j\) and \(Y_j\) are input and output vectors of unit j, respectively. It is assumed that \(X_j=(x_{1j},\ldots ,x_{mj})^t \in {\mathcal {R}}^m_{\ge 0}\), \(X_j \ne 0\) and \(Y_j=(y_{1j},\ldots ,y_{sj})^t \in {\mathcal {R}}^s_{\ge 0}\), \(Y_j \ne 0\).

The production possibility set consisting of all feasible input–output vectors (X, Y) is generally defined as \(T=\{(X,Y) | X\; {\text{ can }}\; {\text{ produce }}\;Y \}\). Each member of T is called a (virtual) activity. We call each unit j an observed activity or an observed unit, for \(j=1,\ldots ,n\). An activity \((\bar{X},\bar{Y})\in T\) is said to be non-dominated or efficient in set T iff there exists no other \((X,Y)\in T\) such that \(X \le \bar{X}\) and \(Y \ge \bar{Y}\). The set of all observed extreme efficient units is denoted by E.
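As a minimal sketch of the dominance relation just defined, the following Python snippet checks whether an observed unit is dominated by another observed unit. It compares observed units only, so it is a necessary check rather than a full efficiency test over T; the function names and the small data set are hypothetical.

```python
def dominates(a, b):
    """Return True if activity a = (X, Y) dominates activity b.

    a dominates b when it uses no more of every input, produces no less
    of every output, and differs from b in at least one component.
    """
    xa, ya = a
    xb, yb = b
    no_worse = all(p <= q for p, q in zip(xa, xb)) and all(p >= q for p, q in zip(ya, yb))
    return no_worse and (tuple(xa), tuple(ya)) != (tuple(xb), tuple(yb))


def non_dominated_units(units):
    """Indices of observed units not dominated by any other observed unit."""
    return [
        j for j, u in enumerate(units)
        if not any(dominates(v, u) for k, v in enumerate(units) if k != j)
    ]


# Hypothetical data: (inputs, outputs) for four units with one input and one output.
units = [((2.0,), (4.0,)), ((3.0,), (4.0,)), ((3.0,), (6.0,)), ((5.0,), (5.0,))]
print(non_dominated_units(units))
```

A unit that passes this check may still be dominated by a virtual activity in T, which is why DEA models rather than pairwise comparisons are used to identify the set E.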

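If we assume a variable returns to scale (VRS) technology, the set T in DEA is characterized as:

$$\begin{aligned} T_v=\left\{ (X,Y)\ \Big |\ X \ge \sum _{j=1}^n{\lambda _j X_j},\ Y \le \sum _{j=1}^n{\lambda _j Y_j},\ \sum _{j=1}^n{\lambda _j}=1,\ \lambda _j \ge 0,\ j=1,\ldots ,n \right\} . \end{aligned}$$(1)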

All models here are formulated for a VRS technology. However, the set T for constant returns to scale (CRS) technology can be easily formulated by dropping the affine constraint \(\sum\nolimits _{j=1}^n{\lambda _j} =1\) from the above formulation.

The CSW model of Ruiz and Sirvant (2016)

Based on the above notation, the common benchmarking model, which provides the closest targets on a common efficient frontier of the technology for all units simultaneously, is formulated as (see Ruiz and Sirvant 2016):

where \(\Vert (X_j,Y_j)-(X_j^*,Y_j^*)\Vert ^{\mathbf {w}}_1 = \sum _{i=1}^{m}{w^x_i |x_{ij}-x_{ij}^*|} + \sum _{r=1}^{s}{w^y_r |y_{rj}-y_{rj}^*|}\) is the weighted \(L_1\)-distance in \({\mathcal {R}}^{m+s}\) space, with the nonnegative weight vector \({\mathbf {w}}=({\mathbf {w}}^{\mathbf {x}},{\mathbf {w}}^{\mathbf {y}}) \in {\mathcal {R}}^{m+s}\). Also, M is assumed to be a sufficiently large positive quantity. Solving model (2), we have:

1. \(H^*=\{ (X,Y)\ |\ -U^* Y + V^* X + \gamma ^* =0 \}\) is the common best practice frontier of the technology which is used as the reference hyperplane in target setting.

2. The set \(RG=\{ k\ |\ \lambda _k^{j*} >0\;{\text{ for }}\;{\text{ some }}\;j, 1\le j\le n \}\) provides the reference group of units which lie on the reference hyperplane \(H^*\).

3. For unit j which is not in the reference group, the coordinates of the corresponding target on \(H^*\) are given by

$$\begin{aligned} (X_j^*, Y_j^*)=\sum _{k \in E} {\lambda _k^{j*} (X_k, Y_k)}. \end{aligned}$$(3)

Note that model (2) can be easily transformed to a zero–one linear program by using transformations \(|x|=x^+ + x^-\) and \(x=x^+ - x^-\) for \(x \in {\mathcal {R}}\).
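To make the distance and the absolute-value transformation concrete, here is a small Python sketch. It evaluates the weighted \(L_1\)-distance between a unit and a target, once directly and once through the split \(x=x^+ - x^-\); the helper names and the numbers are illustrative assumptions, not data from the paper.

```python
def weighted_l1(x, y, x_star, y_star, wx, wy):
    """Weighted L1-distance between activity (x, y) and target (x_star, y_star)."""
    d_in = sum(w * abs(a - b) for w, a, b in zip(wx, x, x_star))
    d_out = sum(w * abs(a - b) for w, a, b in zip(wy, y, y_star))
    return d_in + d_out


def split(value):
    """Return nonnegative parts (pos, neg) with value = pos - neg and |value| = pos + neg."""
    return (value, 0.0) if value >= 0 else (0.0, -value)


# Hypothetical unit with two inputs and one output, and unit weights.
x, y = [10.0, 4.0], [6.0]
x_star, y_star = [7.0, 5.0], [8.0]
wx, wy = [1.0, 1.0], [1.0]

direct = weighted_l1(x, y, x_star, y_star, wx, wy)
diffs = [a - b for a, b in zip(x + y, x_star + y_star)]
via_split = sum(w * sum(split(d)) for w, d in zip(wx + wy, diffs))
print(direct, via_split)
```

In an optimization model the two parts are decision variables rather than computed values; the sketch only shows that the identity holds at the minimal split, where at most one of the two parts is nonzero.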

Difficulties in common benchmarking

Although model (2) determines benchmark activities for all units in the sense of closest targets, the corresponding targets may in practice be very far from the actual input and output levels of the original units. The main reason is that all targets are required to lie on a common supporting hyperplane of the underlying technology. This makes it nearly impossible for some units to reach the reference hyperplane in a single action, as doing so requires significant adjustments of inputs and/or outputs. This issue is also important for efficient units which do not belong to RG. Here, we illustrate this point by a simple numerical example including 15 units with a single input and a single output. The original data set is provided by the first two columns of Table 1.

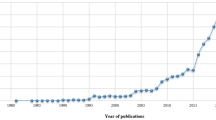

In order to determine the CSWs, target activities and the common best practice frontier, we run model (2) with \(({\mathbf {w}}^{\mathbf {x}},{\mathbf {w}}^{\mathbf {y}})=({\mathbf {1}}_m,{\mathbf {1}}_s)\) and obtain \(H^*=\{ (x,y)\ |\ -2y+x+11=0 \}\) as the reference hyperplane. Also, the set \(RG=\{\mathbf{C },\mathbf{D },\mathbf{P }\}\) is determined as the index set of the reference group. Moreover, the corresponding targets are found out and given in the last two columns of Table 1. The corresponding technology in input–output space is represented in Fig. 1, and the common reference hyperplane is shown by bold line.
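As a small illustration of what lying on the reference hyperplane means, the Python sketch below evaluates the residual \(-uy+vx+\gamma\) for the hyperplane reported above (u = 2, v = 1, \(\gamma\) = 11). The function names are hypothetical, and the test points are made up for illustration; they are not taken from Table 1.

```python
def hyperplane_residual(x, y, u=2.0, v=1.0, gamma=11.0):
    """Residual -u*y + v*x + gamma; zero means (x, y) lies on the hyperplane."""
    return -u * y + v * x + gamma


def on_hyperplane(x, y, tol=1e-9):
    """True if (x, y) satisfies the hyperplane equation up to a small tolerance."""
    return abs(hyperplane_residual(x, y)) <= tol


# Hypothetical single-input, single-output activities written as (x, y).
p, q = (1.0, 6.0), (5.0, 8.0)                         # both satisfy -2y + x + 11 = 0
mid = tuple(0.5 * a + 0.5 * b for a, b in zip(p, q))  # convex combination of p and q

print(on_hyperplane(*p), on_hyperplane(*q), on_hyperplane(*mid))
print(hyperplane_residual(4.0, 5.0))                  # nonzero, so this point is off the hyperplane
```

The convex combination of two points on the hyperplane stays on it, which mirrors how a target built from reference units in RG remains on \(H^*\).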

It is clear that all units in RG, i.e., units \(\mathbf{C }\), \(\mathbf{D }\) and \(\mathbf{P }\), coincide with their corresponding targets. Note that this includes the efficient non-extreme unit \(\mathbf{P }\), which is located on \(H^*\). Moreover, the common benchmark for efficient units \(\mathbf{A }\), \(\mathbf{B }\) and \(\mathbf{N }\) is unit \(\mathbf{C }\), and for unit \(\mathbf{E }\) it is unit \(\mathbf{D }\). Additionally, the corresponding benchmark for the other inefficient units is a virtual (or observed) activity located on the intersection of \(H^*\) and \(T_v\).

Now, consider unit \(\mathbf{A }\). Although it is efficient, its benchmark is a different unit, namely unit \(\mathbf{C }\), which is relatively far from it. It might therefore be discouraging for unit \(\mathbf{A }\) to have such a distant unit as its only benchmark, since reaching it in a single move is nearly impossible. The same holds for, e.g., the inefficient unit \(\mathbf{I }\).

This setting motivates us to address the above-mentioned problem, i.e., to help each unit reach the common best practice frontier gradually. We follow a procedure to set up a path of intermediate benchmarks for each unit until we reach \(H^*\). The procedure is developed in the next section.

Developing a benchmark path toward the common best practice frontier

In order to develop a procedure for establishing a benchmark path, efficient and inefficient units are investigated separately. Recalling that \(H^*\) is the reference hyperplane characterized by model (2) and assuming that unit “o” is under assessment, with \(o \notin RG\), we aim to establish a sequence of (improving) intermediate benchmarks for this unit that originates from it and converges to \(H^*\) in sequential steps. Let \((Target-t)\) be the model which calculates the tth (intermediate) target from the preceding one. Then, we present the general scheme of our benchmarking algorithm as the following 3-step procedure:

Sequential Target Setting Algorithm for unit “o”

- Step 1 Set \(t=0\), \((X_o^t,Y_o^t)=(X_o,Y_o)\).

- Step 2 Set \(t=t+1\) and solve \((Target-t)\) for unit “o” to obtain

$$\begin{aligned} \left(X_o^{t},Y_o^{t}\right)=\left(X_o^{t-1} - S^{-t*},Y_o^{t-1} + S^{+t*}\right). \end{aligned}$$

- Step 3 If \((X_o^{t},Y_o^{t}) \in H^*\), stop. Otherwise go to Step 2.
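The loop structure of this procedure can be sketched in Python as follows. Since \((Target-t)\) is an optimization model, the sketch replaces it with a deliberately simple stand-in rule: each coordinate moves toward the final target by at most a fraction `delta` of its current level. It also stops when the final target is reached rather than testing membership in \(H^*\). All names are hypothetical, and the stand-in ignores the efficiency and feasibility requirements of the actual model.

```python
def next_target(current, final, delta):
    """Stand-in for (Target-t): one bounded move of every coordinate toward `final`."""
    new = []
    for cur, fin in zip(current, final):
        gap = fin - cur                      # signed remaining adjustment
        bound = delta * abs(cur)             # assumed cap: a fraction of the current level
        new.append(cur + max(-bound, min(bound, gap)))  # never overshoots the final target
    return new


def target_path(start, final, delta=0.3, tol=1e-9, max_steps=100):
    """Step 1: start at the unit. Step 2: compute the next target. Step 3: stop at the final target."""
    path = [list(start)]
    for _ in range(max_steps):
        if max(abs(f - c) for c, f in zip(path[-1], final)) <= tol:
            break
        path.append(next_target(path[-1], final, delta))
    return path


# Hypothetical activity written as [input, output] and its final target.
for step, point in enumerate(target_path([10.0, 4.0], [5.0, 6.0])):
    print(step, [round(v, 3) for v in point])
```

The `max_steps` guard is a design choice of the sketch: with a cap proportional to the current level, a coordinate that starts at zero could never move, so the loop needs an explicit limit.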

Now, the main question is how to formulate \((Target-t)\) for each unit. The structure of \((Target-t)\) depends on the efficiency status of unit “o” under consideration. We first formulate \((Target-t)\) for an efficient unit and then turn to the case of an inefficient one.

A sequence of targets for an efficient unit

Assuming that unit “o” is efficient, we develop a model which sets intermediate benchmarks along a path toward \(H^*\). The following requirements should be embedded in the proposed model:

1. As unit “o” is efficient, all intermediate benchmarks are expected to be efficient, too. This means that the benchmark path must follow the efficient frontier until it reaches \(H^*\), which is a particular part of the efficient frontier. In other words, the efficiency status of the intermediate benchmarks is not allowed to deteriorate along the path. Nevertheless, the benchmark path inevitably involves deterioration in some of the observed input and/or output levels in return for improving the others.

2. The path is monotonically convergent to \(H^*\), i.e., the direction of the path is toward the common best practice frontier. This means that each intermediate benchmark is closer to the final target than the previous one. We enforce this property by checking whether each individual input/output of unit “o” improves in its final target or not. If an individual input (output) is improved (deteriorated) in the final target, we require the same to hold for all intermediate benchmarks. Therefore, the sign of each input/output slack in intermediate targets is determined according to the sign of the corresponding slack in the final target.

3. As the path is expected to move toward \(H^*\) gradually, it is reasonable to allow only bounded adjustments in input and output levels at each step. Hence, like Lozano and Villa (2005a, b), we set upper and lower bounds for input and output adjustments. These bounds are defined as a predetermined portion of their current levels. Note that the corresponding bounds are determined by the decision maker, based on managerial limitations, and they may differ for individual inputs and outputs at each step.

All in all, the proposed benchmark model \((Target-t)\) associated with unit “o”, for \(t=1,2,\ldots\) is formulated as:

Note that, similar to the CSW model of Ruiz and Sirvant (2016), we use the weighted \(L_1\)-norm in the objective function of the above model. To illustrate the model, note that constraints (b)–(j) guarantee that the tth target \((X_o^{t-1}-S^{-t},Y_o^{t-1} + S^{+t})\) lies on the efficient frontier (see model (2)). The next two constraints (k) and (l) determine the sign of slacks \(s_i^{-t}\) and \(s_r^{+t}\), respectively, depending on whether input i and output r improve in the final target or not. This ensures that the constructed path moves monotonically toward the final target \((X_o^*,Y_o^*)\). In addition, the two constraints (m) and (n) impose bounds on the slacks as adjustment values, where \(\delta _x\) and \(\delta _y\) are quantities between zero and one that denote the relative amount of each input and output that is allowed for adjustment, respectively. Note that these values may vary in different steps. Finally, the last two constraints (o) and (p) are added to guarantee the convergence of the obtained path in the sense of \(L_1\)-distance. This fact is verified in the following theorem.

Theorem 1

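Assume that \((X_o^{t},Y_o^{t})=(X_o^{t-1}-S^{-t*},Y_o^{t-1} + S^{+t*})\) is the tth benchmark for unit “o” determined by model \((Target-t)\), and \((X_o^*,Y_o^*)\) is the final target obtained from model (2). Then:

$$\begin{aligned} \Vert (X_o^{t},Y_o^{t})-(X_o^*,Y_o^*)\Vert ^{\mathbf {w}}_1 \le \Vert (X_o^{t-1},Y_o^{t-1})-(X_o^*,Y_o^*)\Vert ^{\mathbf {w}}_1. \end{aligned}$$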

Proof

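We have

$$\begin{aligned} \Vert (X_o^{t},Y_o^{t})-(X_o^*,Y_o^*)\Vert ^{\mathbf {w}}_1 = \sum _{i=1}^{m}{w^x_i |x_{io}^{t-1} - x_{io}^* - s_i^{-t}|} + \sum _{r=1}^{s}{w^y_r |y_{ro}^* - y_{ro}^{t-1} - s_r^{+t}|}. \end{aligned}$$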

Now, recalling that the weight vectors \({\mathbf {w}}^{\mathbf {x}}\) and \({\mathbf {w}}^{\mathbf {y}}\) are both nonnegative, it is sufficient to prove that \(|x_{io}^{t-1} - x_{io}^* - s_i^{-t}| \le |x_{io}^{t-1} - x_{io}^*|\) for \(i=1,\ldots ,m\), and \(|y_{ro}^* - y_{ro}^{t-1} - s_r^{+t}| \le |y_{ro}^* - y_{ro}^{t-1}|\) for \(r=1,\ldots ,s\). Toward this end, we consider three cases as:

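Case 1: \(x_{io}^{t-1} - x_{io}^* >0\). In this case, constraint (k) implies that \(s_i^{-t} \ge 0\). On the other hand, by constraint (o), we have \(s_i^{-t} \le x_{io}^{t-1} - x_{io}^*\). Therefore, we have:

$$\begin{aligned} |x_{io}^{t-1} - x_{io}^* - s_i^{-t}| = x_{io}^{t-1} - x_{io}^* - s_i^{-t} \le x_{io}^{t-1} - x_{io}^* = |x_{io}^{t-1} - x_{io}^*|. \end{aligned}$$

Case 2: \(x_{io}^{t-1} - x_{io}^* <0\). First, by constraint (k), we have \(s_i^{-t} \le 0\). Meanwhile, constraint (o) implies that \(s_i^{-t} \ge x_{io}^{t-1} - x_{io}^*\). Therefore, we have:

$$\begin{aligned} |x_{io}^{t-1} - x_{io}^* - s_i^{-t}| = -(x_{io}^{t-1} - x_{io}^*) + s_i^{-t} \le -(x_{io}^{t-1} - x_{io}^*) = |x_{io}^{t-1} - x_{io}^*|. \end{aligned}$$

Case 3: \(x_{io}^{t-1} - x_{io}^* =0\). In this case, constraint (o) implies that \(s_i^{-t} = 0\). Therefore, the inequality holds with equality.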

The above three cases prove the first aforementioned statement. By a similar discussion on outputs, one can prove the second inequality, easily. \(\square\)

As we wish to establish a benchmark path converging to the common best practice frontier, we run model (4) until we reach the common frontier \(H^*\), i.e., we have \(-U^* Y_o^{t} + V^* X_o^{t} + \gamma ^* =0\), where \((U^*,V^*,\gamma ^*)\) is an optimal solution of model (2).

A sequence of targets for an inefficient unit

Now, we assume that unit “o” is inefficient. In order to develop a mathematical formulation to establish intermediate benchmarks for this unit, we embed the following considerations in our model:

1. As unit “o” is inefficient, we aim to find its sequential benchmarks such that each intermediate benchmark is better than the previous one. Although in the common benchmarking model (2) the dominance criterion does not necessarily hold, it is possible to determine intermediate benchmarks at each step in a way that each target is better than the previous one, in the sense of its weighted \(L_1\)-distance to the efficient frontier. Hence, we consider the following weighted additive model of Cooper et al. (2011) evaluating (virtual) activity \((\bar{X},\bar{Y})\in T\), which minimizes the weighted \(L_1\)-distance of this unit to the efficient frontier:

$${\text{ADD}}(\bar{X},\bar{Y}) =\begin{array}{lll}\min & \quad -U \bar{Y} + V \bar{X}+\gamma &\\ {\hbox{s.t.}} &\quad -U Y_j + V X_j+\gamma \ge 0, &\quad j\in E \\ & \quad U \ge {\mathbf {w}}^{\mathbf {y}},\quad V \ge {\mathbf {w}}^{\mathbf {x}},\quad \gamma \;{\text{free}}, & \\ \end{array}$$(6)

where \(({\mathbf {w}}^{\mathbf {x}},{\mathbf {w}}^{\mathbf {y}})\in {\mathcal {R}}^{m+s}\) is a nonnegative weight vector. Our aim is to determine \((X_o^{t},Y_o^{t})\) from the previous (intermediate) benchmark \((X_o^{t-1},Y_o^{t-1})\) with the property that

$$\begin{aligned} {\text{ADD}}(X_o^{t},Y_o^{t}) \le {\text{ADD}}(X_o^{t-1},Y_o^{t-1}). \end{aligned}$$(7)

In other words, we wish to move along an improving direction in our benchmarking procedure. The following theorem provides a sufficient condition for such a direction.

Theorem 2

Assume that \((U_o^*,V_o^*,\gamma _o^*)\) is an optimal solution of the weighted additive model (6) evaluating unit “o”. Then for each vector \((S^-,S^+)\in {\mathcal {R}}^{m+s}\) such that \(U_o^* S^+ + V_o^* S^- \ge 0\), we have \({\text{ADD}}(X_o - S^-,Y_o + S^+) \le {\text{ADD}}(X_o,Y_o)\).

Proof

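The proof follows by applying the additive model (6) to \((X_o - S^-,Y_o + S^+)\). Since the constraints of model (6) do not depend on the activity being evaluated, \((U_o^*,V_o^*,\gamma _o^*)\) is a feasible solution for model (6) evaluating \((X_o - S^-,Y_o + S^+)\). Its objective value there is \(-U_o^* (Y_o + S^+) + V_o^* (X_o - S^-) + \gamma _o^* = {\text{ADD}}(X_o,Y_o) - (U_o^* S^+ + V_o^* S^-) \le {\text{ADD}}(X_o,Y_o)\), where the inequality uses the assumption \(U_o^* S^+ + V_o^* S^- \ge 0\). As model (6) is a minimization problem, this implies that \({\text{ADD}}(X_o - S^-,Y_o + S^+) \le {\text{ADD}}(X_o,Y_o)\), and the proof is complete. \(\square\)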

The above theorem verifies that if we add the constraint \(U_o^* S^+ + V_o^* S^- \ge 0\) to our benchmarking model, we can guarantee that the resulting path is an improving one w.r.t. the weighted additive model (6).

2. It should be kept in mind that a desirable benchmark path should move monotonically toward the best practice frontier. So, as before, the sign of input and output slacks is determined according to improvement or deterioration in the corresponding inputs and outputs of the final target. This is handled in the proposed model as in model (4).

3. The final consideration deals with bounded adjustments for inputs and outputs at each step. This is also handled in the proposed model as in model (4).
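The condition in Theorem 2 can be illustrated numerically. The Python sketch below evaluates the objective of model (6) at a fixed multiplier vector before and after a move \((S^-,S^+)\) and confirms that it changes by exactly \(-(U S^+ + V S^-)\). The multipliers and data are hypothetical; in particular, they are not obtained by solving model (6), so the sketch only illustrates the identity on which the theorem rests.

```python
def add_objective(U, V, gamma, X, Y):
    """Objective of model (6) at multipliers (U, V, gamma): -U.Y + V.X + gamma."""
    return -sum(u * y for u, y in zip(U, Y)) + sum(v * x for v, x in zip(V, X)) + gamma


# Hypothetical multipliers and activity (two inputs, one output).
U, V, gamma = [2.0], [1.0, 0.5], 3.0
X, Y = [8.0, 6.0], [5.0]
S_minus, S_plus = [1.0, -0.5], [0.5]   # input 1 falls, input 2 rises, output rises

before = add_objective(U, V, gamma, X, Y)
moved_X = [x - s for x, s in zip(X, S_minus)]
moved_Y = [y + s for y, s in zip(Y, S_plus)]
after = add_objective(U, V, gamma, moved_X, moved_Y)

# Left-hand side of the condition U S+ + V S- >= 0 in Theorem 2.
gain = sum(u * s for u, s in zip(U, S_plus)) + sum(v * s for v, s in zip(V, S_minus))
print(before, after, gain)
```

Note that the move deteriorates the second input, yet the objective still decreases because the weighted gain is nonnegative overall. This is the sense in which a path can improve without each target dominating the previous one.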

Finally, model \((Target-t)\) associated with inefficient unit “o” is formulated as:

To illustrate the model, note that constraints (b)–(e) guarantee that the tth benchmark is feasible. Constraint (f) ensures that the obtained benchmark is better than the previous one, w.r.t. the weighted additive model (6). Moreover, constraints (g) and (h) determine the direction of the path, which should go toward the final target \((X_o^*,Y_o^*)\). Finally, the remaining constraints (i)–(l) are interpreted similarly to their counterparts in model (4).

Computational complexity

Both models (4) and (8) that have been developed for sequential target setting are nonlinear due to the absolute values in their constraints and objective functions. This issue can be resolved by introducing two nonnegative variables \(x^+\) and \(x^-\) such that \(x=x^+ - x^-\) and \(|x|=x^+ + x^-\), but doing so increases the number of variables and hence the computational burden of the models. We therefore propose another, more practical approach for linearizing these models. Without loss of generality, we illustrate this technique for model (4).

Assume that in model (4) we partition the set of input indices \(I=\{1,\ldots ,m\}\) as \(I = I^{t-1}_< \cup I^{t-1}_> \cup I^{t-1}_0\), where:

Then, by constraints (k), (l) and (o), it is concluded that:

A similar technique can be used for output indices, easily. Now, according to (10), and by imposing suitable constraints for both inputs and outputs, one can easily ignore the absolute value notation in the corresponding models. Therefore, both models (4) and (8) are transformed to equivalent linear programs, accordingly.
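One way to read this partition is as a rule that fixes the sign of each slack in advance, so that no absolute value is needed. The Python sketch below computes the resulting interval for an input slack. The function name is hypothetical, and the bound \(|s_i^{-t}| \le \delta _x x_{io}^{t-1}\) is an assumed form of the bounded-adjustment constraint, since the text only states that the bounds are a portion of the current levels.

```python
def input_slack_interval(x_prev, x_star, delta_x):
    """Interval (low, high) for the input slack s, where the new level is x_prev - s.

    Combines the sign rule, the no-overshoot rule and an assumed bound
    |s| <= delta_x * x_prev, all written without absolute values.
    Assumes x_prev is nonnegative.
    """
    gap = x_prev - x_star
    cap = delta_x * x_prev
    if gap > 0:                       # input must decrease: 0 <= s <= min(gap, cap)
        return 0.0, min(gap, cap)
    if gap < 0:                       # input must increase: max(gap, -cap) <= s <= 0
        return max(gap, -cap), 0.0
    return 0.0, 0.0                   # already at the final level: s = 0


print(input_slack_interval(10.0, 4.0, 0.3))   # input above its final level
print(input_slack_interval(4.0, 10.0, 0.3))   # input below its final level
print(input_slack_interval(5.0, 5.0, 0.3))    # input already at its final level
```

Each branch corresponds to one of the three index sets, and within a branch the slack has a known sign, so its absolute value can be replaced by the slack itself or its negative.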

Numerical example

Recall the data set presented in Table 1. We have already obtained the final targets for all units by solving model (2). Now, we aim to set up a benchmark path for each individual unit, except for those in RG. Based on our theory, we analyze efficient and inefficient units by running models (4) and (8), respectively. Note that in both models we assume \(({\mathbf {w}}^{\mathbf {x}},{\mathbf {w}}^{\mathbf {y}})=({\mathbf {1}}_m,{\mathbf {1}}_s)\), and \(\delta _x = \delta _y = 0.3\). The results are reported in Table 2.

From the results presented in Table 2, we observe that the three units \(\mathbf C\), \(\mathbf D\) and \(\mathbf P\) which belong to RG coincide with their corresponding targets. Furthermore, the longest path is for unit \(\mathbf A\), which reaches its benchmark in seven steps. On the other hand, unit \(\mathbf H\) has the shortest path to its benchmark, as it needs only one step to reach the reference hyperplane. Finally, we notice that the final destination of the benchmark path for all units is exactly the unit obtained from model (2). The benchmark path for all efficient and inefficient units, except for those belonging to RG, is shown in Fig. 2.

Application

To illustrate the proposed approach, consider the data taken from Coelli et al. (2002), which consist of 28 international airlines in 1990. This data set has already been used in other DEA papers to illustrate different concepts (see Ray 2004, 2008; Ruiz 2013; Aparicio et al. 2007). In particular, Ruiz and Sirvant (2016) used this data set to illustrate the concept of CSW and the ranking of units. Here, we apply our stepwise target setting model to this data set. For each airline, four inputs and two outputs are considered, which are provided in Table 3. For further details on the data set, one may refer to Ruiz and Sirvant (2016).

First, a conventional DEA model under the assumption of CRS shows that \(E=\{4,6,7,8,11,13,15,16,18\}\). Then, we apply model (2) in the framework of CRS and with weights defined as:

where \(\bar{x}_i\), \(i=1,\ldots ,m\) and \(\bar{y}_r\), \(r=1,\ldots ,s\) are the averages of the corresponding inputs and outputs of all units. Note that this specification of the \(L_1\)-distance has already been used in the DEA literature (see Thrall 1996). Moreover, by using the weighted \(L_1\)-distance with Eqs. (11), model (2) becomes unit invariant.

By running model (2), we obtain \(RG=\{4,8,11,18\}\). Then, in order to find the target path for each unit that does not belong to RG, we run models (4) and (8) for efficient and inefficient units, respectively. Note that in both models we set \(\delta _x=\delta _y=0.3\). The obtained sequential targets for efficient and inefficient units are reported in Tables 4 and 5, respectively.

We first observe that Table 4 does not provide target paths for the airlines in RG, because for these units the targets and the actual inputs/outputs coincide. However, for the efficient units not in RG, a path of sequential targets toward the reference hyperplane is reported. All of these efficient units reach their corresponding final target in two or three steps. For QANTAS and SAUDIA, the targets are less demanding, but the other efficient airlines, i.e., LUFTHANSA, SWISSAIR and PORTUGAL, need considerable adjustments to reach their corresponding targets. The maximum adjustment for these three is in their first input, which needs a decrease of approximately 50% from its actual level. Also, note that there is no change in the third input of LUFTHANSA, the second input and second output of SWISSAIR, and the second output of PORTUGAL. Moreover, as the results show, the efficiency status of the efficient units does not deteriorate along the path, i.e., for efficient airlines, all intermediate (and final) targets have an optimal value of zero in model (6).

Turning to the inefficient airlines in Table 5, we observe different behaviors. Among these airlines, CATHAY has the longest path, with 5 benchmarks toward its final target. This is due to the level of its third input, which needs a decrease of approximately 84% from its actual level (3171 to 549.8). Meanwhile, the levels of its first input and second output remain unchanged along the path. The second longest path among the inefficient airlines is of length 3 and belongs to EASTERN and USAIR, both of which require an adjustment of nearly a factor of two in the second output. Although three inefficient airlines reach their corresponding final target in two steps, the majority (ten inefficient airlines) reach the reference hyperplane in just one step. Moreover, the last column in Table 5 presents the optimal value of the weighted additive model (6) for each (virtual) unit. These values form a descending sequence for each inefficient unit, with a final value of zero, which confirms the improving character of the obtained sequential benchmarks.
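The reported path lengths are consistent with a simple bound implied by the step size. The sketch below is a coordinate-wise simplification, not models (4) and (8), which are linear programs and are not reproduced here. It assumes only that each step may change a level by at most a fraction \(\delta\) of its current level and moves each coordinate as far toward the final target as that bound allows. The function name `stepwise_path` and the 100 to 200 doubling example are hypothetical; the values 3171 and 549.8 are those quoted above for the third input of CATHAY.

```python
def stepwise_path(start, final, delta, max_steps=100):
    """Return the intermediate targets from start to final.

    Each coordinate moves toward its final level but is clipped to the
    interval [current * (1 - delta), current * (1 + delta)] at every step.
    This is an illustrative simplification, not the paper's LP models.
    """
    path = []
    current = list(start)
    goal = list(final)
    for _ in range(max_steps):  # guard against levels that cannot move (e.g. 0)
        if current == goal:
            break
        nxt = []
        for c, f in zip(current, goal):
            lower, upper = c * (1 - delta), c * (1 + delta)
            nxt.append(min(max(f, lower), upper))  # clip final level into the band
        path.append(nxt)
        current = nxt
    return path

# Third input of CATHAY as quoted in the text: 3171 -> 549.8 with delta = 0.3.
cathay_path = stepwise_path([3171.0], [549.8], 0.3)
print(len(cathay_path), cathay_path)

# Hypothetical output that has to double (100 -> 200) with delta = 0.3.
doubling_path = stepwise_path([100.0], [200.0], 0.3)
print(len(doubling_path), doubling_path)
```

With \(\delta = 0.3\), a level can fall to at most \(0.7^k\) of its original value after \(k\) steps, so a reduction from 3171 to 549.8 needs five steps, and a level can grow by at most \(1.3^k\), so a near doubling needs three. Both counts agree with the path lengths reported for CATHAY and for EASTERN and USAIR.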

Conclusion

One of the main features of DEA is that it can be used for benchmarking, which is an important issue in management and economics. In practice, the decision maker (DM) usually aims first to evaluate the efficiency status of the decision-making units in a production technology and classify them as efficient or inefficient, and then to determine a feasible and efficient benchmark activity for each inefficient unit. Benchmarking can help inefficient units improve their performance relative to the best practices of others. On the other hand, by applying an aggregated DEA-based model that finds a common set of weights to evaluate the efficiency scores of all units simultaneously, it is possible to determine a common supporting hyperplane of the technology as the best practice frontier. Thus, one can evaluate the units by means of the coefficients of this frontier and also establish targets for all of them on this common best practice frontier. However, in practice these targets may be difficult to reach in a single step, and therefore a gradual improvement strategy is required to achieve the best practices. This research developed an approach for establishing a gradually improving path of targets for each unit that is not located on the best practice frontier. To apply the proposed method, the common set of weights (CSW) model of Ruiz and Sirvant (2016) is solved in order to find the final targets for all units simultaneously. Then, for each unit, a path of targets is found which originates from that unit and proceeds gradually to the final efficient target that has already been determined.

The obtained path is an improving one in the following sense. If the underlying unit is efficient, then all of the intermediate targets are efficient as well. For an inefficient unit, each intermediate target is closer to the final target than the previous one and also performs better than the previous one in the framework of the weighted additive model of Cooper et al. (2011). Also, in the proposed model only a limited amount of adjustment is allowed at each step. The portion of the current levels of inputs and outputs that may be adjusted is determined by the DM based on his/her managerial point of view and practical limitations. This feature makes the path more acceptable to the DM, as it is more practical and understandable.

This approach can be extended along different lines. One can investigate this issue for a free disposal hull (FDH) technology. Furthermore, the question of establishing a target path that converges to the final target in a predetermined number of steps might be interesting. Another possibility is to develop a stepwise target setting procedure in the framework of other common weight methodologies, such as the models proposed in Despotis (2002) or Kao and Hung (2005).

Notes

In this paper, we use the terms “unit” and “activity” interchangeably.

In Ruiz and Sirvant (2016) this hyperplane has also been used for ranking units.

References

Aghayi N, Tavana M (2018) A novel three-stage distance-based consensus ranking method. J Ind Eng Int. https://doi.org/10.1007/s40092-018-0268-4

Amirteimoori A, Kordrostami S (2010) A Euclidean distance-based measure of efficiency in data envelopment analysis. Optimization 59:985–996

Ando K, Kai A, Maeda Y, Sekitani K (2012) Least distance based inefficiency measures on the Pareto-efficient frontier in DEA. J Oper Res Soc Jpn 55:73–91

Ando K, Minamide M, Sekitani K (2017) Monotonicity of minimum distance inefficiency measures for data envelopment analysis. Eur J Oper Res 260(1):232–243

Angulo-Meza L, Lins MPE (2002) Review of methods for increasing discrimination in data envelopment analysis. Ann Oper Res 116:225–242

Aparicio J (2016) A survey on measuring efficiency through the determination of the least distance in data envelopment analysis. J Cent Cathedra 9(2):143–167

Aparicio J, Pastor JT (2013) A well-defined efficiency measure for dealing with closest targets in DEA. Appl Math Comput 219:9142–9154

Aparicio J, Pastor JT (2014a) On how to properly calculate the Euclidean distance-based measure in DEA. Optimization 63(3):421–432

Aparicio J, Pastor JT (2014b) Closest targets and strong monotonicity on the strongly efficient frontier in DEA. Omega 44:51–57

Aparicio J, Ruiz JL, Sirvant I (2007) Closest targets and minimum distance to the Pareto-efficient frontier in DEA. J Prod Anal 28(3):209–218

Aparicio J, Mahlberg B, Pastor JT, Sahoo BK (2014) Decomposing technical inefficiency using the principle of least action. Eur J Oper Res 239:776–785

Aparicio J, Cordero JM, Pastor JT (2017a) The determination of the least distance to the strongly efficient frontier in data envelopment analysis oriented models: modelling and computational aspects. Omega 71:1–10

Aparicio J, Pastor JT, Sainz-Pardo JL, Vidal F (2017b) Estimating and decomposing overall inefficiency by determining the least distance to the strongly efficient frontier in data envelopment analysis. Oper Res. https://doi.org/10.1007/s12351-017-0339-0

Baek C, Lee JD (2009) The relevance of DEA benchmarking information and the least distance measure. Math Comput Model 49:265–275

Bal H, Orkcu HH (2007) A goal programming approach to weight dispersion in data envelopment analysis. Gazi Univ J Sci 20:117–125

Bal H, Orkcu HH, Celebioglu S (2010) Improving the discrimination power and weight dispersion in data envelopment analysis. Comput Oper Res 37:99–107

Charnes A, Cooper WW, Rhodes E (1978) Measuring the efficiency of decision making units. Eur J Oper Res 2:429–444

Chaves MCC, Soares de Mello JCCB, Angulo-Meza L (2016) Studies of some duality properties in the Li and Reeves model. J Oper Res Soc 67:474–482

Cherchye L, Van Puyenbroeck T (2001) A comment on multi-stage DEA methodology. Oper Res Lett 28:93–98

Coelli TE, Grifell-Tatje E, Perelman S (2002) Capacity utilization and profitability: a decomposition of short-run profit efficiency. Int J Prod Econ 79(3):261–278

Cooper WW, Pastor JT, Aparicio J, Borras F (2011) Decomposing profit inefficiency in DEA through the weighted additive model. Eur J Oper Res 212(2):411–416

Despotis DK (2002) Improving the discrimination power of DEA. J Oper Res Soc 53:314–323

Didehkhani H, Lotfi FH, Sadi-Nezhad S (2018) Practical benchmarking in DEA using artificial DMUs. J Ind Eng Int. https://doi.org/10.1007/s40092-018-0281-7

Fang L (2015) Centralized resource allocation based on efficiency analysis for step-by-step improvement paths. Omega 51:24–28

Frei FX, Harker PT (1999) Projections onto efficient frontiers: theoretical and computational extensions to DEA. J Prod Anal 11:275–300

Ganley JA, Cubbin JS (1992) Public sector efficiency measurement: applications of data envelopment analysis. North-Holland, Amsterdam

Ghasemi MR, Ignatius J, Emrouznejad A (2014) A bi-objective weighted model for improving the discrimination power in MCDEA. Eur J Oper Res 233:640–650

Gonzalez A, Alvarez A (2001) From efficiency measurement to efficiency improvement: the choice of a relevant benchmark. Eur J Oper Res 133:512–520

Kao C, Hung HT (2005) Data envelopment analysis with common weights: the compromise solution approach. J Oper Res Soc 56:1196–1203

Khalili-Damghani K, Fadaei M (2018) A comprehensive common weights data envelopment analysis model: ideal and anti-ideal virtual decision making units approach. J Ind Syst Eng 11(3):0–0

Khodakarami M, Shabani A, Saen RF (2014) A new look at measuring sustainability of industrial parks: a two-stage data envelopment analysis approach. Clean Technol Environ Policy 16:1577–1596

Li XB, Reeves GR (1999) A multiple criteria approach to data envelopment analysis. Eur J Oper Res 115:507–517

Lim S, Bae H, Lee LH (2011) A study on the selection of benchmark paths in DEA. Expert Syst Appl 38:7665–7673

Lozano S, Calzada-Infante L (2017) Computing gradient-based stepwise benchmarking paths. Omega. https://doi.org/10.1016/j.omega.2017.11.002

Lozano S, Villa G (2005a) Determining a sequence of targets in DEA. J Oper Res Soc 56:1439–1447

Lozano S, Villa G (2005b) Gradual technical and scale efficiency improvement in DEA. Ann Oper Res 173:123–136

Monfared MAS, Safi M (2013) Network DEA: an application to analysis of academic performance. Int J Ind Eng 9(1):9–15

Najafi S, Saati S, Tavana M (2015) Data envelopment analysis in service quality evaluation: an empirical study. Int J Ind Eng 11(3):319–330

Nasrabadi N, Dehnokhalaji A, Korhonen P, Wallenius J (2018) A stepwise benchmarking approach to DEA with interval scale data. J Oper Res Soc. https://doi.org/10.1080/01605682.2018.1471375

Pastor JT, Aparicio J (2010) The relevance of DEA benchmarking information and the least-distance measure: comment. Math Comput Model 52:397–399

Ray SC (2004) Data envelopment analysis: theory and techniques for economics and operations research. Cambridge University Press, Cambridge

Ray SC (2008) The directional distance function and measurement of super-efficiency: an application to airline data. J Oper Res Soc 59(6):788–797

Razi FF (2018) A hybrid DEA-based K-means and invasive weed optimization for facility location problem. J Ind Eng Int. https://doi.org/10.1007/s40092-018-0283-5

Roll Y, Cook WD, Golany B (1991) Controlling factor weights in data envelopment analysis. IEEE Trans 23:2–9

Rubem APS, Soares de Mello CB, Angulo-Meza L (2017) A goal programming approach to solve the multiple criteria DEA model. Eur J Oper Res 260:134–139

Ruiz JL (2013) Cross-efficiency evaluation with directional distance functions. Eur J Oper Res 228(1):181–189

Ruiz JL, Sirvant I (2016) Common benchmarking and ranking of units with DEA. Omega 65:1–9

Sarah J, Khalili-Damghani K (2018) Fuzzy type-II De-Novo programming for resource allocation and target setting in network data envelopment analysis: a natural gas supply chain. Expert Syst Appl 117:312–329

Seiford LM, Zhu J (2003) Context-dependent data envelopment analysis—measuring attractiveness and progress. Omega 31:397–408

Shokrollahpour E, Lotfi FH, Zandieh M (2016) An integrated data envelopment analysis—artificial neural network approach for benchmarking of bank branches. J Ind Eng Int 12(2):137–143

Silva Portela MCA, Castro Borges P, Thanassoulis E (2003) Finding closest targets in non-oriented DEA models: the case of convex and non-convex technologies. J Prod Anal 19:251–269

Suzuki S, Nijkamp P (2011) A stepwise-projection data envelopment analysis for public transport operations in Japan. Lett Spatial Resour Sci 4:139–156

Thrall RM (1996) Duality, classification and slacks in DEA. Ann Oper Res 66:109–138

Troutt MD (1997) Deviation of the maximum efficiency ratio model from the maximum decisional efficiency principle. Ann Oper Res 73:323–338

Zamani P, Borzouei M (2016) Finding stability regions for preserving efficiency classification of variable returns to scale technology in data envelopment analysis. J Ind Eng Int 12(4):499–507

Ziari S (2016) An alternative transformation in ranking using l1-norm in data envelopment analysis. J Ind Eng Int 12(3):401–405

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Nasrabadi, N. A sequence of targets toward a common best practice frontier in DEA. J Ind Eng Int 15, 695–707 (2019). https://doi.org/10.1007/s40092-018-0300-8

DOI: https://doi.org/10.1007/s40092-018-0300-8