Abstract

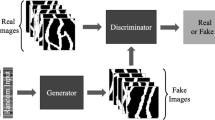

This paper proposes a novel approach for generating complex three-dimensional (3D) geological facies models based on deep generative models. It can reproduce a wide range of conceptual geological models while possessing the flexibility necessary to honor constraints such as well data. Compared with existing geostatistics-based modeling methods, our approach produces realistic subsurface facies architecture in 3D using a state-of-the-art deep learning method called generative adversarial networks (GANs). GANs couple a generator with a discriminator, and each uses a deep convolutional neural network. The networks are trained in an adversarial manner until the generator can create “fake” images that the discriminator cannot distinguish from “real” images. We extend the original GAN approach to 3D geological modeling at the reservoir scale. The GANs are trained using a library of 3D facies models. Once the GANs have been trained, they can generate a variety of geologically realistic facies models constrained by well data interpretations. This geomodelling approach using GANs has been tested on models of both complex fluvial depositional systems and carbonate reservoirs that exhibit progradational and aggradational trends. The results demonstrate that this deep learning-driven modeling approach can capture more realistic facies architectures and associations than existing geostatistical modeling methods, which often fail to reproduce heterogeneous nonstationary sedimentary facies with apparent depositional trends.

1 Introduction

Building geologically realistic facies models based on sparse measurements and interpretations at wells is essential for field development and reservoir management. The 3D sedimentary facies architecture and connectivity play a critical role in the determination of reservoir heterogeneity and hydrocarbon flow. The modeling process involves predicting the spatial distribution of sedimentary facies over a wide geographical area given measurements at a few locations. Several tools exist for creating geological and petrophysical property models, one of the most important being geostatistics (Deutsch and Journel 1998).

Early geostatistical algorithms mainly used spatial linear interpolation or simulated geological attributes by assuming that these attributes follow Gaussian distributions (Cressie 1990; Goovaerts 1997). This linear interpolation relies on the “variogram”, which measures the spatial continuity of a variable such as porosity or geological facies. Most geological patterns, however, are non-Gaussian and highly nonlinear (Journel and Zhang 2006).
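As a rough illustration of what a variogram measures, the sketch below computes an experimental semivariogram, γ(h) = 1/(2N(h)) Σ (v(x) − v(x + h))², along a regularly sampled 1D transect. The function name `semivariogram` and the porosity values are illustrative assumptions and are not taken from this study.

```python
def semivariogram(values, max_lag):
    """Experimental semivariogram of a regularly spaced 1D series.

    gamma(h) = 1 / (2 * N(h)) * sum((v[i] - v[i + h]) ** 2)
    """
    gammas = {}
    for lag in range(1, max_lag + 1):
        pairs = [(values[i], values[i + lag]) for i in range(len(values) - lag)]
        if not pairs:
            break  # the series is too short for this lag
        gammas[lag] = sum((a - b) ** 2 for a, b in pairs) / (2 * len(pairs))
    return gammas


# Hypothetical porosity values along a transect (illustrative only).
porosity = [0.10, 0.12, 0.11, 0.15, 0.18, 0.17, 0.21, 0.20]
gamma = semivariogram(porosity, max_lag=3)
```

A semivariogram that grows with lag, as in this toy series, indicates that nearby samples are more alike than distant ones, which is the two-point notion of continuity that variogram-based methods rely on.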

To overcome these limitations, a new geostatistical approach called multi-point statistics (MPS) was developed to simulate complex geological patterns based on a training image (Strebelle 2002; Caers and Zhang 2004; Zhang et al. 2006; Chugunova and Hu 2008; Honarkhah and Caers 2012) while retaining the flexibility to honor conditioning data. These methods aim to generate geological models by extracting patterns from a single training image and anchoring them to physical measurements at well locations. However, these algorithms have difficulty reproducing realistic nonlinear patterns. Furthermore, they do not capture the variability and uncertainty of geological inference, particularly in 3D, when the subsurface sedimentary facies are strongly heterogeneous with apparent nonstationary spatial trends, which is a ubiquitous phenomenon in most reservoirs.

Another way to represent geological facies is object-based modeling (OBM). OBM can generate realistic geobodies based on distributions of geometric shapes using marked point processes (Deutsch and Wang 1996); however, it is limited to objects whose shapes can be parameterized. Additionally, since OBM uses Markov Chain Monte Carlo (MCMC) algorithms to perform data conditioning (Holden et al. 1998), conditioning can become extremely slow and often fails to converge (Hauge et al. 2007; Skorstad et al. 1999).

Research on applying deep learning to reservoir modeling with generative adversarial networks (GANs), originally proposed by Goodfellow et al. (2014), has recently become more active. Chan and Elsheikh (2017) proposed parameterizing a geological model using GANs. Mosser et al. (2017) used GANs to reconstruct porous media from CT scans of rock samples, and Laloy et al. (2017) used them for training-image-based geostatistical inversion.

In all of the aforementioned papers, the authors used a single training image and split it into smaller “patches” to form a training set. This limits GANs to reconstructing only the patterns seen within an arbitrarily chosen window intended to capture the features of the training dataset. Consequently, these approaches have difficulty reproducing multiscale patterns, and they fail to reproduce large interconnected features, which are important for subsurface reservoir simulation. A further major limitation of these studies is that they discuss only unconditional modeling with GANs, without constraining the models to physical measurements or interpretations at well locations.

Dupont et al. (2018) was the first publication using GANs to address geological modeling at the reservoir scale constrained to well data. A library of reservoir-scale 2D models was generated by object-based modeling to serve as training images representing a wide variation of depositional facies patterns. A semantic inpainting scheme (Li et al. 2017; Pathak et al. 2016; Yeh et al. 2016) was used to generate conditional models by GANs that fully honor well data.

In this paper, we extend this technique to build conditional geological facies models in 3D and demonstrate that it outperforms advanced geostatistical reservoir modeling approaches such as MPS in generating more geologically realistic facies models constrained by well data.

2 Methodology

2.1 Principle and method of GANs

GANs have many potential applications, one of the most recognized being image generation, where they have been used to generate artificial photorealistic facial images that are nearly indistinguishable from pictures of real people. GANs are generative models composed of a generator, G, and a discriminator, D, each parametrized by a separate neural network (Goodfellow et al. 2014). G is trained to map a latent vector z into an image x, while D is trained to map an image x to the probability of it being real rather than generated. The networks are trained adversarially by optimizing the loss function

\[\mathop {\min }\limits_{G} \mathop {\max }\limits_{D} \; {\mathbb{E}}_{{{\mathbf{x}} \sim P_{\text{data}} }} \left[ {\log D\left( {\mathbf{x}} \right)} \right] + {\mathbb{E}}_{{{\mathbf{z}} \sim p\left( {\mathbf{Z}} \right)}} \left[ {\log \left( {1 - D\left( {G\left( {\mathbf{z}} \right)} \right)} \right)} \right]\]

After training on data drawn from a distribution Pdata, G will be able to generate samples like those from Pdata by sampling \({\mathbf{z}} \sim p\left( {\mathbf{Z}} \right)\) and mapping \({\mathbf{x}} = G\left( {\mathbf{z}} \right)\). Training GANs is equivalent to optimizing a two-player game using a minimax objective function in which the discriminator aims to maximize its reward by increasing the likelihood of correctly distinguishing real images from fake ones. Meanwhile, the generator attempts to reduce the risk that the generated images are correctly recognized by the discriminator as being fake. G and D are trained alternately, and the training process continues until it reaches an equilibrium. In other words, neither player can improve further, leading to a situation where the discriminator has difficulty telling the difference between a true image and a fake one from the generator.
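To make the two-player game concrete, the following sketch evaluates batch estimates of the standard minimax terms of Goodfellow et al. (2014) from discriminator outputs. The probabilities are invented for illustration and the function names are assumptions; in practice these values would come from the convolutional networks.

```python
import math


def discriminator_value(d_real, d_fake):
    """Batch estimate of E[log D(x)] + E[log(1 - D(G(z)))], which D maximizes."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Batch estimate of E[log(1 - D(G(z)))], which G minimizes."""
    return sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs (probability that an image is real).
confident = discriminator_value(d_real=[0.9, 0.95], d_fake=[0.1, 0.05])
confused = discriminator_value(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])
```

When the discriminator can no longer separate real from generated images it outputs 0.5 everywhere, and the value settles at −log 4, which is the equilibrium described above.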

The generator creates fake images by starting with a one-dimensional noise vector, z, drawn from a normally distributed latent space. The dimension of the latent space is usually low, such as 100 for 2D images and 200 for 3D models in our studies. This latent space can be considered an embedded representation of the complex features or patterns of the true images in a much lower dimension. Once a GAN has been trained, the generator can perform prediction by generating new samples that resemble the true images (training images) but do not replicate them. Any noise vector drawn from the latent space can be mapped by the generator to a realistic-looking image. The prediction process is fast because it reuses the network parameters of the trained GAN, without the need for retraining. Figure 1 shows a GAN and illustrates the major components and their relationship involving two adversarial networks: a discriminator network against a generator network.
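The sampling step can be sketched as follows. The `toy_generator` below is a hypothetical stand-in (a single linear layer followed by tanh with fixed random weights), not the trained convolutional generator; only the latent dimension of 100 comes from the text.

```python
import math
import random

LATENT_DIM = 100  # latent size quoted in the text for 2D images


def sample_latent(dim, rng):
    """Draw z with independent standard normal components."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def toy_generator(z, weights):
    """Hypothetical stand-in for a trained G: one linear layer followed by tanh."""
    return [math.tanh(sum(w * zi for w, zi in zip(row, z))) for row in weights]


rng = random.Random(0)
# Fixed random weights play the role of trained network parameters (assumption).
weights = [[rng.gauss(0.0, 0.1) for _ in range(LATENT_DIM)] for _ in range(16)]

z_a = sample_latent(LATENT_DIM, rng)
z_b = sample_latent(LATENT_DIM, rng)
sample_a = toy_generator(z_a, weights)  # same weights reused, no retraining
sample_b = toy_generator(z_b, weights)
```

Each new realization costs one forward pass with frozen weights, which is why prediction is fast once training has finished.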

2.2 Unconditional modeling by GANs

We use GANs to build geological facies models by feeding the networks with training images that are deemed to be suitable digital representations of conceptual geological models.

We first evaluate the ability of 2D GANs to generate unconditional fluvial samples or realizations. The fluvial training images are created using OBM with varying channel widths and orientations. The top of Fig. 2 shows 16 of the 15,000 fluvial training images, which contain simple binary facies (white: sand; black: background). Each image is 128 × 128 pixels. The proportion of sand in all these training images is around 25%. The lower part of Fig. 2 presents 16 unconditional fluvial models generated using GANs. This suggests that GANs can generate realistic fluvial images that are visually indistinguishable from the training images used to train the network, specifically in the reproduction of channel width, connectivity and orientation.
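For intuition about how such a training library might be populated, the sketch below drops straight channels with random position, azimuth and width onto a 128 × 128 grid until roughly 25% of the pixels are sand. It is a deliberately simplified toy and not the OBM software used in this study; the azimuth and width ranges are assumptions, and real object-based models use sinuous channels and richer shape parameters.

```python
import math
import random


def toy_fluvial_image(size=128, target_sand=0.25, seed=0):
    """Toy object-based image: straight channels added until the sand target is met."""
    rng = random.Random(seed)
    image = [[0] * size for _ in range(size)]
    sand = 0
    while sand / (size * size) < target_sand:
        # Marks of one object: a point on the centerline, an azimuth and a half-width.
        cx, cy = rng.uniform(0, size), rng.uniform(0, size)
        angle = math.radians(rng.uniform(-30.0, 30.0))
        half_width = rng.uniform(2.0, 5.0)
        nx, ny = -math.sin(angle), math.cos(angle)  # unit normal to the centerline
        for r in range(size):
            for c in range(size):
                # A pixel is sand if it lies within half_width of the centerline.
                if image[r][c] == 0 and abs((c - cx) * nx + (r - cy) * ny) <= half_width:
                    image[r][c] = 1
                    sand += 1
    return image


image = toy_fluvial_image()
sand_fraction = sum(map(sum, image)) / (128 * 128)
```

Changing `seed` yields a different image with a similar sand proportion, which is how a library of many training images with varying channel widths and orientations could be assembled.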

The second example is a deltaic system in which distributary channels spread out from a point source at the middle of the top boundary of the images. The variation of both channel width and orientation along the flow direction indicates the strongly heterogeneous and nonstationary character of the deltaic depositional system, which is usually quite challenging to model using geostatistical simulation. However, GANs can reproduce this type of nonstationary channel pattern reasonably well. A sample of 16 of the 15,000 deltaic training images and 16 corresponding unconditional generated samples are shown at the top and bottom of Fig. 3, respectively.

2.3 Conditional facies modeling

Using a pretrained G and D, we can generate realistic images \({\mathbf{X}}_{\text{g}} = G\left( {\mathbf{z}} \right)\) conditioned on a set of known values y, which are defined either at pixel locations (in 2D) or voxel locations (in 3D). This can be achieved by fixing the weights of G and D and optimizing z to generate realistic samples based on the known values. To generate realistic samples, we would like the samples \({\mathbf{X}}_{\text{g}}\) to be close to Pdata, i.e., we would like to generate samples such that the discriminator D assigns high probability to \({\mathbf{X}}_{\text{g}}\). This idea is enforced through the perceptual loss. We would also like the samples to honor the pixel or voxel values, i.e., we want the generated \({\mathbf{X}}_{\text{g}}\) to match the observations at the known pixel or voxel locations. This is enforced through the contextual loss. The perceptual loss penalizes unrealistic images produced by the generator, while the contextual loss penalizes the mismatch between the generated images and the measurements at data locations.

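We propose here an approach for constraining GAN-generated samples so that they honor well measurements, which in this paper are geological facies interpretations at each well location. The approach yields better data conditioning than the semantic inpainting approach proposed by Dupont et al. (2018). It defines the contextual loss using a distance transformation that measures the mismatch between the GAN-generated samples and the conditioning data, i.e., the facies observations at each well location. The total loss function is defined as the sum of the perceptual loss and the contextual loss, the latter weighted by a regularization factor λ:

\[L\left( {\mathbf{z}} \right) = L_{\text{p}} \left( {\mathbf{z}} \right) + \lambda L_{\text{c}} \left( {{\mathbf{z}} | {\mathbf{I}}_{1} = i_{1} , \ldots ,{\mathbf{I}}_{M} = i_{M} } \right)\]

where the perceptual loss is defined as

\[L_{\text{p}} \left( {\mathbf{z}} \right) = \log \left( {1 - D\left( {G\left( {\mathbf{z}} \right)} \right)} \right)\]

and the contextual loss is computed as

\[L_{\text{c}} \left( {{\mathbf{z}} | {\mathbf{I}}_{1} = i_{1} , \ldots ,{\mathbf{I}}_{M} = i_{M} } \right) = \sum\limits_{k = 1}^{K} {\sum\limits_{d = 1}^{M} {\min \left\| {y\left( {i^{\left( k \right)} \left( {G\left( {\mathbf{z}} \right)} \right)} \right) - y\left( {i_{d}^{\left( k \right)} } \right)} \right\|_{1} } }\]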

where K is the total number of facies and M is the total number of known facies locations on which the samples generated by the GANs are conditioned. In the contextual loss, \(\left\{ {\mathbf{I}_{m } | m = 1, \ldots ,M} \right\}\) is the collection of M facies indicator variables, while the lower-case \(\left\{ {i_{m} | m = 1, \ldots ,M} \right\}\) denotes the corresponding observations. For the facies indicator observed at datum location d, y(.) maps the facies to its pixel (in 2D) or voxel (in 3D) location. The contextual loss is, therefore, the sum over all facies and all well locations of the shortest distance from each well observation, denoted as \(i_{d}^{\left( k \right)}\), to the nearest occurrence of the same facies in the sample generated by generator G, which is represented by \(i^{\left( k \right)} \left( {G\left( \mathbf{z} \right)} \right)\). The distance is computed using the L1-norm.
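The distance-based mismatch can be illustrated on a small discrete grid. The sketch below sums, over all well data, the L1 distance from each well pixel to the nearest generated pixel carrying the observed facies. It is a brute-force illustration under assumptions: the penalty used when a facies is absent from the sample is our own choice, and an implementation driven by gradients would need a differentiable version of this quantity.

```python
def contextual_loss(generated, well_data):
    """Sum over wells of the L1 distance from each well pixel to the nearest
    generated pixel carrying the observed facies (0 when the datum is honored)."""
    # Group generated pixel locations by facies code.
    locations = {}
    for r, row in enumerate(generated):
        for c, facies in enumerate(row):
            locations.setdefault(facies, []).append((r, c))

    total = 0
    for (r, c), facies in well_data.items():
        candidates = locations.get(facies)
        if not candidates:
            # Facies absent from the sample: charge more than any in-grid
            # distance (an assumption made for this sketch).
            total += len(generated) + len(generated[0])
            continue
        total += min(abs(r - rr) + abs(c - cc) for rr, cc in candidates)
    return total


# Hypothetical 4 x 5 binary facies image (1 = sand, 0 = background).
generated = [
    [0, 0, 0, 0, 0],
    [1, 1, 1, 0, 0],
    [0, 0, 1, 1, 1],
    [0, 0, 0, 0, 0],
]
# Hypothetical well observations: (row, column) -> facies.
wells = {(1, 0): 1, (3, 4): 1, (0, 2): 0}
loss = contextual_loss(generated, wells)
```

Here two of the three wells are honored, and the sand observation at (3, 4) lies one pixel from the nearest generated sand, so the loss is 1. The loss depends only on whether facies codes match, not on their numeric values.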

λ is a regularization factor that controls the trade-off between generating realistic images and matching the known facies data. All the data are honored once the contextual loss approaches zero. This is achieved by applying gradient descent to the noise vector z in the latent space to minimize the total loss function. The iterative process stops when the contextual loss falls below a given threshold.
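The iteration can be sketched on a toy problem. Everything below is a simplified stand-in: `generator` is a hypothetical frozen map, `contextual` uses a smooth squared mismatch rather than the distance-based loss, `perceptual` is a placeholder for the discriminator term, and gradients come from finite differences instead of backpropagation. Only the structure (fixed networks, descent on z, threshold-based stopping) follows the text.

```python
import math

LAMBDA = 10.0      # trade-off weight (the appendix quotes 1000 for the real networks)
THRESHOLD = 1e-4   # stop once the contextual loss falls below this value
STEP = 0.02        # gradient descent step size (illustrative)


def generator(z):
    """Hypothetical stand-in for a frozen G: each latent entry drives one pixel."""
    return [math.tanh(v) for v in z]


def contextual(z, data):
    """Squared mismatch at conditioning pixels (smooth surrogate, an assumption)."""
    image = generator(z)
    return sum((image[i] - value) ** 2 for i, value in data.items())


def perceptual(z):
    """Placeholder for log(1 - D(G(z))): a mild penalty that keeps z small."""
    return 0.01 * sum(v * v for v in z)


def total_loss(z, data):
    return perceptual(z) + LAMBDA * contextual(z, data)


def gradient(z, data, eps=1e-6):
    """Central finite differences; a real model would backpropagate instead."""
    grad = []
    for j in range(len(z)):
        up = z[:j] + [z[j] + eps] + z[j + 1:]
        down = z[:j] + [z[j] - eps] + z[j + 1:]
        grad.append((total_loss(up, data) - total_loss(down, data)) / (2 * eps))
    return grad


def condition(z, data, max_iters=1500):
    """Gradient descent on z only; returns the final z and the iterations used."""
    for iteration in range(max_iters):
        if contextual(z, data) < THRESHOLD:
            return z, iteration
        z = [v - STEP * g for v, g in zip(z, gradient(z, data))]
    return z, max_iters


data = {0: 0.5, 2: -0.5}  # hypothetical conditioning values at two pixels
z_final, used = condition([0.0, 0.0, 0.0, 0.0], data)
```

Only the latent entries that influence conditioned pixels move; the generator itself is never updated, which mirrors the idea of keeping the pretrained weights fixed.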

Compared with data conditioning using semantic inpainting (Dupont et al. 2018), computing the contextual loss using a distance transformation based on facies indicators provides a smoother objective function, which leads to improved and faster data conditioning. Furthermore, semantic inpainting requires a user-defined and somewhat arbitrary mask and weighting factors for the data measurements. The distance transformation described above removes this requirement. Moreover, the distance transformation using facies indicators is more robust and general, since it is independent of the categorical values used to define the facies.

3 2D conditional examples

We built conditional facies models using the conditional GANs described above. The same datasets used to test unconditional modeling of fluvial and deltaic systems are reused, so the network parameters of the pretrained GANs can be retained and data conditioning performed efficiently.

Figure 4 shows 20 known well data locations (sand is shown in green and shale in red). Three fluvial models generated using GANs, each honoring the data from all 20 wells, are shown at the top of the figure. The channel geometry and connectivity are similar to those of the training images shown in Fig. 2. For comparison, we use MPS to generate facies models constrained by the same well data. The training image used for MPS is shown in the lower-left corner of the figure; it contains nonstationary channel patterns due to varying channel widths and orientations. This is particularly challenging for MPS because it infers high-order statistics from a single training image, which requires repetitive patterns for reliable statistical inference. The three conditional MPS simulations shown in the lower right of Fig. 4 exhibit an apparent loss of the channel geometry and connectivity present in the training image, with broken channels and poor reproduction of channel widths, even though all of them honor the data from the 20 wells.

The conditional deltaic facies modeling results from GANs are compared with MPS simulations in Fig. 5. In this case study, data from 35 wells are used, and the heterogeneity and nonstationarity of the facies deposits are more pronounced because of the large variations in channel width and orientation. As a result, the distributary channels are poorly reproduced in the three conditional MPS simulations shown. MPS has difficulty finding sufficient repetitive patterns in the nonstationary deltaic training image, which is shown in the left corner of Fig. 5. Consequently, the resulting MPS facies models do not resemble the channel distributions present in the deltaic training image.

In contrast, conditional models generated by GANs yield a more realistic representation of the deltaic system in terms of source point location, channel widths and orientations. All realizations honor the data from the 35 wells. This demonstrates an advantage of GANs over MPS for modeling facies in nonstationary geological depositional environments, which are ubiquitous in subsurface reservoirs.

4 Facies modeling examples in 3D

The conditional GAN method proposed in this paper was used to build geologically realistic facies architectures in 3D with the models conditioned to well data. We first test unconditional simulations of a fluvial depositional system with three facies: channel sand, levee and shale background. A total of 10,000 fluvial models in 3D were generated using OBM, with variations in channel width, thickness, amplitude, sinuosity and orientation. The proportions of the three facies are around 0.85 (shale), 0.10 (channel) and 0.05 (levee). The size of each training model is 32 × 64 × 64 in the z-, y- and x-directions, respectively.
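A quick way to check facies proportions on a grid of this size is sketched below. The grid dimensions follow the text, while the model itself (a single straight channel with levees) and the facies codes are hypothetical, so its proportions differ from the values quoted above.

```python
from collections import Counter


def facies_proportions(model):
    """Fraction of voxels per facies code for a model indexed as model[z][y][x]."""
    counts = Counter(v for layer in model for row in layer for v in row)
    total = sum(counts.values())
    return {facies: n / total for facies, n in counts.items()}


NZ, NY, NX = 32, 64, 64  # grid size quoted in the text (z, y, x)

# Hypothetical model: a straight channel (1) with levees (2) in shale (0).
model = [[[0] * NX for _ in range(NY)] for _ in range(NZ)]
for z in range(8, 16):
    for x in range(NX):
        for y in range(28, 36):
            model[z][y][x] = 1      # channel sand
        model[z][27][x] = 2         # levee on one bank
        model[z][36][x] = 2         # levee on the other bank

proportions = facies_proportions(model)
```

Running the same function over every model in a training library would show how tightly the proportions cluster around their targets.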

Figure 6 shows eight of the 10,000 models used as the GAN training dataset. They exhibit the complexity of the depositional facies architecture and the spatial associations among facies. The 3D GANs are first trained using the 10,000 fluvial training images, and unconditional facies models are then generated using these pretrained GANs. Eight unconditional models (realizations) are displayed at the bottom of Fig. 6. The results suggest that GANs can “learn” facies architectures and patterns well enough to perform prediction by mapping the 1D latent space to 3D facies models. The channel geometry (width, amplitude and sinuosity) and the spatial association among the three facies are captured and reasonably reproduced in these examples.

The pretrained GANs are further used to generate 3D fluvial facies models constrained by facies interpretations at well locations. Figure 7 shows 10 well locations with three interpreted facies (left-most panel) and three conditional facies models generated by GANs. The channel architectures, geometries and facies associations are reasonably well replicated, and all the samples (realizations) honor the given well data.
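Whether a realization honors the well data can be verified voxel by voxel. The sketch below assumes vertical wells, each described by a facies log from top to bottom at a fixed (y, x) position; the tiny model, the facies codes and the function name are illustrative assumptions.

```python
def mismatches(model, wells):
    """Return (z, y, x) voxels where the model disagrees with the well logs.

    model is indexed as model[z][y][x]; wells maps (y, x) to a facies log
    listed from top to bottom (vertical wells are an assumption here).
    """
    bad = []
    for (y, x), log in wells.items():
        for z, observed in enumerate(log):
            if model[z][y][x] != observed:
                bad.append((z, y, x))
    return bad


# Hypothetical 2 x 3 x 3 model with facies codes 0 = shale, 1 = channel, 2 = levee.
model = [
    [[0, 0, 0], [2, 1, 2], [0, 0, 0]],
    [[0, 0, 0], [0, 1, 0], [0, 0, 0]],
]
wells = {(1, 1): [1, 1], (0, 2): [0, 0], (1, 0): [2, 2]}
bad_voxels = mismatches(model, wells)
```

An empty list means every well observation is reproduced; in this toy case the levee observed in the lower layer of the third well is not matched, so one voxel is reported.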

A more complex example meant to mimic carbonate deposition is tested to demonstrate the modeling capability of 3D GANs. We created 5000 carbonate ramp training models in 3D, each with five transitional facies: tidal flats, lagoon, shoal, shallow marine and deep marine. Figure 8 shows 5 of the 5000 training examples that exhibit clear lateral progradational and vertical aggradational facies associations. The facies belts increase in aggradation angles upward within a given training image. The size of each training model is 32 × 64 × 64 in z-, y- and x-direction, respectively.

At the bottom of Fig. 8 are three unconditional models (realizations) generated by GANs. The transitional facies trend exhibited in the training examples (from tidal flats to deep marine) is captured and reproduced in the models predicted by GANs. This type of strongly nonstationary 3D facies pattern has proven to be extremely challenging for conventional geostatistical simulation approaches, yet it is critical for such geological representations to be reflected in reservoir simulation models to allow reliable field development and operating decisions.

The structure of the 3D GANs is described in the Appendix.

5 Conclusions

We have proposed a flexible framework for generating geologically realistic facies models conditioned to well data in 3D with GANs. This method appears to be superior to existing geological modeling tools in several respects. Firstly, it can generate realistic geological realizations with a wide range of implicit uncertainty by capturing a distribution of architectures and patterns, as opposed to the single training image used in MPS. Furthermore, it can be conditioned on a much larger number of known well measurements and interpretations than existing geostatistical simulation algorithms, while still generating realistic samples. Most importantly, it can capture and generate nonstationary facies patterns directly from nonstationary training images. Such nonstationarity and trends are the norm in sedimentary depositional systems, yet they are very challenging for conventional geostatistical simulations. In the future, we plan to expand this work to generate subsurface geological models constrained by other forms of measurements and interpretations, such as vertical facies proportion curves and areal trends. We believe the methods described herein represent a potentially useful and significant alternative to conventional geostatistical simulation that will improve 3D reservoir modeling while allowing seamless data integration from static, and potentially dynamic, modeling workflows.

References

Caers J, Zhang T. Multiple-point geostatistics: a quantitative vehicle for integrating geologic analogs into multiple reservoir models. AAPG Memoir. 2004;80:383–94.

Chan S, Elsheikh AH. Parametrization and generation of geological models with generative adversarial networks. 2017. arXiv preprint arXiv:1708.01810.

Chugunova T, Hu LY. Multiple-point simulations constrained by continuous auxiliary data. Math Geosci. 2008;40:133–46.

Cressie N. The origins of kriging. Math Geol. 1990;22(3):239–52.

Deutsch CV, Journel AG. Geostatistical software library and user’s guide. New York, Oxford: Oxford University Press; 1998. p. 369.

Deutsch CV, Wang L. Hierarchical object-based stochastic modeling of fluvial reservoirs. Math Geol. 1996;28(7):857–80.

Dupont E, Zhang T, Tilke P, Liang L, Bailey W. Generating realistic geology conditioned on physical measurements with generative adversarial networks. 2018. arXiv preprint arXiv:1802.03065.

Goodfellow I, Pouget-Abadie J, Mirza M, Xu B, Warde-Farley D, Ozair S, Courville A, Bengio Y. Generative adversarial nets. In: Advances in neural information processing systems; 2014. p. 2672–80.

Goovaerts P. Geostatistics for natural resources evaluation. New York, Oxford: Oxford University Press; 1997. p. 483.

Hauge R, Holden L, Syversveen AR. Well conditioning in object models. Math Geol. 2007;39(4):383–98.

Holden L, Hauge R, Skare Ø, Skorstad A. Modeling of fluvial reservoirs with object models. Math Geol. 1998;30(5):473–96.

Honarkhah M, Caers J. Direct pattern-based simulation of non-stationary geostatistical models. Math Geosci. 2012;44:651–72.

Journel AG, Zhang T. The necessity of a multiple-point prior model. Math Geol. 2006;38(5):591–610.

Laloy E, Herault R, Jacques D, Linde N. Training-image based geostatistical inversion using a spatial generative adversarial neural network. Water Resour Res. 2017;54:381–406.

Li H, Li G, Lin L, Yu Y. Context-aware semantic inpainting. 2017. arXiv preprint arXiv:1712.07778.

Mosser L, Dubrule O, Blunt MJ. Reconstruction of three-dimensional porous media using generative adversarial neural networks. Phys Rev E. 2017;96(4):043309.

Pathak D, Krahenbuhl P, Donahue J, Darrell T, Efros AA. Context encoders: feature learning by inpainting. In: Proceedings of the IEEE conference on computer vision and pattern recognition; 2016. p. 2536–44.

Radford A, Metz L, Chintala S. Unsupervised representation learning with deep convolutional generative adversarial networks. 2015. arXiv preprint arXiv:1511.06434.

Skorstad A, Hauge R, Holden L. Well conditioning in a fluvial reservoir model. Math Geol. 1999;31(7):857–72.

Strebelle S. Conditional simulation of complex geological structures using multiple-point statistics. Math Geol. 2002;34(1):1–21.

Yeh R, Chen C, Lim TY, Hasegawa-Johnson M, Do MN. Semantic image inpainting with perceptual and contextual losses. 2016. arXiv preprint arXiv:1607.07539.

Zhang T, Switzer P, Journel A. Filter-based classification of training image patterns for spatial simulation. Math Geol. 2006;38(1):63–80.

Additional information

Edited by Jie Hao

Appendix

The structure of the 3D GANs that generated the results in this paper is shown in Table 1. This structure is similar to the deep convolutional GANs proposed by Radford et al. (2015), but revised and extended to 3D for conditional facies modeling purposes. The generator and the discriminator are separate deep convolutional networks. The nonlinearities in the discriminator are LeakyReLU (0.2), except for the output layer, which is a sigmoid. The nonlinearities in the generator are ReLU, except for the output layer, which is a tanh function. The GANs were trained for 500 epochs with Adam, using a learning rate of 1e−4, β1 = 0.5 and β2 = 0.5.
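For readers less familiar with these nonlinearities, the sketch below writes them out as scalar functions. It is only meant to show their ranges and is not part of the network definition in Table 1; the input scores are arbitrary.

```python
import math


def leaky_relu(x, slope=0.2):
    """LeakyReLU with the negative slope of 0.2 quoted for the discriminator."""
    return x if x >= 0 else slope * x


def relu(x):
    """ReLU, used in the hidden layers of the generator."""
    return max(0.0, x)


def sigmoid(x):
    """Discriminator output: maps a score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


# tanh (math.tanh) bounds the generator output to (-1, 1).
scores = [-2.0, 0.0, 3.0]
hidden_d = [leaky_relu(s) for s in scores]
hidden_g = [relu(s) for s in scores]
prob_real = [sigmoid(s) for s in scores]
voxels = [math.tanh(s) for s in scores]
```

The sigmoid output can be read directly as the probability that an input is real, while the bounded tanh output gives the generator a fixed range into which facies codes can be scaled.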

When optimizing the z-vector in the latent space to honor the conditioning data, we use Adam with a learning rate of 1e−2 and default β parameters. We use λ = 1000 and run the optimization for 1500 iterations.
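The optimizer settings can be illustrated with a plain implementation of the Adam update rule. The objective below is a toy quadratic standing in for the total loss, and the target vector is invented; only the learning rate of 1e−2, the default β values (0.9 and 0.999) and the 1500 iterations follow the text.

```python
import math


def adam_minimize(grad_fn, x, lr, beta1=0.9, beta2=0.999, eps=1e-8, steps=1500):
    """Plain Adam update rule on a list of floats; returns the final point."""
    m = [0.0] * len(x)
    v = [0.0] * len(x)
    for t in range(1, steps + 1):
        g = grad_fn(x)
        # Exponential moving averages of the gradient and its square.
        m = [beta1 * mi + (1 - beta1) * gi for mi, gi in zip(m, g)]
        v = [beta2 * vi + (1 - beta2) * gi * gi for vi, gi in zip(v, g)]
        # Bias correction for the zero initialization of m and v.
        m_hat = [mi / (1 - beta1 ** t) for mi in m]
        v_hat = [vi / (1 - beta2 ** t) for vi in v]
        x = [xi - lr * mh / (math.sqrt(vh) + eps)
             for xi, mh, vh in zip(x, m_hat, v_hat)]
    return x


# Toy objective standing in for the total loss: squared distance to a target z.
target = [0.5, -1.0, 2.0]


def toy_gradient(z):
    return [2.0 * (zi - ti) for zi, ti in zip(z, target)]


# Settings quoted for the latent optimization: lr = 1e-2, default betas, 1500 steps.
z_opt = adam_minimize(toy_gradient, [0.0, 0.0, 0.0], lr=1e-2)
```

The same function with `lr=1e-4`, `beta1=0.5` and `beta2=0.5` would correspond to the settings quoted for training the networks, although on this toy objective it would need far more steps to converge.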

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Zhang, TF., Tilke, P., Dupont, E. et al. Generating geologically realistic 3D reservoir facies models using deep learning of sedimentary architecture with generative adversarial networks. Pet. Sci. 16, 541–549 (2019). https://doi.org/10.1007/s12182-019-0328-4

DOI: https://doi.org/10.1007/s12182-019-0328-4