Abstract

Introduction

Clinical decision-making is a daily practice conducted by medical practitioners, yet the processes surrounding it are poorly understood. The influence of ‘shortcuts’ in clinical decision-making, known as heuristics, remains unknown. This paper explores heuristics and the valuable role they play in medical practice, as well as offering potential solutions to minimize the risk of incorrect decision-making.

Method

The quasi-systematic review was conducted according to modified PRISMA guidelines using the electronic databases Medline, Embase and CINAHL. All English-language papers addressing cognitive bias and the medical profession were included. Papers with evidence from other healthcare professions were included if medical practitioners were in the study sample.

Discussion

The most common decisional shortcuts used in medicine are the Availability, Anchoring and Confirmatory heuristics. The Representativeness, Overconfidence and Bandwagon effects are also prevalent in medical practice. Heuristics are mostly positive but can also result in negative consequences if not utilized appropriately. Factors such as personality and level of experience may influence a doctor’s use of heuristics. Heuristics are influenced by the context and conditions in which they are performed. Mitigating strategies such as reflective practice and technology may reduce the likelihood of inappropriate use.

Conclusion

It remains unknown whether heuristics are primarily positive or negative for clinical decision-making. Future efforts should assess heuristics in real time, and controlled trials should be used to assess the potential of mitigating factors to reduce the negative impact of heuristics and to optimize their efficiency for positive outcomes.


Introduction

Medicine is a science of uncertainty and an art of probability.—William Osler

Doctors make decisions daily that affect the lives and livelihoods of others. Decision-making is either fast, intuitive, heuristic-like and influenced by our cognitive biases, or analytical, thoroughly assessed and well-reasoned [1]. Heuristics are decisional shortcuts, influenced by our own cognitive biases, that practitioners use to work efficiently. The potential risk of error from their use is poorly understood. The Institute of Medicine (IOM) report To Err Is Human, published in 2000, estimated 98,000 preventable deaths per year in the USA [2]. In 2008, the cost of medical errors in the USA, many of which resulted from poor decision-making, was estimated at $19.5 billion [3]. When quality-adjusted life years (QALYs) are considered, this cost rises to as much as $1 trillion [4]. Understanding the important role heuristics play in clinical decision-making is therefore likely to have major economic and personal ramifications, and warrants further investigation.

Objectives

The objectives of this narrative review are as follows:

- To discuss clinical decision-making in medical practice
- To highlight the most commonly used heuristics in medical practice
- To explore whether and how heuristics affect medical practice
- To highlight potential practices and interventions to remediate some of the possible negative implications of heuristics

Method

The quasi-systematic review was conducted according to modified PRISMA guidelines using the electronic databases Medline, Embase and CINAHL.

Inclusion criteria

All English-language papers addressing cognitive bias and the medical profession were included. Papers with evidence from other healthcare professions were included if medical practitioners were in the study sample.

Exclusion criteria

Papers were excluded if they did not discuss cognitive biases or did not focus on the medical profession.

Search strategy

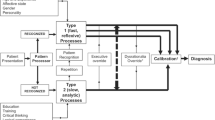

The search terms used were ‘heuristic’, ‘cognitive bias’, ‘tendency’, ‘preconception’, ‘rule of thumb’, ‘problem solving’, ‘mental processes’, ‘attentional bias’, ‘bias’, ‘metacognition’, ‘attitude of health personnel’, ‘control*’, ‘behaviour control’, ‘decision making’, ‘clinical decision making’, ‘decision theory’, ‘decision support techniques’, ‘doctor’, ‘medical staff, hospital’ and ‘internship and residency’. The screening process is shown in the modified PRISMA flow diagram in Fig. 1. Reference lists, bibliographies and journal contents pages were also hand-searched.

Discussion

Clinical decision-making

Clinical decision-making typically involves five stages:

- collection and analysis of relevant information
- judgement
- decision-making
- decision-acting
- post hoc evaluation of the outcome

Context, such as the speed at which decisions must be made and the weight of their outcomes, plays an important role, as it influences which decision-making processes are activated. Intuitive decision-making is influenced by personal cognitive biases shaped through interaction with society, and fast decisions activate regions such as the ventromedial prefrontal cortex. Reflective decision-making is analytical, logical and thoughtful, and engages the right inferior prefrontal cortex [5].

Decisions to protect patient safety are made at every level, from practitioner to policymaker. Frameworks have been established to reflect this continuum, ranging from prescriptive models at a system level to normative and descriptive models at a practitioner level [6]. The resulting guidelines and protocols reflect both rational scientific and reflective humanistic decision-making processes, and are important for reducing the risk of error associated with intuitive decision-making.

Heuristics

In medicine, the simple strategies used to assist decision-making are determined by an individual’s cognitive style and environment [7, 8]. A common framework for describing the relationship between intuitive thinking and reflective analytical reasoning is that of a ‘dual system’ of thinking [1]. In intuitive thinking, the rules of rationality described elsewhere [9] are not followed when making fast decisions. Instead, personal experience and perception, known as ‘cognitive biases’, dominate the direction of decision-making and create shortcuts known as ‘heuristics’.

Heuristics are best used for simple tasks that are high in volume and low in impact, where they reduce the cognitive load associated with analytical thinking and guide decisions in the way the brain perceives to be most efficient and economical [10]. Analytical thought processes should instead be applied to complex, high-impact decisions that require evidence-based reasoning.

While in many cases these ‘fast and frugal rules’ may lead to correct choices amongst physicians [11], they may also distort reasoning, increasing the risk of incorrect judgements and preventable medical error [1, 12,13,14,15]. The use of heuristics is influenced by several factors, including the practitioner’s social situation, the weight of the decision and its potential outcomes, and whether the practitioner has used the heuristic successfully before [16]. It has been theorized that medical error occurs when intuitive thinking is overused in inappropriate contexts, perhaps because of an unmanageable patient caseload or personal causes such as sleep deprivation, so that intuitive decision-making is used in situations where analytical thinking should be employed [15, 17,18,19,20,21]. Strategies such as illness scripts, pattern matching and chunking of data are commonly used to support heuristics in reducing cognitive overload [22]. While beneficial for efficiency in patient management, heuristics do increase the likelihood of misjudgement. The most common heuristics in the medical profession are explained below.

Heuristics used in medicine

It is difficult to arrive at an accurate figure for the true prevalence of heuristic use in the medical profession: reported figures range from 7.8 to 75.6% for the Availability heuristic and from 5.9 to 87.8% for the Anchoring heuristic, the two most comprehensively studied heuristics [23]. A comprehensive list of heuristics, or ‘cognitive dispositions to respond’ (CDRs), that may lead to diagnostic error is described elsewhere [24].

Availability heuristic

A Senior House Officer (SHO) on call has admitted three patients overnight, each complaining of the cardinal signs of an ischaemic attack. A fourth patient is admitted: an overweight man in his 60s with an extensive smoking history, who presents with blurred vision and is feeling faint. The SHO makes a preliminary diagnosis of transient ischaemic attack, unaware that the patient has been chronically dehydrated since trying to increase his physical activity levels. The ‘Availability heuristic’ [25,26,27] is the cognitive bias of judging the likelihood of an event based on previous experience of similar situations, which risks distorted hypothesis generation.

Confirmation heuristic

A 47-year-old patient with rheumatoid arthritis has been complaining of a recent increase in unrelenting back pain. Answering only the doctor’s questions about pain, the patient is sent home with medication for pain management following a diagnosis of arthritis-related changes. The patient has recently noticed a drastic loss of weight but attributed this to cutting sugar out of their tea. The ‘Confirmation heuristic’ [26, 27] describes a ‘tunnel-visioned’ search for data that support the initial diagnosis while actively ignoring data that would refute the initial hypothesis. Closely aligned with the Anchoring heuristic, it increases the likelihood of premature diagnostic closure [24].

Representativeness heuristic

A young woman presents to the neurology clinic with muscle weakness and sensory changes in one of her upper limbs. She also reports recent onset of fatigue and, when asked, says she has had a few near-falls. The intern suspects multiple sclerosis, given its prevalence in this age group and its variable symptom presentation. She is later diagnosed with a rare condition, multifocal motor neuropathy. The ‘Representativeness heuristic’ is the tendency of practitioners to apply a cognitive template when diagnosing conditions, overemphasizing aspects of their assessment and diagnosis that support their hypothesis while missing atypical variants in the patient [28,29,30]. Closely associated with the prevalent relative-risk bias [12], it leads to misclassification through overreliance on the prevalence of a condition.

Anchoring heuristic

It is 7 a.m., and the orthopaedic registrar is reviewing the patients who had a total hip replacement yesterday. They notice that one of the elderly patients is quite short of breath on talking. Their assessment is immediately ‘anchored’ on this salient feature: they are convinced it is a pulmonary embolism, unaware that the patient actually has a history of chronic obstructive pulmonary disease. ‘Anchoring’ [26, 27] occurs when a diagnosis is biased by a specific piece of information that the physician uses to ‘anchor’ the diagnosis, without giving equal weight to other presenting signs and symptoms. An anchor can serve as a set reference point, which is useful in quick decision-making, but can impair judgement when it is no longer pertinent to the situation [31].

Bandwagon heuristic

The oncology multidisciplinary team meeting reveals a disagreement over the management of a patient with a grade III retroperitoneal sarcoma. Personalities in the room clash over how best to manage the patient going forward: whether to proceed with palliative care or to attempt surgery where possible. Some team members feel the decision is not in the patient’s best interest but ultimately go with their senior’s preferred option for fear of repercussions. The ‘Bandwagon heuristic’ [26, 32] is the tendency to side with the majority in decision-making for fear of standing out. It may be closely related to, and result in, conservative default decision-making for patient care [33].

Overconfidence heuristic

A general practitioner is assessing a patient who has been complaining of headaches and dizziness for the past few weeks. Having completed extensive neurology training and a postgraduate degree in neurological medicine, the GP is convinced the patient has migraine. The patient thinks they could just be ‘sick’, but the GP ignores them. Later, on reflection, the GP considers alternative aetiologies such as sinusitis or otitis media. The ‘Overconfidence heuristic’ [34, 35] occurs when a physician is too sure of their own conclusion to entertain other possible differential diagnoses. It may result in decisions being formulated through opinion or ‘hunch’ rather than systematic approaches [24]. It occurs in both experienced and inexperienced doctors; in inexperienced doctors it is known as the Dunning–Kruger effect. Closely linked to hindsight bias, it reflects the physician’s inability to reflect accurately on an erroneous event, which may compromise learning from mistakes.

Omission heuristic

An elderly patient complaining of long-term nausea and vomiting has cholecystitis confirmed on investigation. After discussion, the general surgeon advises that a laparoscopic cholecystectomy would not be suitable given his age and that his nausea should instead be managed conservatively. This preference for conservative management may reflect what is known as the ‘Omission heuristic’ [36], which can lead to delayed treatment for some patients and an inadequate response to clinical symptoms.

Aggregate heuristic

An experienced surgeon who has recently moved to a new healthcare system is on call when a patient presents with severe bruising and a diagnosis of haemophilia. The patient will not consent to a blood transfusion under any circumstances because they are a Jehovah’s Witness. In the surgeon’s own experience, where paternalistic medicine dominated, a surgeon should act in the patient’s best interest, so they wait until the patient becomes unconscious and decide to operate. Croskerry [24] defines the ‘Aggregate heuristic’ as occurring ‘when physicians believe that aggregated data, such as those used to develop practice guidelines, do not apply to individual patients they are treating’; in this case, the principle being disregarded is the patient’s right to bodily autonomy. It is an important heuristic to challenge as healthcare practice moves towards an increasingly regulated, patient-centred and standardized healthcare system.

Impact of heuristics on medicine and patient outcomes

Heuristics can save lives and are important in healthcare because they allow quick decisions to be made in time-constrained situations. However, they can also result in patient harm and error if not applied appropriately. Most research on heuristics has examined their role in clinical diagnosis (60%), with less focus on treatment or management (35%) and prognosis (10%) [23]. They have been explored most in general practice, obstetrics and gynaecology, and oncology, in descending order of frequency [12], with a dearth of research in acute settings such as emergency medicine and surgery. Given the difficulty of evaluating heuristics’ real-life performance and their effects on patient outcomes, most research has been conducted through surveys and simulation.

Positive impact

Clinical decision aids are effective, systematic means of diagnosing common conditions. In this way, more cases than not benefit from the use of heuristic models. Heuristics have been found to be particularly useful when used by experienced doctors to provide efficient care to patients [35, 37, 38]. More experienced practitioners are less likely to take risks [39] and use heuristics more effectively, which is important given that they are the ultimate decision-makers. This may mitigate the risk of avoidable error [40]. Nonetheless, they are less effective when managing analytical decision-making scenarios [41], which may result in inappropriate use of heuristic-style decision-making in cases where more thorough analytical decision-making is required. Alternatively, this may be due to the inability of younger residents to use heuristic strategies effectively owing to a lack of sufficient knowledge.

Negative impact

Cognitive firewalls such as standardized approaches have improved patient outcomes [42, 43], but heuristics are still required in the diagnostic process to capture the honest ‘flesh and blood’ decision-making processes [44]. When influenced by cognitive bias, heuristics may be ineffective owing to inaccurate data collection or synthesis. Negative impacts of heuristic use have been reported [12, 23], including errors when using the Anchoring [26], Availability [26] and Representativeness heuristics [28,29,30]. Experience also plays a role: when information is presented in a non-‘script’ format, experts’ performance is more likely to be negatively affected [45].

Practices and interventions to reduce negative impacts of heuristics

Skill development and reflective practice

Reflective practice aims to challenge biases that place practitioners at risk of incorrect decision-making. It involves building the capacity to reflect critically on one’s own decisions [46] and broadens the practitioner’s knowledge base [47]. It encourages practitioners to consider psychosocial elements more fully [48] and challenges overconfidence by promoting shared decision-making. It is not an analytical exercise but rather a reflection on whether the favoured decision is likely to result in a successful outcome given the context at hand [49]. Effective strategies associated with reflective practice to reduce the risk of diagnostic inaccuracy include the following:

- increasing expertise
- developing clinical reasoning
- seeking the assistance of peers and available decision-making tools

There are five sets of behaviours, attitudes and reasoning processes associated with reflective practice required of medical practitioners to assist in responding to complex conditions in the clinical setting [46]. These are as follows:

- deliberate induction
- deliberate deduction
- a willingness to test
- an attitude to openness
- metareasoning

The skills required and example activities highlighted by the National Health Service (NHS) in the UK [50] match Mamede and Schmidt’s [46] behaviours and are described elsewhere.

Education and simulation

Training in heuristics may enhance healthcare delivery [51]. If clinicians are aware of their own biases, they have a greater likelihood of avoiding them by implementing strategies [24]. These techniques include the following:

- considering alternatives
- tailored training
- simulation for a ‘cognitive walkthrough’
- cognitive forcing
- enhanced feedback

Education in reasoning through complex clinical scenarios is also expected to improve an individual’s performance [52]. For specific medical conditions, a minimum standardized approach to patient management, encompassing all differential diagnoses, may counteract the potential negative effects of heuristics. Simulated environments that reproduce the many factors involved in real-life medical practice, such as resource management, interpersonal relations and time restrictions, with particular focus on high-acuity settings [41], may help practitioners develop awareness of their diagnostic biases in a controlled environment [53, 54].

Traditional behaviourist approaches to teaching, which focused on ‘service saturation’ of trainees and treated scholarship and clinical skill as the pillars of performance [55], do not prepare prospective doctors for the realities of medical practice. Cognitive teaching focuses on a series of skills, such as communication and teamwork, and on students’ ability to self-monitor by involving them in the evaluation of decision analysis [56]. Some training bodies, including the Royal College of Surgeons in Ireland, have reported positive experiences with this approach [57]. Medical schools should also place greater emphasis on challenging preconceived socioeconomic biases, as research has shown that early-year medical students perceive patients differently depending on their socioeconomic class [58].

Technology

Technology, through the use of digital clinical decision aids, may be useful as a debiasing technique [24] and for decreasing diagnostic errors [59]. A key requirement for effective decision-making is finding the balance between holistic patient-centred care and the use of technology to provide objective assessments of clinical scenarios. For example, an objective assessment that intubation would be most appropriate for an elderly palliative care patient with respiratory compromise may in fact be outweighed by an understanding of alternative methods of maintaining the airway, such as CPAP or BiPAP, and by the wishes of the patient and family members to minimize physical distress.

Shared decision-making

‘Shared decision-making’ involves informing patients of the potential risks and benefits of medical decisions and seeking their input in deciding what direction is most suitable to their needs. Shared decision-making approaches have been shown to improve multidisciplinary team functionality and improve patient-centred outcomes [60,61,62].

Cultural change and open disclosure

A doctor who is more open to reflective practice in an undiagnosed clinical case is less likely to be burdened by feelings of distress, as they view uncertainty as an important aspect of the diagnostic process rather than a barrier [63]. Training in human factors may also help to overcome barriers to effective decision-making such as informational cascades, social shirking and reputational cascades [64]. Error disclosure is an important skill to learn in order to make practitioners fully aware of the impact of incorrect decision-making. A commitment from all stakeholders, including teaching institutions and policymakers, is needed for its success [65, 66]. In the workplace, interventions targeting modifiable factors should also be implemented to reduce the cognitive overload associated with sleep deprivation and fatigue [67,68,69].

Areas for future research

Future research is needed to explore other potentially modifiable factors affecting clinical decision-making. Personality and its associated traits, such as risk-taking, regret, tolerance of ambiguity and even sociocultural biases [23, 70,71,73], may also influence doctors’ judgements, in some cases resulting in an undue self-perception of capability and personal control amongst physicians [74]. These factors should be explored in real-life clinical settings, given the known incongruence between surveys and real-life practice [75].

Conclusion

Due to the capricious nature of medical practice, it remains unknown whether, on balance, regular use of heuristics is beneficial or detrimental to medical and patient outcomes. What is known is that heuristics are prevalent in practice and that they negatively affect patient outcomes when used incorrectly. It is important to apply context to clinical decision-making, being cognisant of the tasks, specialties and levels of training in which heuristics most commonly occur. By understanding this, both policymakers and medical professionals can begin to optimize conditions for using such clinical decision-making processes to achieve positive outcomes.

References

Tversky A, Kahneman D (1974) Judgment under uncertainty: heuristics and biases. Science 185(4157):1124–1131

Kohn LT, Corrigan J, Donaldson MS (2000) To err is human: building a safer health system, vol 6. National Academy Press, Washington, DC

Andel C, Davidow SL, Hollander M, Moreno DA (2012) The economics of health care quality and medical errors. J Health Care Finance 39(1):39

Shreve J, Van Den Bos J, Gray T, Halford M, Rustagi K, Ziemkiewicz E (2010) The economic measurement of medical errors. Sponsored by Society of Actuaries’ Health Section. Milliman Inc. Available at: http://www.tehandassociates.com/wp-content/uploads/2010/08/researchecon-measurement.pdf. Accessed 25 Apr 2020

Goel V, Dolan RJ (2003) Explaining modulation of reasoning by belief. Cognition 87(1):B11–B22

Bell DE, Raiffa H, Tversky A (1988) Descriptive, normative, and prescriptive interactions in decision making. Decis Mak 1:9–32

Simon HA (1990) Bounded rationality. In: Utility and probability. Palgrave Macmillan, London, pp 15–18

Rieskamp J, Otto PE (2006) SSL: a theory of how people learn to select strategies. J Exp Psychol Gen 135(2):207

Keys DJ, Schwartz B (2007) “Leaky” rationality: how research on behavioral decision making challenges normative standards of rationality. Perspect Psychol Sci 2(2):162–180

Gigerenzer G (2007) Gut feelings: the intelligence of the unconscious, Penguin Books, New York

Wegwarth O, Schwartz LM, Woloshin S, Gaissmaier W, Gigerenzer G (2012) Do physicians understand cancer screening statistics? A national survey of primary care physicians in the United States. Ann Intern Med 156(5):340–349

Blumenthal-Barby JS, Krieger H (2015) Cognitive biases and heuristics in medical decision making: a critical review using a systematic search strategy. Med Decis Mak 35(4):539–557

Stiegler MP, Tung A (2014) Cognitive processes in anesthesiology decision making. Anesthesiology 120(1):204–217

Croskerry P (2002) Achieving quality in clinical decision making: cognitive strategies and detection of bias. Acad Emerg Med 9(11):1184–1204

Mamede S, Van Gog T, Van Den Berge K, Van Saase JL, Schmidt HG (2014) Why do doctors make mistakes? A study of the role of salient distracting clinical features. Acad Med 89(1):114–120

Marewski JN, Gigerenzer G (2012) Heuristic decision making in medicine. Dialogues Clin Neurosci 14(1):77

Van den Berge K, Mamede S (2013) Cognitive diagnostic error in internal medicine. Eur J Int Med 24(6):525–529

Ely JW, Graber ML, Croskerry P (2011) Checklists to reduce diagnostic errors. Acad Med 86(3):307–313

Elstein AS (1999) Heuristics and biases: selected errors in clinical reasoning. Acad Med 74(7):791–794

Dawson NV (1993) Physician judgment in clinical settings: methodological influences and cognitive performance. Clin Chem 39(7):1468–1478

Dawson NV, Arkes HR (1987) Systematic errors in medical decision making. J Gen Intern Med 2(3):183–187

Sweller J, Chandler P (1991) Evidence for cognitive load theory. Cogn Instr 8(4):351–362

Saposnik G, Redelmeier D, Ruff CC, Tobler PN (2016) Cognitive biases associated with medical decisions: a systematic review. BMC Med Inform Decis Making 16(1):138

Croskerry P (2003) The importance of cognitive errors in diagnosis and strategies to minimize them. Acad Med 78(8):776

Mamede S, van Gog T, van den Berge K, Rikers RM, van Saase JL, van Guldener C, Schmidt HG (2010) Effect of availability bias and reflective reasoning on diagnostic accuracy among internal medicine residents. JAMA 304(11):1198–1203

Ogdie AR, Reilly JB, Pang MWG, Keddem MS, Barg FK, Von Feldt JM, Myers JS (2012) Seen through their eyes: residents’ reflections on the cognitive and contextual components of diagnostic errors in medicine. Acad Med 87(10):1361

Crowley RS, Legowski E, Medvedeva O, Reitmeyer K, Tseytlin E, Castine M, Jukic D, Mello-Thoms C (2013) Automated detection of heuristics and biases among pathologists in a computer-based system. Adv Health Sci Educ 18(3):343–363

Perneger TV, Agoritsas T (2011) Doctors and patients’ susceptibility to framing bias: a randomized trial. J Gen Intern Med 26(12):1411–1417

Yee LM, Liu LY, Grobman WA (2014) The relationship between obstetricians’ cognitive and affective traits and their patients’ delivery outcomes. Am J Obstetr Gynaecol 211(6):692–6e1

Sorum PC, Shim J, Chasseigne G, Bonnin-Scaon S, Cogneau J, Mullet E (2003) Why do primary care physicians in the United States and France order prostate-specific antigen tests for asymptomatic patients? Med Decis Mak 23(4):301–313

Seda MC, U.S.N (2008) Look out doctor, you may be getting framed: heuristics in medical decision-making. Mil Med 173(9):2

Colman A (2003) Group think: bandwagon effect. In: Oxford Dictionary of Psychology. Oxford University Press, New York, p 77

Kahneman D, Tversky A (2013) Choices, values, and frames. In: Handbook of the Fundamentals of Financial Decision Making: Part I, pp 269–278

Malterud K (2002) Reflexivity and metapositions: strategies for appraisal of clinical evidence. J Eval Clin Pract 8(2):121–126

Kalf AJ, Spruijt-Metz D (1996) Variation in diagnoses: influence of specialists’ training on selecting and ranking relevant information in geriatric case vignettes. Soc Sci Med 42(5):705–712

Sugarman DB (1986) Active versus passive euthanasia: an attributional analysis 1. J Appl Soc Psychol 16(1):60–76

Kempainen RR, Migeon MB, Wolf FM (2003) Understanding our mistakes: a primer on errors in clinical reasoning. Med Teach 25(2):177

McDonald CJ (1996) Medical heuristics: the silent adjudicators of clinical practice. Ann Intern Med 124(1_Part_1):56–62

Nakata Y, Okuno-Fujiwara M, Goto T, Morita S (2000) Risk attitudes of anesthesiologists and surgeons in clinical decision making with expected years of life. J Clin Anesth 12(2):146–150

Hall JC, Ellis C, Hamdorf J (2003) Surgeons and cognitive processes. Br J Surg 90(1):10–16

Murray DJ, Freeman BD, Boulet JR, Woodhouse J, Fehr JJ, Klingensmith ME (2015) Decision making in trauma settings: simulation to improve diagnostic skills. Simul Healthc 10(3):139–145

Collicott PE, Hughes I (1980) Training in advanced trauma life support. JAMA 243(11):1156–1159

Kern KB, Hilwig RW, Berg RA, Sanders AB, Ewy GA (2002) Importance of continuous chest compressions during cardiopulmonary resuscitation: improved outcome during a simulated single lay-rescuer scenario. Circulation 105(5):645–649

Reason J (1990) Human error. Cambridge University Press, Cambridge

Custers EJ (2015) Thirty years of illness scripts: theoretical origins and practical applications. Med Teach 37(5):457–462

Mamede S, Schmidt HG (2004) The structure of reflective practice in medicine. Med Educ 38(12):1302–1308

Schön DA (2017) The reflective practitioner: How professionals think in action. Routledge

Engel GL (1977) The need for a new medical model: a challenge for biomedicine. Science 196(4286):129–136

Klein G (2008) Naturalistic decision making. Hum Factors 50(3):456–460

Effectivepractitioner.nes.scot.nhs.uk (n.d.) Clinical Decision Making. [online] Available at: http://www.effectivepractitioner.nes.scot.nhs.uk/media/254840/clinical%20decision%20making.pdf Accessed 19 Mar 2019

Hyman DJ, Pavlik VN, Greisinger AJ, Chan W, Bayona J, Mansyur C, Simms V, Pool J (2012) Effect of a physician uncertainty reduction intervention on blood pressure in uncontrolled hypertensives—A cluster randomized trial. J Gen Intern Med 27(4):413–419

Epstein RM (1999) Mindful practice. JAMA 282(9):833–839

Graber ML (2013) The incidence of diagnostic error in medicine. BMJ Qual Saf. https://doi.org/10.1136/bmjqs-2012-001615

Newman-Toker DE, Pronovost PJ (2009) Diagnostic errors—the next frontier for patient safety. JAMA 301(10):1060–1062

Lynch TG, Woelfl NN, Steele DJ, Hanssen CS (1998) Learning style influences student examination performance. Am J Surg 176(1):62–66

Birkmeyer JD, Birkmeyer NOC (1996) Decision analysis in surgery. Surgery 120(1):7–15

Bhatt NR, Doherty EM, Mansour E, Traynor O, Ridgway PF (2016) Impact of a clinical decision making module on the attitudes and perceptions of surgical trainees. ANZ J Surg 86(9):660–664

Woo JK, Ghorayeb SH, Lee CK, Sangha H, Richter S (2004) Effect of patient socioeconomic status on perceptions of first- and second-year medical students. Can Med Assoc J 170(13):1915–1919

Ehteshami A, Rezaei P, Tavakoli N, Kasaei M (2013) The role of health information technology in reducing preventable medical errors and improving patient safety. Int J Health Syst Disaster Manag 1(4):195

Boss EF, Mehta N, Nagarajan N, Links A, Benke JR, Berger Z, Espinel A, Meier J, Lipstein EA (2016) Shared decision making and choice for elective surgical care: a systematic review. Otolaryngol Head Neck Surg 154(3):405–420

Légaré F, Stacey D, Turcotte S, Cossi MJ, Kryworuchko J, Graham ID, Lyddiatt A, Politi MC, Thomson R, Elwyn G, Donner‐Banzhoff N (2014) Interventions for improving the adoption of shared decision making by healthcare professionals. Cochrane Database Syst Rev 9

Stiggelbout AM, Van der Weijden T, Wit MPTD, Frosch D, Légaré F, Montori VM, Trevena L, Elwyn G (2012) Shared decision making: really putting patients at the centre of healthcare. BMJ 344:e256

Mamede S, Schmidt HG, Rikers R (2007) Diagnostic errors and reflective practice in medicine. J Eval Clin Pract 13(1):138–145

Stiegler MP, Gaba DM (2015) Decision-making and cognitive strategies. Simul Healthc 10(3):133–138

Smith TR, Habib A, Rosenow JM, Nahed BV, Babu MA, Cybulski G, Fessler R, Batjer HH, Heary RF (2014) Defensive medicine in neurosurgery: does state-level liability risk matter? Neurosurgery 76(2):105–114

Kachalia A, Mello MM (2013) Defensive medicine—legally necessary but ethically wrong?: Inpatient stress testing for chest pain in low-risk patients. JAMA Intern Med 173(12):1056–1057

Amirian I (2014) The impact of sleep deprivation on surgeons’ performance during night shifts. Dan Med J 61(9):4912

Philibert I (2005) Sleep loss and performance in residents and nonphysicians: a meta-analytic examination. Sleep 28(11):1392–1402

Wesnes KA, Walker MB, Walker LG, Heys SD, White L, Warren R, Eremin O (1997) Cognitive performance and mood after a weekend on call in a surgical unit. Br J Surg 84(4):493–495

Hall WJ, Chapman MV, Lee KM, Merino YM, Thomas TW, Payne BK, Eng E, Day SH, Coyne-Beasley T (2015) Implicit racial/ethnic bias among health care professionals and its influence on heal

Merrill JM, Camacho Z, Laux LF, Lorimor R, Thornby JI, Vallbona C (1994) Uncertainties and ambiguities: measuring how medical students cope. Med Educ 28(4):316–322

Hozo I, Djulbegovic B (2008) When is diagnostic testing inappropriate or irrational? Acceptable regret approach. Med Decis Making 28(4):540–553

Mandelblatt JS, Hadley J, Kerner JF, Schulman KA, Gold K, Dunmore‐Griffith J, Edge S, Guadagnoli E, Lynch JJ, Meropol NJ, Weeks JC (2000) Patterns of breast carcinoma treatment in older women: patient preference and clinical and physician influences. Cancer 89(3):561–573

Hall KH (2002) Reviewing intuitive decision‐making and uncertainty: the implications for medical education. Med Educ 36(3):216–224

Patel VL, Kaufman DR, Arocha JF (2002) Emerging paradigms of cognition in medical decision-making. J Biomed Inform 35(1):52–75

Author information

Contributions

DFW conceived and designed the analysis, collected the data, contributed to data analysis and wrote the paper.

KCC contributed to data analysis and tools, assisted in editing the paper and contributed to clinical vignettes.

PFR assisted in conceiving and designing the analysis, assisted in editing the paper and contributed to clinical vignettes.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

Not required

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

What this paper adds

This paper provides an overview of some of the heuristics most commonly used in medical practice. It discusses how best to use them to maximize positive patient outcomes and highlights potential interventions to mitigate the risk of negative impacts when using them. This ‘toolkit’ should help raise awareness of the clinical decision-making models used by medical practitioners.

About this article

Cite this article

Whelehan, D.F., Conlon, K.C. & Ridgway, P.F. Medicine and heuristics: cognitive biases and medical decision-making. Ir J Med Sci 189, 1477–1484 (2020). https://doi.org/10.1007/s11845-020-02235-1

DOI: https://doi.org/10.1007/s11845-020-02235-1