Abstract

Purpose

Surgical processes are generally studied only by identifying differences between populations, such as participants or levels of expertise. However, similarities within a population are also important for understanding the process. We therefore propose to study both aspects.

Methods

In this article, we show how similarities in process workflow within a population can be identified as sequential surgical signatures. To this end, we propose a pattern mining approach to identify these signatures.

Validation

We validated our method with a data set composed of seventeen micro-surgical suturing tasks performed by four participants with two levels of expertise.

Results

We identified sequential surgical signatures specific to individual participants, as well as signatures shared between participants with and without the same level of expertise. These signatures also made it possible to perfectly determine the level of expertise of the participant who performed a new micro-surgical suturing task. Determining who the participant is proved more difficult: the method identified the participant correctly in only 64% of cases.

Conclusion

We introduce, for the first time, the concept of sequential surgical signature. This new concept has the potential to improve the understanding of surgical procedures and to provide useful knowledge for designing future computer-assisted surgery (CAS) systems.


Introduction

We all have habits shaped by our past. For example, some people take a shower when they wake up, while others prefer to shower before going to sleep. Although every surgical procedure is unique because of the patient’s anatomical characteristics, surgery is not exempt from this rule, owing to the habits and experience of the surgical team. The surgical process modeling methodology, introduced around 15 years ago [1, 2], can be used to study these “habits.” A surgical process model describes a surgical procedure at different levels of granularity [2]. For example, a surgical intervention can be divided into successive phases corresponding to its main periods. A phase is composed of one or more steps. A step is a sequence of activities used to achieve a surgical objective. An activity is a physical action performed by the surgeon. Each activity is broken down into components, including the action verb, the target involved in the action (usually an anatomical structure) and the surgical instrument used to perform the action. Lower granularity levels, such as surgemes and dexemes [3, 4], are closer to kinematic data. A surgeme is defined as a surgical motion with explicit semantic meaning and is composed of dexemes. A dexeme is a numerical representation of the performed physical motion. Surgical process models (SPMs) have been developed for three main purposes: (1) formalizing surgical knowledge, (2) evaluating surgical skills and systems and (3) assisting the surgeon during surgical interventions.

An SPM can be acquired manually from observations [5] or automatically, thanks to recent advances in the automatic recognition of phases [6, 7], steps [8, 9] and activities [10, 11]. SPMs have recently been used to identify different surgical behaviors, such as those depending on the surgical site [12, 13], surgical skills [14], the type of procedure [15] and the level of surgical expertise [12, 13, 16].

In these studies, the analysis generally highlights differences between two or more populations using one or more features, such as surgical duration [14, 16], the number of activities [14, 16] or sequence-based metrics [12]. Recently, [13, 17] showed that sequences are highly discriminative.

In this paper, we introduce the concept of sequential surgical signatures: sequences of phases, steps or activities that are common within a more or less homogeneous population. To demonstrate this concept, we propose an extension of a method presented in [13].

Materials and methods

The aim of this paper is to identify sequential surgical signatures in the context of a micro-surgical suturing training task (see “Data” section). To this end, we used the pattern mining method presented in the “Methods” section.

Data

The data set was collected at the Tokyo University Hospital. It consists of seventeen micro-surgical suturing tasks on a 0.7 mm artificial blood vessel, performed using a master–slave robotic platform [18]. Figure 1 shows snapshots of this task. The data set includes 4 participants with different levels of surgical expertise and robotics skills. Two of them, called experts, are surgeons but novice roboticists; the other two, called engineering students, have no surgical skills but are expert roboticists. Each participant performed between 3 and 6 trials, depending on their availability, for a total of 7 trials by the surgeons and 10 by the engineering students. The average suture duration is about 3 min. For each trial, the video was recorded at 30 Hz. From these videos, the activities of both hands were annotated manually using the software “Surgery Workflow Toolbox [annotate]” [19]. The suturing task is relatively simple to describe at a coarse granularity: it consists of grasping the needle, passing it through the two artificial blood vessels and tying 3 knots. However, such a description cannot capture variations between participants. We therefore broke down each gesture as finely as possible to better describe the progress of the task. This allows us to take into account gestures that are repeated several times before being completed, as well as intra-participant variability. Table 1 summarizes the number of trials, average duration and average number of activities per hand for each participant.

The output of the surgical process annotation is a sequential list of phases, steps and/or activities performed by the participant’s left and right hands. To analyze the sequences of both hands together, we preprocessed the data with a step called synchronization. First, whenever an activity of one hand begins or ends, the ongoing activity of the other hand is split into two parts at that instant (step a in Fig. 2). Then, the activities of both hands are grouped into a single sequence; when no activity is present on one hand, the gap is filled with an activity called “Idle,” representing the absence of activity (step b in Fig. 2).

As a reminder, an activity is composed of three components: the action verb, the target and the surgical instrument. To improve readability, we omit the surgical instrument, since only one surgical instrument was used in all trials. We therefore denote the activities of both hands as follows: “\({<}\mathrm{verb}_{\mathrm{left}}, \mathrm{target}_{\mathrm{left}}{>}; {<}\mathrm{verb}_{\mathrm{right}}, \mathrm{target}_{\mathrm{right}}{>}\).”
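The synchronization step can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the implementation used in this work: each hand’s annotation is assumed to be a list of non-overlapping (start, end, label) intervals, the activity labels in the example are hypothetical, and slices in which neither hand is active are assumed to be dropped. Every begin or end time on either hand becomes a cut point (step a), and a hand with no activity in a slice receives “Idle” (step b).

```python
from typing import List, Tuple

# Assumed annotation format: (start, end, label) intervals for one hand,
# non-overlapping within that hand. Labels follow the <verb, target> notation.
Interval = Tuple[float, float, str]
IDLE = "Idle"


def label_at(intervals: List[Interval], t0: float, t1: float) -> str:
    """Label of the activity covering the slice [t0, t1) on one hand, else Idle."""
    for start, end, label in intervals:
        if start <= t0 and t1 <= end:
            return label
    return IDLE


def synchronize(left: List[Interval], right: List[Interval]) -> List[Tuple[str, str]]:
    """Merge both hands into one sequence of (left activity, right activity) pairs."""
    # Step a: any begin/end on either hand splits the ongoing activity of the other.
    cuts = sorted({t for start, end, _ in left + right for t in (start, end)})
    sequence = []
    for t0, t1 in zip(cuts, cuts[1:]):
        # Step b: a hand without activity in this slice is filled with Idle.
        pair = (label_at(left, t0, t1), label_at(right, t0, t1))
        if pair != (IDLE, IDLE):  # assumption: slices where both hands are idle are dropped
            sequence.append(pair)
    return sequence


# Hypothetical example: the left hand holds the needle while the right hand
# performs two successive activities.
left = [(0.0, 10.0, "<hold, needle>")]
right = [(2.0, 5.0, "<pass, vessel>"), (5.0, 8.0, "<pull, thread>")]
for left_act, right_act in synchronize(left, right):
    print(f"{left_act}; {right_act}")
```

Each printed line corresponds to one element of the synchronized two-hand sequence; the left-hand activity is split into four parts because the right hand changes status at 2, 5 and 8 s.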

Methods

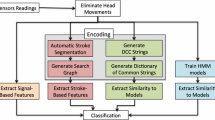

In this paper, we extend a method published in [13] and use it to identify sequential surgical signatures. In summary, the method consists of finding the longest frequent patterns in sequences, i.e., the longest sequences of 2 or more activities that are present in at least \(\mathrm{min}\_\mathrm{fr}\) of the sequences, where \(\mathrm{min}\_\mathrm{fr}\) is a predetermined frequency threshold. The original method is composed of three steps:

- Step 1: Establish a vocabulary of frequent activities;
- Step 2: Generate candidate patterns of length k by extending the frequent patterns of length \(k-1\) with frequent activities;
- Step 3: Determine which candidate patterns are actually frequent, and identify the longest frequent patterns of size \(k-1\), i.e., the frequent patterns of size \(k-1\) that are not contained in any frequent pattern of size k.

Steps 2 and 3 are repeated to extend the patterns until no new frequent patterns of size k are found. At each iteration, the longest frequent patterns of size \(k-1\) are added to the longest frequent patterns of smaller sizes.
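The loop formed by Steps 1 to 3 can be sketched as follows. This is a simplified, hypothetical implementation, not the code of [13]: it assumes that a pattern must occur as consecutive activities, and that its frequency is the number of sequences containing it at least once. Candidates of length k are built by appending a frequent activity to a frequent pattern of length \(k-1\); a frequent pattern of length \(k-1\) is kept as a longest pattern when no frequent pattern of length k starts or ends with it, which suffices because any contiguous part of a frequent pattern is itself frequent.

```python
from typing import List, Sequence, Set, Tuple

Pattern = Tuple[str, ...]


def contains(seq: Sequence[str], pat: Pattern) -> bool:
    """True if pat occurs as consecutive activities in seq (assumption)."""
    n = len(pat)
    return any(tuple(seq[i:i + n]) == pat for i in range(len(seq) - n + 1))


def support(sequences: List[Sequence[str]], pat: Pattern) -> int:
    """Number of sequences that contain pat at least once."""
    return sum(contains(s, pat) for s in sequences)


def longest_frequent_patterns(sequences: List[Sequence[str]], min_fr: int) -> Set[Pattern]:
    # Step 1: vocabulary of frequent activities.
    items = sorted({a for s in sequences for a in s})
    vocab = [a for a in items if support(sequences, (a,)) >= min_fr]
    frequent_prev: List[Pattern] = [(a,) for a in vocab]
    longest: Set[Pattern] = set()
    k = 2
    while frequent_prev:
        # Step 2: candidates of length k = frequent (k-1)-pattern + frequent activity.
        candidates = [p + (a,) for p in frequent_prev for a in vocab]
        # Step 3: keep the candidates that are actually frequent ...
        frequent_k = [c for c in candidates if support(sequences, c) >= min_fr]
        # ... and record the (k-1)-patterns that no frequent k-pattern contains
        # (only patterns of 2 or more activities are reported).
        if k - 1 >= 2:
            covered = {c[:-1] for c in frequent_k} | {c[1:] for c in frequent_k}
            longest |= {p for p in frequent_prev if p not in covered}
        frequent_prev = frequent_k
        k += 1
    return longest


# Toy sequences: each letter stands for one (hypothetical) synchronized activity.
seqs = [list("abcd"), list("abce"), list("xbcd")]
print(sorted(longest_frequent_patterns(seqs, min_fr=2)))
```

With \(\mathrm{min}\_\mathrm{fr} = 2\), “ab,” “bc” and “cd” are frequent but each is contained in “abc” or “bcd,” so only these two patterns of length 3 are returned.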

The extension consists of removing all patterns composed of fewer than \(\mathrm{min}\_\mathrm{length}\) activities. This step assumes that shorter patterns do not have enough discriminating power to be interesting. Finally, for the remaining patterns, we determine whether they are sequential surgical signatures by checking whether they are shared within a more or less homogeneous population. Figure 3 summarizes the complete process for a simple example with a frequency threshold \(\mathrm{min}\_\mathrm{fr} = 2\) and a length threshold \(\mathrm{min}\_\mathrm{length} = 3\).
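The extension, i.e., the length filter followed by the check for population-specific patterns, could look like the sketch below. It is an illustration under assumptions: each sequence carries hypothetical (participant, level) metadata, patterns are matched as consecutive activities, and a pattern is labeled using the definitions given with Table 4: specific to a participant if it is found only in that participant’s sequences, and specific to a level of expertise if it is found only in sequences of that level but from more than one participant. Patterns found across levels are returned with the label None.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Pattern = Tuple[str, ...]
Meta = Tuple[str, str]  # hypothetical layout: (participant ID, expertise level)


def contains(seq: Sequence[str], pat: Pattern) -> bool:
    """True if pat occurs as consecutive activities in seq (assumption)."""
    n = len(pat)
    return any(tuple(seq[i:i + n]) == pat for i in range(len(seq) - n + 1))


def population_of(pat: Pattern, sequences: List[Sequence[str]], meta: List[Meta]) -> Optional[str]:
    """Population in which pat is a signature, or None if it is not specific."""
    hits = [meta[i] for i, s in enumerate(sequences) if contains(s, pat)]
    participants = {p for p, _ in hits}
    levels = {lvl for _, lvl in hits}
    if len(participants) == 1:
        return f"participant {participants.pop()}"
    if len(levels) == 1:
        return f"level {levels.pop()}"
    return None  # shared across levels of expertise (or not found at all)


def signatures(patterns, sequences, meta, min_length: int) -> Dict[Pattern, Optional[str]]:
    # Extension: discard patterns made of fewer than min_length activities.
    kept = [p for p in patterns if len(p) >= min_length]
    return {p: population_of(p, sequences, meta) for p in kept}


seqs = [list("abcd"), list("abce"), list("xbcd")]
meta = [("1", "student"), ("2", "student"), ("3", "expert")]
patterns = {tuple("ab"), tuple("abc"), tuple("bcd")}
print(signatures(patterns, seqs, meta, min_length=3))
```

Here “ab” is discarded by the length threshold, “abc” is labeled as a student signature (found in both students’ sequences only), and “bcd” is not population-specific.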

To classify sequences, we use the Shared Longest Frequent Sequential Pattern (SLFSP) metric developed in [13] to perform a hierarchical clustering with the average-link approach, using the UPGMA algorithm (unweighted pair group method with arithmetic mean) [20]. The SLFSP metric is defined as:

where A and B are 2 sequences, \(|\mathrm{shared}_{A,B}|\) is the number of shared longest frequent sequential patterns between A and B, and \(|\mathrm{patterns}_A|\) and \(|\mathrm{patterns}_B|\) are, respectively, the number of longest frequent patterns of A and B.
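Once pairwise distances are available, the average-link clustering and the cut at a given distance (0.6 in the “Results” section) can be sketched as follows. The distance matrix in the example is invented for illustration; in this work its entries would be SLFSP values computed as in [13]. Because UPGMA merge heights never decrease, stopping as soon as the closest pair of clusters is farther apart than the cut gives the same clusters as cutting the dendrogram at that height. In practice, SciPy’s `scipy.cluster.hierarchy.linkage` with `method="average"`, followed by `fcluster(..., criterion="distance")`, performs the same operation.

```python
from itertools import combinations
from typing import List


def upgma_clusters(dist: List[List[float]], cut: float) -> List[List[int]]:
    """Average-link (UPGMA) clustering of items 0..n-1 from a symmetric distance
    matrix, stopped when the closest clusters are farther apart than `cut`."""
    clusters: List[List[int]] = [[i] for i in range(len(dist))]

    def average(a: List[int], b: List[int]) -> float:
        # UPGMA: unweighted mean of all pairwise distances between the two clusters.
        return sum(dist[i][j] for i in a for j in b) / (len(a) * len(b))

    while len(clusters) > 1:
        x, y = min(combinations(range(len(clusters)), 2),
                   key=lambda xy: average(clusters[xy[0]], clusters[xy[1]]))
        if average(clusters[x], clusters[y]) > cut:
            break  # every remaining merge lies above the cut height
        merged = clusters[x] + clusters[y]
        clusters = [c for k, c in enumerate(clusters) if k not in (x, y)] + [merged]
    return sorted(sorted(c) for c in clusters)


# Invented distances between four sequences (two pairs of similar trials).
d = [[0.0, 0.2, 0.9, 0.8],
     [0.2, 0.0, 0.85, 0.9],
     [0.9, 0.85, 0.0, 0.3],
     [0.8, 0.9, 0.3, 0.0]]
print(upgma_clusters(d, cut=0.6))  # [[0, 1], [2, 3]]
```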

Validation studies

We propose three validation studies. The first verifies the usefulness of the additional step (“Classification according to sequential signatures” section). The second aims to identify sequential surgical signatures specific to participants and to levels of expertise, as well as signatures shared between different populations (“Analysis of sequential surgical signatures” section). Finally, the third uses sequential surgical signatures to predict which population a new sequence belongs to (“Prediction of belonging to a population” section).

Classification according to sequential signatures

The objective of this first study is to ensure that the extended method produces better results than the original one or, in the worst case, does not degrade them. To do this, we classify the sequences by level of expertise and by participant using both methods.

For this first study, we tested different parameter values as follows:

- Frequency threshold \(\mathrm{min}\_\mathrm{fr} \in [2,7]\) for both methods. We did not test frequency thresholds greater than 7 because, in these cases, no pattern could have been present only among experts (only 7 trials were performed by experts);
- Length threshold \(\mathrm{min}\_\mathrm{length} \in [3,10]\) for the proposed method.

Analysis of sequential surgical signatures

In this study, we apply the method to all the data to identify sequential surgical signatures. Based on the optimal results of the first study, we selected the following parameters for this study and the next one:

- Frequency threshold \(\mathrm{min}\_\mathrm{fr}=3\);
- Length threshold \(\mathrm{min}\_\mathrm{length}=3\).

Prediction of belonging to a population

In this study, we determine who performed a new sequence, and his or her level of expertise, from the signatures present in that sequence. To do this, we conducted a leave-one-out cross-validation study. We trained our model on all sequences except one. For each of the longest frequent patterns, we determined whether it was a sequential surgical signature and the percentage of sequences in which it was present. For the remaining sequence, we checked the presence of all signatures, and this list of signatures allowed us to determine the metadata of that sequence. When some signatures were specific to contradictory populations, we assigned the remaining sequence to a population based on the highest probability of belonging, defined as follows:

Results

In this section, we present the results of the three studies in the “Classification according to sequential signatures,” “Analysis of sequential signatures” and “Prediction of belonging to a population” sections, respectively.

Classification according to sequential signatures

Tables 2 and 3 summarize the accuracy of both methods in distinguishing between levels of expertise and between participants, respectively, for different parameter configurations. To compute this accuracy, we cut the dendrograms at a distance of 0.6 to define clusters.

For the same frequency threshold, the proposed method gives better results than the original one when the length threshold is 3. The only exception is a frequency threshold of 7, where the accuracy of expertise classification is the same (94.12%) and the accuracy of participant classification is lower (76.47% for the original method versus 64.71% for the proposed one). As the length threshold increases, the classification accuracy decreases or remains stable. Figure 4 summarizes the results of the original method and of the best configuration of the extended method (\(\mathrm{min}\_\mathrm{length}=3\)).

Fig. 4 Accuracy of (a) expertise and (b) participant classification for the original method (blue squares) and the extended one with \(\mathrm{min}\_\mathrm{length} = 3\) (red triangles), as a function of the frequency threshold

For both classifications, the optimal results are obtained with a frequency threshold of 3. The original method gives 88% accuracy for both expertise and participant classification, whereas the extended one gives 100% accuracy for expertise classification and 94.12% for participant classification. The extended method thus gives better results even though it uses less information: with the optimal parameters, the original method found 97 patterns and the extended method only 76 of them, i.e., a 22% reduction in information.

The classification results are shown in Fig. 5 for the original method and in Fig. 6 for the extended one. In these figures, the ordinate corresponds to the distance between sequences, and each leaf is labeled with a sequence ID, in which the hundreds digit gives the participant ID and the remaining digits give the trial number. Thus, leaf 402 corresponds to the second trial of participant 4.

When we cut the dendrogram of Fig. 5 at a distance of 0.6, we obtain 4 clusters:

- \(C^1\): a cluster gathering all trials of participant 1;
- \(C^2\): a cluster gathering all trials of participant 2 except the first one (201);
- \(C^3\): a cluster gathering all trials of participant 3;
- \(C^4\): a cluster gathering all trials of participant 4 except the fourth one (404).

Cutting the dendrogram of Fig. 6 at the same distance (0.6), we obtain 3 clusters:

- \(C^1\): a cluster gathering all trials of participant 1;
- \(C^2\): a cluster gathering all trials of participant 2;
- \(C^E\): a cluster gathering all trials of the expert participants.

This last cluster can be divided into two sub-clusters, \(C^3\) and \(C^4\), gathering most of the trials of participant 3 and of participant 4, respectively. Only participant 4’s trial 404 is grouped with participant 3’s trials.

Analysis of sequential signatures

We looked more closely at the longest frequent patterns. With our parameters (\(\mathrm{min}\_\mathrm{fr}=3\) and \(\mathrm{min}\_\mathrm{length}=3\)), 76 longest patterns composed of 3 or more activities were found. Of these 76 patterns, 56 are specific to one of the following metadata: participant 1, participant 2, participant 3, participant 4, student or expert. Table 4 summarizes, for each type of metadata, the number of specific patterns, the number of these patterns present in more sequences than the threshold (Present \(4\,+\)) and the number whose length is greater than or equal to 5 activities (\(\mathrm{length} \ge 5\)). A pattern specific to a participant is found only in the sequences performed by this participant, whereas a pattern specific to a level of expertise is found in the sequences performed by both participants with this level of expertise. The values in parentheses are given relative to the number of trials for the “Nb Patterns” column, and relative to the number of patterns for the other columns. The length of the longest patterns in each category ranges from 10 to 14 activities.

Prediction of belonging to a population

Table 5 summarizes the results of the leave-one-out cross-validation for predicting population membership. Our model predicts the level of expertise perfectly in all cases (both the proportion of sequences receiving a prediction and the proportion of correct predictions are 100%). Predicting the participant is more difficult: the model gives participant information for only 83% of the sequences and makes many errors, with correct predictions in only 64% of cases.
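The leave-one-out protocol of the “Prediction of belonging to a population” study, and the two quantities reported here (how many held-out sequences receive a prediction and how many are predicted correctly), can be sketched as below. Several parts are assumptions rather than the method of this paper: the scoring rule (summing, per population, the share of training sequences that contain each signature found in the held-out sequence) is a stand-in for the paper’s probability of belonging, not a reproduction of it; `toy_train` is a hypothetical stand-in for pattern mining and signature labeling that treats every run of three activities found in a single population as a signature; and the data are invented.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Pattern = Tuple[str, ...]
# Hypothetical model: signature -> (population, share of that population's
# training sequences containing the signature).
Model = Dict[Pattern, Tuple[str, float]]


def contains(seq: Sequence[str], pat: Pattern) -> bool:
    n = len(pat)
    return any(tuple(seq[i:i + n]) == pat for i in range(len(seq) - n + 1))


def toy_train(seqs: List[Sequence[str]], labels: List[str], length: int = 3) -> Model:
    """Stand-in for mining + labeling: a run of `length` activities found only in
    sequences of a single population is a signature of that population."""
    found: Dict[Pattern, set] = defaultdict(set)
    for i, s in enumerate(seqs):
        for j in range(len(s) - length + 1):
            found[tuple(s[j:j + length])].add(i)
    model: Model = {}
    for pat, idx in found.items():
        owners = {labels[i] for i in idx}
        if len(owners) == 1:
            owner = owners.pop()
            model[pat] = (owner, len(idx) / labels.count(owner))
    return model


def predict(seq: Sequence[str], model: Model) -> Optional[str]:
    """Population with the largest summed share among the signatures found in
    seq (stand-in scoring rule); None when no signature is found."""
    scores: Dict[str, float] = defaultdict(float)
    for pat, (population, share) in model.items():
        if contains(seq, pat):
            scores[population] += share
    return max(scores, key=scores.get) if scores else None


def leave_one_out(seqs, labels, train: Callable[[list, list], Model]) -> Tuple[int, int, int]:
    """Return (sequences with a prediction, correct predictions, total)."""
    answered = correct = 0
    for i in range(len(seqs)):
        model = train(seqs[:i] + seqs[i + 1:], labels[:i] + labels[i + 1:])
        guess = predict(seqs[i], model)
        if guess is not None:
            answered += 1
            correct += int(guess == labels[i])
    return answered, correct, len(seqs)


seqs = [list(w) for w in ("abcx", "abcy", "abcz", "pqrx", "pqry", "pqrz", "mnop")]
labels = ["student"] * 3 + ["expert"] * 4
print(leave_one_out(seqs, labels, toy_train))  # (6, 6, 7)
```

Returning raw counts keeps coverage (answered / total) and correctness separate; the last toy sequence shares no signature with the others, so the model abstains on it, which mirrors how a prediction can be missing for some sequences.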

Discussion

Method

In this article, we introduced for the first time the concept of sequential surgical signatures, and we demonstrated this concept using a pattern mining method. We chose to ignore patterns shorter than a predetermined threshold (\(\mathrm{min}\_\mathrm{length}\)) by deleting them at the end of the method. Another approach would have been to search directly for frequent patterns of length \(\mathrm{min}\_\mathrm{length}\), but in that case step 2 would have produced a large number of candidates, causing many unnecessary tests in step 3. As a reminder, to find the longest frequent patterns of length k, it is necessary to find the frequent patterns of length \(k+1\). Thus, with the example shown in Fig. 3, finding the longest frequent patterns of length 3 directly requires finding frequent patterns of size 4: with 3 frequent activities, step 2 would give 81 candidate patterns of size 4 (\(3^4\)). With our method, step 2 is performed 3 times, but for a total of only 12 candidate patterns (9 for \(k=2\), 3 for \(k=3\) and 0 for \(k=4\)).

Classification according to sequential signatures

In this first study, we validated the utility of the extended method for different parameter values. In most cases, not taking the shortest patterns into account increases the classification rate. For the optimal parameters, the classification accuracy improved (94 vs. 88% for participants and 100 vs. 88% for expertise), although this improvement is not significant. However, this classification was carried out with only 78% of the patterns used by the original method (76 of 97). Our method therefore gives similar or better results with less data, which supports the hypothesis that shorter patterns do not have enough discriminating power to be interesting.

Analysis of sequential signatures

Our method is able to distinguish sequences according to the level of expertise and the participant. This differentiation cannot be made from the length of the longest patterns, since they all have lengths between 10 and 14 activities regardless of the category. We also found many signatures specific to each level of expertise and to each participant, except participant 3 (Table 4). Although no signature is specific to this participant, participant 3 shares signatures common to all experts: the 6 signatures identified as specific to the experts are present in the trials of both expert participants. To be detected, a signature specific to participant 3 would have to be present in every one of this participant’s trials, because only three were performed, which corresponds exactly to our \(\mathrm{min}\_\mathrm{fr}\) threshold. It is highly improbable that all trials were performed in exactly the same way, especially since we did not take into account signatures composed of 2 activities. Identifying the sequential surgical signatures of participant 3 will require collecting more data.

The number of sequential surgical signatures found in each category depends on the number of trials. For example, although we found fewer signatures for participant 1 than for participant 2 (12 versus 22), the difference is smaller when counting the average number of signatures per trial: 3 versus 3.66. As shown in Table 4, participants 1 and 2 have more signatures specific to themselves than signatures specific to their level of expertise (3 and 3.66 compared to 1.3 per trial). For participants 3 and 4, it is the opposite: there are more signatures specific to their level of expertise than to themselves (0.86 vs. 0 and 0.75). This could be explained by the expert participants being more consistent when performing the task, with signatures composed of more activities than those of the student participants. This hypothesis is supported by the higher proportion of signatures present more often than the threshold for expert participants (66%) than for student participants (8%), and by the higher proportion of signatures composed of 5 or more activities (83% for experts vs. 61% for students).
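The per-trial averages quoted above can be recomputed with a few lines of Python as a consistency check. The counts of 12, 22, 0 and 6 signatures (participant 1, participant 2, participant 3 and the experts) and the total of 56 come from the text; the counts for participant 4 (3) and for the students (13), as well as the number of trials per participant, are inferred from the averages (e.g., 12 signatures at 3 per trial implies 4 trials) and are assumptions, not values read from Table 4.

```python
# Signature counts per category: 12, 22, 0 and 6 are stated in the text;
# 3 (participant 4) and 13 (students) are inferred from the per-trial averages.
signatures = {"participant 1": 12, "participant 2": 22, "participant 3": 0,
              "participant 4": 3, "students": 13, "experts": 6}
# Trials per category; the participant-level values are inferred, not stated.
trials = {"participant 1": 4, "participant 2": 6, "participant 3": 3,
          "participant 4": 4, "students": 10, "experts": 7}

# Consistency with figures stated in the text.
assert sum(signatures.values()) == 56  # population-specific patterns
assert trials["participant 1"] + trials["participant 2"] == trials["students"]
assert trials["participant 3"] + trials["participant 4"] == trials["experts"]
assert trials["students"] + trials["experts"] == 17  # all trials

per_trial = {k: signatures[k] / trials[k] for k in signatures}
for category, value in per_trial.items():
    print(f"{category}: {value:.2f} signatures per trial")
```

With these inferred values, the counts add up to the 56 population-specific patterns, and 22/6 evaluates to 3.67, which the text reports as 3.66.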

Among the 6 expert sequential surgical signatures (Table 4), one attracted our attention because of its number of activities (11) and the number of sequences in which it is present (5 of the 7 expert sequences). This signature, denoted \(\mathrm{signature}_1\), is presented in Table 6.

However, a signature may be the marker of something other than a population of individuals. This is the case, for example, of the signature denoted \(\mathrm{signature}_2\) (Table 7), which is composed of 3 activities and shared between 5 sequences, 2 performed by students and 3 by experts.

These two examples are interesting for multiple reasons:

- \(\mathrm{Signature}_1\) illustrates the full knot-tying process without unnecessary activities;
- \(\mathrm{Signature}_2\) reflects a mistake independent of the level of expertise. In each case, the participant tried to tie the knot by pulling the two strands of the thread (activity a) but dropped the short strand (activity b), and therefore had to grasp the short strand again (activity c);
- Both signatures provide hints to improve or facilitate the execution of the task by showing how an expert performs it and which mistakes should be avoided;
- Coupled with real-time activity detection methods, both types of signatures could be used for automatic analysis of the surgical workflow, thus providing relevant information for situation-aware systems.

A video representation of each of these two signatures is available as supplementary material. In these videos, the process animation was produced with the Disco software [21], and the sequences in each video were synchronized so that corresponding activities start at the same time.

Prediction of belonging to a population

Our method also showed that sequential surgical signatures could be used to determine which population a new sequence belongs to. These initial results need to be complemented by more data that would increase not only the number of participants in each population, but also the number of different populations.

Conclusion

In this article, we introduced the concept of sequential surgical signatures and demonstrated their usefulness in classifying surgical sequences and in determining the level of expertise and, to a lesser extent, the identity of the individual who performed a sequence. This could be useful for building an automatic and objective skill assessment system.

The identification of sequential surgical signatures could provide leads for understanding surgical skills and, consequently, useful pedagogical guidance for trainees.

References

1. Jannin P, Raimbault M, Morandi X, Gibaud B (2001) Modeling surgical procedures for multimodal image-guided neurosurgery. In: Niessen WJ, Viergever MA (eds) Medical image computing and computer-assisted intervention MICCAI 2001. Lecture notes in computer science, vol 2208. Springer, Berlin, pp 565–572

2. Lalys F, Jannin P (2013) Surgical process modelling: a review. Int J Comput Assist Radiol Surg 9(3):495–511

3. Reiley CE, Hager GD (2009) Task versus subtask surgical skill evaluation of robotic minimally invasive surgery. In: Medical image computing and computer-assisted intervention MICCAI 2009. Lecture notes in computer science. Springer, Berlin, Heidelberg, pp 435–442

4. Despinoy F, Bouget D, Forestier G, Penet C, Zemiti N, Poignet P, Jannin P (2016) Unsupervised trajectory segmentation for surgical gesture recognition in robotic training. IEEE Trans Biomed Eng 63(6):1280–1291

5. Neumuth T, Strauß G, Meixensberger J, Lemke HU, Burgert O (2006) Acquisition of process descriptions from surgical interventions. In: Bressan S, Küng J, Wagner R (eds) Database and expert systems applications. Lecture notes in computer science, vol 4080. Springer, Berlin, pp 602–611

6. Padoy N, Blum T, Feussner H, Berger MO, Navab N (2008) On-line recognition of surgical activity for monitoring in the operating room. In: IAAI, pp 1718–1724

7. Padoy N, Blum T, Ahmadi S-A, Feussner H, Berger M-O, Navab N (2012) Statistical modeling and recognition of surgical workflow. Med Image Anal 16(3):632–641

8. Bouarfa L, Jonker PP, Dankelman J (2011) Discovery of high-level tasks in the operating room. J Biomed Inform 44(3):455–462

9. James A, Vieira D, Lo B, Darzi A, Yang G-Z (2007) Eye-gaze driven surgical workflow segmentation. Med Image Comput Comput Assist Interv MICCAI 2007:110–117

10. Ko S-Y, Kim J, Lee W-J, Kwon D-S (2007) Surgery task model for intelligent interaction between surgeon and laparoscopic assistant robot. Int J Assist Robot Mech 8(1):38–46

11. Lalys F, Bouget D, Riffaud L, Jannin P (2012) Automatic knowledge-based recognition of low-level tasks in ophthalmological procedures. Int J Comput Assist Radiol Surg 8(1):39–49

12. Forestier G, Lalys F, Riffaud L, Collins DL, Meixensberger J, Wassef SN, Neumuth T, Goulet B, Jannin P (2013) Multi-site study of surgical practice in neurosurgery based on surgical process models. J Biomed Inform 46(5):822–829

13. Huaulmé A, Voros S, Riffaud L, Forestier G, Moreau-Gaudry A, Jannin P (2017) Distinguishing surgical behavior by sequential pattern discovery. J Biomed Inform 67:34–41

14. Riffaud L, Neumuth T, Morandi X, Trantakis C, Meixensberger J, Burgert O, Trelhu B, Jannin P (2010) Recording of surgical processes: a study comparing senior and junior neurosurgeons during lumbar disc herniation surgery. Oper Neurosurg 67:ons325–ons332

15. Neumuth T, Wiedemann R, Foja C, Meier P, Schlomberg J, Neumuth D, Wiedemann P (2010) Identification of surgeon individual treatment profiles to support the provision of an optimum treatment service for cataract patients. J Ocul Biol Dis Inf 3(2):73–83

16. Cao C, MacKenzie CL, Payandeh S (1996) Task and motion analyses in endoscopic surgery. In: Proceedings ASME dynamic systems and control division. Citeseer, pp 583–590

17. Forestier G, Petitjean F, Senin P, Despinoy F, Jannin P (2017) Discovering discriminative and interpretable patterns for surgical motion analysis. In: Conference on artificial intelligence in medicine in Europe. Springer, Berlin, pp 136–145

18. Mitsuishi M, Morita A, Sugita N, Sora S, Mochizuki R, Tanimoto K, Baek YM, Takahashi H, Harada K (2013) Master-slave robotic platform and its feasibility study for micro-neurosurgery: master-slave robotic platform for microneurosurgery. Int J Med Robot Comput Assist Surg 9(2):180–189

19. Garraud C, Gibaud B, Penet C, Gazuguel G, Dardenne G, Jannin P (2014) An ontology-based software suite for the analysis of surgical process model. In: Proceedings of Surgetica’2014. Chambery, France, pp 243–245

20. Sokal RR, Michener CD (1958) A statistical method for evaluating systematic relationships. Univ Kans Sci Bull 28:1409–1438

21. Fluxicon Disco: process mining and automated process discovery software for professionals. https://fluxicon.com/disco/. Accessed 18 Dec 2017

Acknowledgements

This work was funded by the ImPACT Program of the Council for Science, Technology and Innovation, Cabinet Office, Government of Japan. The authors thank IRT b<>com for providing the software “Surgery Workflow Toolbox [annotate],” used in this work.


Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki declaration and its later amendments or comparable ethical standards.

Informed consent

This article does not contain patient data.



Cite this article

Huaulmé, A., Harada, K., Forestier, G. et al. Sequential surgical signatures in micro-suturing task. Int J CARS 13, 1419–1428 (2018). https://doi.org/10.1007/s11548-018-1775-x


DOI: https://doi.org/10.1007/s11548-018-1775-x